- 1mac 添加永久路由的方法_mac添加永久路由

- 2第十篇:深入文件夹:Python中的文件管理和自动化技术

- 3大模型工具Ollama 应用千问和llama3模型_ollama下载模型地址

- 4java app接口安全认证_app与后台交互之间的几种安全认证机制

- 5Appian发布最新版本:通过AI流程自动化推动业务发展

- 6Linux:详解 用户,用户组的解释创建等。_linux用户

- 7百度智能云API——实现语言理解功能(python_百度智能云 api

- 8自动控制工程技术人员的工作内容有哪些

- 9使用Mona插件辅助漏洞挖掘

- 10【自然语言处理】Text Summarization文本摘要与注意力机制_注意力机制的摘要提取

2.Hive下载与安装

赞

踩

2.Hive下载与安装

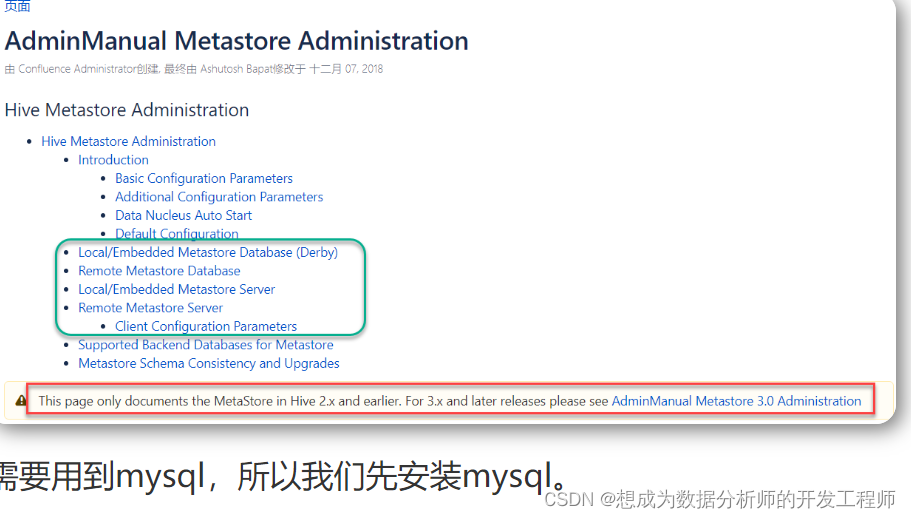

2.1 三种安装方式的区别

Hive中metastore(元数据存储)的三种模式:

a)内嵌Derby模式

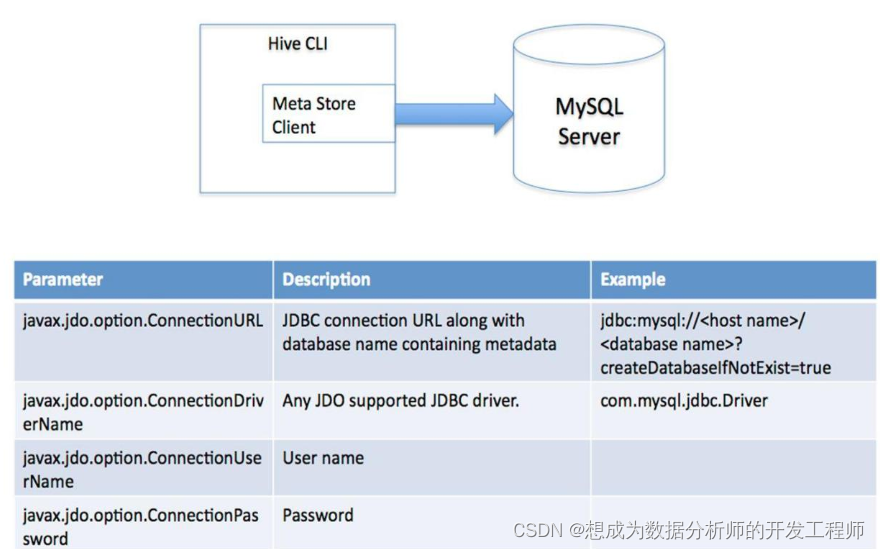

b)直连数据库模式

c)远程服务器模式

2.1.1 内嵌Derby模式

此模式连接到一个In-memory 的数据库Derby,一般用于Unit Test(单元测试目前用的也少),一台服务器即可,基本不用。

2.1.2 直连数据库模式(mysql)

通过网络连接到一个数据库中。

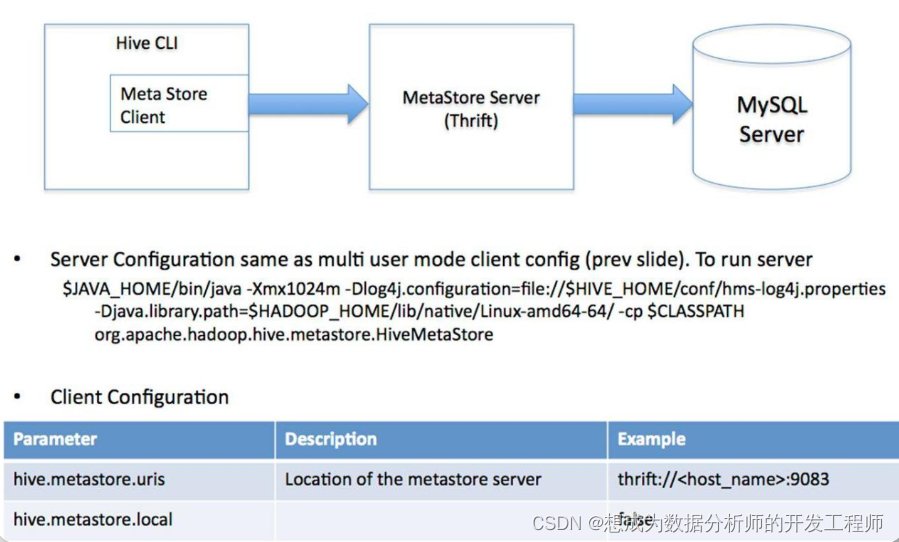

2.1.3 远程服务模式

用于非Java客户端访问元数据库,在服务器端启动MetaStoreServer,客户端利用Thrift协议通过MetaStoreServer访问元数据库。起到了解耦的作用,如果更换其他数据库只需要修改MetaStoreServer即可。

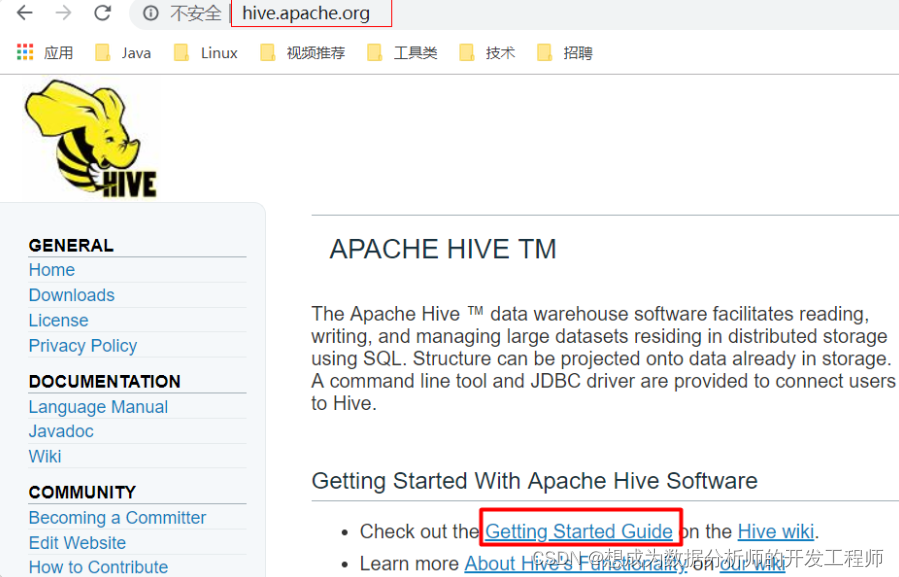

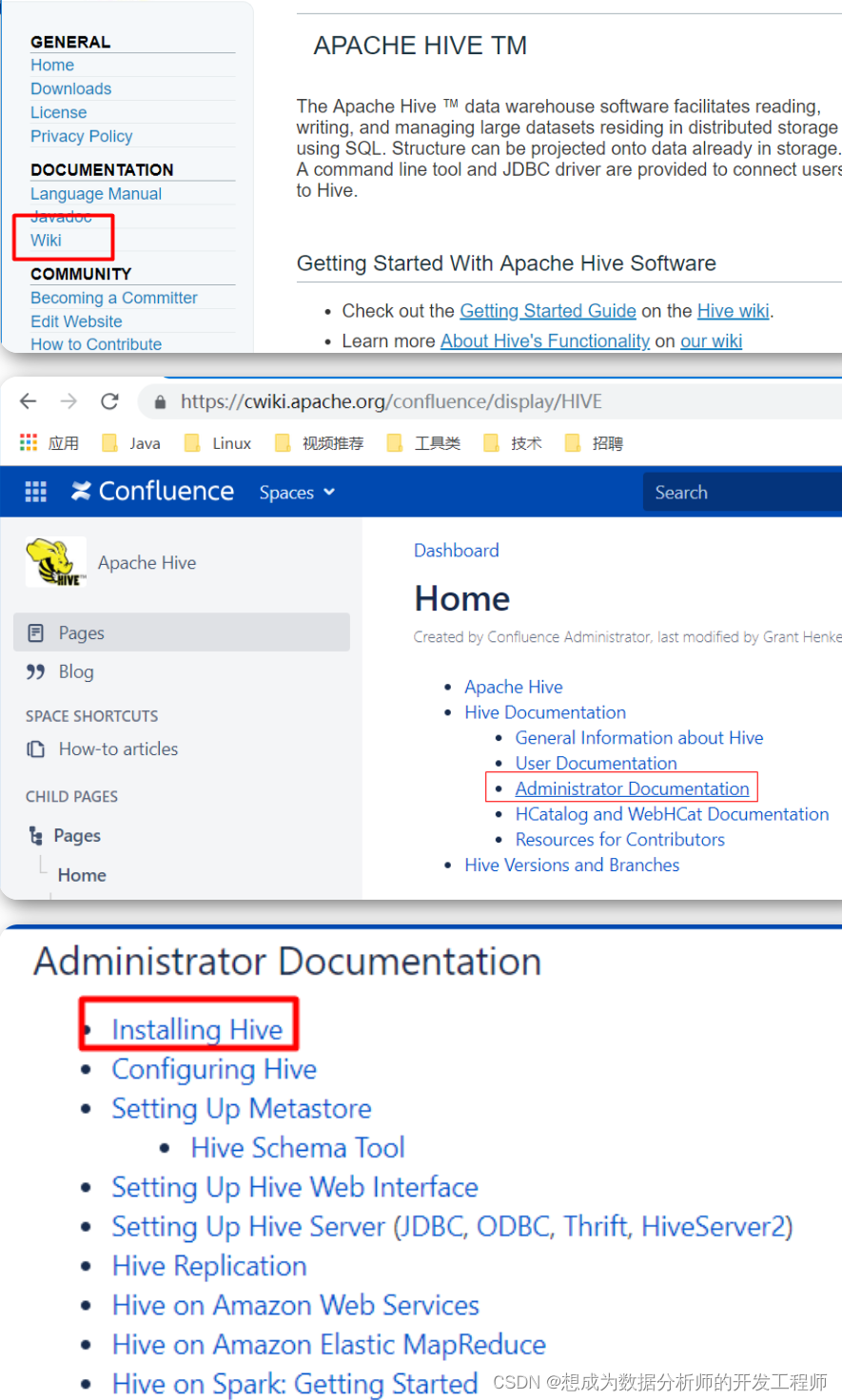

2.2 官方文档介绍

2.2.1 下载地址

-

Hive官网地址:http://hive.apache.org/

-

下载地址

https://dlcdn.apache.org/hive/

以下网址可以选择的版本更加全面:

http://archive.apache.org/dist/hive/ -

文档查看地址

https://cwiki.apache.org/confluence/display/Hive/GettingStarted -

github地址:https://github.com/apache/hive

2.2.2 官网阅读

Requirements

Java 1.7 Note: Hive versions 1.2 onward require Java 1.7 or newer. Hive versions 0.14 to 1.1 work with Java 1.6 as well. Users are strongly advised to start moving to Java 1.8 (see HIVE-8607).

Hadoop 2.x (preferred), 1.x (not supported by Hive 2.0.0 onward). Hive versions up to 0.13 also supported Hadoop 0.20.x, 0.23.x.

Hive is commonly used in production Linux and Windows environment. Mac is a commonly used development environment. The instructions in this document are applicable to Linux and Mac. Using it on Windows would require slightly different steps.

Installing Hive from a Stable Release (从稳定版本安装hive)

Start by downloading the most recent stable release of Hive from one of the Apache download mirrors (see Hive Releases).

Next you need to unpack the tarball. This will result in the creation of a subdirectory named hive-x.y.z (where x.y.z is the release number):

$ tar -xzvf hive-x.y.z.tar.gz

- 1

Set the environment variable HIVE_HOME to point to the installation directory:

$ cd hive-x.y.z

$ export HIVE_HOME={{pwd}}

- 1

- 2

Finally, add $HIVE_HOME/bin to your PATH:

$ export PATH=$HIVE_HOME/bin:$PATH

- 1

Building Hive from Source(源码安装 了解)

The Hive GIT repository for the most recent Hive code is located here: git clone https://git-wip-us.apache.org/repos/asf/hive.git (the master branch).

All release versions are in branches named “branch-0.#” or “branch-1.#” or the upcoming “branch-2.#”, with the exception of release 0.8.1 which is in “branch-0.8-r2”. Any branches with other names are feature branches for works-in-progress. See Understanding Hive Branches for details.

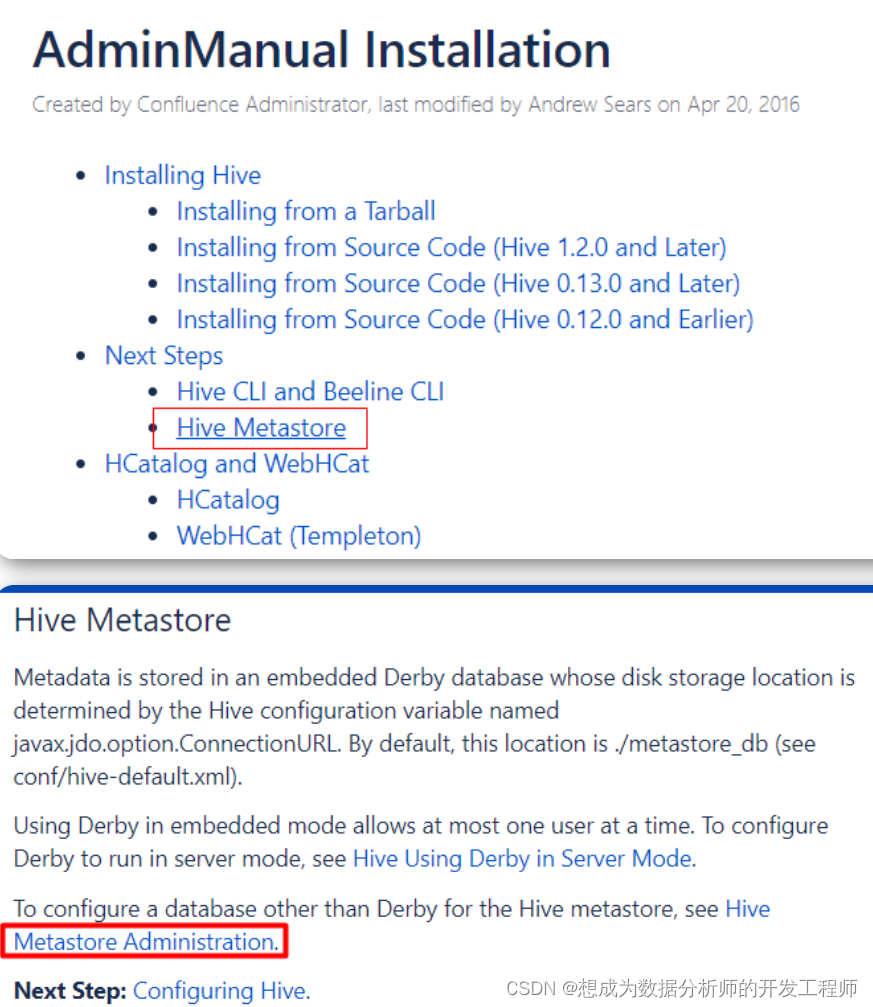

默认情况下Metadata(元数据存储)在嵌入式Derby数据库中,其磁盘存储位置由名为javax.jdo.option.Connectionurl的hive配置变量决定。默认情况下,此位置为./metastore_db(参见conf/hive-default.xml)。在嵌入式模式下使用Derby最多一次允许一个用户。若要将Derby配置为在服务器模式下运行,请参见服务器模式下使用Derby的Hive。若要为Hive元存储配置除Derby以外的数据库,请参见Hive Metastore Administration。

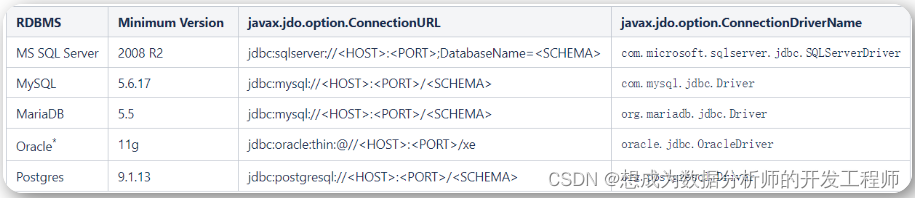

2.3 虚拟机安装MySQL教程(node1上配置)

当前hive对关系型数据库版本要求如下

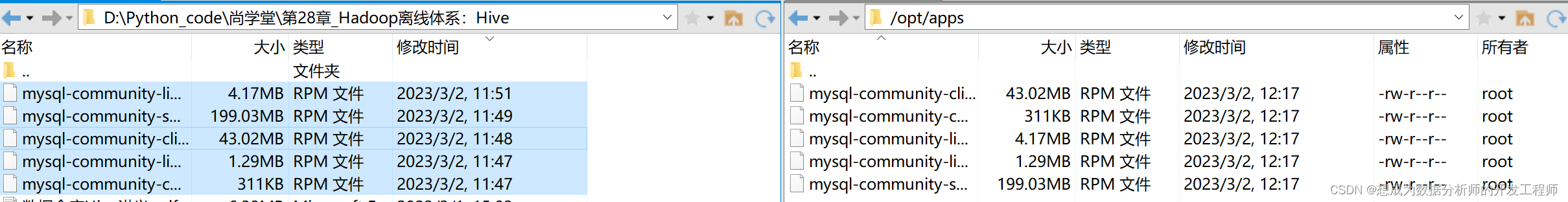

- 将mysql的五个rpm包上传到/opt/apps目录

- 检查当前节点上是否存在mysql安装包,存在则删除:

[root@localhost apps]# rpm -qa | grep mysql # 检查是否存在mysql

[root@localhost apps]# rpm -qa | grep mariadb-libs # 检查是否存在这个包

mariadb-libs-5.5.68-1.el7.x86_64

[root@node1 apps]# rpm -e --nodeps mariadb-libs

#如果不处理 mariadb-libs,后续安装的时候会出现如下错误提示:

错误:依赖检测失败:

mariadb-libs 被 mysql-community-libs-5.7.28-1.el7.x86_64 取代

mariadb-libs 被 mysql-community-libs-compat-5.7.28-1.el7.x86_64 取代

#找到的mysql相关的包都删除 *号指代实际包名, 请实际情况输入完整包名

#rpm -e --nodeps mysql-community-libs-*

#rpm -e --nodeps mysql-community-server-*

#rpm -e --nodeps mysql-community-common-*

#rpm -e --nodeps mysql-community-client-*

#rpm -e --nodeps mysql-community-libs-compat-*

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 检查并删除老版本mysql的开发头文件和库

[root@localhost apps]# find -iname 'mysql*'

./mysql-community-server-5.7.28-1.el7.x86_64.rpm

./mysql-community-libs-5.7.28-1.el7.x86_64.rpm

./mysql-community-client-5.7.28-1.el7.x86_64.rpm

./mysql-community-libs-compat-5.7.28-1.el7.x86_64.rpm

./mysql-community-common-5.7.28-1.el7.x86_64.rpm

- 1

- 2

- 3

- 4

- 5

- 6

注意:卸载后/var/lib/mysql中的数据及/etc/my.cnf不会删除,如果确定没用后就手工删除

- 安装依赖包:net-tools libaio

yum install -y net-tools libaio

- 1

- 安装mysql

rpm -ivh mysql-community-*

- 1

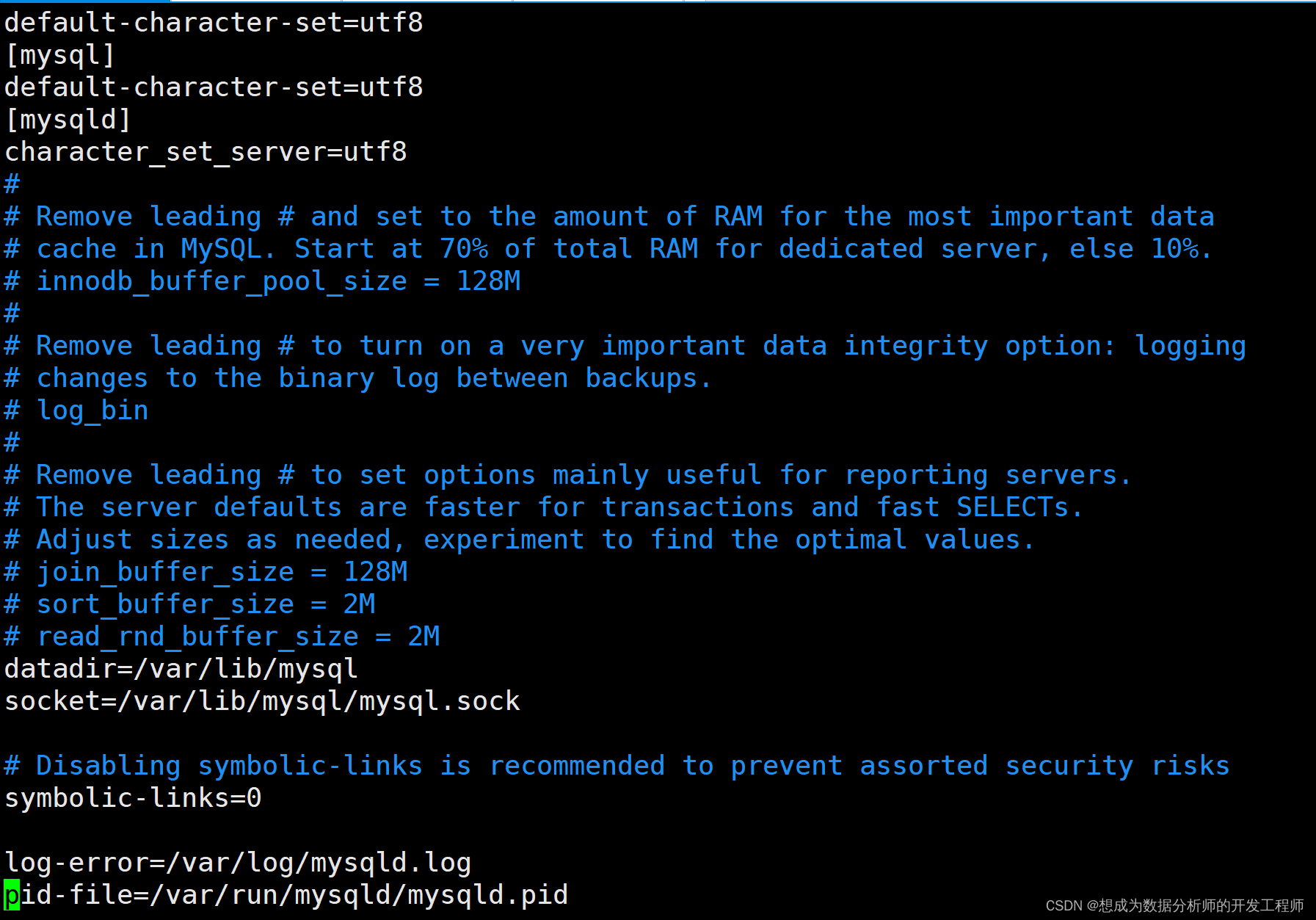

- 修改/etc/my.cnf文件,设置数据库的编码方式:

[client]

default-character-set=utf8

[mysql]

default-character-set=utf8

[mysqld]

character_set_server=utf8

- 1

- 2

- 3

- 4

- 5

- 6

在最后一行种,mysqld就是保存密码的文件;倒数第二行是错误日志

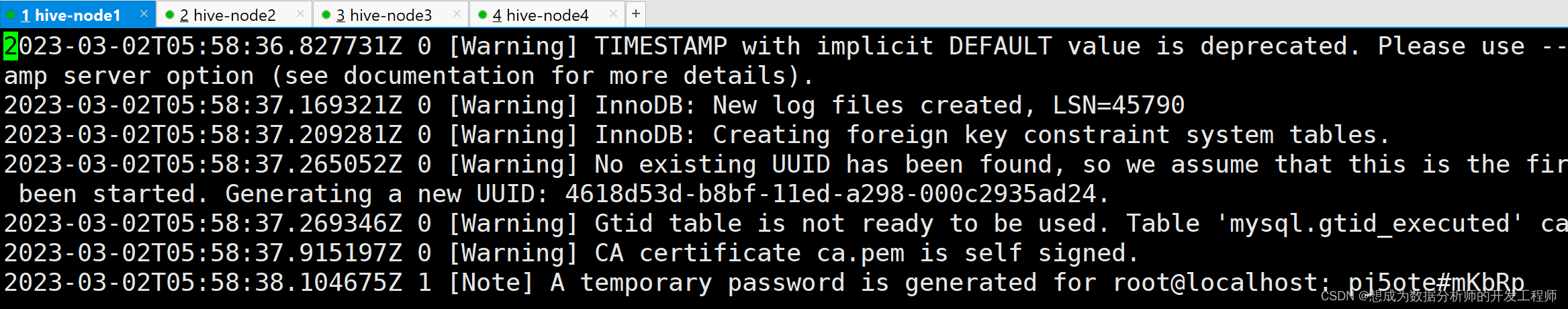

- 初始化数据库

[root@localhost apps]# mysqld --initialize --user=mysql

- 1

- 找到随机密码:

在/var/log/mysqld.log中有一行:

A temporary password is generated for root@localhost,后面就是随机密码

mysql -uroot -p"yAe7QGVJ;HlR"使用随机密码登录系统

- 启动mysql服务:

systemctl start mysqld

- 1

- 登录mysql数据库:

[root@node1 apps]# mysql -uroot -p

Enter password: 输入临时密码

- 1

- 2

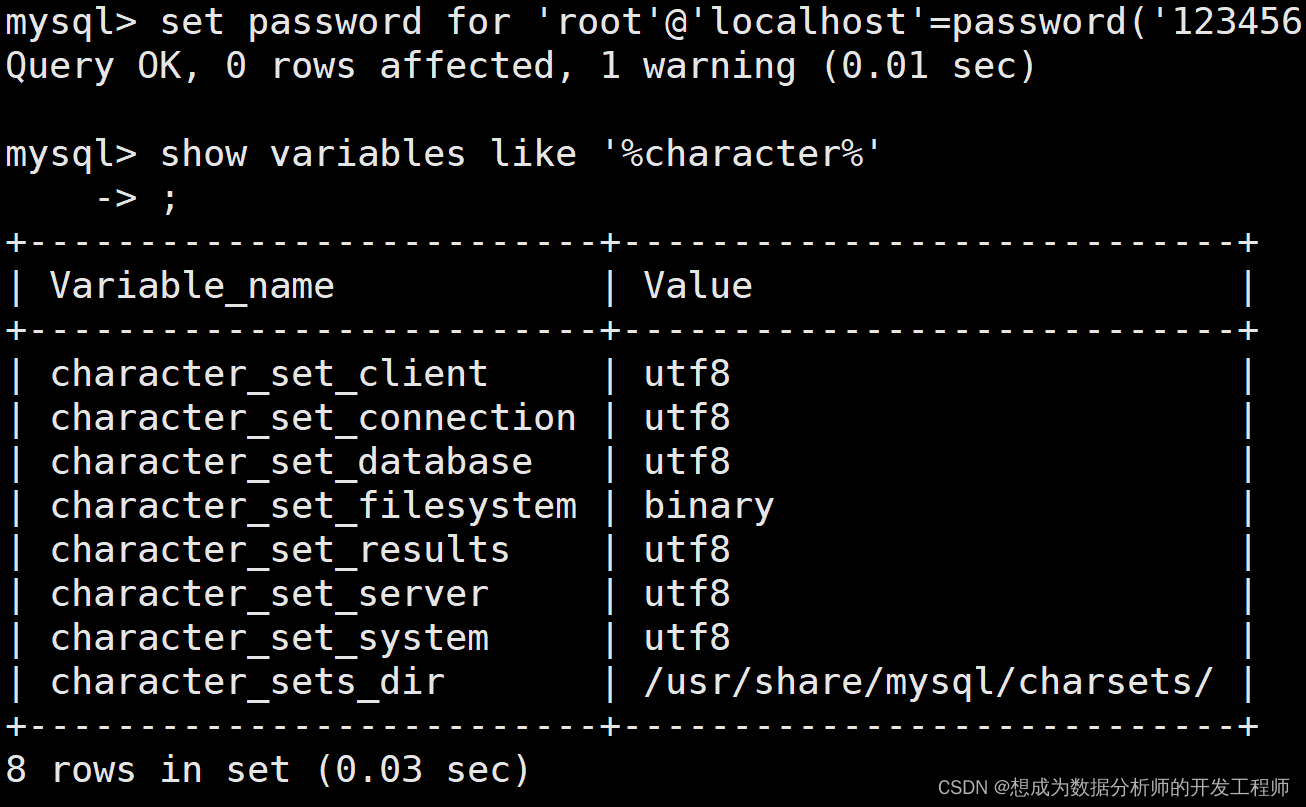

- 修改默认密码

mysql> set password for 'root'@'localhost'=password('123456');

- 1

- 查看编码方式:

mysql> show variables like '%character%';

- 1

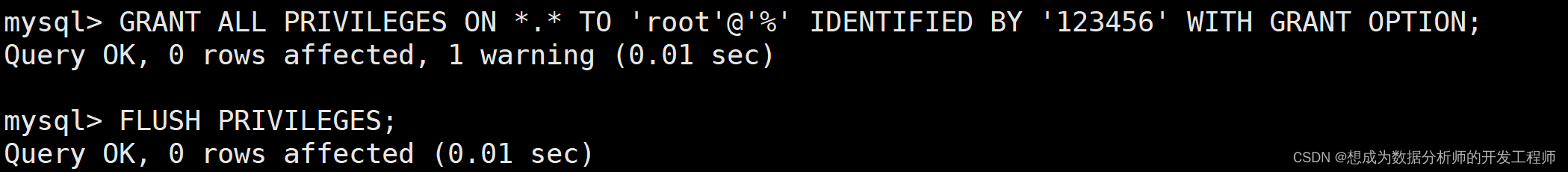

- 给root设置远程登录权限(处理只能在本机使用,不可以远程使用mysql)

mysql>GRANT ALL PRIVILEGES ON *.* TO 'root'@'%' IDENTIFIED BY '123456' WITH GRANT OPTION;

mysql>FLUSH PRIVILEGES;

mysql> exit

Bye

[root@node1 apps]# mysql -uroot -p123456

- 1

- 2

- 3

- 4

- 5

2.4 直连数据库(MySQL)模式安装(node2)

- 把apache-hive-3.1.2-bin.tar.gz上传到node2的/opt/apps目录下

- 解压apache-hive-3.1.2-bin.tar.gz到/opt/module/目录下面

[root@node2 apps]# tar -zxvf /opt/apps/apache-hive-3.1.2-bin.tar.gz -C /opt/

- 1

- 修改apache-hive-3.1.2-bin.tar.gz的名称为hive-3.1.2

[root@node2 apps]# mv /opt/apache-hive-3.1.2-bin/ /opt/hive-3.1.2

- 1

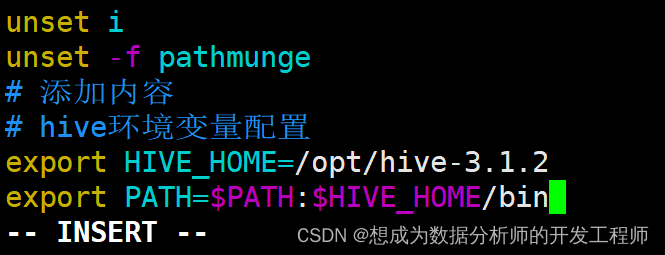

- 修改/etc/profile,添加环境变量

[root@node2 apps]# vim /etc/profile

#添加内容

# hive环境变量配置

exprot HIVE_HOME=/opt/hive-3.1.2

export PATH=$PATH:$HIVE_HOME/bin

#使环境变量生效

[root@node2 hive-3.1.2]# source /etc/profile

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 解决日志Jar包冲突

[root@localhost lib]# pwd

/opt/hive-3.1.2/lib

[root@localhost lib]# mv log4j-slf4j-impl-2.10.0.jar log4j-slf4j-impl-2.10.0.bak_up2021

- 1

- 2

- 3

- 将MySQL的JDBC驱动拷贝到Hive的lib目录下

[root@node2 apps]# cp /opt/apps/mysql-connector-java-5.1.48.jar $HIVE_HOME/lib

[root@localhost conf]# pwd

/opt/hive-3.1.2/conf

[root@localhost conf]# cp hive-default.xml.template hive-site.xml

- 1

- 2

- 3

- 4

- 配置Metastore到MySql

修改hive-site.xml

[root@localhost conf]# vim hive-site.xml

- 1

全部删除后,复制如下内容

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- jdbc连接的URL设置 -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://node1:3306/hive?useSSL=false</value>

</property>

<!-- jdbc连接的Driver类设置-->

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<!-- 指定jdbc连接的username-->

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<!-- 指定jdbc连接mysql的password -->

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

</property>

<!-- Hive默认在HDFS的工作目录 -->

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

<!-- Hive元数据存储的验证 -->

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>

<!-- 元数据存储授权 -->

<property>

<name>hive.metastore.event.db.notification.api.auth</name>

<value>false</value>

</property>

</configuration>

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

linux配置文件格式化内容

首先,进入到ESC命令模式,光标放到左边,ctrl+V,选中ctrl划到最后一行。按shift+:,输入xmllint -format -然后按回车

- 在node1上mysql数据库中创建数据库实例hive、

[root@localhost apps]# mysql -uroot -p123456

mysql: [Warning] Using a password on the command line interface can be insecure.

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 6

Server version: 5.7.28 MySQL Community Server (GPL)

Copyright (c) 2000, 2019, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> create database hive;

Query OK, 1 row affected (0.05 sec)

mysql> use hive

Database changed

mysql> show tables;

Empty set (0.00 sec)

mysql> quit

Bye

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 在node2上初始化hive的元数据到mysql数据库的hive实例下

[root@node2 conf]# schematool -initSchema -dbType mysql -verbose

- 1

- 2

在node1的mysql数据库中查看hive实例下表,会发现多了74张表。

2.5 直连数据库式启动和使用

-

启动hadoop集群,在node1上执行startha.sh(参考HDFS专栏中有源码),如果有namenode没有启动起来,只需要在对应的节点上执行命令:

hdfs --daemon start namenode -

在node2上启动hive:

[root@node2 ~]# hive

- 1

- 使用hive,创建表:node2

hive> show databases;

OK

default

Time taken: 0.923 seconds, Fetched: 1 row(s)

hive> show tables;

OK

Time taken: 0.081 seconds

hive> create table tb_test(id int);

OK

Time taken: 1.001 seconds

hive> show tables;

OK

tb_test

Time taken: 0.087 seconds, Fetched: 1 row(s)

hive> create table test(id int,age int);

OK

Time taken: 0.123 seconds

hive> show tables;

OK

tb_test

test

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

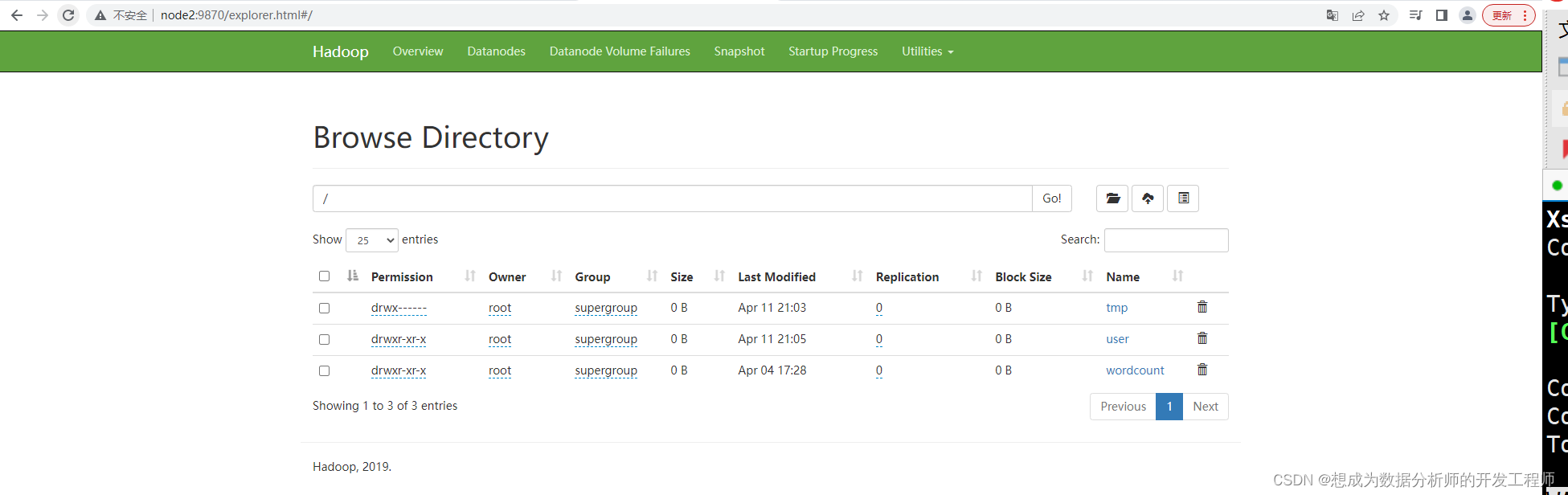

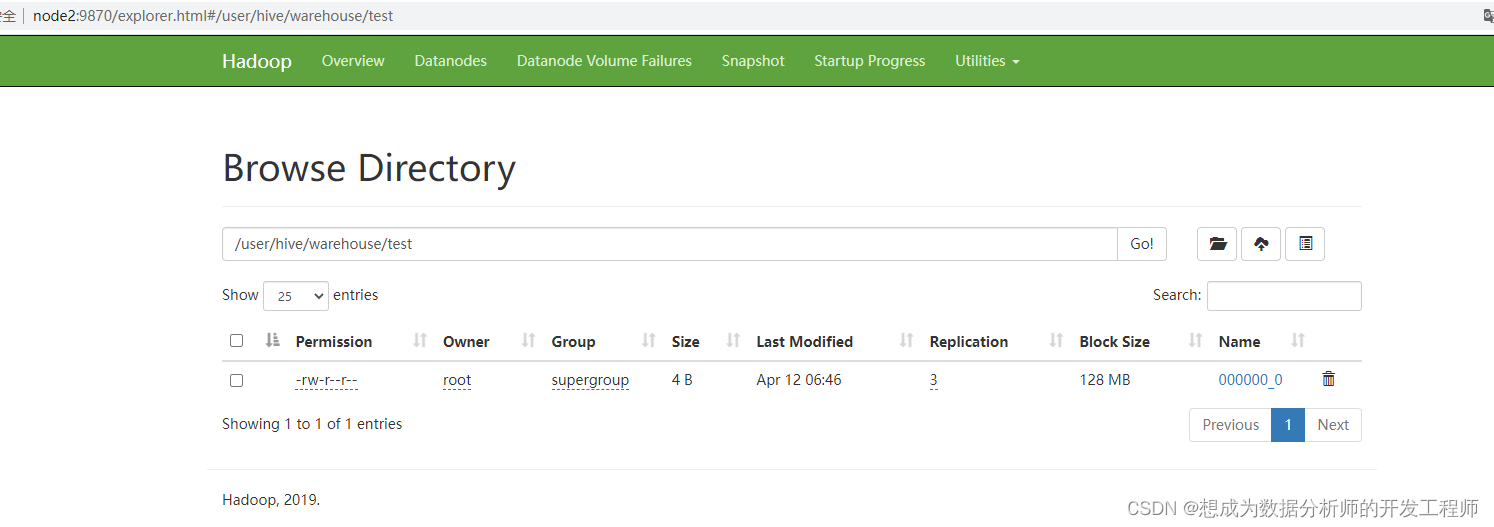

- 访问active的namenode,查看文件列表

http://node2:9870/explorer.html#/user/hive/warehouse

-

表在hdfs也有对应的目录,但是目录下没有数据。

-

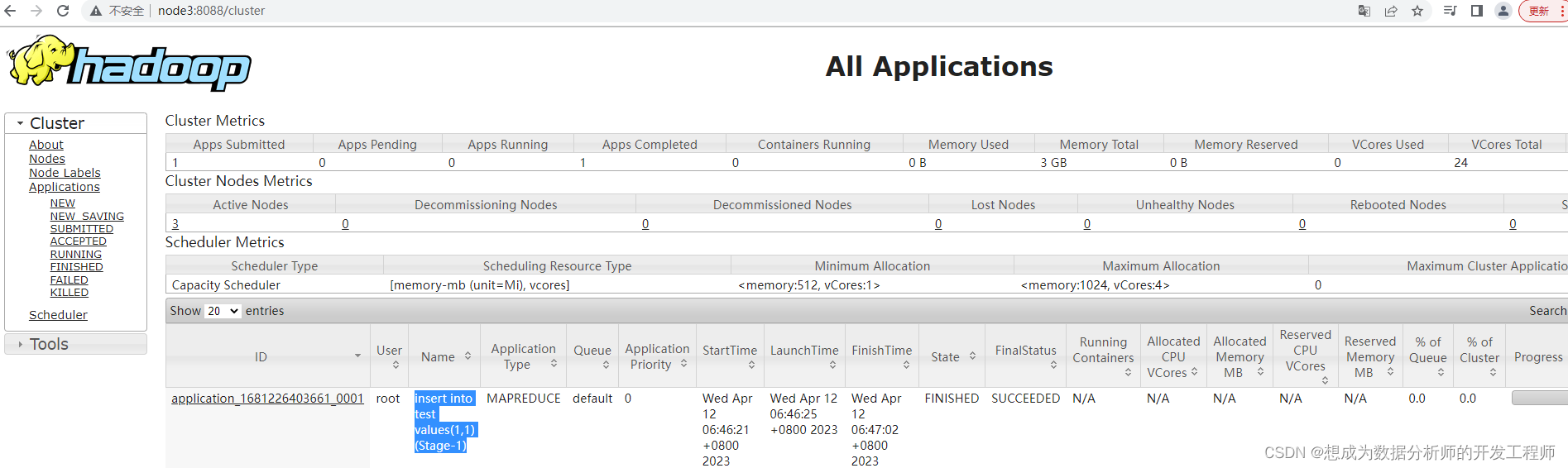

向test表插入数据(超级慢)

hive> insert into test values(1,1);

Query ID = root_20211108214257_de79649c-530d-4420-9236-d8514d3d8928

Total jobs = 3

Launching Job 1 out of 3

Number of reduce tasks determined at compile time: 1

- 1

- 2

- 3

- 4

- 5

- 6

当任务执行完之后,再查看HDFS的文件列表页面,在test目录下多出文件。

- 在node1中连接上mysql查看表中的数据

[root@node1 ~]# mysql -uroot -p123456

mysql> show tables;

+-------------------------------+

| Tables_in_hive |

+-------------------------------+

| COLUMNS_V2 | 保存表中列的数据

......

| DBS | 保存的是数据库实例

......

| TBLS | 保存的表数据

+-------------------------------+

74 rows in set (0.00 sec)

......

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 查看DBS表中的数据:

mysql> select * from DBS;

+-------+-----------------------+--------------------------------------+---------+------------+------------+-----------+

| DB_ID | DESC | DB_LOCATION_URI | NAME | OWNER_NAME | OWNER_TYPE | CTLG_NAME |

+-------+-----------------------+--------------------------------------+---------+------------+------------+-----------+

| 1 | Default Hive database | hdfs://mycluster/user/hive/warehouse | default | public | ROLE | hive |

+-------+-----------------------+--------------------------------------+---------+------------+------------+-----------+

1 row in set (0.00 sec)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 查看TBLS表中数据:

mysql> select * from TBLS;

+--------+-------------+-------+------------------+-------+------------+-----------+-------+----------+---------------+--------------------+--------------------+--------------------+

| TBL_ID | CREATE_TIME | DB_ID | LAST_ACCESS_TIME | OWNER | OWNER_TYPE | RETENTION | SD_ID | TBL_NAME | TBL_TYPE | VIEW_EXPANDED_TEXT | VIEW_ORIGINAL_TEXT | IS_REWRITE_ENABLED |

+--------+-------------+-------+------------------+-------+------------+-----------+-------+----------+---------------+--------------------+--------------------+--------------------+

| 1 | 1681218331 | 1 | 0 | root | USER | 0 | 1 | tb_test | MANAGED_TABLE | NULL | NULL | |

| 2 | 1681218351 | 1 | 0 | root | USER | 0 | 2 | test | MANAGED_TABLE | NULL | NULL | |

+--------+-------------+-------+------------------+-------+------------+-----------+-------+----------+---------------+--------------------+--------------------+--------------------+

2 rows in set (0.00 sec)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 查看表 COLUMNS_V2中的数据:

mysql> select * from COLUMNS_V2;

#表id 描述 列名 类型 顺序

+-------+---------+-------------+-----------+-------------+

| CD_ID | COMMENT | COLUMN_NAME | TYPE_NAME | INTEGER_IDX |

+-------+---------+-------------+-----------+-------------+

| 1 | NULL | id | int | 0 |

| 2 | NULL | age | int | 1 |

| 2 | NULL | id | int | 0 |

+-------+---------+-------------+-----------+-------------+

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 在node2上hive中查看表中的数据:

我们把hive的客户端和服务器放在一台虚拟机

hive> select * from tb_test;

OK

Time taken: 0.443 seconds

hive> select * from test;

OK

1 1

Time taken: 0.281 seconds, Fetched: 1 row(s)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

注意:目前的这种配置方式,是将hive的服务器端和客户端放在一台服务器上(node2)。如果想通过jdbc程序访问该hive是没有办法的,这是因为hive没有开启hiveserver2服务。

2.6 远程服务器模式安装

思考:hive是如何连接到hdfs的?

答案:通过环境变量。

规划:node3为服务器端 node4为客户端

具体安装配置步骤:

- 从node2上将/opt/hive-3.1.2拷贝到node3和node4上

[root@node2 ~]# scp -r /opt/hive-3.1.2/ node3:/opt

[root@node2 ~]# scp -r /opt/hive-3.1.2/ node4:/opt

- 1

- 2

- 修改node3上的hive_site.xml文件

[root@node3 conf]# pwd

/opt/hive-3.1.2/conf

[root@node3 conf]# vim hive-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- jdbc连接的URL设置 将hive改为hive_remote-->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://node1:3306/hive_remote?useSSL=false</value>

</property>

<!-- jdbc连接的Driver类设置-->

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<!-- 指定jdbc连接的username-->

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<!-- 指定jdbc连接mysql的password -->

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

</property>

<!-- Hive默认在HDFS的工作目录 将hive改为hive_remote -->

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive_remote/warehouse</value>

</property>

<!-- Hive元数据存储的验证 -->

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>

<!-- 元数据存储授权 -->

<property>

<name>hive.metastore.event.db.notification.api.auth</name>

<value>false</value>

</property>

</configuration>

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 配置node3上hive的环境变量:

[root@node3 conf]# vim /etc/profile

#在文件的最下位置添加如下内容

# hive环境变量配置

export HIVE_HOME=/opt/hive-3.1.2

export PATH=$PATH:$HIVE_HOME/bin

[root@node3 conf]# source /etc/profile

- 1

- 2

- 3

- 4

- 5

- 6

- 配置node3上hive的环境变量:

[root@node4 conf]# vim /etc/profile

#在文件的最下位置添加如下内容

# hive环境变量配置

export HIVE_HOME=/opt/hive-3.1.2

export PATH=$PATH:$HIVE_HOME/bin

[root@node3 conf]# source /etc/profile

- 1

- 2

- 3

- 4

- 5

- 6

- 在node1的mysql中创建一个数据库实例:hive_remote

[root@node1 ~]# mysql -uroot -p123456

mysql> create database hive_remote;

Query OK, 1 row affected (0.00 sec)

mysql> use hive_remote;

Database changed

mysql> show tables;

Empty set (0.00 sec) # 神马表都没有,根本没有hive的元数据

mysql> quit;

Bye

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 在node3上初始化hive的元数据到mysql数据库的hive_remote实例下

我们在配置文件中配置过路径

[root@node3 conf]# schematool -initSchema -dbType mysql -verbose

- 1

- 2

- 在node1的mysql数据库中查看hive_remote实例下表,会发现多了74张表。到目前位置这个和直连数据的方式是一样,hive的服务器和客户端都在node3上,没有启动Metastore或hiveserver2,通过jdbc也无法访问。

- 在node3上查看被占用的端口号:

[root@node3 conf]# netstat -nlpt

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 0.0.0.0:8042 0.0.0.0:* LISTEN 15641/java

- 1

- 2

- 3

- 4

经过观察发现9083端口没有被占用。

- 在node3上启动metastore服务

[root@node3 ~]# hive --service metastore

2021-11-08 22:43:50: Starting Hive Metastore Server

- 1

- 2

该命令为阻塞命令,如上所示执行命令后无法继续输入命令了。

- 复制一个node3连接的窗口

[root@node3 ~]# netstat -nlpt

tcp6 0 0 :::9083 :::* LISTEN 22358/java

- 1

- 2

- 如果在9步骤不需要窗口的命令被阻塞,也可以通过如下方式:

[root@node3 ~]# hive --service metastore &

[1] 23017

[root@node3 ~]# 2021-11-08 22:50:08: Starting Hive Metastore Server

# 查看是否有hive的服务进程

[root@node3 ~]# ps aux|grep hive

root 23017 126 15.8 1986692 157732 pts/0 Sl 22:50 0:16 /usr/java/default/bin/java -Dproc_jar -Dproc_metastore -Dlog4j.configurationFile=hive-log4j2.properties -Djava.util.logging.config.file=/opt/hive-3.1.2/conf/parquet-logging.properties -Dyarn.log.dir=/opt/hadoop-3.1.3/logs -Dyarn.log.file=hadoop.log -Dyarn.home.dir=/opt/hadoop-3.1.3 -Dyarn.root.logger=INFO,console -Djava.library.path=/opt/hadoop-3.1.3/lib/native -Xmx256m -Dhadoop.log.dir=/opt/hadoop-3.1.3/logs -Dhadoop.log.file=hadoop.log -Dhadoop.home.dir=/opt/hadoop-3.1.3 -Dhadoop.id.str=root -Dhadoop.root.logger=INFO,console -Dhadoop.policy.file=hadoop-policy.xml -Dhadoop.security.logger=INFO,NullAppender org.apache.hadoop.util.RunJar /opt/hive-3.1.2/lib/hive-metastore-3.1.2.jar org.apache.hadoop.hive.metastore.HiveMetaStore

- 1

- 2

- 3

- 4

- 5

- 6

- 7

这样操作的话,步骤10就不再需要新复制一个xshell连接窗口了。

- 修改node4上的hive-site.xml配置文件:

[root@node4 ~]# cd /opt/hive-3.1.2/conf/

[root@node4 conf]# vim hive-site.xml

- 1

- 2

- node4上hive客户端启动hive:

[root@node4 conf]# hive

hive>

- 1

- 2

2.7 远程服务器模式测试

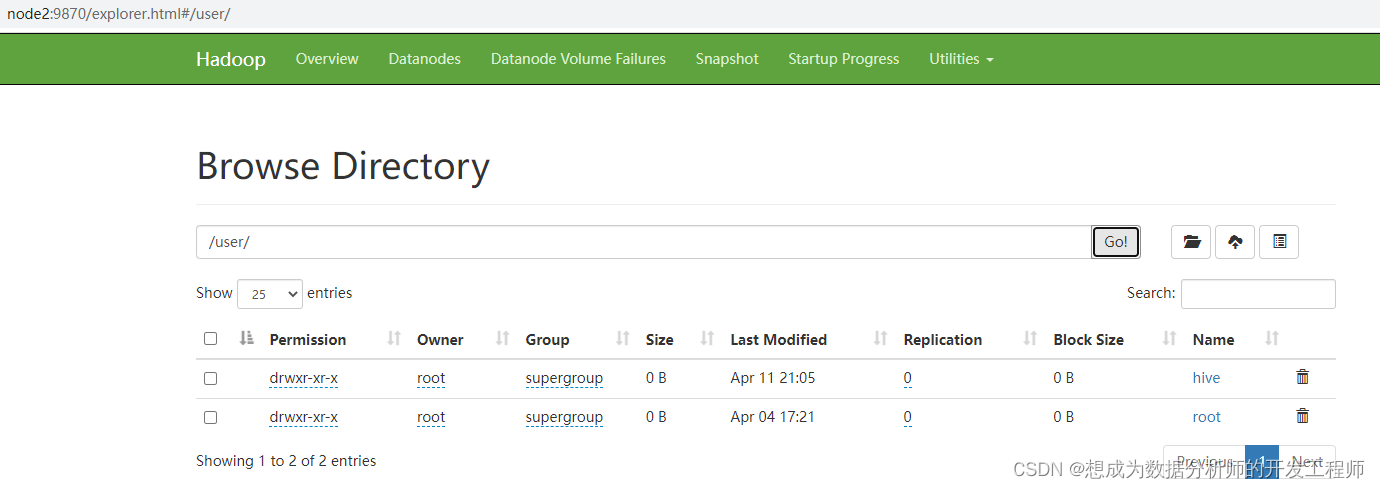

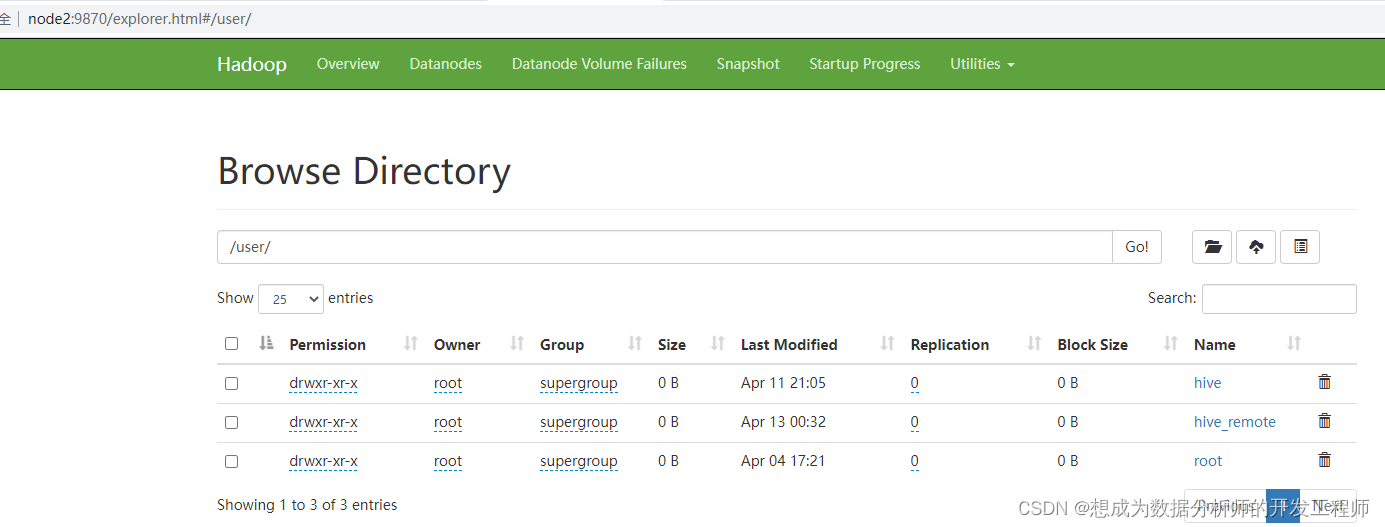

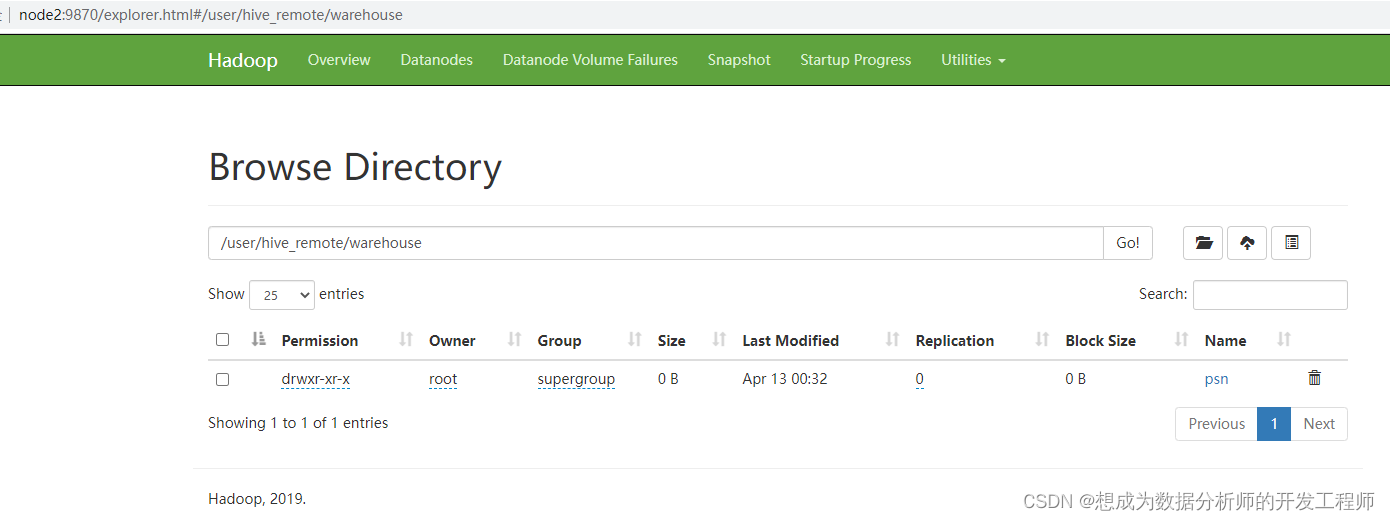

在node4上建表之前查看hdfs文件列表

在node4上hive客户端上创建表psn

hive> create table psn(id int,age int);

OK

Time taken: 4.147 seconds

- 1

- 2

- 3

建表后,再次查看hdfs文件列表

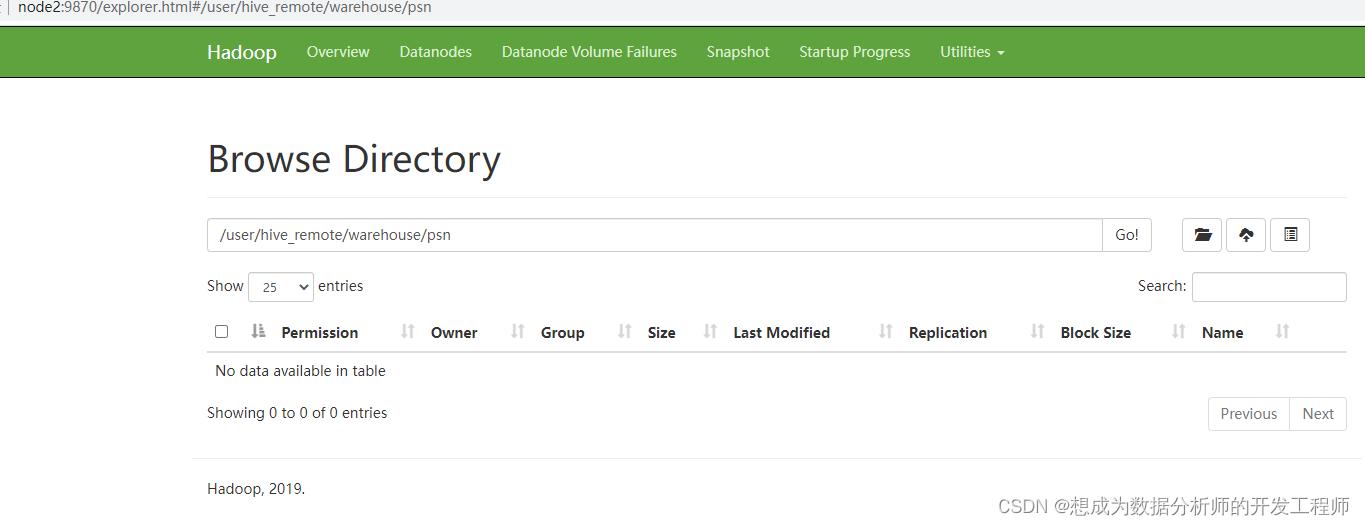

在未添加数据之前,psn目录没有文件:

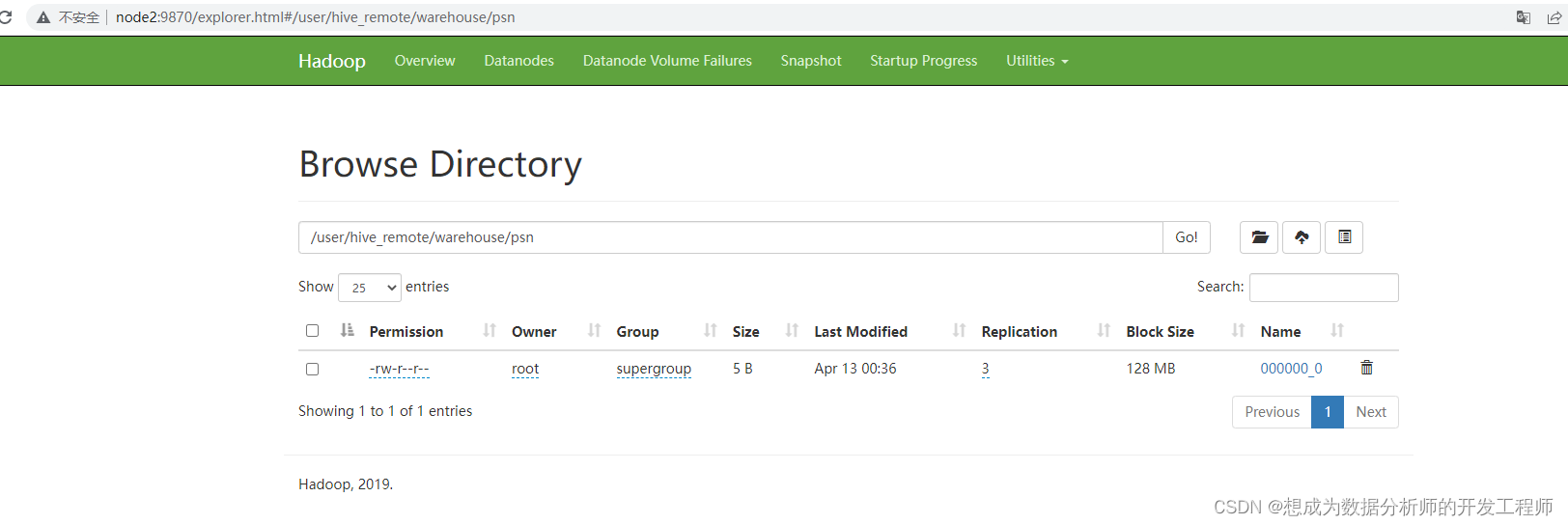

向hive中的psn表添加一条数据:

hive> insert into psn values(1,18);

Query ID = root_20211108232041_de210fde-034f-4282-99ee-7185a8bd4ce3

Total jobs = 3

Launching Job 1 out of 3

- 1

- 2

- 3

- 4

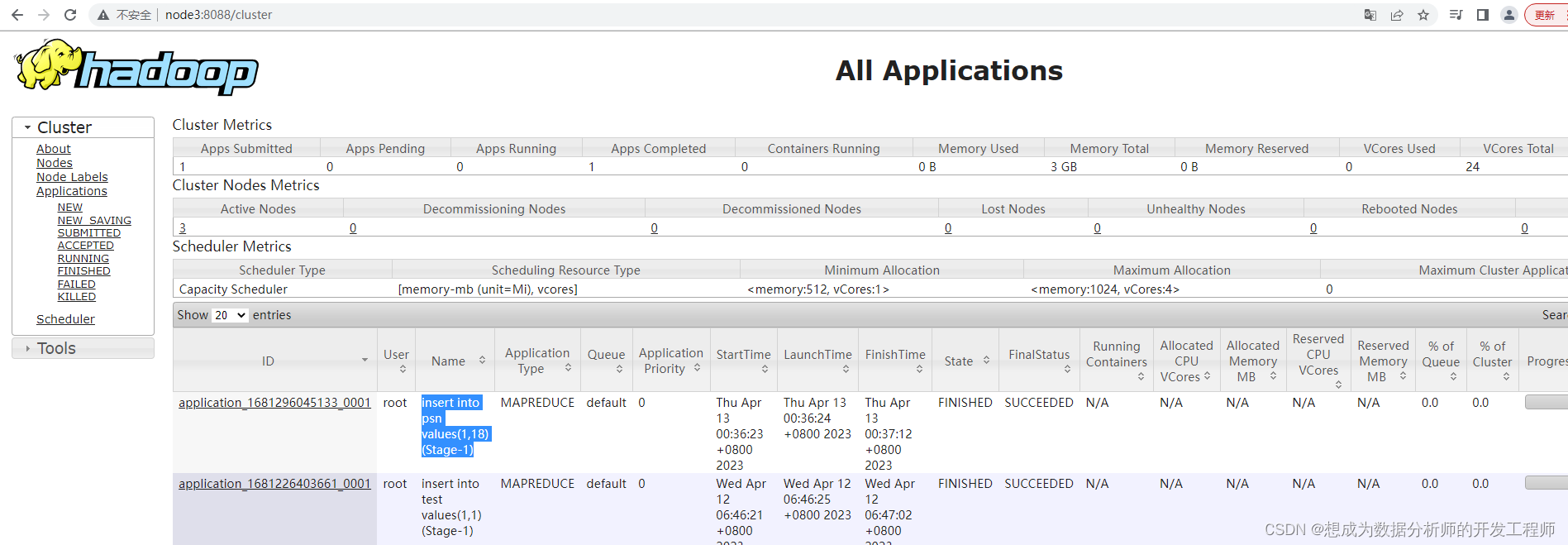

查看yarn的web页面:

通过hdfs命令查看该文件下的内容:(任意一台虚拟机都可以)

[root@node3 conf]# hdfs dfs -cat /user/hive_remote/warehouse/psn/000000_0

2023-04-13 00:41:15,696 INFO sasl.SaslDataTransferClient: SASL encryption trust check: localHostTrusted = false, remoteHostTrusted = false

118

[root@node3 conf]# hdfs dfs -get /user/hive_remote/warehouse/psn/000000_0

2023-04-13 00:41:44,030 INFO sasl.SaslDataTransferClient: SASL encryption trust check: localHostTrusted = false, remoteHostTrusted = false

[root@node3 conf]# cat -A 000000_0

1^A18$

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

^A 它是hive的默认分隔符