LangChain Edition of OpenAI-Translator v2.0: Study Notes

Author: Li_阴宅 | 2024-07-20 07:54:57

Outline:

Three key points:

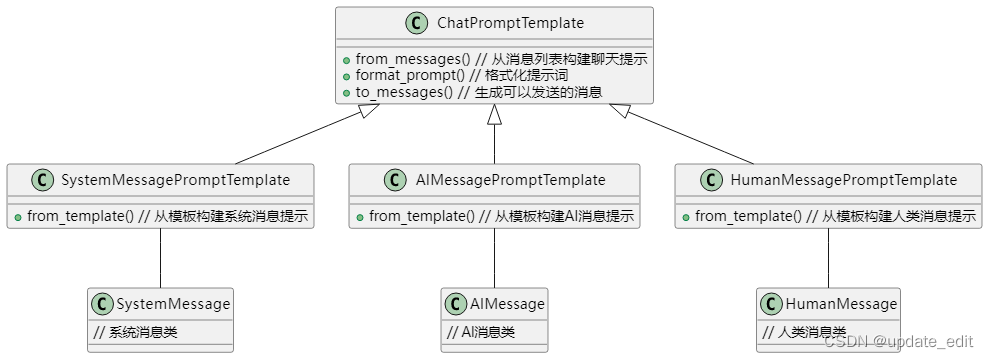

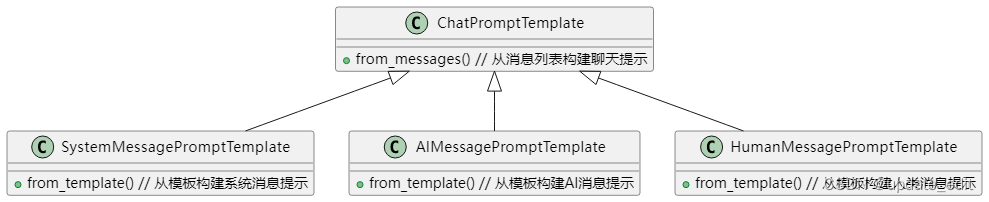

1. A closer look at Chat Model and Chat Prompt Template

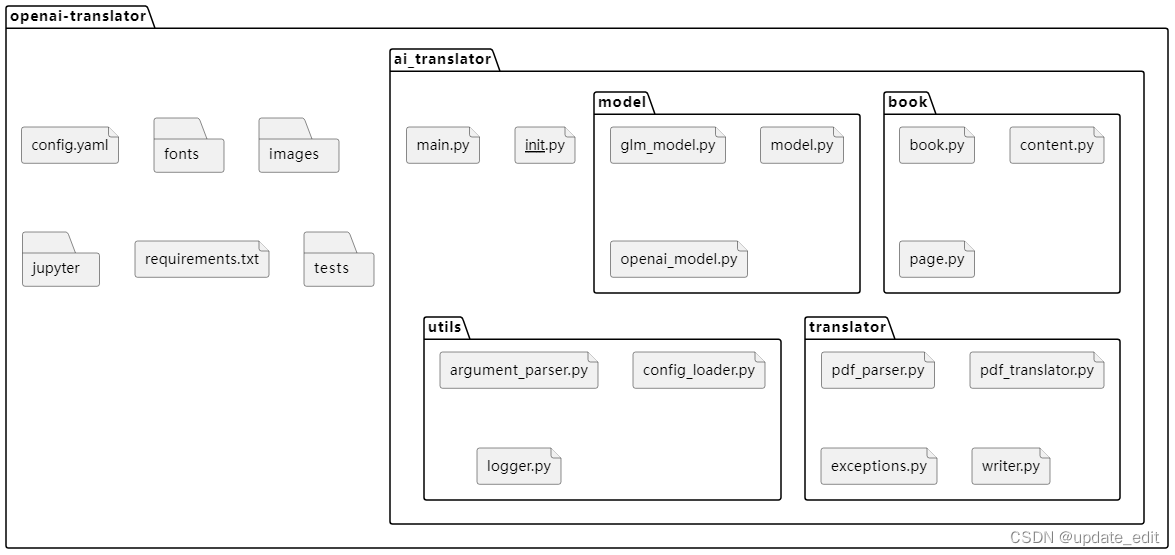

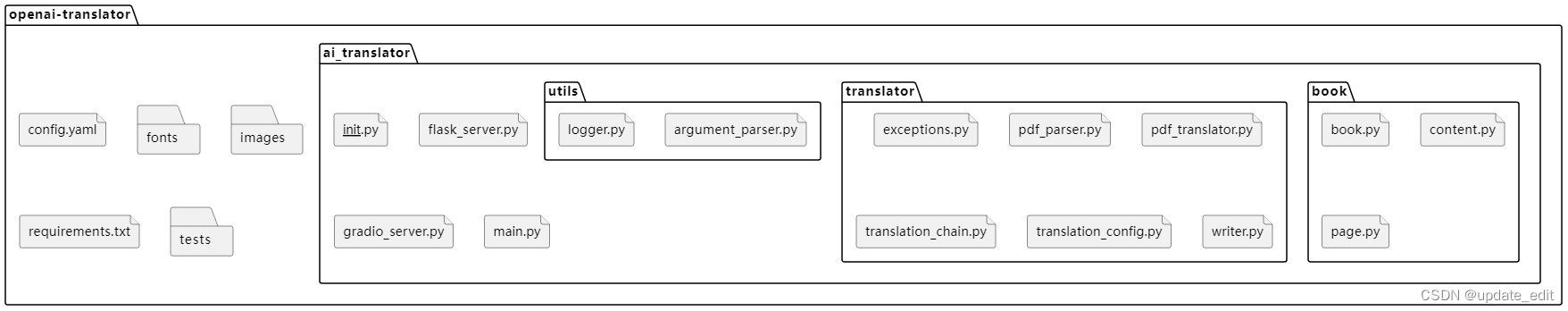

2. Optimizing the OpenAI-Translator architecture with LangChain

3. Developing OpenAI-Translator v2.0 features

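Knowledge points:

- LangChain Chat Model - review LangChain's Chat Model module and use it in the project

- Chat Prompt Template - design the prompt templates used in the project

- Chat Model - use the chat model in the project

- LLMChain - use LLMChain to simplify building the Chat Prompt

- Manage large models through LangChain

- Use TranslationChain

- Gradio - use Gradio as the front end

- Flask - use Flask as the server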

Other:

https://osschat.io/chat?project=LangChain fights magic with magic: a quick way to relearn forgotten points.

https://codebeautify.org/python-formatter-beautifier pretty-prints printed output.

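1. A closer look at Chat Model and Chat Prompt Template

Items (3) and (4) implement the same translation in two ways for comparison: one calls the Chat Model directly, the other uses LLMChain.

(1) Review: how to use a LangChain Chat Model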

    from langchain.chat_models import ChatOpenAI
    from langchain.schema import AIMessage, HumanMessage, SystemMessage

    chat_model = ChatOpenAI(model_name="gpt-3.5-turbo")

    # Conversation history: a system instruction, one completed exchange,
    # and a follow-up question that depends on the earlier answer
    messages = [
        SystemMessage(content="You are a helpful assistant."),
        HumanMessage(content="Who won the World Series in 2020?"),
        AIMessage(content="The Los Angeles Dodgers won the World Series in 2020."),
        HumanMessage(content="Where was it played?"),
    ]
    print(messages)

    # Calling the chat model with a list of messages returns the model's reply message
    result = chat_model(messages)
    print(type(result))
    print(result.content)
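
For orientation, the three message classes correspond to the `system`, `user`, and `assistant` roles of the OpenAI chat format. The sketch below shows that mapping in plain Python with no LangChain dependency; the `to_openai_dicts` helper and the `(message_type, content)` tuples are illustrative assumptions, not LangChain API.

```python
# Illustrative only: how a chat history maps onto OpenAI-style role/content dicts.
ROLE_BY_MESSAGE_TYPE = {
    "SystemMessage": "system",
    "HumanMessage": "user",
    "AIMessage": "assistant",
}


def to_openai_dicts(history):
    """Convert (message_type, content) pairs into role/content dicts."""
    return [
        {"role": ROLE_BY_MESSAGE_TYPE[message_type], "content": content}
        for message_type, content in history
    ]


history = [
    ("SystemMessage", "You are a helpful assistant."),
    ("HumanMessage", "Who won the World Series in 2020?"),
    ("AIMessage", "The Los Angeles Dodgers won the World Series in 2020."),
    ("HumanMessage", "Where was it played?"),
]
payload = to_openai_dicts(history)
print([message["role"] for message in payload])
```

The earlier exchange is sent along with the new question, which is what lets the model resolve "it" in the final message.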

(2) Designing a translation prompt with Chat Prompt Template

    from langchain.schema import AIMessage, HumanMessage, SystemMessage
    from langchain.prompts.chat import (
        ChatPromptTemplate,
        SystemMessagePromptTemplate,
        AIMessagePromptTemplate,
        HumanMessagePromptTemplate,
    )

    # Define the system message
    template = (
        """You are a translation expert, proficient in various languages. \n
        Translate {language1} to {language2}
        """
    )
    # Pass the multi-line prompt above into the system prompt
    system_message_prompt = SystemMessagePromptTemplate.from_template(template=template)
    print(system_message_prompt)

    # The text to translate is carried by the human message
    human_template = "{text}"
    human_message_prompt = HumanMessagePromptTemplate.from_template(human_template)
    print(human_message_prompt)

    # Build the translation prompt from the system and human prompts
    # TODO: get a deeper understanding of the ChatPromptTemplate module
    chat_prompt_template = ChatPromptTemplate.from_messages(
        [system_message_prompt, human_message_prompt]
    )
    print(chat_prompt_template)

    # Fill the template variables into the prompt
    # chat_prompt_template.format_prompt(
    #     language1="English",
    #     language2="Chinese",
    #     text="I love programming."
    # )
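
Conceptually, a chat prompt template is one format string per role, filled from a shared set of variables. A minimal sketch of that idea using only `str.format` follows; `SYSTEM_TEMPLATE`, `HUMAN_TEMPLATE`, and `format_messages` are hypothetical names chosen for illustration and are not part of LangChain.

```python
# Illustrative stand-in for a chat prompt template: one format string per role.
SYSTEM_TEMPLATE = (
    "You are a translation expert, proficient in various languages.\n"
    "Translate {language1} to {language2}."
)
HUMAN_TEMPLATE = "{text}"


def format_messages(**variables):
    """Fill both templates from the same variables and return (role, content) pairs."""
    return [
        ("system", SYSTEM_TEMPLATE.format(**variables)),
        ("human", HUMAN_TEMPLATE.format(**variables)),
    ]


messages = format_messages(
    language1="English",
    language2="Chinese",
    text="I love programming.",
)
for role, content in messages:
    print(f"{role}: {content}")
```

Keeping the instruction in the system template and the payload in the human template means the same template can be reused for any language pair and any input text.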

(3) Bilingual translation with the Chat Model

    # Use to_messages() to turn the filled prompt into messages that can be sent
    chat_prompt = chat_prompt_template.format_prompt(
        language1="English",
        language2="Chinese",
        text="I love programming."
    ).to_messages()

    # Translate by calling the Chat Model directly
    from langchain.chat_models import ChatOpenAI

    translate_model = ChatOpenAI(model_name="gpt-3.5-turbo", temperature=0)
    translate_result = translate_model(chat_prompt)
    print(translate_result.content)

(4) Using LLMChain to simplify building the Chat Prompt

    # Build the translation prompt from the system and human prompts
    # TODO: get a deeper understanding of the ChatPromptTemplate module
    chat_prompt_template = ChatPromptTemplate.from_messages(
        [system_message_prompt, human_message_prompt]
    )

    from langchain import LLMChain
    from langchain.chat_models import ChatOpenAI

    translate_model = ChatOpenAI(model_name="gpt-3.5-turbo", temperature=0)

    # The chain formats the prompt and calls the model in a single run() call
    translation_chain = LLMChain(llm=translate_model, prompt=chat_prompt_template)

    chain_result = translation_chain.run(
        {
            "text": "hello world!",
            "language1": "English",
            "language2": "Chinese",
        }
    )
    print(chain_result)

    # chain_result = translation_chain.run(
    #     {
    #         "text": "hello world!",
    #         "language1": "English",
    #         "language2": "French",
    #     }
    # )
    # print(chain_result)
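
What the chain saves compared with item (3) is the explicit "format the prompt, then call the model" sequence. The sketch below reproduces that composition with a stub model so it runs offline; `TinyChain`, `format_prompt`, and `fake_llm` are hypothetical names for illustration and make no claim about how LLMChain is implemented internally.

```python
class TinyChain:
    """Hypothetical miniature of the chain idea: prompt formatting plus a model call."""

    def __init__(self, llm, prompt_formatter):
        self.llm = llm
        self.prompt_formatter = prompt_formatter

    def run(self, variables):
        # Step 1: fill the prompt; step 2: pass the messages to the model.
        messages = self.prompt_formatter(**variables)
        return self.llm(messages)


def format_prompt(text, language1, language2):
    """Build (role, content) pairs for a translation request."""
    return [
        ("system", f"Translate {language1} to {language2}."),
        ("human", text),
    ]


def fake_llm(messages):
    """Stand-in for a real chat model: tags the human message instead of translating."""
    human_text = messages[-1][1]
    return f"[translated] {human_text}"


chain = TinyChain(llm=fake_llm, prompt_formatter=format_prompt)
chain_result = chain.run(
    {"text": "hello world!", "language1": "English", "language2": "Chinese"}
)
print(chain_result)
```

Because the caller only supplies a dictionary of variables, switching the target language is a one-key change, as in the commented-out French example above.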

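2. Optimizing the OpenAI-Translator architecture with LangChain

- Before refactoring: v1.0 structure:

- After refactoring: v2.0 structure:

(1) LangChain takes over large-model management

Most of the v1.0 model module is now handled by LangChain:

This is similar to item (3) in Section 1.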

    from langchain.chat_models import ChatOpenAI
    from langchain.chains import LLMChain
    from langchain.prompts.chat import (
        ChatPromptTemplate,
        SystemMessagePromptTemplate,
        HumanMessagePromptTemplate,
        AIMessagePromptTemplate,
    )

    from utils import LOG


    class Translation:
        def __init__(self, model_name: str = "gpt-3.5-turbo", verbose: bool = True):
            # The translation instruction is always carried by the system role
            template = (
                """You are a translation expert, proficient in various languages. \n
                Translates {source_language} to {target_language}."""
            )
            system_message_prompt = SystemMessagePromptTemplate.from_template(template)

            # The text to translate is carried by the human role
            human_template = "{text}"
            human_message_prompt = HumanMessagePromptTemplate.from_template(human_template)

            # Build the ChatPromptTemplate from the system and human prompts
            chat_prompt_template = ChatPromptTemplate.from_messages(
                [system_message_prompt, human_message_prompt]
            )

            # Set temperature to 0 to keep translation results stable
            chat = ChatOpenAI(model_name=model_name, temperature=0, verbose=verbose)

            # Fixed: the keyword argument is `prompt` (the original had the typo `promppt`)
            self.chain = LLMChain(llm=chat, prompt=chat_prompt_template, verbose=verbose)

        def run(self, text: str, source_language: str, target_language: str) -> (str, bool):
            """Return (translated_text, success) instead of raising on failure."""
            result = ""
            try:
                result = self.chain.run({
                    "text": text,
                    "source_language": source_language,
                    "target_language": target_language,
                })
            except Exception as e:
                LOG.error(f"An error occurred during translation: {e}")
                return result, False

            return result, True
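
The `run` method above reports failure through a `(result, success)` pair rather than an exception, so callers must check the flag. The sketch below isolates that contract with a stub chain so both paths can be exercised offline; `StubChain` and `safe_run` are hypothetical, and the standard `logging` module stands in for the project's own `LOG`.

```python
import logging

LOG = logging.getLogger("translator_sketch")  # stand-in for the project's LOG


class StubChain:
    """Hypothetical chain that either echoes its input or simulates an API failure."""

    def __init__(self, fail=False):
        self.fail = fail

    def run(self, variables):
        if self.fail:
            raise RuntimeError("simulated API failure")
        return (
            f"{variables['text']} "
            f"({variables['source_language']} -> {variables['target_language']})"
        )


def safe_run(chain, text, source_language, target_language):
    """Mirror the (result, success) contract: never raise, report failure via the flag."""
    try:
        result = chain.run({
            "text": text,
            "source_language": source_language,
            "target_language": target_language,
        })
    except Exception as e:
        LOG.error("An error occurred during translation: %s", e)
        return "", False
    return result, True


ok = safe_run(StubChain(), "hello", "English", "Chinese")
failed = safe_run(StubChain(fail=True), "hello", "English", "Chinese")
print(ok, failed)
```

A caller translating a document piece by piece can use the flag to skip or retry a failed piece without aborting the whole job.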

(2) Focus on the application's own prompt design

Implement the translation interface with TranslationChain

Simpler, unified configuration management

3. Developing OpenAI-Translator v2.0 features

Designing and implementing a graphical interface with Gradio
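
The notes do not include the Gradio code, so the following is only a minimal sketch of what a text-based front end could look like, assuming Gradio is installed. The `translate_text` handler is a placeholder that formats its input instead of calling the translation chain, and the three-textbox layout is an assumption, not the project's actual interface.

```python
def translate_text(text, source_language, target_language):
    """Placeholder handler; a real app would call the translation chain here."""
    if not text.strip():
        return "Please enter some text."
    return f"[{source_language} -> {target_language}] {text}"


def build_demo():
    """Wire the handler into a Gradio Interface (requires `pip install gradio`)."""
    import gradio as gr  # imported lazily so the handler can be used without Gradio

    return gr.Interface(
        fn=translate_text,
        inputs=[
            gr.Textbox(label="Text"),
            gr.Textbox(label="Source language", value="English"),
            gr.Textbox(label="Target language", value="Chinese"),
        ],
        outputs=gr.Textbox(label="Translation"),
        title="OpenAI-Translator v2.0 (sketch)",
    )


# To start the UI locally: build_demo().launch()
print(translate_text("hello world!", "English", "Chinese"))
```

Keeping the handler a plain function makes it easy to swap the placeholder for a call into the translation chain later.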

Designing and implementing a web server with Flask
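
The server code is likewise not shown in the notes. As a rough sketch under stated assumptions, a JSON endpoint could be structured as below, assuming Flask is installed; the `/translate` route, the payload field names, and `handle_translation` are all hypothetical, and the handler returns a formatted placeholder rather than a real translation.

```python
def handle_translation(payload):
    """Validate a JSON payload and return (response_body, http_status)."""
    required = ("text", "source_language", "target_language")
    missing = [key for key in required if not payload.get(key)]
    if missing:
        return {"error": f"missing fields: {', '.join(missing)}"}, 400
    # Placeholder: a real server would call the translation chain here.
    translated = (
        f"[{payload['source_language']} -> {payload['target_language']}] "
        f"{payload['text']}"
    )
    return {"translation": translated}, 200


def create_app():
    """Build the Flask app (requires `pip install flask`)."""
    from flask import Flask, jsonify, request  # imported lazily

    app = Flask(__name__)

    @app.route("/translate", methods=["POST"])
    def translate():
        body, status = handle_translation(request.get_json(silent=True) or {})
        return jsonify(body), status

    return app


# To serve locally: create_app().run(port=5000)
print(handle_translation({"text": "hi"}))
```

Separating request validation from the Flask route keeps the logic testable without starting a server.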

