LangChain Tutorial | LangChain + OpenAI + PostgreSQL (PGVector): a simple, beginner-friendly end-to-end walkthrough

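Prerequisites:

Before reading this article, you should know the basics of LangChain and be familiar with its document loaders and text splitters; otherwise the material will be hard to follow.

If you have no background at all, start with this column: 人工智能 | 大模型 | 实战与教程 (AI | Large Models | Practice and Tutorials).

This article shows how to use the Postgres vector database together with LangChain. The related fundamentals are covered in the column, already broken into separate articles that you can work through one at a time. Recommended order: LangChain basics, document loaders, then text splitters.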

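PGVector is an open-source vector similarity search extension for Postgres.

It supports:

- exact and approximate nearest neighbor search
- L2 distance, inner product, and cosine distance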
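To make the three distance measures listed above concrete, here is a minimal pure-Python sketch. The function names and the toy vectors are illustrative assumptions and are not part of pgvector or LangChain; real embeddings have hundreds or thousands of dimensions.

```python
import math


def l2_distance(a, b):
    """Euclidean (L2) distance: smaller means closer."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def inner_product(a, b):
    """Inner (dot) product: larger means more similar."""
    return sum(x * y for x, y in zip(a, b))


def cosine_distance(a, b):
    """Cosine distance = 1 - cosine similarity: smaller means closer."""
    norm_a = math.sqrt(inner_product(a, a))
    norm_b = math.sqrt(inner_product(b, b))
    return 1.0 - inner_product(a, b) / (norm_a * norm_b)


# Toy 2-dimensional "embeddings" (hypothetical values for illustration)
query = [1.0, 0.0]
doc_same_direction = [2.0, 0.0]
doc_orthogonal = [0.0, 1.0]

for name, doc in [("same direction", doc_same_direction), ("orthogonal", doc_orthogonal)]:
    print(name, l2_distance(query, doc), inner_product(query, doc), cosine_distance(query, doc))
```

Note that the document pointing in the same direction as the query has a cosine distance of 0 even though its L2 distance is not 0, which is why the choice of metric matters when embeddings are not normalized.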

Install the base libraries:

    # Install the required packages (the %pip magic assumes a Jupyter notebook)
    %pip install --upgrade --quiet pgvector
    %pip install --upgrade --quiet psycopg2-binary
    %pip install --upgrade --quiet tiktoken
    %pip install --upgrade --quiet openai
    # The imports and load_dotenv() calls in this article also need these packages
    %pip install --upgrade --quiet langchain langchain-community langchain-openai langchain-text-splitters python-dotenv

    from langchain.docstore.document import Document
    from langchain_community.document_loaders import TextLoader
    from langchain_community.vectorstores.pgvector import PGVector
    from langchain_community.embeddings.openai import OpenAIEmbeddings
    from langchain_text_splitters import RecursiveCharacterTextSplitter

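We want to use OpenAIEmbeddings, so we need an OpenAI API key.

Tip: because of policy restrictions in mainland China, consider purchasing a key from a proxy provider. I won't recommend a specific one here.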

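em.py sets the environment variable:

    import getpass
    import os

    # Prompt for the key so that it is not hard-coded in the source
    os.environ["OPENAI_API_KEY"] = getpass.getpass("OpenAI API Key:")

Load the environment variables. The openai library reads OPENAI_API_KEY automatically:

    # Load environment variables from a .env file, if one exists
    from dotenv import load_dotenv

    load_dotenv()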

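The text used here is the article: 人民财评:花香阵阵游人醉,“春日经济”热力足

Save the text from the link to: state_of_the_union.txt

Splitting Chinese documents calls for the recursive character splitter, RecursiveCharacterTextSplitter, together with Chinese separators such as the full stop (。), comma (,), enumeration comma (、), and exclamation mark (!).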
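The idea behind recursive splitting with Chinese separators can be sketched in plain Python. This is a simplified illustration, not LangChain's implementation: `recursive_split` is a hypothetical helper, it ignores chunk overlap, and the sample sentence is made up.

```python
def recursive_split(text, separators, chunk_size):
    """Split text into chunks of at most chunk_size characters.

    Separators are tried in order; a piece that is still too long is
    split again with the remaining, finer-grained separators.
    """
    if len(text) <= chunk_size:
        return [text] if text else []
    if not separators:
        # No separators left: fall back to fixed-width slices
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]

    sep, rest = separators[0], separators[1:]
    if sep not in text:
        return recursive_split(text, rest, chunk_size)

    # Keep each separator attached to the piece that precedes it
    parts = text.split(sep)
    pieces = [p + sep for p in parts[:-1]]
    if parts[-1]:
        pieces.append(parts[-1])

    chunks, current = [], ""
    for piece in pieces:
        if len(current) + len(piece) <= chunk_size:
            current += piece  # merge small pieces into one chunk
            continue
        if current:
            chunks.append(current)
            current = ""
        if len(piece) > chunk_size:
            chunks.extend(recursive_split(piece, rest, chunk_size))
        else:
            current = piece
    if current:
        chunks.append(current)
    return chunks


sample = "春天来了。花开了,游人很多!大家都很开心。"
chunks = recursive_split(sample, ["。", "!", ","], chunk_size=10)
for chunk in chunks:
    print(len(chunk), chunk)
```

Without the Chinese separators, a splitter configured only for whitespace and newlines would find nothing to split on in text like this and would have to cut mid-sentence.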

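    # Adjust the path to wherever you saved state_of_the_union.txt
    loader = TextLoader("../../modules/state_of_the_union.txt")
    documents = loader.load()
    # Pass Chinese separators so that chunks break at sentence and clause boundaries
    text_splitter = RecursiveCharacterTextSplitter(
        separators=["\n\n", "\n", "。", "!", ",", "、", ""],
        chunk_size=1000,
        chunk_overlap=0,
    )
    docs = text_splitter.split_documents(documents)

    embeddings = OpenAIEmbeddings()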

Connecting to the Postgres vector store

    # PGVector needs the connection string to the database.
    CONNECTION_STRING = "postgresql+psycopg2://harrisonchase@localhost:5432/test3"

The PGVector class also has a more intuitive built-in method, connection_string_from_db_params(), which lets you build the string from environment variables instead:

    import os

    CONNECTION_STRING = PGVector.connection_string_from_db_params(
        driver=os.environ.get("PGVECTOR_DRIVER", "psycopg2"),
        host=os.environ.get("PGVECTOR_HOST", "localhost"),
        port=int(os.environ.get("PGVECTOR_PORT", "5432")),
        database=os.environ.get("PGVECTOR_DATABASE", "postgres"),
        user=os.environ.get("PGVECTOR_USER", "postgres"),
        password=os.environ.get("PGVECTOR_PASSWORD", "postgres"),
    )
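For reference, the connection string is a SQLAlchemy-style URL of the form `postgresql+driver://user:password@host:port/database`. The sketch below assembles one by hand. `build_connection_string` is a hypothetical helper, and the URL-encoding of the user name and password is an added assumption for credentials that contain characters such as `@` or `/`; it is not a claim about what the PGVector method does internally.

```python
from urllib.parse import quote_plus


def build_connection_string(driver, host, port, database, user, password):
    """Hypothetical helper: assemble a SQLAlchemy-style Postgres URL."""
    # quote_plus escapes characters that would otherwise break the URL
    return (
        f"postgresql+{driver}://{quote_plus(user)}:{quote_plus(password)}"
        f"@{host}:{port}/{database}"
    )


conn = build_connection_string(
    driver="psycopg2",
    host="localhost",
    port=5432,
    database="postgres",
    user="postgres",
    password="p@ss/word",  # made-up password containing special characters
)
print(conn)
```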

Similarity search with Euclidean distance (default)

    # The PGVector module will try to create a table with the name of the collection.
    # So, make sure that the collection name is unique and the user has the permission to create a table.

    COLLECTION_NAME = "state_of_the_union_test"

    db = PGVector.from_documents(
        embedding=embeddings,
        documents=docs,
        collection_name=COLLECTION_NAME,
        connection_string=CONNECTION_STRING,
    )

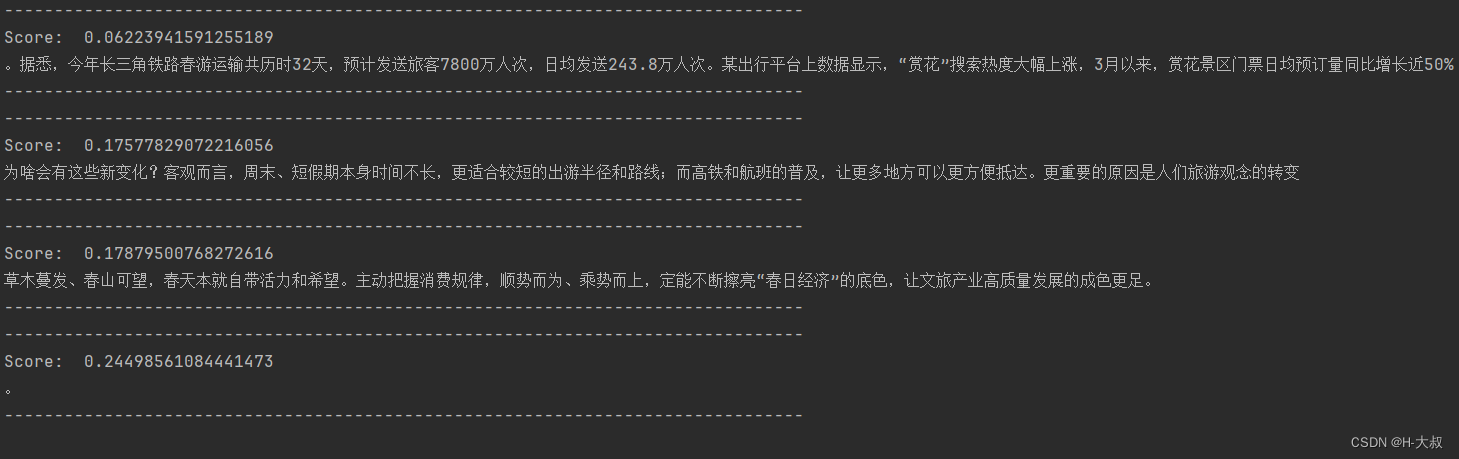

    # Query: "How many days did this year's Yangtze River Delta railway spring-outing transport period last?"
    query = "今年长三角铁路春游运输共历时多少天?"
    docs_with_score = db.similarity_search_with_score(query)
    for doc, score in docs_with_score:
        print("-" * 80)
        print("Score: ", score)
        print(doc.page_content)
        print("-" * 80)

Output:

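Maximal marginal relevance (MMR) search

Maximal marginal relevance optimizes for both similarity to the query and diversity among the selected documents.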
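The trade-off between similarity and diversity can be illustrated with a small greedy selection loop. This is a sketch of the general MMR idea under stated assumptions, not the code PGVector runs: `mmr_select`, the `lambda_mult` weighting, and the toy 2-dimensional vectors are all illustrative.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def mmr_select(query_vec, doc_vecs, k=2, lambda_mult=0.5):
    """Greedily pick k document indices.

    Each step scores a candidate as
        lambda_mult * (similarity to query)
        - (1 - lambda_mult) * (max similarity to already selected docs)
    so lambda_mult=1.0 means pure relevance and lower values favor diversity.
    """
    selected = []
    candidates = list(range(len(doc_vecs)))
    while candidates and len(selected) < k:
        best_idx, best_score = None, -math.inf
        for i in candidates:
            relevance = cosine_similarity(query_vec, doc_vecs[i])
            redundancy = max(
                (cosine_similarity(doc_vecs[i], doc_vecs[j]) for j in selected),
                default=0.0,
            )
            score = lambda_mult * relevance - (1 - lambda_mult) * redundancy
            if score > best_score:
                best_idx, best_score = i, score
        selected.append(best_idx)
        candidates.remove(best_idx)
    return selected


query_vec = [1.0, 0.0]
doc_vecs = [
    [0.9, 0.1],    # doc 0: very close to the query
    [0.9, 0.12],   # doc 1: near duplicate of doc 0
    [0.7, -0.7],   # doc 2: less similar, but points somewhere else
]

print(mmr_select(query_vec, doc_vecs, k=2, lambda_mult=0.5))  # balanced
print(mmr_select(query_vec, doc_vecs, k=2, lambda_mult=1.0))  # relevance only
```

With the balanced setting, the near duplicate is skipped in favor of the more distinct document; with relevance only, the two closest documents are returned even though they say almost the same thing.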

    docs_with_score = db.max_marginal_relevance_search_with_score(query)
    for doc, score in docs_with_score:
        print("-" * 80)
        print("Score: ", score)
        print(doc.page_content)
        print("-" * 80)

Printed result:

Working with the vectorstore

Above, we created a vectorstore from scratch. Often, however, we want to work with an existing vectorstore. To do that, we can initialize it directly.

    store = PGVector(
        collection_name=COLLECTION_NAME,
        connection_string=CONNECTION_STRING,
        embedding_function=embeddings,
    )

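Adding documents

We can add documents to the existing vectorstore.

    # Document text: "This year's spring-outing revenue was considerable, with actual growth of 30%."
    store.add_documents([Document(page_content="今年春游创收可观,实际增长30%。")])
    # Query: "How much did spring outings grow?"
    docs_with_score = db.similarity_search_with_score("春游增长多少")
    print(docs_with_score[0])
    print(docs_with_score[1])

Overwriting a vectorstore

If you have an existing collection, you can overwrite it by calling from_documents with pre_delete_collection=True.

    db = PGVector.from_documents(
        documents=docs,
        embedding=embeddings,
        collection_name=COLLECTION_NAME,
        connection_string=CONNECTION_STRING,
        pre_delete_collection=True,
    )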

Using a VectorStore as a retriever

    retriever = store.as_retriever()

Complete code, combined with OpenAI

It includes detailed steps and comments; copy it and it should run as-is.
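Before the full listing, it may help to see the data flow of its retrieval chain with the LangChain pieces replaced by plain functions. Everything here is a hypothetical stand-in: `fake_retriever` returns made-up chunks instead of querying PGVector, `pipe` imitates the `|` composition, the context is joined with newlines for readability, and no model is called.

```python
def fake_retriever(question):
    """Hypothetical stand-in for the vector store retriever."""
    return ["(retrieved chunk 1)", "(retrieved chunk 2)"]


def setup_and_retrieval(question):
    # Like a parallel step: both keys are computed from the same input.
    # "question" is passed through unchanged; "context" comes from retrieval.
    return {"context": fake_retriever(question), "question": question}


TEMPLATE = """Answer the question based only on the following context:
{context}
Question: {question}
"""


def build_prompt(inputs):
    # Fill the two template variables with the retrieved text and the question
    return TEMPLATE.format(
        context="\n".join(inputs["context"]),
        question=inputs["question"],
    )


def pipe(*steps):
    """Compose steps left to right, feeding each output into the next step."""
    def run(value):
        for step in steps:
            value = step(value)
        return value
    return run


chain = pipe(setup_and_retrieval, build_prompt)
prompt_text = chain("How many days did the transport period last?")
print(prompt_text)
```

In the real chain, the filled-in prompt is then sent to the chat model, and the output parser turns the model's reply into a plain string.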

    import os

    from langchain_community.document_loaders import TextLoader
    from langchain_core.output_parsers import StrOutputParser
    from langchain_core.prompts import ChatPromptTemplate
    from langchain_core.runnables import RunnableParallel, RunnablePassthrough
    from langchain_openai import OpenAIEmbeddings, ChatOpenAI
    from langchain_community.vectorstores.pgvector import PGVector
    from langchain_text_splitters import RecursiveCharacterTextSplitter
    from dotenv import load_dotenv

    # Load environment variables, or a .env file if one exists
    load_dotenv()
    # Point the loader at the text file
    loader = TextLoader("./demo_static/splitters_test.txt")
    # Load the file into documents
    documents = loader.load()
    # Split the documents: at most 100 characters per chunk, with a 20-character overlap.
    # The last three separators are full-width Chinese punctuation.
    text_splitter = RecursiveCharacterTextSplitter(
        separators=["\n\n", "\n", "。", "?", ";"],
        chunk_size=100,
        chunk_overlap=20,
    )
    # Run the split
    docs = text_splitter.split_documents(documents)
    # print(len(docs))
    # print(docs)

    # Initialize the OpenAI embedding model, passing the key and proxy base URL explicitly
    embeddings = OpenAIEmbeddings(
        openai_api_key=os.getenv("OPENAI_API_KEY"),
        openai_api_base=os.getenv("OPENAI_API_BASE"),
    )
    # Connection string for the vector store; replace it with your own
    CONNECTION_STRING = "postgresql+psycopg2://postgres:password@localhost:5432/postgres"
    # Name of the collection in the vector store
    COLLECTION_NAME = "state_of_the_union_test"
    # Build the index, deleting any existing collection with the same name first
    vector = PGVector.from_documents(
        embedding=embeddings,
        documents=docs,
        collection_name=COLLECTION_NAME,
        connection_string=CONNECTION_STRING,
        use_jsonb=True,
        pre_delete_collection=True,
    )
    # Create the retriever
    retriever = vector.as_retriever()
    # A prompt template with two variables: context and question
    template = """Answer the question based only on the following context:
    {context}
    Question: {question}
    """
    # Build the prompt from the template
    prompt = ChatPromptTemplate.from_template(template)
    # Create the OpenAI chat model
    model = ChatOpenAI(
        openai_api_key=os.getenv("OPENAI_API_KEY"),
        openai_api_base=os.getenv("OPENAI_API_BASE"),
    )
    # Create the output parser
    output_parser = StrOutputParser()
    # Run retrieval and pass the input (the question) through in parallel
    setup_and_retrieval = RunnableParallel(
        {"context": retriever, "question": RunnablePassthrough()}
    )
    # Assemble the retrieval-augmented chain
    chain = setup_and_retrieval | prompt | model | output_parser
    # The question: "How many days did this year's Yangtze River Delta railway spring-outing transport period last?"
    question = "今年长三角铁路春游运输共历时多少天?"
    # Send the request
    res = chain.invoke(question)
    # Print the result
    print(res)

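Printed result:

    32天

Writing takes effort, so a like, a favorite, and a follow would be appreciated. Your satisfaction is what keeps me (H-大叔) going.

If the code doesn't run or you have other suggestions, leave a comment below. I'll reply when I see it, so there is no need to send a private message.

The column 人工智能 | 大模型 | 实战与教程 has more articles on AI and big data and is updated regularly.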