A Review of Hive SQL Window Functions and Special Functions


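Hive SQL offers many window functions that are well worth using in everyday data development. They generally fall into ranking, aggregation, and cumulative-calculation categories.

This post simply lists some SQL examples of window functions as a quick review.

Dedicated window functions

rank, dense_rank, row_number, ntile, and so on.

RANK() returns the rank of each row within its partition; tied rows share a rank, and the ranks that follow leave a gap.

DENSE_RANK() returns the rank of each row within its partition; tied rows share a rank, and no gap is left after them.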

- select

- *

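- ,rank() over(partition by `班级` order by `成绩` desc) as `班级排名` -- rank within each class (`班级` = class, `成绩` = score)

- ,rank() over (order by `成绩` desc) as ranking -- equal scores share a rank; the next rank skips ahead by the number of ties (gaps)

- ,dense_rank() over (order by `成绩` desc) as dense_ranking -- equal scores share a rank; the next rank is +1 (no gaps)

- ,row_number() over (order by `成绩` desc) as row_num -- sequential numbering by score, descending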

- from database_name.table_a;
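To compare the three ranking functions side by side, here is a minimal, self-contained sketch. The `scores` CTE and its `name` and `score` columns are made-up sample data rather than the table above, and the query assumes an engine that accepts SELECT without FROM (for example a recent Hive or Spark SQL).

```sql
-- Hypothetical sample data: B and C tie on score.
with scores as (
    select 'A' as name, 95 as score union all
    select 'B' as name, 90 as score union all
    select 'C' as name, 90 as score union all
    select 'D' as name, 85 as score
)
select
    name,
    score,
    rank()       over (order by score desc) as ranking,        -- A=1, B=2, C=2, D=4 (gap after the tie)
    dense_rank() over (order by score desc) as dense_ranking,  -- A=1, B=2, C=2, D=3 (no gap)
    row_number() over (order by score desc) as row_num         -- 1..4; which of B/C gets 2 or 3 is arbitrary
from scores
order by score desc, name;
```

If a deterministic row_number is needed, adding a tie-breaker column to the window's order by (here `name`) is one option.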

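Aggregate functions as window functions

Suppose each student has a unique student number, student_no. For each student, compute the total score, average score, number of subjects taken so far, highest score, and lowest score.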

- select

- *

- ,sum(score) over (partition by student_no) as current_sum -- total score per student

- ,avg(score) over (partition by student_no) as current_avg -- average score per student

- ,count(score) over (partition by student_no) as current_cnt -- number of subjects taken

- ,max(score) over (partition by student_no) as current_max -- highest score

- ,min(score) over (partition by student_no) as current_min -- lowest score

- from database_name.table_a;

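Ordering by student number ascending, compute some cumulative metrics over score. Because no frame is specified, rows with the same student_no are treated as peers and receive the same cumulative value.

- select

- *

- ,sum(score) over (order by student_no) as current_sum -- running sum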

- ,avg(score) over (order by student_no) as current_avg

- ,count(score) over (order by student_no) as current_cnt

- ,max(score) over (order by student_no) as current_max

- ,min(score) over (order by student_no) as current_min

- from database_name.table_a;
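One detail worth checking in the cumulative query: when over() has an order by but no frame clause, Hive defaults to RANGE BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW, so rows that share the same student_no are peers and get the same running value. The sketch below contrasts that default with an explicit ROWS frame. The sample rows in the `t` CTE are invented for illustration.

```sql
-- Hypothetical sample data: student 1 has two scores, student 2 has one.
with t as (
    select 1 as student_no, 80 as score union all
    select 1 as student_no, 90 as score union all
    select 2 as student_no, 70 as score
)
select
    student_no,
    score,
    -- Default RANGE frame: both rows of student 1 see 170, student 2 sees 240.
    sum(score) over (order by student_no) as sum_range,
    -- Explicit ROWS frame: accumulates row by row (the order among the two
    -- student 1 rows is arbitrary), ending at 240 on the last row.
    sum(score) over (order by student_no
                     rows between unbounded preceding and current row) as sum_rows
from t;
```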

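Ordering by `成绩` (score) descending: rank() is not consecutive when scores tie. Tied rows share the same rank and the ranks that follow are skipped (1, 2, 2, 4, ...). For strictly consecutive numbering 1, 2, 3, 4, ... that keeps counting through ties, use row_number() instead.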

- select

- *

- ,rank() over(order by `成绩` desc) as ranking

- from database_name.table_a;

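Offset calculations over the data

Keywords to keep in mind: rows n preceding, and rows between [n | unbounded] preceding and [n following | current row | unbounded following]. Alternatively, lag(expr, offset) over(partition by ... order by ...) and lead(expr, offset) over(partition by ... order by ...).

Use the 3 nearest rows as the aggregation frame: rows n preceding covers the current row plus the n rows before it, so rows 2 preceding spans 3 rows.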

- select

- *

- ,avg(sale_price) over(order by product_id rows 2 preceding) as moving_avg -- moving average over the current row and the 2 rows before it

- from database_name.table_a;

Average sale_price over the current row, the 1 row before it, and the 1 row after it:

- select

- *

- ,avg(sale_price) over(order by product_id rows between 1 preceding and 1 following) as moving_avg -- centered moving average

- from database_name.table_a;
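As a worked example of the two frames above, the following sketch computes both moving averages on four invented rows (the `sales` CTE is hypothetical sample data). It uses the explicit rows between ... form for both frames.

```sql
-- Hypothetical sample data.
with sales as (
    select 1 as product_id, 100 as sale_price union all
    select 2 as product_id, 200 as sale_price union all
    select 3 as product_id, 300 as sale_price union all
    select 4 as product_id, 400 as sale_price
)
select
    product_id,
    sale_price,
    -- Current row plus up to 2 rows before it: 100, 150, 200, 300
    avg(sale_price) over (order by product_id
                          rows between 2 preceding and current row) as avg_prev2,
    -- 1 row before, current row, 1 row after: 150, 200, 300, 350
    avg(sale_price) over (order by product_id
                          rows between 1 preceding and 1 following) as avg_centered
from sales
order by product_id;
```

At the edges of the partition the frame simply contains fewer rows, which is why the first and last averages are taken over one or two values only.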

- SELECT

- cookieid,

- createtime,

- pv,

- SUM(pv) OVER(PARTITION BY cookieid ORDER BY createtime) AS pv1, -- default frame: from the start of the partition to the current row

- SUM(pv) OVER(PARTITION BY cookieid ORDER BY createtime ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW) AS pv2, -- from the start to the current row; same result as pv1 when createtime has no ties

- SUM(pv) OVER(PARTITION BY cookieid) AS pv3, -- all rows in the partition

- SUM(pv) OVER(PARTITION BY cookieid ORDER BY createtime ROWS BETWEEN 3 PRECEDING AND CURRENT ROW) AS pv4, -- current row + 3 preceding rows

- SUM(pv) OVER(PARTITION BY cookieid ORDER BY createtime ROWS BETWEEN 3 PRECEDING AND 1 FOLLOWING) AS pv5, -- current row + 3 preceding rows + 1 following row

- SUM(pv) OVER(PARTITION BY cookieid ORDER BY createtime ROWS BETWEEN CURRENT ROW AND UNBOUNDED FOLLOWING) AS pv6 -- current row + all following rows

- FROM win1

- order by createtime;

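Offset statistics can also be computed with LAG and LEAD.

LAG(expr, offset, default_value) over([partition by col1] [order by col2 [desc|asc]]) returns expr evaluated on the row that lies offset rows before the current row, within the window defined by the over clause. If that row falls outside the partition, the result is null, or default_value when one is given.

LEAD(expr, offset, default_value) over([partition by col1] [order by col2 [desc|asc]]) returns expr evaluated on the row that lies offset rows after the current row, within the window defined by the over clause. If that row falls outside the partition, the result is null, or default_value when one is given.

LAG(column, n, default when out of range): look n rows up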

- SELECT

- cookieid,

- createtime,

- url,

- ROW_NUMBER() OVER(PARTITION BY cookieid ORDER BY createtime) AS rn,

- LAG(createtime, 1, '1970-01-01 00:00:00') OVER(PARTITION BY cookieid ORDER BY createtime) AS last_1_time,

- LAG(createtime, 2) OVER(PARTITION BY cookieid ORDER BY createtime) AS last_2_time

- FROM win4;

LEAD(column, n, default when out of range): look n rows down

- SELECT

- cookieid,

- createtime,

- url,

- ROW_NUMBER() OVER(PARTITION BY cookieid ORDER BY createtime) AS rn,

- LEAD(createtime,1,'1970-01-01 00:00:00') OVER(PARTITION BY cookieid ORDER BY createtime) AS next_1_time,

- LEAD(createtime,2) OVER(PARTITION BY cookieid ORDER BY createtime) AS next_2_time

- FROM win4;

- select

- seq,

- LAG(seq+100, 1, -1) over (partition by `window` order by seq) as r1 -- `window` is a column name here; the backticks avoid a clash with the WINDOW keyword

- from sliding_window;

- --

- select

- c_Double_a,c_String_b,c_int_a,

- lead(c_int_a, 1) over(partition by c_Double_a order by c_String_b) as r2

- from dual;

-

- select

- c_String_a,c_time_b,c_Double_a,

- lead(c_Double_a,1) over(partition by c_String_a order by c_time_b) as r3

- from dual;

-

- select

- c_String_in_fact_num,c_String_a,c_int_a,

- lead(c_int_a) over(partition by c_String_in_fact_num order by c_String_a) as r4

- from dual;
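A common use of LAG and LEAD is measuring the gap between consecutive events. The sketch below is one way to do that; the `visits` CTE, its timestamps, and the 'n/a' default are invented for illustration, and it assumes createtime is a string in the yyyy-MM-dd HH:mm:ss format that unix_timestamp parses by default.

```sql
-- Hypothetical sample data: three visits by one cookie.
with visits as (
    select 'c1' as cookieid, '2015-04-10 10:00:00' as createtime union all
    select 'c1' as cookieid, '2015-04-10 10:05:00' as createtime union all
    select 'c1' as cookieid, '2015-04-10 10:20:00' as createtime
)
select
    cookieid,
    createtime,
    -- Previous visit; the first row has none, so the default 'n/a' is returned.
    lag(createtime, 1, 'n/a') over (partition by cookieid order by createtime) as prev_time,
    -- Next visit; the last row has none and no default is given, so it is NULL.
    lead(createtime, 1) over (partition by cookieid order by createtime) as next_time,
    -- Seconds since the previous visit: NULL, 300, 900
    unix_timestamp(createtime)
      - unix_timestamp(lag(createtime, 1) over (partition by cookieid order by createtime)) as gap_seconds
from visits
order by createtime;
```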

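Bucketing (tile) functions

NTILE is a bucketing function: it splits the ordered rows of each partition into n slices that are as equal in size as possible and returns the slice number (1 to n) of the current row. The assignment follows the sort order and is not random. If the rows do not divide evenly, the leftover rows go to the lowest-numbered slices, starting with the first one.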

- SELECT

- cookieid,

- createtime,

- pv,

- NTILE(2) OVER(PARTITION BY cookieid ORDER BY createtime) AS rn1, -- split the rows of each partition into 2 slices

- NTILE(3) OVER(PARTITION BY cookieid ORDER BY createtime) AS rn2, -- split the rows of each partition into 3 slices

- NTILE(4) OVER(ORDER BY createtime) AS rn3 -- split all rows into 4 slices

- FROM win2

- ORDER BY

- cookieid,

- createtime;

- -- Split the employees of each department into 3 groups by sal, from high to low, and get the group number of each employee.

- select

- deptno

- ,ename

- ,sal

- ,ntile(3)over(partition by deptno order by sal desc) as nt3

- from emp;
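To illustrate how NTILE handles a row count that does not divide evenly, here is a small sketch over five invented rows (the `emp_demo` CTE is hypothetical and not the emp table above).

```sql
-- Hypothetical sample data: 5 employees with distinct salaries.
with emp_demo as (
    select 'e1' as ename, 5000 as sal union all
    select 'e2' as ename, 4000 as sal union all
    select 'e3' as ename, 3000 as sal union all
    select 'e4' as ename, 2000 as sal union all
    select 'e5' as ename, 1000 as sal
)
select
    ename,
    sal,
    ntile(2) over (order by sal desc) as nt2,  -- 1, 1, 1, 2, 2  (slice sizes 3 + 2)
    ntile(3) over (order by sal desc) as nt3   -- 1, 1, 2, 2, 3  (slice sizes 2 + 2 + 1)
from emp_demo
order by sal desc;
```

The extra rows land in the lower-numbered slices, so slice sizes never differ by more than one.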

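PERCENT_RANK returns the relative rank of the current row as a fraction: rows are ranked with RANK, and the result is (rank - 1) / (number of rows in the partition - 1).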

- SELECT

- dept,

- userid,

- sal,

- PERCENT_RANK() OVER(ORDER BY sal) AS rn1, -- over the whole result set (no partition by)

- RANK() OVER(ORDER BY sal) AS rn11, -- RANK value over the whole result set

- SUM(1) OVER(PARTITION BY NULL) AS rn12, -- total number of rows

- PERCENT_RANK() OVER(PARTITION BY dept ORDER BY sal) AS rn2 -- within each dept

- FROM win3;
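The PERCENT_RANK formula can be checked by computing it by hand next to the built-in function. This is only a sketch: the `win_demo` CTE is invented sample data, and the manual expression assumes the partition has more than one row.

```sql
-- Hypothetical sample data: u2 and u3 tie on sal.
with win_demo as (
    select 'u1' as userid, 1000 as sal union all
    select 'u2' as userid, 2000 as sal union all
    select 'u3' as userid, 2000 as sal union all
    select 'u4' as userid, 4000 as sal
)
select
    userid,
    sal,
    rank()         over (order by sal) as rk,  -- 1, 2, 2, 4
    percent_rank() over (order by sal) as pr,  -- 0, 1/3, 1/3, 1
    -- (rank - 1) / (row count - 1), which should match pr
    (rank() over (order by sal) - 1) / (count(*) over () - 1) as pr_manual
from win_demo
order by sal, userid;
```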

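FIRST_VALUE() returns the first value of the column within the partition, after ordering, up to the current row. LAST_VALUE() returns the last value in the frame, which under the default frame (start of the partition to the current row) is the current row's own value.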

- SELECT cookieid,

- createtime,

- url,

- ROW_NUMBER() OVER(PARTITION BY cookieid ORDER BY createtime) AS rn,

- FIRST_VALUE(url) OVER(PARTITION BY cookieid ORDER BY createtime) AS first1,

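- LAST_VALUE(url) OVER(PARTITION BY cookieid ORDER BY createtime DESC ROWS BETWEEN UNBOUNDED PRECEDING AND UNBOUNDED FOLLOWING) AS first2, -- last row in descending order = earliest url; this needs the full frame

- LAST_VALUE(url) OVER(PARTITION BY cookieid ORDER BY createtime) AS last1 -- default frame ends at the current row, so this is the current row's url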

- FROM lxw1234;
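LAST_VALUE is easy to misread because of the default frame, so a small sketch may help. The `visits` CTE and its values are invented for illustration.

```sql
-- Hypothetical sample data: three page views by one cookie.
with visits as (
    select 'c1' as cookieid, '2015-04-10 10:00:00' as createtime, 'url1' as url union all
    select 'c1' as cookieid, '2015-04-10 10:05:00' as createtime, 'url2' as url union all
    select 'c1' as cookieid, '2015-04-10 10:20:00' as createtime, 'url3' as url
)
select
    createtime,
    url,
    -- First url of the partition: url1 on every row
    first_value(url) over (partition by cookieid order by createtime) as first_url,
    -- Default frame stops at the current row: url1, url2, url3
    last_value(url) over (partition by cookieid order by createtime) as last_default,
    -- Frame widened to the whole partition: url3 on every row
    last_value(url) over (partition by cookieid order by createtime
                          rows between unbounded preceding and unbounded following) as last_full
from visits
order by createtime;
```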

NTH_VALUE returns the n-th value in the window. If n exceeds the number of rows in the window, it returns NULL.

- select

- user_id,

- price,

- nth_value(price, 2) over(partition by user_id) as nth_value

- from test_src;

CLUSTER_SAMPLE: per-group sampling

- -- Sample about 10% of each group

- select

- key, value

- from (

- select

- key, value,

- cluster_sample(10, 1) over(partition by key) as flag

- from tbl

- ) sub

- where flag=true;

CUME_DIST: cumulative distribution

- -- Group all employees by department, then find what top percentage each sal falls into within its group.

- select

- deptno

- ,ename

- ,sal

- ,concat(round(cume_dist() over(partition by deptno order by sal desc)*100, 2), '%') as cume_dist

- from emp;
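To make the CUME_DIST values concrete: for each row it is the number of rows at or before the current row's position in the sort order (ties included), divided by the partition size. The sketch below uses one invented department in a hypothetical `emp_demo` CTE.

```sql
-- Hypothetical sample data: one department, e2 and e3 tie on sal.
with emp_demo as (
    select 10 as deptno, 'e1' as ename, 4000 as sal union all
    select 10 as deptno, 'e2' as ename, 2000 as sal union all
    select 10 as deptno, 'e3' as ename, 2000 as sal union all
    select 10 as deptno, 'e4' as ename, 1000 as sal
)
select
    deptno,
    ename,
    sal,
    -- e1: 1/4 = 0.25, e2 and e3: 3/4 = 0.75, e4: 4/4 = 1.0
    cume_dist() over (partition by deptno order by sal desc) as cd
from emp_demo
order by sal desc, ename;
```

Read with order by sal desc, a value of 0.25 means the row is within the top 25% of its department by salary.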

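In addition, there are some commonly used string operations; see the Alibaba Cloud MaxCompute documentation for details.

WM_CONCAT joins the values in str, using the specified separator as the delimiter.

- -- Group, sort, and merge: group table test by the id column, order the result by id, and concatenate the values within each group.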

- SELECT

- id,

- WM_CONCAT('',alphabet) as res

- FROM test

- GROUP BY

- id

- ORDER BY

- id

- LIMIT 100;

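COLLECT_LIST(col) aggregates the values of the expression col within a group into an array.

In MaxCompute this requires the following setting:

- setproject odps.sql.type.system.odps2=true;

- or

- set odps.sql.type.system.odps2=true;

COLLECT_SET(col) aggregates the values of the expression col within a group into an array with duplicates removed (the return type is still an array).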
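The difference between the two collectors, and one way to get WM_CONCAT-like output where WM_CONCAT is not available, can be sketched as follows. The `test_demo` CTE is invented sample data; sort_array is added only to make the output order predictable, since the collectors do not guarantee element order.

```sql
-- Hypothetical sample data: id 1 contains a duplicate 'a'.
with test_demo as (
    select 1 as id, 'a' as alphabet union all
    select 1 as id, 'b' as alphabet union all
    select 1 as id, 'a' as alphabet union all
    select 2 as id, 'c' as alphabet
)
select
    id,
    sort_array(collect_list(alphabet)) as all_values,       -- id 1: ["a","a","b"]   id 2: ["c"]
    sort_array(collect_set(alphabet))  as distinct_values,  -- id 1: ["a","b"]       id 2: ["c"]
    -- Join the distinct values with a comma: id 1: a,b   id 2: c
    concat_ws(',', sort_array(collect_set(alphabet))) as joined
from test_demo
group by id
order by id;
```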

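Below are some commonly used variance-related statistical functions.

-- variance/var_pop(col): population variance of column col

-- var_samp: sample variance of the specified numeric column

-- covar_pop: population covariance of the two specified numeric columns

-- covar_samp: sample covariance of the two specified numeric columns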
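The population and sample variants differ only in the divisor (n versus n - 1). A small sketch on eight invented values, whose mean is 5 and whose squared deviations sum to 32, shows the difference; the `var_demo` CTE is hypothetical.

```sql
-- Hypothetical sample data: 2, 4, 4, 4, 5, 5, 7, 9
with var_demo as (
    select 2 as c1 union all
    select 4 as c1 union all
    select 4 as c1 union all
    select 4 as c1 union all
    select 5 as c1 union all
    select 5 as c1 union all
    select 7 as c1 union all
    select 9 as c1
)
select
    avg(c1)        as mean,      -- 40 / 8 = 5
    var_pop(c1)    as pop_var,   -- 32 / 8 = 4
    var_samp(c1)   as samp_var,  -- 32 / 7, about 4.571
    stddev_pop(c1) as pop_sd     -- sqrt(4) = 2
from var_demo;
```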

PERCENTILE returns the exact p-th percentile of the specified column. p must be between 0 and 1.

-- DOUBLE PERCENTILE(BIGINT col, p)

- SELECT

- PERCENTILE(c1,0),

- PERCENTILE(c1,0.3),

- PERCENTILE(c1,0.5),

- PERCENTILE(c1,1)

- FROM var_test;
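For a quick sanity check of PERCENTILE, the sketch below runs it on five invented integer values (the `var_test_demo` CTE is hypothetical). Only the endpoints and the median are annotated, since those do not depend on how the engine interpolates between values.

```sql
-- Hypothetical sample data: 10, 20, 30, 40, 50
with var_test_demo as (
    select 10 as c1 union all
    select 20 as c1 union all
    select 30 as c1 union all
    select 40 as c1 union all
    select 50 as c1
)
select
    percentile(c1, 0.0) as p0,    -- minimum: 10
    percentile(c1, 0.5) as p50,   -- median: 30
    percentile(c1, 1.0) as p100   -- maximum: 50
from var_test_demo;
```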

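That concludes the review of common window functions and some string and statistical functions. Also very practical are row-to-column and column-to-row transformations (such as turning one row into many rows); see the explode and lateral view keywords, and the combined use of explode with split and lateral view.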

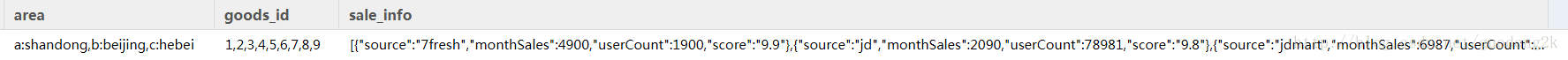

The figure above shows the records of table table_explode_lateral_view.

Requirement 1: split only the goods_id field

- select

- explode(split(goods_id, ',')) as new_goods_id

- from table_explode_lateral_view;

Requirement 2: split only the area field

- select

- explode(split(area, ',')) as new_area

- from table_explode_lateral_view;

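Using LATERAL VIEW:

A lateral view works together with explode (or another UDTF) so that a single statement produces the result set in which each source row has been expanded into multiple rows.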

- select

- goods_id2,

- sale_info

- from table_explode_lateral_view

- LATERAL VIEW explode(split(goods_id,',')) goods as goods_id2;

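Here LATERAL VIEW explode(split(goods_id,',')) goods acts like a virtual table that is joined to the original table table_explode_lateral_view: each source row is paired with every element exploded from its own goods_id (a per-row Cartesian product).

It can also be applied multiple times: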

- select

- goods_id2,

- sale_info,

- area2

- from table_explode_lateral_view

- LATERAL VIEW explode(split(goods_id,',')) goods as goods_id2

- LATERAL VIEW explode(split(area,',')) area as area2;

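The result is likewise a per-row Cartesian product of the three: the source row, its exploded goods_id values, and its exploded area values.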
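The row multiplication can be seen on a single invented record. In this sketch the `demo` CTE, its values, and the aliases `g` and `a` are hypothetical.

```sql
-- Hypothetical sample data: one row with 3 goods ids and 2 areas.
with demo as (
    select '1,2,3' as goods_id, 'beijing,shanghai' as area
)
select
    goods_id2,
    area2
from demo
lateral view explode(split(goods_id, ',')) g as goods_id2
lateral view explode(split(area, ','))     a as area2;
-- 3 goods_id values x 2 area values = 6 output rows for the single source row
```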

Requirement 3: parse the sale_info above into a two-dimensional table.

- select

- get_json_object(concat('{',sale_info_1,'}'),'$.source') as source,

- get_json_object(concat('{',sale_info_1,'}'),'$.monthSales') as monthSales,

- get_json_object(concat('{',sale_info_1,'}'),'$.userCount') as userCount,

- get_json_object(concat('{',sale_info_1,'}'),'$.score') as score

- from table_explode_lateral_view

- LATERAL VIEW explode(split(regexp_replace(regexp_replace(sale_info,'\\[\\{',''),'}]',''),'},\\{')) sale_info as sale_info_1;

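json_tuple can also be used to parse several keys of a JSON string in one call. It returns one column per key, so the AS clause needs one alias per key. It expects a JSON object, so if sale_info holds an array, split it into single objects first, as in requirement 3.

- select

- source, monthSales, userCount, score

- from table_explode_lateral_view

- lateral view json_tuple(sale_info,'source','monthSales','userCount', 'score') sale_info_new as source, monthSales, userCount, score;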
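To compare the two JSON helpers on the same input, here is a minimal sketch. The JSON string in the `j` CTE is made up for illustration and only mimics the key names used above; both functions return their results as strings.

```sql
-- Hypothetical sample data: a single JSON object (not an array).
with j as (
    select '{"source":"shop_a","monthSales":120,"userCount":30,"score":"4.8"}' as sale_info_1
)
select
    -- One call per key with a JSON path
    get_json_object(sale_info_1, '$.source') as source_by_path,  -- shop_a
    -- All four keys from one json_tuple call
    t.source,       -- shop_a
    t.monthSales,   -- 120
    t.userCount,    -- 30
    t.score         -- 4.8
from j
lateral view json_tuple(sale_info_1, 'source', 'monthSales', 'userCount', 'score') t
    as source, monthSales, userCount, score;
```

When several keys are needed from the same string, json_tuple avoids calling get_json_object once per key.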

Further details need to be handled case by case.