HNU Deep Learning: E-commerce Multimodal Image-Text Retrieval


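Preface

I mostly followed the baseline from start to finish and did not come up with any good optimizations.

There is an official tutorial, but it contains a few errors, so I decided to write down how I got it working.

GitHub repository: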

https://github.com/OFA-Sys/Chinese-CLIP

Official documentation:

E-commerce Multimodal Image-Text Retrieval: Chinese-CLIP baseline full-workflow example (Tianchi notebook, Alibaba Cloud Tianchi)

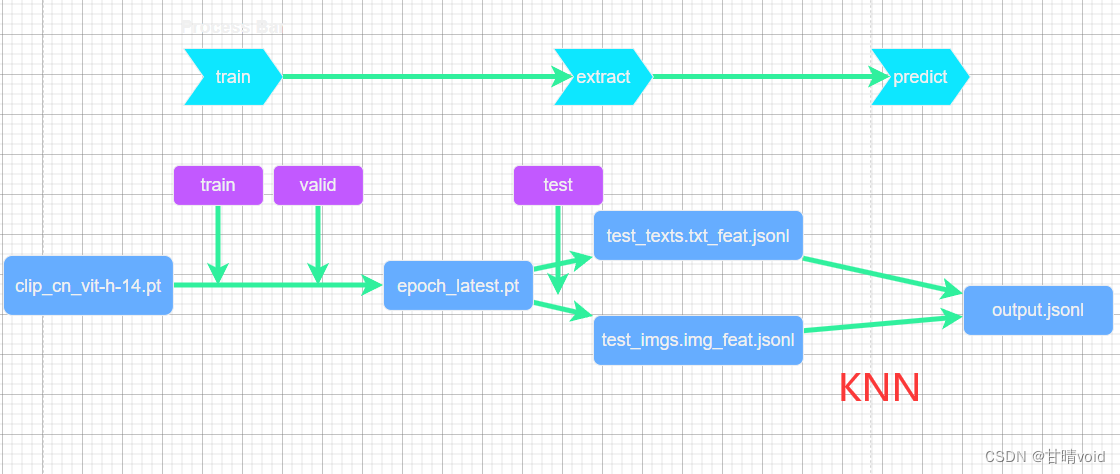

The full workflow is as follows:

Preparation

(1) Clone the repository from GitHub

git clone git@github.com:OFA-Sys/Chinese-CLIP.git

(2) Install the dependencies

pip install -r Chinese-CLIP/requirements.txt

(3) Download the preprocessed data

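- # For the detailed data preprocessing steps, see the GitHub README: https://github.com/OFA-Sys/Chinese-CLIP#数据集格式预处理

- # For convenience, we directly download the e-commerce retrieval dataset that has already been preprocessed (converted to LMDB format)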

- wget https://clip-cn-beijing.oss-cn-beijing.aliyuncs.com/datasets/MUGE.zip

- mkdir -p datapath/datasets/

- mv MUGE.zip datapath/datasets/

- cd datapath/datasets/

- unzip MUGE.zip

- cd ../..

- tree datapath/
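Before moving on, it can help to confirm that the archive unpacked to the layout the fine-tuning script expects. The following is a minimal sketch, not part of Chinese-CLIP: the helper name `missing_splits` is hypothetical, and the only paths it checks are the two that the fine-tuning script in this post passes to `--train-data` and `--val-data`.

```python
from pathlib import Path

# Sub-directories that the fine-tuning script in this post reads from,
# relative to the MUGE dataset root. Nothing else in the archive is checked.
EXPECTED_SPLITS = ("lmdb/train", "lmdb/valid")


def missing_splits(muge_root, expected=EXPECTED_SPLITS):
    """Return the expected sub-directories that do not exist under muge_root."""
    root = Path(muge_root)
    return [rel for rel in expected if not (root / rel).is_dir()]


if __name__ == "__main__":
    # Dataset root as used in this post, relative to where MUGE.zip was unpacked.
    missing = missing_splits("datapath/datasets/MUGE")
    if missing:
        print("Missing directories:", ", ".join(missing))
    else:
        print("MUGE LMDB splits found.")
```

Run it from the directory that contains `datapath/`. An empty result only means the two directories exist; it does not validate the LMDB contents.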

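(4) Download the Chinese-CLIP pretrained weights (ckpt)

I used a newer model here, hoping for better results.

wget https://clip-cn-beijing.oss-cn-beijing.aliyuncs.com/checkpoints/clip_cn_vit-b-16.pt

Note that the fine-tuning script below resumes from clip_cn_vit-h-14.pt under datapath/pretrained_weights/, so the checkpoint you download and place there must match the file passed to --resume, and --vision-model and --text-model must match that checkpoint.

Fine-tuning (finetune)

Create the following script inside the Chinese-CLIP folder and run it.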

- export PYTHONPATH=${PYTHONPATH}:`pwd`/cn_clip;

- torchrun \

- --nproc_per_node=4 --nnodes=1 --node_rank=0 \

- --master_addr=localhost \

- --master_port=9010 cn_clip/training/main.py \

- --train-data=../datapath//datasets/MUGE/lmdb/train \

- --val-data=../datapath//datasets/MUGE/lmdb/valid \

- --num-workers=4 \

- --valid-num-workers=4 \

- --resume=../datapath//pretrained_weights/clip_cn_vit-h-14.pt \

- --reset-data-offset \

- --reset-optimizer \

- --name=muge_finetune_vit-h-14_roberta-huge_bs48_1gpu \

- --save-step-frequency=999999 \

- --save-epoch-frequency=1 \

- --log-interval=10 \

- --report-training-batch-acc \

- --context-length=52 \

- --warmup=100 \

- --batch-size=48 \

- --valid-batch-size=48 \

- --valid-step-interval=1000 \

- --valid-epoch-interval=1 \

- --lr=3e-06 \

- --wd=0.001 \

- --max-epochs=1 \

- --vision-model=ViT-H-14 \

- --use-augment \

- --grad-checkpointing \

- --text-model=RoBERTa-wwm-ext-large-chinese

The parameters above can be adjusted; see the GitHub repository for what each one means.

If nothing is wrong, training starts.
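Two of these settings interact in a way that is easy to check by hand: `--batch-size` is per GPU, so with `--nproc_per_node=4` the global batch is 48 × 4 = 192, and one epoch over the 250314 training pairs reported in the log further down takes ceil(250314 / 192) = 1304 steps, which matches the logged `max_steps: 1304` and `Global Batch Size: 192`. The sketch below reproduces that arithmetic. The `lr_at_step` function is an assumption on my part (linear warmup followed by cosine decay); it was not read from the Chinese-CLIP source, although the rounded `LR` column in the log is consistent with that shape. All function names are hypothetical.

```python
import math


def global_batch_size(per_gpu_batch, num_gpus, accum_freq=1):
    """Samples consumed per optimizer step across all GPUs."""
    return per_gpu_batch * num_gpus * accum_freq


def steps_per_epoch(num_pairs, global_batch):
    """Steps needed to see every pair once, rounding the last batch up."""
    return math.ceil(num_pairs / global_batch)


def lr_at_step(step, base_lr, warmup, total_steps):
    """ASSUMED schedule: linear warmup, then cosine decay towards zero."""
    if step < warmup:
        return base_lr * (step + 1) / warmup
    progress = (step - warmup) / (total_steps - warmup)
    return 0.5 * (1.0 + math.cos(math.pi * progress)) * base_lr


if __name__ == "__main__":
    # --batch-size=48 and --nproc_per_node=4 from the script above.
    gb = global_batch_size(per_gpu_batch=48, num_gpus=4)
    # 250314 is the pair count printed in the training log of this post.
    steps = steps_per_epoch(num_pairs=250314, global_batch=gb)
    print(gb, steps, steps * gb)
    # --lr=3e-06 and --warmup=100 from the script above.
    for s in (10, 100, 430, 710):
        print(s, f"{lr_at_step(s, 3e-6, 100, steps):.6f}")
```

The first line printed is `192 1304 250368`; the last number is the padded sample count that appears in the log as `[1920/250368 (1%)]`. If you change the number of GPUs or the batch size, the step count changes accordingly, and so does the point at which warmup ends relative to the epoch.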

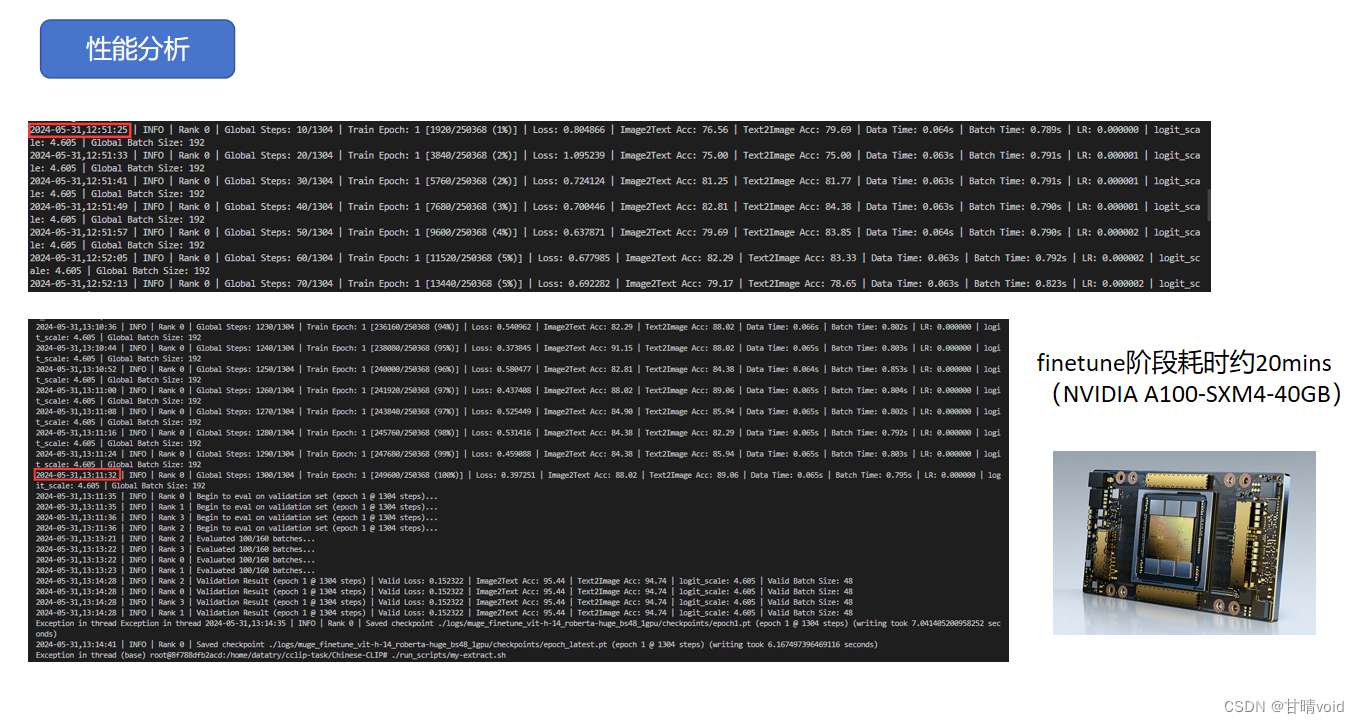

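I captured part of my run's output for reference.

When training finishes, the output ends with an error. That is fine as long as the checkpoint was saved; make a note of the checkpoint path.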
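The per-step lines in that output are noisy from one batch to the next, so it is easier to judge the trend after extracting the numbers. Here is a minimal sketch that pulls the step, loss and the two accuracies out of a line in the format shown in this post. The regular expression and the `parse_step_line` helper are my own and only assume the field labels visible in the log (`Global Steps`, `Loss`, `Image2Text Acc`, `Text2Image Acc`).

```python
import re

# Matches the per-step training lines printed by rank 0 in this post's log.
STEP_LINE = re.compile(
    r"Global Steps: (?P<step>\d+)/(?P<total>\d+).*?"
    r"Loss: (?P<loss>[\d.]+).*?"
    r"Image2Text Acc: (?P<i2t>[\d.]+).*?"
    r"Text2Image Acc: (?P<t2i>[\d.]+)"
)


def parse_step_line(line):
    """Return a dict of metrics for a training-step line, or None otherwise."""
    m = STEP_LINE.search(line)
    if m is None:
        return None
    return {
        "step": int(m["step"]),
        "total": int(m["total"]),
        "loss": float(m["loss"]),
        "i2t_acc": float(m["i2t"]),
        "t2i_acc": float(m["t2i"]),
    }


def mean_loss(lines):
    """Average the loss over all lines that parse as training steps."""
    losses = [r["loss"] for r in map(parse_step_line, lines) if r is not None]
    return sum(losses) / len(losses) if losses else None
```

A typical use is to read the `out_*.log` file named in the `log_path` parameter, split it into lines, and compare `mean_loss` over the first and last hundred steps.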
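The checkpoint directory is printed in the `Params` section of the log as `checkpoint_path`. To confirm that a checkpoint really was written despite the final error, something like the sketch below can be used. It makes no assumption about the checkpoint file names beyond the `.pt` extension, and `newest_checkpoint` is a hypothetical helper, not a Chinese-CLIP function.

```python
from pathlib import Path


def newest_checkpoint(checkpoint_dir, pattern="*.pt"):
    """Return the most recently modified file matching pattern, or None."""
    root = Path(checkpoint_dir)
    if not root.is_dir():
        return None
    files = [p for p in root.glob(pattern) if p.is_file()]
    return max(files, key=lambda p: p.stat().st_mtime, default=None)


if __name__ == "__main__":
    # checkpoint_path as printed in the Params section of this post's log,
    # relative to the Chinese-CLIP folder.
    ckpt_dir = "logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints"
    print(newest_checkpoint(ckpt_dir))
```

If this prints `None`, no checkpoint was saved and the error at the end of training needs a closer look.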

- (base) root@8f788dfb2acd:/home/datatry/cclip-task/Chinese-CLIP# ./run_scripts/my-fintune.sh

- W0531 12:50:57.900870 140054594544448 torch/distributed/run.py:757]

- W0531 12:50:57.900870 140054594544448 torch/distributed/run.py:757] *****************************************

- W0531 12:50:57.900870 140054594544448 torch/distributed/run.py:757] Setting OMP_NUM_THREADS environment variable for each process to be 1 in default, to avoid your system being overloaded, please further tune the variable for optimal performance in your application as needed.

- W0531 12:50:57.900870 140054594544448 torch/distributed/run.py:757] *****************************************

- Loading vision model config from cn_clip/clip/model_configs/ViT-H-14.json

- Loading text model config from cn_clip/clip/model_configs/RoBERTa-wwm-ext-large-chinese.json

- Loading vision model config from cn_clip/clip/model_configs/ViT-H-14.json

- Loading text model config from cn_clip/clip/model_configs/RoBERTa-wwm-ext-large-chinese.json

- Loading vision model config from cn_clip/clip/model_configs/ViT-H-14.json

- Loading text model config from cn_clip/clip/model_configs/RoBERTa-wwm-ext-large-chinese.json

- Loading vision model config from cn_clip/clip/model_configs/ViT-H-14.json

- Loading text model config from cn_clip/clip/model_configs/RoBERTa-wwm-ext-large-chinese.json

- 2024-05-31,12:51:11 | INFO | Rank 0 | Grad-checkpointing activated.

- 2024-05-31,12:51:11 | INFO | Rank 3 | Grad-checkpointing activated.

- 2024-05-31,12:51:11 | INFO | Rank 2 | Grad-checkpointing activated.

- 2024-05-31,12:51:11 | INFO | Rank 1 | Grad-checkpointing activated.

- 2024-05-31,12:51:13 | INFO | Rank 3 | train LMDB file contains 129380 images and 250314 pairs.

- 2024-05-31,12:51:13 | INFO | Rank 2 | train LMDB file contains 129380 images and 250314 pairs.

- 2024-05-31,12:51:13 | INFO | Rank 0 | train LMDB file contains 129380 images and 250314 pairs.

- 2024-05-31,12:51:13 | INFO | Rank 3 | val LMDB file contains 29806 images and 30588 pairs.

- 2024-05-31,12:51:13 | INFO | Rank 3 | Use GPU: 3 for training

- 2024-05-31,12:51:13 | INFO | Rank 3 | => begin to load checkpoint '../datapath//pretrained_weights/clip_cn_vit-h-14.pt'

- 2024-05-31,12:51:13 | INFO | Rank 2 | val LMDB file contains 29806 images and 30588 pairs.

- 2024-05-31,12:51:13 | INFO | Rank 2 | Use GPU: 2 for training

- 2024-05-31,12:51:13 | INFO | Rank 2 | => begin to load checkpoint '../datapath//pretrained_weights/clip_cn_vit-h-14.pt'

- 2024-05-31,12:51:13 | INFO | Rank 0 | val LMDB file contains 29806 images and 30588 pairs.

- 2024-05-31,12:51:13 | INFO | Rank 0 | Params:

- 2024-05-31,12:51:13 | INFO | Rank 0 | accum_freq: 1

- 2024-05-31,12:51:13 | INFO | Rank 0 | aggregate: True

- 2024-05-31,12:51:13 | INFO | Rank 0 | batch_size: 48

- 2024-05-31,12:51:13 | INFO | Rank 0 | bert_weight_path: None

- 2024-05-31,12:51:13 | INFO | Rank 0 | beta1: 0.9

- 2024-05-31,12:51:13 | INFO | Rank 0 | beta2: 0.98

- 2024-05-31,12:51:13 | INFO | Rank 0 | checkpoint_path: ./logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints

- 2024-05-31,12:51:13 | INFO | Rank 0 | clip_weight_path: None

- 2024-05-31,12:51:13 | INFO | Rank 0 | context_length: 52

- 2024-05-31,12:51:13 | INFO | Rank 0 | debug: False

- 2024-05-31,12:51:13 | INFO | Rank 0 | device: cuda:0

- 2024-05-31,12:51:13 | INFO | Rank 0 | distllation: False

- 2024-05-31,12:51:13 | INFO | Rank 0 | eps: 1e-06

- 2024-05-31,12:51:13 | INFO | Rank 0 | freeze_vision: False

- 2024-05-31,12:51:13 | INFO | Rank 0 | gather_with_grad: False

- 2024-05-31,12:51:13 | INFO | Rank 0 | grad_checkpointing: True

- 2024-05-31,12:51:13 | INFO | Rank 0 | kd_loss_weight: 0.5

- 2024-05-31,12:51:13 | INFO | Rank 0 | local_device_rank: 0

- 2024-05-31,12:51:13 | INFO | Rank 0 | log_interval: 10

- 2024-05-31,12:51:13 | INFO | Rank 0 | log_level: 20

- 2024-05-31,12:51:13 | INFO | Rank 0 | log_path: ./logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/out_2024-05-31-12-51-01.log

- 2024-05-31,12:51:13 | INFO | Rank 0 | logs: ./logs/

- 2024-05-31,12:51:13 | INFO | Rank 0 | lr: 3e-06

- 2024-05-31,12:51:13 | INFO | Rank 0 | mask_ratio: 0

- 2024-05-31,12:51:13 | INFO | Rank 0 | max_epochs: 1

- 2024-05-31,12:51:13 | INFO | Rank 0 | max_steps: 1304

- 2024-05-31,12:51:13 | INFO | Rank 0 | name: muge_finetune_vit-h-14_roberta-huge_bs48_1gpu

- 2024-05-31,12:51:13 | INFO | Rank 0 | num_workers: 4

- 2024-05-31,12:51:13 | INFO | Rank 0 | precision: amp

- 2024-05-31,12:51:13 | INFO | Rank 0 | rank: 0

- 2024-05-31,12:51:13 | INFO | Rank 0 | report_training_batch_acc: True

- 2024-05-31,12:51:13 | INFO | Rank 0 | reset_data_offset: True

- 2024-05-31,12:51:13 | INFO | Rank 0 | reset_optimizer: True

- 2024-05-31,12:51:13 | INFO | Rank 0 | resume: ../datapath//pretrained_weights/clip_cn_vit-h-14.pt

- 2024-05-31,12:51:13 | INFO | Rank 0 | save_epoch_frequency: 1

- 2024-05-31,12:51:13 | INFO | Rank 0 | save_step_frequency: 999999

- 2024-05-31,12:51:13 | INFO | Rank 0 | seed: 123

- 2024-05-31,12:51:13 | INFO | Rank 0 | skip_aggregate: False

- 2024-05-31,12:51:13 | INFO | Rank 0 | skip_scheduler: False

- 2024-05-31,12:51:13 | INFO | Rank 0 | teacher_model_name: None

- 2024-05-31,12:51:13 | INFO | Rank 0 | text_model: RoBERTa-wwm-ext-large-chinese

- 2024-05-31,12:51:13 | INFO | Rank 0 | train_data: ../datapath//datasets/MUGE/lmdb/train

- 2024-05-31,12:51:13 | INFO | Rank 0 | use_augment: True

- 2024-05-31,12:51:13 | INFO | Rank 0 | use_bn_sync: False

- 2024-05-31,12:51:13 | INFO | Rank 0 | use_flash_attention: False

- 2024-05-31,12:51:13 | INFO | Rank 0 | val_data: ../datapath//datasets/MUGE/lmdb/valid

- 2024-05-31,12:51:13 | INFO | Rank 0 | valid_batch_size: 48

- 2024-05-31,12:51:13 | INFO | Rank 0 | valid_epoch_interval: 1

- 2024-05-31,12:51:13 | INFO | Rank 0 | valid_num_workers: 4

- 2024-05-31,12:51:13 | INFO | Rank 0 | valid_step_interval: 1000

- 2024-05-31,12:51:13 | INFO | Rank 0 | vision_model: ViT-H-14

- 2024-05-31,12:51:13 | INFO | Rank 0 | warmup: 100

- 2024-05-31,12:51:13 | INFO | Rank 0 | wd: 0.001

- 2024-05-31,12:51:13 | INFO | Rank 0 | world_size: 4

- 2024-05-31,12:51:13 | INFO | Rank 0 | Use GPU: 0 for training

- 2024-05-31,12:51:13 | INFO | Rank 0 | => begin to load checkpoint '../datapath//pretrained_weights/clip_cn_vit-h-14.pt'

- 2024-05-31,12:51:13 | INFO | Rank 1 | train LMDB file contains 129380 images and 250314 pairs.

- 2024-05-31,12:51:13 | INFO | Rank 1 | val LMDB file contains 29806 images and 30588 pairs.

- 2024-05-31,12:51:13 | INFO | Rank 1 | Use GPU: 1 for training

- 2024-05-31,12:51:13 | INFO | Rank 1 | => begin to load checkpoint '../datapath//pretrained_weights/clip_cn_vit-h-14.pt'

- 2024-05-31,12:51:14 | INFO | Rank 0 | => loaded checkpoint '../datapath//pretrained_weights/clip_cn_vit-h-14.pt' (epoch 7 @ 0 steps)

- 2024-05-31,12:51:14 | INFO | Rank 2 | => loaded checkpoint '../datapath//pretrained_weights/clip_cn_vit-h-14.pt' (epoch 7 @ 0 steps)

- 2024-05-31,12:51:14 | INFO | Rank 2 | Start epoch 1

- 2024-05-31,12:51:14 | INFO | Rank 3 | => loaded checkpoint '../datapath//pretrained_weights/clip_cn_vit-h-14.pt' (epoch 7 @ 0 steps)

- 2024-05-31,12:51:14 | INFO | Rank 3 | Start epoch 1

- 2024-05-31,12:51:14 | INFO | Rank 1 | => loaded checkpoint '../datapath//pretrained_weights/clip_cn_vit-h-14.pt' (epoch 7 @ 0 steps)

- 2024-05-31,12:51:14 | INFO | Rank 1 | Start epoch 1

- /opt/conda/lib/python3.8/site-packages/torch/utils/checkpoint.py:464: UserWarning: torch.utils.checkpoint: the use_reentrant parameter should be passed explicitly. In version 2.4 we will raise an exception if use_reentrant is not passed. use_reentrant=False is recommended, but if you need to preserve the current default behavior, you can pass use_reentrant=True. Refer to docs for more details on the differences between the two variants.

- warnings.warn(

- /opt/conda/lib/python3.8/site-packages/torch/utils/checkpoint.py:464: UserWarning: torch.utils.checkpoint: the use_reentrant parameter should be passed explicitly. In version 2.4 we will raise an exception if use_reentrant is not passed. use_reentrant=False is recommended, but if you need to preserve the current default behavior, you can pass use_reentrant=True. Refer to docs for more details on the differences between the two variants.

- warnings.warn(

- /opt/conda/lib/python3.8/site-packages/torch/utils/checkpoint.py:464: UserWarning: torch.utils.checkpoint: the use_reentrant parameter should be passed explicitly. In version 2.4 we will raise an exception if use_reentrant is not passed. use_reentrant=False is recommended, but if you need to preserve the current default behavior, you can pass use_reentrant=True. Refer to docs for more details on the differences between the two variants.

- warnings.warn(

- /opt/conda/lib/python3.8/site-packages/torch/utils/checkpoint.py:464: UserWarning: torch.utils.checkpoint: the use_reentrant parameter should be passed explicitly. In version 2.4 we will raise an exception if use_reentrant is not passed. use_reentrant=False is recommended, but if you need to preserve the current default behavior, you can pass use_reentrant=True. Refer to docs for more details on the differences between the two variants.

- warnings.warn(

- 2024-05-31,12:51:25 | INFO | Rank 0 | Global Steps: 10/1304 | Train Epoch: 1 [1920/250368 (1%)] | Loss: 0.804866 | Image2Text Acc: 76.56 | Text2Image Acc: 79.69 | Data Time: 0.064s | Batch Time: 0.789s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:51:33 | INFO | Rank 0 | Global Steps: 20/1304 | Train Epoch: 1 [3840/250368 (2%)] | Loss: 1.095239 | Image2Text Acc: 75.00 | Text2Image Acc: 75.00 | Data Time: 0.063s | Batch Time: 0.791s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:51:41 | INFO | Rank 0 | Global Steps: 30/1304 | Train Epoch: 1 [5760/250368 (2%)] | Loss: 0.724124 | Image2Text Acc: 81.25 | Text2Image Acc: 81.77 | Data Time: 0.063s | Batch Time: 0.791s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:51:49 | INFO | Rank 0 | Global Steps: 40/1304 | Train Epoch: 1 [7680/250368 (3%)] | Loss: 0.700446 | Image2Text Acc: 82.81 | Text2Image Acc: 84.38 | Data Time: 0.063s | Batch Time: 0.790s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:51:57 | INFO | Rank 0 | Global Steps: 50/1304 | Train Epoch: 1 [9600/250368 (4%)] | Loss: 0.637871 | Image2Text Acc: 79.69 | Text2Image Acc: 83.85 | Data Time: 0.064s | Batch Time: 0.790s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:52:05 | INFO | Rank 0 | Global Steps: 60/1304 | Train Epoch: 1 [11520/250368 (5%)] | Loss: 0.677985 | Image2Text Acc: 82.29 | Text2Image Acc: 83.33 | Data Time: 0.063s | Batch Time: 0.792s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:52:13 | INFO | Rank 0 | Global Steps: 70/1304 | Train Epoch: 1 [13440/250368 (5%)] | Loss: 0.692282 | Image2Text Acc: 79.17 | Text2Image Acc: 78.65 | Data Time: 0.063s | Batch Time: 0.823s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:52:21 | INFO | Rank 0 | Global Steps: 80/1304 | Train Epoch: 1 [15360/250368 (6%)] | Loss: 0.638739 | Image2Text Acc: 83.33 | Text2Image Acc: 82.29 | Data Time: 0.063s | Batch Time: 0.807s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:52:29 | INFO | Rank 0 | Global Steps: 90/1304 | Train Epoch: 1 [17280/250368 (7%)] | Loss: 0.765517 | Image2Text Acc: 78.65 | Text2Image Acc: 77.60 | Data Time: 0.063s | Batch Time: 0.807s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:52:37 | INFO | Rank 0 | Global Steps: 100/1304 | Train Epoch: 1 [19200/250368 (8%)] | Loss: 0.535626 | Image2Text Acc: 85.94 | Text2Image Acc: 85.42 | Data Time: 0.063s | Batch Time: 0.790s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:52:45 | INFO | Rank 0 | Global Steps: 110/1304 | Train Epoch: 1 [21120/250368 (8%)] | Loss: 0.582258 | Image2Text Acc: 83.85 | Text2Image Acc: 85.42 | Data Time: 0.063s | Batch Time: 0.790s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:52:52 | INFO | Rank 0 | Global Steps: 120/1304 | Train Epoch: 1 [23040/250368 (9%)] | Loss: 0.627735 | Image2Text Acc: 85.42 | Text2Image Acc: 82.29 | Data Time: 0.064s | Batch Time: 0.792s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:53:00 | INFO | Rank 0 | Global Steps: 130/1304 | Train Epoch: 1 [24960/250368 (10%)] | Loss: 0.614012 | Image2Text Acc: 82.29 | Text2Image Acc: 82.29 | Data Time: 0.063s | Batch Time: 0.791s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:53:08 | INFO | Rank 0 | Global Steps: 140/1304 | Train Epoch: 1 [26880/250368 (11%)] | Loss: 0.544107 | Image2Text Acc: 85.42 | Text2Image Acc: 83.85 | Data Time: 0.064s | Batch Time: 0.807s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:53:16 | INFO | Rank 0 | Global Steps: 150/1304 | Train Epoch: 1 [28800/250368 (12%)] | Loss: 0.712038 | Image2Text Acc: 82.29 | Text2Image Acc: 80.73 | Data Time: 0.064s | Batch Time: 0.793s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:53:24 | INFO | Rank 0 | Global Steps: 160/1304 | Train Epoch: 1 [30720/250368 (12%)] | Loss: 0.585527 | Image2Text Acc: 80.73 | Text2Image Acc: 84.38 | Data Time: 0.063s | Batch Time: 0.822s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:53:32 | INFO | Rank 0 | Global Steps: 170/1304 | Train Epoch: 1 [32640/250368 (13%)] | Loss: 0.801278 | Image2Text Acc: 80.73 | Text2Image Acc: 80.21 | Data Time: 0.063s | Batch Time: 0.806s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:53:40 | INFO | Rank 0 | Global Steps: 180/1304 | Train Epoch: 1 [34560/250368 (14%)] | Loss: 0.481002 | Image2Text Acc: 83.85 | Text2Image Acc: 86.98 | Data Time: 0.064s | Batch Time: 0.801s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:53:48 | INFO | Rank 0 | Global Steps: 190/1304 | Train Epoch: 1 [36480/250368 (15%)] | Loss: 0.587554 | Image2Text Acc: 82.81 | Text2Image Acc: 84.38 | Data Time: 0.063s | Batch Time: 0.792s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:53:56 | INFO | Rank 0 | Global Steps: 200/1304 | Train Epoch: 1 [38400/250368 (15%)] | Loss: 0.693925 | Image2Text Acc: 79.17 | Text2Image Acc: 81.77 | Data Time: 0.063s | Batch Time: 0.797s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:54:04 | INFO | Rank 0 | Global Steps: 210/1304 | Train Epoch: 1 [40320/250368 (16%)] | Loss: 0.335370 | Image2Text Acc: 91.15 | Text2Image Acc: 90.62 | Data Time: 0.063s | Batch Time: 0.793s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:54:12 | INFO | Rank 0 | Global Steps: 220/1304 | Train Epoch: 1 [42240/250368 (17%)] | Loss: 0.510772 | Image2Text Acc: 84.38 | Text2Image Acc: 85.94 | Data Time: 0.063s | Batch Time: 0.795s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:54:20 | INFO | Rank 0 | Global Steps: 230/1304 | Train Epoch: 1 [44160/250368 (18%)] | Loss: 0.693151 | Image2Text Acc: 82.81 | Text2Image Acc: 83.85 | Data Time: 0.063s | Batch Time: 0.802s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:54:28 | INFO | Rank 0 | Global Steps: 240/1304 | Train Epoch: 1 [46080/250368 (18%)] | Loss: 0.637074 | Image2Text Acc: 80.21 | Text2Image Acc: 83.85 | Data Time: 0.063s | Batch Time: 0.792s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:54:36 | INFO | Rank 0 | Global Steps: 250/1304 | Train Epoch: 1 [48000/250368 (19%)] | Loss: 0.502691 | Image2Text Acc: 88.54 | Text2Image Acc: 86.98 | Data Time: 0.063s | Batch Time: 0.792s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:54:44 | INFO | Rank 0 | Global Steps: 260/1304 | Train Epoch: 1 [49920/250368 (20%)] | Loss: 0.545955 | Image2Text Acc: 83.85 | Text2Image Acc: 82.81 | Data Time: 0.063s | Batch Time: 0.829s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:54:52 | INFO | Rank 0 | Global Steps: 270/1304 | Train Epoch: 1 [51840/250368 (21%)] | Loss: 0.521744 | Image2Text Acc: 81.77 | Text2Image Acc: 85.42 | Data Time: 0.064s | Batch Time: 0.817s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:55:00 | INFO | Rank 0 | Global Steps: 280/1304 | Train Epoch: 1 [53760/250368 (21%)] | Loss: 0.563992 | Image2Text Acc: 83.85 | Text2Image Acc: 84.38 | Data Time: 0.063s | Batch Time: 0.814s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:55:08 | INFO | Rank 0 | Global Steps: 290/1304 | Train Epoch: 1 [55680/250368 (22%)] | Loss: 0.491873 | Image2Text Acc: 83.85 | Text2Image Acc: 89.06 | Data Time: 0.062s | Batch Time: 0.786s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:55:16 | INFO | Rank 0 | Global Steps: 300/1304 | Train Epoch: 1 [57600/250368 (23%)] | Loss: 0.604035 | Image2Text Acc: 79.69 | Text2Image Acc: 81.77 | Data Time: 0.063s | Batch Time: 0.794s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:55:24 | INFO | Rank 0 | Global Steps: 310/1304 | Train Epoch: 1 [59520/250368 (24%)] | Loss: 0.668683 | Image2Text Acc: 82.29 | Text2Image Acc: 78.65 | Data Time: 0.063s | Batch Time: 0.792s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:55:32 | INFO | Rank 0 | Global Steps: 320/1304 | Train Epoch: 1 [61440/250368 (25%)] | Loss: 0.638269 | Image2Text Acc: 84.38 | Text2Image Acc: 82.81 | Data Time: 0.064s | Batch Time: 0.791s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:55:40 | INFO | Rank 0 | Global Steps: 330/1304 | Train Epoch: 1 [63360/250368 (25%)] | Loss: 0.323913 | Image2Text Acc: 88.54 | Text2Image Acc: 88.54 | Data Time: 0.064s | Batch Time: 0.792s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:55:49 | INFO | Rank 0 | Global Steps: 340/1304 | Train Epoch: 1 [65280/250368 (26%)] | Loss: 0.576394 | Image2Text Acc: 84.38 | Text2Image Acc: 84.38 | Data Time: 0.063s | Batch Time: 0.803s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:55:56 | INFO | Rank 0 | Global Steps: 350/1304 | Train Epoch: 1 [67200/250368 (27%)] | Loss: 0.589320 | Image2Text Acc: 84.90 | Text2Image Acc: 82.81 | Data Time: 0.061s | Batch Time: 0.787s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:56:05 | INFO | Rank 0 | Global Steps: 360/1304 | Train Epoch: 1 [69120/250368 (28%)] | Loss: 0.468100 | Image2Text Acc: 85.42 | Text2Image Acc: 86.46 | Data Time: 0.064s | Batch Time: 0.829s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:56:13 | INFO | Rank 0 | Global Steps: 370/1304 | Train Epoch: 1 [71040/250368 (28%)] | Loss: 0.512677 | Image2Text Acc: 84.38 | Text2Image Acc: 86.98 | Data Time: 0.063s | Batch Time: 0.792s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:56:21 | INFO | Rank 0 | Global Steps: 380/1304 | Train Epoch: 1 [72960/250368 (29%)] | Loss: 0.550826 | Image2Text Acc: 86.46 | Text2Image Acc: 82.81 | Data Time: 0.064s | Batch Time: 0.820s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:56:29 | INFO | Rank 0 | Global Steps: 390/1304 | Train Epoch: 1 [74880/250368 (30%)] | Loss: 0.620187 | Image2Text Acc: 82.81 | Text2Image Acc: 81.25 | Data Time: 0.062s | Batch Time: 0.787s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:56:37 | INFO | Rank 0 | Global Steps: 400/1304 | Train Epoch: 1 [76800/250368 (31%)] | Loss: 0.795713 | Image2Text Acc: 81.25 | Text2Image Acc: 79.69 | Data Time: 0.063s | Batch Time: 0.810s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:56:45 | INFO | Rank 0 | Global Steps: 410/1304 | Train Epoch: 1 [78720/250368 (31%)] | Loss: 0.833368 | Image2Text Acc: 80.21 | Text2Image Acc: 81.25 | Data Time: 0.063s | Batch Time: 0.789s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:56:53 | INFO | Rank 0 | Global Steps: 420/1304 | Train Epoch: 1 [80640/250368 (32%)] | Loss: 0.408414 | Image2Text Acc: 89.06 | Text2Image Acc: 87.50 | Data Time: 0.064s | Batch Time: 0.791s | LR: 0.000003 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:57:01 | INFO | Rank 0 | Global Steps: 430/1304 | Train Epoch: 1 [82560/250368 (33%)] | Loss: 0.426235 | Image2Text Acc: 88.02 | Text2Image Acc: 86.98 | Data Time: 0.063s | Batch Time: 0.787s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:57:09 | INFO | Rank 0 | Global Steps: 440/1304 | Train Epoch: 1 [84480/250368 (34%)] | Loss: 0.610983 | Image2Text Acc: 83.85 | Text2Image Acc: 82.81 | Data Time: 0.064s | Batch Time: 0.789s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:57:17 | INFO | Rank 0 | Global Steps: 450/1304 | Train Epoch: 1 [86400/250368 (35%)] | Loss: 0.480076 | Image2Text Acc: 83.85 | Text2Image Acc: 87.50 | Data Time: 0.064s | Batch Time: 0.810s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:57:25 | INFO | Rank 0 | Global Steps: 460/1304 | Train Epoch: 1 [88320/250368 (35%)] | Loss: 0.736626 | Image2Text Acc: 78.65 | Text2Image Acc: 81.77 | Data Time: 0.063s | Batch Time: 0.807s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:57:33 | INFO | Rank 0 | Global Steps: 470/1304 | Train Epoch: 1 [90240/250368 (36%)] | Loss: 0.717082 | Image2Text Acc: 79.69 | Text2Image Acc: 81.77 | Data Time: 0.064s | Batch Time: 0.793s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:57:41 | INFO | Rank 0 | Global Steps: 480/1304 | Train Epoch: 1 [92160/250368 (37%)] | Loss: 0.689953 | Image2Text Acc: 80.21 | Text2Image Acc: 80.73 | Data Time: 0.064s | Batch Time: 0.803s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:57:49 | INFO | Rank 0 | Global Steps: 490/1304 | Train Epoch: 1 [94080/250368 (38%)] | Loss: 0.637135 | Image2Text Acc: 84.38 | Text2Image Acc: 81.25 | Data Time: 0.063s | Batch Time: 0.793s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:57:57 | INFO | Rank 0 | Global Steps: 500/1304 | Train Epoch: 1 [96000/250368 (38%)] | Loss: 0.551889 | Image2Text Acc: 84.90 | Text2Image Acc: 83.33 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:58:05 | INFO | Rank 0 | Global Steps: 510/1304 | Train Epoch: 1 [97920/250368 (39%)] | Loss: 0.527987 | Image2Text Acc: 82.81 | Text2Image Acc: 86.46 | Data Time: 0.064s | Batch Time: 0.793s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:58:13 | INFO | Rank 0 | Global Steps: 520/1304 | Train Epoch: 1 [99840/250368 (40%)] | Loss: 0.533399 | Image2Text Acc: 85.42 | Text2Image Acc: 82.81 | Data Time: 0.064s | Batch Time: 0.795s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:58:21 | INFO | Rank 0 | Global Steps: 530/1304 | Train Epoch: 1 [101760/250368 (41%)] | Loss: 0.468345 | Image2Text Acc: 84.90 | Text2Image Acc: 86.98 | Data Time: 0.064s | Batch Time: 0.793s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:58:29 | INFO | Rank 0 | Global Steps: 540/1304 | Train Epoch: 1 [103680/250368 (41%)] | Loss: 0.503810 | Image2Text Acc: 86.98 | Text2Image Acc: 84.90 | Data Time: 0.065s | Batch Time: 0.802s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:58:37 | INFO | Rank 0 | Global Steps: 550/1304 | Train Epoch: 1 [105600/250368 (42%)] | Loss: 0.521391 | Image2Text Acc: 85.94 | Text2Image Acc: 85.42 | Data Time: 0.062s | Batch Time: 0.786s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:58:45 | INFO | Rank 0 | Global Steps: 560/1304 | Train Epoch: 1 [107520/250368 (43%)] | Loss: 0.595308 | Image2Text Acc: 79.17 | Text2Image Acc: 83.85 | Data Time: 0.064s | Batch Time: 0.804s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:58:53 | INFO | Rank 0 | Global Steps: 570/1304 | Train Epoch: 1 [109440/250368 (44%)] | Loss: 0.503112 | Image2Text Acc: 84.90 | Text2Image Acc: 86.46 | Data Time: 0.064s | Batch Time: 0.793s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:59:01 | INFO | Rank 0 | Global Steps: 580/1304 | Train Epoch: 1 [111360/250368 (44%)] | Loss: 0.473905 | Image2Text Acc: 85.94 | Text2Image Acc: 85.42 | Data Time: 0.064s | Batch Time: 0.797s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:59:09 | INFO | Rank 0 | Global Steps: 590/1304 | Train Epoch: 1 [113280/250368 (45%)] | Loss: 0.622852 | Image2Text Acc: 81.77 | Text2Image Acc: 81.25 | Data Time: 0.064s | Batch Time: 0.808s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:59:17 | INFO | Rank 0 | Global Steps: 600/1304 | Train Epoch: 1 [115200/250368 (46%)] | Loss: 0.487094 | Image2Text Acc: 86.46 | Text2Image Acc: 84.90 | Data Time: 0.064s | Batch Time: 0.814s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:59:25 | INFO | Rank 0 | Global Steps: 610/1304 | Train Epoch: 1 [117120/250368 (47%)] | Loss: 0.398079 | Image2Text Acc: 87.50 | Text2Image Acc: 88.54 | Data Time: 0.063s | Batch Time: 0.819s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:59:33 | INFO | Rank 0 | Global Steps: 620/1304 | Train Epoch: 1 [119040/250368 (48%)] | Loss: 0.488776 | Image2Text Acc: 86.98 | Text2Image Acc: 86.46 | Data Time: 0.064s | Batch Time: 0.805s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:59:41 | INFO | Rank 0 | Global Steps: 630/1304 | Train Epoch: 1 [120960/250368 (48%)] | Loss: 0.447914 | Image2Text Acc: 86.46 | Text2Image Acc: 87.50 | Data Time: 0.062s | Batch Time: 0.786s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:59:49 | INFO | Rank 0 | Global Steps: 640/1304 | Train Epoch: 1 [122880/250368 (49%)] | Loss: 0.634602 | Image2Text Acc: 81.25 | Text2Image Acc: 83.85 | Data Time: 0.063s | Batch Time: 0.821s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,12:59:57 | INFO | Rank 0 | Global Steps: 650/1304 | Train Epoch: 1 [124800/250368 (50%)] | Loss: 0.566197 | Image2Text Acc: 84.90 | Text2Image Acc: 84.90 | Data Time: 0.064s | Batch Time: 0.801s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:00:05 | INFO | Rank 0 | Global Steps: 660/1304 | Train Epoch: 1 [126720/250368 (51%)] | Loss: 0.591476 | Image2Text Acc: 82.29 | Text2Image Acc: 84.38 | Data Time: 0.064s | Batch Time: 0.809s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:00:13 | INFO | Rank 0 | Global Steps: 670/1304 | Train Epoch: 1 [128640/250368 (51%)] | Loss: 0.673138 | Image2Text Acc: 80.73 | Text2Image Acc: 81.25 | Data Time: 0.062s | Batch Time: 0.809s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:00:21 | INFO | Rank 0 | Global Steps: 680/1304 | Train Epoch: 1 [130560/250368 (52%)] | Loss: 0.478046 | Image2Text Acc: 86.46 | Text2Image Acc: 86.98 | Data Time: 0.062s | Batch Time: 0.793s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:00:30 | INFO | Rank 0 | Global Steps: 690/1304 | Train Epoch: 1 [132480/250368 (53%)] | Loss: 0.613619 | Image2Text Acc: 82.29 | Text2Image Acc: 82.29 | Data Time: 0.063s | Batch Time: 0.787s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:00:38 | INFO | Rank 0 | Global Steps: 700/1304 | Train Epoch: 1 [134400/250368 (54%)] | Loss: 0.466058 | Image2Text Acc: 87.50 | Text2Image Acc: 85.94 | Data Time: 0.064s | Batch Time: 0.801s | LR: 0.000002 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:00:46 | INFO | Rank 0 | Global Steps: 710/1304 | Train Epoch: 1 [136320/250368 (54%)] | Loss: 0.561358 | Image2Text Acc: 82.29 | Text2Image Acc: 84.38 | Data Time: 0.064s | Batch Time: 0.808s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:00:54 | INFO | Rank 0 | Global Steps: 720/1304 | Train Epoch: 1 [138240/250368 (55%)] | Loss: 0.450772 | Image2Text Acc: 85.94 | Text2Image Acc: 84.90 | Data Time: 0.065s | Batch Time: 0.813s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:01:02 | INFO | Rank 0 | Global Steps: 730/1304 | Train Epoch: 1 [140160/250368 (56%)] | Loss: 0.476233 | Image2Text Acc: 85.94 | Text2Image Acc: 86.46 | Data Time: 0.065s | Batch Time: 0.793s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:01:10 | INFO | Rank 0 | Global Steps: 740/1304 | Train Epoch: 1 [142080/250368 (57%)] | Loss: 0.387905 | Image2Text Acc: 89.06 | Text2Image Acc: 89.06 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:01:18 | INFO | Rank 0 | Global Steps: 750/1304 | Train Epoch: 1 [144000/250368 (58%)] | Loss: 0.627155 | Image2Text Acc: 81.25 | Text2Image Acc: 80.73 | Data Time: 0.065s | Batch Time: 0.793s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:01:26 | INFO | Rank 0 | Global Steps: 760/1304 | Train Epoch: 1 [145920/250368 (58%)] | Loss: 0.629064 | Image2Text Acc: 82.81 | Text2Image Acc: 82.81 | Data Time: 0.065s | Batch Time: 0.805s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:01:34 | INFO | Rank 0 | Global Steps: 770/1304 | Train Epoch: 1 [147840/250368 (59%)] | Loss: 0.477794 | Image2Text Acc: 83.33 | Text2Image Acc: 87.50 | Data Time: 0.062s | Batch Time: 0.800s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:01:42 | INFO | Rank 0 | Global Steps: 780/1304 | Train Epoch: 1 [149760/250368 (60%)] | Loss: 0.493179 | Image2Text Acc: 85.42 | Text2Image Acc: 84.38 | Data Time: 0.065s | Batch Time: 0.805s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:01:50 | INFO | Rank 0 | Global Steps: 790/1304 | Train Epoch: 1 [151680/250368 (61%)] | Loss: 0.375111 | Image2Text Acc: 89.06 | Text2Image Acc: 92.71 | Data Time: 0.062s | Batch Time: 0.790s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:01:58 | INFO | Rank 0 | Global Steps: 800/1304 | Train Epoch: 1 [153600/250368 (61%)] | Loss: 0.536498 | Image2Text Acc: 82.29 | Text2Image Acc: 81.25 | Data Time: 0.064s | Batch Time: 0.855s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:02:06 | INFO | Rank 0 | Global Steps: 810/1304 | Train Epoch: 1 [155520/250368 (62%)] | Loss: 0.601442 | Image2Text Acc: 83.33 | Text2Image Acc: 85.42 | Data Time: 0.062s | Batch Time: 0.799s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:02:14 | INFO | Rank 0 | Global Steps: 820/1304 | Train Epoch: 1 [157440/250368 (63%)] | Loss: 0.598158 | Image2Text Acc: 82.81 | Text2Image Acc: 85.42 | Data Time: 0.064s | Batch Time: 0.803s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:02:22 | INFO | Rank 0 | Global Steps: 830/1304 | Train Epoch: 1 [159360/250368 (64%)] | Loss: 0.465662 | Image2Text Acc: 86.98 | Text2Image Acc: 85.94 | Data Time: 0.064s | Batch Time: 0.802s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:02:30 | INFO | Rank 0 | Global Steps: 840/1304 | Train Epoch: 1 [161280/250368 (64%)] | Loss: 0.429245 | Image2Text Acc: 89.06 | Text2Image Acc: 86.98 | Data Time: 0.064s | Batch Time: 0.812s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:02:38 | INFO | Rank 0 | Global Steps: 850/1304 | Train Epoch: 1 [163200/250368 (65%)] | Loss: 0.746637 | Image2Text Acc: 80.21 | Text2Image Acc: 77.08 | Data Time: 0.064s | Batch Time: 0.813s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:02:46 | INFO | Rank 0 | Global Steps: 860/1304 | Train Epoch: 1 [165120/250368 (66%)] | Loss: 0.437707 | Image2Text Acc: 85.42 | Text2Image Acc: 85.94 | Data Time: 0.062s | Batch Time: 0.802s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:02:54 | INFO | Rank 0 | Global Steps: 870/1304 | Train Epoch: 1 [167040/250368 (67%)] | Loss: 0.348013 | Image2Text Acc: 90.10 | Text2Image Acc: 90.10 | Data Time: 0.065s | Batch Time: 0.788s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:03:02 | INFO | Rank 0 | Global Steps: 880/1304 | Train Epoch: 1 [168960/250368 (67%)] | Loss: 0.582639 | Image2Text Acc: 85.42 | Text2Image Acc: 85.42 | Data Time: 0.061s | Batch Time: 0.798s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:03:10 | INFO | Rank 0 | Global Steps: 890/1304 | Train Epoch: 1 [170880/250368 (68%)] | Loss: 0.421704 | Image2Text Acc: 84.90 | Text2Image Acc: 87.50 | Data Time: 0.064s | Batch Time: 0.802s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:03:19 | INFO | Rank 0 | Global Steps: 900/1304 | Train Epoch: 1 [172800/250368 (69%)] | Loss: 0.454098 | Image2Text Acc: 87.50 | Text2Image Acc: 82.81 | Data Time: 0.064s | Batch Time: 0.808s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:03:27 | INFO | Rank 0 | Global Steps: 910/1304 | Train Epoch: 1 [174720/250368 (70%)] | Loss: 0.547316 | Image2Text Acc: 84.38 | Text2Image Acc: 85.42 | Data Time: 0.067s | Batch Time: 0.806s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:03:35 | INFO | Rank 0 | Global Steps: 920/1304 | Train Epoch: 1 [176640/250368 (71%)] | Loss: 0.423129 | Image2Text Acc: 85.42 | Text2Image Acc: 85.42 | Data Time: 0.065s | Batch Time: 0.802s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:03:43 | INFO | Rank 0 | Global Steps: 930/1304 | Train Epoch: 1 [178560/250368 (71%)] | Loss: 0.385930 | Image2Text Acc: 88.54 | Text2Image Acc: 88.02 | Data Time: 0.062s | Batch Time: 0.791s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:03:51 | INFO | Rank 0 | Global Steps: 940/1304 | Train Epoch: 1 [180480/250368 (72%)] | Loss: 0.535218 | Image2Text Acc: 84.90 | Text2Image Acc: 84.90 | Data Time: 0.062s | Batch Time: 0.803s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:03:59 | INFO | Rank 0 | Global Steps: 950/1304 | Train Epoch: 1 [182400/250368 (73%)] | Loss: 0.629635 | Image2Text Acc: 83.33 | Text2Image Acc: 83.85 | Data Time: 0.062s | Batch Time: 0.806s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:04:07 | INFO | Rank 0 | Global Steps: 960/1304 | Train Epoch: 1 [184320/250368 (74%)] | Loss: 0.522842 | Image2Text Acc: 84.38 | Text2Image Acc: 86.46 | Data Time: 0.063s | Batch Time: 0.798s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:04:15 | INFO | Rank 0 | Global Steps: 970/1304 | Train Epoch: 1 [186240/250368 (74%)] | Loss: 0.660933 | Image2Text Acc: 81.25 | Text2Image Acc: 81.77 | Data Time: 0.065s | Batch Time: 0.800s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:04:23 | INFO | Rank 0 | Global Steps: 980/1304 | Train Epoch: 1 [188160/250368 (75%)] | Loss: 0.446656 | Image2Text Acc: 88.54 | Text2Image Acc: 86.98 | Data Time: 0.061s | Batch Time: 0.788s | LR: 0.000001 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:04:31 | INFO | Rank 0 | Global Steps: 990/1304 | Train Epoch: 1 [190080/250368 (76%)] | Loss: 0.461819 | Image2Text Acc: 87.50 | Text2Image Acc: 87.50 | Data Time: 0.064s | Batch Time: 0.805s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:04:39 | INFO | Rank 2 | Begin to eval on validation set (epoch 1 @ 1000 steps)...

- 2024-05-31,13:04:39 | INFO | Rank 3 | Begin to eval on validation set (epoch 1 @ 1000 steps)...

- 2024-05-31,13:04:39 | INFO | Rank 1 | Begin to eval on validation set (epoch 1 @ 1000 steps)...

- 2024-05-31,13:04:39 | INFO | Rank 0 | Global Steps: 1000/1304 | Train Epoch: 1 [192000/250368 (77%)] | Loss: 0.427380 | Image2Text Acc: 85.94 | Text2Image Acc: 84.38 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:04:39 | INFO | Rank 0 | Begin to eval on validation set (epoch 1 @ 1000 steps)...

- /opt/conda/lib/python3.8/site-packages/torch/utils/checkpoint.py:91: UserWarning: None of the inputs have requires_grad=True. Gradients will be None

- warnings.warn(

- /opt/conda/lib/python3.8/site-packages/torch/utils/checkpoint.py:91: UserWarning: None of the inputs have requires_grad=True. Gradients will be None

- warnings.warn(

- /opt/conda/lib/python3.8/site-packages/torch/utils/checkpoint.py:91: UserWarning: None of the inputs have requires_grad=True. Gradients will be None

- warnings.warn(

- /opt/conda/lib/python3.8/site-packages/torch/utils/checkpoint.py:91: UserWarning: None of the inputs have requires_grad=True. Gradients will be None

- warnings.warn(

- 2024-05-31,13:06:24 | INFO | Rank 2 | Evaluated 100/160 batches...

- 2024-05-31,13:06:24 | INFO | Rank 3 | Evaluated 100/160 batches...

- 2024-05-31,13:06:25 | INFO | Rank 0 | Evaluated 100/160 batches...

- 2024-05-31,13:06:26 | INFO | Rank 1 | Evaluated 100/160 batches...

- 2024-05-31,13:07:31 | INFO | Rank 3 | Validation Result (epoch 1 @ 1000 steps) | Valid Loss: 0.153259 | Image2Text Acc: 95.42 | Text2Image Acc: 94.77 | logit_scale: 4.605 | Valid Batch Size: 48

- 2024-05-31,13:07:31 | INFO | Rank 1 | Validation Result (epoch 1 @ 1000 steps) | Valid Loss: 0.153259 | Image2Text Acc: 95.42 | Text2Image Acc: 94.77 | logit_scale: 4.605 | Valid Batch Size: 48

- 2024-05-31,13:07:31 | INFO | Rank 0 | Validation Result (epoch 1 @ 1000 steps) | Valid Loss: 0.153259 | Image2Text Acc: 95.42 | Text2Image Acc: 94.77 | logit_scale: 4.605 | Valid Batch Size: 48

- 2024-05-31,13:07:31 | INFO | Rank 2 | Validation Result (epoch 1 @ 1000 steps) | Valid Loss: 0.153259 | Image2Text Acc: 95.42 | Text2Image Acc: 94.77 | logit_scale: 4.605 | Valid Batch Size: 48

- 2024-05-31,13:07:39 | INFO | Rank 0 | Global Steps: 1010/1304 | Train Epoch: 1 [193920/250368 (77%)] | Loss: 0.422392 | Image2Text Acc: 87.50 | Text2Image Acc: 87.50 | Data Time: 0.065s | Batch Time: 0.804s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:07:47 | INFO | Rank 0 | Global Steps: 1020/1304 | Train Epoch: 1 [195840/250368 (78%)] | Loss: 0.503139 | Image2Text Acc: 84.38 | Text2Image Acc: 83.33 | Data Time: 0.065s | Batch Time: 0.835s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:07:55 | INFO | Rank 0 | Global Steps: 1030/1304 | Train Epoch: 1 [197760/250368 (79%)] | Loss: 0.560809 | Image2Text Acc: 85.94 | Text2Image Acc: 82.29 | Data Time: 0.065s | Batch Time: 0.801s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:08:03 | INFO | Rank 0 | Global Steps: 1040/1304 | Train Epoch: 1 [199680/250368 (80%)] | Loss: 0.511506 | Image2Text Acc: 85.42 | Text2Image Acc: 83.85 | Data Time: 0.064s | Batch Time: 0.817s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:08:11 | INFO | Rank 0 | Global Steps: 1050/1304 | Train Epoch: 1 [201600/250368 (81%)] | Loss: 0.648607 | Image2Text Acc: 78.65 | Text2Image Acc: 81.77 | Data Time: 0.065s | Batch Time: 0.802s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:08:19 | INFO | Rank 0 | Global Steps: 1060/1304 | Train Epoch: 1 [203520/250368 (81%)] | Loss: 0.474479 | Image2Text Acc: 84.90 | Text2Image Acc: 85.94 | Data Time: 0.064s | Batch Time: 0.797s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:08:27 | INFO | Rank 0 | Global Steps: 1070/1304 | Train Epoch: 1 [205440/250368 (82%)] | Loss: 0.581409 | Image2Text Acc: 82.29 | Text2Image Acc: 80.73 | Data Time: 0.064s | Batch Time: 0.800s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:08:35 | INFO | Rank 0 | Global Steps: 1080/1304 | Train Epoch: 1 [207360/250368 (83%)] | Loss: 0.559054 | Image2Text Acc: 84.38 | Text2Image Acc: 85.94 | Data Time: 0.065s | Batch Time: 0.802s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:08:43 | INFO | Rank 0 | Global Steps: 1090/1304 | Train Epoch: 1 [209280/250368 (84%)] | Loss: 0.492287 | Image2Text Acc: 87.50 | Text2Image Acc: 86.46 | Data Time: 0.064s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:08:52 | INFO | Rank 0 | Global Steps: 1100/1304 | Train Epoch: 1 [211200/250368 (84%)] | Loss: 0.435179 | Image2Text Acc: 86.98 | Text2Image Acc: 88.54 | Data Time: 0.065s | Batch Time: 0.790s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:09:00 | INFO | Rank 0 | Global Steps: 1110/1304 | Train Epoch: 1 [213120/250368 (85%)] | Loss: 0.373192 | Image2Text Acc: 84.38 | Text2Image Acc: 86.98 | Data Time: 0.065s | Batch Time: 0.801s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:09:08 | INFO | Rank 0 | Global Steps: 1120/1304 | Train Epoch: 1 [215040/250368 (86%)] | Loss: 0.439508 | Image2Text Acc: 86.46 | Text2Image Acc: 85.94 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:09:16 | INFO | Rank 0 | Global Steps: 1130/1304 | Train Epoch: 1 [216960/250368 (87%)] | Loss: 0.621569 | Image2Text Acc: 81.77 | Text2Image Acc: 79.17 | Data Time: 0.064s | Batch Time: 0.809s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:09:24 | INFO | Rank 0 | Global Steps: 1140/1304 | Train Epoch: 1 [218880/250368 (87%)] | Loss: 0.730607 | Image2Text Acc: 80.21 | Text2Image Acc: 80.73 | Data Time: 0.065s | Batch Time: 0.801s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:09:32 | INFO | Rank 0 | Global Steps: 1150/1304 | Train Epoch: 1 [220800/250368 (88%)] | Loss: 0.525511 | Image2Text Acc: 82.29 | Text2Image Acc: 81.25 | Data Time: 0.064s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:09:40 | INFO | Rank 0 | Global Steps: 1160/1304 | Train Epoch: 1 [222720/250368 (89%)] | Loss: 0.612495 | Image2Text Acc: 80.21 | Text2Image Acc: 82.81 | Data Time: 0.064s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:09:48 | INFO | Rank 0 | Global Steps: 1170/1304 | Train Epoch: 1 [224640/250368 (90%)] | Loss: 0.344216 | Image2Text Acc: 88.54 | Text2Image Acc: 92.19 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:09:56 | INFO | Rank 0 | Global Steps: 1180/1304 | Train Epoch: 1 [226560/250368 (90%)] | Loss: 0.427709 | Image2Text Acc: 89.06 | Text2Image Acc: 88.54 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:10:04 | INFO | Rank 0 | Global Steps: 1190/1304 | Train Epoch: 1 [228480/250368 (91%)] | Loss: 0.512698 | Image2Text Acc: 86.46 | Text2Image Acc: 86.46 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:10:12 | INFO | Rank 0 | Global Steps: 1200/1304 | Train Epoch: 1 [230400/250368 (92%)] | Loss: 0.530723 | Image2Text Acc: 82.81 | Text2Image Acc: 85.42 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:10:20 | INFO | Rank 0 | Global Steps: 1210/1304 | Train Epoch: 1 [232320/250368 (93%)] | Loss: 0.467769 | Image2Text Acc: 86.98 | Text2Image Acc: 85.42 | Data Time: 0.065s | Batch Time: 0.802s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:10:28 | INFO | Rank 0 | Global Steps: 1220/1304 | Train Epoch: 1 [234240/250368 (94%)] | Loss: 0.480477 | Image2Text Acc: 86.98 | Text2Image Acc: 86.46 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:10:36 | INFO | Rank 0 | Global Steps: 1230/1304 | Train Epoch: 1 [236160/250368 (94%)] | Loss: 0.540962 | Image2Text Acc: 82.29 | Text2Image Acc: 88.02 | Data Time: 0.066s | Batch Time: 0.802s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:10:44 | INFO | Rank 0 | Global Steps: 1240/1304 | Train Epoch: 1 [238080/250368 (95%)] | Loss: 0.373845 | Image2Text Acc: 91.15 | Text2Image Acc: 88.02 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:10:52 | INFO | Rank 0 | Global Steps: 1250/1304 | Train Epoch: 1 [240000/250368 (96%)] | Loss: 0.580477 | Image2Text Acc: 82.81 | Text2Image Acc: 84.38 | Data Time: 0.064s | Batch Time: 0.853s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:11:00 | INFO | Rank 0 | Global Steps: 1260/1304 | Train Epoch: 1 [241920/250368 (97%)] | Loss: 0.437408 | Image2Text Acc: 88.02 | Text2Image Acc: 89.06 | Data Time: 0.065s | Batch Time: 0.804s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:11:08 | INFO | Rank 0 | Global Steps: 1270/1304 | Train Epoch: 1 [243840/250368 (97%)] | Loss: 0.525449 | Image2Text Acc: 84.90 | Text2Image Acc: 85.94 | Data Time: 0.065s | Batch Time: 0.802s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:11:16 | INFO | Rank 0 | Global Steps: 1280/1304 | Train Epoch: 1 [245760/250368 (98%)] | Loss: 0.531416 | Image2Text Acc: 84.38 | Text2Image Acc: 82.29 | Data Time: 0.065s | Batch Time: 0.792s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:11:24 | INFO | Rank 0 | Global Steps: 1290/1304 | Train Epoch: 1 [247680/250368 (99%)] | Loss: 0.459088 | Image2Text Acc: 84.38 | Text2Image Acc: 85.94 | Data Time: 0.065s | Batch Time: 0.803s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:11:32 | INFO | Rank 0 | Global Steps: 1300/1304 | Train Epoch: 1 [249600/250368 (100%)] | Loss: 0.397251 | Image2Text Acc: 88.02 | Text2Image Acc: 89.06 | Data Time: 0.065s | Batch Time: 0.795s | LR: 0.000000 | logit_scale: 4.605 | Global Batch Size: 192

- 2024-05-31,13:11:35 | INFO | Rank 0 | Begin to eval on validation set (epoch 1 @ 1304 steps)...

- 2024-05-31,13:11:35 | INFO | Rank 1 | Begin to eval on validation set (epoch 1 @ 1304 steps)...

- 2024-05-31,13:11:36 | INFO | Rank 3 | Begin to eval on validation set (epoch 1 @ 1304 steps)...

- 2024-05-31,13:11:36 | INFO | Rank 2 | Begin to eval on validation set (epoch 1 @ 1304 steps)...

- 2024-05-31,13:13:21 | INFO | Rank 2 | Evaluated 100/160 batches...

- 2024-05-31,13:13:22 | INFO | Rank 3 | Evaluated 100/160 batches...

- 2024-05-31,13:13:22 | INFO | Rank 0 | Evaluated 100/160 batches...

- 2024-05-31,13:13:23 | INFO | Rank 1 | Evaluated 100/160 batches...

- 2024-05-31,13:14:28 | INFO | Rank 2 | Validation Result (epoch 1 @ 1304 steps) | Valid Loss: 0.152322 | Image2Text Acc: 95.44 | Text2Image Acc: 94.74 | logit_scale: 4.605 | Valid Batch Size: 48

- 2024-05-31,13:14:28 | INFO | Rank 0 | Validation Result (epoch 1 @ 1304 steps) | Valid Loss: 0.152322 | Image2Text Acc: 95.44 | Text2Image Acc: 94.74 | logit_scale: 4.605 | Valid Batch Size: 48

- 2024-05-31,13:14:28 | INFO | Rank 3 | Validation Result (epoch 1 @ 1304 steps) | Valid Loss: 0.152322 | Image2Text Acc: 95.44 | Text2Image Acc: 94.74 | logit_scale: 4.605 | Valid Batch Size: 48

- 2024-05-31,13:14:28 | INFO | Rank 1 | Validation Result (epoch 1 @ 1304 steps) | Valid Loss: 0.152322 | Image2Text Acc: 95.44 | Text2Image Acc: 94.74 | logit_scale: 4.605 | Valid Batch Size: 48

- Exception in thread Exception in thread 2024-05-31,13:14:35 | INFO | Rank 0 | Saved checkpoint ./logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints/epoch1.pt (epoch 1 @ 1304 steps) (writing took 7.041405200958252 seconds)

- 2024-05-31,13:14:41 | INFO | Rank 0 | Saved checkpoint ./logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints/epoch_latest.pt (epoch 1 @ 1304 steps) (writing took 6.167497396469116 seconds)

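Performance analysis

The fine-tuning run took 20 minutes on four A100 GPUs.

When this step finishes you should have an epoch_latest.pt file holding the saved weights. In my run it was written to ./logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints/epoch_latest.pt, as the last log line above shows.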
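As a rough cross-check of the timing, the training log lines above can be parsed to estimate how long one epoch should take. This is only a sketch: the regular expression and the helper names (`parse_train_line`, `estimate_training_minutes`, `samples_per_second`) are my own and simply follow the log layout shown above; they are not part of Chinese-CLIP.

```python
import re

# One training log line copied from the run above.
SAMPLE_LINE = (
    "2024-05-31,13:00:46 | INFO | Rank 0 | Global Steps: 710/1304 | "
    "Train Epoch: 1 [136320/250368 (54%)] | Loss: 0.561358 | "
    "Image2Text Acc: 82.29 | Text2Image Acc: 84.38 | Data Time: 0.064s | "
    "Batch Time: 0.808s | LR: 0.000001 | logit_scale: 4.605 | "
    "Global Batch Size: 192"
)

# Hypothetical pattern written for the log layout shown above.
TRAIN_LINE = re.compile(
    r"Global Steps: (?P<step>\d+)/(?P<total_steps>\d+) \| .*?"
    r"Loss: (?P<loss>[0-9.]+) \| .*?"
    r"Batch Time: (?P<batch_time>[0-9.]+)s \| .*?"
    r"Global Batch Size: (?P<global_batch>\d+)"
)


def parse_train_line(line):
    """Return the numeric fields of one training log line, or None if it does not match."""
    match = TRAIN_LINE.search(line)
    if match is None:
        return None
    return {
        "step": int(match["step"]),
        "total_steps": int(match["total_steps"]),
        "loss": float(match["loss"]),
        "batch_time": float(match["batch_time"]),
        "global_batch": int(match["global_batch"]),
    }


def estimate_training_minutes(record):
    """Training time for one epoch, ignoring validation and checkpoint writing."""
    return record["total_steps"] * record["batch_time"] / 60.0


def samples_per_second(record):
    """Samples processed per second across all GPUs."""
    return record["global_batch"] / record["batch_time"]


record = parse_train_line(SAMPLE_LINE)
print(record)
print(f"training only: {estimate_training_minutes(record):.1f} min")
print(f"throughput: {samples_per_second(record):.0f} samples/s")
```

With 1304 steps at roughly 0.8 s per step, training alone comes to about 17 to 18 minutes. The two validation passes in the log each take a little under 3 minutes (13:04:39 to 13:07:31 and 13:11:35 to 13:14:28), so the total is in the same range as the 20 minutes reported above.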

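Feature extraction (extract)

This step runs inference with the fine-tuned weights from above to obtain features for the test set.

Create the following script inside the Chinese-CLIP folder and run it.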

- export PYTHONPATH=${PYTHONPATH}:`pwd`/cn_clip;

- python -u cn_clip/eval/extract_features.py \

- --extract-image-feats \

- --extract-text-feats \

- --image-data="../datapath/datasets/MUGE/lmdb/test/imgs" \

- --text-data="../datapath/datasets/MUGE/test_texts.jsonl" \

- --img-batch-size=32 \

- --text-batch-size=32 \

- --context-length=52 \

- --resume=logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints/epoch_latest.pt \

- --vision-model=ViT-H-14 \

- --text-model=RoBERTa-wwm-ext-large-chinese

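Using the weights just trained, this step extracts features from the test-set texts and images, producing one feature matrix for the query texts and one for all candidate images.

My run output is given below, for reference only.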

- (base) root@8f788dfb2acd:/home/datatry/cclip-task/Chinese-CLIP# ./run_scripts/my-extract.sh

- Params:

- context_length: 52

- debug: False

- extract_image_feats: True

- extract_text_feats: True

- image_data: ../datapath/datasets/MUGE/lmdb/test/imgs

- image_feat_output_path: None

- img_batch_size: 32

- precision: amp

- resume: logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints/epoch_latest.pt

- text_batch_size: 32

- text_data: ../datapath/datasets/MUGE/test_texts.jsonl

- text_feat_output_path: None

- text_model: RoBERTa-wwm-ext-large-chinese

- vision_model: ViT-H-14

- Loading vision model config from cn_clip/clip/model_configs/ViT-H-14.json

- Loading text model config from cn_clip/clip/model_configs/RoBERTa-wwm-ext-large-chinese.json

- Preparing image inference dataset.

- Preparing text inference dataset.

- Begin to load model checkpoint from logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints/epoch_latest.pt.

- => loaded checkpoint 'logs/muge_finetune_vit-h-14_roberta-huge_bs48_1gpu/checkpoints/epoch_latest.pt' (epoch 1 @ 1304 steps)

- Make inference for texts...

- 100%|██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 157/157 [00:15<00:00, 10.02it/s]

- 5004 text features are stored in ../datapath/datasets/MUGE/test_texts.txt_feat.jsonl

- Make inference for images...

- 100%|██████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 950/950 [11:09<00:00, 1.42it/s]

- 30399 image features are stored in ../datapath/datasets/MUGE/test_imgs.img_feat.jsonl

- Done!
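The two feature files named in the log above can be read back for inspection. The sketch below assumes that each line is a JSON object with an id field (`text_id` or `image_id`) and a `feature` list; that layout is an assumption on my part, so check one line of your own file first. The helper names `load_features` and `l2_normalize` are hypothetical, and the tiny in-memory file stands in for the real one.

```python
import io
import json
import math


def l2_normalize(vec):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def load_features(lines, id_key):
    """Read {id_key: ..., "feature": [...]} records into two parallel lists.

    The field names are an assumption about the *_feat.jsonl files;
    adjust them if your files look different.
    """
    ids, feats = [], []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        obj = json.loads(line)
        ids.append(obj[id_key])
        feats.append(l2_normalize(obj["feature"]))
    return ids, feats


# Made-up two-dimensional stand-in for test_texts.txt_feat.jsonl
# (real features are much longer).
fake_file = io.StringIO(
    '{"text_id": 1, "feature": [3.0, 4.0]}\n'
    '{"text_id": 2, "feature": [0.0, 2.0]}\n'
)
text_ids, text_feats = load_features(fake_file, "text_id")
print(text_ids)
print(text_feats)
```

For the real files, pass an open file handle instead of `fake_file`. Normalizing again is harmless if the stored features are already unit length.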

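Prediction (predict)

This step takes the query and image feature matrices just obtained and uses the K-nearest-neighbor (KNN) algorithm to find, for each query, the images closest to it.

Create the following script inside the Chinese-CLIP folder and run it.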

- export PYTHONPATH=${PYTHONPATH}:`pwd`/cn_clip;

- python -u cn_clip/eval/make_topk_predictions.py \

- --image-feats="../datapath/datasets/MUGE/test_imgs.img_feat.jsonl" \

- --text-feats="../datapath/datasets/MUGE/test_texts.txt_feat.jsonl" \

- --top-k=10 \

- --eval-batch-size=32768 \

- --output="../datapath/datasets/MUGE/test_predictions.jsonl"

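This step only runs a K-nearest-neighbor search. It is not itself a deep learning step, so it does not need many resources. The core idea is to compute, for each query feature vector, a similarity score against every image and then keep the 10 closest images (the actual implementation may differ, but this is the main idea).

My run output is given below for reference.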
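The idea can be sketched in a few lines of plain Python. This is a toy illustration, not the code in make_topk_predictions.py: it assumes unit-length feature vectors and uses the dot product as the similarity, and the function names `dot` and `top_k_images` as well as the ids and vectors are made up.

```python
import heapq


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def top_k_images(text_feat, image_ids, image_feats, k=10):
    """Return the ids of the k images most similar to one query, best first."""
    scored = (
        (dot(text_feat, feat), image_id)
        for image_id, feat in zip(image_ids, image_feats)
    )
    best = heapq.nlargest(k, scored)  # keeps only the k highest scores
    return [image_id for _, image_id in best]


# Made-up two-dimensional features for four images and one query.
image_ids = [101, 102, 103, 104]
image_feats = [[1.0, 0.0], [0.0, 1.0], [0.6, 0.8], [-1.0, 0.0]]
query = [0.8, 0.6]

print(top_k_images(query, image_ids, image_feats, k=2))
```

Scoring every query against every image is a brute-force search, which is fine at this scale (5004 queries and 30399 images in the run above).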

- (base) root@8f788dfb2acd:/home/datatry/cclip-task/Chinese-CLIP# ./run_scripts/my-predict.sh

- Params:

- eval_batch_size: 32768

- image_feats: ../datapath/datasets/MUGE/test_imgs.img_feat.jsonl

- output: ../datapath/datasets/MUGE/test_predictions.jsonl

- text_feats: ../datapath/datasets/MUGE/test_texts.txt_feat.jsonl

- top_k: 10

- Begin to load image features...

- 30399it [00:10, 2784.06it/s]

- Finished loading image features.

- Begin to compute top-10 predictions for texts...

- 5004it [01:54, 43.73it/s]

- Top-10 predictions are saved in ../datapath/datasets/MUGE/test_predictions.jsonl

- Done!

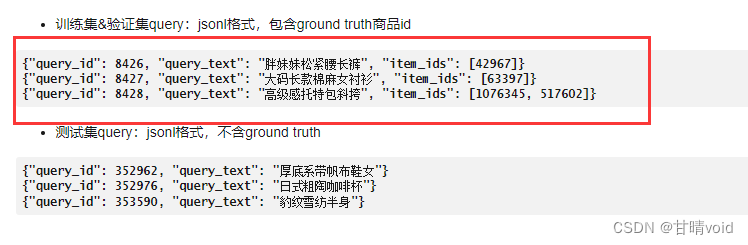

Merging the results and submitting

The prediction step produces output in a format like this:

- {"text_id": 342160, "image_ids": [511583, 911643, 599057, 224560, 434528, 808493, 155774, 368883, 282239, 908966]}

- {"text_id": 342169, "image_ids": [686662, 1122644, 969455, 277134, 492780, 990543, 394387, 978460, 154994, 392466]}

- {"text_id": 342177, "image_ids": [1069979, 75011, 814416, 642083, 173661, 108900, 787973, 46653, 752130, 495966]}

- {"text_id": 342198, "image_ids": [1015004, 277415, 25870, 945533, 727387, 1122730, 1061659, 944337, 442797, 862027]}

- {"text_id": 342202, "image_ids": [1122365, 376418, 270450, 529722, 566880, 426942, 599959, 19213, 1011537, 221047]}

- {"text_id": 342204, "image_ids": [499085, 306775, 1101124, 1031161, 926078, 1073678, 345498, 265649, 829036, 244305]}

- {"text_id": 342219, "image_ids": [823609, 173789, 414377, 719108, 821670, 993925, 566018, 1014959, 954656, 1073819]}

- {"text_id": 342224, "image_ids": [533760, 872048, 935209, 237532, 295600, 149511, 335224, 24635, 260950, 369096]}

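The format we need, however, also carries the query text: each record has query_id, query_text, and item_ids fields, so the text has to be added back.

The following Python code does this.

Go to the datapath/datasets/MUGE folder (where the scripts above read test_texts.jsonl and wrote test_predictions.jsonl), create this script there, and run it to get the final result, output.jsonl.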

- import json

-

- # File paths

- file1_path = "test_texts.jsonl"

- file2_path = "test_predictions.jsonl"

- output_path = "output.jsonl"

-

- # Read the first file into a dictionary

- data1 = {}

- with open(file1_path, "r", encoding="utf-8") as f1:

-     for line in f1:

-         obj = json.loads(line.strip())

-         # Map each text_id to its text

-         data1[obj['text_id']] = obj['text']

-

- # Read the second file and join each prediction with its query text

- output_data = []

- with open(file2_path, "r", encoding="utf-8") as f2:

-     for line in f2:

-         obj = json.loads(line.strip())

-         query_id = obj['text_id']

-         if query_id in data1:

-             combined_obj = {

-                 "query_id": query_id,

-                 "query_text": data1[query_id],

-                 "item_ids": obj['image_ids']

-             }

-             output_data.append(combined_obj)

-

- # Write the merged records to the output file

- with open(output_path, "w", encoding="utf-8") as outfile:

-     for item in output_data:

-         json.dump(item, outfile, ensure_ascii=False)

-         outfile.write("\n")
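Before submitting, it may be worth sanity-checking the merged file. The sketch below is my own addition: the function name `check_submission` is hypothetical, the expected count of 10 items per query is taken from the `--top-k=10` setting above rather than from any official rule, and the query text in the demo record is a placeholder.

```python
import io
import json

REQUIRED_KEYS = {"query_id", "query_text", "item_ids"}


def check_submission(lines, expected_k=10):
    """Return a list of human-readable problems found in the merged records."""
    problems = []
    seen_ids = set()
    for line_no, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue  # ignore blank lines
        obj = json.loads(line)
        missing = REQUIRED_KEYS - obj.keys()
        if missing:
            problems.append(f"line {line_no}: missing {sorted(missing)}")
            continue
        if obj["query_id"] in seen_ids:
            problems.append(f"line {line_no}: duplicate query_id {obj['query_id']}")
        seen_ids.add(obj["query_id"])
        if len(obj["item_ids"]) != expected_k:
            problems.append(f"line {line_no}: expected {expected_k} item_ids, "
                            f"got {len(obj['item_ids'])}")
        if len(set(obj["item_ids"])) != len(obj["item_ids"]):
            problems.append(f"line {line_no}: repeated item id")
    return problems


# Demo record: ids copied from the prediction output above,
# query_text is a made-up placeholder.
demo = io.StringIO(json.dumps({
    "query_id": 342160,
    "query_text": "placeholder query",
    "item_ids": [511583, 911643, 599057, 224560, 434528,
                 808493, 155774, 368883, 282239, 908966],
}) + "\n")
print(check_submission(demo))
```

To check the real file, open output.jsonl with `encoding="utf-8"` and pass the file handle to `check_submission`; an empty list means none of these checks failed.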

Submit the result.

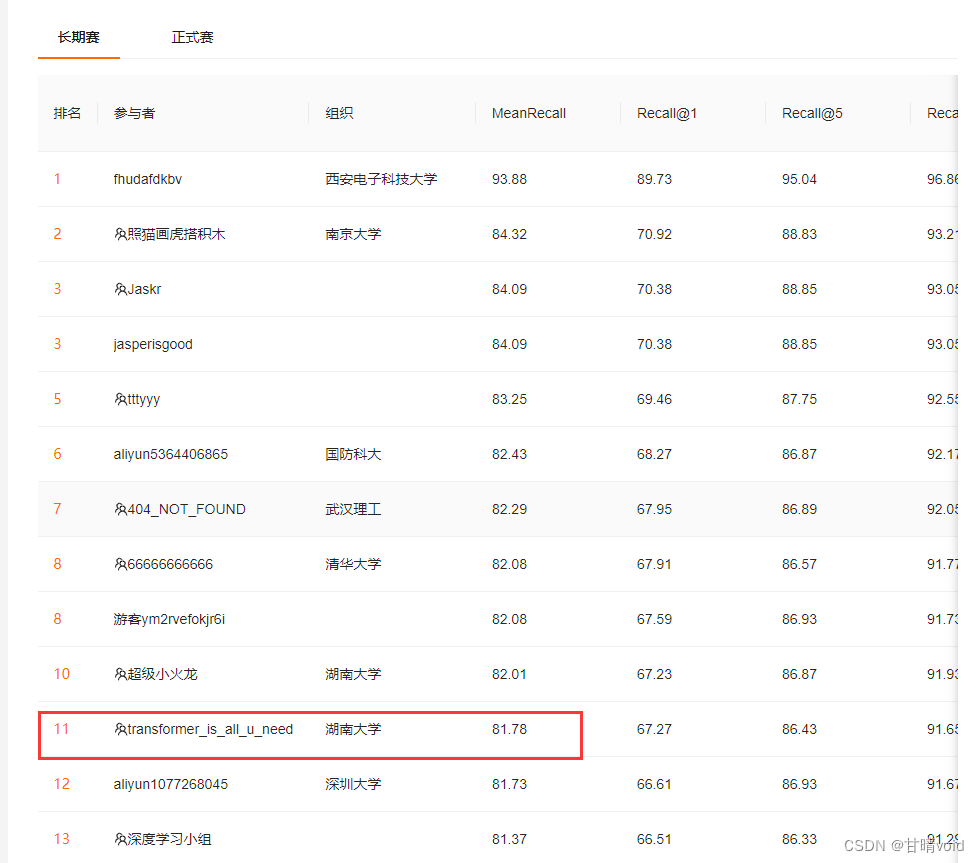

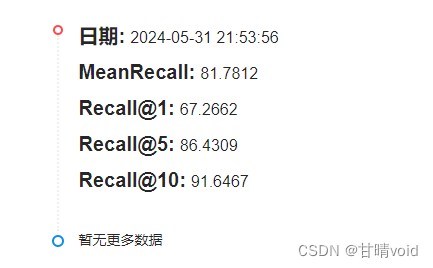

The provisional ranking is as follows: