- 1大数据分析技术与方法有哪些?_大数据技术方法有哪些

- 2HanLP作者出品|推荐一本自然语言处理入门书籍|包邮送5本

- 3Stable Diffusion入门使用技巧及个人试用实例分享--SD提示词及ControlNet篇

- 4【Datawhale AI 夏令营】基于术语词典干预的机器翻译挑战赛 Task 1

- 5【JAVA】可视化窗口制作_java可视化界面

- 6HIVE——常用sql命令总结_hive执行sql文件

- 7使用FastReport设计分组汇总及合计报表(图文)_delphi fastreport 分组合计

- 8GitHub敏感信息扫描工具

- 9Python自动化实现抖音自动刷视频_抖音自动化测试

- 10你需要掌握的插件化知识_插件化技术

大数据基础平台搭建-(三)Hadoop集群HA+Zookeeper搭建_大数据基础平台搭建-(三

赞

踩

大数据基础平台搭建-(三)Hadoop集群HA+Zookeeper搭建

大数据平台系列文章:

1、大数据基础平台搭建-(一)基础环境准备

2、大数据基础平台搭建-(二)Hadoop集群搭建

3、大数据基础平台搭建-(三)Hadoop集群HA+Zookeeper搭建

4、大数据基础平台搭建-(四)HBase集群HA+Zookeeper搭建

5、大数据基础平台搭建-(五)Hive搭建

大数据平台是基于Apache Hadoop_3.3.4搭建的;

目录

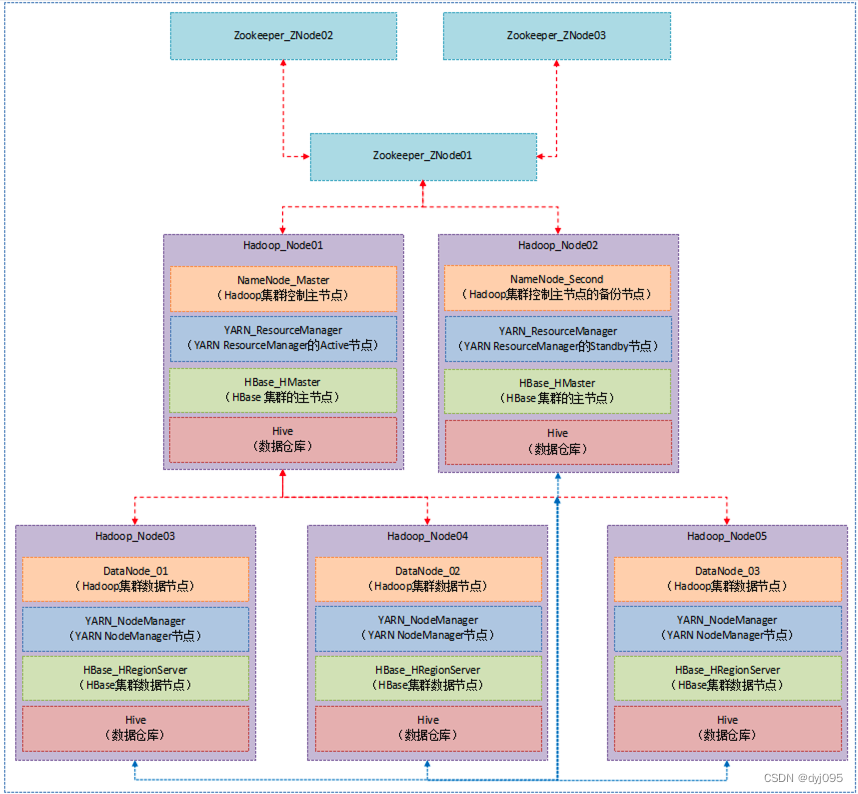

一、部署架构

二、Hadoop集群节点分布情况

| 序号 | 服务节点 | NameNode节点 | Zookeeper节点 | journalnode节点 | datanode节点 | resourcemanager节点 |

|---|---|---|---|---|---|---|

| 1 | hNode1 | √ | √ | √ | - | √ |

| 2 | hNode2 | √ | √ | √ | - | √ |

| 3 | hNode3 | - | √ | √ | √ | - |

| 4 | hNode4 | - | - | - | √ | - |

| 5 | hNode5 | - | - | - | √ | - |

三、搭建Zookeeper集群

1、在hnode1服务器上部署Zookeeper

1). 解压安装包

[root@hnode1 ~]# cd /opt/

[root@hnode1 opt]# tar -xzvf ./apache-zookeeper-3.8.0-bin.tar.gz /opt/zk/apache-zookeeper-3.8.0-bin

[root@hnode1 opt]# cd /opt/zk/apache-zookeeper-3.8.0-bin

- 1

- 2

- 3

2). 配置环境变量

[root@hnode1 apache-zookeeper-3.8.0-bin]# vim /etc/profile

- 1

#Zookeeper

export ZOOKEEPER_HOME=/opt/zk/apache-zookeeper-3.8.0-bin

export PATH=$PATH:$ZOOKEEPER_HOME/bin

- 1

- 2

- 3

[root@hnode1 apache-zookeeper-3.8.0-bin]# source /etc/profile

- 1

3). 配置zookeeper

[root@znode apache-zookeeper-3.8.0-bin]# mkdir zkData

[root@znode apache-zookeeper-3.8.0-bin]# cd conf

[root@znode conf]# cp ./zoo_sample.cfg ./zoo.cfg

[root@znode conf]# vim ./zoo.cfg

- 1

- 2

- 3

- 4

dataDir=/opt/zk/apache-zookeeper-3.8.0-bin/zkData

#添加集群中其他节点的信息

server.1=hnode1:2888:3888

server.2=hnode2:2888:3888

server.3=hnode3:2888:3888

- 1

- 2

- 3

- 4

- 5

[root@hnode1 apache-zookeeper-3.8.0-bin]# source /etc/profile

- 1

4). 在zkData目录生成myid文件

[root@znode apache-zookeeper-3.8.0-bin]# cd zkData/

[root@znode zkData]# vim myid

- 1

- 2

1

- 1

2、在hnode2服务器上部署Zookeeper

1). 从hnode1服务器复制Zookeeper安装目录

[root@hnode2 ~]# cd /opt/

[root@hnode2 opt]# mkdir zk

[root@hnode2 opt]# cd zk

[root@hnode2 zk]# scp -r root@hnode1:/opt/zk/apache-zookeeper-3.8.0-bin ./

- 1

- 2

- 3

- 4

2). 配置环境变量

[root@hnode2 zk]# vim /etc/profile

- 1

#Zookeeper

export ZOOKEEPER_HOME=/opt/zk/apache-zookeeper-3.8.0-bin

export PATH=$PATH:$ZOOKEEPER_HOME/bin

- 1

- 2

- 3

[root@hnode2 zk]# source /etc/profile

- 1

3). 修改myid

[root@hnode2 zk]# cd apache-zookeeper-3.8.0-bin/zkData/

[root@hnode2 zkData]# vim myid

- 1

- 2

2

- 1

3、在hnode3服务器上部署Zookeeper

1). 从hnode1服务器复制Zookeeper安装目录

[root@hnode3 ~]# cd /opt/

[root@hnode3 opt]# mkdir zk

[root@hnode3 opt]# cd zk

[root@hnode3 zk]# scp -r root@hnode1:/opt/zk/apache-zookeeper-3.8.0-bin ./

- 1

- 2

- 3

- 4

2). 配置环境变量

[root@hnode3 zk]# vim /etc/profile

- 1

#Zookeeper

export ZOOKEEPER_HOME=/opt/zk/apache-zookeeper-3.8.0-bin

export PATH=$PATH:$ZOOKEEPER_HOME/bin

- 1

- 2

- 3

[root@hnode3 zk]# source /etc/profile

- 1

3). 修改myid

[root@hnode3 zk]# cd apache-zookeeper-3.8.0-bin/zkData/

[root@hnode3 zkData]# vim myid

- 1

- 2

3

- 1

四、修改Hadoop配置,HA模式

1、在hnode1编辑core-site.xml

[root@hnode1 hadoop]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode1 hadoop]# vim core-site.xml

- 1

- 2

<configuration> <!-- 在读写SequenceFiles时缓存区大小128k --> <property> <name>io.file.buffer.size</name> <value>131072</value> </property> <!-- 指定 hadoop 数据的存储目录 --> <property> <name>hadoop.tmp.dir</name> <value>/opt/hadoop/data</value> </property> <!-- 配置 HDFS 网页登录使用的静态用户为 root --> <property> <name>hadoop.http.staticuser.user</name> <value>root</value> </property> <property> <name>fs.defaultFS</name> <value>hdfs://cluster</value> </property> <property> <name>fs.trash.interval</name> <value>1440</value> </property> <property> <name>ha.zookeeper.quorum</name> <value>hnode1:2181,hnode2:2181,hnode3:2181</value> </property> <property> <name>hadoop.zk.address</name> <value>hnode1:2181,hnode2:2181,hnode3:2181</value> </property> <property> <name>ha.zookeeper.session-timeout.ms</name> <value>10000</value> <description>hadoop链接zookeeper的超时时长设置ms</description> </property> </configuration>

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

2、在hnode1上编辑hdfs-site.xml

[root@hnode1 hadoop]# vim hdfs-site.xml

- 1

<configuration> <!-- 指定hdfs元数据存储的路径 --> <property> <name>dfs.namenode.name.dir</name> <value>/opt/hadoop/data/namenode</value> </property> <!-- 指定hdfs数据存储的路径 --> <property> <name>dfs.datanode.data.dir</name> <value>/opt/hadoop/data/datanode</value> </property> <!-- 数据备份的个数 --> <property> <name>dfs.replication</name> <value>2</value> </property> <!-- 关闭权限验证 --> <property> <name>dfs.permissions.enabled</name> <value>false</value> </property> <!-- 开启WebHDFS功能(基于REST的接口服务) --> <property> <name>dfs.webhdfs.enabled</name> <value>true</value> </property> <!-- //以下为HDFS HA的配置// --> <!-- 指定hdfs的nameservices名称为mycluster --> <property> <name>dfs.nameservices</name> <value>cluster</value> </property> <!-- 指定cluster的两个namenode的名称分别为nn1,nn2 --> <property> <name>dfs.ha.namenodes.cluster</name> <value>nn1,nn2</value> </property> <!-- 配置nn1,nn2的rpc通信端口 --> <property> <name>dfs.namenode.rpc-address.cluster.nn1</name> <value>hnode1:8020</value> </property> <property> <name>dfs.namenode.rpc-address.cluster.nn2</name> <value>hnode2:8020</value> </property> <!-- 配置nn1,nn2的http通信端口 --> <property> <name>dfs.namenode.http-address.cluster.nn1</name> <value>hnode1:50070</value> </property> <property> <name>dfs.namenode.http-address.cluster.nn2</name> <value>hnode2:50070</value> </property> <!-- 指定namenode元数据存储在journalnode中的路径 --> <property> <name>dfs.namenode.shared.edits.dir</name> <value>qjournal://hnode1:8485;hnode2:8485;hnode3:8485/cluster</value> </property> <!-- 指定journalnode日志文件存储的路径 --> <property> <name>dfs.journalnode.edits.dir</name> <value>/opt/hadoop/data/journal</value> </property> <!-- 指定HDFS客户端连接active namenode的java类 --> <property> <name>dfs.client.failover.proxy.provider.cluster</name> <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value> </property> <!-- 配置隔离机制为ssh --> <property> <name>dfs.ha.fencing.methods</name> <value>sshfence</value> </property> <!-- 指定秘钥的位置 --> <property> <name>dfs.ha.fencing.ssh.private-key-files</name> <value>/root/.ssh/id_rsa</value> </property> <!-- 开启自动故障转移 --> <property> <name>dfs.ha.automatic-failover.enabled</name> <value>true</value> </property> </configuration>

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

- 100

3、在hnode1上编辑yarn-site.xml

[root@hnode1 hadoop]# vim yarn-site.xml

- 1

<configuration> <!-- 容错 --> <property> <name>yarn.resourcemanager.connect.retry-interval.ms</name> <value>10000</value> </property> <property> <name>yarn.resourcemanager.ha.enabled</name> <value>true</value> </property> <property> <name>yarn.resourcemanager.ha.automatic-failover.enabled</name> <value>true</value> </property> <!-- ResourceManager重启容错 --> <property> <name>yarn.resourcemanager.recovery.enabled</name> <value>true</value> <description>RM 重启过程中不影响正在运行的作业</description> </property> <!-- 应用的状态信息存储方案:ZK --> <property> <name>yarn.resourcemanager.store.class</name> <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value> <description>应用的状态等信息保存方式:ha只支持ZKRMStateStore</description> </property> <!-- yarn集群配置 --> <property> <name>yarn.resourcemanager.cluster-id</name> <value>cluster</value> </property> <property> <name>yarn.resourcemanager.ha.rm-ids</name> <value>rm1,rm2</value> </property> <property> <name>yarn.resourcemanager.scheduler.class</name> <value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value> </property> <property> <name>yarn.resourcemanager.work-preserving-recovery.enabled</name> <value>true</value> </property> <!-- rm1 configs --> <property> <name>yarn.resourcemanager.hostname.rm1</name> <value>hnode2</value> </property> <property> <name>yarn.resourcemanager.address.rm1</name> <value>hnode2:8032</value> </property> <property> <name>yarn.resourcemanager.scheduler.address.rm1</name> <value>hnode2:8030</value> </property> <property> <name>yarn.resourcemanager.webapp.https.address.rm1</name> <value>hnode2:8090</value> </property> <property> <name>yarn.resourcemanager.webapp.address.rm1</name> <value>hnode2:8088</value> </property> <property> <name>yarn.resourcemanager.resource-tracker.address.rm1</name> <value>hnode2:8031</value> </property> <property> <name>yarn.resourcemanager.admin.address.rm1</name> <value>hnode2:8033</value> </property> <!-- rm2 configs --> <property> <name>yarn.resourcemanager.hostname.rm2</name> <value>hnode3</value> </property> <property> <name>yarn.resourcemanager.address.rm2</name> <value>hnode3:8032</value> </property> <property> <name>yarn.resourcemanager.scheduler.address.rm2</name> <value>hnode3:8030</value> </property> <property> <name>yarn.resourcemanager.webapp.https.address.rm2</name> <value>hnode3:8090</value> </property> <property> <name>yarn.resourcemanager.webapp.address.rm2</name> <value>hnode3:8088</value> </property> <property> <name>yarn.resourcemanager.resource-tracker.address.rm2</name> <value>hnode3:8031</value> </property> <property> <name>yarn.resourcemanager.admin.address.rm2</name> <value>hnode3:8033</value> </property> <!-- Node Manager Configs 每个节点都要配置 --> <property> <description>Address where the localizer IPC is. ********* </description> <name>yarn.nodemanager.localizer.address</name> <value>hnode2:8040</value> </property> <property> <description>Address where the localizer IPC is. ********* </description> <name>yarn.nodemanager.address</name> <value>hnode2:8050</value> </property> <property> <description>NM Webapp address. ********* </description> <name>yarn.nodemanager.webapp.address</name> <value>hnode2:8042</value> </property> <property> <name>yarn.nodemanager.aux-services</name> <value>mapreduce_shuffle</value> </property> <property> <name>yarn.nodemanager.local-dirs</name> <value>/tmp/hadoop/yarn/local</value> </property> <property> <name>yarn.nodemanager.log-dirs</name> <value>/tmp/hadoop/yarn/log</value> </property> <!--资源优化--> <property> <name>yarn.nodemanager.resource.memory-mb</name> <value>2048</value> </property> <property> <name>yarn.nodemanager.resource.cpu-vcores</name> <value>2</value> </property> <property> <name>yarn.scheduler.minimum-allocation-mb</name> <value>2048</value> </property> <!--日志聚合--> <property> <name>yarn.log-aggregation-enable</name> <value>true</value> </property> <property> <name>yarn.log-aggregation.retain-seconds</name> <value>86400</value> </property> <property> <name>yarn.nodemanager.vmem-check-enabled</name> <value>false</value> </property> <property> <name>yarn.application.classpath</name> <value>/opt/hadoop/hadoop-3.3.4/etc/hadoop:/opt/hadoop/hadoop-3.3.4/share/hadoop/common/lib/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/common/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/hdfs:/opt/hadoop/hadoop-3.3.4/share/hadoop/hdfs/lib/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/hdfs/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/mapreduce/lib/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/mapreduce/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/yarn:/opt/hadoop/hadoop-3.3.4/share/hadoop/yarn/lib/*:/opt/hadoop/hadoop-3.3.4/share/hadoop/yarn/*</value> </property> </configuration>

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

- 100

- 101

- 102

- 103

- 104

- 105

- 106

- 107

- 108

- 109

- 110

- 111

- 112

- 113

- 114

- 115

- 116

- 117

- 118

- 119

- 120

- 121

- 122

- 123

- 124

- 125

- 126

- 127

- 128

- 129

- 130

- 131

- 132

- 133

- 134

- 135

- 136

- 137

- 138

- 139

- 140

- 141

- 142

- 143

- 144

- 145

- 146

- 147

- 148

- 149

- 150

- 151

- 152

- 153

- 154

- 155

- 156

- 157

- 158

- 159

- 160

- 161

- 162

- 163

- 164

- 165

- 166

- 167

- 168

- 169

- 170

- 171

4、将hnode1节点上修改的hadoop配置同步到hnode2节点上

将hnode1服务器上的core-site.xml、hdfs-site.xml、yarn-site.xml同步到hnode2上

[root@hnode2 opt]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode2 hadoop]# rm -rf core-site.xml

[root@hnode2 hadoop]# rm -rf hdfs-site.xml

[root@hnode2 hadoop]# rm -rf yarn-site.xml

[root@hnode2 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/core-site.xml ./

[root@hnode2 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml ./

[root@hnode2 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/yarn-site.xml ./

- 1

- 2

- 3

- 4

- 5

- 6

- 7

5、将hnode1节点上修改的hadoop配置同步到hnode3节点上

将hnode1服务器上的core-site.xml、hdfs-site.xml、yarn-site.xml同步到hnode3上

[root@hnode3 opt]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode3 hadoop]# rm -rf core-site.xml

[root@hnode3 hadoop]# rm -rf hdfs-site.xml

[root@hnode3 hadoop]# rm -rf yarn-site.xml

[root@hnode3 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/core-site.xml ./

[root@hnode3 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml ./

[root@hnode3 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/yarn-site.xml ./

- 1

- 2

- 3

- 4

- 5

- 6

- 7

6、将hnode1节点上修改的hadoop配置同步到hnode4节点上

将hnode1服务器上的core-site.xml、hdfs-site.xml、yarn-site.xml同步到hnode4上

[root@hnode4 opt]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode4 hadoop]# rm -rf core-site.xml

[root@hnode4 hadoop]# rm -rf hdfs-site.xml

[root@hnode4 hadoop]# rm -rf yarn-site.xml

[root@hnode4 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/core-site.xml ./

[root@hnode4 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml ./

[root@hnode4 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/yarn-site.xml ./

- 1

- 2

- 3

- 4

- 5

- 6

- 7

7、将hnode1节点上修改的hadoop配置同步到hnode5节点上

将hnode1服务器上的core-site.xml、hdfs-site.xml、yarn-site.xml同步到hnode5上

[root@hnode5 opt]# cd /opt/hadoop/hadoop-3.3.4/etc/hadoop/

[root@hnode5 hadoop]# rm -rf core-site.xml

[root@hnode5 hadoop]# rm -rf hdfs-site.xml

[root@hnode5 hadoop]# rm -rf yarn-site.xml

[root@hnode5 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/core-site.xml ./

[root@hnode5 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/hdfs-site.xml ./

[root@hnode5 hadoop]# scp root@hnode1:/opt/hadoop/hadoop-3.3.4/etc/hadoop/yarn-site.xml ./

- 1

- 2

- 3

- 4

- 5

- 6

- 7

8、删除并重新创建hadoop的data(/opt/hadoop/data)目录

因为hadoop之前做过初始化,所以需要删除重建data目录;如果大家的hadoop集群是第一次部署还未执行过初始化,则不需要执行此步

五、Hadoop集群初始化、启动

1、启动Zookeeper集群

1). 在hnode1节点上启动Zookeeper

由于我们采用root账号启动Zookeeper集群会报下面的错,所以需要在start-dfs.sh和stop-dfs.sh中添加配置

ERROR: Attempting to operate on hdfs journalnode as root

ERROR: but there is no HDFS_JOURNALNODE_USER defined. Aborting operation.

Stopping ZK Failover Controllers on NN hosts [hnode1 hnode2]

ERROR: Attempting to operate on hdfs zkfc as root

ERROR: but there is no HDFS_ZKFC_USER defined. Aborting operation.

[root@hnode1 opt]#cd /opt/hadoop/hadoop-3.3.4/sbin

[root@hnode1 sbin]# vim start-dfs.sh

- 1

- 2

在start-dfs.sh起始位置添加

HDFS_JOURNALNODE_USER=root

HDFS_ZKFC_USER=root

- 1

- 2

[root@hnode1 sbin]# vim stop-dfs.sh

- 1

在stop-dfs.sh起始位置添加

HDFS_JOURNALNODE_USER=root

HDFS_ZKFC_USER=root

- 1

- 2

[root@hnode1 sbin]# zkServer.sh start

- 1

2). 在hnode2节点上启动Zookeeper

[root@hnode2 opt]# zkServer.sh start

- 1

3). 在hnode3节点上启动Zookeeper

[root@hnode3 opt]# zkServer.sh start

- 1

2、在你配置的各个journalnode节点启动该进程

1). 在hnode1节点上启动journalnode

[root@hnode1 opt]# hadoop-daemon.sh start journalnode

- 1

2). 在hnode2节点上启动journalnode

[root@hnode2 opt]# hadoop-daemon.sh start journalnode

- 1

3). 在hnode3节点上启动journalnode

[root@hnode2 opt]# hadoop-daemon.sh start journalnode

- 1

3、格式化NameNode(先选取一个namenode(hnode1)节点进行格式化)

[root@hnode1 hadoop]# hadoop namenode -format

- 1

4、要把在hnode1节点上生成的元数据复制到另一个NameNode(hnode2)节点上

[root@hnode2 hadoop]# scp -r root@hnode1:/opt/hadoop/data ./

- 1

5、格式化zkfc

[root@hnode1 hadoop]# hdfs zkfc -formatZK

- 1

6、启动Hadoop集群

hadoop.sh脚本参见大数据基础平台搭建-(二)Hadoop集群搭建

有时候执行hadoop.sh start的时候会HDFS会启动失败,原因是8485yarn还没启动完成就要连接此端口会连接失败,如果遇到此种情情况就在每台journalnode节点服务器上执行hadoop-daemon.sh start journalnode,再执行hadoop.sh start

[root@hnode1 hadoop]# cd /opt/hadoop

[root@hnode1 hadoop]# ./hadoop.sh start

- 1

- 2

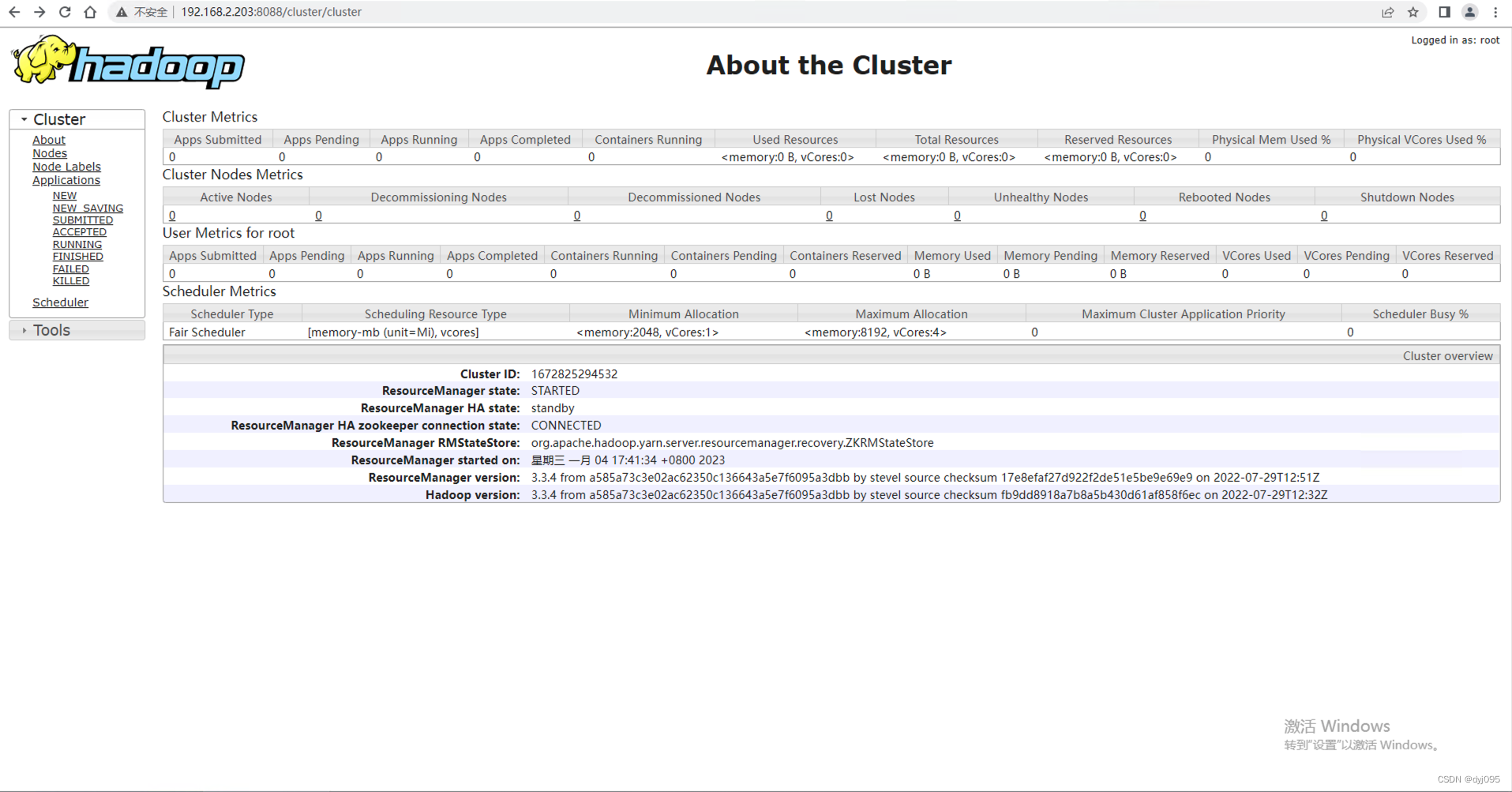

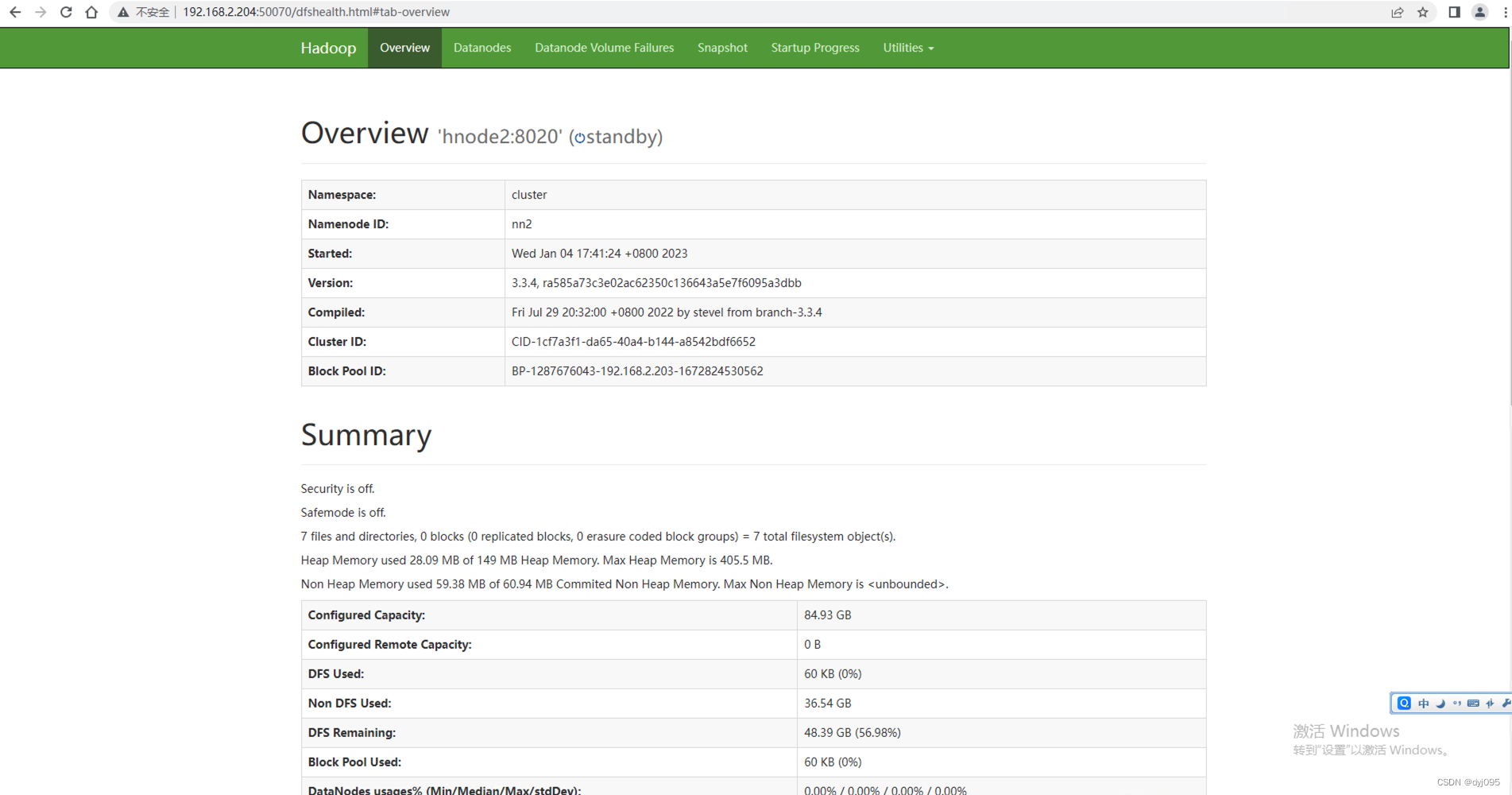

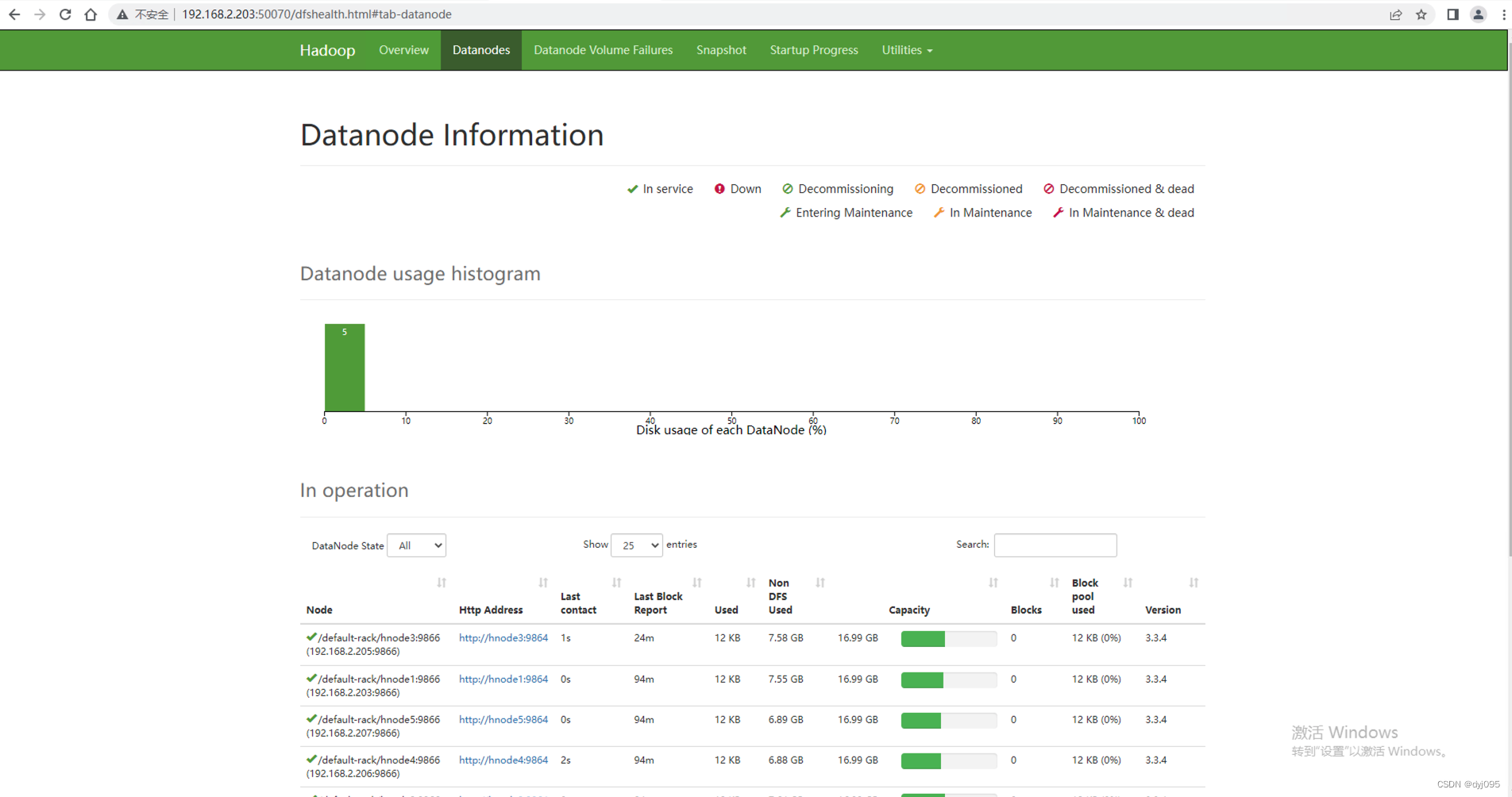

六、确认Hadoop集群的状态

1、查看HDFS

http://hnode1:8088

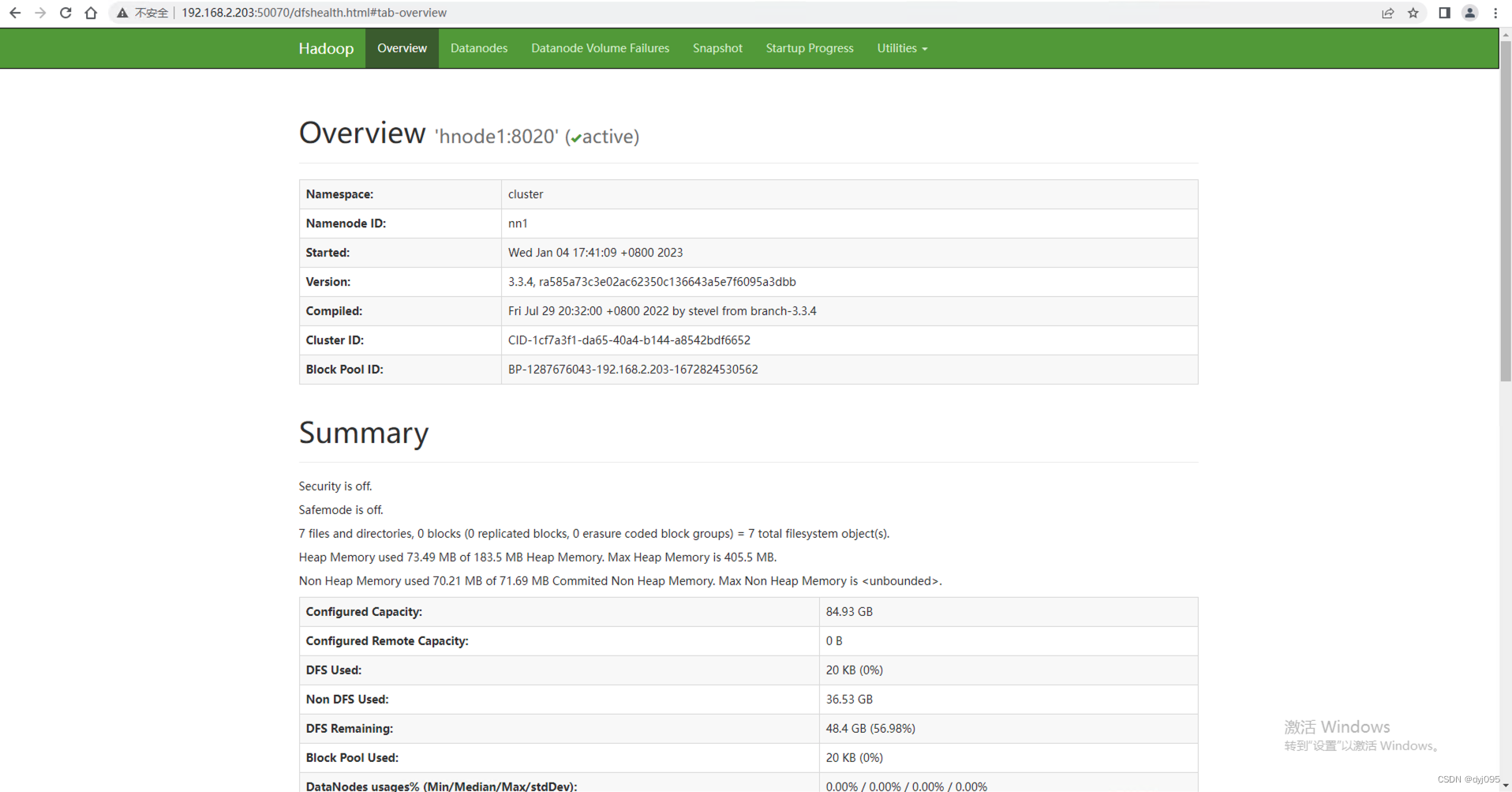

2、 查看DataNode

http://hnode1:50070

1)、NameNode主节点状态

2)、NameNode备份节点状态

3)、数据节点的状态

3、查看HistoryServer

http://hnode2:19888/jobhistory