Integrating Kafka into Spring Boot with XML configuration

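1. Kafka overview

1.1 What Kafka is

Kafka is a high-throughput, distributed message queue system that moves data from one system to others. It also stores data for a retention period and lets consumers retrieve records by offset or by timestamp.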
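To make the two lookup modes concrete, here is a minimal sketch of a single partition modeled as an in-memory append-only list. `ToyPartitionLog` and its methods are hypothetical names invented for this illustration; this is not Kafka's storage format or client API, and retention is not modeled. The timestamp lookup returns the earliest offset whose timestamp is at or after the target, which mirrors how timestamp-based lookups in Kafka behave.

```java
import java.util.ArrayList;
import java.util.List;

/** Toy in-memory stand-in for one partition log (hypothetical, for illustration only). */
final class ToyPartitionLog {
    private final List<Long> timestamps = new ArrayList<>();
    private final List<String> values = new ArrayList<>();

    /** Appends a record and returns its offset, i.e. its position in the log. */
    long append(long timestamp, String value) {
        timestamps.add(timestamp);
        values.add(value);
        return values.size() - 1;
    }

    /** Lookup by offset: returns the record stored at that position. */
    String read(long offset) {
        return values.get((int) offset);
    }

    /** Lookup by timestamp: earliest offset whose timestamp is >= target, or -1 if none. */
    long offsetForTimestamp(long target) {
        for (int i = 0; i < timestamps.size(); i++) {
            if (timestamps.get(i) >= target) {
                return i;
            }
        }
        return -1;
    }
}
```

A consumer that wants to replay everything since a given time would first resolve the timestamp to an offset and then read forward from that offset.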

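1.2 What Kafka is used for

- Peak shaving: absorbing sudden spikes in requests that the backend could not handle directly.
- Decoupling systems: systems written in different languages do not need dedicated clients to talk to each other.
- Stream processing: serving as the data input and output source for big data systems.
- Log collection and processing, for example application → Kafka → Logstash → ES/HBase.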

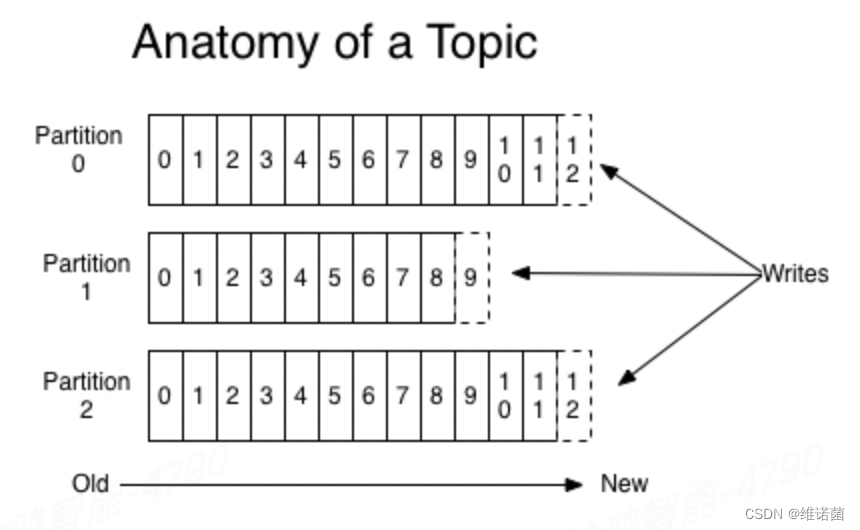

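1.3 Relationship between topics and partitions

A topic as a whole does not guarantee message ordering, but each partition preserves the order in which messages were written to it. A topic can have a single partition or several.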

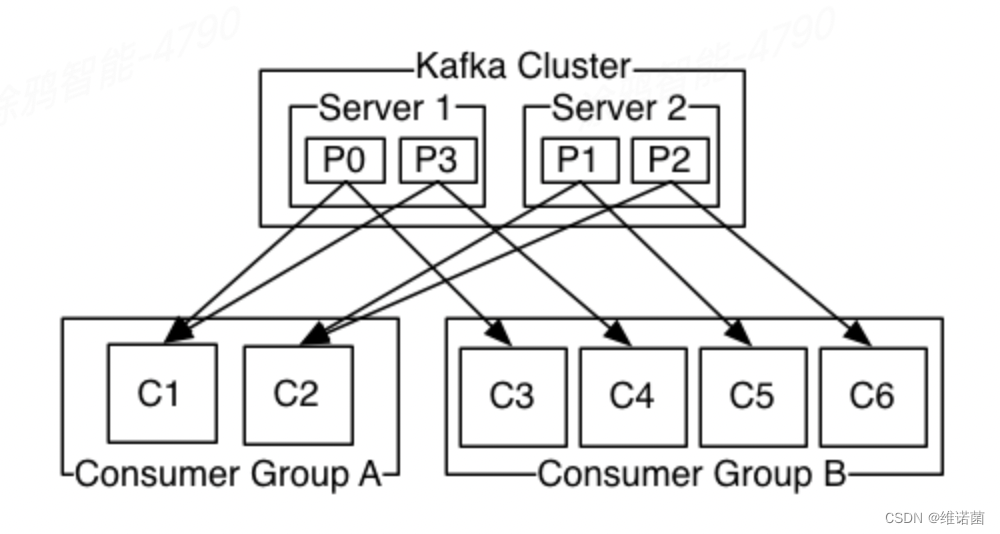
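The per-partition ordering guarantee is why records that must stay in order are usually given the same key. The sketch below is a simplified model of that idea: `ToyPartitioner` is a hypothetical class, and it uses `String.hashCode()` purely for illustration, whereas Kafka's default partitioner hashes the serialized key with murmur2. What it shows is that records sharing a key land in the same partition and keep their arrival order there.

```java
import java.util.ArrayList;
import java.util.List;

/** Simplified key-based partitioning (hypothetical; not Kafka's actual hash function). */
final class ToyPartitioner {

    /** Maps a key to a partition index in [0, partitionCount). */
    static int partitionFor(String key, int partitionCount) {
        return Math.floorMod(key.hashCode(), partitionCount);
    }

    /**
     * Routes {key, value} pairs to per-partition lists.
     * Arrival order is preserved inside each partition, not across partitions.
     */
    static List<List<String>> route(List<String[]> keyedMessages, int partitionCount) {
        List<List<String>> partitions = new ArrayList<>();
        for (int i = 0; i < partitionCount; i++) {
            partitions.add(new ArrayList<>());
        }
        for (String[] message : keyedMessages) {
            int partition = partitionFor(message[0], partitionCount);
            partitions.get(partition).add(message[1]);
        }
        return partitions;
    }
}
```

With three partitions, every event keyed `order-1` ends up in the partition returned by `partitionFor("order-1", 3)`, in the order it was sent, regardless of how events for other keys are interleaved.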

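1.4 Consumer groups

A consumer group is a set of machines or threads that run the same logic. When they subscribe to the same topic, each one consumes different partitions, so a message is not consumed more than once within a consumer group.

Consumer groups and partitions exist mainly to increase concurrency on the consuming side and so speed up consumption.

Within a group, one consumer can consume several partitions, but a partition is consumed by only one consumer.

In general, a consumer can be thought of as a thread on a Kafka client machine, and a consumer group may span n client machines with m threads each. When such a group consumes a topic with n*m partitions, each thread consumes exactly one partition, which gives the best performance.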
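The assignment rules above can be sketched with a simple round-robin model. `ToyGroupAssignment` is a hypothetical helper and a deliberate simplification of Kafka's real assignment strategies; it only illustrates the invariants described here: every partition has exactly one owner within the group, a consumer may own several partitions, and with as many consumers as partitions each consumer owns exactly one.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Round-robin partition assignment within one consumer group (simplified illustration). */
final class ToyGroupAssignment {

    /** Assigns partition p to consumer number (p % consumers.size()). */
    static Map<String, List<Integer>> assign(List<String> consumers, int partitionCount) {
        if (consumers.isEmpty()) {
            throw new IllegalArgumentException("a group needs at least one consumer");
        }
        Map<String, List<Integer>> assignment = new LinkedHashMap<>();
        for (String consumer : consumers) {
            assignment.put(consumer, new ArrayList<>());
        }
        for (int partition = 0; partition < partitionCount; partition++) {
            String owner = consumers.get(partition % consumers.size());
            assignment.get(owner).add(partition);
        }
        return assignment;
    }
}
```

In this model, two consumers on a four-partition topic each own two partitions, while a third consumer on a two-partition topic would own none and sit idle, which is why adding consumers beyond the partition count does not increase throughput.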

2. Integrating Kafka into Spring Boot

2.1 Dependencies

    <dependency>
        <groupId>org.springframework.kafka</groupId>
        <artifactId>spring-kafka</artifactId>
    </dependency>

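2.2 Advantages

The approach in this article lets you intercept all consumer-side Kafka messages in one place and dispatch them to the matching handlers.

2.3 Overriding the Kafka consumer factory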

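    import java.lang.reflect.Field;
    import java.util.Map;

    import org.apache.kafka.clients.consumer.ConsumerConfig;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.kafka.core.DefaultKafkaConsumerFactory;
    import org.springframework.util.Assert;

    // NetUtils.getLocalHost() comes from a network utility class that this article does not show.
    public class GenericKafkaConsumerFactory<K, V> extends DefaultKafkaConsumerFactory<K, V> {

        private static final Logger log = LoggerFactory.getLogger(GenericKafkaConsumerFactory.class);

        public GenericKafkaConsumerFactory(Map<String, Object> configs, boolean broadcastGroup) {
            super(configs);
            // For special business cases where every instance of the same application must
            // receive every message of a topic, for example asynchronously telling the other
            // pods of the same application to refresh in-memory data. Appending the local
            // address to the group id gives each instance its own group, so each one
            // is guaranteed to receive the message.
            if (broadcastGroup) {
                Object groupId = configs.get(ConsumerConfig.GROUP_ID_CONFIG);
                Assert.notNull(groupId, "group.id is empty");
                groupId = groupId.toString() + "_" + NetUtils.getLocalHost();
                // Overwrite group.id in the configs map held by the parent factory.
                try {
                    Field kafkaConfigs = DefaultKafkaConsumerFactory.class.getDeclaredField("configs");
                    kafkaConfigs.setAccessible(true);
                    @SuppressWarnings("unchecked")
                    Map<String, Object> updateConfigs = (Map<String, Object>) kafkaConfigs.get(this);
                    updateConfigs.put(ConsumerConfig.GROUP_ID_CONFIG, groupId);
                } catch (Exception e) {
                    log.error("Failed to set the per-instance Kafka consumer group id", e);
                }
            }
        }
    }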
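The factory above patches the parent's private `configs` field through reflection, which depends on the internals of `DefaultKafkaConsumerFactory`. One possible alternative, sketched here under the assumption that the group id only needs to be fixed at construction time, is to build the adjusted map first and pass it to `super(...)`. `BroadcastGroupIds` and `withInstanceSuffix` are hypothetical names for this illustration, and `instanceId` stands in for whatever `NetUtils.getLocalHost()` returns; the literal `"group.id"` is the value of `ConsumerConfig.GROUP_ID_CONFIG`.

```java
import java.util.HashMap;
import java.util.Map;

/** Builds a per-instance consumer config without touching the factory's internals. */
final class BroadcastGroupIds {

    /** Same string as ConsumerConfig.GROUP_ID_CONFIG in the Kafka client. */
    static final String GROUP_ID_KEY = "group.id";

    /**
     * Returns a copy of configs whose group.id is suffixed with "_" + instanceId.
     * The input map is left unchanged.
     */
    static Map<String, Object> withInstanceSuffix(Map<String, Object> configs, String instanceId) {
        Object groupId = configs.get(GROUP_ID_KEY);
        if (groupId == null) {
            throw new IllegalArgumentException("group.id is empty");
        }
        Map<String, Object> copy = new HashMap<>(configs);
        copy.put(GROUP_ID_KEY, groupId + "_" + instanceId);
        return copy;
    }
}
```

A factory constructor could then call `super(broadcastGroup ? BroadcastGroupIds.withInstanceSuffix(configs, NetUtils.getLocalHost()) : configs)`, so the parent never sees the shared group id and no reflection is needed.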

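2.4 Kafka message listener container implementation

    import java.util.List;

    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.beans.factory.InitializingBean;
    import org.springframework.kafka.core.DefaultKafkaConsumerFactory;
    // In spring-kafka versions before 2.2 this class lives in org.springframework.kafka.listener.config.
    import org.springframework.kafka.listener.ContainerProperties;
    import org.springframework.kafka.listener.KafkaMessageListenerContainer;

    public class GenericKafkaMessageListenerContainer implements InitializingBean {

        private static final Logger log = LoggerFactory.getLogger(GenericKafkaMessageListenerContainer.class);

        private GenericKafkaListener<Object> genericKafkaListener;
        private List<KafkaConsumerConfig> consumerConfigs;
        private DefaultKafkaConsumerFactory<String, Object> factory;

        // Setters used by the <property> elements of the XML configuration.
        public void setGenericKafkaListener(GenericKafkaListener<Object> genericKafkaListener) {
            this.genericKafkaListener = genericKafkaListener;
        }

        public void setConsumerConfigs(List<KafkaConsumerConfig> consumerConfigs) {
            this.consumerConfigs = consumerConfigs;
        }

        public void setFactory(DefaultKafkaConsumerFactory<String, Object> factory) {
            this.factory = factory;
        }

        @Override
        public void afterPropertiesSet() {
            log.info("------- Initializing Kafka listener containers");
            for (KafkaConsumerConfig consumerConfig : consumerConfigs) {
                // Topic this container listens to.
                ContainerProperties containerProperties = new ContainerProperties(consumerConfig.getTopic());
                KafkaMessageListenerContainer<String, Object> container =
                        new KafkaMessageListenerContainer<>(factory, containerProperties);
                // Register the business consumer configuration with the shared listener.
                genericKafkaListener.addConsumerConfig(consumerConfig);
                container.setupMessageListener(genericKafkaListener);
                container.start();
            }
            log.info("------- Kafka listener containers initialized");
        }
    }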

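    import lombok.Data;

    // Assumption: Lombok's @Data supplies the getters and setters that the listener and
    // the XML configuration rely on; write them by hand if Lombok is not used.
    @Data
    public class KafkaConsumerConfig {
        private String topic;
        private String key; // filtering by message key is not supported yet
        private IMqKafkaBizConsumer consumer;
        private boolean ignore; // read by the listener via isIgnore(): skip records whose handler threw
    }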

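    public interface IMqKafkaBizConsumer<T> {

        /**
         * Handles one record received from Kafka.
         *
         * @param topic     topic the record came from
         * @param data      record value
         * @param partition partition the record came from
         * @param offset    offset of the record within its partition
         * @param key       record key
         * @return true if the record was consumed successfully; false if consumption failed
         *         but the record should be dropped without further handling.
         *         Throwing an exception triggers the redelivery mechanism.
         */
        boolean consumber(String topic, T data, int partition, long offset, String key) throws Exception;
    }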

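Unified listener

This is the listener implementation. Whenever a subscribed topic receives a message, onMessage is called back. At that point we use the topic-to-consumer mapping registered earlier to find the custom consumer and hand the message to it.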

    import java.util.Map;
    import java.util.concurrent.ConcurrentHashMap;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.kafka.listener.MessageListener;

    public class GenericKafkaListener<T> implements MessageListener<String, T> {

        private static final Logger log = LoggerFactory.getLogger(GenericKafkaListener.class);

        protected Map<String, KafkaConsumerConfig> consumerConfigMap = new ConcurrentHashMap<>();

        // Registers a consumer configuration under its topic.
        public void addConsumerConfig(KafkaConsumerConfig kafkaConsumerConfig) {
            consumerConfigMap.put(kafkaConsumerConfig.getTopic(), kafkaConsumerConfig);
        }

        @Override
        @SuppressWarnings("unchecked")
        public void onMessage(ConsumerRecord<String, T> record) {
            String topic = record.topic();
            log.info("Received message {} : {}", topic, record.value());
            KafkaConsumerConfig consumerConfig = consumerConfigMap.get(topic);
            if (consumerConfig == null) {
                log.warn("[topic:{}] no consumer registered, record skipped", topic);
                return;
            }
            IMqKafkaBizConsumer<T> consumer = consumerConfig.getConsumer();
            try {
                // Hand the record to the business consumer registered for this topic.
                boolean consumed = consumer.consumber(topic, record.value(),
                        record.partition(), record.offset(), record.key());
                if (!consumed) {
                    log.warn("[topic:{}] consumption failed, but the business allows dropping: {}",
                            topic, record.value());
                }
            } catch (Exception e) {
                // Skip the record if this topic is configured to ignore errors.
                if (consumerConfig.isIgnore()) {
                    log.warn("[topic:{}] exception ignored by configuration", topic, e);
                    return;
                }
                throw new RuntimeException(
                        "-1,[topic:" + topic + "],retry after exception:" + record.value() + " kafka_error1:", e);
            }
        }
    }
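The dispatch logic of the unified listener can be exercised without a broker. The following is a minimal sketch that keeps only the routing decisions: `ToyTopicDispatcher`, `Handler`, and `Outcome` are hypothetical names invented for this illustration, with `Handler` standing in for `IMqKafkaBizConsumer` reduced to topic and payload. It makes the four possible outcomes for a record explicit.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Broker-free model of topic-based dispatch (hypothetical names, illustration only). */
final class ToyTopicDispatcher {

    /** Stand-in for the business consumer: true = consumed, false = failed but droppable. */
    interface Handler {
        boolean handle(String topic, String data) throws Exception;
    }

    enum Outcome { CONSUMED, DROPPED, IGNORED_ERROR, NO_HANDLER }

    private final Map<String, Handler> handlers = new ConcurrentHashMap<>();
    private final Map<String, Boolean> ignoreErrors = new ConcurrentHashMap<>();

    /** Registers the handler for a topic and whether its exceptions may be skipped. */
    void register(String topic, Handler handler, boolean ignoreError) {
        handlers.put(topic, handler);
        ignoreErrors.put(topic, ignoreError);
    }

    /** Routes one record to the handler registered for its topic. */
    Outcome dispatch(String topic, String data) {
        Handler handler = handlers.get(topic);
        if (handler == null) {
            return Outcome.NO_HANDLER;
        }
        try {
            return handler.handle(topic, data) ? Outcome.CONSUMED : Outcome.DROPPED;
        } catch (Exception e) {
            if (Boolean.TRUE.equals(ignoreErrors.get(topic))) {
                return Outcome.IGNORED_ERROR;
            }
            // Rethrow so the caller (in the real setup, the listener container) sees the failure.
            throw new RuntimeException("[topic:" + topic + "] handler failed: " + data, e);
        }
    }
}
```

Keeping the routing decisions in a plain class like this makes them easy to unit test before wiring them into a real message listener.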

3. Producer-side XML configuration

    <bean id="kafkaProducerFactory" class="org.springframework.kafka.core.DefaultKafkaProducerFactory">
        <constructor-arg>
            <map>
                <entry key="bootstrap.servers" value="${kafka.bootstrap.servers}"/>
                <entry key="retries" value="5"/>
                <entry key="batch.size" value="16384"/>
                <entry key="linger.ms" value="2"/>
                <entry key="buffer.memory" value="33554432"/>
                <entry key="key.serializer" value="org.apache.kafka.common.serialization.StringSerializer"/>
                <entry key="value.serializer" value="org.apache.kafka.common.serialization.StringSerializer"/>
                <!-- <entry key="use.sasl" value="${kafka.use.sasl:true}"/> -->
                <!-- <entry key="servers.sasl" value="${kafka.biz.servers.sasl}"/> -->
            </map>
        </constructor-arg>
    </bean>

    <bean id="kafkaTemplate" class="org.springframework.kafka.core.KafkaTemplate">
        <constructor-arg ref="kafkaProducerFactory"/>
        <constructor-arg name="autoFlush" value="true"/>
    </bean>

After that, inject kafkaTemplate into your code and call it to send messages:

    kafkaTemplate.send(topic, message);

4. Consumer-side XML configuration and usage

    <bean id="consumerFactory" class="com.nuwa.retransmission.infrastructure.kafka.GenericKafkaConsumerFactory">
        <constructor-arg>
            <map>
                <entry key="bootstrap.servers" value="${kafka.bootstrap.servers}"/>
                <entry key="enable.auto.commit" value="false"/>
                <entry key="auto.commit.interval.ms" value="3000"/>
                <entry key="session.timeout.ms" value="60000"/>
                <entry key="request.timeout.ms" value="61000"/>
                <entry key="group.id" value="nuwa-retransmission"/>
                <entry key="key.deserializer" value="org.apache.kafka.common.serialization.StringDeserializer"/>
                <entry key="value.deserializer" value="org.apache.kafka.common.serialization.StringDeserializer"/>
            </map>
        </constructor-arg>
        <constructor-arg name="broadcastGroup" value="true"/>
    </bean>

    <bean id="messageListenerContainer" class="com.nuwa.retransmission.infrastructure.kafka.GenericKafkaMessageListenerContainer">
        <property name="factory" ref="consumerFactory"/>
        <!-- Enable only if the container class declares a kafkaTemplate property; the class shown in section 2.4 does not. -->
        <!-- <property name="kafkaTemplate" ref="kafkaTemplate"/> -->
        <!-- Unified Kafka listener -->
        <property name="genericKafkaListener" ref="genericKafkaListener"/>
        <!-- Consumer-side configuration -->
        <property name="consumerConfigs">
            <list>
                <bean class="com.basic.mq.domain.KafkaConsumerConfig">
                    <!-- Topic to listen to -->
                    <property name="topic" value="topic2"/>
                    <!-- Consumer called back for records from this topic -->
                    <property name="consumer">
                        <bean id="eventConsumer" class="com.nuwa.retransmission.service.impl.AppAndApiAuthSyncConsumer"/>
                    </property>
                </bean>
            </list>
        </property>
    </bean>

    <bean id="genericKafkaListener" class="com.nuwa.retransmission.infrastructure.kafka.GenericKafkaListener"/>

Consumer code

    import java.util.UUID;

    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;

    public class AppAndApiAuthSyncConsumer implements IMqKafkaBizConsumer<String> {

        private static final Logger LOGGER = LoggerFactory.getLogger(AppAndApiAuthSyncConsumer.class);

        @Override
        public boolean consumber(String topic, String data, int partition, long offset, String key) {
            // Tag the thread for the duration of this call, then restore its original name.
            String oldName = Thread.currentThread().getName();
            Thread.currentThread().setName("Thread_AppAndApiAuthSyncConsumer_" + UUID.randomUUID());
            try {
                LOGGER.info("topic {}, receive {}", topic, data);
                return true;
            } finally {
                Thread.currentThread().setName(oldName);
            }
        }
    }