Face Detection with MTCNN and Face Recognition with FaceNet (with Source Code)

Original post: Face Detection with MTCNN and Face Recognition with FaceNet (with Source Code)

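When face detection comes up, the first approach that comes to mind is usually Haar feature extraction combined with an AdaBoost classifier (see Section 9 of this blog series, "Face Detection with Haar Classifiers"), which works reasonably well. However, face detection is increasingly deployed outdoors rather than indoors, and in open scenes such as squares, stations, and subway entrances rather than a single constrained scene. The demands on the detector keep rising: faces vary widely in scale, appear in large numbers, and come in many poses (including overhead views); they may be occluded by hats or masks, show exaggerated expressions, be disguised by makeup, be poorly lit, or have such low resolution that even the human eye struggles to tell them apart. Under these conditions, Haar-based detection performs poorly. With the development of deep learning, deep-learning-based face detection has achieved great success. This section introduces MTCNN, a convolutional-network-based method for accurate, real-time face detection and alignment.

The first step in building a face recognition system is face detection, that is, locating the faces in an image. The input is an image containing faces, and the output is a bounding rectangle for every face. Ideally, the detector should find all faces in the image, with no missed detections and, more importantly, no false detections.

Once the faces are found, the second step is face alignment. Faces in the original image may differ in pose and position, so to process them uniformly we need to "straighten" them. To do this, we detect facial landmarks such as the positions of the eyes, nose, and mouth and points on the face contour. Using these landmarks, an affine transformation maps every face to a canonical layout, which removes the error caused by pose differences.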

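I. MTCNN network structure

MTCNN is a deep-learning-based method that performs face detection and face alignment jointly. Compared with traditional algorithms, it is more accurate and detects faster.

MTCNN consists of three sub-networks: the Proposal Network (P-Net), the Refine Network (R-Net), and the Output Network (O-Net). The three networks process faces in sequence, from coarse to fine.

Before these sub-networks are applied, the original image is rescaled into an image pyramid, and the image at each scale is fed through the networks. This makes it possible to detect faces of different sizes, i.e. multi-scale detection.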
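As a rough illustration of how the pyramid scales can be chosen, the sketch below mirrors the scale loop in the `detect_face()` listing later in this post. The function name `pyramid_scales` is made up for this example; the defaults (`minsize=20`, `factor=0.709`, a 12-pixel P-Net window) are the values used in that listing.

```python
def pyramid_scales(height, width, minsize=20, factor=0.709, window=12):
    """Return the list of scale factors for the image pyramid.

    The first scale maps a face of `minsize` pixels onto the 12x12 P-Net
    window; each further scale shrinks the image by `factor` until the
    shorter side would fall below the window size.
    """
    m = window / float(minsize)          # e.g. 12 / 20 = 0.6
    min_side = min(height, width) * m    # shorter side after the first rescale
    scales = []
    count = 0
    while min_side >= window:
        scales.append(m * factor ** count)
        min_side *= factor
        count += 1
    return scales


# Example: a 250x250 LFW image
scales = pyramid_scales(250, 250)
print(len(scales), [round(s, 3) for s in scales[:3]])
```

A smaller `factor` gives fewer pyramid levels (faster, but coarser coverage of face sizes); a larger `minsize` skips the largest, most expensive levels.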

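1. P-Net

The main purpose of P-Net is to generate candidate boxes. P-Net performs face detection on every $12\times{12}$ region of each image in the pyramid. (In the actual fully convolutional implementation, a whole $h\times{w}$ image is fed to P-Net and every point of the resulting feature map corresponds to a $12\times{12}$ receptive field, so not every possible $12\times{12}$ window of the image is visited.)

The input of P-Net is a $12\times{12}\times{3}$ RGB image. During training, the network must decide whether this $12\times{12}$ image contains a face, and also output the bounding-box regression and the facial landmark positions.

At test time, the output consists only of the 4 coordinates and the score of each of $N$ bounding boxes. The 4 coordinates have already been corrected with the network's box regression, and the score can be viewed as the classification output (the probability of a face):

- The first output decides whether the image contains a face. It has size $1\times{1}\times{2}$, i.e. two values: the probability that the image is a face and the probability that it is not. The two values sum to exactly 1; two values are used instead of one to make the cross-entropy loss easy to define.

- The second output gives the precise position of the box and is usually called box regression. The $12\times{12}$ patch fed to P-Net may not coincide with the ideal face box: the face may not be exactly square, or the patch may be shifted to the left or right, so the network outputs the offset of the current box relative to the ideal face box. The offset has size $1\times{1}\times{4}$: the relative offset of the x-coordinate of the top-left corner, the relative offset of the y-coordinate of the top-left corner, the error in the box width, and the error in the box height.

- The third output gives the positions of 5 facial landmarks: the left eye, the right eye, the nose, the left mouth corner, and the right mouth corner. Each landmark needs two values, so the output has size $1\times{1}\times{10}$.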

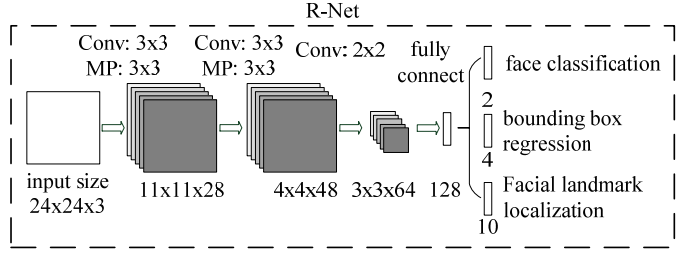

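2. R-Net

Because the detections from P-Net are fairly coarse, R-Net is used next to refine them. R-Net is similar to P-Net, but its inputs are the bounding boxes produced by P-Net, each resized to $24\times{24}\times{3}$. The network outputs are the same as for P-Net. The main purpose of this stage is to discard a large number of non-face boxes.

3. O-Net

The regions kept by R-Net are resized to $48\times{48}\times{3}$ and passed to the final network, O-Net. The structure of O-Net is similar to that of P-Net, except that at test time it also outputs the landmark positions. The input is a $48\times{48}\times{3}$ image, and the output contains the coordinates, scores, and landmark positions of $P$ bounding boxes.

From P-Net to R-Net to O-Net, the input images get larger, the convolutional layers get more channels, and the networks get deeper, so the face classification accuracy should increase as well. At the same time, P-Net runs fastest, R-Net is slower, and O-Net is the slowest. Three networks are used because applying O-Net directly to the whole image would be very slow. Instead, P-Net acts as a first filter, R-Net filters its results again, and only the remaining candidates are passed to O-Net, which is the most accurate but also the slowest. Each stage reduces the number of candidates the next stage has to examine, which effectively lowers the computation time.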

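II. MTCNN loss functions

Each MTCNN sub-network is trained on three tasks (face classification, bounding-box regression, and landmark localization), so the loss function has three parts. Face classification uses the cross-entropy loss, while box regression and landmark localization use the $L2$ loss. The three parts are multiplied by their respective weights and summed to form the total loss. When training P-Net and R-Net we care mainly about the accuracy of the boxes and less about the landmark loss, so the landmark loss gets a small weight. For O-Net we care more about the landmark positions, so the landmark loss gets a larger weight.

1. Face classification loss

For face classification (face versus non-face), the cross-entropy loss is used for each input sample $x_i$:

$$L_i^{det}=-\left(y_i^{det}\log(p_i)+(1-y_i^{det})\log(1-p_i)\right)$$

where $y_i^{det}\in\{0,1\}$ is the ground-truth label of the sample and $p_i$ is the probability, output by the network, that the sample is a face.

2. Box regression

For box regression, the (squared) Euclidean distance is used:

$$L_i^{box}=\|\hat{y}_i^{box}-y_i^{box}\|_2^2$$

where $\hat{y}_i^{box}$ is the bounding box obtained after applying the network's correction and $y_i^{box}$ is the ground-truth bounding box.

3. Landmark loss

For the landmarks, the (squared) Euclidean distance is used as well:

$$L_i^{landmark}=\|\hat{y}_i^{landmark}-y_i^{landmark}\|_2^2$$

where $\hat{y}_i^{landmark}$ are the landmark coordinates output by the network and $y_i^{landmark}$ are the ground-truth landmark coordinates.

4. Total loss

The three losses above are combined with different weights:

$$\min\sum_{i=1}^{N}\sum_{j\in\{det,box,landmark\}}\alpha_j\beta_i^jL_i^j$$

where $N$ is the total number of training samples and $\alpha_j$ is the weight of each loss. For P-Net and R-Net, $\alpha_{det}=1,\alpha_{box}=0.5,\alpha_{landmark}=0.5$; for O-Net, $\alpha_{det}=1,\alpha_{box}=0.5,\alpha_{landmark}=1$. $\beta_i^j\in\{0,1\}$ is the sample-type indicator, which switches task $j$ on or off for sample $i$.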
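To make the weighting concrete, here is a minimal NumPy sketch of the weighted sum described above. The helper names (`cross_entropy`, `squared_l2`, `total_loss`) and the toy per-sample loss values are illustrative assumptions, not code from the MTCNN or facenet projects; only the $\alpha$ weights come from the text.

```python
import numpy as np

TASKS = ("det", "box", "landmark")
PNET_RNET_ALPHAS = {"det": 1.0, "box": 0.5, "landmark": 0.5}
ONET_ALPHAS = {"det": 1.0, "box": 0.5, "landmark": 1.0}


def cross_entropy(p, y, eps=1e-12):
    """Per-sample face / non-face cross-entropy."""
    p = np.clip(p, eps, 1.0 - eps)
    return -(y * np.log(p) + (1.0 - y) * np.log(1.0 - p))


def squared_l2(pred, target):
    """Per-sample squared Euclidean distance (box or landmark loss)."""
    return np.sum((np.asarray(pred) - np.asarray(target)) ** 2, axis=-1)


def total_loss(losses, betas, alphas):
    """Sum over tasks j and samples i of alpha_j * beta_i^j * L_i^j."""
    return float(sum(alphas[t] * np.sum(betas[t] * losses[t]) for t in TASKS))


# Toy batch (made-up numbers): a negative, a positive, and a landmark sample.
losses = {
    "det": np.array([0.1, 0.2, 0.0]),
    "box": np.array([0.0, 0.4, 0.0]),
    "landmark": np.array([0.0, 0.0, 0.6]),
}
betas = {
    "det": np.array([1, 1, 0]),       # negatives and positives train the classifier
    "box": np.array([0, 1, 0]),       # only the positive has a box target here
    "landmark": np.array([0, 0, 1]),  # only the landmark sample has landmark targets
}
print(total_loss(losses, betas, PNET_RNET_ALPHAS))
print(total_loss(losses, betas, ONET_ALPHAS))
```

The same batch yields a larger total under the O-Net weights because the landmark term counts twice as much.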

5. Online hard sample mining

In particular, in each mini-batch, we sort the losses computed in the forward propagation from all samples and select the top 70% of them as hard samples. Then we only compute the gradients from these hard samples in the backward propagation. That means we ignore the easy samples that are less helpful to strengthen the detector during training. Experiments show that this strategy yields better performance without manual sample selection.

In other words, during training we sort the samples of each mini-batch by their forward-pass loss (from largest to smallest) and use only the top 70% for back-propagation and parameter updates.
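The selection step can be sketched in a few lines of NumPy. This is only an illustration of the idea, not the training code of the original authors; `select_hard_samples` is a hypothetical helper, and the loss values are made up.

```python
import numpy as np


def select_hard_samples(losses, keep_ratio=0.7):
    """Return the (sorted) indices of the samples with the largest losses.

    Only these samples would contribute gradients in the backward pass.
    """
    losses = np.asarray(losses, dtype=float)
    keep = max(1, int(len(losses) * keep_ratio))
    order = np.argsort(-losses)          # indices sorted by descending loss
    return np.sort(order[:keep])


batch_losses = [0.1, 0.9, 0.5, 0.3, 0.8, 0.2, 0.7, 0.4, 0.6, 0.05]
hard = select_hard_samples(batch_losses)
print(hard)

# A mask like this could be multiplied into the per-sample loss before reduction.
mask = np.zeros(len(batch_losses))
mask[hard] = 1.0
```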

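6. Training data

The training data comes from two public datasets, wider and celeba. wider provides the face detection data, with ground-truth face boxes annotated on large images; celeba provides the data for the 5 landmark points. Depending on the task they are used for, the training samples fall into four types:

- Negative samples: sliding windows whose IoU with the ground truth is below 0.3;

- Positive samples: sliding windows whose IoU with the ground truth is above 0.65;

- Part (intermediate) samples: sliding windows whose IoU with the ground truth is between 0.4 and 0.65;

- Landmark samples: faces annotated with the coordinates of the 5 landmarks.

The sliding windows above are $12\times{12}$ boxes obtained by sliding a window over the image or by random sampling: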

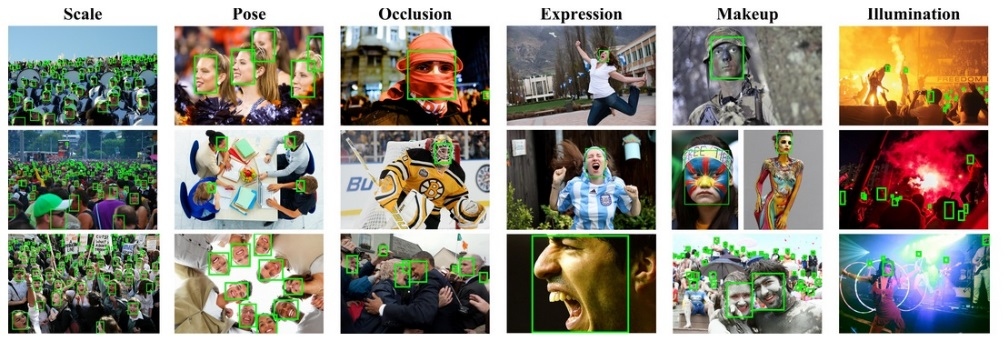
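The IoU thresholds above translate directly into a labelling rule. The sketch below assumes boxes in `(x1, y1, x2, y2)` format; `iou` and `label_window` are names chosen for this example. Windows with an IoU between 0.3 and 0.4 fall outside all three ranges and are simply not used.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    x1 = max(box_a[0], box_b[0])
    y1 = max(box_a[1], box_b[1])
    x2 = min(box_a[2], box_b[2])
    y2 = min(box_a[3], box_b[3])
    inter = max(0.0, x2 - x1) * max(0.0, y2 - y1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def label_window(window, gt_boxes):
    """Classify a candidate window by its best IoU with any ground-truth box."""
    best = max((iou(window, gt) for gt in gt_boxes), default=0.0)
    if best > 0.65:
        return "positive"
    if best >= 0.4:
        return "part"
    if best < 0.3:
        return "negative"
    return "unused"   # 0.3 <= IoU < 0.4: not assigned to any sample type


window = (0, 0, 12, 12)
print(label_window(window, [(0, 0, 12, 12)]))   # identical box
print(label_window(window, [(3, 0, 15, 12)]))   # IoU = 108 / 180 = 0.6
print(label_window(window, [(100, 100, 112, 112)]))
```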

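The wider dataset can be downloaded from http://mmlab.ie.cuhk.edu.hk/projects/WIDERFace/. It contains 32,203 images with a total of 393,703 labeled faces, as shown in the figure below:

The training data for facial landmark detection (referred to here as celeba) can be downloaded from http://mmlab.ie.cuhk.edu.hk/archive/CNN_FacePoint.htm. It contains 5,590 images from the LFW dataset and 7,876 images downloaded from the web.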

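III. Face recognition

Face detection, covered above, is the prerequisite for all face-related tasks. The main ones are:

- Face tracking (following the position of a face through a video);

- Face verification (given two faces, decide whether they belong to the same person);

- Face recognition (given one face, decide which person in a face database it belongs to);

- Face clustering (given a batch of faces, automatically group those of the same person).

Let us now look at face recognition in detail. Once MTCNN has produced the face region, a deep convolutional network converts the face image into a vector representation, the so-called feature.

How do we extract features from a face? Recall the VGG16 network: the input is an image and, after a series of convolutions and fully connected layers, the output is a vector of class probabilities.

In typical image applications, we can drop the fully connected layers and use the last convolutional layer (for example conv5_3 in VGG16) as the "feature" of the image. But if we do the same for face recognition, i.e. use the last convolutional layer as the "vector representation" of a face, the results are actually poor. We will discuss how to improve this later; first, let us consider which properties we want this vector representation to have.

Ideally, the distance between vector representations should directly reflect the similarity of the faces:

- For face images of the same person, the Euclidean distance between the corresponding vectors should be small;

- For face images of different people, the Euclidean distance between the corresponding vectors should be large.

For example, let the face images be $x_1,x_2$ with features $f(x_1),f(x_2)$. When $x_1,x_2$ show the same person, the distance $\|f(x_1)-f(x_2)\|_2$ should be small; when $x_1,x_2$ show different people, the distance $\|f(x_1)-f(x_2)\|_2$ should be large.

The original VGG16 model uses the softmax loss, which is a between-class loss; for faces, each class is one person. Although the softmax loss can tell people apart, it places no constraint on the distances between the vector representations of the classes.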

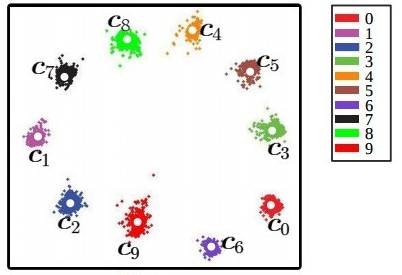

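As an example, consider classifying MNIST with a CNN. We design a special convolutional network whose last layer is 2-dimensional, so that the 2-D vector representation of each class can be plotted (each color in the figure corresponds to one class):

The figure above shows the result of training directly with softmax. It does not have the properties we want the features to have:

- We want the vectors of the same class to be as close together as possible, but here a single class (for example purple) can have a very large intra-class distance;

- We want the vectors of different classes to be as far apart as possible, but near the center of the figure all classes are very close to each other.

The same happens with face images. Many improvements have been proposed; two of them are introduced here: the triplet loss and the center loss.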

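1. Triplet loss

The idea behind the triplet loss is this: since the goal is for the distances between features to have certain properties, we design the loss directly around those distances. Concretely, each time we draw three face images from the training data: the first is denoted $x_i^a$, the second $x_i^p$, and the third $x_i^n$. In such a "triplet", $x_i^a$ and $x_i^p$ are images of the same person, while $x_i^n$ is a face image of a different person. The distance $\|f(x_i^a)-f(x_i^p)\|_2$ should therefore be small and the distance $\|f(x_i^a)-f(x_i^n)\|_2$ should be large. Strictly speaking, the triplet loss requires the following inequality to hold:

$$\|f(x_i^a)-f(x_i^p)\|_2^2+\alpha<\|f(x_i^a)-f(x_i^n)\|_2^2$$

That is, the squared distance between faces of the same person must be smaller than the squared distance between faces of different people by at least $\alpha$ (the square is used mainly to simplify differentiation). From this, the loss function is defined as:

$$L_i=\left[\|f(x_i^a)-f(x_i^p)\|_2^2+\alpha-\|f(x_i^a)-f(x_i^n)\|_2^2\right]_+$$

where $[z]_+=\max(z,0)$. When the distances of a triplet satisfy $\|f(x_i^a)-f(x_i^p)\|_2^2+\alpha < \|f(x_i^a)-f(x_i^n)\|_2^2$, the loss is $L_i=0$. When the inequality is violated, the loss is $\|f(x_i^a)-f(x_i^p)\|_2^2+\alpha - \|f(x_i^a)-f(x_i^n)\|_2^2$. In addition, $\|f(x)\|_2=1$ is enforced during training so that features cannot drift "infinitely far apart".

The triplet loss optimizes distances directly, so it addresses the face representation problem. However, choosing triplets during training takes considerable care. If triplets are always chosen at random, the model converges but does not reach its best performance. If "hard example mining" is used, i.e. always training on the triplets that are hardest to tell apart, the model often fails to converge properly. The proposed compromise is to train on "semi-hard" triplets, so that the model converges while still reaching good performance. Furthermore, training a face model with the triplet loss usually requires a very large face dataset to achieve good results.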
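A minimal NumPy sketch of the triplet loss for a single triplet (or a batch of triplets) follows. The margin `alpha=0.2` and the function names are assumptions made for this example; the embeddings are L2-normalized first, matching the $\|f(x)\|_2=1$ constraint mentioned above.

```python
import numpy as np


def l2_normalize(x):
    """Scale each embedding to unit Euclidean length."""
    x = np.asarray(x, dtype=float)
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def triplet_loss(anchor, positive, negative, alpha=0.2):
    """max(||a - p||^2 - ||a - n||^2 + alpha, 0) on normalized embeddings."""
    a, p, n = (l2_normalize(v) for v in (anchor, positive, negative))
    pos_dist = np.sum((a - p) ** 2, axis=-1)
    neg_dist = np.sum((a - n) ** 2, axis=-1)
    return np.maximum(pos_dist - neg_dist + alpha, 0.0)


# An "easy" triplet: the positive coincides with the anchor, the negative is far away.
print(triplet_loss([1.0, 0.0], [1.0, 0.0], [0.0, 1.0]))
# A violated triplet: the positive is far away and the negative coincides with the anchor.
print(triplet_loss([1.0, 0.0], [0.0, 1.0], [1.0, 0.0]))
```

Easy triplets give zero loss and therefore zero gradient, which is why the choice of triplets matters so much in practice.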

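2. Center loss

Unlike the triplet loss, the center loss does not optimize distances directly. It keeps the original classification model but assigns a class center to every class (in a face model, one class corresponds to one person). The features of all images of a class should lie as close as possible to their class center, and the centers of different classes should lie as far apart as possible. Compared with the triplet loss, training a face model with the center loss needs no special sampling strategy, and it can reach results comparable to the triplet loss with fewer images. The center loss is defined as follows.

Let the input face image be $x_i$ and its class be $y_i$. For every class we define a class center, denoted $c_{yi}$. We want the feature $f(x_i)$ of every face image to be as close as possible to its center $c_{yi}$, so the loss is defined as:

$$L_i=\|f(x_i)-c_{yi}\|_2^2$$

The center loss over several images is the sum of the individual values:

$$L_{center}=\sum_iL_i$$

This is a very simple definition, but one question remains: how do we determine the center $c_{yi}$ of each class? In theory, the best center for class $y_i$ is the mean of the features of all its images. With that definition, however, $c_{yi}$ would have to be recomputed over all images at every gradient step, which is far too expensive. As an approximation, $c_{yi}$ is initialized randomly; then, within each batch, the gradient of $L_i=\|f(x_i)-c_{yi}\|_2^2$ with respect to the centers $c_{yi}$ appearing in that batch is computed and used to update them. Moreover, the center loss cannot be used on its own to train a classification model; the softmax loss is still needed. The final loss therefore has two parts, $L=L_{softmax}+\lambda{L_{center}}$, where $\lambda$ is a hyperparameter.

To summarize the training procedure with the center loss: first initialize the centers $c_{yi}$ randomly, then repeatedly draw batches for training. In each batch, the total loss $L$ is used both to update the parameters of the neural network and to compute gradients with respect to $c_{yi}$, which update the positions of the centers.

The center loss gives the learned features "cohesion". Returning to the MNIST example: without the center loss the learned features are not cohesive, whereas after adding the center loss the features look as follows:

The larger the weight $\lambda$ of the center loss, the more pronounced the "cohesion" of the resulting features.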
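The batch-wise center update can be sketched as follows. This is a simplified illustration under stated assumptions, not the procedure of any particular implementation: the gradient with respect to each center is averaged over the samples of that class in the batch, and the step size `lr` is a free parameter chosen here so that the arithmetic is easy to follow.

```python
import numpy as np


def center_loss(features, labels, centers):
    """Sum over the batch of ||f(x_i) - c_{y_i}||^2."""
    diff = features - centers[labels]
    return float(np.sum(diff ** 2))


def update_centers(features, labels, centers, lr=0.5):
    """One gradient step on the centers of the classes present in the batch."""
    new_centers = centers.copy()
    for c in np.unique(labels):
        mask = labels == c
        # d/dc ||f - c||^2 = -2 (f - c); averaged over the class's samples (assumption)
        grad = -2.0 * np.mean(features[mask] - centers[c], axis=0)
        new_centers[c] = centers[c] - lr * grad
    return new_centers


features = np.array([[1.0, 0.0], [3.0, 0.0], [0.0, 2.0]])
labels = np.array([0, 0, 1])
centers = np.zeros((2, 2))            # stand-in for a random initialization

print(center_loss(features, labels, centers))
centers = update_centers(features, labels, centers)
print(centers)
print(center_loss(features, labels, centers))
```

With `lr=0.5` and an averaged gradient, a single step moves each center exactly to the batch mean of its class; a smaller step size would move the centers more gradually, which is closer to how they would be updated across many noisy batches. In a full model this term would be added to the softmax loss with weight $\lambda$.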

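IV. Implementing face recognition

We now introduce a classic face recognition system, Google's FaceNet. The pipeline has two main parts:

- MTCNN: performs face detection and alignment and outputs $160\times{160}$ images;

- CNN: maps a face image (the default input size is $160\times{160}$) directly into a Euclidean space in which distances reflect the similarity of the faces. Once this embedding space is available, face recognition, verification, clustering, and similar tasks become straightforward.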

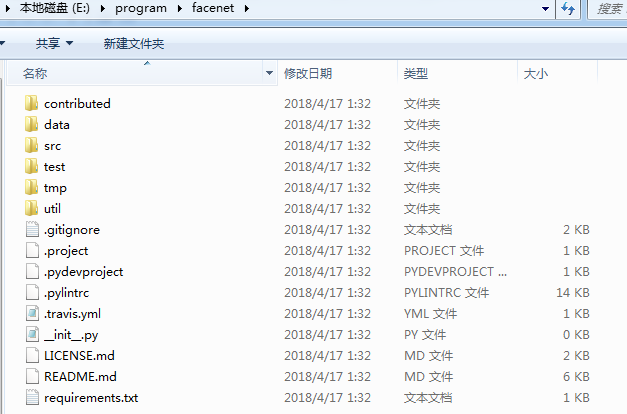

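First download the facenet source code from GitHub: https://github.com/davidsandberg/facenet. After unpacking, it looks as shown in the figure below.

Opening requirements.txt shows that the following dependencies need to be installed: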

- tensorflow==1.7

- scipy

- scikit-learn

- opencv-python

- h5py

- matplotlib

- Pillow

- requests

- psutil

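If package compatibility problems appear when running the programs later on, it is recommended to install these packages with pip rather than conda.

1. Setting up the facenet environment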

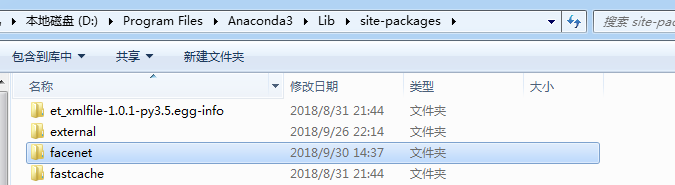

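Add the facenet folder to Python's default module search path by copying the whole facenet folder into lib\site-packages under the anaconda3 installation directory:

Next, cut the files in the src directory, delete everything else under facenet, and paste the files from src directly into facenet. This makes the packages under src importable (so that import align.detect_face does not raise an error).

Start python in the Anaconda Prompt and type import facenet; if no error is reported, the setup works:

2. Downloading the LFW dataset

Next we explain how to test an already trained model on the LFW (Labeled Faces in the Wild) database, but first a short introduction to the LFW dataset.

LFW is a face dataset compiled by the computer vision lab of the University of Massachusetts Amherst, and it is a public benchmark for evaluating face recognition algorithms. It contains 13233 JPEG images of 5749 different people, 1680 of whom have more than one image. Every image is $250\times{250}$ pixels and is labeled with the person's name. Images are named "lfw/name/name_xxx.jpg", where "xxx" is a four-digit, zero-padded image number. For example, the tenth image of former US president George W. Bush is "lfw/George_W_Bush/George_W_Bush_0010.jpg".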
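The naming convention is easy to reproduce in code. The helper below is a small illustration; `lfw_image_path` is a name invented for this example and is not part of the facenet code base.

```python
def lfw_image_path(name, index, root="lfw", ext="jpg"):
    """Build an LFW image path: <root>/<name>/<name>_<4-digit index>.<ext>."""
    return "{root}/{name}/{name}_{index:04d}.{ext}".format(
        root=root, name=name, index=index, ext=ext)


print(lfw_image_path("George_W_Bush", 10))
```

The `ext` parameter is there because the aligned images produced later in this post are written as .png files rather than .jpg.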

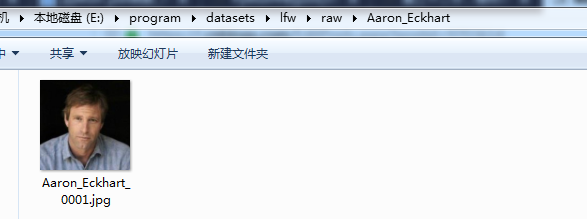

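The dataset can be downloaded from http://vis-www.cs.umass.edu/lfw/lfw.tgz. After downloading, unpack it and open one of the folders:

Create a new folder named raw under lfw and move everything in lfw (except raw) into it. As you can see, my lfw dataset is stored in a datasets folder, which is in the same directory as facenet.

3. Preprocessing the LFW dataset (face detection and alignment on LFW)

The test images must be brought to the same size as the images the model was trained on ($160\times{160}$). The converted dataset is stored in the lfw_mtcnnpy_160 folder.

The first processing step is to run MTCNN for face detection and alignment and to resize the result to $160\times{160}$.

The MTCNN implementation lives in the folder facenet/src/align, which contains:

- detect_face.py: defines the MTCNN model structure, consisting of P-Net, R-Net, and O-Net. Pretrained weights are provided for all three networks in the files det1.npy, det2.npy, and det3.npy.

- align_dataset_mtcnn.py: the entry point for face detection and alignment with the MTCNN model.

To run face detection and alignment on LFW with align_dataset_mtcnn.py, open the Anaconda Prompt, change to the directory that contains facenet, and run the following command: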

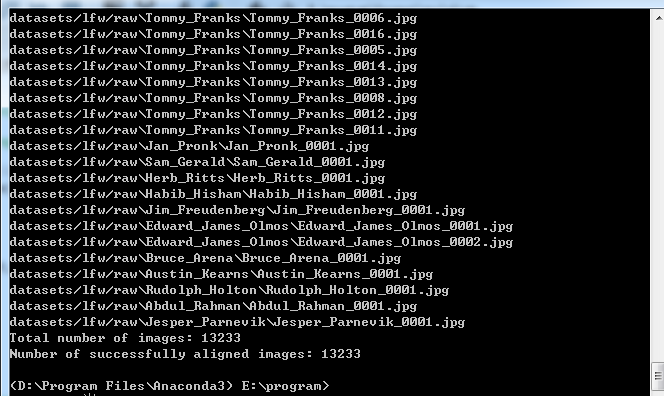

python facenet/src/align/align_dataset_mtcnn.py datasets/lfw/raw datasets/lfw/lfw_mtcnnpy_160 --image_size 160 --margin 32 --random_order

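This command creates the folder datasets/lfw/lfw_mtcnnpy_160 and stores all aligned face images in it, with the same directory structure as datasets/lfw/raw. The arguments --image_size 160 --margin 32 mean that the face box detected by MTCNN is enlarged by a margin of 32 pixels in total (16 pixels on each side, clipped to the image borders, since the training data was cropped with some extra context around the face) and then resized to $160\times{160}$. All aligned images therefore end up at $160\times{160}$ pixels, and the faces have been detected and aligned from the original images.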
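The margin handling can be seen in isolation in the sketch below, which follows the `bb[...]` computation in the align_dataset_mtcnn.py listing. The function name `crop_box_with_margin` and the example boxes are made up for illustration.

```python
import numpy as np


def crop_box_with_margin(det, img_shape, margin=32):
    """Expand a detected box (x1, y1, x2, y2) by margin/2 on each side,
    clipped to the image borders, and return integer crop coordinates."""
    height, width = img_shape[0], img_shape[1]
    bb = np.zeros(4, dtype=np.int32)
    bb[0] = np.maximum(det[0] - margin / 2, 0)
    bb[1] = np.maximum(det[1] - margin / 2, 0)
    bb[2] = np.minimum(det[2] + margin / 2, width)
    bb[3] = np.minimum(det[3] + margin / 2, height)
    return bb


img_shape = (250, 250, 3)                      # an LFW-sized image
print(crop_box_with_margin([100, 80, 180, 200], img_shape))
print(crop_box_with_margin([5, 5, 245, 245], img_shape))   # clipped at the borders

# The crop itself would then be img[bb[1]:bb[3], bb[0]:bb[2], :],
# followed by a resize to image_size x image_size.
```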

Let us briefly walk through align_dataset_mtcnn.py. The source is listed first, followed by an explanation of main():

```python
"""Performs face alignment and stores face thumbnails in the output directory."""
# MIT License
#
# Copyright (c) 2016 David Sandberg
#
# Permission is hereby granted, free of charge, to any person obtaining a copy
# of this software and associated documentation files (the "Software"), to deal
# in the Software without restriction, including without limitation the rights
# to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
# copies of the Software, and to permit persons to whom the Software is
# furnished to do so, subject to the following conditions:
#
# The above copyright notice and this permission notice shall be included in all
# copies or substantial portions of the Software.
#
# THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
# IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
# FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
# AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
# LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
# OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
# SOFTWARE.

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

# Note: misc.imread / imresize / imsave are only available in older SciPy releases.
from scipy import misc
import sys
import os
import argparse
import tensorflow as tf
import numpy as np
import facenet
import align.detect_face
import random
from time import sleep


def main(args):
    '''
    Detect and align faces with the MTCNN networks.

    args: parsed command-line arguments
    '''
    sleep(random.random())
    # Directory where the aligned face images are stored
    output_dir = os.path.expanduser(args.output_dir)
    if not os.path.exists(output_dir):
        os.makedirs(output_dir)
    # Store some git revision info in a text file in the log directory
    src_path,_ = os.path.split(os.path.realpath(__file__))
    facenet.store_revision_info(src_path, output_dir, ' '.join(sys.argv))

    # 1. Load the LFW dataset: each class name and the absolute paths of its images
    dataset = facenet.get_dataset(args.input_dir)

    print('Creating networks and loading parameters')

    # 2. Build the MTCNN networks and initialize them with the pretrained weights
    with tf.Graph().as_default():
        gpu_options = tf.GPUOptions(per_process_gpu_memory_fraction=args.gpu_memory_fraction)
        sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options, log_device_placement=False))
        with sess.as_default():
            pnet, rnet, onet = align.detect_face.create_mtcnn(sess, None)

    minsize = 20 # minimum size of face
    threshold = [ 0.6, 0.7, 0.7 ]  # three steps's threshold
    factor = 0.709 # scale factor

    # Add a random key to the filename to allow alignment using multiple processes
    random_key = np.random.randint(0, high=99999)
    bounding_boxes_filename = os.path.join(output_dir, 'bounding_boxes_%05d.txt' % random_key)

    # 3. Write the face bounding box of every image to a record file
    with open(bounding_boxes_filename, "w") as text_file:
        nrof_images_total = 0
        nrof_successfully_aligned = 0
        if args.random_order:
            random.shuffle(dataset)
        # Iterate over every person and the absolute paths of their images
        for cls in dataset:
            # Output folder for this person
            output_class_dir = os.path.join(output_dir, cls.name)
            if not os.path.exists(output_class_dir):
                os.makedirs(output_class_dir)
                if args.random_order:
                    random.shuffle(cls.image_paths)
            # Iterate over every image
            for image_path in cls.image_paths:
                nrof_images_total += 1
                filename = os.path.splitext(os.path.split(image_path)[1])[0]
                output_filename = os.path.join(output_class_dir, filename+'.png')
                print(image_path)
                if not os.path.exists(output_filename):
                    try:
                        img = misc.imread(image_path)
                    except (IOError, ValueError, IndexError) as e:
                        errorMessage = '{}: {}'.format(image_path, e)
                        print(errorMessage)
                    else:
                        if img.ndim<2:
                            print('Unable to align "%s"' % image_path)
                            text_file.write('%s\n' % (output_filename))
                            continue
                        if img.ndim == 2:
                            img = facenet.to_rgb(img)
                        img = img[:,:,0:3]

                        # Face detection. bounding_boxes has shape [n,5]: x1,y1,x2,y2,score
                        # _ holds the facial landmark coordinates, shape [10,n]
                        bounding_boxes, _ = align.detect_face.detect_face(img, minsize, pnet, rnet, onet, threshold, factor)
                        # Number of bounding boxes
                        nrof_faces = bounding_boxes.shape[0]
                        if nrof_faces>0:
                            # [n,4] face boxes
                            det = bounding_boxes[:,0:4]
                            # Collects all face boxes to be saved
                            det_arr = []
                            img_size = np.asarray(img.shape)[0:2]
                            if nrof_faces>1:
                                # Several faces detected in one image
                                if args.detect_multiple_faces:
                                    for i in range(nrof_faces):
                                        det_arr.append(np.squeeze(det[i]))
                                else:
                                    # Keep the single box that is largest and closest to the image center
                                    bounding_box_size = (det[:,2]-det[:,0])*(det[:,3]-det[:,1])
                                    img_center = img_size / 2
                                    offsets = np.vstack([ (det[:,0]+det[:,2])/2-img_center[1], (det[:,1]+det[:,3])/2-img_center[0] ])
                                    offset_dist_squared = np.sum(np.power(offsets,2.0),0)
                                    index = np.argmax(bounding_box_size-offset_dist_squared*2.0) # some extra weight on the centering
                                    det_arr.append(det[index,:])
                            else:
                                # Only one face box
                                det_arr.append(np.squeeze(det))

                            # Iterate over every face box
                            for i, det in enumerate(det_arr):
                                # shape [4]: expand the box by the margin and crop
                                det = np.squeeze(det)
                                bb = np.zeros(4, dtype=np.int32)
                                bb[0] = np.maximum(det[0]-args.margin/2, 0)
                                bb[1] = np.maximum(det[1]-args.margin/2, 0)
                                bb[2] = np.minimum(det[2]+args.margin/2, img_size[1])
                                bb[3] = np.minimum(det[3]+args.margin/2, img_size[0])
                                cropped = img[bb[1]:bb[3],bb[0]:bb[2],:]
                                # Resize to the requested size, save the image and the box position
                                scaled = misc.imresize(cropped, (args.image_size, args.image_size), interp='bilinear')
                                nrof_successfully_aligned += 1
                                filename_base, file_extension = os.path.splitext(output_filename)
                                if args.detect_multiple_faces:
                                    output_filename_n = "{}_{}{}".format(filename_base, i, file_extension)
                                else:
                                    output_filename_n = "{}{}".format(filename_base, file_extension)
                                misc.imsave(output_filename_n, scaled)
                                text_file.write('%s %d %d %d %d\n' % (output_filename_n, bb[0], bb[1], bb[2], bb[3]))
                        else:
                            print('Unable to align "%s"' % image_path)
                            text_file.write('%s\n' % (output_filename))

    print('Total number of images: %d' % nrof_images_total)
    print('Number of successfully aligned images: %d' % nrof_successfully_aligned)


def parse_arguments(argv):
    '''
    Parse the command-line arguments.
    '''
    parser = argparse.ArgumentParser()

    # input_dir and output_dir are positional arguments
    parser.add_argument('input_dir', type=str, help='Directory with unaligned images.')
    parser.add_argument('output_dir', type=str, help='Directory with aligned face thumbnails.')
    parser.add_argument('--image_size', type=int,
        help='Image size (height, width) in pixels.', default=160)
    parser.add_argument('--margin', type=int,
        help='Margin for the crop around the bounding box (height, width) in pixels.', default=32)
    parser.add_argument('--random_order',
        help='Shuffles the order of images to enable alignment using multiple processes.', action='store_true')
    parser.add_argument('--gpu_memory_fraction', type=float,
        help='Upper bound on the amount of GPU memory that will be used by the process.', default=1.0)
    parser.add_argument('--detect_multiple_faces', type=bool,
        help='Detect and align multiple faces per image.', default=False)
    # Parse
    return parser.parse_args(argv)

if __name__ == '__main__':
    main(parse_arguments(sys.argv[1:]))
```

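- First, the LFW dataset is loaded;

- The MTCNN networks are built and initialized with pretrained weights. When building the networks, the facenet author implemented the required CNN components (conv layers, max-pool layers, softmax layers, and so on) from scratch, which makes the code fairly complex. Interested readers can look at the blog post "MTCNN 的 TensorFlow 实现" (a TensorFlow implementation of MTCNN), whose author reimplemented the MTCNN networks with Keras in a way that is easier to follow. Code: https://github.com/FortiLeiZhang/model_zoo/tree/master/TensorFlow/mtcnn;

- align.detect_face.detect_face() is called to detect faces; it returns the corrected bounding-box positions, the scores, and the landmark coordinates;

- The face boxes are post-processed: each box is expanded by a 32-pixel margin and cropped from the original image, resized to $160\times{160}$, and the related information is saved to a file.

The details of face detection are in the detect_face() function. The code is fairly long; it is listed here, and the blog post "MTCNN 的 TensorFlow 实现" mentioned above covers the details.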

```python
def detect_face(img, minsize, pnet, rnet, onet, threshold, factor):
    """Detects faces in an image, and returns bounding boxes and points for them.
    img: input image
    minsize: minimum faces' size
    pnet, rnet, onet: caffemodel
    threshold: threshold=[th1, th2, th3], th1-3 are three steps's threshold
    factor: the factor used to create a scaling pyramid of face sizes to detect in the image.
    """
    factor_count=0
    total_boxes=np.empty((0,9))
    points=np.empty(0)
    h=img.shape[0]
    w=img.shape[1]
    # Shorter side of the image; assume a 250x250 image in the comments below
    minl=np.amin([h, w])
    # With the smallest face size minsize=20 and a 12x12 P-Net detection window,
    # the image must be rescaled so that the window covers a whole face: m=0.6
    m=12.0/minsize
    # 250*0.6 = 150
    minl=minl*m
    # create scale pyramid: store the scale factor of each level, 0.6, 0.6*0.709, ...
    scales=[]
    while minl>=12:
        scales += [m*np.power(factor, factor_count)]
        minl = minl*factor
        factor_count += 1

    # first stage P-Net
    for scale in scales:
        # Rescale the image
        hs=int(np.ceil(h*scale))
        ws=int(np.ceil(w*scale))
        im_data = imresample(img, (hs, ws))
        # Normalize to the range [-1,1]
        im_data = (im_data-127.5)*0.0078125
        img_x = np.expand_dims(im_data, 0)
        img_y = np.transpose(img_x, (0,2,1,3))
        out = pnet(img_y)
        # For a [1,150,150,3] input the output is [1,70,70,4]: box regression,
        # where every feature-map point corresponds to one 12x12 detection window
        out0 = np.transpose(out[0], (0,2,1,3))
        # For a [1,150,150,3] input the output is [1,70,70,2]: face probability
        out1 = np.transpose(out[1], (0,2,1,3))

        # Output is [n,9]: the first 4 values are the box position in the original image,
        # the 5th is the face probability, the last 4 are the box regression values
        boxes, _ = generateBoundingBox(out1[0,:,:,1].copy(), out0[0,:,:,:].copy(), scale, threshold[0])

        # inter-scale nms: non-maximum suppression, then keep the remaining boxes
        pick = nms(boxes.copy(), 0.5, 'Union')
        if boxes.size>0 and pick.size>0:
            boxes = boxes[pick,:]
            total_boxes = np.append(total_boxes, boxes, axis=0)

    # After all scales have been processed we have the boxes from every scale in
    # original-image coordinates; run NMS on them once more, with a higher threshold
    numbox = total_boxes.shape[0]
    if numbox>0:
        pick = nms(total_boxes.copy(), 0.7, 'Union')
        total_boxes = total_boxes[pick,:]
        # Correct the boxes with the regression values (offsets scaled by box width and height)
        regw = total_boxes[:,2]-total_boxes[:,0]
        regh = total_boxes[:,3]-total_boxes[:,1]
        qq1 = total_boxes[:,0]+total_boxes[:,5]*regw
        qq2 = total_boxes[:,1]+total_boxes[:,6]*regh
        qq3 = total_boxes[:,2]+total_boxes[:,7]*regw
        qq4 = total_boxes[:,3]+total_boxes[:,8]*regh
        # [n,5]
        total_boxes = np.transpose(np.vstack([qq1, qq2, qq3, qq4, total_boxes[:,4]]))
        # Convert every box to a square
        total_boxes = rerec(total_boxes.copy())
        total_boxes[:,0:4] = np.fix(total_boxes[:,0:4]).astype(np.int32)
        # Clip coordinates that exceed the image borders to get the actual box coordinates
        dy, edy, dx, edx, y, ey, x, ex, tmpw, tmph = pad(total_boxes.copy(), w, h)

    numbox = total_boxes.shape[0]
    if numbox>0:
        # second stage R-Net: resize the boxes output by P-Net to 24x24
        tempimg = np.zeros((24,24,3,numbox))
        for k in range(0,numbox):
            tmp = np.zeros((int(tmph[k]),int(tmpw[k]),3))
            tmp[dy[k]-1:edy[k],dx[k]-1:edx[k],:] = img[y[k]-1:ey[k],x[k]-1:ex[k],:]
            if tmp.shape[0]>0 and tmp.shape[1]>0 or tmp.shape[0]==0 and tmp.shape[1]==0:
                tempimg[:,:,:,k] = imresample(tmp, (24, 24))
            else:
                # Kept as in the upstream source; np.empty() without a shape raises a TypeError
                return np.empty()
        # Normalize to [-1,1]
        tempimg = (tempimg-127.5)*0.0078125
        # Transpose to [n,24,24,3]
        tempimg1 = np.transpose(tempimg, (3,1,0,2))
        out = rnet(tempimg1)
        out0 = np.transpose(out[0])
        out1 = np.transpose(out[1])
        score = out1[1,:]
        ipass = np.where(score>threshold[1])
        total_boxes = np.hstack([total_boxes[ipass[0],0:4].copy(), np.expand_dims(score[ipass].copy(),1)])
        mv = out0[:,ipass[0]]
        if total_boxes.shape[0]>0:
            pick = nms(total_boxes, 0.7, 'Union')
            total_boxes = total_boxes[pick,:]
            total_boxes = bbreg(total_boxes.copy(), np.transpose(mv[:,pick]))
            total_boxes = rerec(total_boxes.copy())

    numbox = total_boxes.shape[0]
    if numbox>0:
        # third stage O-Net
        total_boxes = np.fix(total_boxes).astype(np.int32)
        dy, edy, dx, edx, y, ey, x, ex, tmpw, tmph = pad(total_boxes.copy(), w, h)
        tempimg = np.zeros((48,48,3,numbox))
        for k in range(0,numbox):
            tmp = np.zeros((int(tmph[k]),int(tmpw[k]),3))
            tmp[dy[k]-1:edy[k],dx[k]-1:edx[k],:] = img[y[k]-1:ey[k],x[k]-1:ex[k],:]
            if tmp.shape[0]>0 and tmp.shape[1]>0 or tmp.shape[0]==0 and tmp.shape[1]==0:
                tempimg[:,:,:,k] = imresample(tmp, (48, 48))
            else:
                # Kept as in the upstream source; np.empty() without a shape raises a TypeError
                return np.empty()
        tempimg = (tempimg-127.5)*0.0078125
        tempimg1 = np.transpose(tempimg, (3,1,0,2))
        out = onet(tempimg1)
        # Box regression (used as mv below)
        out0 = np.transpose(out[0])
        # Landmarks (used as points below)
        out1 = np.transpose(out[1])
        # Face probability
        out2 = np.transpose(out[2])
        score = out2[1,:]
        points = out1
        ipass = np.where(score>threshold[2])
        points = points[:,ipass[0]]
        # [n,5]
        total_boxes = np.hstack([total_boxes[ipass[0],0:4].copy(), np.expand_dims(score[ipass].copy(),1)])
        mv = out0[:,ipass[0]]

        w = total_boxes[:,2]-total_boxes[:,0]+1
        h = total_boxes[:,3]-total_boxes[:,1]+1
        # Map the landmarks from box-relative to image coordinates
        points[0:5,:] = np.tile(w,(5, 1))*points[0:5,:] + np.tile(total_boxes[:,0],(5, 1))-1
        points[5:10,:] = np.tile(h,(5, 1))*points[5:10,:] + np.tile(total_boxes[:,1],(5, 1))-1
        if total_boxes.shape[0]>0:
            total_boxes = bbreg(total_boxes.copy(), np.transpose(mv))
            pick = nms(total_boxes.copy(), 0.7, 'Min')
            total_boxes = total_boxes[pick,:]
            points = points[:,pick]

    # Returns the boxes [n,5] (x1,y1,x2,y2,score) and the landmarks [10,n]
    return total_boxes, points
```
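The listing calls a helper `nms(boxes, threshold, method)` whose body is not shown in this post. The following is a simplified greedy non-maximum suppression sketch with the same two overlap modes, written for illustration only; it is named `nms_sketch` to make clear that it is not the upstream implementation, and details such as the `+1` pixel convention are assumptions.

```python
import numpy as np


def nms_sketch(boxes, threshold, method="Union"):
    """Greedy non-maximum suppression.

    boxes: array of shape [n, 5] with rows (x1, y1, x2, y2, score).
    method: "Union" uses intersection / union; "Min" uses
            intersection / area of the smaller box.
    Returns the indices of the boxes that are kept, highest score first.
    """
    boxes = np.asarray(boxes, dtype=float)
    if boxes.size == 0:
        return np.empty((0,), dtype=int)
    x1, y1, x2, y2, score = (boxes[:, i] for i in range(5))
    area = (x2 - x1 + 1) * (y2 - y1 + 1)
    order = np.argsort(score)[::-1]       # highest score first
    keep = []
    while order.size > 0:
        i = order[0]
        keep.append(i)
        rest = order[1:]
        # Intersection of box i with every remaining box
        w = np.maximum(0.0, np.minimum(x2[i], x2[rest]) - np.maximum(x1[i], x1[rest]) + 1)
        h = np.maximum(0.0, np.minimum(y2[i], y2[rest]) - np.maximum(y1[i], y1[rest]) + 1)
        inter = w * h
        if method == "Min":
            overlap = inter / np.minimum(area[i], area[rest])
        else:
            overlap = inter / (area[i] + area[rest] - inter)
        # Drop everything that overlaps box i too much
        order = rest[overlap <= threshold]
    return np.array(keep, dtype=int)


boxes = np.array([
    [0, 0, 10, 10, 0.9],
    [1, 1, 11, 11, 0.8],    # heavily overlaps the first box
    [50, 50, 60, 60, 0.7],  # far away from both
])
print(nms_sketch(boxes, 0.5, "Union"))
```

The "Min" mode is stricter for nested boxes: a small box fully contained in a large one has an overlap of 1.0 under "Min" but only a small IoU under "Union", which is a plausible reason for using it in the final O-Net stage.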

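4. Validating the accuracy of a pretrained model on LFW

The author of the project provides two pretrained models, trained on the CASIA-WebFace and VGGFace2 face datasets respectively. Download links are available at https://github.com/davidsandberg/facenet:

Note: depending on your network, downloading these two model files may require a proxy or VPN.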

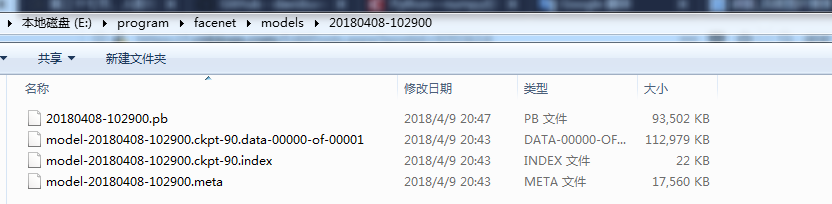

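The pretrained model used here was trained on CASIA-WebFace with the Inception ResNet v1 architecture; it reaches roughly 99.05% accuracy on LFW. After downloading the model, unpack it into the folder facenet/models (create the models folder yourself). Unpacking produces a folder named 20180408-102900 that contains four files:

- model.meta: the model file, which stores the metagraph, i.e. the structure of the computation graph;

- model.ckpt.data: the weight file, which stores the values of all variables in the graph;

- model.ckpt.index: stores the information needed to match the entries in meta and data;

- pb file: combines the model file and the weight file into a single file, mainly to simplify distribution; see the blog post at https://blog.csdn.net/yjl9122/article/details/78341689 for details;

- Usually there is also a checkpoint file, which records the path of the latest checkpoint files (the three model files above) and where they are stored. tf.train.latest_checkpoint relies on this information when loading; if the paths stored in the file are changed or deleted, loading fails because the files cannot be found.

At this point the preparation is essentially complete: we have the LFW test dataset, the model, and the programs. Next we evaluate the accuracy of the model.

Open the Anaconda Prompt, change to the facenet directory (note: the facenet directory itself), and run the following command:

python src/validate_on_lfw.py ../datasets/lfw/lfw_mtcnnpy_160 models/20180408-102900

The output is as follows:

In this run, the accuracy of the model on LFW came out at 99.7%.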

The source of validate_on_lfw.py is as follows:

```python
"""Validate a face recognizer on the "Labeled Faces in the Wild" dataset (http://vis-www.cs.umass.edu/lfw/).
Embeddings are calculated using the pairs from http://vis-www.cs.umass.edu/lfw/pairs.txt and the ROC curve
is calculated and plotted. Both the model metagraph and the model parameters need to exist
in the same directory, and the metagraph should have the extension '.meta'.
"""
# MIT License
#
# Copyright (c) 2016 David Sandberg
#
# (Full MIT license text as in the upstream file.)

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import tensorflow as tf
import numpy as np
import argparse
import facenet
import lfw
import os
import sys
from tensorflow.python.ops import data_flow_ops
from sklearn import metrics
from scipy.optimize import brentq
from scipy import interpolate

def main(args):

    with tf.Graph().as_default():

        with tf.Session() as sess:

            # Read the file containing the pairs used for testing
            # Each entry looks like: same person [Abel_Pacheco 1 4], different people [Ben_Kingsley 1 Daryl_Hannah 1]
            pairs = lfw.read_pairs(os.path.expanduser(args.lfw_pairs))

            # Get the paths for the corresponding images
            # actual_issame indicates whether a pair shows the same person
            paths, actual_issame = lfw.get_paths(os.path.expanduser(args.lfw_dir), pairs)

            # Define the placeholders
            image_paths_placeholder = tf.placeholder(tf.string, shape=(None,1), name='image_paths')
            labels_placeholder = tf.placeholder(tf.int32, shape=(None,1), name='labels')
            batch_size_placeholder = tf.placeholder(tf.int32, name='batch_size')
            control_placeholder = tf.placeholder(tf.int32, shape=(None,1), name='control')
            phase_train_placeholder = tf.placeholder(tf.bool, name='phase_train')

            # Read the data through a queue
            nrof_preprocess_threads = 4
            image_size = (args.image_size, args.image_size)
            eval_input_queue = data_flow_ops.FIFOQueue(capacity=2000000,
                                        dtypes=[tf.string, tf.int32, tf.int32],
                                        shapes=[(1,), (1,), (1,)],
                                        shared_name=None, name=None)
            eval_enqueue_op = eval_input_queue.enqueue_many([image_paths_placeholder, labels_placeholder, control_placeholder], name='eval_enqueue_op')
            image_batch, label_batch = facenet.create_input_pipeline(eval_input_queue, image_size, nrof_preprocess_threads, batch_size_placeholder)

            # Load the model
            input_map = {'image_batch': image_batch, 'label_batch': label_batch, 'phase_train': phase_train_placeholder}
            facenet.load_model(args.model, input_map=input_map)

            # Get output tensor
            embeddings = tf.get_default_graph().get_tensor_by_name("embeddings:0")

            # Create a coordinator to manage the threads
            coord = tf.train.Coordinator()
            tf.train.start_queue_runners(coord=coord, sess=sess)

            # Start the evaluation
            evaluate(sess, eval_enqueue_op, image_paths_placeholder, labels_placeholder, phase_train_placeholder, batch_size_placeholder, control_placeholder,
                embeddings, label_batch, paths, actual_issame, args.lfw_batch_size, args.lfw_nrof_folds, args.distance_metric, args.subtract_mean,
                args.use_flipped_images, args.use_fixed_image_standardization)


def evaluate(sess, enqueue_op, image_paths_placeholder, labels_placeholder, phase_train_placeholder, batch_size_placeholder, control_placeholder,
        embeddings, labels, image_paths, actual_issame, batch_size, nrof_folds, distance_metric, subtract_mean, use_flipped_images, use_fixed_image_standardization):
    # Run forward pass to calculate embeddings
    print('Runnning forward pass on LFW images')

    # Enqueue one epoch of image paths and labels
    nrof_embeddings = len(actual_issame)*2  # nrof_pairs * nrof_images_per_pair
    nrof_flips = 2 if use_flipped_images else 1
    nrof_images = nrof_embeddings * nrof_flips
    labels_array = np.expand_dims(np.arange(0,nrof_images),1)
    image_paths_array = np.expand_dims(np.repeat(np.array(image_paths),nrof_flips),1)
    control_array = np.zeros_like(labels_array, np.int32)
    if use_fixed_image_standardization:
        control_array += np.ones_like(labels_array)*facenet.FIXED_STANDARDIZATION
    if use_flipped_images:
        # Flip every second image
        control_array += (labels_array % 2)*facenet.FLIP
    sess.run(enqueue_op, {image_paths_placeholder: image_paths_array, labels_placeholder: labels_array, control_placeholder: control_array})

    embedding_size = int(embeddings.get_shape()[1])
    assert nrof_images % batch_size == 0, 'The number of LFW images must be an integer multiple of the LFW batch size'
    nrof_batches = nrof_images // batch_size
    emb_array = np.zeros((nrof_images, embedding_size))
    lab_array = np.zeros((nrof_images,))
    for i in range(nrof_batches):
        feed_dict = {phase_train_placeholder:False, batch_size_placeholder:batch_size}
        emb, lab = sess.run([embeddings, labels], feed_dict=feed_dict)
        lab_array[lab] = lab
        emb_array[lab, :] = emb
        if i % 10 == 9:
            print('.', end='')
            sys.stdout.flush()
    print('')
    embeddings = np.zeros((nrof_embeddings, embedding_size*nrof_flips))
    if use_flipped_images:
        # Concatenate embeddings for flipped and non flipped version of the images
        embeddings[:,:embedding_size] = emb_array[0::2,:]
        embeddings[:,embedding_size:] = emb_array[1::2,:]
    else:
        embeddings = emb_array

    assert np.array_equal(lab_array, np.arange(nrof_images))==True, 'Wrong labels used for evaluation, possibly caused by training examples left in the input pipeline'
    tpr, fpr, accuracy, val, val_std, far = lfw.evaluate(embeddings, actual_issame, nrof_folds=nrof_folds, distance_metric=distance_metric, subtract_mean=subtract_mean)

    print('Accuracy: %2.5f+-%2.5f' % (np.mean(accuracy), np.std(accuracy)))
    print('Validation rate: %2.5f+-%2.5f @ FAR=%2.5f' % (val, val_std, far))

    auc = metrics.auc(fpr, tpr)
    print('Area Under Curve (AUC): %1.3f' % auc)
    eer = brentq(lambda x: 1. - x - interpolate.interp1d(fpr, tpr)(x), 0., 1.)
    print('Equal Error Rate (EER): %1.3f' % eer)

def parse_arguments(argv):
    '''
    Parse the command-line arguments.
    '''
    parser = argparse.ArgumentParser()

    parser.add_argument('lfw_dir', type=str,
        help='Path to the data directory containing aligned LFW face patches.')
    parser.add_argument('--lfw_batch_size', type=int,
        help='Number of images to process in a batch in the LFW test set.', default=100)
    parser.add_argument('model', type=str,
        help='Could be either a directory containing the meta_file and ckpt_file or a model protobuf (.pb) file')
    parser.add_argument('--image_size', type=int,
        help='Image size (height, width) in pixels.', default=160)
    parser.add_argument('--lfw_pairs', type=str,
        help='The file containing the pairs to use for validation.', default='data/pairs.txt')
    parser.add_argument('--lfw_nrof_folds', type=int,
        help='Number of folds to use for cross validation. Mainly used for testing.', default=10)
    parser.add_argument('--distance_metric', type=int,
        help='Distance metric  0:euclidian, 1:cosine similarity.', default=0)
    parser.add_argument('--use_flipped_images',
        help='Concatenates embeddings for the image and its horizontally flipped counterpart.', action='store_true')
    parser.add_argument('--subtract_mean',
        help='Subtract feature mean before calculating distance.', action='store_true')
    parser.add_argument('--use_fixed_image_standardization',
        help='Performs fixed standardization of images.', action='store_true')
    return parser.parse_args(argv)

if __name__ == '__main__':
    main(parse_arguments(sys.argv[1:]))
```

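- First, load data/pairs.txt, which lists the image pairs used for testing; some pairs show the same person and others show different people;

- Create an object that loads the data through TensorFlow's queue mechanism;

- Load the facenet model;

- Start the QueueRunner, compute the distance for each test pair, and evaluate accuracy by comparing those distances (a pair whose distance is below 1 is treated as the same person, otherwise as different people) against the ground-truth labels;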
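The last step can be illustrated with a small, self-contained sketch. It is not the repository's `lfw.evaluate`, which according to the `--lfw_nrof_folds` help text uses cross validation. It only shows how a fixed distance threshold turns pair distances into an accuracy figure. The function names `pair_distance` and `verification_accuracy` and the toy embeddings are assumptions made for illustration.

```python
import math

def pair_distance(a, b):
    """Euclidean distance between two embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def verification_accuracy(pairs, issame, threshold=1.0):
    """Fraction of pairs classified correctly at a fixed threshold."""
    correct = 0
    for (a, b), same in zip(pairs, issame):
        predicted_same = pair_distance(a, b) < threshold
        correct += int(predicted_same == same)
    return correct / len(pairs)

# Toy 2-D embeddings (made up for illustration, not real model output).
pairs = [
    ([1.0, 0.0], [0.8, 0.6]),   # close together, predicted "same"
    ([1.0, 0.0], [-1.0, 0.0]),  # far apart, predicted "different"
    ([0.0, 1.0], [0.6, 0.8]),   # close together, predicted "same"
]
issame = [True, False, False]   # ground truth; the third pair is misclassified
acc = verification_accuracy(pairs, issame)
print('accuracy: %.3f' % acc)
```

With real data, `pairs` would hold the embeddings of the two images in each line of pairs.txt, and the threshold would be chosen on held-out folds rather than fixed in advance.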

5. Using an existing model on your own images

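In practice we often also want to apply an existing model to our own images. Using the distance between faces as an example, this section shows how to run the model on your own data.

Suppose we have three images stored in the facenet/src directory, named img1.jpg, img2.jpg and img3.jpg. Each contains one person's face, and we want to compute the distance between every pair. This is done with facenet/src/compare.py.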

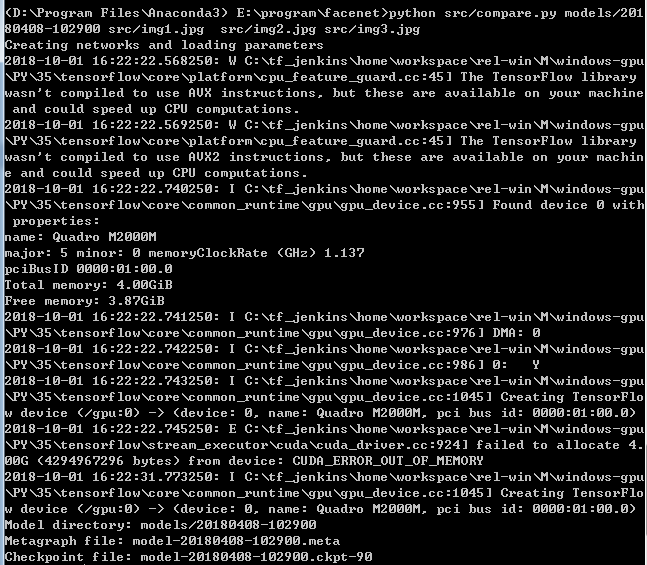

Open Anaconda Prompt, change to the facenet directory (note: the facenet directory itself), and run the following command:

python src/compare.py models/20180408-102900 src/img1.jpg src/img2.jpg src/img3.jpg

The output is as follows:

Next we test with images of three different people:

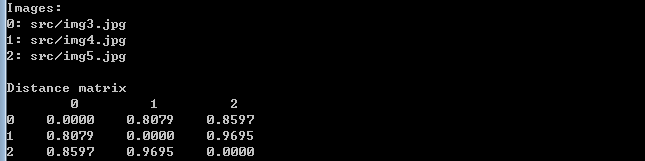

python src/compare.py models/20180408-102900 src/img3.jpg src/img4.jpg src/img5.jpg

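Images of the same person yield relatively small distances, while images of different people yield larger ones. Normally the distance for the same person should be below 1 and the distance between different people above 1. The results above do not follow this rule, however, and I think this is mostly down to the photos we chose. Test photos should show a reasonably clear, frontal face and should follow the same distribution as the training data, which here means preferring photos of non-Chinese people.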
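The comparison described here boils down to a matrix of Euclidean distances between embedding vectors, followed by a threshold check. Here is a minimal NumPy sketch of that idea, using made-up unit-length vectors in place of real embeddings. The helper name `pairwise_l2` is hypothetical, and the threshold 1.0 is simply the value quoted in the text.

```python
import numpy as np

def pairwise_l2(emb):
    """Return the [n, n] matrix of Euclidean distances between the rows of emb."""
    diff = emb[:, np.newaxis, :] - emb[np.newaxis, :, :]   # shape [n, n, dim]
    return np.sqrt(np.sum(np.square(diff), axis=2))

# Made-up unit-length embeddings standing in for three faces.
emb = np.array([[1.0, 0.0],
                [0.6, 0.8],
                [-1.0, 0.0]])
dist = pairwise_l2(emb)
same_person = dist < 1.0   # threshold quoted in the text

print(np.round(dist, 4))
print(same_person)
```

For L2-normalized embeddings the distance always lies between 0 and 2, which is why a threshold around 1 is a plausible dividing line.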

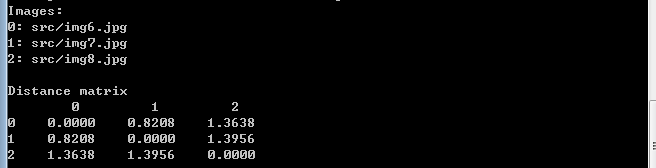

python src/compare.py models/20180408-102900 src/img6.jpg src/img7.jpg src/img8.jpg

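As we can see, the result here is quite good. So if we want comparable results on photos of Chinese people, we need to train the model on a dataset of Chinese faces.

The source code of compare.py is as follows: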

- """Performs face alignment and calculates L2 distance between the embeddings of images."""

-

- # MIT License

- #

- # Copyright (c) 2016 David Sandberg

- #

- # Permission is hereby granted, free of charge, to any person obtaining a copy

- # of this software and associated documentation files (the "Software"), to deal

- # in the Software without restriction, including without limitation the rights

- # to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

- # copies of the Software, and to permit persons to whom the Software is

- # furnished to do so, subject to the following conditions:

- #

- # The above copyright notice and this permission notice shall be included in all

- # copies or substantial portions of the Software.

- #

- # THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

- # IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

- # FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

- # AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

- # LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

- # OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

- # SOFTWARE.

-

- from __future__ import absolute_import

- from __future__ import division

- from __future__ import print_function

-

- from scipy import misc

- import tensorflow as tf

- import numpy as np

- import sys

- import os

- import copy

- import argparse

- import facenet

- import align.detect_face

-

- def main(args):

-

- # Detect and align faces in the original images with the MTCNN networks

- images = load_and_align_data(args.image_files, args.image_size, args.margin, args.gpu_memory_fraction)

-

- with tf.Graph().as_default():

-

- with tf.Session() as sess:

-

- # Load the facenet model

- facenet.load_model(args.model)

-

- # Get input and output tensors

- # Placeholder for the input images

- images_placeholder = tf.get_default_graph().get_tensor_by_name("input:0")

- # "Features" output by the last layer of the convolutional network

- embeddings = tf.get_default_graph().get_tensor_by_name("embeddings:0")

- # Training-phase flag (fed as False for inference)

- phase_train_placeholder = tf.get_default_graph().get_tensor_by_name("phase_train:0")

-

- # Run forward pass to calculate embeddings

- feed_dict = { images_placeholder: images, phase_train_placeholder:False }

- emb = sess.run(embeddings, feed_dict=feed_dict)

-

- nrof_images = len(args.image_files)

-

- print('Images:')

- for i in range(nrof_images):

- print('%1d: %s' % (i, args.image_files[i]))

- print('')

-

- # Print distance matrix

- print('Distance matrix')

- print(' ', end='')

- for i in range(nrof_images):

- print(' %1d ' % i, end='')

- print('')

- for i in range(nrof_images):

- print('%1d ' % i, end='')

- for j in range(nrof_images):

- # Compute the pairwise distance between features to measure how similar the faces are

- dist = np.sqrt(np.sum(np.square(np.subtract(emb[i,:], emb[j,:]))))

- print(' %1.4f ' % dist, end='')

- print('')

-

-

- def load_and_align_data(image_paths, image_size, margin, gpu_memory_fraction):

- '''

- Return the set of face images processed by MTCNN, shape [n,160,160,3]

- '''

- minsize = 20 # minimum size of face

- threshold = [ 0.6, 0.7, 0.7 ] # three steps's threshold

- factor = 0.709 # scale factor

-

- # Create the P-Net, R-Net and O-Net networks and load their parameters

- print('Creating networks and loading parameters')

- with tf.Graph().as_default():

- gpu_options = tf.GPUOptions(per_process_gpu_memory_fraction=gpu_memory_fraction)

- sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options, log_device_placement=False))

- with sess.as_default():

- pnet, rnet, onet = align.detect_face.create_mtcnn(sess, None)

-

-

- tmp_image_paths=copy.copy(image_paths)

- img_list = []

- # Loop over the test images

- for image in tmp_image_paths:

- img = misc.imread(os.path.expanduser(image), mode='RGB')

- img_size = np.asarray(img.shape)[0:2]

- # Face detection. bounding_boxes: the bounding boxes, shape [n,5]; the 5 values are x1,y1,x2,y2,score

- # _: facial landmark coordinates, shape [n,10]

- bounding_boxes, _ = align.detect_face.detect_face(img, minsize, pnet, rnet, onet, threshold, factor)

- if len(bounding_boxes) < 1:

- image_paths.remove(image)

- print("can't detect face, remove ", image)

- continue

- # Process the image: expand the box by the margin, crop, and resize

- det = np.squeeze(bounding_boxes[0,0:4])

- bb = np.zeros(4, dtype=np.int32)

- bb[0] = np.maximum(det[0]-margin/2, 0)

- bb[1] = np.maximum(det[1]-margin/2, 0)

- bb[2] = np.minimum(det[2]+margin/2, img_size[1])

- bb[3] = np.minimum(det[3]+margin/2, img_size[0])

- cropped = img[bb[1]:bb[3],bb[0]:bb[2],:]

- aligned = misc.imresize(cropped, (image_size, image_size), interp='bilinear')

- # Normalize (prewhiten) the image

- prewhitened = facenet.prewhiten(aligned)

- img_list.append(prewhitened)

- #[n,160,160,3]

- images = np.stack(img_list)

- return images

-

- def parse_arguments(argv):

- '''

- Parse command-line arguments.

- '''

- parser = argparse.ArgumentParser()

-

- parser.add_argument('model', type=str,

- help='Could be either a directory containing the meta_file and ckpt_file or a model protobuf (.pb) file')

- parser.add_argument('image_files', type=str, nargs='+', help='Images to compare')

- parser.add_argument('--image_size', type=int,

- help='Image size (height, width) in pixels.', default=160)

- parser.add_argument('--margin', type=int,

- help='Margin for the crop around the bounding box (height, width) in pixels.', default=44)

- parser.add_argument('--gpu_memory_fraction', type=float,

- help='Upper bound on the amount of GPU memory that will be used by the process.', default=1.0)

- return parser.parse_args(argv)

-

- if __name__ == '__main__':

- main(parse_arguments(sys.argv[1:]))

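- First, the MTCNN networks detect and align faces in the original test images, producing an output of shape [n,160,160,3];

- The facenet network is loaded from the model files (the meta and ckpt files);

- The processed test images are fed into the network to obtain a feature vector for each image, and the pairwise distances between these vectors give the similarity between faces;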
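The preprocessing in the first step (expand the detected box by a margin, clamp it to the image, crop, then normalize) can be tried in isolation. The sketch below follows the arithmetic in load_and_align_data, but the function names `crop_with_margin` and `standardize` are hypothetical, and `standardize` is only an assumption about what `facenet.prewhiten` does (per-image zero mean and unit variance). The resize step is left out.

```python
import numpy as np

def crop_with_margin(img, box, margin):
    """Expand box (x1, y1, x2, y2) by margin/2 per side, clamp to the image, crop."""
    h, w = img.shape[:2]
    x1 = int(max(box[0] - margin / 2, 0))
    y1 = int(max(box[1] - margin / 2, 0))
    x2 = int(min(box[2] + margin / 2, w))
    y2 = int(min(box[3] + margin / 2, h))
    return img[y1:y2, x1:x2, :]

def standardize(img):
    """Per-image zero-mean, unit-variance scaling (assumed behaviour of prewhiten)."""
    img = img.astype(np.float64)
    std = max(img.std(), 1.0 / np.sqrt(img.size))  # guard against flat images
    return (img - img.mean()) / std

# Random pixels standing in for a 100 x 120 RGB photo.
rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(100, 120, 3), dtype=np.uint8)

face = crop_with_margin(img, box=(10, 20, 60, 90), margin=44)
white = standardize(face)
print(face.shape, white.mean(), white.std())
```

Note that scipy.misc.imread and scipy.misc.imresize, which the listing relies on, have been removed from recent SciPy releases, so running compare.py unchanged requires an older SciPy or a replacement image library.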

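6. Retraining a new model

Training a new model from scratch requires a very large amount of data. Here we use CASIA-WebFace as an example. The dataset can no longer be downloaded from its original address, and it reportedly contains many invalid images, so we use a cleaned version. It can be downloaded from Baidu Netdisk (download link; extraction code 3zbb) or from https://download.csdn.net/download/dsbhgkrgherk/10228992.

The dataset has 10575 classes and 494414 images. Each class has its own folder containing anywhere from a few to a few dozen face images of the same person. We first use MTCNN to crop the faces out of these photos and then pass them to Facenet for training.

After downloading, extract it to the datasets/casia/raw directory, as shown:

Each folder represents one person and holds all of that person's face images. As with the LFW dataset, we first run MTCNN face detection and alignment on the original images. Open Anaconda Prompt, change to the facenet directory, and run the following command: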

python src/align/align_dataset_mtcnn.py ../datasets/casia/raw ../datasets/casia/casia_maxpy_mtcnnpy_182 --image_size 182 --margin 44 --random_order

The aligned images are saved under datasets/casia/casia_maxpy_mtcnnpy_182, and each is $182\times{182}$ pixels. The network's final input is $160\times{160}$; the $182\times{182}$ images are generated first to leave room for the cropping step of data augmentation. A random $160\times{160}$ region is cropped from each $182\times{182}$ image before it is fed to the network for training.
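To make the augmentation step concrete, here is a minimal NumPy sketch of a random $160\times{160}$ crop from a $182\times{182}$ image, followed by an optional horizontal flip. It is an illustration only: the repository performs these steps inside its TensorFlow input pipeline, and the function names `random_crop` and `random_flip` below are hypothetical.

```python
import numpy as np

def random_crop(img, size, rng):
    """Cut a random size x size window out of an H x W x C image."""
    h, w = img.shape[:2]
    top = rng.integers(0, h - size + 1)    # upper bound is exclusive
    left = rng.integers(0, w - size + 1)
    return img[top:top + size, left:left + size, :]

def random_flip(img, rng):
    """Mirror the image horizontally with probability 0.5."""
    return img[:, ::-1, :] if rng.random() < 0.5 else img

rng = np.random.default_rng(42)
aligned = np.zeros((182, 182, 3), dtype=np.uint8)  # stands in for one aligned face
patch = random_flip(random_crop(aligned, 160, rng), rng)
print(patch.shape)
```

The 22 spare pixels in each dimension give 23 possible offsets per axis, so the network rarely sees exactly the same crop twice.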

Start training with the following command:

python src/train_softmax.py --logs_base_dir ./logs --models_base_dir ./models --data_dir ../datasets/casia/casia_maxpy_mtcnnpy_182 --image_size 160 --model_def models.inception_resnet_v1 --lfw_dir ../datasets/lfw/lfw_mtcnnpy_160 --optimizer RMSPROP --learning_rate -1 --max_nrof_epochs 80 --keep_probability 0.8 --random_crop --random_flip --learning_rate_schedule_file data/learning_rate_schedule_classifier_casia.txt --weight_decay 5e-5 --center_loss_factor 1e-2 --center_loss_alfa 0.9

The command above takes many arguments, so let us go through them one by one. The script src/train_softmax.py trains the model with a combination of center loss and softmax loss. Its arguments are:

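- --logs_base_dir ./logs: training logs are saved under ./logs. At run time a new folder named after the current time is created inside ./logs, and the logs end up there. The "log files" are in fact TensorFlow events files, which mainly record the current loss, the current training step, the current learning rate and similar information. We will view them with TensorBoard later;

- --models_base_dir ./models: the trained model is saved under ./models. At run time a new folder named after the current time is created inside ./models to hold it;

- --data_dir ../datasets/casia/casia_maxpy_mtcnnpy_182: the path of the training dataset, here the CASIA-WebFace face data aligned in the previous step;

- --image_size 160: images fed to the network are $160\times{160}$;

- --model_def models.inception_resnet_v1: the convolutional network used for training is inception_resnet_v1. The networks supported by the project are in src/models: inception_resnet_v1, inception_resnet_v2 and squeezenet. The first two are large and the last is smaller. If you run out of RAM or GPU memory during training, try the squeezenet network, or set batch_size to 32 or 64 (the default is 90);

- --lfw_dir ../datasets/lfw/lfw_mtcnnpy_160: the path of the LFW dataset. If it is given, a test on LFW is run after every epoch and the accuracy is written to the log;

- --optimizer RMSPROP: the optimization method used for training;

- --learning_rate -1: the learning rate. A negative value means this argument is ignored and the schedule given by --learning_rate_schedule_file is used instead;

- --max_nrof_epochs 80: the number of training epochs;

- --keep_probability 0.8: the dropout keep probability, i.e. the fraction of neurons retained;

- --random_crop: use random cropping for data augmentation;

- --random_flip: use random flipping for data augmentation;

- --learning_rate_schedule_file data/learning_rate_schedule_classifier_casia.txt: because --learning_rate -1 was given earlier, the learning rate is determined by --learning_rate_schedule_file. It points to the file data/learning_rate_schedule_classifier_casia.txt, whose content is: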

    # Learning rate schedule
    # Maps an epoch number to a learning rate
    0: 0.05
    60: 0.005
    80: 0.0005
    91: -1

- --weight_decay 5e-5: the regularization coefficient;

- --center_loss_factor 1e-2: the weight balancing center loss against softmax loss;

- --center_loss_alfa 0.9: an internal parameter of the center loss;
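The learning rate schedule file listed among the arguments maps a starting epoch to a learning rate. The sketch below shows one plausible way to read such a file and look up the rate for a given epoch. It is not the repository's `facenet.get_learning_rate_from_file`; the names `parse_schedule` and `learning_rate_for` are hypothetical, and the rule "use the last entry whose epoch is not greater than the current one" is an assumption.

```python
SCHEDULE_TEXT = """\
# Learning rate schedule
# Maps an epoch number to a learning rate
0: 0.05
60: 0.005
80: 0.0005
91: -1
"""

def parse_schedule(text):
    """Turn 'epoch: rate' lines into a sorted list of (epoch, rate) pairs."""
    entries = []
    for line in text.splitlines():
        line = line.split('#', 1)[0].strip()  # drop comments and blank lines
        if not line:
            continue
        epoch, rate = line.split(':')
        entries.append((int(epoch), float(rate)))
    return sorted(entries)

def learning_rate_for(entries, epoch):
    """Return the rate of the last entry whose start epoch is <= epoch."""
    rate = None
    for start, value in entries:
        if start <= epoch:
            rate = value
    return rate

schedule = parse_schedule(SCHEDULE_TEXT)
for epoch in (1, 59, 60, 80, 91):
    print(epoch, learning_rate_for(schedule, epoch))
```

In the train function of train_softmax.py, a learning rate less than or equal to 0 makes the function return False, which ends training, so the final -1 entry acts as a stop marker.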

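Besides the arguments used above there are many others. Some of the more important ones are:

- pretrained_model: models/20180408-102900, a pretrained model; starting from one speeds up training (commonly used for fine-tuning);

- batch_size: the batch size; the larger it is, the more memory is needed;

- random_rotate: use random rotation for data augmentation;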

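Because CASIA-WebFace is fairly large and takes a long time to train on, we train on a subset of it below. The output is as follows:

Here Epoch: [32][683/1000] means the 32nd epoch and the 683rd training batch within it; the default epoch_size is 1000, meaning one epoch consists of 1000 batches. Time is the time taken by this step, Lr is the learning rate, Loss is the loss of the current batch, Xent is the softmax loss, RegLoss is the sum of the regularization losses and the center loss, and Cl is the center loss (note that all these losses are averages, i.e. the summed loss of the current batch divided by batch_size);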
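To give the Cl column some intuition, here is a NumPy sketch of a center loss step under stated assumptions: the loss is the mean squared distance between each feature and its class center, and each center is then moved toward its features by a factor of (1 - alfa). This follows the general center loss idea and is not copied from `facenet.center_loss`. The function name `center_loss_step`, the toy data, and the placeholder softmax loss are all hypothetical.

```python
import numpy as np

def center_loss_step(features, labels, centers, alfa):
    """Return (loss, updated_centers) for one batch.

    loss is the mean squared distance between each feature and its class
    center; each center then moves toward its features by (1 - alfa).
    """
    centers_batch = centers[labels]                        # [batch, dim]
    loss = np.mean(np.square(features - centers_batch))
    updated = centers.copy()
    # np.subtract.at accumulates correctly when a label repeats in the batch
    np.subtract.at(updated, labels, (1.0 - alfa) * (centers_batch - features))
    return loss, updated

features = np.array([[1.0, 0.0], [0.0, 2.0]])  # made-up 2-D features
labels = np.array([0, 1])
centers = np.zeros((2, 2))

cl, centers = center_loss_step(features, labels, centers, alfa=0.9)
xent = 0.5                   # placeholder softmax loss, made up for the example
total = xent + 1e-2 * cl     # center_loss_factor = 1e-2, as in the command
print(cl, centers, total)
```

With alfa close to 1 the centers move slowly, which keeps them stable across noisy batches.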

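The log files and model files are generated:

Start Anaconda Prompt and first change to the parent directory of the log files (this step is required), then enter the following command: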

tensorboard --logdir E:\program\facenet\logs\20181007-12244

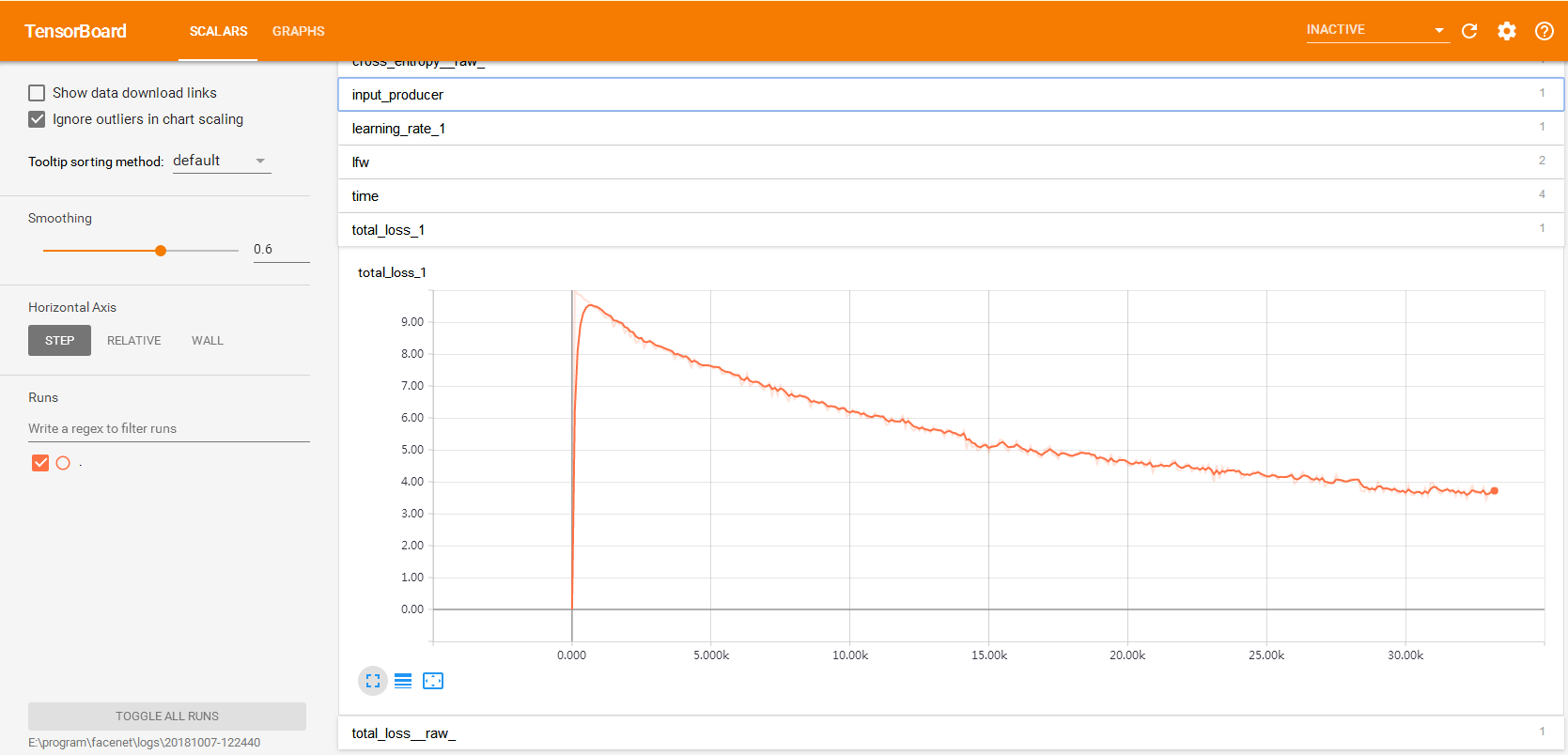

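Then open a browser and go to http://127.0.0.1:6006, where 127.0.0.1 is the local machine's address and 6006 is the port. Once the page opens, click SCALARS; the variable total_loss_1 created in our program is listed there. Click it to display the following:

The figure above shows how the loss changed during training. The horizontal axis is the number of iterations, about 33k here, because I stopped the program after 33 epochs and each epoch runs 1000 batches.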

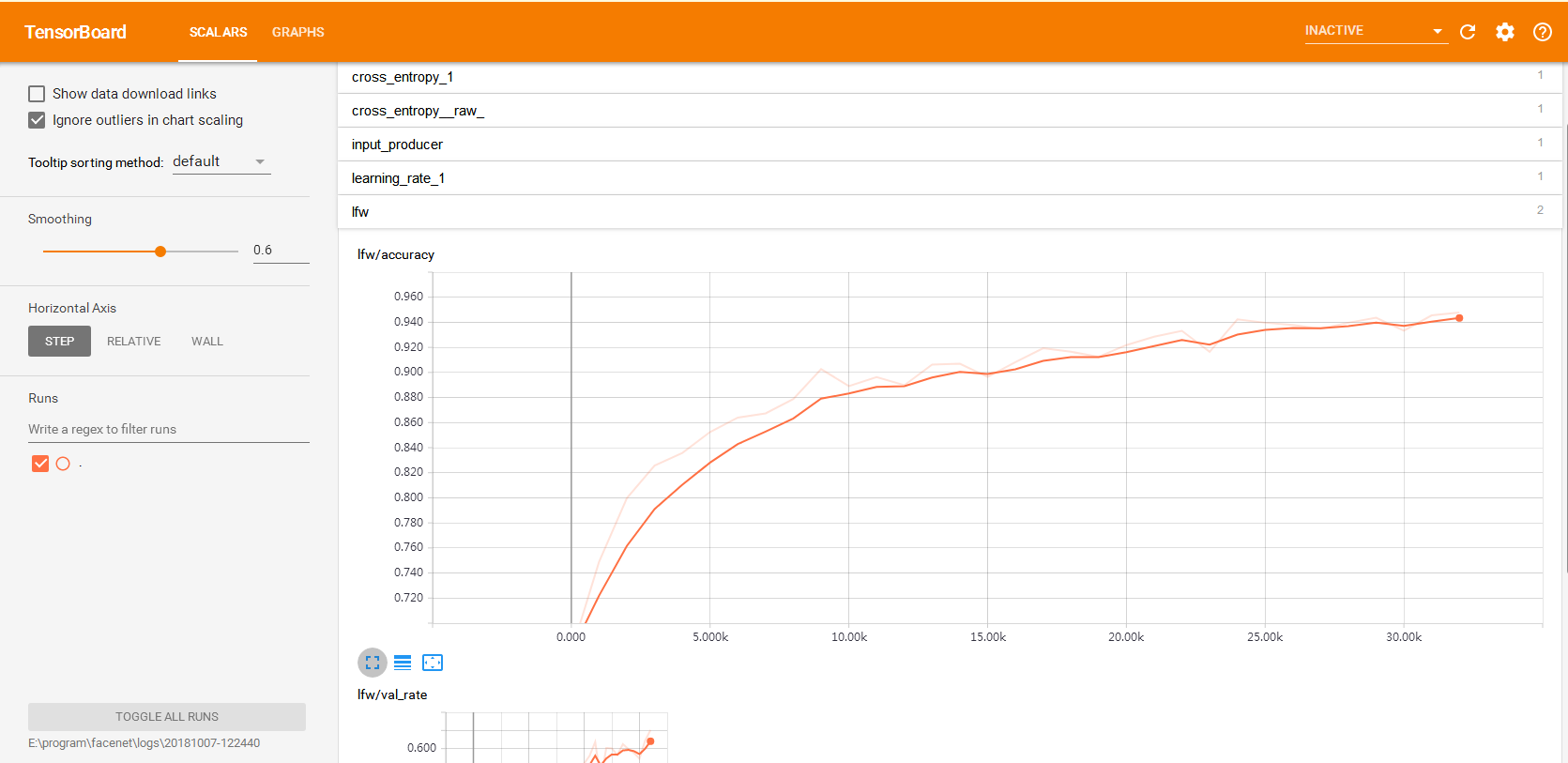

Correspondingly, a validation on the LFW dataset is run at the end of every epoch; the accuracy curve is shown below:

The smoothing slider on the left adjusts how much the scalar curves on the right are smoothed. You can also tick show data download links and then download the data.
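If you download the scalar data, a similar smoothing effect can be reproduced offline with an exponential moving average. The sketch below is a generic version of that technique; TensorBoard's own implementation may differ in details, and the function name `smooth` and the sample values are made up.

```python
def smooth(values, weight):
    """Exponential moving average; weight in [0, 1), larger means smoother."""
    smoothed = []
    last = values[0]
    for v in values:
        last = weight * last + (1.0 - weight) * v
        smoothed.append(last)
    return smoothed

losses = [4.0, 2.0, 3.0, 1.0]   # made-up loss values
curve = smooth(losses, weight=0.5)
print(curve)
```

A weight of 0 returns the raw values unchanged, while values close to 1 flatten the curve and make the long-term trend easier to see.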

The source code of train_softmax.py is as follows:

- """Training a face recognizer with TensorFlow using softmax cross entropy loss

- """

- # MIT License

- #

- # Copyright (c) 2016 David Sandberg

- #

- # Permission is hereby granted, free of charge, to any person obtaining a copy

- # of this software and associated documentation files (the "Software"), to deal

- # in the Software without restriction, including without limitation the rights

- # to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

- # copies of the Software, and to permit persons to whom the Software is

- # furnished to do so, subject to the following conditions:

- #

- # The above copyright notice and this permission notice shall be included in all

- # copies or substantial portions of the Software.

- #

- # THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

- # IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

- # FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

- # AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

- # LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

- # OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

- # SOFTWARE.

-

- from __future__ import absolute_import

- from __future__ import division

- from __future__ import print_function

-

- from datetime import datetime

- import os.path

- import time

- import sys

- import random

- import tensorflow as tf

- import numpy as np

- import importlib

- import argparse

- import facenet

- import lfw

- import h5py

- import math

- import tensorflow.contrib.slim as slim

- from tensorflow.python.ops import data_flow_ops

- from tensorflow.python.framework import ops

- from tensorflow.python.ops import array_ops

-

- def main(args):

- # Import the CNN network module

- network = importlib.import_module(args.model_def)

- image_size = (args.image_size, args.image_size)

-

- # Current time, used as the subdirectory name

- subdir = datetime.strftime(datetime.now(), '%Y%m%d-%H%M%S')

- # Path of the log directory

- log_dir = os.path.join(os.path.expanduser(args.logs_base_dir), subdir)

- if not os.path.isdir(log_dir): # Create the log directory if it doesn't exist

- os.makedirs(log_dir)

- # Path of the model directory

- model_dir = os.path.join(os.path.expanduser(args.models_base_dir), subdir)

- if not os.path.isdir(model_dir): # Create the model directory if it doesn't exist

- os.makedirs(model_dir)

-

- stat_file_name = os.path.join(log_dir, 'stat.h5')

-

- # Write arguments to a text file

- facenet.write_arguments_to_file(args, os.path.join(log_dir, 'arguments.txt'))

-

- # Store some git revision info in a text file in the log directory

- src_path,_ = os.path.split(os.path.realpath(__file__))

- facenet.store_revision_info(src_path, log_dir, ' '.join(sys.argv))

-

- np.random.seed(seed=args.seed)

- random.seed(args.seed)

- # Prepare the training set: collect each class name and the absolute paths of all images in that class

- dataset = facenet.get_dataset(args.data_dir)

- if args.filter_filename:

- dataset = filter_dataset(dataset, os.path.expanduser(args.filter_filename),

- args.filter_percentile, args.filter_min_nrof_images_per_class)

-

- if args.validation_set_split_ratio>0.0:

- train_set, val_set = facenet.split_dataset(dataset, args.validation_set_split_ratio, args.min_nrof_val_images_per_class, 'SPLIT_IMAGES')

- else:

- train_set, val_set = dataset, []

-

- # Number of classes; each person is one class

- nrof_classes = len(train_set)

-

- print('Model directory: %s' % model_dir)

- print('Log directory: %s' % log_dir)

- # Was a pretrained model specified?

- pretrained_model = None

- if args.pretrained_model:

- pretrained_model = os.path.expanduser(args.pretrained_model)

- print('Pre-trained model: %s' % pretrained_model)

- # Was an LFW directory specified? It is used for testing

- if args.lfw_dir:

- print('LFW directory: %s' % args.lfw_dir)

- # Read the file containing the pairs used for testing

- pairs = lfw.read_pairs(os.path.expanduser(args.lfw_pairs))

- # Get the paths for the corresponding images

- lfw_paths, actual_issame = lfw.get_paths(os.path.expanduser(args.lfw_dir), pairs)

-

- with tf.Graph().as_default():

- tf.set_random_seed(args.seed)

- # Training step counter

- global_step = tf.Variable(0, trainable=False)

-

- # Get a list of image paths and their labels

- # image_list: list in which each element is the path of one image

- # label_list: list in which each element is the label of one image, encoded as 0, 1, 2, ...

- image_list, label_list = facenet.get_image_paths_and_labels(train_set)

- assert len(image_list)>0, 'The training set should not be empty'

-

- val_image_list, val_label_list = facenet.get_image_paths_and_labels(val_set)

-

- # Create a queue that produces indices into the image_list and label_list

- labels = ops.convert_to_tensor(label_list, dtype=tf.int32)

- # Number of images

- range_size = array_ops.shape(labels)[0]

- # Create an index queue that produces the values 0 to range_size-1

- index_queue = tf.train.range_input_producer(range_size, num_epochs=None,

- shuffle=True, seed=None, capacity=32)

- # Dequeue args.batch_size*args.epoch_size elements at a time, i.e. the number of samples in one epoch

- index_dequeue_op = index_queue.dequeue_many(args.batch_size*args.epoch_size, 'index_dequeue')

-

- # Define placeholders

- learning_rate_placeholder = tf.placeholder(tf.float32, name='learning_rate')

- batch_size_placeholder = tf.placeholder(tf.int32, name='batch_size')

- phase_train_placeholder = tf.placeholder(tf.bool, name='phase_train')

- image_paths_placeholder = tf.placeholder(tf.string, shape=(None,1), name='image_paths')

- labels_placeholder = tf.placeholder(tf.int32, shape=(None,1), name='labels')

- control_placeholder = tf.placeholder(tf.int32, shape=(None,1), name='control')

-

- # Create a FIFO queue (a data-flow op); each item holds an input image path, its label and a control value. shapes gives the shape of each component of an item

- nrof_preprocess_threads = 4

- input_queue = data_flow_ops.FIFOQueue(capacity=2000000,

- dtypes=[tf.string, tf.int32, tf.int32],

- shapes=[(1,), (1,), (1,)],

- shared_name=None, name=None)

- # Build the enqueue op

- enqueue_op = input_queue.enqueue_many([image_paths_placeholder, labels_placeholder, control_placeholder], name='enqueue_op')

- # Build the dequeue op, which yields one batch of data per training step

- image_batch, label_batch = facenet.create_input_pipeline(input_queue, image_size, nrof_preprocess_threads, batch_size_placeholder)

-

- # Create named copies of the tensors (compare.py later looks up 'input' as input:0)

- image_batch = tf.identity(image_batch, 'image_batch')

- image_batch = tf.identity(image_batch, 'input')

- label_batch = tf.identity(label_batch, 'label_batch')

-

- print('Number of classes in training set: %d' % nrof_classes)

- print('Number of examples in training set: %d' % len(image_list))

-

- print('Number of classes in validation set: %d' % len(val_set))

- print('Number of examples in validation set: %d' % len(val_image_list))

-

- print('Building training graph')

-

- # Build the inference graph

- # Build the CNN; the last layer outputs prelogits: [batch_size, args.embedding_size] (e.g. 128)

- prelogits, _ = network.inference(image_batch, args.keep_probability,

- phase_train=phase_train_placeholder, bottleneck_layer_size=args.embedding_size,

- weight_decay=args.weight_decay)

- # Per-class logits (unnormalized scores): [batch_size, number of people]

- logits = slim.fully_connected(prelogits, len(train_set), activation_fn=None,

- weights_initializer=slim.initializers.xavier_initializer(),

- weights_regularizer=slim.l2_regularizer(args.weight_decay),

- scope='Logits', reuse=False)

-

- # L2-normalize each row: compute the row's L2 norm, then divide every element of the row by it

- embeddings = tf.nn.l2_normalize(prelogits, 1, 1e-10, name='embeddings')

-

- # Norm for the prelogits

- eps = 1e-4

- # By default: take the absolute value of prelogits, compute the 1-norm along axis=1, then average over the batch

- prelogits_norm = tf.reduce_mean(tf.norm(tf.abs(prelogits)+eps, ord=args.prelogits_norm_p, axis=1))

- # Add prelogits_norm * args.prelogits_norm_loss_factor to the tf.GraphKeys.REGULARIZATION_LOSSES collection

- tf.add_to_collection(tf.GraphKeys.REGULARIZATION_LOSSES, prelogits_norm * args.prelogits_norm_loss_factor)

-

- # Add center loss: compute it and append it to the tf.GraphKeys.REGULARIZATION_LOSSES collection

- prelogits_center_loss, _ = facenet.center_loss(prelogits, label_batch, args.center_loss_alfa, nrof_classes)

- tf.add_to_collection(tf.GraphKeys.REGULARIZATION_LOSSES, prelogits_center_loss * args.center_loss_factor)

-

- # Exponential learning-rate decay

- learning_rate = tf.train.exponential_decay(learning_rate_placeholder, global_step,

- args.learning_rate_decay_epochs*args.epoch_size, args.learning_rate_decay_factor, staircase=True)

- tf.summary.scalar('learning_rate', learning_rate)

-

- # Calculate the average cross entropy loss across the batch

- cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(

- labels=label_batch, logits=logits, name='cross_entropy_per_example')

- cross_entropy_mean = tf.reduce_mean(cross_entropy, name='cross_entropy')

- # Add the cross-entropy loss to the 'losses' collection

- tf.add_to_collection('losses', cross_entropy_mean)

-

- # Compute accuracy; correct_prediction holds one 0/1 value per example in the batch

- correct_prediction = tf.cast(tf.equal(tf.argmax(logits, 1), tf.cast(label_batch, tf.int64)), tf.float32)

- accuracy = tf.reduce_mean(correct_prediction)

-

- # Calculate the total losses https://blog.csdn.net/uestc_c2_403/article/details/72415791

- regularization_losses = tf.get_collection(tf.GraphKeys.REGULARIZATION_LOSSES)

- total_loss = tf.add_n([cross_entropy_mean] + regularization_losses, name='total_loss')

-

- # Build a Graph that trains the model with one batch of examples and updates the model parameters

- train_op = facenet.train(total_loss, global_step, args.optimizer,

- learning_rate, args.moving_average_decay, tf.global_variables(), args.log_histograms)

-

- # Create a saver

- saver = tf.train.Saver(tf.trainable_variables(), max_to_keep=3)

-

- # Build the summary operation based on the TF collection of Summaries.

- summary_op = tf.summary.merge_all()

-

- # Start running operations on the Graph.

- gpu_options = tf.GPUOptions(per_process_gpu_memory_fraction=args.gpu_memory_fraction)

- sess = tf.Session(config=tf.ConfigProto(gpu_options=gpu_options, log_device_placement=False))

- sess.run(tf.global_variables_initializer())

- sess.run(tf.local_variables_initializer())

- summary_writer = tf.summary.FileWriter(log_dir, sess.graph)

- # Create a coordinator to manage the threads

- coord = tf.train.Coordinator()

- # The queues are only filled and data is only read once start_queue_runners has been called

- tf.train.start_queue_runners(coord=coord, sess=sess)

-

- # Start executing the graph

- with sess.as_default():

- # Load the pretrained model

- if pretrained_model:

- print('Restoring pretrained model: %s' % pretrained_model)

- saver.restore(sess, pretrained_model)

-

- # Training and validation loop

- print('Running training')

- nrof_steps = args.max_nrof_epochs*args.epoch_size

- nrof_val_samples = int(math.ceil(args.max_nrof_epochs / args.validate_every_n_epochs)) # Validate every validate_every_n_epochs as well as in the last epoch

- stat = {

- 'loss': np.zeros((nrof_steps,), np.float32),

- 'center_loss': np.zeros((nrof_steps,), np.float32),

- 'reg_loss': np.zeros((nrof_steps,), np.float32),

- 'xent_loss': np.zeros((nrof_steps,), np.float32),

- 'prelogits_norm': np.zeros((nrof_steps,), np.float32),

- 'accuracy': np.zeros((nrof_steps,), np.float32),

- 'val_loss': np.zeros((nrof_val_samples,), np.float32),

- 'val_xent_loss': np.zeros((nrof_val_samples,), np.float32),

- 'val_accuracy': np.zeros((nrof_val_samples,), np.float32),

- 'lfw_accuracy': np.zeros((args.max_nrof_epochs,), np.float32),

- 'lfw_valrate': np.zeros((args.max_nrof_epochs,), np.float32),

- 'learning_rate': np.zeros((args.max_nrof_epochs,), np.float32),

- 'time_train': np.zeros((args.max_nrof_epochs,), np.float32),

- 'time_validate': np.zeros((args.max_nrof_epochs,), np.float32),

- 'time_evaluate': np.zeros((args.max_nrof_epochs,), np.float32),

- 'prelogits_hist': np.zeros((args.max_nrof_epochs, 1000), np.float32),

- }

- # Iterate for max_nrof_epochs epochs

- for epoch in range(1,args.max_nrof_epochs+1):

- step = sess.run(global_step, feed_dict=None)

- # Train for one epoch

- t = time.time()

- cont = train(args, sess, epoch, image_list, label_list, index_dequeue_op, enqueue_op, image_paths_placeholder, labels_placeholder,

- learning_rate_placeholder, phase_train_placeholder, batch_size_placeholder, control_placeholder, global_step,

- total_loss, train_op, summary_op, summary_writer, regularization_losses, args.learning_rate_schedule_file,

- stat, cross_entropy_mean, accuracy, learning_rate,

- prelogits, prelogits_center_loss, args.random_rotate, args.random_crop, args.random_flip, prelogits_norm, args.prelogits_hist_max, args.use_fixed_image_standardization)

- stat['time_train'][epoch-1] = time.time() - t

-

- if not cont:

- break

-

- t = time.time()

- if len(val_image_list)>0 and ((epoch-1) % args.validate_every_n_epochs == args.validate_every_n_epochs-1 or epoch==args.max_nrof_epochs):

- validate(args, sess, epoch, val_image_list, val_label_list, enqueue_op, image_paths_placeholder, labels_placeholder, control_placeholder,

- phase_train_placeholder, batch_size_placeholder,

- stat, total_loss, regularization_losses, cross_entropy_mean, accuracy, args.validate_every_n_epochs, args.use_fixed_image_standardization)

- stat['time_validate'][epoch-1] = time.time() - t

-

- # Save variables and the metagraph if it doesn't exist already

- save_variables_and_metagraph(sess, saver, summary_writer, model_dir, subdir, epoch)

-

- # Evaluate on LFW

- t = time.time()

- if args.lfw_dir:

- evaluate(sess, enqueue_op, image_paths_placeholder, labels_placeholder, phase_train_placeholder, batch_size_placeholder, control_placeholder,

- embeddings, label_batch, lfw_paths, actual_issame, args.lfw_batch_size, args.lfw_nrof_folds, log_dir, step, summary_writer, stat, epoch,

- args.lfw_distance_metric, args.lfw_subtract_mean, args.lfw_use_flipped_images, args.use_fixed_image_standardization)

- stat['time_evaluate'][epoch-1] = time.time() - t

-

- print('Saving statistics')

- with h5py.File(stat_file_name, 'w') as f:

- for key, value in stat.items():

- f.create_dataset(key, data=value)

-

- return model_dir

-

- def find_threshold(var, percentile):

- hist, bin_edges = np.histogram(var, 100)

- cdf = np.float32(np.cumsum(hist)) / np.sum(hist)

- bin_centers = (bin_edges[:-1]+bin_edges[1:])/2

- #plt.plot(bin_centers, cdf)

- threshold = np.interp(percentile*0.01, cdf, bin_centers)

- return threshold

-

- def filter_dataset(dataset, data_filename, percentile, min_nrof_images_per_class):

- with h5py.File(data_filename,'r') as f:

- distance_to_center = np.array(f.get('distance_to_center'))

- label_list = np.array(f.get('label_list'))

- image_list = np.array(f.get('image_list'))

- distance_to_center_threshold = find_threshold(distance_to_center, percentile)

- indices = np.where(distance_to_center>=distance_to_center_threshold)[0]

- filtered_dataset = dataset

- removelist = []

- for i in indices:

- label = label_list[i]

- image = image_list[i]

- if image in filtered_dataset[label].image_paths:

- filtered_dataset[label].image_paths.remove(image)

- if len(filtered_dataset[label].image_paths)<min_nrof_images_per_class:

- removelist.append(label)

-

- ix = sorted(list(set(removelist)), reverse=True)

- for i in ix:

- del(filtered_dataset[i])

-

- return filtered_dataset

-

- def train(args, sess, epoch, image_list, label_list, index_dequeue_op, enqueue_op, image_paths_placeholder, labels_placeholder,

- learning_rate_placeholder, phase_train_placeholder, batch_size_placeholder, control_placeholder, step,

- loss, train_op, summary_op, summary_writer, reg_losses, learning_rate_schedule_file,

- stat, cross_entropy_mean, accuracy,

- learning_rate, prelogits, prelogits_center_loss, random_rotate, random_crop, random_flip, prelogits_norm, prelogits_hist_max, use_fixed_image_standardization):

- batch_number = 0

-

- if args.learning_rate>0.0:

- lr = args.learning_rate

- else:

- lr = facenet.get_learning_rate_from_file(learning_rate_schedule_file, epoch)

-

- if lr<=0:

- return False

-

- # One epoch: batch_size*epoch_size samples

- index_epoch = sess.run(index_dequeue_op)

- label_epoch = np.array(label_list)[index_epoch]

- image_epoch = np.array(image_list)[index_epoch]

-

- # Enqueue one epoch of image paths and labels

- labels_array = np.expand_dims(np.array(label_epoch),1)

- image_paths_array = np.expand_dims(np.array(image_epoch),1)

- control_value = facenet.RANDOM_ROTATE * random_rotate + facenet.RANDOM_CROP * random_crop + facenet.RANDOM_FLIP * random_flip + facenet.FIXED_STANDARDIZATION * use_fixed_image_standardization

- control_array = np.ones_like(labels_array) * control_value

- sess.run(enqueue_op, {image_paths_placeholder: image_paths_array, labels_placeholder: labels_array, control_placeholder: control_array})

-

- # Training loop for one epoch

- train_time = 0

- while batch_number < args.epoch_size:

- start_time = time.time()

- feed_dict = {learning_rate_placeholder: lr, phase_train_placeholder:True, batch_size_placeholder:args.batch_size}

- tensor_list = [loss, train_op, step, reg_losses, prelogits, cross_entropy_mean, learning_rate, prelogits_norm, accuracy, prelogits_center_loss]

- if batch_number % 100 == 0:

- loss_, _, step_, reg_losses_, prelogits_, cross_entropy_mean_, lr_, prelogits_norm_, accuracy_, center_loss_, summary_str = sess.run(tensor_list + [summary_op], feed_dict=feed_dict)

- summary_writer.add_summary(summary_str, global_step=step_)

- else:

- loss_, _, step_, reg_losses_, prelogits_, cross_entropy_mean_, lr_, prelogits_norm_, accuracy_, center_loss_ = sess.run(tensor_list, feed_dict=feed_dict)

-

- duration = time.time() - start_time

- stat['loss'][step_-1] = loss_

- stat['center_loss'][step_-1] = center_loss_

- stat['reg_loss'][step_-1] = np.sum(reg_losses_)

- stat['xent_loss'][step_-1] = cross_entropy_mean_

- stat['prelogits_norm'][step_-1] = prelogits_norm_

- stat['learning_rate'][epoch-1] = lr_

- stat['accuracy'][step_-1] = accuracy_

- stat['prelogits_hist'][epoch-1,:] += np.histogram(np.minimum(np.abs(prelogits_), prelogits_hist_max), bins=1000, range=(0.0, prelogits_hist_max))[0]

-

- duration = time.time() - start_time

- print('Epoch: [%d][%d/%d]\tTime %.3f\tLoss %2.3f\tXent %2.3f\tRegLoss %2.3f\tAccuracy %2.3f\tLr %2.5f\tCl %2.3f' %

- (epoch, batch_number+1, args.epoch_size, duration, loss_, cross_entropy_mean_, np.sum(reg_losses_), accuracy_, lr_, center_loss_))

- batch_number += 1

- train_time += duration

- # Add validation loss and accuracy to summary

- summary = tf.Summary()

- #pylint: disable=maybe-no-member

- summary.value.add(tag='time/total', simple_value=train_time)

- summary_writer.add_summary(summary, global_step=step_)

- return True

-

- def validate(args, sess, epoch, image_list, label_list, enqueue_op, image_paths_placeholder, labels_placeholder, control_placeholder,

- phase_train_placeholder, batch_size_placeholder,

- stat, loss, regularization_losses, cross_entropy_mean, accuracy, validate_every_n_epochs, use_fixed_image_standardization):

-

- print('Running forward pass on validation set')

-

- nrof_batches = len(label_list) // args.lfw_batch_size

- nrof_images = nrof_batches * args.lfw_batch_size

-

- # Enqueue one epoch of image paths and labels

- labels_array = np.expand_dims(np.array(label_list[:nrof_images]),1)

- image_paths_array = np.expand_dims(np.array(image_list[:nrof_images]),1)

- control_array = np.ones_like(labels_array, np.int32)*facenet.FIXED_STANDARDIZATION * use_fixed_image_standardization

- sess.run(enqueue_op, {image_paths_placeholder: image_paths_array, labels_placeholder: labels_array, control_placeholder: control_array})

-

- loss_array = np.zeros((nrof_batches,), np.float32)

- xent_array = np.zeros((nrof_batches,), np.float32)

- accuracy_array = np.zeros((nrof_batches,), np.float32)

-

- # Training loop

- start_time = time.time()

- for i in range(nrof_batches):

- feed_dict = {phase_train_placeholder:False, batch_size_placeholder:args.lfw_batch_size}

- loss_, cross_entropy_mean_, accuracy_ = sess.run([loss, cross_entropy_mean, accuracy], feed_dict=feed_dict)

- loss_array[i], xent_array[i], accuracy_array[i] = (loss_, cross_entropy_mean_, accuracy_)

- if i % 10 == 9:

- print('.', end='')

- sys.stdout.flush()

- print('')

-

- duration = time.time() - start_time

-

- val_index = (epoch-1)//validate_every_n_epochs

- stat['val_loss'][val_index] = np.mean(loss_array)

- stat['val_xent_loss'][val_index] = np.mean(xent_array)

- stat['val_accuracy'][val_index] = np.mean(accuracy_array)

-

- print('Validation Epoch: %d\tTime %.3f\tLoss %2.3f\tXent %2.3f\tAccuracy %2.3f' %

- (epoch, duration, np.mean(loss_array), np.mean(xent_array), np.mean(accuracy_array)))

-

-

- def evaluate(sess, enqueue_op, image_paths_placeholder, labels_placeholder, phase_train_placeholder, batch_size_placeholder, control_placeholder,

- embeddings, labels, image_paths, actual_issame, batch_size, nrof_folds, log_dir, step, summary_writer, stat, epoch, distance_metric, subtract_mean, use_flipped_images, use_fixed_image_standardization):

- start_time = time.time()

- # Run forward pass to calculate embeddings

- print('Runnning forward pass on LFW images')

-

- # Enqueue one epoch of image paths and labels

- nrof_embeddings = len(actual_issame)*2 # nrof_pairs * nrof_images_per_pair

- nrof_flips = 2 if use_flipped_images else 1

- nrof_images = nrof_embeddings * nrof_flips

- labels_array = np.expand_dims(np.arange(0,nrof_images),1)

- image_paths_array = np.expand_dims(np.repeat(np.array(image_paths),nrof_flips),1)

- control_array = np.zeros_like(labels_array, np.int32)

- if use_fixed_image_standardization:

- control_array += np.ones_like(labels_array)*facenet.FIXED_STANDARDIZATION

- if use_flipped_images:

- # Flip every second image

- control_array += (labels_array % 2)*facenet.FLIP

- sess.run(enqueue_op, {image_paths_placeholder: image_paths_array, labels_placeholder: labels_array, control_placeholder: control_array})

-

- embedding_size = int(embeddings.get_shape()[1])

- assert nrof_images % batch_size == 0, 'The number of LFW images must be an integer multiple of the LFW batch size'

- nrof_batches = nrof_images // batch_size

- emb_array = np.zeros((nrof_images, embedding_size))

- lab_array = np.zeros((nrof_images,))

- for i in range(nrof_batches):

- feed_dict = {phase_train_placeholder:False, batch_size_placeholder:batch_size}

- emb, lab = sess.run([embeddings, labels], feed_dict=feed_dict)

- lab_array[lab] = lab

- emb_array[lab, :] = emb

- if i % 10 == 9:

- print('.', end='')

- sys.stdout.flush()

- print('')

- embeddings = np.zeros((nrof_embeddings, embedding_size*nrof_flips))

- if use_flipped_images:

- # Concatenate embeddings for flipped and non flipped version of the images

- embeddings[:,:embedding_size] = emb_array[0::2,:]

- embeddings[:,embedding_size:] = emb_array[1::2,:]

- else:

- embeddings = emb_array

-

- assert np.array_equal(lab_array, np.arange(nrof_images))==True, 'Wrong labels used for evaluation, possibly caused by training examples left in the input pipeline'

- _, _, accuracy, val, val_std, far = lfw.evaluate(embeddings, actual_issame, nrof_folds=nrof_folds, distance_metric=distance_metric, subtract_mean=subtract_mean)

-

- print('Accuracy: %2.5f+-%2.5f' % (np.mean(accuracy), np.std(accuracy)))

- print('Validation rate: %2.5f+-%2.5f @ FAR=%2.5f' % (val, val_std, far))

- lfw_time = time.time() - start_time

- # Add validation loss and accuracy to summary

- summary = tf.Summary()

- #pylint: disable=maybe-no-member

- summary.value.add(tag='lfw/accuracy', simple_value=np.mean(accuracy))

- summary.value.add(tag='lfw/val_rate', simple_value=val)

- summary.value.add(tag='time/lfw', simple_value=lfw_time)

- summary_writer.add_summary(summary, step)

- with open(os.path.join(log_dir,'lfw_result.txt'),'at') as f:

- f.write('%d\t%.5f\t%.5f\n' % (step, np.mean(accuracy), val))

- stat['lfw_accuracy'][epoch-1] = np.mean(accuracy)

- stat['lfw_valrate'][epoch-1] = val

-
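Two details of `evaluate` are easier to follow on a toy example: how the per-image control flags are combined, and how the embeddings of an image and its flipped copy are concatenated. The sketch below reproduces both steps with NumPy only. The numeric flag values are assumptions (the listing only uses the names `facenet.FIXED_STANDARDIZATION` and `facenet.FLIP`), and `build_control_array`, `merge_flipped`, and the file names are hypothetical.

```python
import numpy as np

# Assumed flag values: any distinct powers of two behave the same way here.
FIXED_STANDARDIZATION = 8
FLIP = 16

def build_control_array(nrof_images, use_fixed_image_standardization, use_flipped_images):
    """Build one integer of preprocessing flags per enqueued image."""
    labels_array = np.expand_dims(np.arange(0, nrof_images), 1)
    control_array = np.zeros_like(labels_array, np.int32)
    if use_fixed_image_standardization:
        control_array += np.ones_like(labels_array) * FIXED_STANDARDIZATION
    if use_flipped_images:
        # Odd labels mark the second copy of each image, which gets flipped.
        control_array += (labels_array % 2) * FLIP
    return labels_array, control_array

def merge_flipped(emb_array, embedding_size):
    """Concatenate the embeddings of each image and its flipped copy."""
    nrof_embeddings = emb_array.shape[0] // 2
    merged = np.zeros((nrof_embeddings, embedding_size * 2))
    merged[:, :embedding_size] = emb_array[0::2, :]   # non-flipped copies
    merged[:, embedding_size:] = emb_array[1::2, :]   # flipped copies
    return merged

# Two hypothetical image paths, each repeated so the second copy is the flipped one.
image_paths = ['a.png', 'b.png']
nrof_flips = 2
nrof_images = len(image_paths) * nrof_flips
labels_array, control_array = build_control_array(nrof_images, True, True)
image_paths_array = np.expand_dims(np.repeat(np.array(image_paths), nrof_flips), 1)

# Stand-in embeddings: row i is filled with the value i.
embedding_size = 3
emb_array = np.repeat(np.arange(nrof_images, dtype=np.float64)[:, None], embedding_size, axis=1)
merged = merge_flipped(emb_array, embedding_size)
```

Because `np.repeat` places the two copies of each path next to each other, even rows of `emb_array` belong to the original images and odd rows to the flipped ones, which is why the listing slices with `0::2` and `1::2`.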

- def save_variables_and_metagraph(sess, saver, summary_writer, model_dir, model_name, step):

- # Save the model checkpoint

- print('Saving variables')

- start_time = time.time()

- checkpoint_path = os.path.join(model_dir, 'model-%s.ckpt' % model_name)

- saver.save(sess, checkpoint_path, global_step=step, write_meta_graph=False)

- save_time_variables = time.time() - start_time

- print('Variables saved in %.2f seconds' % save_time_variables)

- metagraph_filename = os.path.join(model_dir, 'model-%s.meta' % model_name)

- save_time_metagraph = 0

- if not os.path.exists(metagraph_filename):

- print('Saving metagraph')

- start_time = time.time()

- saver.export_meta_graph(metagraph_filename)

- save_time_metagraph = time.time() - start_time

- print('Metagraph saved in %.2f seconds' % save_time_metagraph)

- summary = tf.Summary()

- #pylint: disable=maybe-no-member

- summary.value.add(tag='time/save_variables', simple_value=save_time_variables)

- summary.value.add(tag='time/save_metagraph', simple_value=save_time_metagraph)

- summary_writer.add_summary(summary, step)

-

-

- def parse_arguments(argv):

- '''

- Parse the command-line arguments.

- '''

- parser = argparse.ArgumentParser()

-

- # Directory where the log files are written

- parser.add_argument('--logs_base_dir', type=str,

- help='Directory where to write event logs.', default='~/logs/facenet')

- # Directory where the model files are saved

- parser.add_argument('--models_base_dir', type=str,

- help='Directory where to write trained models and checkpoints.', default='~/models/facenet')

- # Upper bound on the fraction of GPU memory the process may use

- parser.add_argument('--gpu_memory_fraction', type=float,

- help='Upper bound on the amount of GPU memory that will be used by the process.', default=1.0)

- # Pretrained model to load before training

- parser.add_argument('--pretrained_model', type=str,

- help='Load a pretrained model before training starts.')

- # Directory holding the face patches produced by MTCNN detection and alignment

- parser.add_argument('--data_dir', type=str,

- help='Path to the data directory containing aligned face patches.',

- default='~/datasets/casia/casia_maxpy_mtcnnalign_182_160')

- # Network architecture to use

- parser.add_argument('--model_def', type=str,

- help='Model definition. Points to a module containing the definition of the inference graph.', default='models.inception_resnet_v1')

- # Number of training epochs

- parser.add_argument('--max_nrof_epochs', type=int,

- help='Number of epochs to run.', default=500)

- # Batch size

- parser.add_argument('--batch_size', type=int,

- help='Number of images to process in a batch.', default=90)

- # Image size

- parser.add_argument('--image_size', type=int,

- help='Image size (height, width) in pixels.', default=160)

- # Number of batches in each epoch

- parser.add_argument('--epoch_size', type=int,

- help='Number of batches per epoch.', default=1000)

- # Dimensionality of the embedding

- parser.add_argument('--embedding_size', type=int,

- help='Dimensionality of the embedding.', default=128)

- # Random cropping of training images

- parser.add_argument('--random_crop',

- help='Performs random cropping of training images. If false, the center image_size pixels from the training images are used. ' +

- 'If the size of the images in the data directory is equal to image_size no cropping is performed', action='store_true')

- # Random horizontal flipping

- parser.add_argument('--random_flip',

- help='Performs random horizontal flipping of training images.', action='store_true')

- # Random rotation

- parser.add_argument('--random_rotate',

- help='Performs random rotations of training images.', action='store_true')

- parser.add_argument('--use_fixed_image_standardization',

- help='Performs fixed standardization of images.', action='store_true')

- # Dropout keep probability

- parser.add_argument('--keep_probability', type=float,

- help='Keep probability of dropout for the fully connected layer(s).', default=1.0)

- # L2 regularization (weight decay) coefficient

- parser.add_argument('--weight_decay', type=float,

- help='L2 weight regularization.', default=0.0)

- # Weight that balances the center loss against the softmax loss

- parser.add_argument('--center_loss_factor', type=float,

- help='Center loss factor.', default=0.0)

- # Update rate of the class centers used by the center loss

- parser.add_argument('--center_loss_alfa', type=float,

- help='Center update rate for center loss.', default=0.95)

- parser.add_argument('--prelogits_norm_loss_factor', type=float,

- help='Loss based on the norm of the activations in the prelogits layer.', default=0.0)

- parser.add_argument('--prelogits_norm_p', type=float,

- help='Norm to use for prelogits norm loss.', default=1.0)

- parser.add_argument('--prelogits_hist_max', type=float,

- help='The max value for the prelogits histogram.', default=10.0)

- # Optimizer

- parser.add_argument('--optimizer', type=str, choices=['ADAGRAD', 'ADADELTA', 'ADAM', 'RMSPROP', 'MOM'],

- help='The optimization algorithm to use', default='ADAGRAD')

- # Learning rate

- parser.add_argument('--learning_rate', type=float,

- help='Initial learning rate. If set to a negative value a learning rate ' +

- 'schedule can be specified in the file "learning_rate_schedule.txt"', default=0.1)

- parser.add_argument('--learning_rate_decay_epochs', type=int,

- help='Number of epochs between learning rate decay.', default=100)

- parser.add_argument('--learning_rate_decay_factor', type=float,

- help='Learning rate decay factor.', default=1.0)

- parser.add_argument('--moving_average_decay', type=float,

- help='Exponential decay for tracking of training parameters.', default=0.9999)

- parser.add_argument('--seed', type=int,

- help='Random seed.', default=666)

- parser.add_argument('--nrof_preprocess_threads', type=int,

- help='Number of preprocessing (data loading and augmentation) threads.', default=4)

- parser.add_argument('--log_histograms',

- help='Enables logging of weight/bias histograms in tensorboard.', action='store_true')

- parser.add_argument('--learning_rate_schedule_file', type=str,

- help='File containing the learning rate schedule that is used when learning_rate is set to -1.', default='data/learning_rate_schedule.txt')

- parser.add_argument('--filter_filename', type=str,

- help='File containing image data used for dataset filtering', default='')