Installing canal with Docker

Author: 羊村懒王 | 2024-04-11 08:55:28


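1. Create the folder and files

Inside a working directory (named canal in this walkthrough), create a folder named logs and three files: canal.properties, instance.properties, and docker-compose.yml.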
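The layout described above can also be created with a few lines of Python. This is only a sketch: the parent directory name `canal` is taken from the later step that changes into it, and the helper name `create_layout` is made up for illustration. The compose file is named `docker-compose.yml` here because that is one of the names `docker-compose` looks for by default.

```python
from pathlib import Path


def create_layout(base):
    """Hypothetical helper: create the logs folder and the three empty files."""
    base = Path(base)
    base.mkdir(parents=True, exist_ok=True)
    (base / "logs").mkdir(exist_ok=True)
    for name in ("canal.properties", "instance.properties", "docker-compose.yml"):
        # touch() creates the file if it is missing and leaves existing content alone
        (base / name).touch(exist_ok=True)
    return sorted(p.name for p in base.iterdir())


if __name__ == "__main__":
    print(create_layout("canal"))
```

The files start out empty; their contents are filled in from the listings in the following subsections.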

Modify canal.properties as follows:

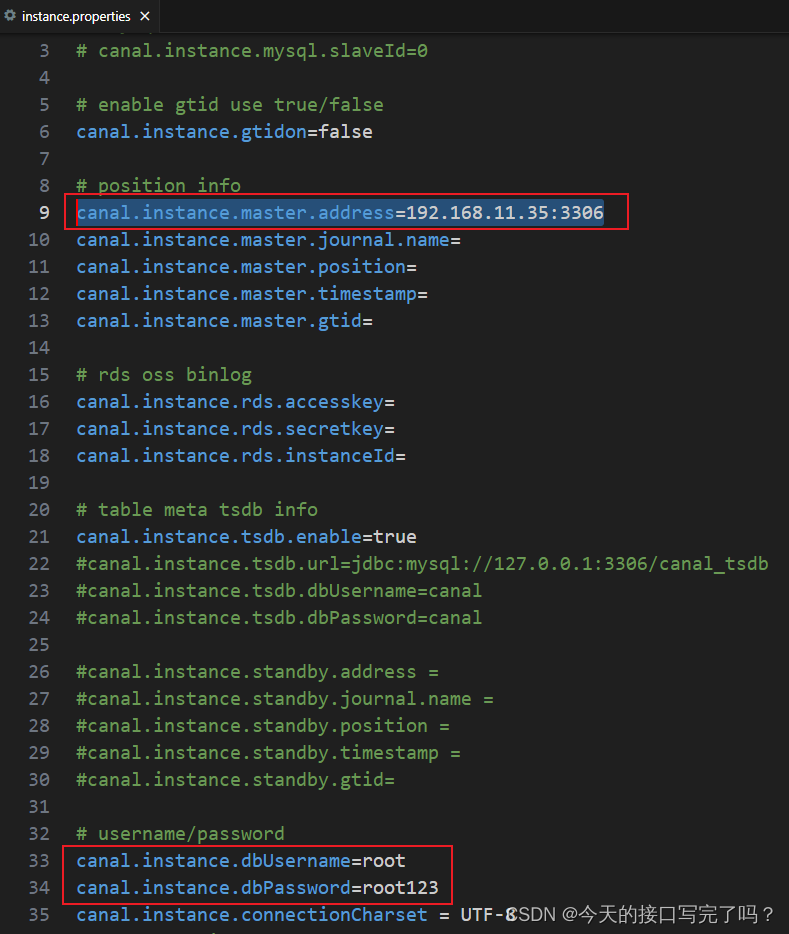

Modify instance.properties as well. The full contents of all three files are listed below.

1.1 canal.properties

    #################################################
    ######### common argument #############
    #################################################
    # tcp bind ip
    canal.ip =
    # register ip to zookeeper
    canal.register.ip =
    canal.port = 11111
    canal.metrics.pull.port = 11112
    # canal instance user/passwd
    # canal.user = canal
    # canal.passwd = E3619321C1A937C46A0D8BD1DAC39F93B27D4458

    # canal admin config
    #canal.admin.manager = 127.0.0.1:8089
    canal.admin.port = 11110
    canal.admin.user = admin
    canal.admin.passwd = 4ACFE3202A5FF5CF467898FC58AAB1D615029441
    # admin auto register
    #canal.admin.register.auto = true
    #canal.admin.register.cluster =
    #canal.admin.register.name =

    canal.zkServers =
    # flush data to zk
    canal.zookeeper.flush.period = 1000
    canal.withoutNetty = false
    # tcp, kafka, rocketMQ, rabbitMQ, pulsarMQ
    canal.serverMode = rabbitMQ
    # flush meta cursor/parse position to file
    canal.file.data.dir = ${canal.conf.dir}
    canal.file.flush.period = 1000
    ## memory store RingBuffer size, should be Math.pow(2,n)
    canal.instance.memory.buffer.size = 16384
    ## memory store RingBuffer used memory unit size , default 1kb
    canal.instance.memory.buffer.memunit = 1024
    ## memory store gets mode used MEMSIZE or ITEMSIZE
    canal.instance.memory.batch.mode = MEMSIZE
    canal.instance.memory.rawEntry = true

    ## detecting config
    canal.instance.detecting.enable = false
    #canal.instance.detecting.sql = insert into retl.xdual values(1,now()) on duplicate key update x=now()
    canal.instance.detecting.sql = select 1
    canal.instance.detecting.interval.time = 3
    canal.instance.detecting.retry.threshold = 3
    canal.instance.detecting.heartbeatHaEnable = false

    # support maximum transaction size, more than the size of the transaction will be cut into multiple transactions delivery
    canal.instance.transaction.size = 1024
    # mysql fallback connected to new master should fallback times
    canal.instance.fallbackIntervalInSeconds = 60

    # network config
    canal.instance.network.receiveBufferSize = 16384
    canal.instance.network.sendBufferSize = 16384
    canal.instance.network.soTimeout = 30

    # binlog filter config
    canal.instance.filter.druid.ddl = true
    canal.instance.filter.query.dcl = false
    canal.instance.filter.query.dml = false
    canal.instance.filter.query.ddl = false
    canal.instance.filter.table.error = false
    canal.instance.filter.rows = false
    canal.instance.filter.transaction.entry = false
    canal.instance.filter.dml.insert = false
    canal.instance.filter.dml.update = false
    canal.instance.filter.dml.delete = false

    # binlog format/image check
    canal.instance.binlog.format = ROW,STATEMENT,MIXED
    canal.instance.binlog.image = FULL,MINIMAL,NOBLOB

    # binlog ddl isolation
    canal.instance.get.ddl.isolation = false

    # parallel parser config
    canal.instance.parser.parallel = true
    ## concurrent thread number, default 60% available processors, suggest not to exceed Runtime.getRuntime().availableProcessors()
    #canal.instance.parser.parallelThreadSize = 16
    ## disruptor ringbuffer size, must be power of 2
    canal.instance.parser.parallelBufferSize = 256

    # table meta tsdb info
    canal.instance.tsdb.enable = true
    canal.instance.tsdb.dir = ${canal.file.data.dir:../conf}/${canal.instance.destination:}
    canal.instance.tsdb.url = jdbc:h2:${canal.instance.tsdb.dir}/h2;CACHE_SIZE=1000;MODE=MYSQL;
    canal.instance.tsdb.dbUsername = canal
    canal.instance.tsdb.dbPassword = canal
    # dump snapshot interval, default 24 hour
    canal.instance.tsdb.snapshot.interval = 24
    # purge snapshot expire , default 360 hour(15 days)
    canal.instance.tsdb.snapshot.expire = 360

    #################################################
    ######### destinations #############
    #################################################
    canal.destinations = example
    # conf root dir
    canal.conf.dir = ../conf
    # auto scan instance dir add/remove and start/stop instance
    canal.auto.scan = true
    canal.auto.scan.interval = 5
    # set this value to 'true' means that when binlog pos not found, skip to latest.
    # WARN: pls keep 'false' in production env, or if you know what you want.
    canal.auto.reset.latest.pos.mode = false

    canal.instance.tsdb.spring.xml = classpath:spring/tsdb/h2-tsdb.xml
    #canal.instance.tsdb.spring.xml = classpath:spring/tsdb/mysql-tsdb.xml

    canal.instance.global.mode = spring
    canal.instance.global.lazy = false
    canal.instance.global.manager.address = ${canal.admin.manager}
    #canal.instance.global.spring.xml = classpath:spring/memory-instance.xml
    canal.instance.global.spring.xml = classpath:spring/file-instance.xml
    #canal.instance.global.spring.xml = classpath:spring/default-instance.xml

    ##################################################
    ######### MQ Properties #############
    ##################################################
    # aliyun ak/sk , support rds/mq
    canal.aliyun.accessKey =
    canal.aliyun.secretKey =
    canal.aliyun.uid=

    canal.mq.flatMessage = true
    canal.mq.canalBatchSize = 50
    canal.mq.canalGetTimeout = 100
    # Set this value to "cloud", if you want open message trace feature in aliyun.
    canal.mq.accessChannel = local

    canal.mq.database.hash = true
    canal.mq.send.thread.size = 30
    canal.mq.build.thread.size = 8

    ##################################################
    ######### Kafka #############
    ##################################################
    kafka.bootstrap.servers = 127.0.0.1:9092
    kafka.acks = all
    kafka.compression.type = none
    kafka.batch.size = 16384
    kafka.linger.ms = 1
    kafka.max.request.size = 1048576
    kafka.buffer.memory = 33554432
    kafka.max.in.flight.requests.per.connection = 1
    kafka.retries = 0

    kafka.kerberos.enable = false
    kafka.kerberos.krb5.file = ../conf/kerberos/krb5.conf
    kafka.kerberos.jaas.file = ../conf/kerberos/jaas.conf

    # sasl demo
    # kafka.sasl.jaas.config = org.apache.kafka.common.security.scram.ScramLoginModule required \\n username=\"alice\" \\npassword="alice-secret\";
    # kafka.sasl.mechanism = SCRAM-SHA-512
    # kafka.security.protocol = SASL_PLAINTEXT

    ##################################################
    ######### RocketMQ #############
    ##################################################
    rocketmq.producer.group = test
    rocketmq.enable.message.trace = false
    rocketmq.customized.trace.topic =
    rocketmq.namespace =
    rocketmq.namesrv.addr = 127.0.0.1:9876
    rocketmq.retry.times.when.send.failed = 0
    rocketmq.vip.channel.enabled = false
    rocketmq.tag =

    ##################################################
    ######### RabbitMQ #############
    ##################################################
    rabbitmq.host = 192.168.11.44:5672
    rabbitmq.virtual.host = /
    rabbitmq.exchange = e1
    rabbitmq.username = guest
    rabbitmq.password = guest
    rabbitmq.deliveryMode = 2

    ##################################################
    ######### Pulsar #############
    ##################################################
    pulsarmq.serverUrl =
    pulsarmq.roleToken =
    pulsarmq.topicTenantPrefix =
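In the listing above, the settings that steer canal toward RabbitMQ are `canal.serverMode` and the `rabbitmq.*` keys. As a quick sanity check before starting the container, a small script along the following lines could confirm they are filled in. It is a rough sketch, not a full `.properties` parser (no line continuations or escape handling), and the function names are invented for this example.

```python
def parse_properties(text):
    """Parse simple `key = value` lines, skipping blank lines and # comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


# Keys from the RabbitMQ section of canal.properties that this article fills in.
REQUIRED = (
    "rabbitmq.host",
    "rabbitmq.virtual.host",
    "rabbitmq.exchange",
    "rabbitmq.username",
    "rabbitmq.password",
)


def check_rabbitmq_settings(props):
    """Return a list of problems; an empty list means the basics are set."""
    problems = []
    if props.get("canal.serverMode", "").lower() != "rabbitmq":
        problems.append("canal.serverMode is not rabbitMQ")
    for key in REQUIRED:
        if not props.get(key):
            problems.append(f"{key} is empty or missing")
    return problems


# Excerpt of the values used in this article.
SAMPLE = """
canal.serverMode = rabbitMQ
rabbitmq.host = 192.168.11.44:5672
rabbitmq.virtual.host = /
rabbitmq.exchange = e1
rabbitmq.username = guest
rabbitmq.password = guest
"""

if __name__ == "__main__":
    print(check_rabbitmq_settings(parse_properties(SAMPLE)))
```

To check a real file, pass `open("canal.properties", encoding="utf-8").read()` to `parse_properties` instead of `SAMPLE`.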

1.2 instance.properties

    #################################################
    ## mysql serverId , v1.0.26+ will autoGen
    # canal.instance.mysql.slaveId=0

    # enable gtid use true/false
    canal.instance.gtidon=false

    # position info
    canal.instance.master.address=192.168.11.79:3308
    canal.instance.master.journal.name=
    canal.instance.master.position=
    canal.instance.master.timestamp=
    canal.instance.master.gtid=

    # rds oss binlog
    canal.instance.rds.accesskey=
    canal.instance.rds.secretkey=
    canal.instance.rds.instanceId=

    # table meta tsdb info
    canal.instance.tsdb.enable=true
    #canal.instance.tsdb.url=jdbc:mysql://127.0.0.1:3306/canal_tsdb
    #canal.instance.tsdb.dbUsername=canal
    #canal.instance.tsdb.dbPassword=canal

    #canal.instance.standby.address =
    #canal.instance.standby.journal.name =
    #canal.instance.standby.position =
    #canal.instance.standby.timestamp =
    #canal.instance.standby.gtid=

    # username/password
    canal.instance.dbUsername=root
    canal.instance.dbPassword=123456
    canal.instance.connectionCharset = UTF-8
    # enable druid Decrypt database password
    canal.instance.enableDruid=false
    #canal.instance.pwdPublicKey=MFwwDQYJKoZIhvcNAQEBBQADSwAwSAJBALK4BUxdDltRRE5/zXpVEVPUgunvscYFtEip3pmLlhrWpacX7y7GCMo2/JM6LeHmiiNdH1FWgGCpUfircSwlWKUCAwEAAQ==

    # table regex
    canal.instance.filter.regex=.*\\..*
    # table black regex
    canal.instance.filter.black.regex=mysql\\.slave_.*
    # table field filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)
    #canal.instance.filter.field=test1.t_product:id/subject/keywords,test2.t_company:id/name/contact/ch
    # table field black filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)
    #canal.instance.filter.black.field=test1.t_product:subject/product_image,test2.t_company:id/name/contact/ch

    # mq config
    canal.mq.topic=example
    # dynamic topic route by schema or table regex
    #canal.mq.dynamicTopic=mytest1.user,topic2:mytest2\\..*,.*\\..*
    canal.mq.partition=0
    # hash partition config
    #canal.mq.enableDynamicQueuePartition=false
    #canal.mq.partitionsNum=3
    #canal.mq.dynamicTopicPartitionNum=test.*:4,mycanal:6
    #canal.mq.partitionHash=test.table:id^name,.*\\..*
    #################################################
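The two table filters in this file are regular expressions applied to `schema.table` names. The doubled backslash is `.properties` escaping for a single one, so the effective patterns are `.*\..*` and `mysql\.slave_.*`. The sketch below approximates how such a whitelist and blacklist combine, using Python's `re` module. It is an illustration only: canal's own matcher may differ in details such as case handling, and the table names are invented.

```python
import re


def unescape_properties(value):
    """In a .properties file a literal backslash is written as two backslashes."""
    return value.replace("\\\\", "\\")


def is_captured(full_name, white, black):
    """full_name is 'schema.table'; white/black are comma-separated regex lists."""
    def matches(patterns):
        return any(re.fullmatch(p, full_name) for p in patterns.split(",") if p)

    # A table is kept when it matches the whitelist and not the blacklist.
    return matches(white) and not matches(black)


# Values exactly as written in instance.properties above.
WHITE = unescape_properties(r".*\\..*")
BLACK = unescape_properties(r"mysql\\.slave_.*")

if __name__ == "__main__":
    for name in ("shop.orders", "mysql.user", "mysql.slave_master_info"):
        print(name, is_captured(name, WHITE, BLACK))
```

Under these assumptions every table in every schema is captured except the `mysql.slave_*` tables.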

1.3 docker-compose.yml

    version: '3'
    services:
      canal:
        container_name: canal_latest
        image: canal/canal-server:v1.1.5
        restart: always
        ports:
          - 11111:11111
        volumes:
          - ./canal.properties:/home/admin/canal-server/conf/canal.properties
          - ./instance.properties:/home/admin/canal-server/conf/example/instance.properties
          - ./logs:/home/admin/canal-server/logs

2. Start the container

Change into the canal directory first, then run:

docker-compose up -d

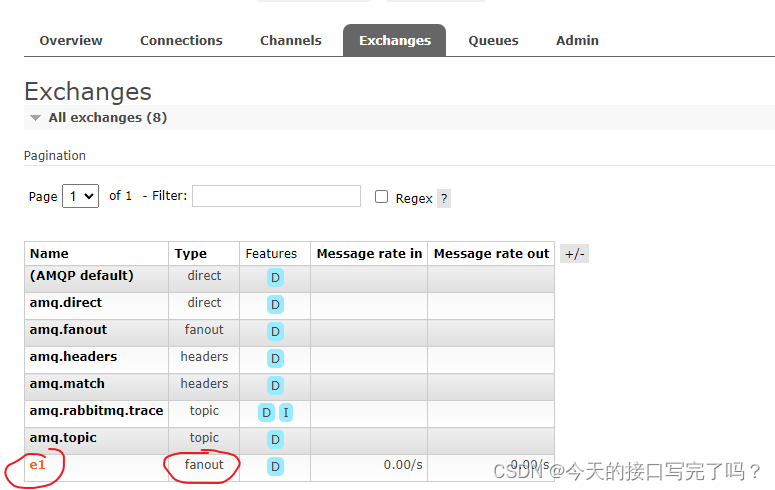

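3. Manually create an exchange in RabbitMQ whose name matches the configuration file (rabbitmq.exchange = e1 in canal.properties)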

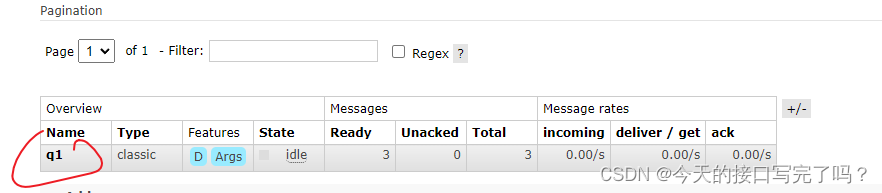

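Then bind a queue to the exchange and change some data in the MySQL instance you configured.

Watch the queue: the changes should show up there as messages.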
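Because `canal.mq.flatMessage = true` is set in canal.properties, each message body in the queue should be a JSON document. The sketch below shows one way to summarize such a body once it has been read from the queue. The sample payload and its values are invented, and the field names (`database`, `table`, `type`, `data`, `old`) reflect my understanding of canal's flat message layout, so verify them against a real message before relying on them.

```python
import json

# Hypothetical message body shaped like a canal flat message for an UPDATE.
SAMPLE_BODY = json.dumps({
    "database": "test",
    "table": "user",
    "type": "UPDATE",
    "isDdl": False,
    "data": [{"id": "1", "name": "new"}],
    "old": [{"name": "old"}],
})


def summarize(body):
    """Return ('TYPE schema.table', [{column: (before, after)}, ...])."""
    msg = json.loads(body)
    rows = msg.get("data") or []
    # "old" holds only the changed columns; it may be absent for inserts and deletes.
    olds = msg.get("old") or [{}] * len(rows)
    changes = []
    for row, old in zip(rows, olds):
        changes.append({col: (prev, row.get(col)) for col, prev in old.items()})
    return f'{msg["type"]} {msg["database"]}.{msg["table"]}', changes


if __name__ == "__main__":
    print(summarize(SAMPLE_BODY))
```

Reading the body from RabbitMQ itself is left out here, since it needs a client library and connection details specific to your setup.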

