热门标签

热门文章

- 1本届挑战赛亚军方案:基于大模型和多AGENT协同的运维_assess and summarize: improve outage understanding

- 2Gartner发布2023年新兴技术成熟度曲线:未来十年将影响企业和社会的25项颠覆性技术_gatner 2023新兴技术成熟度曲线

- 3【深度学习&NLP】数据预处理的详细说明(含数据清洗、分词、过滤停用词、实体识别、词性标注、向量化、划分数据集等详细的处理步骤以及一些常用的方法)_nlp数据预处理

- 4检测头篇 | 原创自研 | YOLOv8 更换 挤压激励增强精准头 | 附详细结构图_yolov8 修改检测头

- 5java中word导入数据库_java导入word文档存到数据库

- 6为什么mysql没走索引?_mysql不走索引

- 7如何在Centos7下安装Nginx_centos nginx

- 8学生信息管理系统模块问题篇_教务管理系统的学生模块遇到那些问题

- 9零基础应该怎么学剪辑,大概要学多长时间?在磨金石教育学靠谱吗?_磨金石教育学习剪辑靠谱吗

- 10人工智能面临的15个风险_人工智能程序存在错误风险

当前位置: article > 正文

深度学习练手项目(一)-----利用PyTorch实现MNIST手写数字识别_一个可以进行迭代的深度学习项目练手csdn

作者:花生_TL007 | 2024-03-25 19:21:21

赞

踩

一个可以进行迭代的深度学习项目练手csdn

一、前言

MNIST手写数字识别程序就不过多赘述了,这个程序在深度学习中的地位跟C语言中的Hello World地位并驾齐驱,虽然很基础,但很重要,是深度学习入门必备的程序之一。

二、MNIST数据集介绍

MNIST包括6万张28*28的训练样本,1万张测试样本。

三、PyTorch实现

3.1 定义超参数

# 定义超参数

BATCH_SIZE=512 #大概需要2G的显存

EPOCHS=20 # 总共训练批次

DEVICE = torch.device("cuda" if torch.cuda.is_available() else "cpu") # 让torch判断是否使用GPU,建议使用GPU环境,因为会快很多

- 1

- 2

- 3

- 4

3.2 导入训练、测试数据

# 分别导入训练、测试数据,PyTorch中已经集成了MNIST数据集,我们只需要DataLoader导入即可

train_loader = torch.utils.data.DataLoader(

datasets.MNIST('data', train=True, download=True,

transform=transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])),

batch_size=BATCH_SIZE, shuffle=True)

test_loader = torch.utils.data.DataLoader(

datasets.MNIST('data', train=False, transform=transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])),

batch_size=BATCH_SIZE, shuffle=True)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

3.3 搭建深度学习网络模型

class ConvNet(nn.Module): def __init__(self): super().__init__() # batch*1*28*28(每次会送入batch个样本,输入通道数1(黑白图像),图像分辨率是28x28) # 下面的卷积层Conv2d的第一个参数指输入通道数,第二个参数指输出通道数,第三个参数指卷积核的大小 self.conv1 = nn.Conv2d(1, 10, 5) # 输入通道数1,输出通道数10,核的大小5 self.conv2 = nn.Conv2d(10, 20, 3) # 输入通道数10,输出通道数20,核的大小3 # 下面的全连接层Linear的第一个参数指输入通道数,第二个参数指输出通道数 self.fc1 = nn.Linear(20*10*10, 500) # 输入通道数是2000,输出通道数是500 self.fc2 = nn.Linear(500, 10) # 输入通道数是500,输出通道数是10,即10分类 def forward(self,x): in_size = x.size(0) # 在本例中in_size=512,也就是BATCH_SIZE的值。输入的x可以看成是512*1*28*28的张量。 out = self.conv1(x) # batch*1*28*28 -> batch*10*24*24(28x28的图像经过一次核为5x5的卷积,输出变为24x24) out = F.relu(out) # batch*10*24*24(激活函数ReLU不改变形状)) out = F.max_pool2d(out, 2, 2) # batch*10*24*24 -> batch*10*12*12(2*2的池化层会减半) out = self.conv2(out) # batch*10*12*12 -> batch*20*10*10(再卷积一次,核的大小是3) out = F.relu(out) # batch*20*10*10 out = out.view(in_size, -1) # batch*20*10*10 -> batch*2000(out的第二维是-1,说明是自动推算,本例中第二维是20*10*10) out = self.fc1(out) # batch*2000 -> batch*500 out = F.relu(out) # batch*500 out = self.fc2(out) # batch*500 -> batch*10 out = F.log_softmax(out, dim=1) # 计算log(softmax(x)) return out

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

3.4 确定要使用的优化算法

这里使用简单粗暴的Adam

model = ConvNet().to(DEVICE)

optimizer = optim.Adam(model.parameters())

- 1

- 2

3.5 定义训练函数

def train(model, device, train_loader, optimizer, epoch):

model.train()

for batch_idx, (data, target) in enumerate(train_loader):

data, target = data.to(device), target.to(device)

optimizer.zero_grad()

output = model(data)

loss = F.nll_loss(output, target)

loss.backward()

optimizer.step()

if(batch_idx+1)%30 == 0:

print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(

epoch, batch_idx * len(data), len(train_loader.dataset),

100. * batch_idx / len(train_loader), loss.item()))

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

3.6 定义测试函数

def test(model, device, test_loader): model.eval() test_loss = 0 correct = 0 with torch.no_grad(): for data, target in test_loader: data, target = data.to(device), target.to(device) output = model(data) test_loss += F.nll_loss(output, target, reduction='sum').item() # 将一批的损失相加 pred = output.max(1, keepdim=True)[1] # 找到概率最大的下标 correct += pred.eq(target.view_as(pred)).sum().item() test_loss /= len(test_loader.dataset) print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format( test_loss, correct, len(test_loader.dataset), 100. * correct / len(test_loader.dataset)))

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

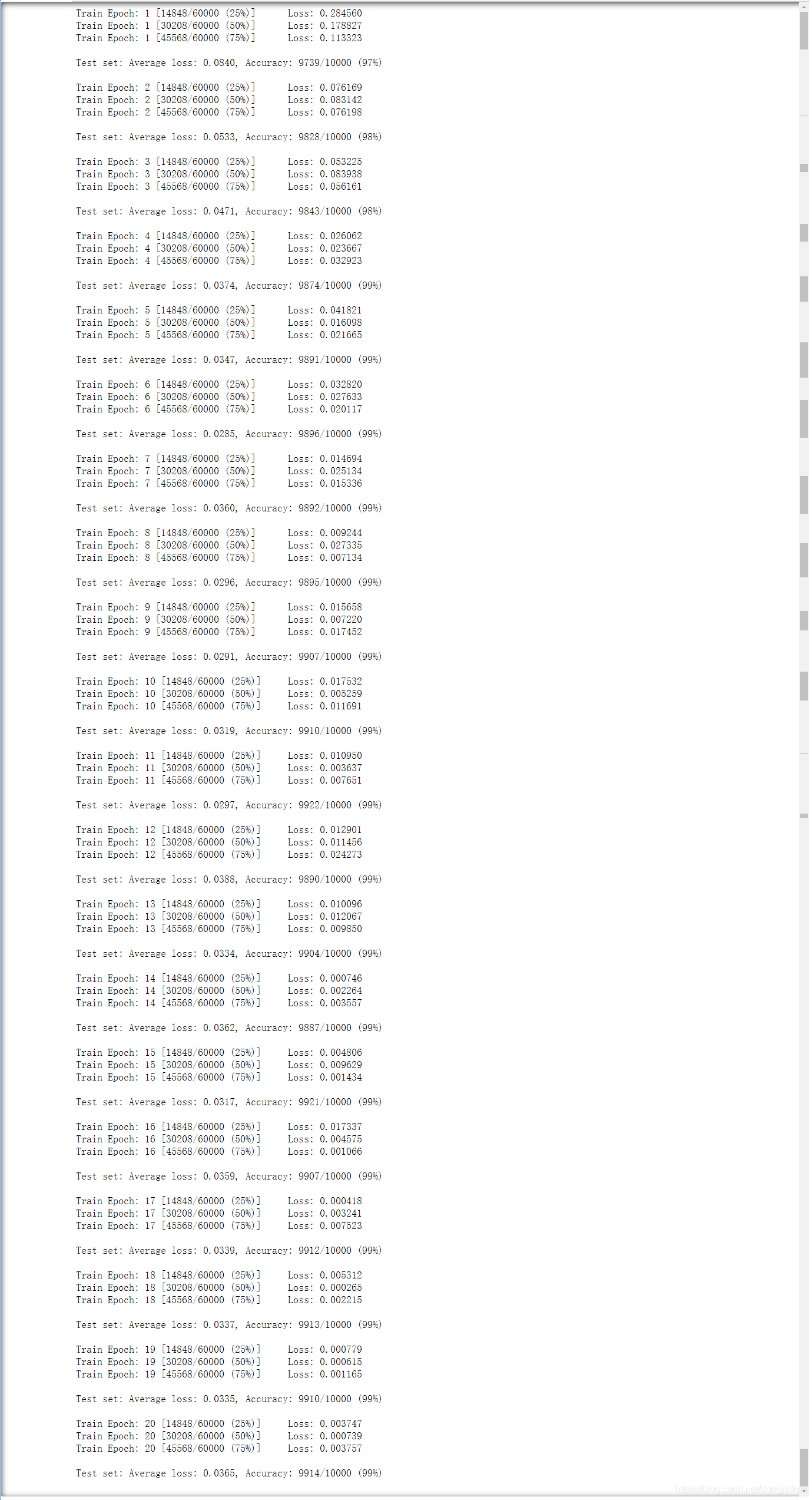

3.7 训练并测试

for epoch in range(1, EPOCHS + 1):

train(model, DEVICE, train_loader, optimizer, epoch)

test(model, DEVICE, test_loader)

- 1

- 2

- 3

声明:本文内容由网友自发贡献,不代表【wpsshop博客】立场,版权归原作者所有,本站不承担相应法律责任。如您发现有侵权的内容,请联系我们。转载请注明出处:https://www.wpsshop.cn/w/花生_TL007/article/detail/312000

推荐阅读

相关标签