热门标签

热门文章

- 1微信小程序:实现音乐播放器的功能_this.data.audiocontext.play()

- 2vue滚动加载列表数据展示封装懒加载_vue 滚动加载数据

- 3Vue3 实现滚动加载更多_vue3 如何实现滚动加载

- 4Kafka 生产者_kafka多个生产者

- 5H5网页使用支付宝授权登录获取用户信息详解_alipay.system.oauth.token

- 6spark编程实战(四) —— 词频统计(WordCount)和 Top K_spark统计以上5个文件中的单词词频(wordcount),结果保存到hdfs中,截图频率最高的5

- 7docker ui 部署_docker x-ui

- 8机器学习集成学习与模型融合!

- 9堆排序算法(图解详细流程)

- 10mac photoshop install无法安装_MAC安装软件显示‘无法确认安全’或‘将其移至废纸篓’解决办法...

当前位置: article > 正文

语音识别教程:Whisper_whisper使用教程

作者:weixin_40725706 | 2024-06-12 12:51:13

赞

踩

whisper使用教程

语音识别教程:Whisper

一、前言

最近看国外教学视频的需求,有些不是很适应,找了找AI字幕效果也不是很好,遂打算基于Whisper和GPT做一个AI字幕给自己。

二、具体步骤

1、安装FFmpeg

Windows:

-

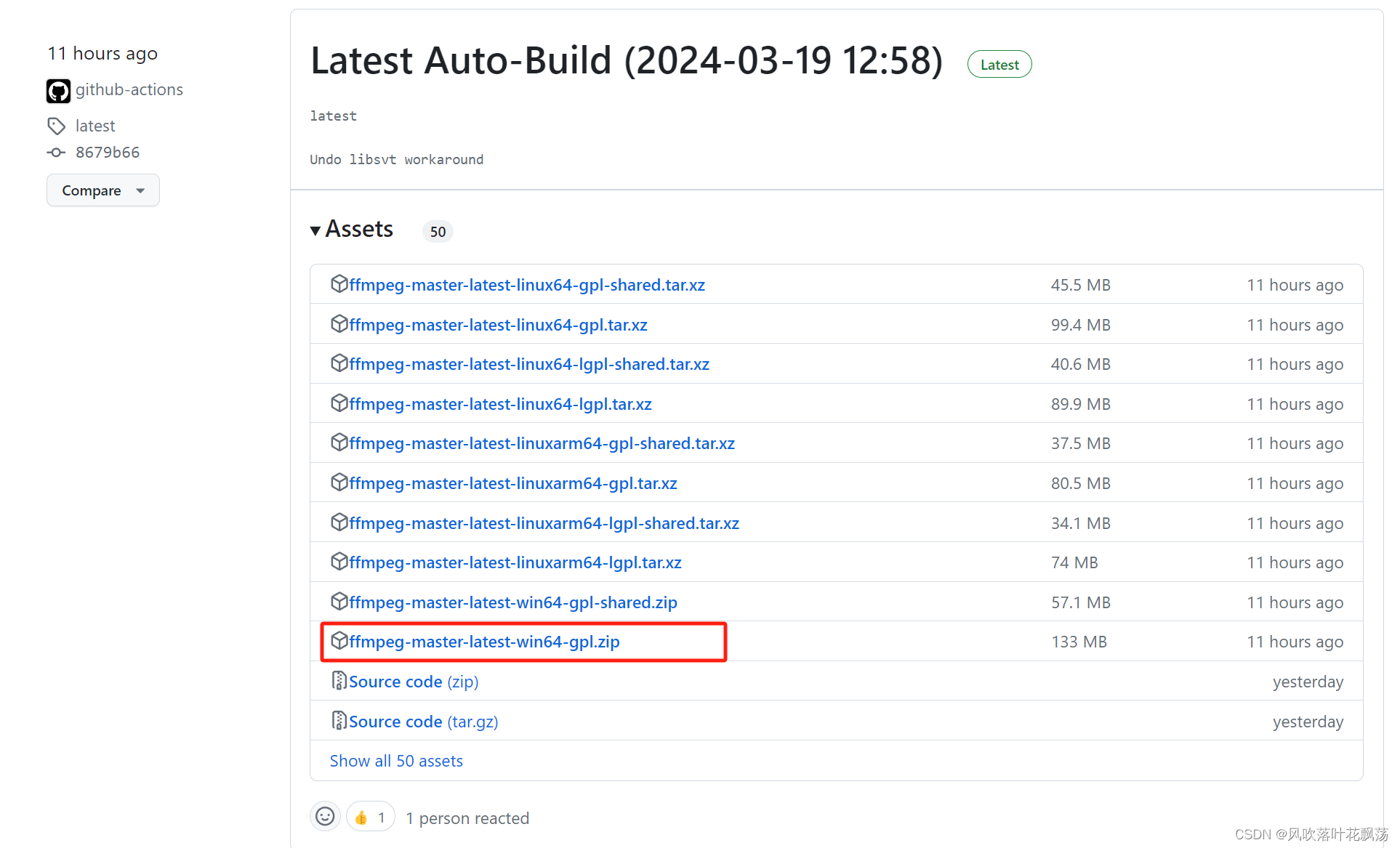

进入 https://github.com/BtbN/FFmpeg-Builds/releases,点击 windows版本的FFMPEG对应的图标,进入下载界面点击 download 下载按钮。

-

解压下载好的zip文件到指定目录(放到你喜欢的位置)

-

将解压后的文件目录中 bin 目录(包含 ffmpeg.exe )添加进 path 环境变量中

-

DOS 命令行输入 ffmpeg -version, 出现以下界面说明安装完成:

2、安装Whisper模型

运行以下程序,会自动安装Whisper-small的模型,并识别音频audio.mp3 输出识别到的文本。(如果没有科学上网的手段请手动下载)

import whisper

model = whisper.load_model("small")

result = model.transcribe("audio.mp3")

print(result["text"])

- 1

- 2

- 3

- 4

运行结果如下

三、其他

实时录制音频并转录

import pyaudio import wave import numpy as np from pydub import AudioSegment from audioHandle import addAudio_volume,calculate_volume from faster_whisper import WhisperModel model_size = "large-v3" # Run on GPU with FP16 model = WhisperModel(model_size, device="cuda", compute_type="float16") def GetIndex(): p = pyaudio.PyAudio() # 要找查的设备名称中的关键字 target = '立体声混音' for i in range(p.get_device_count()): devInfo = p.get_device_info_by_index(i) # if devInfo['hostApi'] == 0: if devInfo['name'].find(target) >= 0 and devInfo['hostApi'] == 0: print(devInfo) print(devInfo['index']) return devInfo['index'] return -1 # 配置 FORMAT = pyaudio.paInt16 # 数据格式 CHANNELS = 1 # 声道数 RATE = 16000 # 采样率 CHUNK = 1024 # 数据块大小 RECORD_SECONDS = 5 # 录制时长 WAVE_OUTPUT_FILENAME = "output3.wav" # 输出文件 DEVICE_INDEX = GetIndex() # 设备索引,请根据您的系统声音设备进行替换 if DEVICE_INDEX==-1: print('请打开立体声混音') audio = pyaudio.PyAudio() # 开始录制 stream = audio.open(format=FORMAT, channels=CHANNELS, rate=RATE, input=True, frames_per_buffer=CHUNK, input_device_index=DEVICE_INDEX) data = stream.read(CHUNK) print("recording...") frames = [] moreDatas=[] maxcount=3 count=0 while True: # 初始化一个空的缓冲区 datas = [] for i in range(0, int(RATE / CHUNK * RECORD_SECONDS)): data = stream.read(CHUNK) audio_data = np.frombuffer(data, dtype=np.int16) datas.append(data) # 计算音频的平均绝对值 volume = np.mean(np.abs(audio_data)) # 将音量级别打印出来 print("音量级别:", volume) moreDatas.append(datas) if len(moreDatas)>maxcount: moreDatas.pop(0) newDatas=[i for j in moreDatas for i in j] buffers=b'' for buffer in newDatas: buffers+=buffer print('开始识别') buffers=np.frombuffer(buffers, dtype=np.int16) # a = np.ndarray(buffer=np.array(datas), dtype=np.int16, shape=(CHUNK,)) segments, info = model.transcribe(np.array(buffers), language="en") text='' for segment in segments: print("[%.2fs -> %.2fs] %s" % (segment.start, segment.end, segment.text)) text+=segment.text print(text) print("finished recording") # 停止录制 stream.stop_stream() stream.close() audio.terminate() # 保存录音 wf = wave.open(WAVE_OUTPUT_FILENAME, 'wb') wf.setnchannels(CHANNELS) wf.setsampwidth(audio.get_sample_size(FORMAT)) wf.setframerate(RATE) wf.writeframes(b''.join(frames)) wf.close() #addAudio_volume(WAVE_OUTPUT_FILENAME)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

声明:本文内容由网友自发贡献,不代表【wpsshop博客】立场,版权归原作者所有,本站不承担相应法律责任。如您发现有侵权的内容,请联系我们。转载请注明出处:https://www.wpsshop.cn/w/weixin_40725706/article/detail/708143

推荐阅读

相关标签