- 1cmd环境使用代理_配置代理服务器后如何让本地cmd也可以使用

- 2团队中“技术大拿”并非越多越好_技术大拿的重要性

- 3【优秀毕设】基于JSP+SSM+Mysql的小区物业管理系统【源码+LW】_ssm jsp 项目

- 4银河麒麟v10:通过rancher离线安装k8s_rancher一直在waiting

- 5【OpenHarmony】Windows 平台搭建 DevEco Studio 开发环境 ② ( DevEco Studio 设备管理 | 设备管理器 | 远程模拟器/设备 | 本地模拟器 )_devecostudio-windows

- 6纯前端在线Office文档安全预览之打开Word文档后禁止打印、禁止另存为、禁止复制_前端预览office

- 7Eureka心跳检测

- 8AI人工智能 Agent:高级概念剖析_ai agent tag

- 9java中super的用法_java中super和this用法总结

- 10替代not in 和 in 的办法_not in 替代

docker-compose部署kafka、SASL模式(密码校验模式)_system.out.printf("主题 = %s, 分区 = %d, 位移 = %d, " +

赞

踩

一.基础kafka部署

zookeeper,kafka,kafka-ui

docker-compose.yml

注意点:192.168.1.20 是宿主机的ip

- version: "3"

- services:

- zookeeper:

- image: wurstmeister/zookeeper

- container_name: zookeeper

- restart: always

- ports:

- - 2181:2181

- environment:

- ZOOKEEPER_CLIENT_PORT: 2181

- kafka:

- image: wurstmeister/kafka

- restart: always

- container_name: kafka

- depends_on:

- - zookeeper

- ports:

- - 9092:9092

- environment:

- KAFKA_BROKER_ID: 0

- KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.1.20:9092

- KAFKA_ADVERTISED_PORT: 9092

- KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092

- KAFKA_PORT: 9092

- KAFKA_ALLOW_EVERYONE_IF_NO_ACL_FOUND: "false"

- KAFKA_ZOOKEEPER_CONNECT: zookeeper:2181

- KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1

- KAFKA_GROUP_INITIAL_REBALANCE_DELAY_MS: 0

- KAFKA_HEAP_OPTS: "-Xmx512M -Xmx512M"

- kafka-ui:

- image: provectuslabs/kafka-ui:latest

- container_name: kafka-ui

- restart: always

- ports:

- - 10010:8080

- environment:

- - DYNAMIC_CONFIG_ENABLED=true

- - SERVER_SERVLET_CONTEXT_PATH=/ui-kafka

- - KAFKA_CLUSTERS_0_NAME=local

- - KAFKA_CLUSTERS_0_BOOTSTRAPSERVERS=kafka:9092

- - KAFKA_CLUSTERS_0_PROPERTIES_SECURITY_PROTOCOL=PLAINTEXT

- depends_on:

- - zookeeper

- - kafka

-

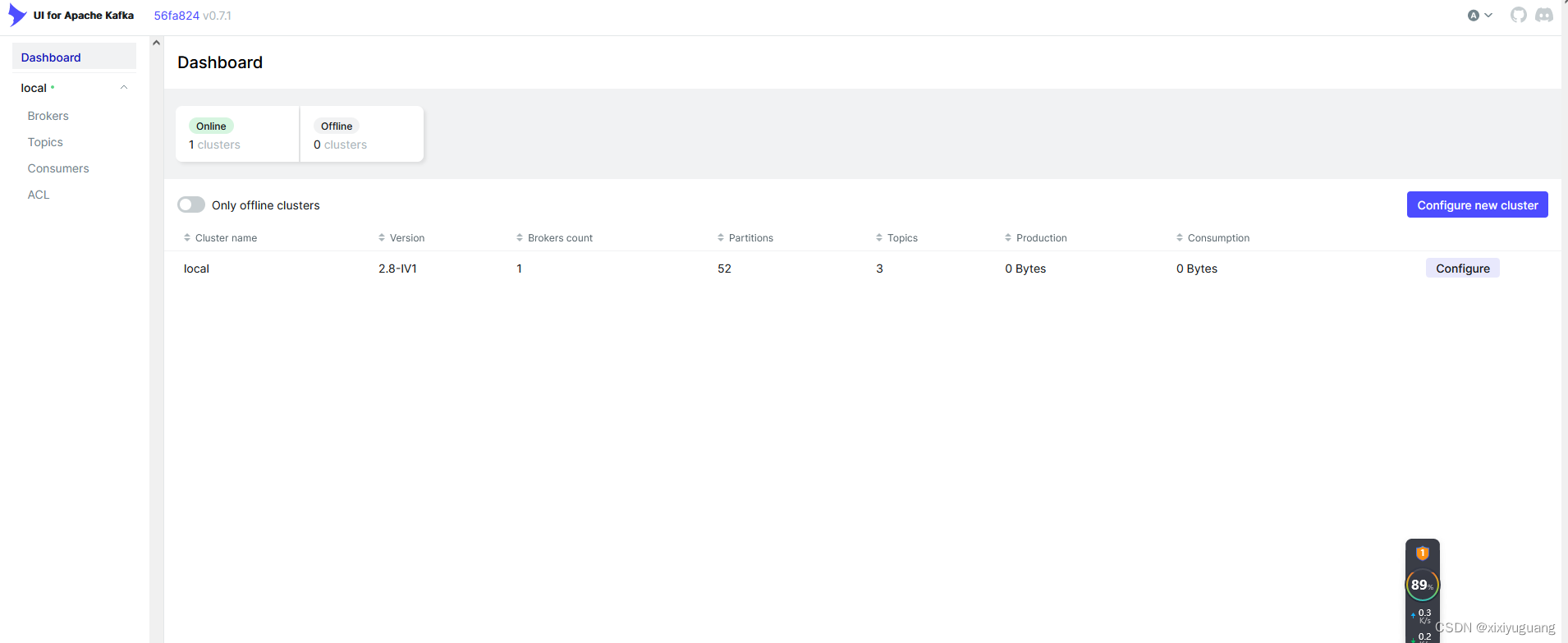

kafka-ui

地址:http://localhost:10010/ui-kafka/

java生产者

- <dependency>

- <groupId>org.springframework.kafka</groupId>

- <artifactId>spring-kafka</artifactId>

- <version>2.9.0</version>

- </dependency>

-

-

- import org.apache.kafka.clients.producer.KafkaProducer;

- import org.apache.kafka.clients.producer.ProducerConfig;

- import org.apache.kafka.clients.producer.ProducerRecord;

- import org.apache.kafka.common.serialization.StringSerializer;

-

- import java.util.Properties;

-

- public class KafkaProducerTest {

-

- public static void main(String[] args) {

-

-

- Properties properties = new Properties();

-

-

-

- properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "127.0.0.1:9092");

-

- // KEY: 是kafka用于做消息投递计算具体投递到对应的主题的哪一个partition而需要的

- properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

- // VALUE: 实际发送消息的内容

- properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

-

- // 2.创建kafka生产者对象 传递properties属性参数集合

- KafkaProducer<String, String> producer = new KafkaProducer<>(properties);

-

- for (int i = 0; i < 100; i++) {

- ProducerRecord<String, String> record = new ProducerRecord<String, String>("topic_test", i + " : testx123测试");

- // 4.发送消息

- producer.send(record);

- System.out.println("发送成功: " + i);

- }

- // 5.关闭生产者

- producer.close();

-

- }

- }

java消费者

- public class KafkaConsumerTest {

-

- public static void main(String[] args) {

-

-

- Properties props = new Properties();

- // bootstrap.servers:kafka服务器地址,多个用逗号隔开

- props.put("bootstrap.servers", "127.0.0.1:9092");

- props.put("group.id", "topic-test-group"); // 消费组groupId

- props.put("auto.offset.reset", "earliest");

- // 序列化方式

- props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

- props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

-

- KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);

-

- consumer.subscribe(Collections.singletonList("topic_test")); // 订阅的topic

- while (true) {

- ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(1000L));

- for (ConsumerRecord<String, String> record : records) {

- System.out.printf("主题 = %s, 分区 = %d, 位移 = %d, " + "消息键 = %s, 消息值 = %s\n",

- record.topic(), record.partition(), record.offset(), record.key(), record.value());

- }

- if (!records.isEmpty()) {

- try {

- // 提交消费位移

- consumer.commitSync();

- } catch (CommitFailedException exception) {

- System.out.println("commit failed....");

- }

- }

-

- }

- }

- }

二.SASL模式部署kafka

解释: SASL(Simple Authentication and Security Layer)的配置

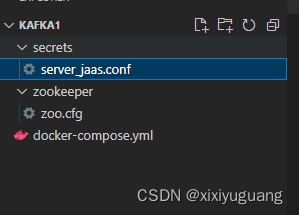

目录结构如下:C:/docker/kafka1/

server_jaas.conf配置

需要新建文件:server_jaas.conf,前两个是zk配置,后两个是kafka配置

- Client {

- org.apache.zookeeper.server.auth.DigestLoginModule required

- username="admin"

- password="123456";

- };

-

-

- Server {

- org.apache.zookeeper.server.auth.DigestLoginModule required

- username="admin"

- password="123456"

- user_super="123456"

- user_admin="123456";

- };

-

- KafkaServer {

- org.apache.kafka.common.security.plain.PlainLoginModule required

- username="admin"

- password="123456"

- user_admin="123456";

- };

-

- KafkaClient {

- org.apache.kafka.common.security.plain.PlainLoginModule required

- username="admin"

- password="123456";

- };

zoo.cfg配置

其他的没改变,就是最后添加的四行

- # The number of milliseconds of each tick

- tickTime=2000

- # The number of ticks that the initial

- # synchronization phase can take

- initLimit=10

- # The number of ticks that can pass between

- # sending a request and getting an acknowledgement

- syncLimit=5

- # the directory where the snapshot is stored.

- # do not use /tmp for storage, /tmp here is just

- # example sakes.

- dataDir=/opt/zookeeper-3.4.13/data

- # the port at which the clients will connect

- clientPort=2181

- # the maximum number of client connections.

- # increase this if you need to handle more clients

- #maxClientCnxns=60

- #

- # Be sure to read the maintenance section of the

- # administrator guide before turning on autopurge.

- #

- # http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

- #

- # The number of snapshots to retain in dataDir

- autopurge.snapRetainCount=3

- # Purge task interval in hours

- # Set to "0" to disable auto purge feature

- autopurge.purgeInterval=1

-

- ## 开启SASl关键配置

- authProvider.1=org.apache.zookeeper.server.auth.SASLAuthenticationProvider

- requireClientAuthScheme=sasl

- jaasLoginRenew=3600000

- zookeeper.sasl.client=true

docker-compose.yml

- # 版本根据你的docker版本来的,目前主流应该都是3.几的版本

- version: '3.8'

- services:

- zookeeper:

- image: wurstmeister/zookeeper

- volumes:

- - C:/docker/kafka1/secrets/:/opt/secrets/

- - C:/docker/kafka1/zookeeper/zoo.cfg:/opt/zookeeper-3.4.13/conf/zoo.cfg

- container_name: zookeeper

- environment:

- ZOOKEEPER_CLIENT_PORT: 2181

- ZOOKEEPER_TICK_TIME: 2000

- SERVER_JVMFLAGS: -Djava.security.auth.login.config=/opt/secrets/server_jaas.conf

- ports:

- - 2181:2181

- restart: always

- kafka:

- image: wurstmeister/kafka

- container_name: kafka

- depends_on:

- - zookeeper

- ports:

- - 9092:9092

- volumes:

- - C:/docker/kafka1/secrets/:/opt/secrets/

- environment:

- KAFKA_BROKER_ID: 0

- KAFKA_ADVERTISED_LISTENERS: SASL_PLAINTEXT://192.168.1.20:9092

- KAFKA_ADVERTISED_PORT: 9092

- KAFKA_LISTENERS: SASL_PLAINTEXT://0.0.0.0:9092

- KAFKA_SECURITY_INTER_BROKER_PROTOCOL: SASL_PLAINTEXT

- KAFKA_PORT: 9092

- KAFKA_SASL_MECHANISM_INTER_BROKER_PROTOCOL: PLAIN

- KAFKA_SASL_ENABLED_MECHANISMS: PLAIN

- KAFKA_AUTHORIZER_CLASS_NAME: kafka.security.auth.SimpleAclAuthorizer

- KAFKA_SUPER_USERS: User:admin

- KAFKA_ALLOW_EVERYONE_IF_NO_ACL_FOUND: "true" #设置为true,ACL机制为黑名单机制,只有黑名单中的用户无法访问,默认为false,ACL机制为白名单机制,只有白名单中的用户可以访问

- KAFKA_ZOOKEEPER_CONNECT: zookeeper:2181

- KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1

- KAFKA_GROUP_INITIAL_REBALANCE_DELAY_MS: 0

- KAFKA_HEAP_OPTS: "-Xmx512M -Xms16M"

- KAFKA_OPTS: -Djava.security.auth.login.config=/opt/secrets/server_jaas.conf

- kafka-ui:

- image: provectuslabs/kafka-ui:latest

- container_name: kafka-ui

- restart: always

- ports:

- - 10010:8080

- environment:

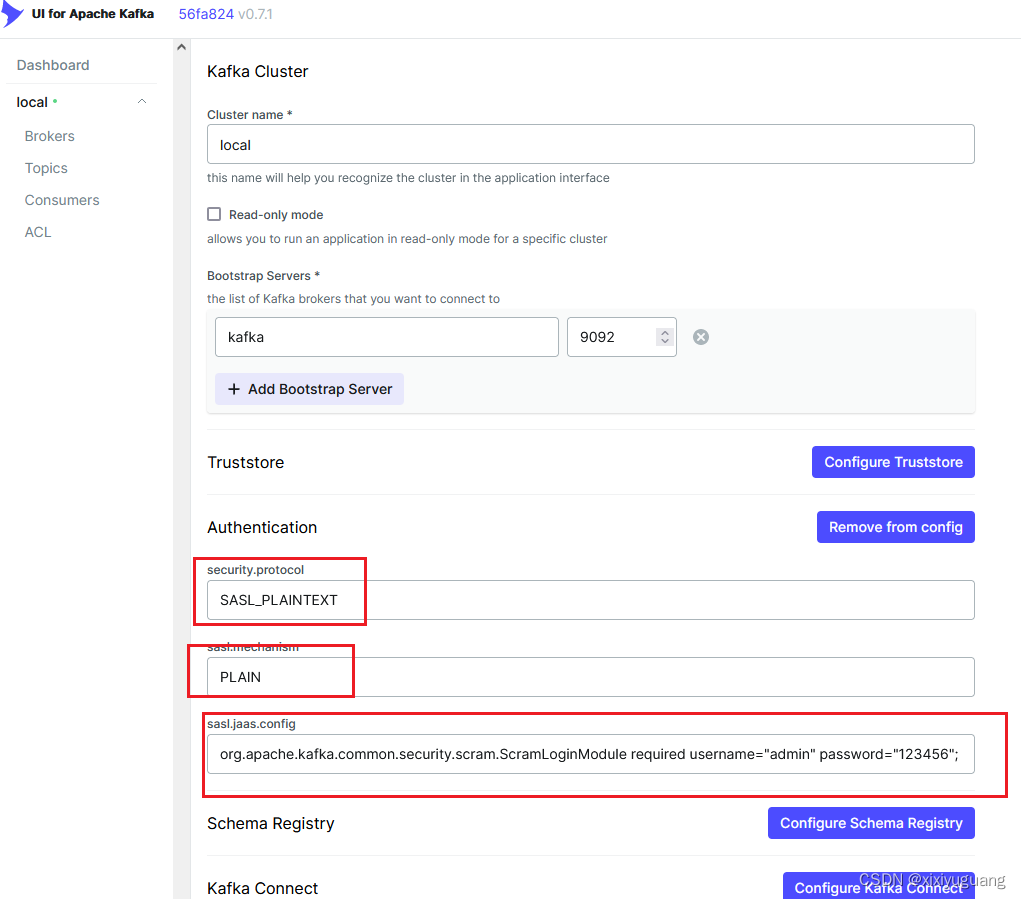

- - DYNAMIC_CONFIG_ENABLED=true

- - SERVER_SERVLET_CONTEXT_PATH=/kafka-ui

- - KAFKA_CLUSTERS_0_NAME=local

- - KAFKA_CLUSTERS_0_BOOTSTRAPSERVERS=kafka:9092

- - KAFKA_CLUSTERS_0_PROPERTIES_SECURITY_PROTOCOL=SASL_PLAINTEXT

- - KAFKA_CLUSTERS_0_PROPERTIES_SASL_MECHANISM=PLAIN

- - KAFKA_CLUSTERS_0_PROPERTIES_SASL_JAAS_CONFIG=org.apache.kafka.common.security.scram.ScramLoginModule required username="admin" password="123456";

- depends_on:

- - zookeeper

- - kafka

这是一个Docker Compose文件,用于定义和运行多个Docker容器的应用。我会为你详细解释这个文件的内容:

-

版本:

version: '3.8': 指定了Docker Compose的版本为3.8。

-

services:

- 定义了两个服务,分别是

zookeeper和kafka。

- 定义了两个服务,分别是

-

zookeeper:

- 使用

wurstmeister/zookeeper镜像来创建容器。 - 挂载了两个卷:一个是本地的

C:/docker/kafka1/secrets/目录到容器内的/opt/secrets/目录,另一个是本地的C:/docker/kafka1/zookeeper/zoo.cfg文件到容器内的/opt/zookeeper-3.4.13/conf/zoo.cfg文件。 - 设置容器的名称为

zookeeper。 - 设置环境变量:如

ZOOKEEPER_CLIENT_PORT,ZOOKEEPER_TICK_TIME,SERVER_JVMFLAGS等。 - 映射容器的2181端口到主机的2181端口。

- 设置容器在退出后总是重启。

- 使用

-

kafka:

- 使用

wurstmeister/kafka镜像来创建容器。 - 容器名称为

kafka。 - 这个服务依赖于

zookeeper服务,这意味着它会等待zookeeper服务启动后再启动。 - 映射容器的9092端口到主机的9092端口。

- 挂载了与

zookeeper相同的卷。 - 设置环境变量,包括Kafka的配置如:广告监听器、端口、安全协议、SASL机制等。还定义了Kafka的超级用户为“admin”。

- 设置Kafka的存储和堆大小。

- 使用

-

总体解释:

这是一个使用Docker Compose配置Kafka和Zookeeper集群的示例。Zookeeper作为Kafka的协调服务,负责管理集群的状态和配置。这个配置文件中,Zookeeper和Kafka都有详细的配置和环境变量设置,以适应特定的使用场景或安全需求。例如,SASL_PLAINTEXT是用于安全认证的协议,而SimpleAclAuthorizer则是一个简单的访问控制列表作者器,用于权限控制。

这是一个Docker Compose配置文件的一部分,用于设置Kafka UI服务。Kafka UI是一个用于管理和监视Apache Kafka集群的工具。以下是对该配置的详细解释:

-

image:

- 使用

provectuslabs/kafka-ui:latest镜像来创建容器。这个镜像是专门为Kafka UI提供的,并保持最新。

- 使用

-

container_name:

- 容器的名称为

kafka-ui。

- 容器的名称为

-

restart:

- 设置容器的重启策略为

always,这意味着容器将始终在退出后自动重启。

- 设置容器的重启策略为

-

ports:

- 将容器的8080端口映射到主机的10010端口,这样你就可以从主机访问Kafka UI。

-

environment:

- 这些是传递给容器的环境变量。它们用于配置Kafka UI的各种设置。

DYNAMIC_CONFIG_ENABLED=true: 启用动态配置。SERVER_SERVLET_CONTEXT_PATH=/kafka-ui: 设置服务器的Servlet上下文路径。KAFKA_CLUSTERS_0_NAME=local: 第一个Kafka集群的名称为“local”。KAFKA_CLUSTERS_0_BOOTSTRAPSERVERS=kafka:9092: 第一个Kafka集群的引导服务器地址是kafka:9092。KAFKA_CLUSTERS_0_PROPERTIES_SECURITY_PROTOCOL=SASL_PLAINTEXT: 安全协议设置为SASL_PLAINTEXT。KAFKA_CLUSTERS_0_PROPERTIES_SASL_MECHANISM=PLAIN: SASL机制设置为PLAIN。KAFKA_CLUSTERS_0_PROPERTIES_SASL_JAAS_CONFIG=...: SASL JAAS配置,其中定义了用户名和密码。

- 这些是传递给容器的环境变量。它们用于配置Kafka UI的各种设置。

-

depends_on:

- 这部分指定了该服务所依赖的其他服务。在这里,它依赖于

zookeeper和kafka服务,这意味着Kafka UI服务将在这些服务启动后启动。

- 这部分指定了该服务所依赖的其他服务。在这里,它依赖于

总之,这个配置是用于设置Kafka UI服务的Docker容器,该服务依赖于Zookeeper和Kafka集群,并使用特定的环境变量进行配置。

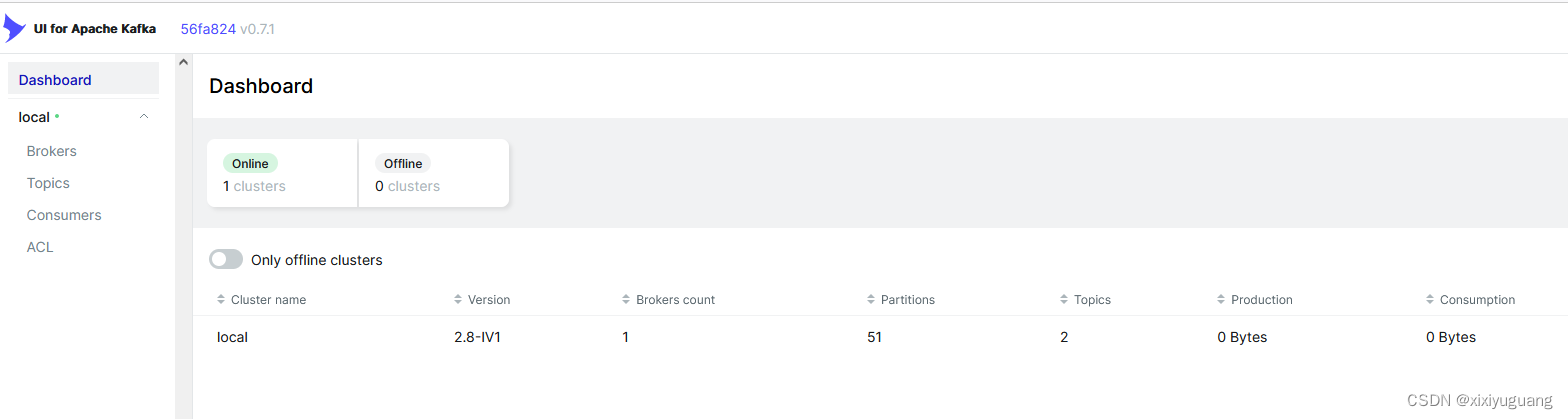

kafka-ui测试

http://localhost:10010/kafka-ui

java生产者

-

-

- public class KafkaProducerTest {

-

- public static void main(String[] args) {

-

-

- String user = "admin";

- String password = "123456";

- String topic = "test-topic";

-

- Properties properties = new Properties();

- // 1.配置生产者启动的关键属性参数

-

- properties.put("group.id", "test_group");

-

-

- properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "127.0.0.1:9092");

-

- properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

- // VALUE: 实际发送消息的内容

- properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

-

- // ssl加密和认证

- properties.put("security.protocol", "SASL_PLAINTEXT");

- properties.put("sasl.mechanism", "PLAIN");

- properties.put("sasl.jaas.config",

- "org.apache.kafka.common.security.scram.ScramLoginModule "

- + "required username=\"" + user + "\" password=\"" + password + "\";");

- // 2.创建kafka生产者对象 传递properties属性参数集合

- KafkaProducer<String, String> producer = new KafkaProducer<>(properties);

-

- for (int i = 0; i < 1000; i++) {

- // 3.构造消息内容

- try {

- Thread.sleep(500);

- } catch (InterruptedException e) {

- throw new RuntimeException(e);

- }

-

- ProducerRecord<String, String> record = new ProducerRecord<String, String>("H0162", i + " : testxiaoxixixiix接口连接123");

-

- // 4.发送消息

- producer.send(record);

- System.out.println("发送成功: " + i);

- }

-

-

- // 5.关闭生产者

- producer.close();

-

- }

- }

java消费者

-

- public class KafkaConsumerTest {

-

- public static void main(String[] args) {

- String user = "admin";

- String password = "123456";

- String topic = "test-topic";

-

- Properties props = new Properties();

- // bootstrap.servers:kafka服务器地址,见中间服务平台”机构授权“功能操作说明 36.137.123.54:9092为示例地址

- props.put("bootstrap.servers", "127.0.0.1:9092");

- props.put("group.id", topic);

- props.put("auto.offset.reset", "earliest");

- // 序列化方式

- props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

- props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

- // ssl加密和认证

- props.put("security.protocol", "SASL_PLAINTEXT");

- props.put("sasl.mechanism", "PLAIN");

- props.put("sasl.jaas.config",

- "org.apache.kafka.common.security.scram.ScramLoginModule "

- + "required username=\"" + user + "\" password=\"" + password + "\";");

-

- KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);

-

- consumer.subscribe(Collections.singletonList(topic));

- while (true) {

- ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(1000L));

- for (ConsumerRecord<String, String> record : records) {

- System.out.printf("主题 = %s, 分区 = %d, 位移 = %d, " + "消息键 = %s, 消息值 = %s\n",

- record.topic(), record.partition(), record.offset(), record.key(), record.value());

- }

- if (!records.isEmpty()) {

- try {

- // 提交消费位移

- consumer.commitSync();

- } catch (CommitFailedException exception) {

- System.out.println("commit failed....");

- }

- }

-

- }

- }

- }