
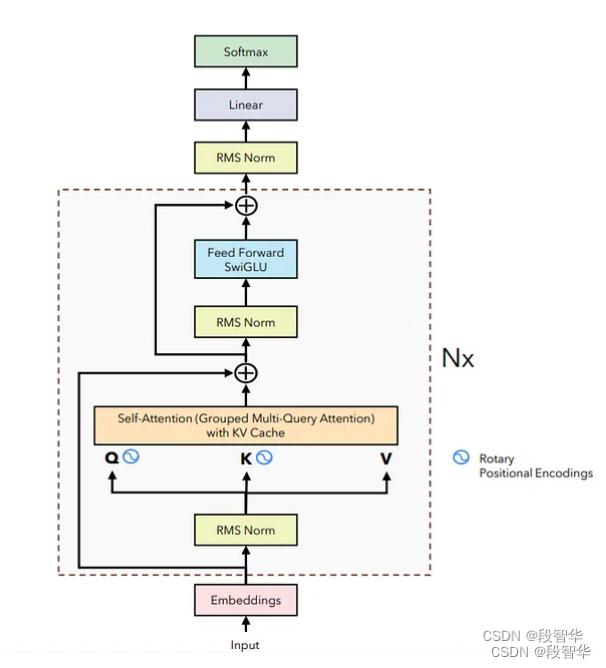

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 8): The Transformer Block


The Transformer Block

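Because this series focuses only on model inference, we look only at the Transformer block (named `EncoderBlock` in the code). The block is pre-norm: RMSNorm is applied to the input of the self-attention and to the input of the feed-forward network, and each sublayer's output is added back to its input through a residual connection.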

```python
import torch
import torch.nn as nn

# ModelArgs, SelfAttention, FeedForward and RMSNorm are assumed to come from
# the earlier parts of this series.


class EncoderBlock(nn.Module):
    def __init__(self, args: ModelArgs):
        super().__init__()
        self.n_heads = args.n_heads
        self.dim = args.dim
        self.head_dim = args.dim // args.n_heads

        self.attention = SelfAttention(args)
        self.feed_forward = FeedForward(args)

        # Normalization BEFORE the self-attention
        self.attention_norm = RMSNorm(args.dim, eps=args.norm_eps)
        # Normalization BEFORE the feed-forward
        self.ffn_norm = RMSNorm(args.dim, eps=args.norm_eps)

    def forward(self, x: torch.Tensor, start_pos: int, freqs_complex: torch.Tensor):
        # (B, seq_len, dim) + (B, seq_len, dim) -> (B, seq_len, dim)
        h = x + self.attention.forward(self.attention_norm(x), start_pos, freqs_complex)
        out = h + self.feed_forward.forward(self.ffn_norm(h))
        return out
```
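To see the wiring of the block in isolation, here is a minimal, self-contained sketch. It is not the series' actual `EncoderBlock`: `ToyPreNormBlock` is a hypothetical name, the two `nn.Linear` layers are placeholders standing in for `SelfAttention` and `FeedForward`, and the `RMSNorm` here is a simplified version written only for this example. The sketch checks two things: the block preserves the `(B, seq_len, dim)` shape, and when both sublayers output zero, only the residual path remains and the input passes through unchanged.

```python
import torch
import torch.nn as nn


class RMSNorm(nn.Module):
    """Simplified RMSNorm: rescale x by the reciprocal RMS of its last dimension."""

    def __init__(self, dim: int, eps: float = 1e-5):
        super().__init__()
        self.eps = eps
        self.weight = nn.Parameter(torch.ones(dim))

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        rms_inv = torch.rsqrt(x.pow(2).mean(dim=-1, keepdim=True) + self.eps)
        return self.weight * (x * rms_inv)


class ToyPreNormBlock(nn.Module):
    """Hypothetical stand-in for EncoderBlock: same wiring, placeholder sublayers."""

    def __init__(self, dim: int, eps: float = 1e-5):
        super().__init__()
        # Placeholders only (assumption): the real block uses SelfAttention
        # and FeedForward, which also take start_pos and freqs_complex.
        self.attention = nn.Linear(dim, dim, bias=False)
        self.feed_forward = nn.Linear(dim, dim, bias=False)
        self.attention_norm = RMSNorm(dim, eps)
        self.ffn_norm = RMSNorm(dim, eps)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Normalize first, apply the sublayer, then add the residual.
        h = x + self.attention(self.attention_norm(x))
        return h + self.feed_forward(self.ffn_norm(h))


torch.manual_seed(0)
block = ToyPreNormBlock(dim=16)
x = torch.randn(2, 5, 16)  # (B, seq_len, dim)

out = block(x)
shape_preserved = out.shape == x.shape

# Zero both placeholder sublayers: only the skip connections contribute.
with torch.no_grad():
    block.attention.weight.zero_()
    block.feed_forward.weight.zero_()
identity_when_zeroed = torch.equal(block(x), x)

print(shape_preserved, identity_when_zeroed)
```

Both flags should come out true. Because every sublayer maps `(B, seq_len, dim)` back to `(B, seq_len, dim)`, blocks of this form can be stacked one after another without any reshaping in between.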


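Series posts

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 1): Llama 3 Model Architecture

https://duanzhihua.blog.csdn.net/article/details/138208650

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 2): RoPE Positional Encoding

https://duanzhihua.blog.csdn.net/article/details/138212328

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 3): KV Cache

https://duanzhihua.blog.csdn.net/article/details/138213306

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 4): Grouped Multi-Query Attention

https://duanzhihua.blog.csdn.net/article/details/138216050

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 5): RMS (Root Mean Square) Normalization

https://duanzhihua.blog.csdn.net/article/details/138216630

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 6): SwiGLU Activation Function

https://duanzhihua.blog.csdn.net/article/details/138217261

Exploring and Building the LLaMA 3 Architecture: A Deep Dive into Components, Coding, and Inference Techniques (Part 7): Feed-Forward Network

https://duanzhihua.blog.csdn.net/article/details/138218095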