- 1SE-Net:Squeeze-and-Excitation Networks论文详解_se注意力机制论文

- 2技术开发知识库_开发过程专家知识库

- 3二叉树遍历方法——前、中、后序遍历(图解)_二叉树遍历前序中序后序

- 4为什么前端工程师的工资越来越高了?_前端为什么比后端工资高

- 5自动化平台测试开发方案(详解自动化平台开发)_自动化测试开发

- 6寻找数组中的最小值和最大值——编程之美2.10_dw法的取值中最大值和最小值

- 7Unity中关于SendMessage方法_安卓 unitysendmessage

- 8【AIGC】探索大语言模型中的词元化技术机器应用实例_大模型 词元划分

- 9win11如何安装及使用ncat(nc命令)_windows ncat

- 10数字信号处理|Matlab设计巴特沃斯低通滤波器(冲激响应不变法和双线性变换法)

七月论文审稿GPT第2版:用一万多条paper-review数据微调LLaMA2 7B最终反超GPT4_大模型auto review

赞

踩

目录

1.1 两大PDF解析器:nougat VS ScienceBeam

第三部分 对review数据的进一步处理:规范Review的格式且多聚一

3.2 为了让模型对review的学习更有迹可循:归纳出来4个要点且多聚一

3.2.1 设计更好的提示模板以让大模型帮梳理出来review语料的4个内容点

3.2.3 通过最终的prompt来处理review数据:ChatGPT VS 开源模型

3.2.4 对review数据的最后梳理:得到JSON文本的变体版且剔除长尾数据

3.3 (选读)相关工作之AcademicGPT:增量训练LLaMA2-70B,包含论文审稿功能

3.3.1 AcademicGPT: Empowering Academic Research

3.3.2 论文评审:借鉴ReviewAdvisor抽取出review的7个要点(类似我司借鉴斯坦福工作把review归纳出4个要点)

3.3.3 70B的AcademicGPT在论文审稿上效果不佳的原因

第四部分 模型的选型:从Mistral、Mistral-YaRN到LongLora LLaMA

4.1 前置知识:Mistral 7B、YaRN、LongLoRA/LongQLoRA

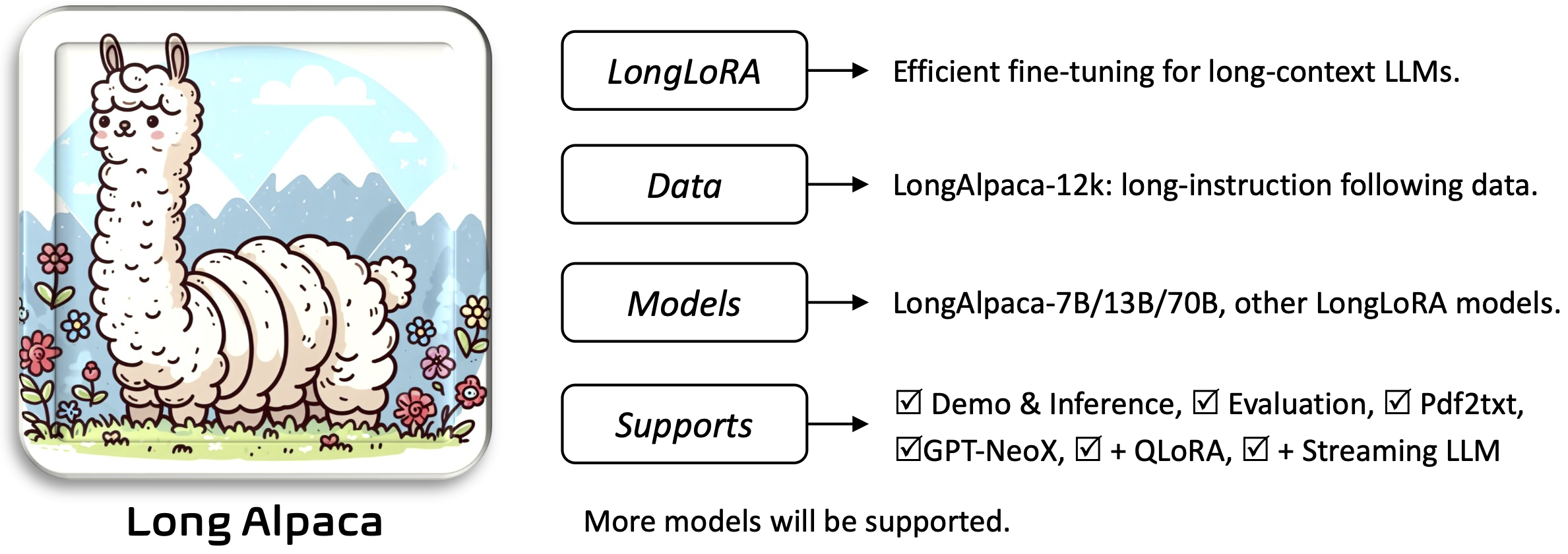

4.1.3 LongLoRA LLaMA与LongQLoRA LLaMA

4.2 模型怎么选,此三PK:Yarn-Mistral-7b-64k、Mistral-instruct、LLaMA-LongLoRA/LLaMA-LongQLoRA

4.2.2 直接通过llama factory微调Mistral-instruct

4.2.3 LLaMA2 7B chat-LongLoRA:成功

4.2.3.1 不染的工作:大改LongLoRA源码跑第一轮

4.2.3.2 阿李的工作:小改LongLoRA源码跑第二轮

4.2.4 基于LongQLoRA + 一万多条paper-review数据集微调LLaMA2 7B chat:成功

第五部分 模型的训练:如何微调LLaMA2、Yarn-Mistral

5.1 LLaMA2 7b chat + LongQLoRA训练

5.2 LLaMA2 7b chat + LongLoRA训练

5.2.1 [不染]Llama2-7b-chat + LongLoRA源码 + 训练/推理

5.2.2 [阿李]LLaMA-2-7b-chat + LongLoRA训练

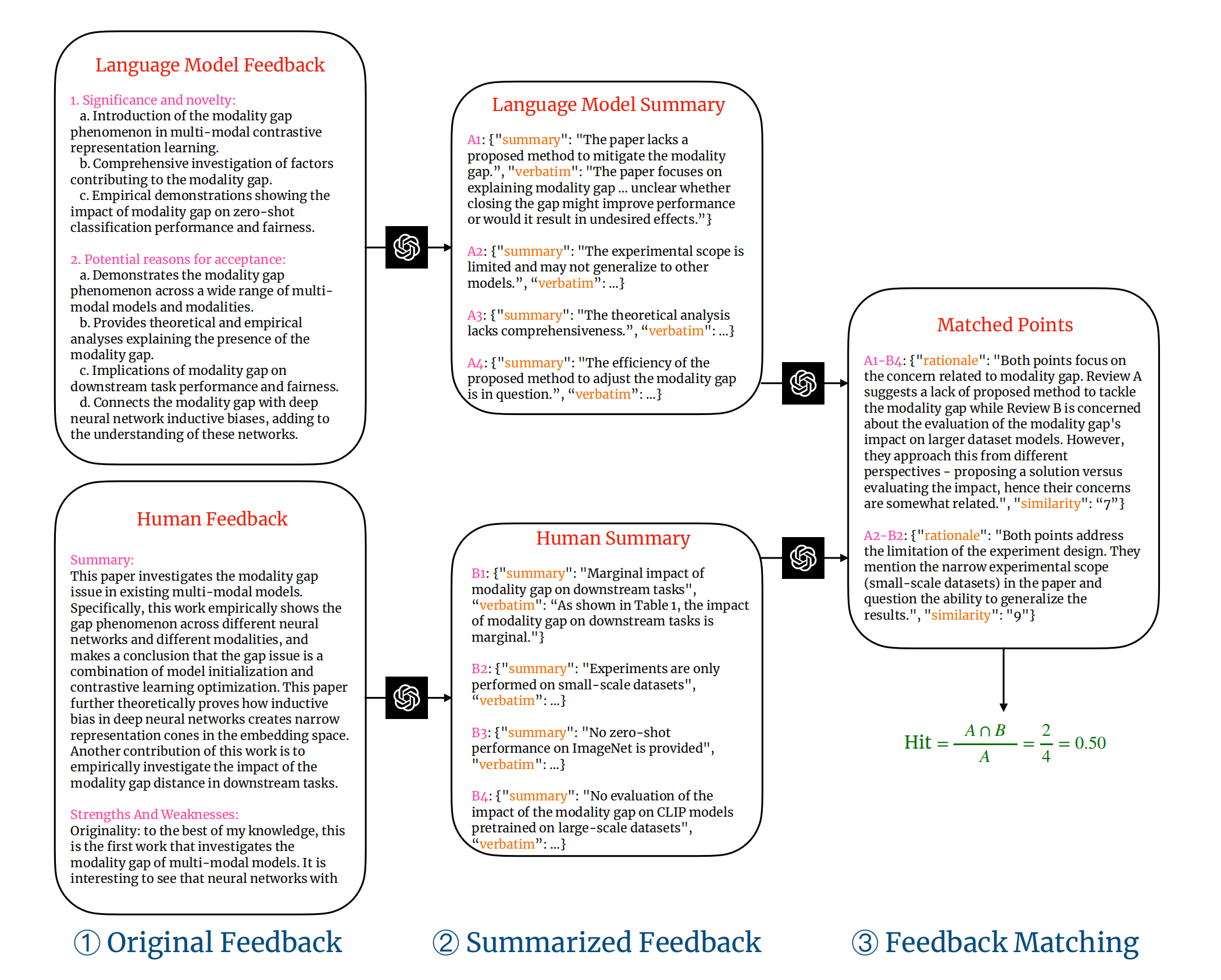

6.1.2 基于「重叠度上命中率指标」衡量LLM评估效果的流程

6.2 对LLaMA2 7B chat-LongQLoRA效果的评估:强过GPT3.5和GPT4

6.2.1 让我司审稿模型、GPT3.5分别对测试集的paper输出review

6.2.2 对review的处理:格式转换、为review项标注观点序号

6.2.3 对人工、llama2、GPT3.5输出的观点项进行匹配

6.2.4 我司审稿模型与GPT大PK:计算命中率与命中数,一决胜率

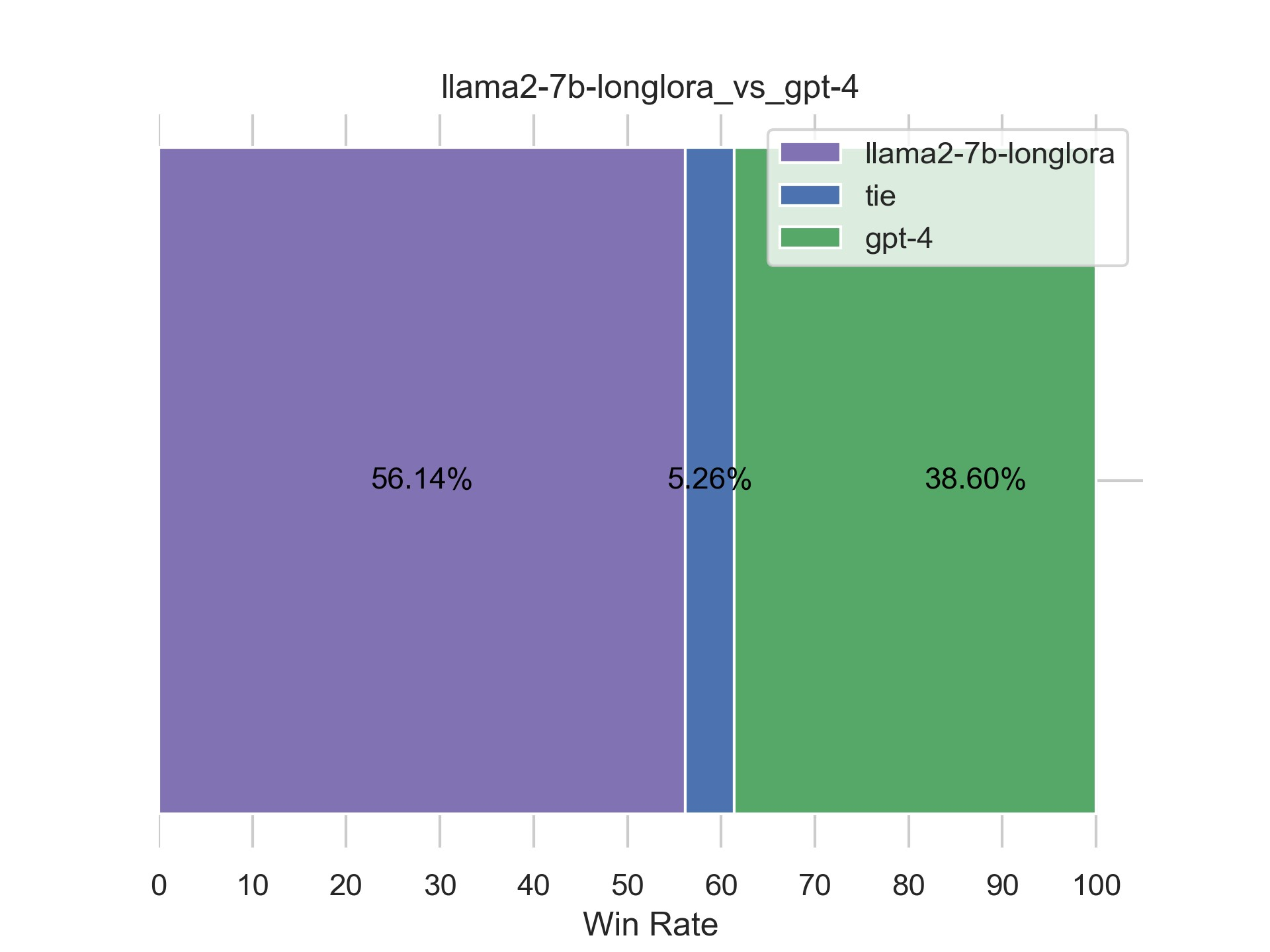

6.3 对LLaMA2 7B chat-LongLoRA效果的评估:依然强过GPT3.5和GPT4

6.3.1 针对「5.2.1 [不染]Llama2-7b-chat + LongLoRA源码 + 训练/推理」的评估

6.3.2 针对「5.2.2 [阿李]LLaMA-2-7b-chat + LongLoRA训练」的评估

前言

如此前这篇文章《学术论文GPT的源码解读与微调:从ChatPaper到七月论文审稿GPT第1版》中的第三部分所述,对于论文的摘要/总结、对话、翻译、语法检查而言,市面上的学术论文GPT的效果虽暂未有多好,可至少还过得去,而如果涉及到论文的修订/审稿,则市面上已有的学术论文GPT的效果则大打折扣

原因在哪呢?本质原因在于无论什么功能,它们基本都是基于API实现的,而关键是API毕竟不是万能的,API做翻译/总结/对话还行,但如果要对论文提出审稿意见,则API就捉襟见肘了,故为实现更好的review效果,需要使用特定的对齐数据集进行微调来获得具备优秀review能力的模型

继而,我们在第一版中,做了以下三件事

- 爬取了3万多篇paper、十几万的review数据,并对3万多篇PDF形式的paper做解析(review数据爬下来之后就是文本数据,不用做解析)

当然,paper中有被接收的、也有被拒绝的 - 为提高数据质量,针对paper和review做了一系列数据处理

当然,主要是针对review数据做处理 - 基于RWKV进行微调,然因其遗忘机制比较严重,故最终效果不达预期

所以,进入Q4后,我司论文审稿GPT的项目团队开始做第二版(我司自从23年Q3在教育团队之外,我再带队成立LLM项目团队之后,一直在不断迭代各种LLM项目,后来每个项目各自一个项目组,其中第二项目组负责论文审稿GPT,23年Q3由我和阿荀组成,23年Q4增加了不染、雪狼、朝阳,24年Q1增加了文弱、阿李),并着重做以下三大方面的优化

- 数据的解析与处理的优化,meta的一个ocr即「nougat」能提取出LaTeX,当然,我们也在同步对比另一个解析器sciencebeam的效果

- 借鉴GPT4做审稿人那篇论文,让ChatGPT API帮爬到的review语料,梳理出来 以下4个方面的内容

1 重要性和新颖性

2 论文被接受的原因

3 论文被拒绝的原因

4 改进建议 - 模型本身的优化,比如llama longlora

第一部分 第二版对论文PDF数据的解析

1.1 两大PDF解析器:nougat VS ScienceBeam

1.1.1 Meta nougat

nougat是Meta针对于学术PDF文档的开源解析工具(其主页、其代码仓库),以OCR方法为主线,较之过往解析方案最突出的特点是可准确识别出公式、表格并将其转换为可适应Markdown格式的文本。缺陷就是转换速读较慢、且解析内容可能存在一定的乱序

和另一个解析器sciencebeam做下对比,可知

- nougat比较好的地方在于可以把图片公式拆解成LaTeX源码,另外就是识别出来的内容可以通过“#”符号来拆解文本段

缺陷就是效率很低、非常慢,拿共约80页的3篇pdf来解析的话,大概需要2分钟,且占用20G显存,到时候如果要应用化,要让用户传pdf解析的话,部署可能也会有点难度 - sciencebeam的话就是快不少,同样量级的3篇大约1分钟内都可以完成,和第1版用的SciPDF差不多,只需要CPU就可以驱动起来了

当然,还要考虑的是解析器格式化的粒度,比如正文拆成了什么样子的部分,后续我们需不需要对正文的特定部分专门取出来做处理,如果格式化粒度不好的话,可能会比较难取出来

- 环境配置

- # 新建虚拟环境

- conda create -n nougat-ocr python=3.10

- # 激活虚拟环境

- conda activate nougat-ocr

- # 使用pip安装必要库(镜像源安装可能会出现版本冲突问题,建议开启代理使用python官方源进行安装)

- pip install nougat-ocr -i https://pypi.org/simple

- 使用方法

- # 初次使用时会自动获取最新的权重文件

- # 针对单个pdf文件

- nougat {pdf文件路径} -o {解析输出目录}

- # 针对多个pdf所在文件夹

- nougat {pdf目录路径} -o {解析输出目录}

- 测试示例

标题及开头 公式识别与转换

公式识别与转换

脚注识别

1.1.2 ScienceBeam

ScienceBeam是经典PDF文档解析器GROBID的变体项目,是论文《Can large language models provide useful feedback on research papers? A large-scale empirical analysis》所采用的文本提取方法,同其他较早期的解析方法一样,对公式无法做出LateX层面的解析,且该解析器仅支持在X86架构的Linux系统中使用

// 待更

1.2 对2.6万篇paper的解析

最终,针对有review的2.6万篇paper (第一版 全部paper3万篇,其中带review的2.5万篇;第二版 全部paper3.2万篇,其中带review的2.6万篇 )

1.2.1 nougat的解析过程

- 我司审稿项目组的其中一位“雪狼”用的1张显存为24G的P40解析完其中一半,另外一半由另一位“不染”用的1张显存为48G的A40解析完

- 因nougat解析起来太耗资源,加之当时我们的卡有限,所以这个PDF的解析,我们便用了一两周..

1.2.2 ScienceBeam的解析结果

ScienceBeam解析的结果为字典,其中涉及的键有

- title: Paper的标题,有部分会因为解析不出而留空,可以使用相应的OpenReview数据的标题来代替

- abstract: Paper的摘要,可能有部分会因为解析不出而留空,可以使用相应的OpenReview数据的摘要来代替

- introduction: Paper的介绍,通常会包含在main_content中

- figure_and_table_captions: Paper中图表下方的文字描述

- section_titles: Paper各个小节的标题

- main_content: Paper的正文(含introduction)

实际取用的部分是其中的title、abstract、figure_and_table_captions以及main_content

且会加入[TITLE]、[ABSTRACT]、[CAPTIONS]、[CONTENT]特殊符号加以区分Paper的各个部分,考虑到[CONTENT]可能会提及[CAPTIONS]中的内容,因此将[CAPTIONS]置于[CONTENT]之前

- [TITLE]

- 标题

-

- [ABSTRACT]

- 摘要

-

- [CAPTIONS]

- 各图表描述

-

- [CONTENT]

- 其余正文

// 待更

第二部分 第二版对paper和review数据的处理

2.1 第一版对review数据的处理

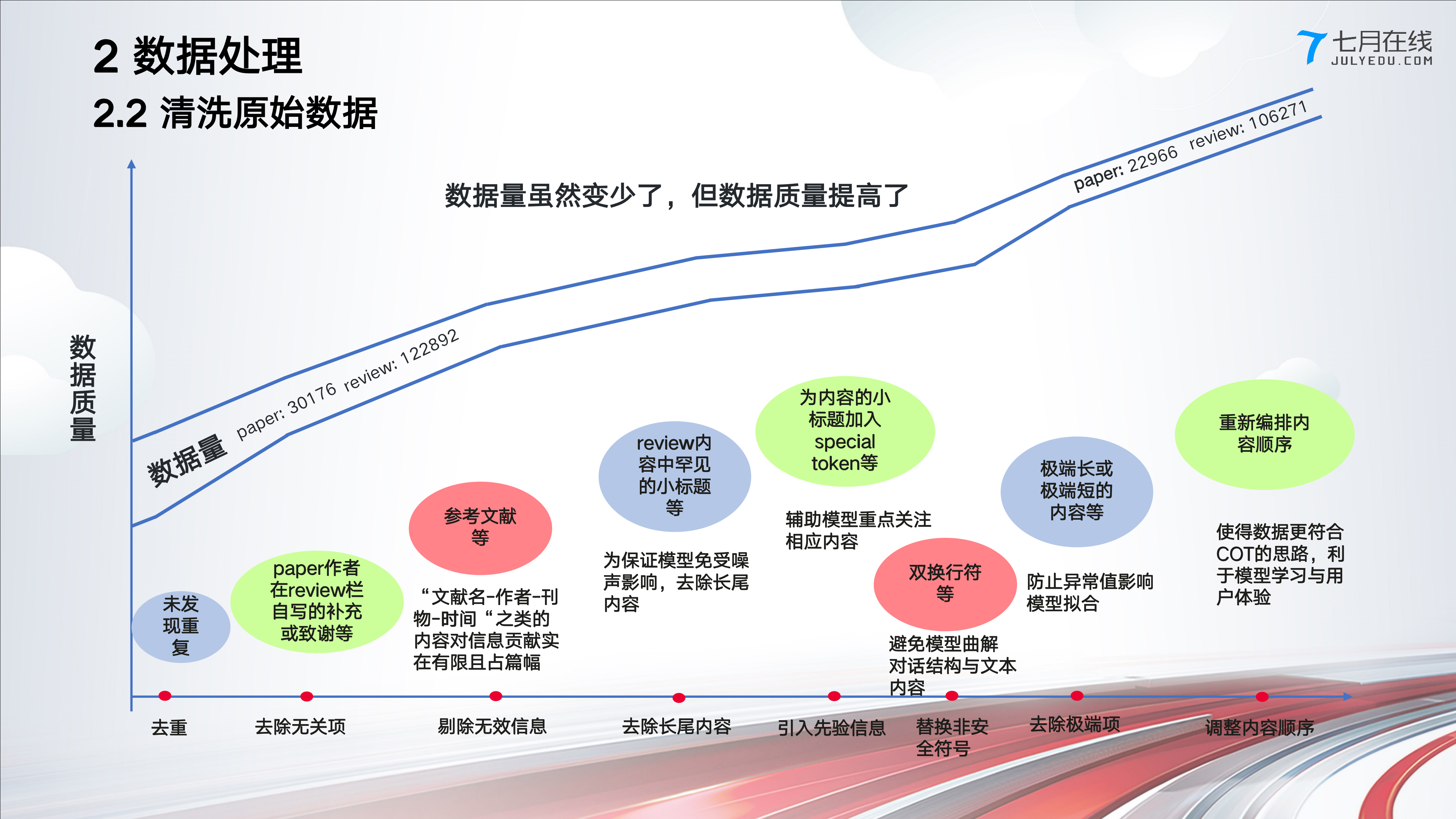

在第一版中,我们对review数据做了如下处理

总之

- 第一版中,面向paper 更多是做的PDF解析(解析器解析出来的正文直接就没包含reference)

第二版中,对于paper的数据处理沿用第一版的处理方法:解析完了之后 不再做什么处理 - 第一版中,面向review 则做的如上图所示的数据处理(注意,review无解析一说,毕竟如前言中所说,review数据爬下来之后就是文本数据,不用做解析)

那第二版 针对review数据的处理呢?详见下文

2.2 第二版对review数据的处理:去掉作者的回复、去除过短的review

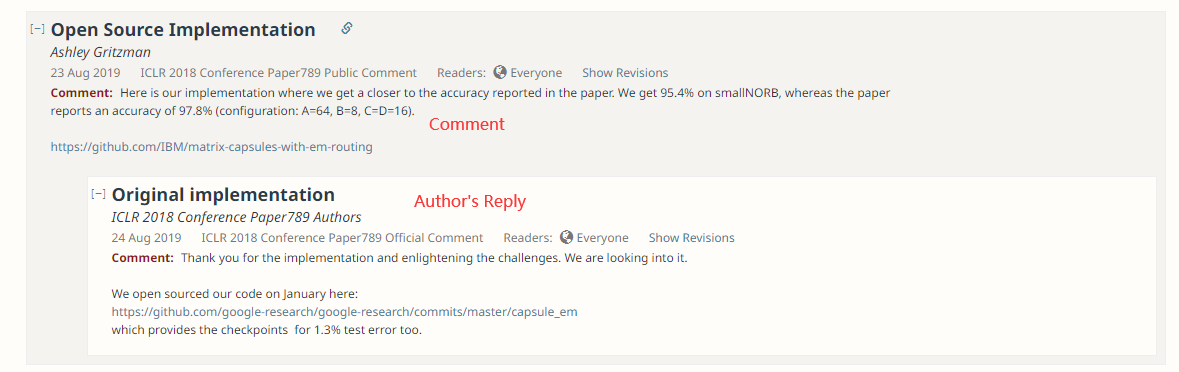

以“b_forum”字段为与Paper数据所关联的外键,“b_forum”为对应Paper的唯一标识符(id)

- 某篇paper所对应的Review数据如果只是单行即为单个Review

- 但很多时候,单篇Paper可能对应有多个Review,故存在多行数据下b_forum相同的情况

针对原始数据,我们做以下4点处理

- 过滤需求外的Review

主要是去掉作者自己的回复,以及对paper的评论

- 将Review字符串化

- 过滤内容过少的Review

- 将Review的内容规范出4个要点且进行“多聚一”,下文详述

本部分数据处理的代码,暂在七月在线的「大模型项目开发线上营」中见

// 待更

第三部分 对review数据的进一步处理:规范Review的格式且多聚一

3.1 斯坦福:让GPT4首次当论文的审稿人

近日,来自斯坦福大学等机构的研究者把数千篇来自Nature、ICLR等的顶会文章丢给了GPT-4,让它生成评审意见、修改建议,然后和人类审稿人给出的意见相比较

- 在GPT4给出的意见中,超50%和至少一名人类审稿人一致,并且超过82.4%的作者表示,GPT-4给出的意见相当有帮助

- 这个工作总结在这篇论文中《Can large language models provide useful feedback on research papers? A large-scale empirical analysis》,这是其对应的代码仓库

所以,怎样让LLM给你审稿呢?具体来说,如下图所示

- 爬取PDF语料

- 接着,解析PDF论文的标题、摘要、图形、表格标题、主要文本

- 然后告诉GPT-4,你需要遵循业内顶尖的期刊会议的审稿反馈形式,包括四个部分

成果是否重要、是否新颖(signifcance andnovelty)

论文被接受的理由(potential reasons for acceptance)

论文被拒的理由(potential reasons for rejection)

改进建议(suggestions for improvement)- Your task now is to draft a high-quality review outline for a top-tierMachine Learning (ML) conference fora submission titled “{PaperTitle}”:

- ```

- {PaperContent}

- ```

- ======

- Your task:

- Compose a high-quality peer review of a paper submitted to a Nature family journal.

- Start by "Review outline:".

- And then:

- "1. Significance and novelty"

- "2. Potential reasons for acceptance"

- "3. Potential reasons for rejection", List multiple key reasons. For each key reason, use **>=2 sub bullet points** to further clarify and support your arguments in painstaking details. Be as specific and detailed as possible.

- "4. Suggestions for improvement", List multiple key suggestions. Be as specific and detailed as possible.

- Be thoughtful and constructive. Write Outlines only.

- 最终,GPT-4针对上图中的这篇论文一针见血地指出:虽然论文提及了模态差距现象,但并没有提出缩小差距的方法,也没有证明这样做的好处

3.2 为了让模型对review的学习更有迹可循:归纳出来4个要点且多聚一

3.2.1 设计更好的提示模板以让大模型帮梳理出来review语料的4个内容点

上一节介绍的斯坦福这个让GPT4当审稿人的工作,对我司做论文审稿GPT还挺有启发的

- 正向看,说明我司这个方向是对的,至少GPT4的有效意见超过50%

- 反向看,说明即便强如GPT4,其API的效果还是有限:近一半意见没被采纳,证明我司做审稿微调的必要性、价值性所在

- 审稿语料的组织 也还挺关键的,好让模型学习起来有条条框框 有条理 分个 1 2 3 4 不混乱,瞬间get到review描述背后的逻辑、含义

比如要是我们爬取到的审稿语料 也能组织成如下这4块,我觉得 就很强了,模型学习起来 会很快

1) 成果是否重要、是否新颖

2) 论文被接受的理由

3) 论文被拒的理由

4) 改进建议

对于上面的“第三大点 审稿语料的组织”,我们(特别是阿荀,其次我)创造性的想出来一个思路,即通过提示模板让大模型来帮忙梳理咱们爬的审稿语料,好把审稿语料 梳理归纳出来上面所说的4个方面的常见review意见

那怎么设计这个提示模板呢?借鉴上节中斯坦福的工作,提示模板可以在斯坦福那个模板基础上,进一步优化如下

// 暂在「大模型项目开发线上营」中见,至于在本文中的更新,待更

3.2.2 如何让归纳出来的review结果更全面:多聚一

我们知道一篇paper存在多个review,而对review数据的学习有三种模式

- 一种是多选一

但多选一有个问题,即是:如果那几个review都不是很全面呢,然后多选一的话会不会对review信息的丰富程度有损 - 一种是多聚一

对多个review做一下总结归纳(阿荀、我先后想到),相当于综合一下,此时还是可以用GPT 3.5 16K或开源模型帮做下review数据的多聚一

- 一种是多轮交互

这种工作量比较大,非首选

如此,最终清洗之后的23176篇paper的review,用多聚一的思路搞的话,便可以直接一次调用支持16K的GPT 3.5(毕竟16K的长度足够,可以把所有的review数据一次性给到GPT3.5 16K),或开源模型让它直接从所有review数据里提炼出4个要点

3.2.3 通过最终的prompt来处理review数据:ChatGPT VS 开源模型

综上,即是考虑多聚一策略来处理Review数据,主要是对Prompting提出了更高的要求:

- 要求大模型聚合所有Review的观点来进行摘要

- 为保证规整Review的统一性,需提供具体的类别(如新颖性、接受原因、拒绝原因、改进建议等)对观点进行明确“分类”

- 强调诚实性来缓解幻觉,在prompt中提供“示弱”选项(如回复“不知道”或允许结果为空等)

- 为使得后续工作更容易从大模型的输出中获取到所关注的信息,需对其输出格式进行要求

上一节斯坦福研究者对模型review效果评估的工作看似很完美,不过其中有个小问题,即尽管LLM可以根据指令遵循来基于Prompt的要求返回JSON格式的内容,但并非每次都能生成得到利于解析的JSON格式内容

相当于咱们得基于上述要求来设计Prompt (最终设计好的prompt暂在七月在线的「大模型项目开发线上营」里讲)

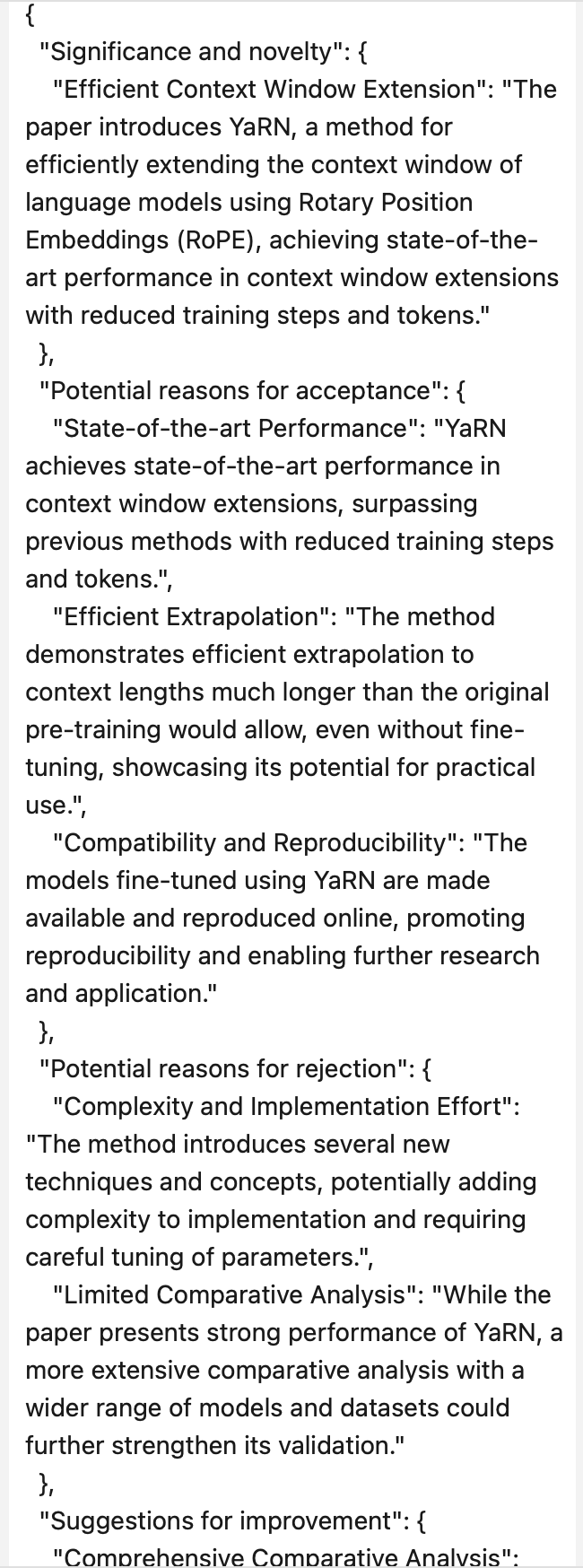

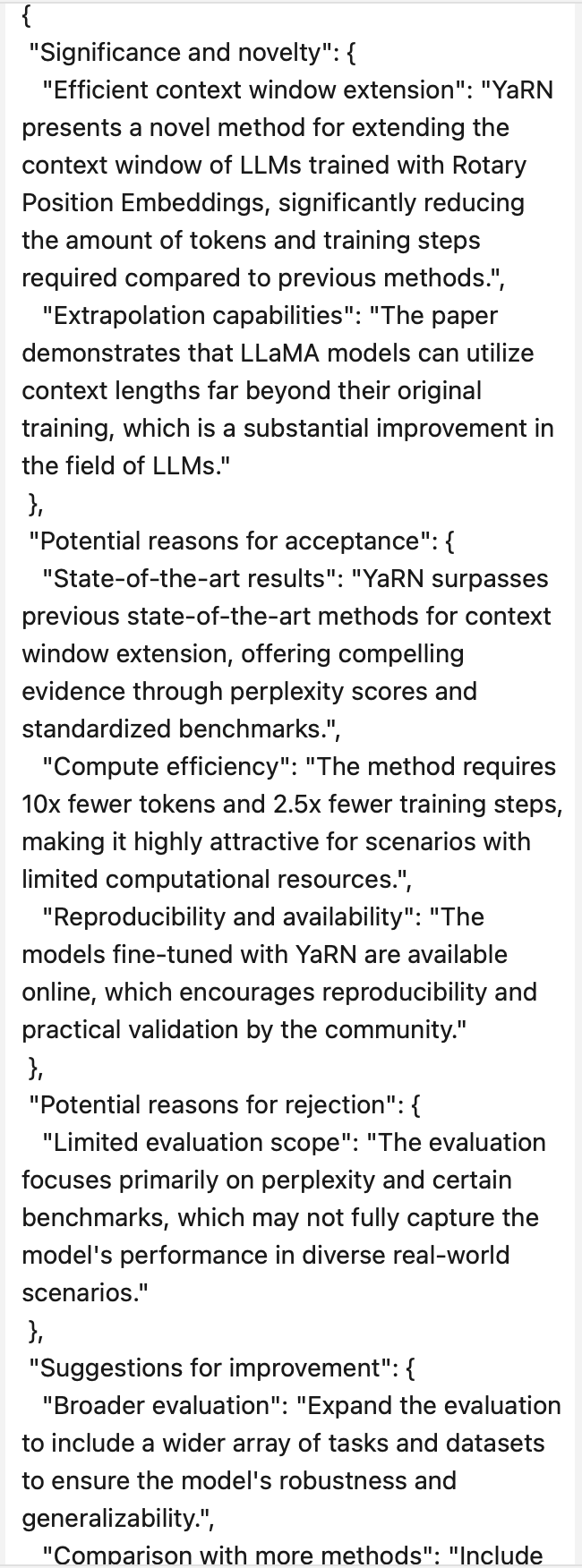

当我们最终的prompt设计好了之后,接下来,便可以让大模型通过该prompt处理review数据了,那我们选用哪种大模型呢,是ChatGPT还是开源模型,为此,我们对比了以下三种大模型

- zephyr-7b-alpha

- Mistral-7B-Instruct-v0.1

- OpenAI刚对外开放的gpt-3.5-turbo-1106,即上一节图中的GPT3.5 Turbo 16K

经过对比发现

- 用OpenAI的gpt-3.5-turbo-1106效果相对更好些,能力更强 效果更好,加之经实际研判,费用也还好 不算高

- 更让OpenAI脱颖而出的是,gpt-4-1106-preview和gpt-3.5-turbo-1106版本中提供了JSON mode,在接口中传入response_format={"type": "json_object"}启用该模式、并在prompt中下达“以JSON格式返回”的指示后,将会返回完全符合JSON格式的内容

// 具体怎么个对比法,以及怎么个效果更好,在大模型线上营里见

不过我们在实际使用的过程中,发现OpenAI对API的访问有各种限制且限制的比较严格(即对用户有多层限制:https://platform.openai.com/docs/guides/rate-limits/usage-tiers?context=tier-one,比如分钟级请求限制、每日请求限制、分钟级token限制、每日token限制 ),访问经常会假死不给返回、也没报错,所以很多时间耗费在被提示“访问超限”,然后等待又重复访问、再被提示超限这样的过程,使得我们一开始使用OpenAI的官方接口23年11.24到11.30大概7天才出了2600多条,并且后续限制访问的出现频率愈加高,头疼..

- 后面实在没办法,我们找了一个国内的二手商,最终调二手商的接口,而二手商调OpenAI的接口,于此,用户访问频率限制、代理等问题就让二手商那边解决了(我们也琢磨了下为何二手商可以解决这类访问限制的问题,根据以往的经验,我们判断,应该是二手商那边的OpenAI账户很多、代理路线很多,做了统一调度管理,然后在用户调用的时候选取当前低频的官方账户来访问官方接口,时不时还自动切换下代理,要知道一个代理被用来高频访问OpenAI的时候,其实有可能是会被放进黑名单的,所以持续维护一个代理池来做自动切换也很重要 )

当然,二手商的接口晚上(或者别的高峰期)有时候还是会返回访问受限的提示,那时候应该用的人比较多,导致即使“最低频访问”的官方接口,访问频率也不算低了,所以也会被访问受限 - 最终,使用二手商的中转接口,12.04到12.08大概5天出了9000多条

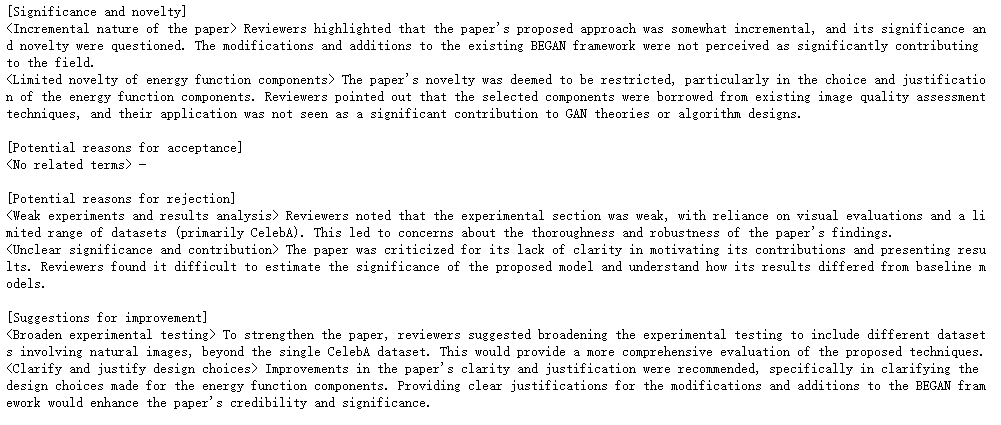

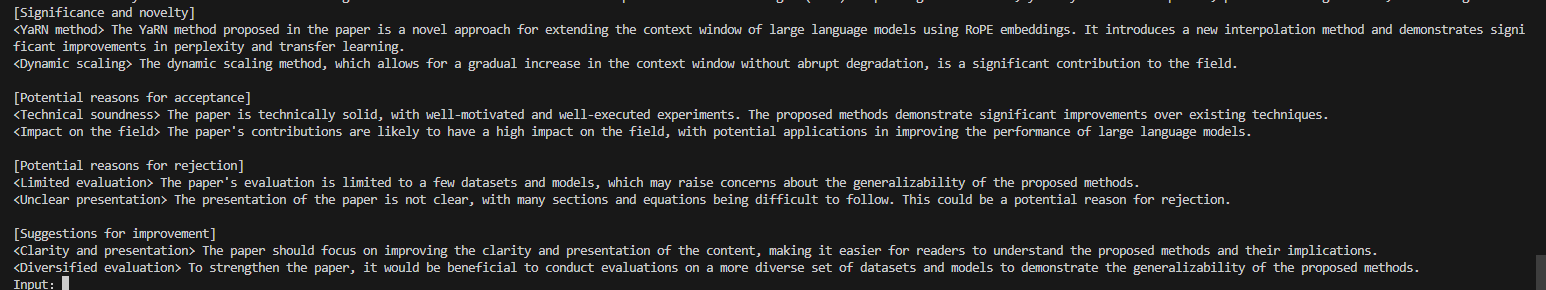

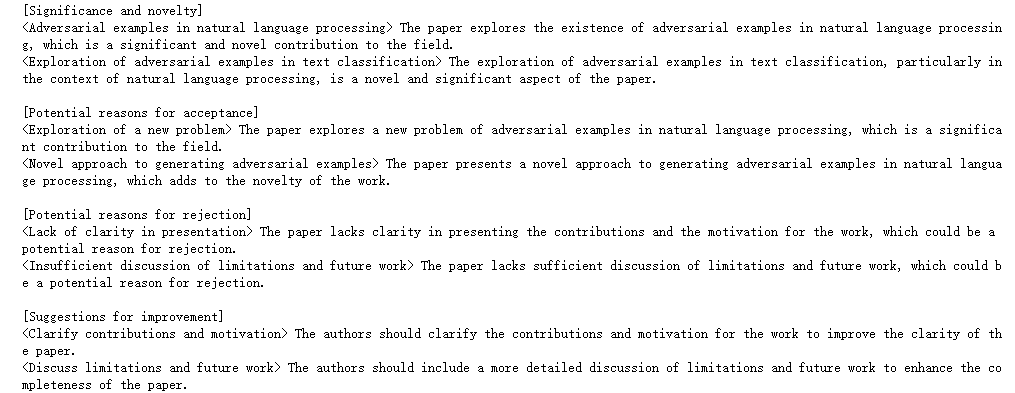

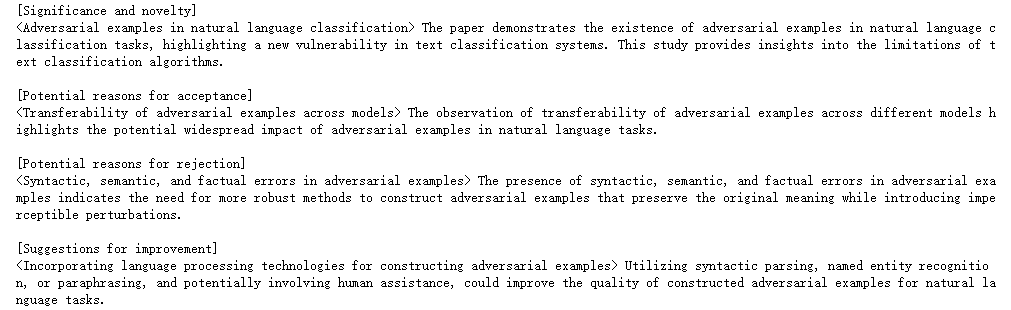

3.2.4 对review数据的最后梳理:得到JSON文本的变体版且剔除长尾数据

原本的经过“多聚一”review侧的数据由JSON mode返回所得,均为JSON格式(字典),大体形式如下

但考虑到后续要微调的开源模型对JSON格式的关注程度可能不足,学习JSON文本可能存在一定的困难,故最终将上述JSON格式的内容转为如下的格式(可以理解为JSON文本的变体版)

- [Significance and novelty]

- <大体描述> 具体描述

- <大体描述> 具体描述

- ...

-

- [Potential reasons for acceptance]

- <大体描述> 具体描述

- <大体描述> 具体描述

- ...

-

- [Potential reasons for rejection]

- <大体描述> 具体描述

- <大体描述> 具体描述

- ...

-

- [Suggestions for improvement]

- <大体描述> 具体描述

- <大体描述> 具体描述

- ...

即如下图所示

且依据内部文件《[正式方案]长尾数据清洗及后续安排》,文本长度过少的Review可能仅包含有一些无关紧要的信息,因此还可以考虑将长度过少的Review进行剔除(当然,paper侧也得剔除相关的长尾数据)

经过一系列操作之后(包括对review做多聚一),数据量从23176篇带多条review的paper降到了15566条paper-review(一篇paper对应一条大review)

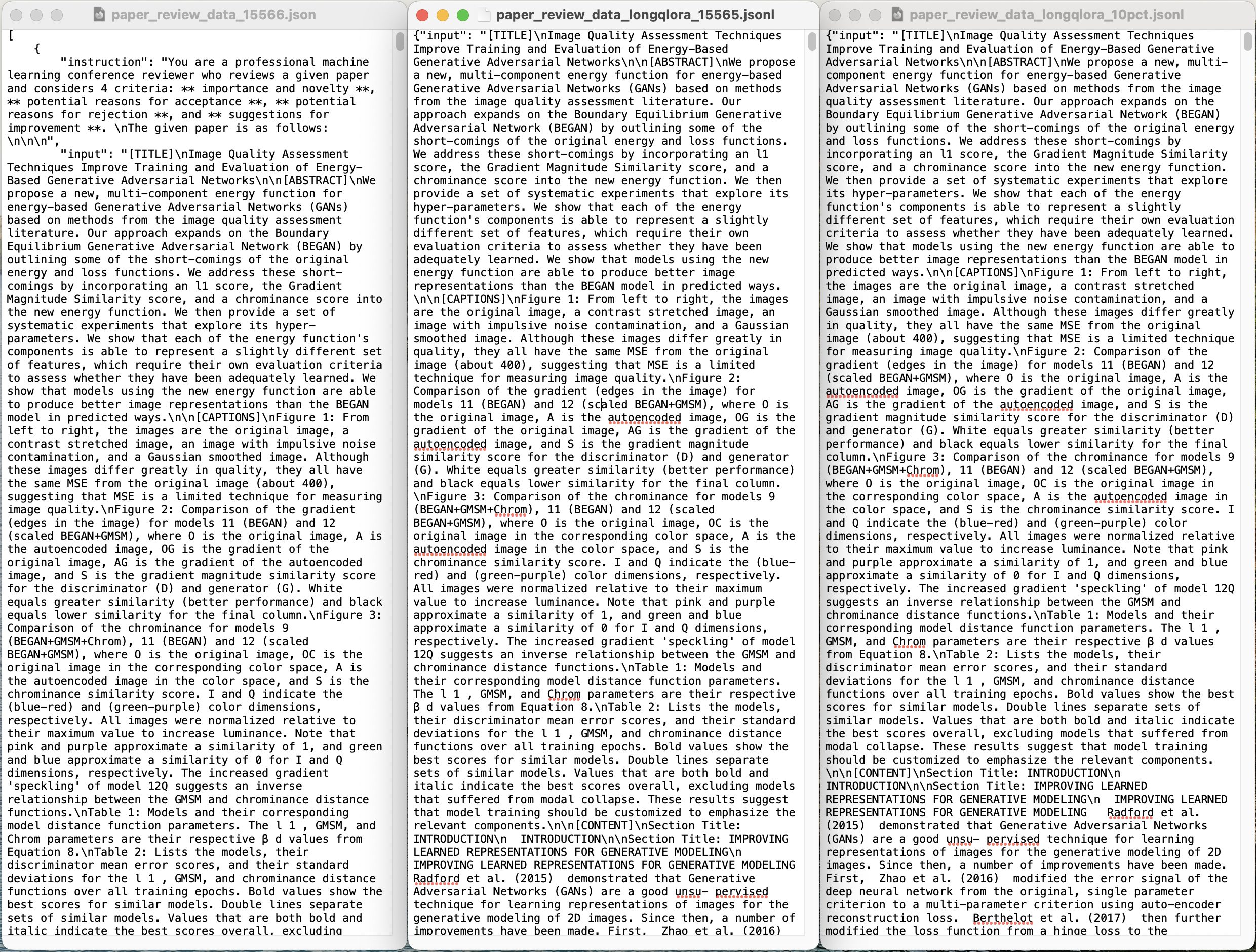

接着通过设计相关指令,且结合处理后的Paper及Review(一篇paper对应一篇review),最终得到一份类Alpaca格式的数据集(instruction-input-output三元组数据),如下所示(至于完整数据集,我司的大模型项目开发线上营里见)

- [

- {

- "instruction": "You are a professional machine learning conference reviewer who reviews a given paper and considers 4 criteria: ** importance and novelty **, ** potential reasons for acceptance **, ** potential reasons for rejection **, and ** suggestions for improvement **. \nThe given paper is as follows: \n\n\n",

- "input": "[TITLE]\nImage Quality Assessment Techniques Improve Training and Evaluation of Energy-Based Generative Adversarial Networks\n\n[ABSTRACT]\nWe propose a new, multi-component energy function for energy-based Generative Adversarial Networks (GANs) based on methods from the image quality assessment literature. Our approach expands on the Boundary Equilibrium Generative Adversarial Network (BEGAN) by outlining some of the short-comings of the original energy and loss functions. We address these short-comings by incorporating an l1 score, the Gradient Magnitude Similarity score, and a chrominance score into the new energy function. We then provide a set of systematic experiments that explore its hyper-parameters. We show that each of the energy function's components is able to represent a slightly different set of features, which require their own evaluation criteria to assess whether they have been adequately learned. We show that models using the new energy function are able to produce better image representations than the BEGAN model in predicted ways.\n\n[CAPTIONS]\nFigure 1: From left to right, the images are the original image, a contrast stretched image, an image with impulsive noise contamination, and a Gaussian smoothed image. Although these images differ greatly in quality, they all have the same MSE from the original image (about 400), suggesting that MSE is a limited technique for measuring image quality.\nFigure 2: Comparison of the gradient (edges in the image) for models 11 (BEGAN) and 12 (scaled BEGAN+GMSM), where O is the original image, A is the autoencoded image, OG is the gradient of the original image, AG is the gradient of the autoencoded image, and S is the gradient magnitude similarity score for the discriminator (D) and generator (G). White equals greater similarity (better performance) and black equals lower similarity for the final column.\nFigure 3: Comparison of the chrominance for models 9 (BEGAN+GMSM+Chrom), 11 (BEGAN) and 12 (scaled BEGAN+GMSM), where O is the original image, OC is the original image in the corresponding color space, A is the autoencoded image in the color space, and S is the chrominance similarity score. I and Q indicate the (blue-red) and (green-purple) color dimensions, respectively. All images were normalized relative to their maximum value to increase luminance. Note that pink and purple approximate a similarity of 1, and green and blue approximate a similarity of 0 for I and Q dimensions, respectively. The increased gradient 'speckling' of model 12Q suggests an inverse relationship between the GMSM and chrominance distance functions.\nTable 1: Models and their corresponding model distance function parameters. The l 1 , GMSM, and Chrom parameters are their respective β d values from Equation 8.\nTable 2: Lists the models, their discriminator mean error scores, and their standard deviations for the l 1 , GMSM, and chrominance distance functions over all training epochs. Bold values show the best scores for similar models. Double lines separate sets of similar models. Values that are both bold and italic indicate the best scores overall, excluding models that suffered from modal collapse. These results suggest that model training should be customized to emphasize the relevant components.\n\n[CONTENT]\nSection Title: INTRODUCTION\n INTRODUCTION\n\nSection Title: IMPROVING LEARNED REPRESENTATIONS FOR GENERATIVE MODELING\n IMPROVING LEARNED REPRESENTATIONS FOR GENERATIVE MODELING Radford et al. (2015) demonstrated that Generative Adversarial Networks (GANs) are a good unsu- pervised technique for learning representations of images for the generative modeling of 2D images. Since then, a number of improvements have been made. First, Zhao et al. (2016) modified the error signal of the deep neural network from the original, single parameter criterion to a multi-parameter criterion using auto-encoder reconstruction loss. Berthelot et al. (2017) then further modified the loss function from a hinge loss to the Wasserstein distance between loss distributions. For each modification, the proposed changes improved the resulting output to

- # 中间一大段略去..

- The first row is model 1, which corresponds with the original BEGAN model. Rows 2-12 represent our experiments. Each cell represents the output of a random sample. \n",

- "output": "[Significance and novelty]\n<Incremental nature of the paper> Reviewers highlighted that the paper's proposed approach was somewhat incremental, and its significance and novelty were questioned. The modifications and additions to the existing BEGAN framework were not perceived as significantly contributing to the field.\n<Limited novelty of energy function components> The paper's novelty was deemed to be restricted, particularly in the choice and justification of the energy function components. Reviewers pointed out that the selected components were borrowed from existing image quality assessment techniques, and their application was not seen as a significant contribution to GAN theories or algorithm designs.\n\n[Potential reasons for acceptance]\n<No related terms> -\n\n[Potential reasons for rejection]\n<Weak experiments and results analysis> Reviewers noted that the experimental section was weak, with reliance on visual evaluations and a limited range of datasets (primarily CelebA). This led to concerns about the thoroughness and robustness of the paper's findings.\n<Unclear significance and contribution> The paper was criticized for its lack of clarity in motivating its contributions and presenting results. Reviewers found it difficult to estimate the significance of the proposed model and understand how its results differed from baseline models.\n\n[Suggestions for improvement]\n<Broaden experimental testing> To strengthen the paper, reviewers suggested broadening the experimental testing to include different datasets involving natural images, beyond the single CelebA dataset. This would provide a more comprehensive evaluation of the proposed techniques.\n<Clarify and justify design choices> Improvements in the paper's clarity and justification were recommended, specifically in clarifying the design choices made for the energy function components. Providing clear justifications for the modifications and additions to the BEGAN framework would enhance the paper's credibility and significance.\n\n"

- },

- {

- "instruction": "You are a professional machine learning conference reviewer who reviews a given paper and considers 4 criteria: ** importance and novelty **, ** potential reasons for acceptance **, ** potential reasons for rejection **, and ** suggestions for improvement **. \nThe given paper is as follows: \n\n\n",

- "input": "[TITLE]\nLearning Independent Features with Adversarial Nets for Non-linear ICA\n\n[ABSTRACT]\nReliable measures of statistical dependence could potentially be useful tools for learning independent features and performing tasks like source separation using Independent Component Analysis (ICA). Unfortunately, many of such measures, like the mutual information, are hard to estimate and optimize directly. We propose to learn independent features with adversarial objectives (Goodfellow et al. 2014, Arjovsky et al. 2017) which optimize such measures implicitly. These objectives compare samples from the joint distribution and the product of the marginals without the need to compute any probability densities. We also propose two methods for obtaining samples from the product of the marginals using either a simple resampling trick or a separate parametric distribution. Our experiments show that this strategy can easily be applied to different types of model architectures and solve both linear and non-linear ICA problems.\n\n\n[CAPTIONS]\nFigure 1: Schematic representation of the entire system for learning non-linear ICA. Specific func- tional shapes can be enforced by choosing a suitable decoder architecture.\nFigure 2: The decoder architecture used for the PNL experiments. It can only learn transformations in which a linear transformation is followed by the application of non-linear scalar functions to each of the dimensions.\nFigure 3: Source signals used in the experiments.\nFigure 4: Convergence plots for the linear synthetic source task.\nTable 1: Maximum correlation results on all tasks for the synthetic data. A 'g' in the suffix of the model name indicates that a separate generator network was used instead of the resampling method. Two scores separated by a '/' indicate that the first score was obtained using the model selection described in Section 6.1 while the second score is simply the best score a posteriori. Parentheses refer contain the standard deviation of the scores multiplied with 10 −4 .\nTable 2: Maximum correlation results on all tasks for the audio data. A 'g' in the suffix of the model name indicates that a separate generator network was used instead of the resampling method. Two scores separated by a '/' indicate that the first score was obtained using the model selection described in Section 6.1 while the second score is simply the best score a posteriori. Parentheses refer contain the standard deviation of the scores multiplied with 10 −4 .\n\n[CONTENT]\nSection Title: INTRODUCTION\n INTRODUCTION When unsupervised models are developed to learn interesting features of data, this often implies that each feature represents some interesting unique property of the data that cannot easily be derived from the other features. A sensible way to learn such features is by ensuring that they are statistically independent.

- # 中间一大段略去..

- Future work should be devoted to a more thorough theoretical analysis of of the proposed methods for minimizing and measuring dependence and how to evaluate them.\n See the appendix for more details about the synthetic signals.\n",

- "output": "[Significance and novelty]\n<Adversarial objectives for computing independent features> The use of adversarial objectives for computing independent features sheds new light on the problem of independent component analysis. It presents an interesting and potentially innovative approach to addressing the issue.\n<Comparison with existing methods for independent component analysis (ICA)> The paper's comparison with existing methods for ICA, such as linear and post-nonlinear ICA, highlights the attempt to address a wide range of ICA problems using the proposed GAN variant. This comprehensive approach could contribute to the literature on ICA methods.\n\n[Potential reasons for acceptance]\n<Conceptually thought-provoking> The paper presents a conceptually thought-provoking approach to independent component analysis using adversarial training, which could contribute to the advancement of ICA methods.\n<Coverage of linear and non-linear ICA problems> The coverage of both linear and non-linear ICA problems demonstrates the broad applicability of the proposed GAN-based approach, potentially adding value to the field of independent component analysis.\n\n[Potential reasons for rejection]\n<Lack of clarity and focus in presentation> Reviewers have expressed concerns about the lack of clarity, focus, and thorough analysis in the presentation of the proposed GAN variant for ICA, leading to a marginal rating below the acceptance threshold.\n<Inadequate comparison with prior work> Reviewers have noted that the comparison with existing methods, such as linear and post-nonlinear ICA, is inadequate, and the paper lacks comprehensive analysis and evaluation, resulting in a rating marginally below the acceptance threshold.\n\n[Suggestions for improvement]\n<Streamlining focus and discussion> The authors should focus their discussion on addressing specific ICA problems, streamlining the presentation, and providing a more focused and in-depth analysis of the proposed GAN variant for ICA. Emphasizing the novelty and significance of the approach could strengthen the paper.\n<Comprehensive comparative analysis> Enhancing the comparison with prior work, especially in the context of linear and non-linear ICA, and providing a more thorough evaluation of the proposed method would address concerns raised by the reviewers and potentially improve the paper's acceptance prospects.\n\n"

- },

- # 总计15566条..

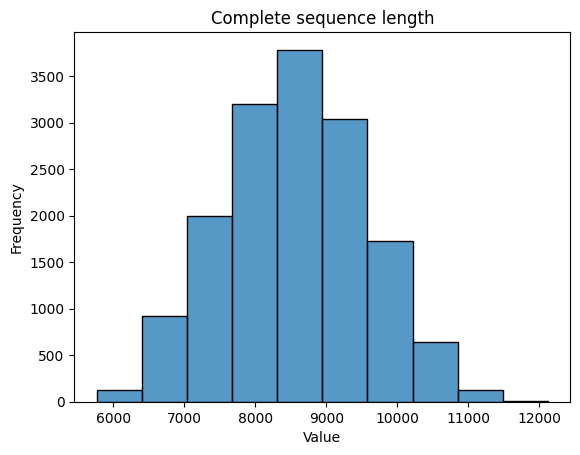

再考虑到单条数据算作“instruction+input+output”的拼接,使用Mistral的tokenizer对各条数据进行分词,并统计数据的token数

由上图大致可了解到单条token数大致在6000至12000的区间,较多数据的长度分布在8500左右,因此后续在为训练模型设定序列裁切(cut off)长度时选择11264或12288比较合适

3.3 (选读)相关工作之AcademicGPT:增量训练LLaMA2-70B,包含论文审稿功能

3.3.1 AcademicGPT: Empowering Academic Research

11月下旬,我司第二项目组的阿荀发现

- 有一个团队于23年11.21日,在arXiv上提交了一篇论文《AcademicGPT: Empowering Academic Research》,论文中提出了AcademicGPT,其通过学术数据在LLaMA2-70B的基础上经过继续训练得到的

- 然后该团队AcademicGPT的基础之上延伸开发了4个方面的应用:学术问答、论文辅助阅读、论文评审、标题和摘要的辅助生成等功能,由于其中的论文问答、论文摘要等功能已经很常见了(比如此文提到的chatpaper、中科院一团队的gpt_academic都通过GPT3.5的API做了还可以的实现),但论文评审此前一些开源工具通过GPT3.5做的效果并不好,所以既然AcademicGPT做了论文审稿这一功能,而且还用了70B的模型,那必须得关注一波,于是便仔细研究了下他们的论文

(当然,我相信,他们很快也会关注到我司论文审稿GPT这个工作,然后改进他们的训练策略,毕竟同行之间互相借鉴,并不为怪 )

他们与我们有两点显著不同的是,一者,他们对LLaMA做了增量预训练(AcademicGPT is a continual pretraining on LLaMA2),二者,我司目前的论文审稿GPT暂只针对英文论文的评审(毕竟七月的客户要发论文的话,以英文EI ei期刊 SCI论文为主,其次才中文期刊),而他们还考虑到了中文,故他们考虑到LLaMA2-70B有限的中文能力与学术领域知识,所以他们收集中文数据和学术英文数据来对相关方面进行提高

- 中文数据:取自CommonCrawl、Baike、Books等(此外还从互联网爬取了200K学术文本)

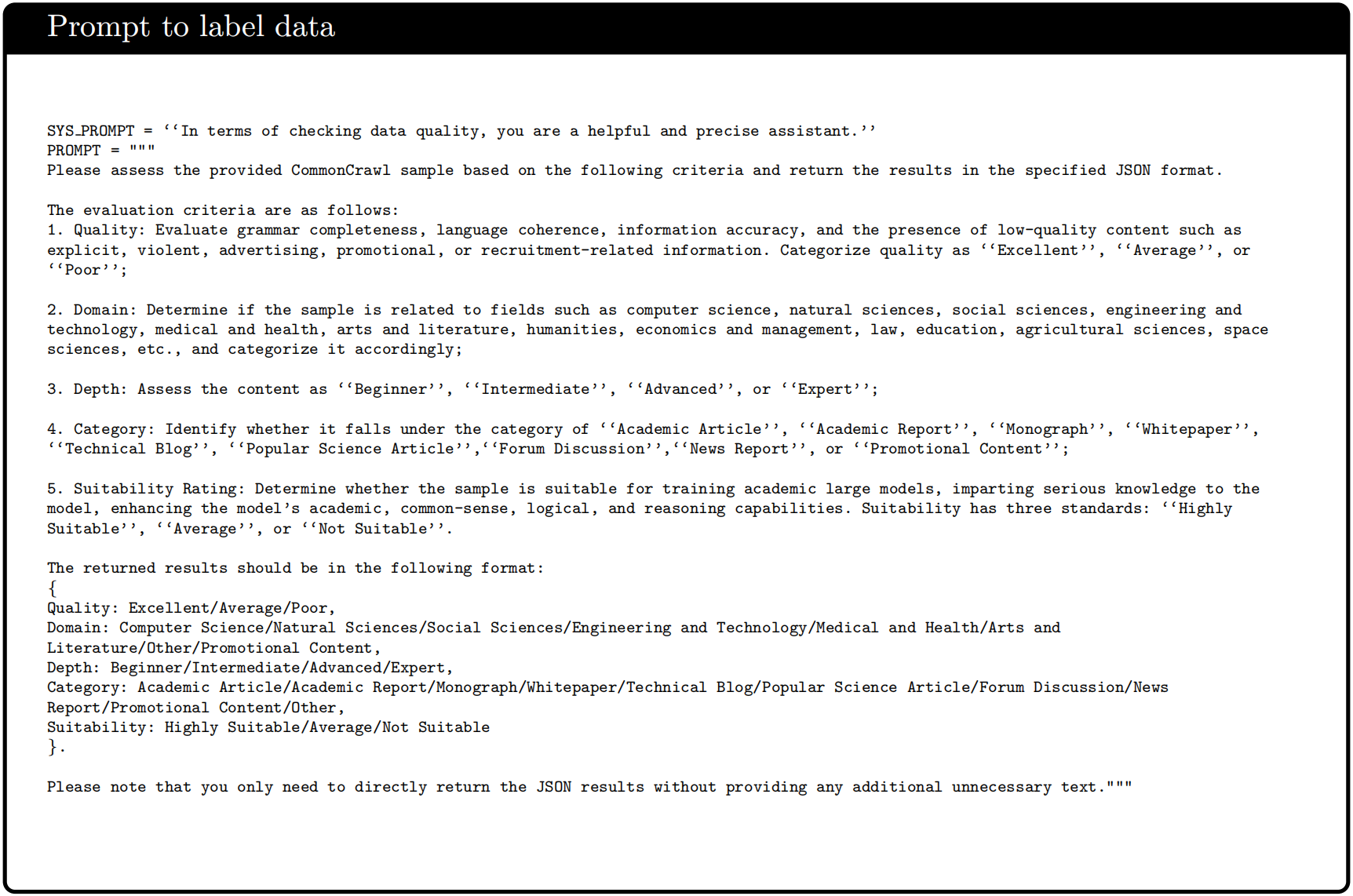

由于CC这类数据通常会包含很多广告、色情等有害信息,所以需要对其进行数据清洗,最终他们借助LLM且使用下图所示的Prompt来对取自互联网的数据进行清洗,比如对文档进行各种标注

(根据论文原文,我们判断,他们应该是先让模型基于人类给的prompt 对一些文本做标注,之后ChatGPT对同样那些文本做标注,最后对比这两者之间的差异,建损失函数 然后微调模型本身,差不多后,模型对剩下的文本做标注)

- 英文学术数据:爬取来自200所顶尖大学的100多万篇Paper、Arxiv的226万篇Paper(截止到23年5月),其中较长的Paper使用Nougat进行解析(和我司七月一样 )、较短的Paper使用研究团队自己的解析器,此外还有4800多万篇来自unpaywall2的免费学术文章,以及Falcon开源的数据集中的学术相关数据

基于上述所得的120B的数据,他们使用192个40G显存的A100 GPU进行继续二次预训练(他们的所有工作我没有任何羡慕,但唯独他们有192块A100,让我个人着实羡慕了一把,好期待有哪个大豪可以解决下我司七月的GPU紧缺问题,^_^),最终通过37天的训练,使得LLaMA2-70B进一步获得理解中文与学术内容的能力,以下是关于训练的更多细节

- 且为了加快训练过程,用了FlashAt-tention2 (Dao, 2023),它不仅加快了注意力模块的速度,而且节省了大量内存,且通过Apex RMSNorm实现了融合cuda内核(Apex RMSNorm that implements a fused cuda kernel)

- 由于AcademicGPT是LLaMA2- 70b的二次训练模型,因此它使用了一些与LLaMA2相同的技术,包括

RMSNorm (Zhang and Sennrich, 2019)而不是LayerNorm,

SwiGLU (Shazeer, 2020)而不是GeLU

对于位置嵌入,它使用RoPE (Su et al., 2021)而不是Alibi(Press et al., 2021)

对于tokenizer,它使用BPE (Sennrich等人,2015)

且使用DeepSpeed (Rasley et al., 2020)和Zero (Rajbhandari et al., 2020),且他们的训练基于gpt-neox (Black et al., 2022)框架,其中我们集成了许多新引入的技能。使用具有40GB内存的192个A100 gpu完成120B数据的训练需要大约37天

3.3.2 论文评审:借鉴ReviewAdvisor抽取出review的7个要点(类似我司借鉴斯坦福工作把review归纳出4个要点)

他们和我司一样,都是从同一带有论文review的网站上收集了29119篇Paper和约79000条Review,然后经过下述处理

- Paper侧处理:剔除了7115篇无内容或无Review的Paper、剔除了解析失败的Paper

- Review侧处理:

- 剔除了具有过多换行符的Review

- 剔除了过短(少于100 tokens),或过长(多于2000 tokens)的Review

- 剔除了与Decision Review决策不一致、且confidence低的Review

- 抽取review要点

和我司「3.2.1 设计更好的提示模板以让大模型帮梳理出来review语料的4个内容点」类似,他们则参考的是《Can We Automate Scientific Reviewing?,作者为Weizhe Yuan等人,另 其对应的GitHub地址为:https://github.com/neulab/ReviewAdvisor》中的7个方面要点,去掉Summary所以是7个:1 动机/影响Motivation/Impact

2 原创性Originality

3 合理性/正确性Soundness/Correctness

4 实质性Substance

5 可复现性Replicability

6 有意义的对比Meaningful Comparison

7 清晰程度Clarity)

且使用该论文的源码对Review进行进一步标注,然后抽取出相应的要点「具体而言,他们在归纳单篇review的7或8个要点时,给定一篇review,让BERT逐个逐个的标注出每个token/词它可能所属的要点类别,BERT对整篇review标注完以后,把有要点标注结果的内容给抽出来,比如下图,模型逐个对每个token标注出了summary(紫色)、clarity(黄色)、substance(橙色)等等,然后把带颜色的要点部分抽出来作为该篇review的归纳,其中+表示积极的情绪,-表示负面情绪」

最终,经过上面一系列梳理之后得到的paper数据 + 归纳好的review数据去微调70B模型

为方便大家理解,我补充一下关于这篇《Can We Automate Scientific Reviewing?》的解释说明

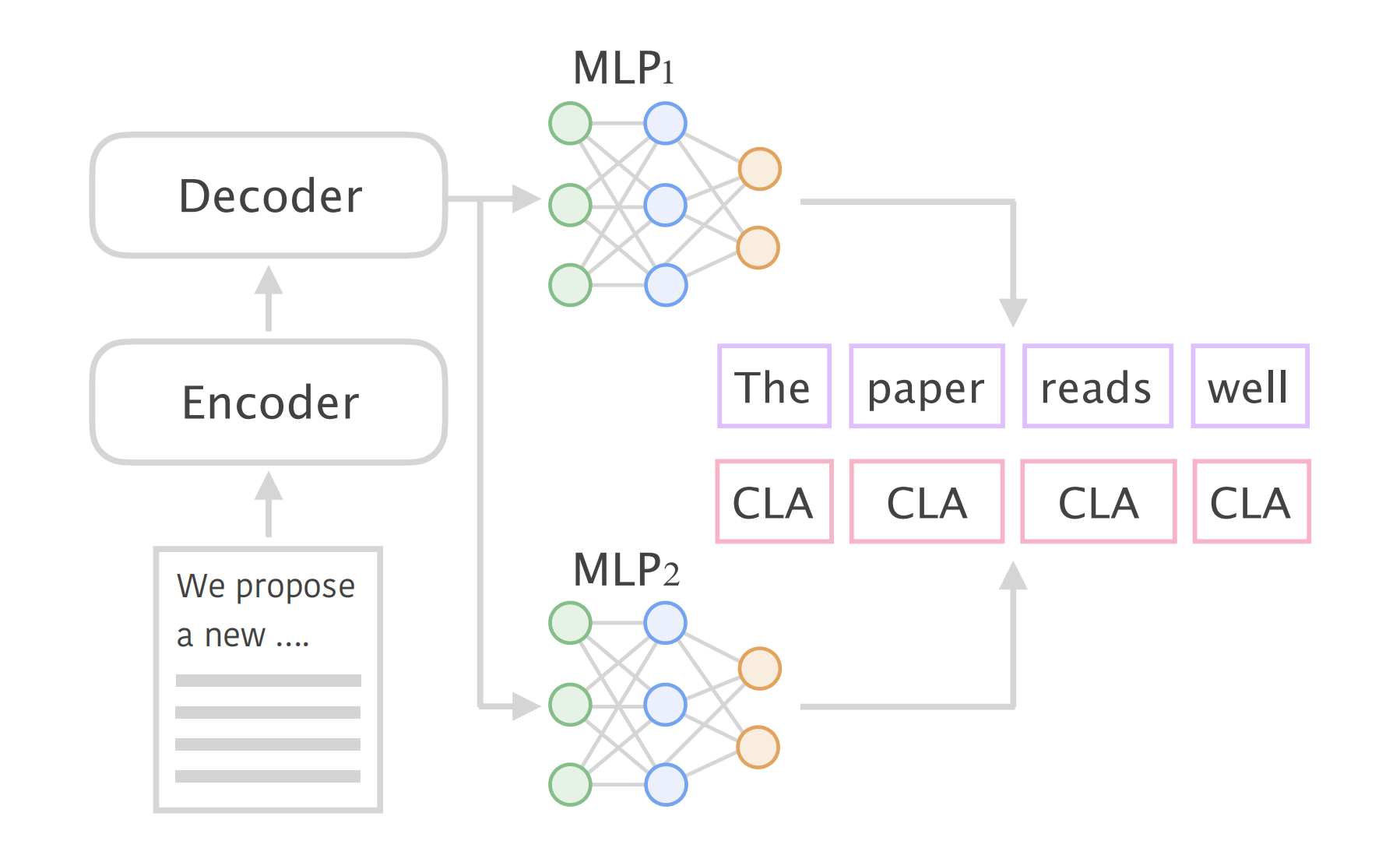

事实上,该篇论文的视角在于将“Review”视作对Paper的摘要与对应内容的评估,以此保证事实正确性。因此该篇论文考虑将Paper Review问题建模为摘要生成任务,采用当时(2021)较为先进的BART模型进行训练,得到ReviewAdvisor模型

通过设计好的评估系统,得出如下观察:

- 模型容易生成非事实性陈述

- 模型尚未学习到高级理解,如没法实质地分辨Paper的高质量与低质量

- 模型倾向于模仿训练数据的语言风格(倾向低级模式),如容易生成训练样本中的高频句子

- 可以较好地概括论文核心思想

最终结论是:“模型评审还尚未能替代人工评审,但可以辅助人工进行评审”

这项工作有两个值得关注的地方:

- 增强Review数据(通过BERT对review数据抽取式归纳出8个要点、然后人工做校正)

对于相对杂乱的Review内容来说,研究团队只想保留有用的“结构化”内容,因此他们将从定义“结构化方面”开始,从Review中取出相应的结构化内容,由此实现Review侧的数据增强1 定义结构化方面

研究团队讨论出了他们所认为的一篇“好的Review”所应该具备的各个方面,包括如下8个要点:

Summary(SUM):总结摘要

Motivation/Impact(MOT):动机/影响

Originality(ORI):原创性

Soundness/Correctness(SOU):合理性/正确性

Substance(SUB):实质性

Replicability(REP):可复现性

Meaningful Comparison(CMP):有意义的对比

Clarity(CLA):清晰程度

2 人工标注

研究团队邀请6名具有机器学习背景的学生对原本的Review进行注释,注释手法倾向于“抽取式摘要”,即标注原文本中哪些片段属于何种类别「which are Summary (SUM), Moti-vation/Impact (MOT) , Originality (ORI), Sound-ness/Correctness (SOU), Substance (SUB), Repli-cability (REP), Meaningful Comparison (CMP)and Clarity (CLA)」

类似于“... ... The results are new[Positive Originality] and important to this field[Positive Motivation] ... ...”

3 训练标注器

考虑到人工标注全部数据并不现实,使用第2步标注过的Review数据训练一个BERT抽取模型作为标注器,用于自动标注原Review中的方面项。即输入Review文本,BERT对文本进行逐token分类预测,预测出Review哪些部分属于哪些方面

4 后处理

使用标注器BERT对余下数据进行标注后,其结果并不完全可信(毕竟BERT的能力没有像GPT3.5那么强,即结果没那么可信),需要制定规则或使用人工对标注器的预测结果进行校正

5 人工检查

邀请具有机器学习背景的人员检查标注结果- 生成Review(通过paper和BERT抽取且人工校正过的review语料,微调BART)

根据给定Paper生成Review,模型选型为彼时最大长度为1024的BART模型,考虑到Paper的长度较长,因此整个生成Review的方案被设计成了两阶段的形式,即首先从Paper中择取出突出片段(输入上下文长度压缩),然后基于这些突出片段来生成review摘要

选取突出片段

使用诸如“demonstrate”“state-of-the-art”等关键词及对句子的诸多规则判断来确定突出片段

训练方面感知摘要(Aspect-aware Summarizaiton)模型

基于基础Seq2Seq模型实现的是由输入序列(Paper)预测输出序列(Review)的过程,研究团队在这个基础上引入了“方面感知”来辅助模型进行预测,强调模型对“方面要点”的输出,即引入两个的多层感知机来分别进行生成任务:模型不仅要逐token生成Review内容,还要逐token预测其对应的“方面要点”因此模型需要同时学习两个损失函数

这也意味着模型在一次推理中将输出2条序列,其一为预测的Review内容(其损失函数为),其二为预测的方面要点(其损失函数为

)

3.3.3 70B的AcademicGPT在论文审稿上效果不佳的原因

根据原论文中展示的对一些论文做审稿的案例来看,其效果并不佳

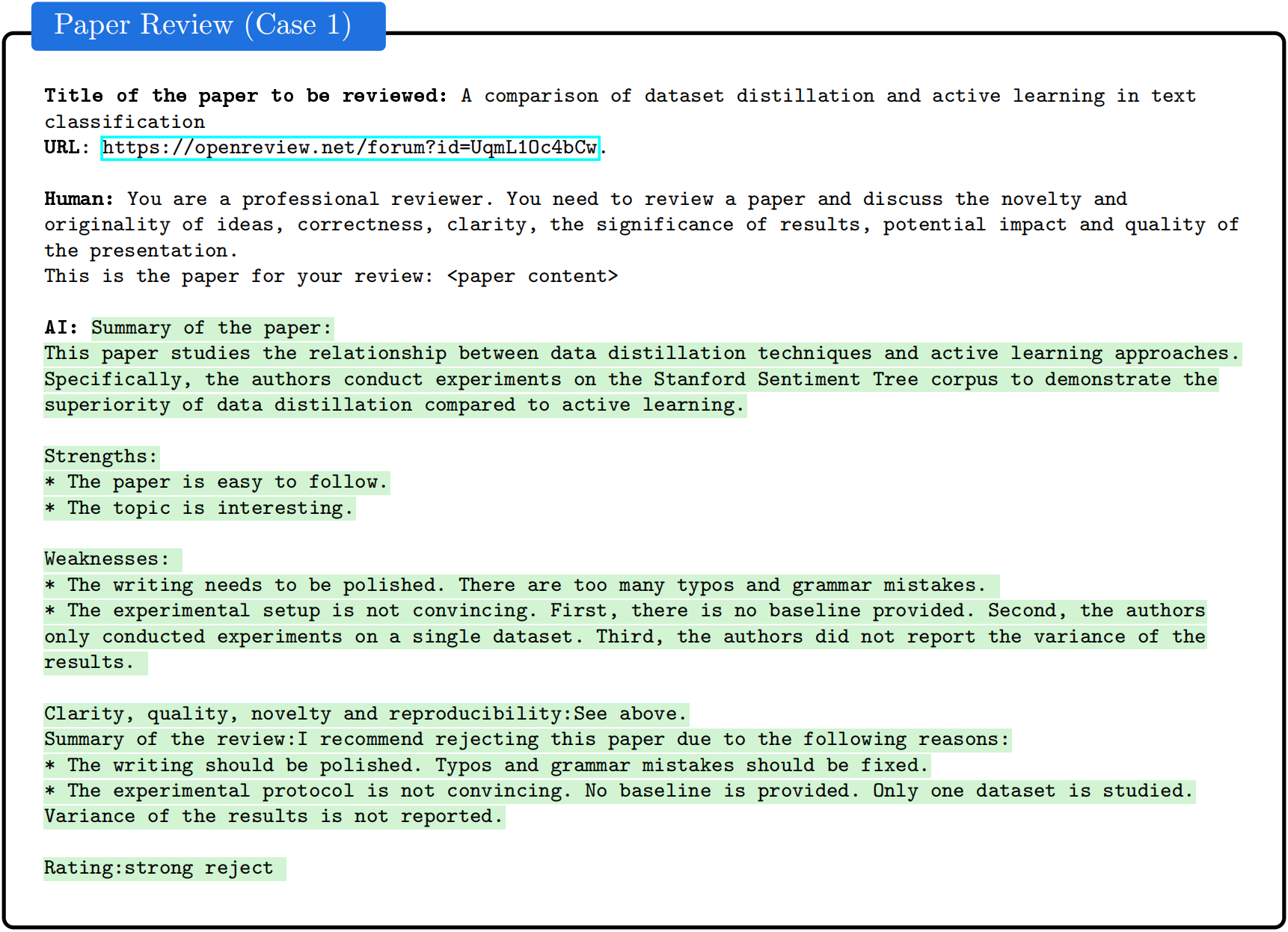

下图是论文中的两个审稿案例

- 下图是论文中的审稿案例1,可以看出来,它指出对应论文的缺点:“写作需要打磨。存在太多的拼写和语法错误。实验设置不够令人信服。首先,没有提供基线。其次,作者仅在单一数据集上进行了实验。第三,作者没有报告结果的方差。”

这种审稿意见对于论文作者本身而言,参考价值可能不大,毕竟当你指出有太多的拼写和语法错误,最好是具体指出来所谓的拼写和语法错误是在论文中哪一段

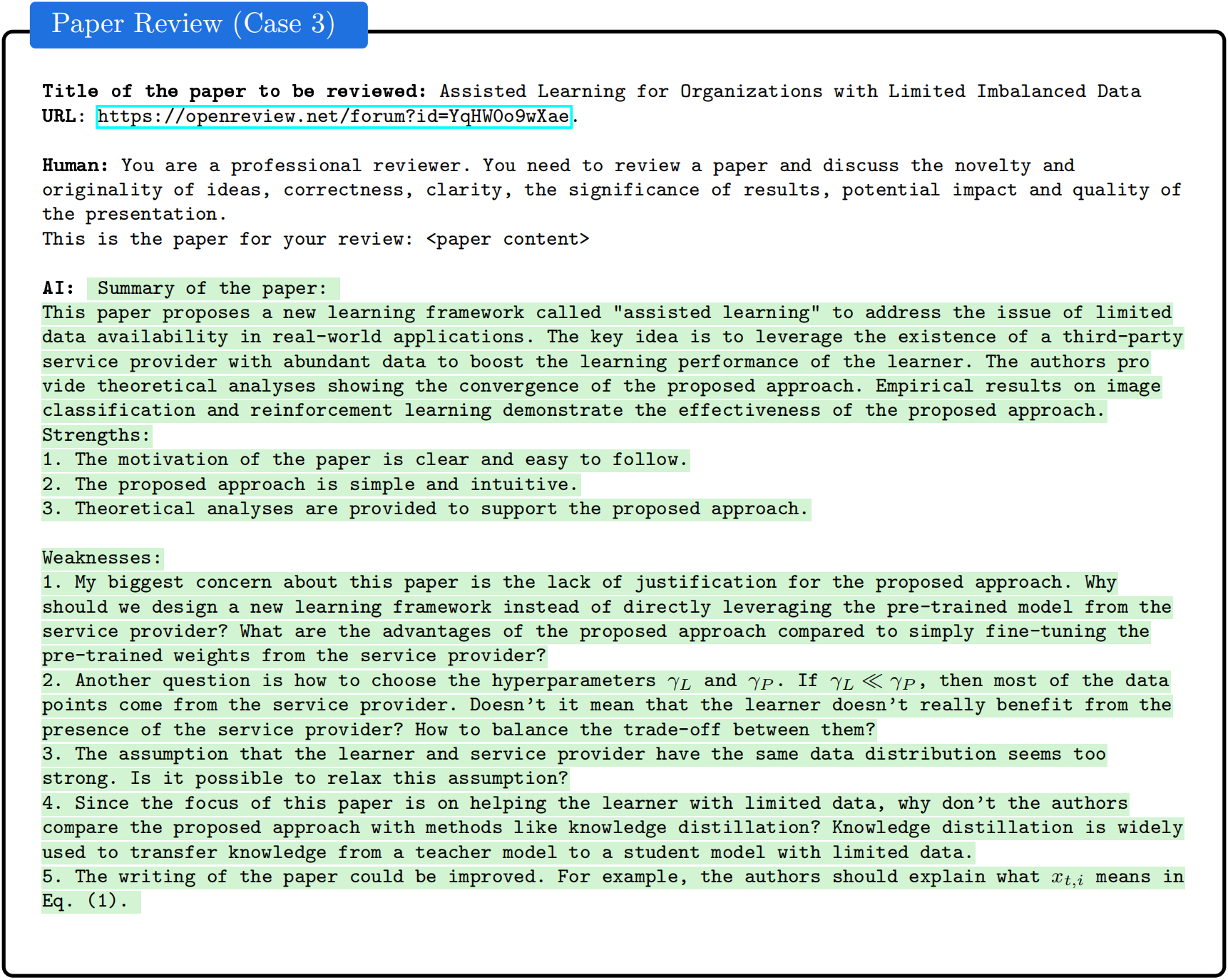

- 下图是论文中的审稿案例2

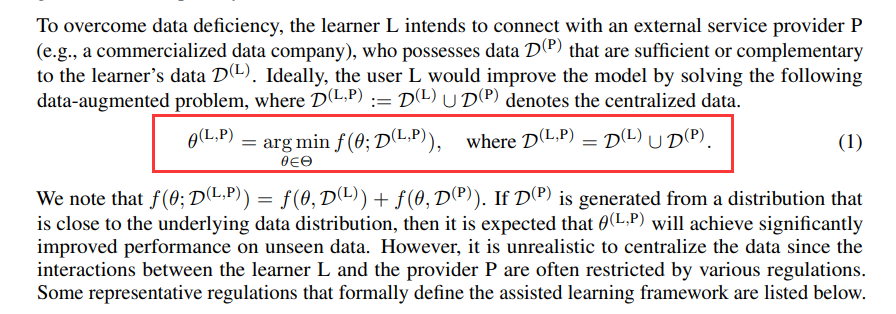

但第5个Weaknesses的点「5. The writing of the paper could be improved. For example, the authors should explain what xt,i means in

Eq. (1) 」是说论文应该解释下公式(1)中的含义,但原论文的公式(1)不涉及

而效果不佳的原因有多个方面,下面更多对比与我司的不一致

- Focus程度不一样

与我司现在整个项目组全力以赴迭代论文审稿GPT不同,对于AcademicGPT而言,论文审稿只是他们4大应用中的其中一块,当然,他们选用的基座模型的参数规模更大、卡也比我们多 - 做摘要抽取的模型不一样

他们通过BERT对review数据抽取出7个要点,而早期模型BERT抽取的结果不一定准确(即便加了一定的人工校正),毕竟和我司所用的GPT3.5还是没法比的 - 做摘要抽取时的策略不一样

即他们通过BERT对review做抽取式摘要时,直接抽取review原话(通过“抽取式摘要”拿出来,相当于抽取、挪动、组合原有的review词 ),可review是由各式各样的人写的,原话风格高度不统一,模型可能会收敛困难

总之,他们与我司论文审稿GPT的差异就在于

他们是抽取式提取要点、我司是生成式归纳要点抽取式抽出来的是原话,但是原话措辞风格迥异,而且抽取式模型能力有限需要做很多人工核验等后处理

但对于生成式而言,尤其是LLM的生成式可以根据要求生成相对统一的措辞风格

- review本身信息的全面程度不一样

他们把各个review抽取出7个要点后,没继续做多聚一的操作

我司把各个review归纳出4个要点后,为让单篇paper所对应的review信息更加全面,做了多聚一的操作

所以虽然AcademicGPT最终基于LLaMA2-70B去微调,模型参数规模比我司选用的大

但因为review数据的质量有限,最终效果自然不会太好

当然,在没有实际开源出来让用户使用之前,也不好下太多论断,具体等他们先对外开放吧(且他们看到本文后,我相信很快也会改进)

第四部分 模型的选型:从Mistral、Mistral-YaRN到LongLora LLaMA

23年12月中旬,本项目总算要走到模型选型阶段了,在此前的工作:数据的处理和数据的质量提高上,下足了功夫,用了各种策略 也用了最新的GPT3.5 16K帮归纳review信息,整个全程是典型的大模型项目开发流程

而论文审稿GPT第二版在做模型选型的时候,我司一开始考虑了三个候选模型:

- Mistral

- Yarn-Mistral-7b-64k

- LLaMA-LongLora

以下逐一介绍这三个模型,以及对应的训练细节、最终效果

4.1 前置知识:Mistral 7B、YaRN、LongLoRA/LongQLoRA

4.1.1 什么是Mistral 7B

参见此文的第一部分《从Mistral 7B到MoE模型Mixtral 8x7B的全面解析:从原理分析到代码解读》

4.1.2 什么是YaRN

因项目中要用到YaRN,所以我又专门写了一篇文章介绍什么是YaRN,详见《大模型上下文扩展之YaRN解析:从直接外推ALiBi、位置插值、NTK-aware插值、YaRN》

比如该文中有讲到:“3.1 YaRN怎么来的:基于“NTK-by-parts”插值修改注意力”

除了前述的插值技术,他们还观察到,在对logits进行softmax操作之前引入温度t可以统一地影响困惑度,无论数据样本和扩展上下文窗口上的token位置如何,更准确地说,将注意力权重的计算修改为

通过将RoPE重新参数化为一组2D矩阵对,给实现注意力缩放带来了明显的好处(The reparametrization of RoPE as a set of 2D matrices has a clear benefit on the implementation of this attention scaling)

- 可以利用“长度缩放”技巧,简单地将复杂的RoPE嵌入按相同比例进行缩放,使得qm和kn都以常数因子

进行缩放

这样一来,在不修改代码的情况下,YaRN能够有效地改变注意力机制

we can instead use a "length scaling" trick which scales both qm and kn by a constant factor p 1/t by simply scaling the complex RoPE embeddings by the same amount.

With this, YaRN can effectively alter the attention mechanism without modifying its code.- 此外,在推理和训练期间,它没有额外开销,因为RoPE嵌入是提前生成并在所有向前传递中被重复使用的。结合“NTK-by-parts”插值方法,就得到了YaRN方法

Furthermore, it has zero overhead during both inference and training, as RoPE embeddings are generated in advance and are reused for all forward passes. Combining it with the "NTK-by-parts" interpolation, we have the YaRN method对于LLaMA和LLaMA 2模型,他们推荐以下值:

上式是在未进行微调的LLaMA 7b、13b、33b和65b模型上,使用“NTK-by-parts”方法对各种因素的尺度扩展进行最小困惑度

拟合得到的(The equation above is found by fitting p 1/t at the lowest perplexity against the scale extension by various factors s using the "NTK-by-parts" method)

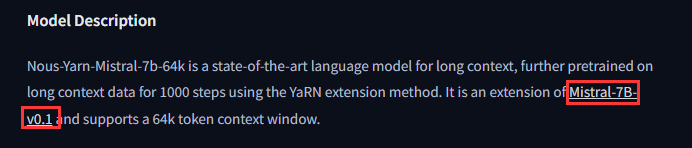

Yarn-Mistral-7b-64k相当于自己实现了modeling,即把mistral的sliding windows attention改了,相当于把sliding windows的范围从滑窗大小直接调到了65536即64K(即直接滑65536那么个范围的滑窗,其实就是全局)

4.1.3 LongLoRA LLaMA与LongQLoRA LLaMA

通过此文《通透理解FlashAttention与FlashAttention2:让大模型上下文长度突破32K的技术之一》的开头可知,LLaMA2的上下文长度只有4K,但通过longlora技术的加持,可以让其上下文长度扩展到32K(LLaMA2 7B可以扩展到100K、LLaMA2 70B可以扩展到32K)

| 模型 | 对应的上下文长度 |

| LLaMA | 2048 |

| LLaMA2 | 4096 |

| LLaMA2-long(其23年9.27发的论文) | 32K |

| 基于LongLoRA技术的LongAlpaca-7B/13B/70B | 32K以上 |

而LongQLoRA则相当于LongLoRA + QLoRA

至于什么是LongLoRA、LongQLoRA,请参见此文:《大模型上下文长度的超强扩展:从LongLoRA到LongQLoRA(含源码剖析)》

4.2 模型怎么选,此四PK:Yarn-Mistral-7b-64k、Mistral-instruct、LLaMA-LongLoRA、LLaMA-LongQLoRA

接上文《3.2.4 对review数据的最后梳理:得到JSON文本的变体版且剔除长尾数据》,我们终于要开始选择合适的模型来微调了,然后在具体微调的时候,又注意到了微调库llama factory

所以我们有以下4种微调方式

- 为微调Yarn-Mistral-7b-64k(qlora+s2 + llama factory),阿荀准备的1张显存为48G的A40

- 雪狼直接通过llama factory微调Mistral-instruct时,则一开始准备的4-8张显存为24G的P40,后来 还是换成了A卡,算是折腾了一圈:从 4*24 到 8*24,再到魔塔(在线)单卡80跑完实例关闭,到最后A40 48(在线autodl)

- 为微调LLaMA-LongLoRA (不染直接改的longlora源码,比如把Embedding和layernorm的lora权重给去掉了),准备2张显存为48G的A40

- 为LLaMA-LongQLoRA (阿荀直接改的longqlora的源码,longqlora的源码剖析见此文),准备1张显存为48G的A40

接下来便逐一阐述以上4种微调方式

4.2.1 Yarn-Mistral-7b-64k

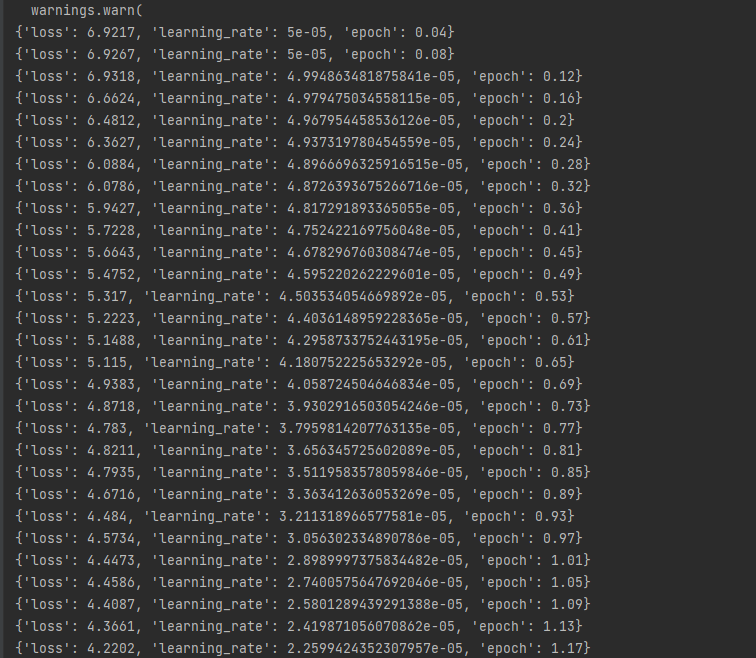

一开始阿荀通过「yarn-mistral + qlora + s2attn + llama factory」跑起来了几百条数据后发现,初始loss达到了6(当然,虽然loss初期很高,但有在下降,说明模型还是有学到,只不过初期loss很高说明我们给的数据和模型所学过的数据差异比较大)

这里面有三件比较有意思的事

- 细究才发现Yarn-Mistral-7b-64k不是个chat模型(yarn-mistral使用的基座是非sft模型),说白了Yarn-Mistral-7b系列均是基于非chat模型训练所得

故一方面我们在微调Yarn-Mistral-7b-64k的同时,也开始关注跟随Mistral 7B一同发布的Mistral 7B – Instruct

- 但后来我们还是不信邪,还是把Yarn-Mistral-7b-64k硬跑了下来,在1.10日下午loss已经降到1.8拉,可喜可贺,^_^

- 然虽yarn-mistral这边练了2个epoch了后,loss虽然相对稳定下降,但仍旧表现出复读严重的问题,调整过解码策略参数也仍是一样

原因猜测是上下文长度太长,注意力分配出去太匀了,以至于内容再加多点、注意力也是大差不差的感觉

实话讲,上面第三个问题 还挺麻烦的,因为模型的输出没有实质性内容,就是在复读用户的一部分输入

加之在此之前,没有人公开用yarn后的模型做过sft,没有实证可以参考

- 不清楚其是否不适合做微调(是否破坏了yarn的外推性)、又为何不适合,这点没有大量、多角度的实验的话是无从确定的

- 又或者是需要更多数据、更大规模模型、更多训练量等等

那最后怎么办呢,具体大模型项目开发线上营中见

4.2.2 直接通过llama factory微调Mistral-instruct

如果我们要微调Mistral 7B–Instruct的话,我们当时的第一反应是怎么扩展Mistral 7B–Instruct的长度呢(Mistral 7B–Instruct的上下文长度只有8K)

- 既然可以给Mistral-7b加YaRN,那类似的,给Mistral 7B–Instruct加YaRN行不行?

然问题是不好实现:YaRN-Mistral 7B – Instruct,因为Yarn是全量训的方案,而大滑窗范围+全量很吃资源 - 受LongLora LLaMA的启发,既然没法给Mistral 7B–Instruct加YaRN,那可以给其加longlora么?

然问题是mistral又没法享有longlora,因为mistral的sliding windows attention和longlora的shift short attention无法同时兼容,但要对原chat模型的上下文长度进行有效扩展又会需要shift short attention - 至此,是不只能意味着,chat版本的mistral-instruct不加其他技巧盲练长文本,还是有别的办法?这个,我司的「大模型项目开发线上营」里见

4.2.3 LLaMA2 7B chat-LongLoRA:成功

4.2.3.1 不染的工作:大改LongLoRA源码跑第一轮

在最开始用微调库llama factory实际微调LLaMA-LongLoRA时

- 发现即便用上了longlora占用还很大,后来发现原来是需要用llama-factory的stable版本

- 但最终还是没用llama factory了,直接魔改LongLoRA源码(比如embedding和Norm层不添加LoRA权重,原因在于一开始添加了但训练效果不稳定,猜测是如果embedding放开得要更大的数据才训得够)

但正因为做了各种魔改,最终不太可控,导致如果正常训练该有的参数的话,则显存不够 得加量化,最终不得已在没加量化的情况下 减少了训练参数(从targets=["q_proj", "k_proj", "v_proj", "o_proj"],减少到targets=["q_proj", "v_proj"]),这点可能会为最终效果不达预期埋下隐患

24年1.31日,不染通过longlora跑了4个epoch之后,针对一篇新的论文得到的review意见如下

- <Potential reasons for acceptance>

- <Technical solidity> The paper presents a technically solid approach to neural architecture search, offering a novel perspective on the problem and providing a theoretically sound optimization method.

- <Empirical evidence> The experimental results demonstrate competitive or better performance compared to existing methods, along with improved efficiency and scalability.

- <Clear presentation> The paper is well written and easy to understand, making it accessible to a wide audience.

-

- [Potential reasons for rejection]

- <No related terms> The paper lacks related terms, which may impact its relevance and positioning in the field.

- <Insufficient comparison with prior art> The paper does not sufficiently compare its approach with prior art, particularly in relation to existing methods for neural architecture search.

- <Unclear motivation> The motivation behind the proposed method is not clearly explained, leading to uncertainty about its significance and novelty.

-

- [Suggestions for improvement]

- <Include related terms> The paper should include relevant related terms to enhance its positioning and relevance in the field.

- <Comprehensive comparison with prior art> A thorough comparison with existing methods for neural architecture search, particularly those addressing similar issues, would strengthen the paper's contribution.

- <Clarify motivation> Providing a clearer explanation of the motivation behind the proposed method would help establish its significance and novelty.

- </s>

4.2.3.2 阿李的工作:小改LongLoRA源码跑第二轮

再之后,我让第二项目组另外一位同事阿李又跑了一轮,其中,超参和longqlora的一致(emb和norm是默认就加权重),且虽说longlora的rank 不一定非得设置为64,但考虑到设置为64也能跑,那就暂先64

最终,gpu所耗资源还行,大概也就比longqlora多了5G左右的占用,故没用量化

至于具体我们如何训练的,以及最终该模型的效果如何,请见下文的模型训练、与模型评估

4.2.4 基于LongQLoRA + 一万多条paper-review数据集微调LLaMA2 7B chat:成功

LLaMA2 7B chat本身的上下文长度只有4096,好在我们给它加上LongQLoRA之后,其上下文长度确实实现了从4096到12288(至于为何是12288,原因见上文的3.2.4节最后)

24年1.17日(是在我创业即将9周年的前两天),在历经80h的模型训练之后,我们终于通过15565条paper-review数据集把LLaMA2 7B chat LongQLoRA微调好了(相比3.2.4节最后说的15566去掉了一条异常数据,至于怎么个异常法,线上营中说 ),是我司第二项目组「包括我、阿荀(主力)、朝阳、雪狼、不染」花费整整半年、且历经论文审稿第一版、第二版的里程碑式工作(后续再迭代优化下之后,今年会把这个工作发表成SCI论文)

具体而言

- 用下面这段名为“llama2_instruct+input ”的prompt (至于更全面的prompt线上营中见)

(简单解释一下格式的问题

由于如上文3.2.4节最后说的,我们微调的数据格式均为JSON格式的变体,即

[Significance and novelty]

<大体描述> 具体描述

<大体描述> 具体描述

...

[Potential reasons for acceptance]

<大体描述> 具体描述

<大体描述> 具体描述

...

相当于LLaMA2已经对上述这种JSON变体格式轻车熟路,所以在设计prompt时,不用再特地强调格式的输出)- You are a professional machine learning conference reviewer who reviews a given paper and considers 4 criteria: ** importance and novelty **, ** potential reasons for acceptance **, ** potential reasons for rejection **, and ** suggestions for improvement **.

- The given paper is as follows.:

- [TITLE]

- YaRN: Efficient Context Window Extension of Large Language Models

- [ABSTRACT]

- Rotary Position Embeddings (RoPE) have been shown to effectively encode posi- tional information in transformer-based language models. However..

- # 还有一大段CONTENT,略..

- 然后对比了GPT3.5和GPT4针对YARN这篇论文的审稿意见

首先用的prompt如下(纯正的JSON格式)-

- You are a professional machine learning conference reviewer who reviews a given paper and considers 4 criteria: ** importance and novelty **, ** potential reasons for acceptance **, ** potential reasons for rejection **, and ** suggestions for improvement **.

- You just need to use the following JSON format for output, but don't output opinions that don't exist in the original paper. if you're not sure, return an empty dict:

- {

- 'Significance and novelty': List multiple items by using Dict, The key is a brief description of the item, and the value is a detailed description of the item.

- 'Potential reasons for acceptance': List multiple items by using Dict, The key is a brief description of the item, and the value is a detailed description of the item.

- "Potential reasons for rejection": List multiple items by using Dict, The key is a brief description of the item, and the value is a detailed description of the item.

- 'Suggestions for improvement': List multiple items by using Dict, The key is a brief description of the item, and the value is a detailed description of the item.

- }

- The given paper is as follows.:

- [TITLE]

- YaRN: Efficient Context Window Extension of Large Language Models

- [ABSTRACT]

- Rotary Position Embeddings (RoPE) have been shown to effectively encode posi- tional information in transformer-based language models. However, ...

- # 还有一大段CONTENT,略..

接下来是三个重点工作

- 继续微调另外两个开源模型

- 微调gpt 3.5 16k

- 实现评估pipeline,全面对比我们微调的各个开源模型(包括微调前后)、GPT3.5/4(包括微调前后)的效果,争取早日赶超GPT4

第五部分 模型的训练:如何微调LLaMA2、Yarn-Mistral

5.1 LLaMA2 7b chat + LongQLoRA训练

5.1.1 微调时对LongQLoRA代码的修改

通过上文这节《4.4.4 基于LongQLoRA + 一万多条paper-review数据集微调LLaMA2 7B chat:成功》的内容,我们已经知道终于微调成功了,但到底如何基于一万多paper-review数据集微调LLaMA 2呢?

首先,如之前所说,我们的微调代码是改自LongQLoRA的源码(没有用llama factory),具体而言

- LongQLoRA源码没有实现LLaMA2 sft训练的数据读取类(包括数据读取、数据组织、tokenizing),要自己实现

- 原本的LongQLoRA源码训练参数指定使用fp16数据类型,但是训练可能会很不稳定

具体而言,可能会出现数值溢出问题,初期就出现loss严重震荡,甚至高达上百,并在数步后返回模型已收敛(但实际并未)的提示而中断训练,故要设置使用bf16数据类型进行训练,loss就能稳定从4.多开始收敛 - 要使用支持bf16数据类型(即不建议使用V开头的卡)的卡来训练,使用A40最合适,支持bf16数据类型、显存48G刚好

当然,更多细节在线上营中透露,如下图所示

但上图好像没有看到文件1、文件3、文件5呢?原因在于

- component文件夹下

dataset.py需要修改,参照VicunaSFTDataset去实现Llama2SFTDataset,算文件1,所以标号1空出来了

不过,仿写的时候需要注意

一方面,因为longqlora源码的风格是把instruction放代码里了,所以实际微调时,所用的训练数据 可以只有input、output 而没有instruction

二方面,如阿荀所说“我最开始照着vicunasft实现的,发现超出长度的时候截断方式是直接从尾部截断,极端情况下可能会完全截去output的内容

所以改成让截断从input、output各截断一部分,确保无论如何一条数据也要有output部分”

相当于input所代表的paper截断一部分,output所代表的review截断一部分

可能有同学会疑问,既然longqlora把llama2 拉长到12K以上了,为何还要做截断呢

结果是不做安全截断还是会出现每次运行都不一样的报错,至少longqlora的代码用来跑我们爬的数据会是这样(可能是longqlora实现的问题,或者transformers版本的问题,因为有的框架不做安全截断也没事) - train_args文件夹下

需要自定义:llama2-7b-chat-sft-bf16.yaml,算文件3,相关说明见下文5.1.4节 - train.py,算文件5,加载模型时需要修改成bf16

- # 加载模型

- logger.info(f'Loading model from: {args.model_name_or_path}')

- model = AutoModelForCausalLM.from_pretrained(

- args.model_name_or_path,

- config=config,

- device_map=device_map,

- load_in_4bit=True,

- # torch_dtype=torch.float16,

- torch_dtype=torch.bfloat16,

- trust_remote_code=True,

- quantization_config=BitsAndBytesConfig(

- load_in_4bit=True,

- # bnb_4bit_compute_dtype=torch.float16,

- bnb_4bit_compute_dtype=torch.bfloat16,

- bnb_4bit_use_double_quant=True,

- bnb_4bit_quant_type="nf4",

- llm_int8_threshold=6.0,

- llm_int8_has_fp16_weight=False,

- ),

- )

5.1.2 资源依赖与环境配置

以下是所需的资源需求

- Linux系统

- 支持cuda11.7

- 单张A40(即显存48G+的Ampere架构显卡)

- 可访问HuggingFace/Python官方源(操作前确认已开启)

- 硬盘方面,至少80GB的数据盘空间(系统盘一般30G,但数据盘至少80G,当默认只有50G时,也得支持可扩容到80G以上才行)

接下来,如下配置环境

- cd /path/to/LongQLoRA

-

- # 创建虚拟环境

- conda create -n longqlora python=3.9 pip

-

- # 配置虚拟环境

- ## 单独安装pytorch

- pip install torch==1.13.0+cu117 torchvision==0.14.0+cu117 torchaudio==0.13.0 --extra-index-url https://download.pytorch.org/whl/cu117 -i https://pypi.org/simple

- ## 单独安装flash attention

- pip install flash_attn -i https://pypi.org/simple

- ## 安装requirements

- pip install -r requirements.txt -i https://pypi.org/simple

关于这个requirements,如下所示

5.1.3 前期准备:数据集与模型文件下载

- 创建输出目录

- 放置数据集

- 下载模型文件

安装git-lfs- # 安装git-lfs

- curl -s https://packagecloud.io/install/repositories/github/git-lfs/script.deb.sh | sudo bash

- sudo apt-get install git-lfs

- # 激活git-lfs

- git lfs install

- # 进入用于存储模型文件的目录

- cd /path/to/models_dir

- # 获取Llama-2-7b-chat-hf

- git lfs clone https://huggingface.co/NousResearch/Llama-2-7b-chat-hf

5.1.4 定义传参

- 修改yaml文件

路径位于“/path/to/LongQLoRA/train_args/llama2-7b-chat-sft-bf16.yaml”相关主要参数说明

参数

释义

output_dir

训练输出(日志、权重文件等)目录,即创建的输出目录外加自定义的文件名

model_name_or_path

用于训练的模型文件目录,即获取的模型文件路径

train_file

训练所用数据路径,即放置数据集的路径。

deepspeed

deepspeed参数路径,即LongQLoRA目录下的“train_args/deepspeed/deepspeed_config_s2_bf16.json”

sft

是否是SFT训练模式

use_flash_attn

是否使用flash attention、attention

num_train_epochs

训练轮次

per_device_train_batch_size

每个设备的batch_size

gradient_accumulation_steps

梯度累计数

max_seq_length

数据截断长度

model_max_length

模型所支持的最大长度,即本次训练所要扩展的目标长度

learning_rate

学习率

logging_steps

打印频率,每logging_steps步打印1次

save_steps

权重存储频率,每save_steps步保存1次

save_total_limit

权重存储数量上限,超出该上限时自动删除早期存储的权重

lr_scheduler_type

学习率调度策略

warmup_steps

warmup步数

lora_rank

lora秩的大小

lora_alpha

lora的缩放尺度

lora_dropout

lora的dropout概率

gradient_checkpointing

是否开启gradient_checkpointing

optim

所选用的优化器

bf16

是否开启bf16训练

report_to

输出的日志形式

dataloader_num_workers

读取数据所用线程数,0为不开启多线程

save_strategy

保存策略,steps为按步数进行保存、epochs为按轮次进行保存

weight_decay

权重衰减值

max_grad_norm

梯度裁剪阈值

remove_unused_columns

是否删除数据集中的无关列

- 修改bash文件

路径位于“/path/to/LongQLoRA/run_train_sft_bf16.sh”,该文件如下所示- export CUDA_LAUNCH_BLOCKING=1

- deepspeed train.py --train_args_file /path/to/LongQLoRA/train_args/llama2-7b-chat-sft-bf16.yaml

5.1.5 运行训练

- # 进入LongQLoRA源码目录

- cd /path/to/LongQLoRA

-

- # 启动bash文件进行训练

- bash run_train_sft_bf16.sh

// 更多线上营中见

5.2 LLaMA2 7b chat + LongLoRA训练

5.2.1 [不染]Llama2-7b-chat + LongLoRA源码 + 训练/推理

长QA数据使用以下提示进行微调:

- instruction: str, 任务指令 + 原始论文材料(即input)

- output: str, 指令的答案

不染针对longlora的源码做了不少修改,以下列举几点(完整修改见七月在线对应的课程)

- 因为paper-review数据长度的问题,故把longlora代码中的这段

- prompt_input, prompt_no_input = PROMPT_DICT["prompt_input_llama2"], PROMPT_DICT["prompt_llama2"]

- sources = [

- prompt_input.format_map(example) if example.get("input", "") != "" else prompt_no_input.format_map(example)

- for example in list_data_dict

- ]

- # 因为第75行代码中"prompt_llama2": "[INST]{instruction}[/INST]"

- # 拼接后对文本截断12288时,会造成过长的数据失去了[/INST],形成了两种不同提示词的训练句式。

- prompt_no_input = PROMPT_DICT["prompt_llama2"]

- sources = [

- prompt_no_input.format_map({"instruction": example['instruction'][:12270]}) # 先对instruction进行截断

- for example in list_data_dict

- ]

- 上文提过一嘴,不染在魔改longlora的过程中,发现显存不够用了,故把下面这段代码

- if training_args.low_rank_training:

- if model_args.model_type == "gpt-neox":

- # added `dense` to match with llama as the basic LoRA would only target 'query_key_value'

- targets = ["query_key_value", "dense"]

- else:

- targets=["q_proj", "k_proj", "v_proj", "o_proj"]

- config = LoraConfig(

- r=8,

- lora_alpha=16,

- target_modules=targets,

- lora_dropout=0,

- if training_args.low_rank_training:

- if model_args.model_type == "gpt-neox":

- targets = ["query_key_value", "dense"]

- else:

- targets=["q_proj", "v_proj"] # 显存不够,减少训练参数

- config = LoraConfig( # 提升秩的大小,以学到更多特征,但越大显存占用越大

- r=32,

- lora_alpha=16,

- target_modules=targets,

- lora_dropout=0.05,

5.2.2 [阿李]LLaMA-2-7b-chat + LongLoRA训练

阿李基本没咋改longlora的源码,毕竟如果longlora本身实现没问题,但其训练参数则基本对齐longqlora的(下图左侧为不染的,下图右侧为阿李的)

当然,也并非和longqlora全部百分百对齐,比如关于学习率

- longlora论文中提到:The learning rate is set to 2 ×10−5 for 7B and 13Bmodels

- longqlora论文中则提到:the learning rate is set to 2e-4 and 1e-4 for 7B and 13B model respectively

但阿李在微调小量数据时,learning_rate设置的和longqlora一样的0.0001,可能还是有点大,故最终全量数据时按照longlora默认的10-5 搞

最后总结一下阿李与不染训练过程的差异

- 在参数层面上,对比不染的微调,阿李微调的时候保留了embedding和norm的微调

同时阿李的attention部分有 "q_proj", "k_proj", "v_proj", "o_proj" 参与微调,不染是 "q_proj", "v_proj" 参与微调。- 在超参数层面上,阿李是2个epoch,不染是5个epoch

阿李的learning_rate是0.00001,不染的learning_rate是0.0001

阿李的gradient_accumulation_steps是16,不染的是8总之,如阿荀所说,整体来看

- 阿李用的lora层更多、更新步幅更小、轮次更少——可能会导致lora参数还学不够

- 不染用的lora层更少、更新步幅大、轮次多——可能lora参数太少、学了太多,但考虑到不染的结果连格式也难以遵循,可能他选取的lora层着实太少了

第六部分 模型的评估:如何评估审稿GPT的效果

6.1 斯坦福研究者如何评估GPT4审稿意见的效果

6.1.1 重叠度上命中率的定义

在斯坦福那篇让GPT4当审稿人的论文中(具体论文详见上文3.1节),他们评估了GPT-4 vs. Human和Human vs. Human在命中率方面的两两重叠,命中率定义为集合A中comments与集合B中comments匹配的比例,计算方法如下

6.1.2 基于「重叠度上命中率指标」衡量LLM评估效果的流程

如下图所示

- 对比

该两阶段管道将LLM生成的Review与人类审稿员的Review进行对比 - 提取

利用GPT4的信息提取功能,从LLM生成的Review和人类审稿员的Review中提取关键要点 - 匹配

使用GPT4进行语义相似性分析,将来自LLM和人类反馈的Review进行匹配。针对每个匹配到的Review,都会给出一个相似性评级和理由。通过设定≥7 的相似性阈值来过滤掉较弱匹配的评论(这一阈值是基于匹配阶段经过人工验证选择得出)

总之

- 针对LLM提出的Review与人类的Review,均分别使用一定的prompt (具体prompt见线上营)交由GPT-4进行摘要处理。对LLM下达任务,要求其关注Review中潜在的拒绝原因,并以特定的JSON格式来提供Review所指出的关键问题所在,研究团队解释侧重关键问题的目的在于“Review中的批评直接有助于指导作者改进论文”

- 将需要评估的LLM Review与人类Review由上一步得到的内容共同输入至GPT-4中,利用特定的prompt (具体prompt见线上营)来指示GPT-4输出新的JSON内容,让GPT-4指出两个传入的内容中的匹配项,并且对匹配程度进行评估(5-10分)

作者研究发现5分、6分的相似项置信程度不佳,因此设定7分以上视为“匹配”,再基于计算重叠程度,其中

为LLM提出的批评项数,

为LLM与人类提出的匹配批评项数

6.2 对LLaMA2 7B chat-LongQLoRA效果的评估:强过GPT3.5和GPT4

注,本文的对比测试中

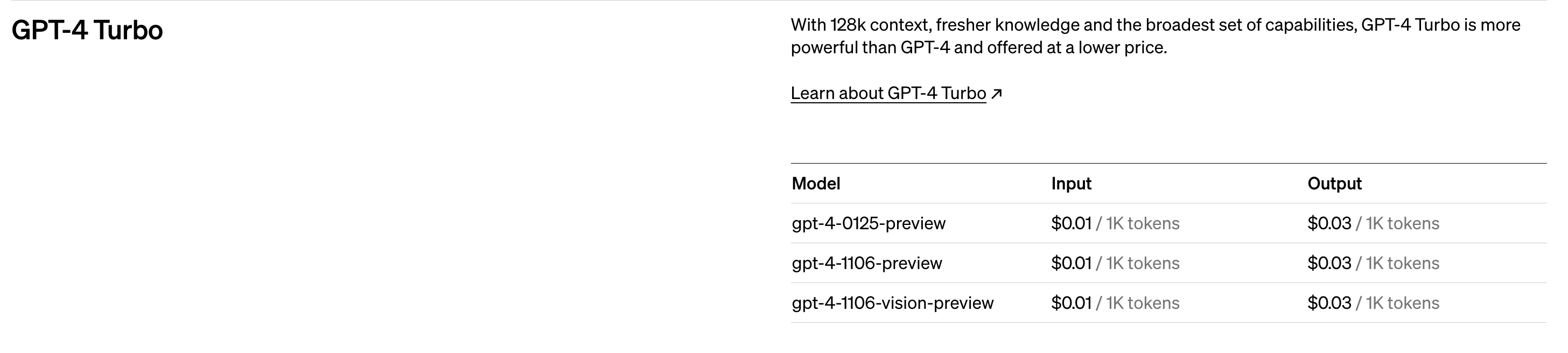

- gpt4是gpt-4-turbo-preview,即GPT_MODEL = "gpt-4-1106-preview"

注,下图是后来24年2.15加上的,即在23年Q4时 还没出来GPT4-0125版本

- gpt3.5是gpt-3.5-turbo-1106

都是支持json format且长输入的版本

在验证集的数据准备上,我司使用57篇训练集外的Paper,各自都对应有“多聚一”后的人工Review。且考虑到使用LLM可能存在输出不稳定的情况,因此将57条数据均分别复制5份,共得到285条测试数据,因此后续LLM有机会对每个输入进行5次生成

下图为测试集的Paper数据

下图为测试集的Review数据(人工/Golden)

6.2.1 让我司审稿模型、GPT3.5分别对测试集的paper输出review(好让它两PK)

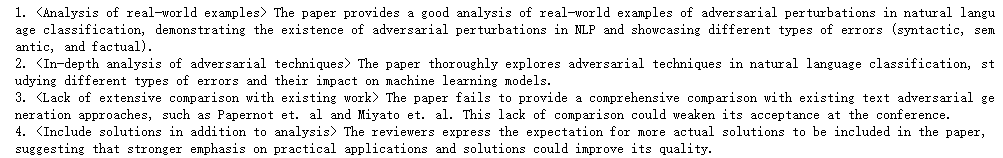

使用LLaMA2-paperreview(为免与实际概念上的Paper数据和Review数据混淆,以下简称llama2)对测试集进行输出,得到类似如下结果

使用gpt-3.5-turbo对测试集进行输出,得到类似如下结果(至于使用的prompt模板如何写,见线上营)

6.2.2 对review的处理:格式转换、为review项标注观点序号

首先,将原本为JSON格式的gpt-3.5-turbo输出内容转换为如下图所示的格式(与人工Review、llama2 Review相同)

接下来,为Review项标注观点序号

-

对人工Review中的“<xxx> yyy”项进行序号标注,并统计该条Review的项数,序号标注的结果如下图所示,该例中项数为4

-

对llama2 Review中的“<xxx> yyy”项进行序号标注,并统计该条Review的项数,序号标注的结果如下图所示,该例中项数为8

-

对gpt-3.5-turbo Review中的“<xxx> yyy”项进行序号标注,并统计该条Review的项数,序号标注的结果如下图所示,该例中项数为4

6.2.3 对人工、llama2、GPT3.5输出的观点项进行匹配

基于上文提到过的这篇论文《Can large language models provide useful feedback on research papers? A large-scale empirical analysis》所提出的“节点匹配prompt”进行小部分修改,得到特定的prompt来指示gpt4进行Review项匹配(至于完整的prompt见线上营)

- prompt模板主要涉及4个要点:

- - 指示gpt4分析并匹配给定的Review A 和 Review B两篇Review中相匹配的观点。

- - 给出输出示例,理当是一个类似

- “{

- {匹配的项1:

- {rationale: 阐明匹配原因, similiraty: 匹配分数},

- {匹配的项2:

- rationale: 阐明匹配原因, similiraty: 匹配分数},

- ...

- }”

- 的多层JSON

- - 声明匹配分数准则,匹配分数由5至10,数字越大匹配成程度越高。

- - 指出如果不存在匹配项则返回空JSON

首先,使用gpt4基于特定prompt模板对llama2 Review(Review A)和人工Review(Review B)进行观点匹配,输出结果类似下图

然后,使用gpt4基于上述prompt模板对gpt-3.5-turbo Review(Review A)和人工Review(Review B)进行观点匹配,输出结果类似下图

6.2.4 我司审稿模型与GPT大PK:计算命中率与命中数,一决胜率

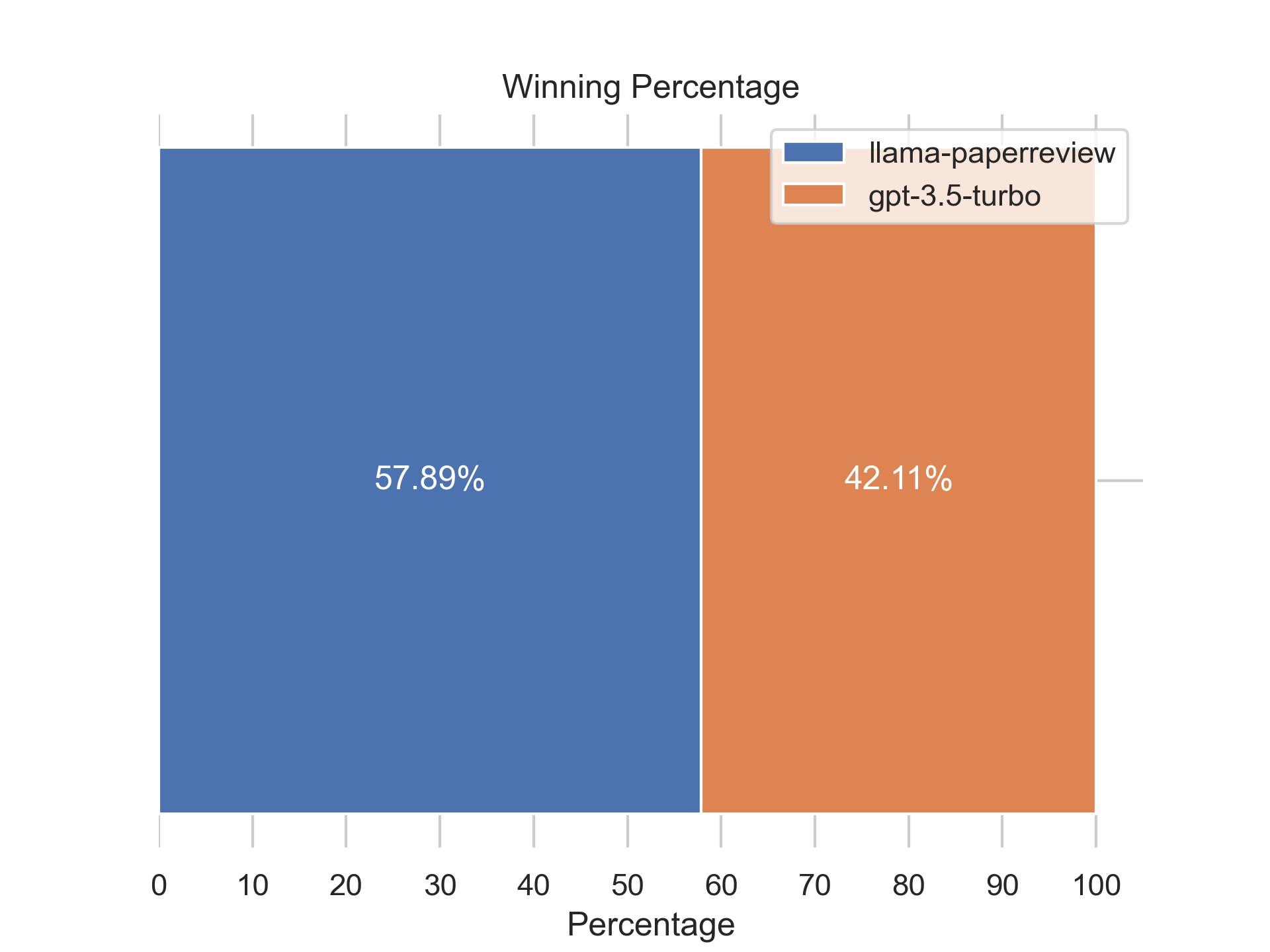

- 计算命中率:对GPT3.5胜率近6成、对GPT4胜率更高

定义similarity为7及以上的匹配项为“强匹配项”,即算作“命中”,根据以下公式计算命中率hit rate

其中A为Review A,通常为LLM输出的Review,B为Review B,通常为人工Review,命中率即为“LLM输出的Review与人工Review的强匹配项”与“LLM输出的Review项数”的比值

对于具体的某篇Paper p,其存在N个LLM输出的Review,对N个Review的命中率计算出平均命中率mean hit rate。在本评估中N为5,即对于同篇Paper均输出5个Review

即如果按照斯坦福一团队把GPT4当审稿人论文中的评估模式,是个比值,得到的结果如下,其显示七月审稿GPT超越了GPT3.5(两两比对llama2与gpt-3.5-turbo对于同篇Paper的平均命中率,平均命中率高者即为“获胜”,对于给定的57篇Paper,其中33篇为llama2具有更高的平均命中率)

至于GPT4呢,实话讲,我们超过GPT4反而更多,可能不太符合直觉,毕竟一般人的认知里,GPT4按道理应该比gpt-3.5-turbo更强,但事实上在该场景下、该评估指标下,其表现确实反而不如gpt-3.5-turbo

对于此点,微博上一朋友也同样表达了类似的观点:

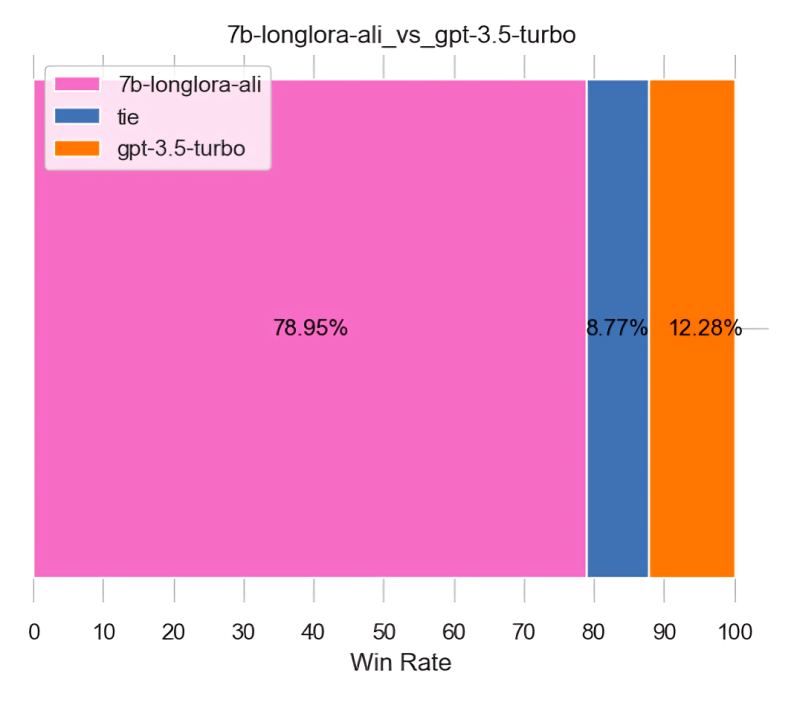

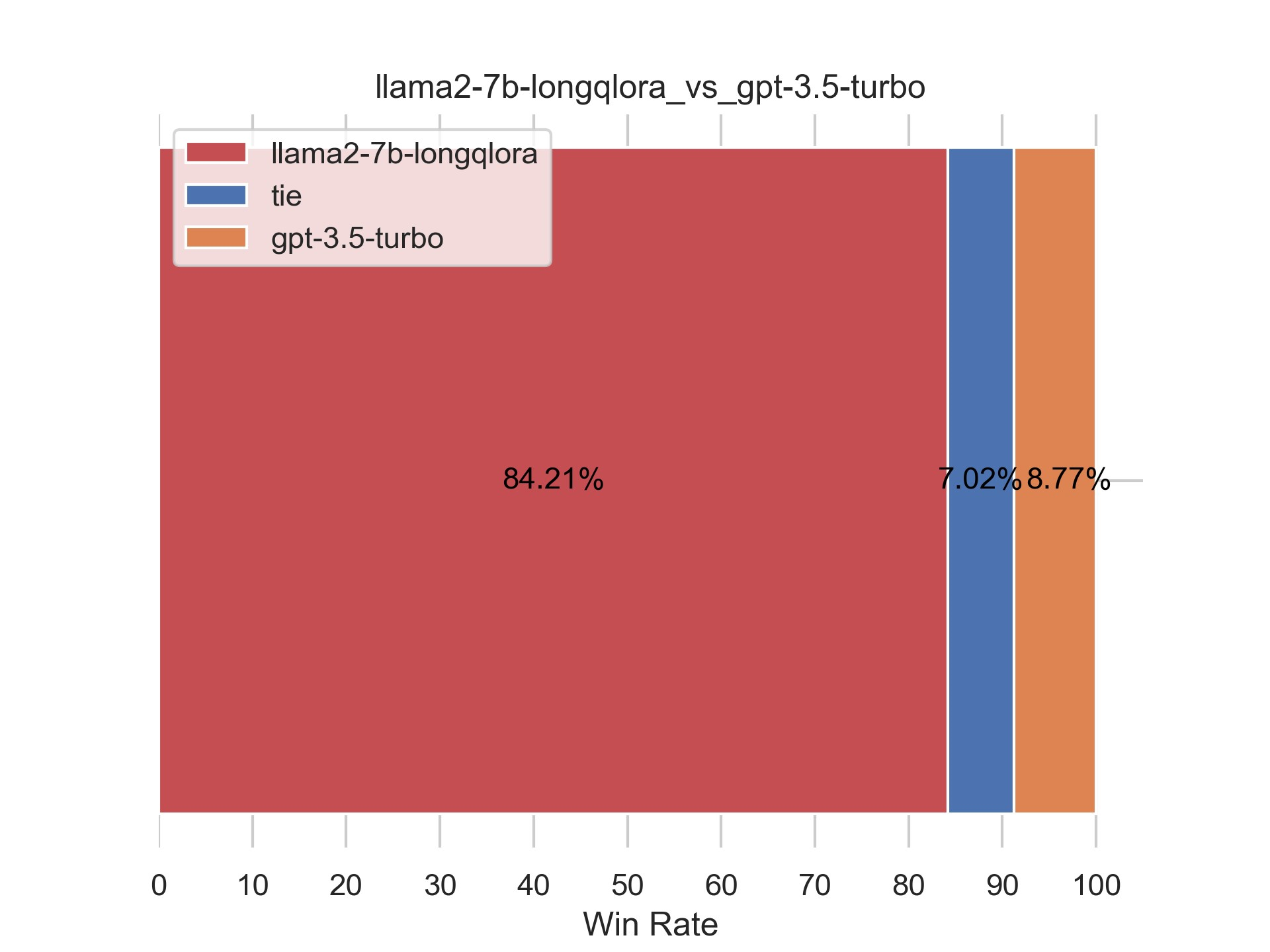

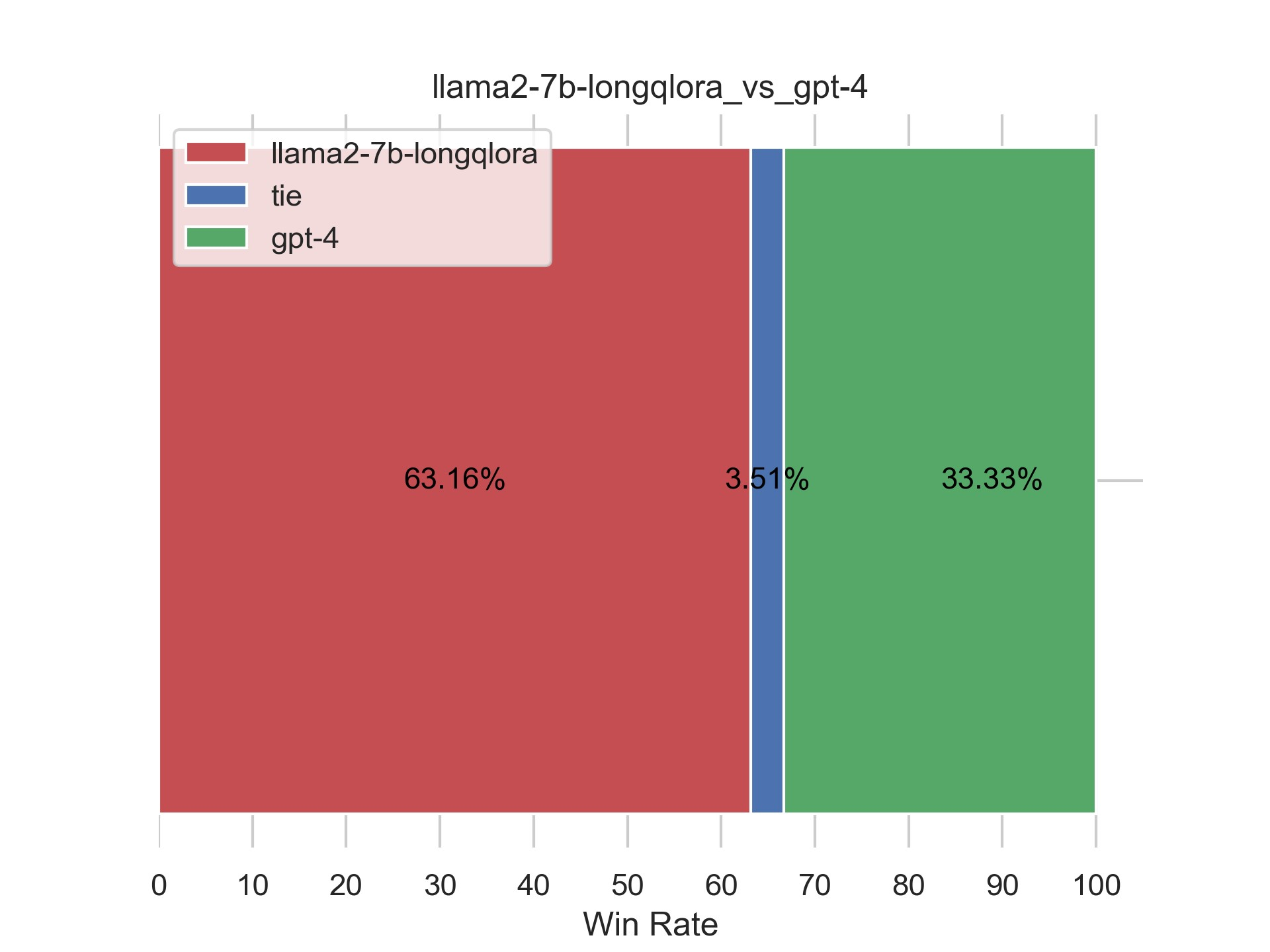

- 计算命中数:对GPT3.5胜率超8成、对GPT4胜率超6成

如果只看绝对值,即只算平均命中数的话,我们对gpt3.5的胜率是84.21%,对gpt4的胜率是63.16%

考虑到LLM输出的长度存在上限,也不至于生成过多观点,并且基于具体应用场景考虑,“过多生成观点”也并非坏事,甚至可能还会给用户带来更多启发

故考虑直接使用“命中数”作为相关指标,即直接统计LLM与人工的“强匹配项”,而不计其生成的观点数

还是一样的,其中A为Review A,通常为LLM输出的Review;B为Review B,通常为人工Review。命中数即为“LLM输出的Review与人工Review的强匹配项数”

对于具体的某篇Paper p,其存在N个LLM输出的Review,对N个Review的命中数计算出平均命中数mean hits。在本评估中N为5,即对于同篇Paper均输出5个Review首先,两两比对llama2与gpt-3.5-turbo对于同篇Paper的平均命中数,平均命中数高者即为“获胜”。对于给定的57篇Paper,其中48篇为llama2具有更高的平均命中数,4篇打平(蓝色的tie表示),余下5篇则为gpt-3.5-turbo具有更高的平均命中数

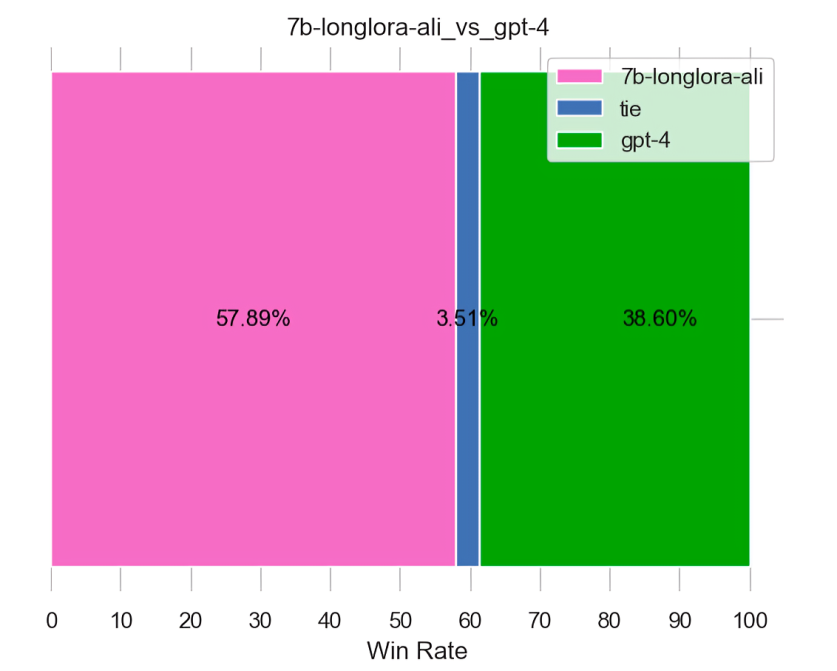

其次,那如果是PK GPT4呢?通过两两比对llama2与gpt-4对于同篇Paper的平均命中数,平均命中数高者即为“获胜”。对于给定的57篇Paper,其中36篇为llama2具有更高的平均命中数,余下27篇则为gpt-4具有更高的平均命中数

6.3 对LLaMA2 7B chat-LongLoRA效果的评估:依然强过GPT3.5和GPT4

一开始第二项目组的文弱做了很多评估,加之考虑到以后会经常做各种评估,我总结了一下今后的「模型评估原则」:在同在一个季度的工作 才互相PK,且用当季度最强的裁判去评判

按照这个原则的话

- 那23年我司q4的7b工作,去对标GPT4时,对比gpt4-1106即可,且gpt4-1106做裁判

不需要对比gpt4-0125的任何生成结果,且7b也不用gpt4-0125的任何裁判结果

但后续的第3版13b可以去和gpt4-0125的生成结果PK,且gpt4-0125去评判

如此对我司和OpenAI都公平公正,毕竟谁都会升级,那就PK同一时间段的产物- 关于裁判 再强调一下

裁判首选用当时的最强裁判去评判,避免每出来一个新裁判,就得把以前的工作重新评判下,那评判无止境了

根据上一节的评估方法,只考虑命中数的话(之后版本的评估,除非特别说明,都默认只考虑命中数),直接上结果(有的朋友可能已经预料到了,由于本质上都是微调的LLaMA2 7B chat,只是半个月之前用的longqlora,这一次用的longlora,所以效果上不会有什么太大的差距)

6.3.1 针对「5.2.1 [不染]Llama2-7b-chat + LongLoRA源码 + 训练/推理」的评估

- 在论文审稿这个单项场景下,我司微调后的模型依然超过GPT3.5 turbo

- 如你所见,我司微调后的模型依然超过GPT4

6.3.2 针对「5.2.2 [阿李]LLaMA-2-7b-chat + LongLoRA训练」的评估

还是同样的评估方法,通过56篇paper-人工review产生的285条数据集,依然考察命中数,评估结果如下图所示,依然超过了GPT3.5、GPT4

至此,本文中已透露了很多我司论文审稿GPT第2版的各种工程细节,这些细节网上很少有,毕竟商用项目

至于第2.5版本的优化,请参见此文《七月论文审稿GPT第2.5版:微调GPT3.5 turbo 16K和llama2 13B以扩大对GPT4的优势》

当然 再更多则在「大模型项目开发线上营」见

参考文献与推荐阅读

- GPT4当审稿人那篇论文的全文翻译:【斯坦福大学最新研究】使用大语言模型生成审稿意见

- GPT-4竟成Nature审稿人?斯坦福清华校友近5000篇论文实测,超50%结果和人类评审一致

- 几篇mistral-7B的中文解读

从开源LLM中学模型架构优化-Mistral 7B

开源社区新宠Mistral,最好的7B模型 - Mistral 7B-来自号称“欧洲OpenAI”Mistral AI团队发布的最强7B模型

- [论文尝鲜]LongLoRA - 高效微调长上下文的LLMs,如果你发现该文与本博客中的有些表述不一致,请以本博客的为准

- 2行代码,「三体」一次读完!港中文贾佳亚团队联手MIT发布超长文本扩展技术,打破LLM遗忘魔咒

创作、修改、完善记录

- 第一大阶段 review数据处理

11.2日,开写本文 - 11.3日,侧重写第二部分、GPT4审稿的思路

- 11.4日,侧重写第三部分中的Mistral 7B

- 11.5日,继续完善Mistral 7B的部分

- 11.11日,更新此节:“2.2.2 如何让梳理出来的review结果更全面:多聚一”

完善1.1.1节Meta nougat

顺带感慨下,为项目落地而进行的技术研究,这种感觉特别爽,^_^ - 11.15,增加2.2节:对review数据的二次处理

- 11.18,优化2.2节中的部分描述

- 11.22,补充了第二部分关于论文审稿GPT第一版中数据处理部分的细节,比如对paper数据处理只是做了去除reference

补充“3.2.3节 通过最终的prompt来处理review数据:ChatGPT VS 开源模型”的相关内容 - 11.23,新增此节:1.2 对2.6万篇paper的解析

- 11.25,考虑到数据解析、数据处理、模型训练之后,还得做模型的评估

故新增一部分的内容,即第五部分 模型的评估:如何评估审稿GPT的效果 - 12.8,因为要在武汉给一公司做内训,且也将在「大模型项目开发线上营」里讲论文审稿GPT,所以随着该项目的不断推进,故

补充在通过OpenAI的API对review数据做摘要处理时,如何绕开API做的各种访问限制

新增一节:“3.3 相关工作之AcademicGPT:增量训练LLaMA2-70B,包含论文审稿功能” - 12.9,重点优化此节的内容:“3.3.2 论文评审:借鉴ReviewAdvisor抽取出review的7个要点(类似我司借鉴斯坦福工作把review归纳出4个要点)”

- 12.17,重点优化关于「相关工作AcademicGPT」的描述,特别是其review抽取式归纳的策略

- 12.18,补充了Mistral 7B的模型参数图,并补充了和GQA、window_size等参数相关的解释说明

- 12.20,新增:“1.2.2 ScienceBeam的解析结果”,以及review数据的组织格式

- 12.21,完善关于第二版中对paper和review数据处理相关的内容描述,即

第一版,paper有解析无数据处理,review无解析有处理

第二版,paper有解析无数据处理,review也无解析但做了更多处理 - 第二大阶段 模型的选型、训练、调优

12.24,增加对YaRN的补充介绍,即

3.1 YaRN怎么来的:基于“NTK-by-parts”插值修改注意力 - 12.26,开始更新“4.3 LongLora LLaMA”一节

- 12.28,新增一节:4.4 模型怎么选,此三PK:Mistral-instruct、LLaMA-LongLoRA、LLaMA-LongQLoRA

- 24年1.4,为把LongLora和LongQLora更好的写清楚,故把本文中关于LongLora的部分抽取出来独立成一篇新的文章

且新增一节:3.2.4 对review数据的最后梳理:得到JSON文本的变体版 - 1.6,4.4.1节中,确切地说应该是yarn-mistral + qlora + s2attn,且没直接用longqlora的实现,团队直接改的代码,但运行还是基于llama factory的框架

故最终定为:yarn-mistral + qlora + s2attn + llama factory - 1.7,修正关于Mistral中GQA的一个表述:在Mistral的GQA中,Q的头数是K V头数的4倍,而非2倍

- 1.9,修订“4.4 模型怎么选,此三PK:Yarn-Mistral-7b-64k、Mistral-instruct、LLaMA-LongLoRA/LLaMA-LongQLoRA”中相关的内容

- 第三阶段 模型训练终于取得突破

1.17,更新此节

4.4.4 基于LongQLoRA + 一万多条paper-review数据集微调LLaMA2 7B chat:成功

且标题由之前的

七月论文审稿GPT第2版:从Meta Nougat、GPT4审稿到微调Mistral、LongLora Llama

改成

七月论文审稿GPT第2版:如何用一万多条paper-review数据集微调LLaMA2以赶超GPT4 - 1.18,补充让“微调好的模型对某篇新论文输出审稿意见”的prompt,且重点说明了其格式

补充第二版中15566条论文审稿的指令数据的表示(instruction-input-output三元组数据) - 第四阶段 开始进行模型的评估

1.20,新增最新的评估结果,即新增此节

6.2.1 对LLaMA2 7B chat-LongQLoRA效果的评估:强过GPT3.5 - 1.21,新增以下新的一部分

第五部分 模型的训练与微调:如何微调LLaMA 2、Yarn-Mistral - 1.22,更新对GPT4的胜率

6.2.1 对LLaMA2 7B chat-LongQLoRA效果的评估:强过GPT3.5和GPT4

且再次更新本文的标题为

七月论文审稿GPT第2版:用一万多条paper-review数据集微调LLaMA2最终反超GPT4 - 1.24,考虑到学术研究及指标评估是一件极其严肃的事情,故新增一节内容,完全公开我们的评估方法(借鉴的斯坦福一团队把GPT4当审稿人论文中的评估模式)

6.2 对LLaMA2 7B chat-LongQLoRA效果的评估:强过GPT3.5和GPT4 - 1.25,在5.1节中补充我们微调时,对LongQLoRA源码的具体修改

- 1.31,第二项目组的不染把「LLaMA2 7B chat-LongLoRA」的结果也终于跑通了

故更新此节的内容:4.4.3 LLaMA2 7B chat-LongLoRA:成功

且新增一个部分:第七部分 后续的迭代计划:第2.5版微调ChatGPT和13B的模型 - 第五阶段 再次超越GPT4,且不断把模型评估的工作严谨细致化

2.1日,新增一节的内容,即

6.3 对LLaMA2 7B chat-LongLoRA效果的评估:依然强过GPT3.5和GPT4

至此,也代表我过去半年带项目团队的成功,代表第二项目组阿荀等人的努力没白费,代表今年我司可以做出更多有影响力、有效果的大模型应用,欢迎更多朋友加入我司各个项目组 - 2.6日,新增一节,即

4.4.3.2 阿李的工作:LongLoRA跑第二轮 - 2.7日,补充requirements.txt,且新增一节,即

5.2 LLaMA2 7b chat + LongLoRA训练 - 2.12日,更新此节的内容

6.3.2 针对「5.2.2 [阿李]LLaMA-2-7b-chat + LongLoRA训练」的评估 - 2.15,在6.3节的开头补上模型评估原则

且在6.2节开头补上关于gpt4-turbo的各个模型示意图 - 2.21,在第五部分最后补充:阿李与不染训练过程的差异

- 3.1,把介绍Mistral 7B的部分抽取出来,放到另一篇文章中

《从Mistral 7B到MoE模型Mixtral 8x7B的全面解析:从原理分析到代码解读》 - 3.5,把此节的标题,从此三PK,改成此四PK,即

4.2 模型怎么选,此四PK:Yarn-Mistral-7b-64k、Mistral-instruct、LLaMA-LongLoRA、LLaMA-LongQLoRA - 4.15,把2.2节的标题改为:

2.2 第二版对review数据的处理:去掉作者的回复、去除过短的review - 4.19,为免歧义,修订下面这句话

input所代表的paper截断一部分,output所代表的review截断一部分 - 4.28,把4.2.4节里,“然后对比了GPT3.5和GPT4针对YARN这篇论文的审稿意见

首先用的prompt如下(纯正的JSON格式)”中prompt的这句话

You just need to use the following JSON format for output, but don't output opinions that don't exist in the original reviews. if you're not sure, return an empty dict:

改成

You just need to use the following JSON format for output, but don't output opinions that don't exist in the original paper. if you're not sure, return an empty dict: