[Big Data & AI] An Illustrated Guide to the Transformer Algorithm Behind Large Models Such as ChatGPT and ERNIE Bot (文心一言)

Author: 凡人多烦事01 | 2024-04-06 22:07:33

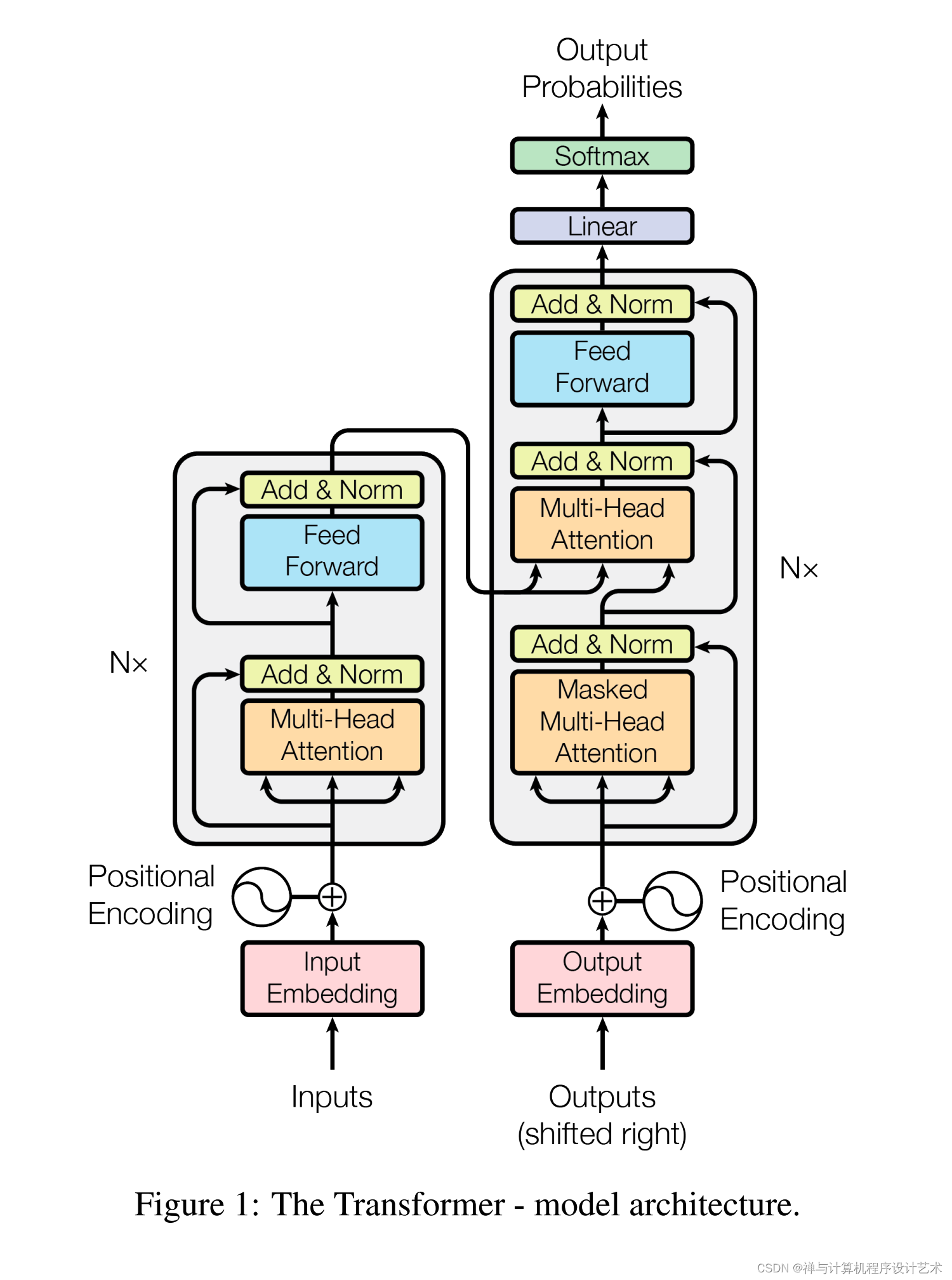

The Transformer was proposed in the paper Attention Is All You Need. Its abstract reads:

The dominant sequence transduction models are based on complex recurrent or convolutional neural networks in an encoder-decoder configuration. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Our model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving over the existing best res
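The abstract describes a model built solely on attention mechanisms. The core operation in the paper is scaled dot-product attention, softmax(QKᵀ / √d_k) V. The following is a minimal pure-Python sketch of that formula for illustration only. The function names `softmax` and `scaled_dot_product_attention` are hypothetical, and the sketch omits multi-head projections, masking, and batching.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of row vectors.

    queries: list of vectors of length d_k
    keys:    list of vectors of length d_k
    values:  list of vectors (one per key)
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted sum of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs
```

Each output row is a weighted average of the value vectors, with weights determined by how strongly the query matches each key. Because every query is processed independently of the others, the computation parallelizes across positions, which is the property the abstract contrasts with recurrent models.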

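Disclaimer: This article was contributed by a site user and does not represent the position of wpsshop博客. Copyright belongs to the original author, and this site assumes no legal liability. If you find infringing content, please contact us. When reposting, please cite the source: https://www.wpsshop.cn/w/凡人多烦事01/article/detail/374625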
