Deploying Llama 3 Locally for Model Chat

Author: 凡人多烦事01 | 2024-05-16 22:34:38

Local deployment of the open-source large language model Llama 3

1. Download the repository from GitHub

https://github.com/meta-llama/llama3

Clone the repository with git, or download the ZIP archive directly from the GitHub project page:

    git clone https://github.com/meta-llama/llama3.git

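2. Request a model download link

Fill in the request form on the Meta Llama website. It seems you need to select a country other than China; the organization field can be anything.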

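3. Install dependencies

Run the following command in the top-level directory of the llama3 repository (preferably inside a conda environment with Python installed):

    pip install -e .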
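A quick way to confirm that the install worked is to check that the key modules can be found, without actually importing them. This is a minimal sketch: the module list below is my assumption about what the repository needs (based on the imports used in the scripts later in this post), and `missing_modules` is a hypothetical helper, not part of the llama3 repository.

```python
from importlib.util import find_spec


def missing_modules(names):
    """Return the module names from `names` that cannot be located for import."""
    return [name for name in names if find_spec(name) is None]


# Assumed list of top-level modules; adjust it to match your checkout.
REQUIRED = ["torch", "fire", "llama"]

if __name__ == "__main__":
    missing = missing_modules(REQUIRED)
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All required modules can be found.")
```

If anything is reported missing, rerunning `pip install -e .` inside the activated conda environment is the first thing to try.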

4.下载模型

执行以下命令

bash download.sh

- 1

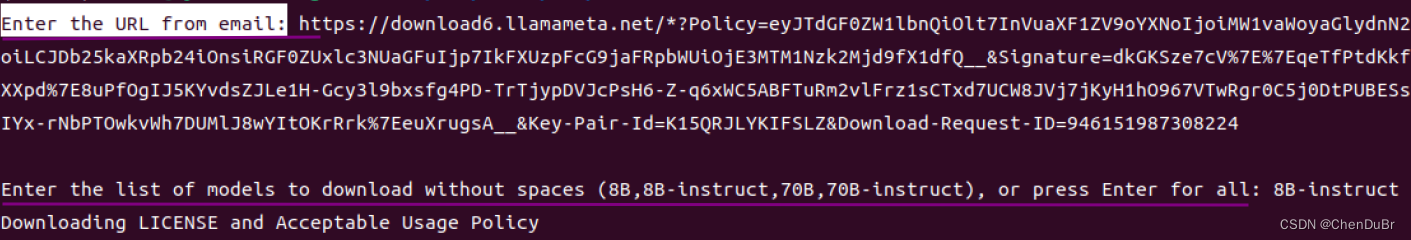

根据提示输出邮件里的链接,选择想要的模型,我这里选的是8B-instruct,注意要确保自己的显存足够模型推理

开始下载之后要等待一段时间才能下载完成

开始下载之后要等待一段时间才能下载完成
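To get a rough feel for whether a model fits in GPU memory, you can estimate the size of the weights alone. The sketch below assumes about 8e9 parameters for the 8B model and 2 bytes per parameter (16-bit floats); both numbers are assumptions for illustration, and `weight_memory_gib` is a hypothetical helper. Actual usage is higher, because activations and the attention cache also take memory.

```python
def weight_memory_gib(n_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory needed for the model weights alone, in GiB."""
    return n_params * bytes_per_param / 2**30


if __name__ == "__main__":
    # Assumption: roughly 8e9 parameters stored as 16-bit floats (2 bytes each).
    print(f"8B weights: about {weight_memory_gib(8e9):.1f} GiB")
```

Under these assumptions the weights alone need a little under 15 GiB, so a card with much less memory than that is unlikely to run the 8B model with this setup.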

5. 运行示例脚本

执行以下命令:

torchrun --nproc_per_node 1 example_chat_completion.py \

--ckpt_dir Meta-Llama-3-8B-Instruct/ \

--tokenizer_path Meta-Llama-3-8B-Instruct/tokenizer.model \

--max_seq_len 512 --max_batch_size 6

- 1

- 2

- 3

- 4

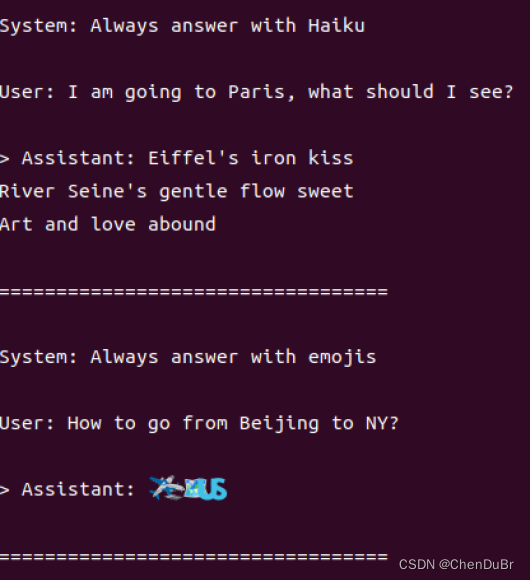

有以下一些对话的示例输出

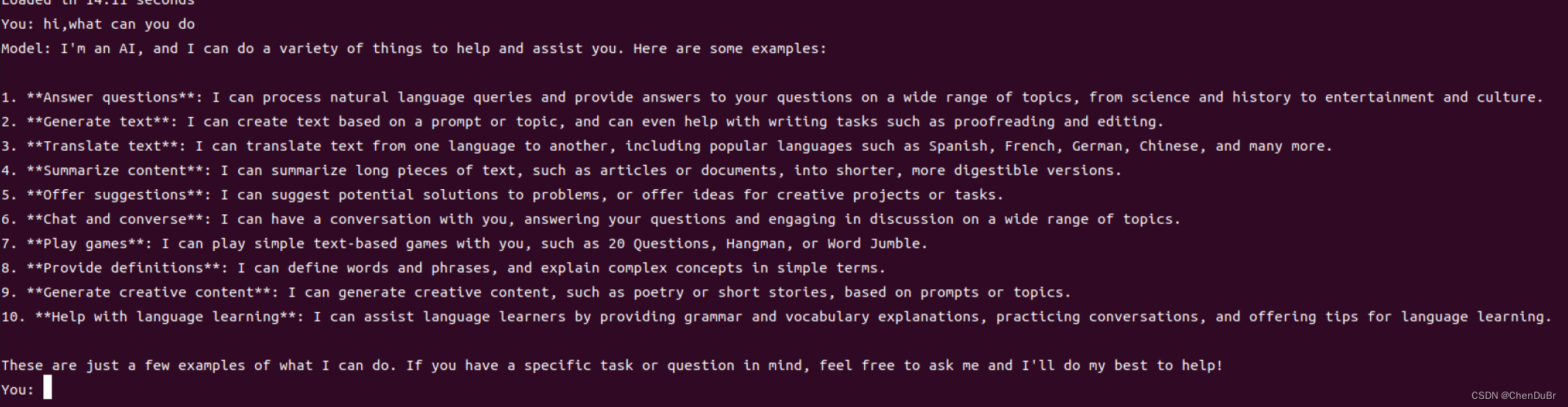
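If the command above fails because it cannot find the checkpoint or the tokenizer, it helps to check what is actually in the model directory. The sketch below is only an illustration: `tokenizer.model` comes from the command above, but the other two file names are my assumption about what a downloaded 8B checkpoint contains, and `missing_checkpoint_files` is a hypothetical helper.

```python
from pathlib import Path

# Assumed contents of a downloaded 8B checkpoint directory; verify against your own download.
EXPECTED_FILES = ("params.json", "tokenizer.model", "consolidated.00.pth")


def missing_checkpoint_files(ckpt_dir, expected=EXPECTED_FILES):
    """Return the expected file names that are absent from ckpt_dir."""
    root = Path(ckpt_dir)
    return [name for name in expected if not (root / name).is_file()]


if __name__ == "__main__":
    missing = missing_checkpoint_files("Meta-Llama-3-8B-Instruct")
    print("Missing files:", missing if missing else "none")
```

An empty result only means the files exist; it does not verify that the download is complete or uncorrupted.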

Run your own chat script

Create the following chat.py script in the repository's root directory:

    # Copyright (c) Meta Platforms, Inc. and affiliates.
    # This software may be used and distributed in accordance with the terms of the Llama 3 Community License Agreement.

    from typing import List, Optional

    import fire

    from llama import Dialog, Llama


    def main(
        ckpt_dir: str,
        tokenizer_path: str,
        temperature: float = 0.6,
        top_p: float = 0.9,
        max_seq_len: int = 512,
        max_batch_size: int = 4,
        max_gen_len: Optional[int] = None,
    ):
        """
        Examples to run with the models finetuned for chat. Prompts consist of chat
        turns between the user and assistant with the final one always being the user.

        An optional system prompt at the beginning to control how the model should respond
        is also supported.

        The context window of llama3 models is 8192 tokens, so `max_seq_len` needs to be <= 8192.

        `max_gen_len` is optional because finetuned models are able to stop generations naturally.
        """
        generator = Llama.build(
            ckpt_dir=ckpt_dir,
            tokenizer_path=tokenizer_path,
            max_seq_len=max_seq_len,
            max_batch_size=max_batch_size,
        )

        # A single dialog holding one user message; its content is overwritten
        # on every turn, so the model never sees earlier turns.
        dialogs: List[Dialog] = [
            [{"role": "user", "content": ""}],  # Initialize with an empty user input
        ]

        # Start the conversation loop
        while True:
            # Get user input
            user_input = input("You: ")

            # Exit loop if user inputs 'exit'
            if user_input.lower() == 'exit':
                break

            # Replace the user message with the latest input
            dialogs[0][0]["content"] = user_input

            # Use the generator to get model response
            result = generator.chat_completion(
                dialogs,
                max_gen_len=max_gen_len,
                temperature=temperature,
                top_p=top_p,
            )[0]

            # Print model response
            print(f"Model: {result['generation']['content']}")


    if __name__ == "__main__":
        fire.Fire(main)

运行以下命令就可以开始对话辣:

torchrun --nproc_per_node 1 chat.py --ckpt_dir Meta-Llama-3-8B-Instruct/ --tokenizer_path Meta-Llama-3-8B-Instruct/tokenizer.model --max_seq_len 512 --max_batch_size 6

- 1

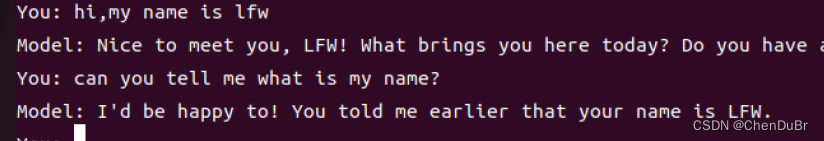
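It can help to see what the model actually receives when a dialog (a list of role/content messages) is passed to `chat_completion`. As far as I understand the Llama 3 chat template, each message is wrapped in header and end-of-turn markers, and an empty assistant header is added at the end for the model to complete. The sketch below renders that layout as plain text; `render_prompt` is a hypothetical helper for illustration only, and the library itself works on token IDs, not strings.

```python
def render_prompt(dialog):
    """Render a dialog as text using the (assumed) Llama 3 chat layout."""
    parts = ["<|begin_of_text|>"]
    for message in dialog:
        # Each message gets a role header followed by its stripped content.
        parts.append(f"<|start_header_id|>{message['role']}<|end_header_id|>\n\n")
        parts.append(message["content"].strip() + "<|eot_id|>")
    # An open assistant header asks the model to write the next turn.
    parts.append("<|start_header_id|>assistant<|end_header_id|>\n\n")
    return "".join(parts)


if __name__ == "__main__":
    print(render_prompt([{"role": "user", "content": "Hello!"}]))
```

Seen this way, a single-turn script always sends exactly one user message, which is why the model has no memory of earlier questions.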

Multi-turn conversation

The Mchat.py script is as follows:

    from typing import List, Optional

    import fire

    from llama import Dialog, Llama


    def main(
        ckpt_dir: str,
        tokenizer_path: str,
        temperature: float = 0.6,
        top_p: float = 0.9,
        max_seq_len: int = 512,
        max_batch_size: int = 4,
        max_gen_len: Optional[int] = None,
    ):
        """
        Run chat models finetuned for multi-turn conversation. Prompts should include all previous turns,
        with the last one always being the user's.

        The context window of llama3 models is 8192 tokens, so `max_seq_len` needs to be <= 8192.

        `max_gen_len` is optional because finetuned models are able to stop generations naturally.
        """
        generator = Llama.build(
            ckpt_dir=ckpt_dir,
            tokenizer_path=tokenizer_path,
            max_seq_len=max_seq_len,
            max_batch_size=max_batch_size,
        )

        dialogs: List[Dialog] = [[]]  # Start with an empty dialog

        while True:
            user_input = input("You: ")
            if user_input.lower() == 'exit':
                break

            # Update the dialogs list with the latest user input
            dialogs[0].append({"role": "user", "content": user_input})

            # Generate model response using the current dialog context
            result = generator.chat_completion(
                dialogs,
                max_gen_len=max_gen_len,
                temperature=temperature,
                top_p=top_p,
            )[0]

            # Print model response and add it to the dialog.
            # The reply is stored under the "assistant" role (not "system"),
            # so later turns see it as the model's own earlier message.
            model_response = result['generation']['content']
            print(f"Model: {model_response}")
            dialogs[0].append({"role": "assistant", "content": model_response})


    if __name__ == "__main__":
        fire.Fire(main)
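The history bookkeeping of a multi-turn chat can be tried out without a GPU by swapping the model for a stand-in function. This is a minimal sketch under that assumption: `ChatSession` and `echo_reply` are hypothetical names, and model replies are stored under the assistant role, which is the role Llama 3's chat format uses for model turns.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class ChatSession:
    """Keeps the running history of one conversation."""

    def __init__(self, reply_fn: Callable[[List[Message]], str]):
        self.reply_fn = reply_fn
        self.history: List[Message] = []

    def ask(self, user_input: str) -> str:
        # Record the user turn, get a reply for the full history, then record the reply.
        self.history.append({"role": "user", "content": user_input})
        reply = self.reply_fn(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


def echo_reply(history: List[Message]) -> str:
    """Hypothetical stand-in for the model: reports how many messages it saw."""
    return f"seen {len(history)} message(s)"


if __name__ == "__main__":
    session = ChatSession(echo_reply)
    print(session.ask("first question"))
    print(session.ask("second question"))
```

With the real model, `reply_fn` could wrap the `generator.chat_completion(...)` call from the script and return `result['generation']['content']`.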

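Run the following command to start a multi-turn conversation. Increasing --max_seq_len (512 here) lets the model remember more of the conversation, but it may also increase inference time and memory use. As the script's docstring notes, the value must not exceed the 8192-token context window of Llama 3.

    torchrun --nproc_per_node 1 Mchat.py \
        --ckpt_dir Meta-Llama-3-8B-Instruct/ \
        --tokenizer_path Meta-Llama-3-8B-Instruct/tokenizer.model \
        --max_seq_len 512 --max_batch_size 6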
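Because the whole history is sent on every turn, a long conversation will eventually outgrow whatever `--max_seq_len` allows. One simple way to cope is to drop the oldest turns. The sketch below is an assumption-laden illustration, not part of the original scripts: `trim_history` is a hypothetical helper, and it limits the number of messages as a crude proxy for the token budget (a more careful version would count tokens with the tokenizer).

```python
def trim_history(history, max_messages):
    """Keep a leading system message (if any) plus the most recent messages.

    The kept window always starts with a user message, so the dialog
    still alternates user/assistant turns after trimming.
    """
    if max_messages < 1:
        raise ValueError("max_messages must be at least 1")
    system = history[:1] if history and history[0]["role"] == "system" else []
    recent = history[len(system):][-max_messages:]
    # Drop leading non-user messages so the window opens with a user turn.
    while recent and recent[0]["role"] != "user":
        recent = recent[1:]
    return system + recent


if __name__ == "__main__":
    demo = [
        {"role": "user", "content": "q1"},
        {"role": "assistant", "content": "a1"},
        {"role": "user", "content": "q2"},
    ]
    print(trim_history(demo, 2))
```

In the multi-turn loop, this could be applied to the dialog just before each generation call, at the cost of the model forgetting the trimmed turns.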

Have fun!

