
Kubernetes (k8s) Cluster Deployment, Part 6: Service (ClusterIP, NodePort, ExternalName, and enabling kube-proxy's IPVS mode)


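Service

A Service exposes an application so that it can be reached, and load-balances dynamically across its Pods. This matters in a container environment, where Pod IP addresses change constantly.

A Service can be seen as the external access point for a group of Pods that provide the same service. With Services, applications get service discovery and load balancing with little effort.

By default a Service only provides layer 4 load balancing and has no layer 7 features (those can be provided by Ingress).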

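Service types:

ClusterIP: the default. k8s automatically assigns the Service a virtual IP that is reachable only from inside the cluster.

NodePort: exposes the Service to the outside through a port on each Node. A request to any NodeIP:nodePort is routed to the ClusterIP.

LoadBalancer: builds on NodePort and uses the cloud provider to create an external load balancer that forwards requests to <NodeIP>:NodePort. This mode only works on cloud servers.

ExternalName: maps the Service to a specified domain name through a DNS CNAME record (set with spec.externalName). It is used to reach external services from inside the cluster.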

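Compare this with the docker-proxy covered earlier.

A Service is implemented jointly by the kube-proxy component and iptables.

When kube-proxy handles Services through iptables, it has to install a large number of iptables rules on the host. If the host runs many Pods, constantly refreshing those rules consumes a lot of CPU.

Services in IPVS mode let a k8s cluster scale to a much larger number of Pods.

ClusterIP (the default)

Lab environment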

    [root@server2 ~]# kubectl apply -f rs.yml
    deployment.apps/deployment created
    [root@server2 ~]# cat rs.yml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: deployment
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: myapp:v1
    [root@server2 ~]# kubectl expose deployment deployment --port=80
    service/deployment exposed
    [root@server2 ~]# kubectl get svc
    NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)   AGE
    deployment   ClusterIP   10.104.98.119   <none>        80/TCP    7s
    kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP   9d
    [root@server2 ~]# kubectl describe svc deployment
    Name:              deployment
    Namespace:         default
    Labels:            <none>
    Annotations:       <none>
    Selector:          app=nginx
    Type:              ClusterIP
    IP Families:       <none>
    IP:                10.104.98.119
    IPs:               10.104.98.119
    Port:              <unset>  80/TCP
    TargetPort:        80/TCP
    Endpoints:         10.244.1.71:80,10.244.1.72:80,10.244.1.73:80
    Session Affinity:  None
    Events:            <none>
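The Endpoints line in the describe output comes from matching the Service's selector (app=nginx) against Pod labels. The following is a rough Python sketch of that matching rule only, not of the real endpoints controller (which also takes Pod readiness into account). The function names and the fourth Pod are hypothetical and added purely for illustration.

```python
def matches(selector, labels):
    """A Pod matches when it carries every key/value pair of the selector."""
    return all(labels.get(key) == value for key, value in selector.items())


def endpoints(selector, pods, target_port):
    """Return 'ip:port' strings for every Pod whose labels match the selector."""
    return [f"{pod['ip']}:{target_port}" for pod in pods if matches(selector, pod["labels"])]


pods = [
    {"ip": "10.244.1.71", "labels": {"app": "nginx"}},
    {"ip": "10.244.1.72", "labels": {"app": "nginx"}},
    {"ip": "10.244.1.73", "labels": {"app": "nginx"}},
    {"ip": "10.244.1.99", "labels": {"app": "other"}},  # hypothetical Pod, not in the transcript
]

print(endpoints({"app": "nginx"}, pods, 80))
```

Only the three Pods labelled app=nginx end up behind the Service, which is why the Deployment's template labels and the Service selector must agree.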


The Service is implemented through iptables rules:

    [root@server2 ~]# iptables -t nat -nL | grep :80
    DNAT       tcp  --  0.0.0.0/0            0.0.0.0/0            /* default/deployment */ tcp to:10.244.1.71:80
    DNAT       tcp  --  0.0.0.0/0            0.0.0.0/0            /* default/deployment */ tcp to:10.244.1.72:80
    DNAT       tcp  --  0.0.0.0/0            0.0.0.0/0            /* default/deployment */ tcp to:10.244.1.73:80
    KUBE-MARK-MASQ  tcp  -- !10.244.0.0/16        10.104.98.119        /* default/deployment cluster IP */ tcp dpt:80
    KUBE-SVC-ZBZTQJVIH62KRRHU  tcp  --  0.0.0.0/0            10.104.98.119        /* default/deployment cluster IP */ tcp dpt:80
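The filtered output above shows only the three DNAT targets. In iptables mode, kube-proxy normally chooses among such targets with per-rule random probabilities (the iptables `statistic` match), and those rules do not appear in this grep. Assuming that scheme, the sketch below checks that rule probabilities of 1/n, 1/(n-1), ..., 1, evaluated in order, give each backend an equal share. The function names are hypothetical.

```python
from fractions import Fraction


def rule_probabilities(n):
    """Probability attached to each of n sequential rules: 1/n, 1/(n-1), ..., 1."""
    return [Fraction(1, n - i) for i in range(n)]


def effective_shares(n):
    """Share of traffic each rule receives when rules are tried in order."""
    shares = []
    remaining = Fraction(1)  # fraction of traffic that has not matched an earlier rule
    for p in rule_probabilities(n):
        shares.append(remaining * p)
        remaining *= 1 - p
    return shares


print(rule_probabilities(3))
print(effective_shares(3))
```

Because every packet walks the rule list sequentially, the number of rules grows with the number of Services and Pods, which is the cost the text attributes to iptables mode.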


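Enabling kube-proxy's IPVS mode:

    # install on every node
    yum install -y ipvsadm

    # switch to IPVS mode; the ConfigMap keeps the service's configuration separate from the image
    kubectl edit cm kube-proxy -n kube-system
        mode: "ipvs"

    # recreate the kube-proxy Pods so that they pick up the new configuration
    kubectl get pod -n kube-system |grep kube-proxy | awk '{system("kubectl delete pod "$1" -n kube-system")}'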

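The LVS load balancer table (viewable with ipvsadm -ln) lists the replicas that were created. When the replica count in the manifest changes, the change shows up there directly.

kube-proxy uses the Linux IPVS module to schedule requests across the Service's Pods in rr (round-robin) fashion.

Load balancing in IPVS mode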

    [root@server2 ~]# curl 10.104.98.119
    Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
    [root@server2 ~]# curl 10.104.98.119/hostname.html
    deployment-6456d7c676-qnnw5
    [root@server2 ~]# curl 10.104.98.119/hostname.html
    deployment-6456d7c676-svkdn
    [root@server2 ~]# curl 10.104.98.119/hostname.html
    deployment-6456d7c676-68hlt
    [root@server2 ~]# curl 10.104.98.119/hostname.html
    deployment-6456d7c676-qnnw5
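The hostname.html responses rotate through the three Pods and then start over, which is what rr scheduling predicts. As a toy model only (the real scheduling happens in the kernel's IPVS module), the rotation can be reproduced in Python. The function name `round_robin` is hypothetical, and the Pod names are copied from the transcript.

```python
from itertools import cycle, islice


def round_robin(backends, requests):
    """Return the backend chosen for each of `requests` consecutive requests."""
    return list(islice(cycle(backends), requests))


backends = [
    "deployment-6456d7c676-qnnw5",
    "deployment-6456d7c676-svkdn",
    "deployment-6456d7c676-68hlt",
]

for pod in round_robin(backends, 4):
    print(pod)
```

The fourth request lands on the first Pod again, matching the fourth curl in the transcript.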


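In IPVS mode, once a Service is created, kube-proxy adds a virtual network interface named kube-ipvs0 on the host and assigns the Service IP to it.

Every node has this interface.

When another Service is added: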

    [root@server2 ~]# cat demo.yml
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: myservice
    spec:
      selector:
        app: myapp
      ports:
      - protocol: TCP
        port: 80
        targetPort: 80
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: demo2
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp
            image: myapp:v2


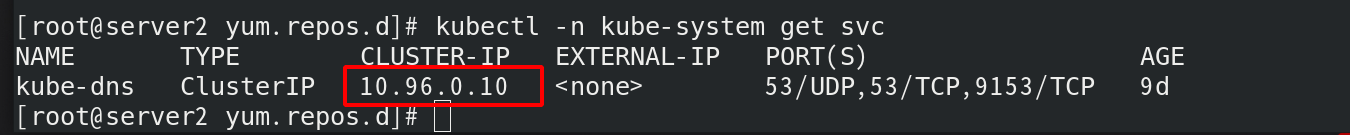

Kubernetes provides a DNS add-on Service.

It provides DNS resolution for the whole cluster.

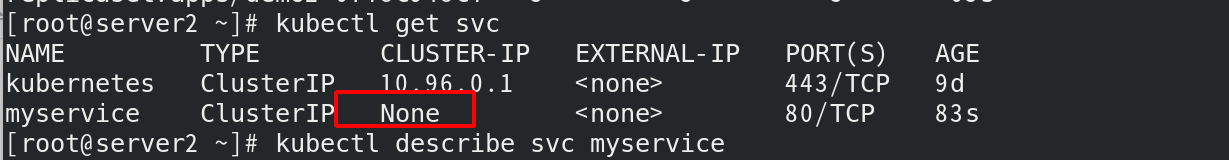

Headless Service

A headless Service is not assigned a VIP. Instead, its DNS record resolves directly to the IP addresses of the Pods it fronts.

Domain name format: $(servicename).$(namespace).svc.cluster.local
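As a small illustration of the name format, the helper below assembles a Service's fully qualified domain name. The function name `service_fqdn` is hypothetical, and the defaults ("default" namespace, "cluster.local" cluster domain) are assumptions that match the names used in this article.

```python
def service_fqdn(service, namespace="default", cluster_domain="cluster.local"):
    """Build $(servicename).$(namespace).svc.<cluster domain>."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


print(service_fqdn("myservice"))
print(service_fqdn("kube-dns", namespace="kube-system"))
```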

    [root@server2 ~]# cat demo.yml
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: myservice
    spec:
      selector:
        app: myapp
      ports:
      - protocol: TCP
        port: 80
        targetPort: 80
      clusterIP: None    # do not allocate a cluster IP
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: demo2
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp
            image: myapp:v2
    [root@server2 ~]# kubectl apply -f demo.yml


Each Pod gets its own A record:

    / # curl 10.244.1.83 10-244-1-83.myservice.default.svc.cluster.local/hostname.html
    Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
    demo2-67f8c948cf-tnjs9
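The per-Pod name used in the curl command looks like the Pod IP with dots replaced by dashes, placed in front of the Service's domain name. The sketch below reproduces that pattern as inferred from this one transcript. Whether a cluster serves such names depends on its DNS add-on configuration, and the function name `pod_record` is hypothetical.

```python
import ipaddress


def pod_record(pod_ip, service, namespace="default", cluster_domain="cluster.local"):
    """Build the dashed-IP record name seen in the transcript for a Pod behind a headless Service."""
    ip = ipaddress.IPv4Address(pod_ip)  # raises ValueError if pod_ip is not a valid IPv4 address
    dashed = str(ip).replace(".", "-")
    return f"{dashed}.{service}.{namespace}.svc.{cluster_domain}"


print(pod_record("10.244.1.83", "myservice"))
```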


After the Pods are replaced, the name still resolves:

    $ kubectl delete pod --all
    pod "deployment-nginx-58f549b56d-4qswl" deleted
    pod "deployment-nginx-58f549b56d-7sz7c" deleted
    pod "deployment-nginx-58f549b56d-gwswr" deleted
    $ dig -t A nginx-svc.default.svc.cluster.local @10.96.0.10
    ...
    ;; QUESTION SECTION:
    ;nginx-svc.default.svc.cluster.local. IN A

    ;; ANSWER SECTION:
    nginx-svc.default.svc.cluster.local. 30 IN A 10.244.2.111
    nginx-svc.default.svc.cluster.local. 30 IN A 10.244.1.120
    nginx-svc.default.svc.cluster.local. 30 IN A 10.244.0.61
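To compare the Pod IPs before and after a restart, it can help to pull the A records out of the dig output programmatically. This is a minimal sketch that assumes the plain-text layout shown above. The function name `a_records` is hypothetical, and the sample text is copied from the transcript.

```python
def a_records(dig_output):
    """Extract the IPv4 addresses from the ANSWER SECTION of dig's text output."""
    ips = []
    in_answer = False
    for raw in dig_output.splitlines():
        line = raw.strip()
        if line.startswith(";; ANSWER SECTION"):
            in_answer = True
            continue
        if not in_answer or not line:
            continue
        if line.startswith(";;"):  # the next section header ends the answer section
            break
        fields = line.split()
        # Expected record layout: <name> <ttl> IN A <address>
        if len(fields) >= 5 and fields[2] == "IN" and fields[3] == "A":
            ips.append(fields[4])
    return ips


sample = """\
;; QUESTION SECTION:
;nginx-svc.default.svc.cluster.local. IN A

;; ANSWER SECTION:
nginx-svc.default.svc.cluster.local. 30 IN A 10.244.2.111
nginx-svc.default.svc.cluster.local. 30 IN A 10.244.1.120
nginx-svc.default.svc.cluster.local. 30 IN A 10.244.0.61
"""

print(a_records(sample))
```

A headless Service returns one A record per backing Pod, so the list length should equal the number of ready replicas.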


NodePort

LoadBalancer

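LoadBalancer builds on NodePort and additionally assigns an IP address.

This is the second way to reach a Service from outside the cluster, and it suits Kubernetes offerings on public clouds. In that case you can declare a Service of type LoadBalancer.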

    $ vim lb-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: lb-nginx
    spec:
      ports:
      - name: http
        port: 80
        targetPort: 80
      selector:
        app: nginx
      type: LoadBalancer


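Once the Service is submitted, Kubernetes calls the CloudProvider to create a load balancer on the public cloud and configures the IP addresses of the proxied Pods as its backends.

A Service can also be given a public IP address.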

    [root@server2 ~]# cat demo.yml
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: myservice
    spec:
      selector:
        app: myapp
      ports:
      - protocol: TCP
        port: 80
        targetPort: 80
      #clusterIP: None      # do not allocate a cluster IP
      #type: NodePort
      #type: LoadBalancer
      externalIPs:
      - 172.25.10.100       # assign a public IP
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: demo2
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp
            image: myapp:v2


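The Service can then be reached directly through the public address.

ExternalName (reaching external services from inside the cluster)

When the external resource changes, only the domain name in the manifest needs to be updated.

www.westos.org is the official Westos website.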

    $ vim ex-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: my-service
    spec:
      type: ExternalName
      externalName: www.westos.org
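An ExternalName Service amounts to a CNAME record in the cluster DNS: a lookup of the Service name is redirected to the external domain, which is then resolved as usual. The toy resolver below illustrates that indirection with plain dictionaries. It is not how the cluster DNS is implemented, the function name `resolve` is hypothetical, and the address 192.0.2.10 is a documentation placeholder rather than the site's real IP.

```python
def resolve(name, cname_records, a_records, max_hops=8):
    """Follow CNAME records until an A record is found; return its addresses."""
    for _ in range(max_hops):
        if name in a_records:
            return a_records[name]
        if name not in cname_records:
            return []  # no such name
        name = cname_records[name]  # follow the alias
    raise RuntimeError("CNAME chain too long")


# The record an ExternalName Service called my-service would contribute.
cname_records = {"my-service.default.svc.cluster.local": "www.westos.org"}
# Placeholder address; the real one is not given in the article.
a_records = {"www.westos.org": ["192.0.2.10"]}

print(resolve("my-service.default.svc.cluster.local", cname_records, a_records))
```

If the external site moves to another domain, only the CNAME target (the externalName field) changes, and clients inside the cluster keep using the same Service name.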
