Step 87 Time Series Modeling in Practice: LSTM Regression Modeling

基于WIN10的64位系统演示

一、写在前面

这一期,我们介绍大名鼎鼎的LSTM回归。

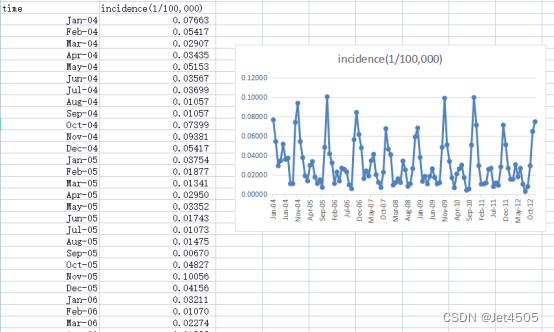

同样,这里使用这个数据:

《PLoS One》2015年一篇题目为《Comparison of Two Hybrid Models for Forecasting the Incidence of Hemorrhagic Fever with Renal Syndrome in Jiangsu Province, China》文章的公开数据做演示。数据为江苏省2004年1月至2012年12月肾综合症出血热月发病率。运用2004年1月至2011年12月的数据预测2012年12个月的发病率数据。

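II. LSTM Regression

(1) Introduction to LSTM

LSTM (Long Short-Term Memory) is a special kind of recurrent neural network (RNN), first proposed by Hochreiter and Schmidhuber in 1997. It was designed to address the long-term dependency problem that arises when training on long sequences, a problem common to conventional RNNs.

(a) Main features of LSTM:

(a1) Three gates: an LSTM contains an input gate, a forget gate, and an output gate. These gates determine how information enters the cell, whether it is stored or forgotten, and how it is output.

(a2) Memory cell: the core of an LSTM is a structure called the memory cell, an internal state that can be retained, modified, or read. Through the gates, the model learns when to store, forget, or retrieve information in the memory cell.

(a3) Long-term dependencies: LSTMs are particularly good at learning, storing, and using long-term information, which mitigates the vanishing-gradient problem that conventional RNNs suffer from on long sequences.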
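The interplay of the three gates and the memory cell can be sketched for a single LSTM unit with a scalar input and scalar state. This is only an illustration of the standard update equations in plain Python, not the Keras implementation; the function name `lstm_step`, the parameter dictionary, and all numeric values are made up for the example.

```python
import math


def sigmoid(z):
    """Logistic function, squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, p):
    """One time step of a single LSTM unit with scalar input and state.

    p maps each of "i", "f", "o", "g" to a (w_x, w_h, bias) tuple;
    these parameter names are illustrative only.
    """
    def pre(name):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre("i"))    # input gate: how much new information to write
    f = sigmoid(pre("f"))    # forget gate: how much old memory to keep
    o = sigmoid(pre("o"))    # output gate: how much memory to expose
    g = math.tanh(pre("g"))  # candidate value to write into the cell

    c = f * c_prev + i * g   # updated memory cell
    h = o * math.tanh(c)     # updated hidden state (the unit's output)
    return h, c


# Hypothetical parameters: the large forget-gate bias keeps f close to 1
params = {"i": (0.5, 0.1, 0.0), "f": (0.0, 0.0, 5.0),
          "o": (0.5, 0.1, 0.0), "g": (1.0, 0.0, 0.0)}

h, c = 0.0, 0.0
for x in [1.0, 0.0, 0.0, 0.0, 0.0]:  # one input pulse, then silence
    h, c = lstm_step(x, h, c, params)
    print(round(c, 4))
```

With these hand-picked values the cell state written at the first step decays only slowly over the following steps, because the forget gate stays close to 1. A trained network learns such gate values from data instead.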

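(b) Why LSTM suits time series modeling:

(b1) Nature of sequential data: time series data are ordered, and earlier data points can influence later ones. LSTM was designed from the outset for sequence data with time lags, delays, and long-term dependencies.

(b2) Long-term dependencies: in time series analysis, an event may be influenced by events that occurred much earlier. Conventional RNNs struggle to capture these long-term dependencies because of vanishing gradients, whereas the LSTM structure handles them effectively.

(b3) Memory cell: for time series forecasting, being able to remember past information is essential. The LSTM memory cell gives the model this ability to store and retrieve long-term information.

(b4) Flexibility: LSTM models can be combined with other neural network structures (such as CNNs) for more complex time series tasks, for example multivariate time series or sequence generation.

In summary, its design and properties make LSTM well suited to time series modeling, especially when the data exhibit long-term dependencies.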
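The vanishing-gradient argument can be made a little more concrete with a simplified comparison. The notation is the usual textbook one and is an assumption here, not something taken from this post: $h_t$ is the hidden state, $c_t$ the memory cell, $f_t$ and $i_t$ the forget and input gates, $g_t$ the candidate value, and $\odot$ the element-wise product.

For a plain RNN with $h_t = \tanh(W h_{t-1} + U x_t + b)$, the gradient across time steps is a product of Jacobians (factors ordered from $t = T$ down to $t = k+1$):

$$
\frac{\partial h_T}{\partial h_k} = \prod_{t=k+1}^{T} \operatorname{diag}\!\left(1 - h_t^{2}\right) W
$$

For an LSTM with $c_t = f_t \odot c_{t-1} + i_t \odot g_t$, the direct path through the cell state (treating the gates as constants) gives:

$$
\frac{\partial c_T}{\partial c_k} \approx \prod_{t=k+1}^{T} \operatorname{diag}\!\left(f_t\right)
$$

In the first product the repeated multiplication by $W$ and by the $\tanh$ derivative tends to shrink the gradient geometrically. In the second, the factors are forget-gate activations, which the network can learn to keep close to 1, so information and gradients can survive over many steps. This is a sketch of the intuition, not a full derivation, since the gates themselves also depend on earlier states.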

(2) Single-step rolling forecast
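The idea behind single-step rolling forecasting is to predict one value at a time and feed each prediction back in as the newest input. The sketch below shows this in plain Python with a stand-in predictor; `make_windows`, `rolling_forecast`, and `mean_predictor` are hypothetical names for this illustration, and a trained LSTM would take the place of `mean_predictor`.

```python
def make_windows(series, n_lags):
    """Split a series into (window, next value) pairs, oldest value first."""
    X, y = [], []
    for t in range(n_lags, len(series)):
        X.append(series[t - n_lags:t])
        y.append(series[t])
    return X, y


def rolling_forecast(predict_one, last_window, n_steps):
    """Forecast n_steps values, feeding each forecast back in as an input."""
    window = list(last_window)
    forecasts = []
    for _ in range(n_steps):
        y_hat = predict_one(window)
        forecasts.append(y_hat)
        window = window[1:] + [y_hat]  # drop the oldest value, append the newest
    return forecasts


def mean_predictor(window):
    """Hypothetical stand-in for a trained model: predicts the window mean."""
    return sum(window) / len(window)


series = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0]
X, y = make_windows(series, n_lags=3)
print(X[0], y[0])  # first training pair
print(rolling_forecast(mean_predictor, series[-3:], n_steps=2))
```

Because every forecast after the first depends on earlier forecasts, errors can accumulate along the horizon, which is worth keeping in mind when comparing the training and validation metrics.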

```python
import numpy as np
import pandas as pd
from sklearn.metrics import mean_absolute_error, mean_squared_error
from tensorflow.keras import layers, models, optimizers

# Read the data
data = pd.read_csv('data.csv')

# Convert the time column to dates
data['time'] = pd.to_datetime(data['time'], format='%b-%y')

# Create lag features. Note the naming: lag_1 is the oldest value
# (6 months back) and lag_6 is the previous month, so the columns
# lag_1..lag_6 are in chronological order.
lag_period = 6
for i in range(lag_period, 0, -1):
    data[f'lag_{i}'] = data['incidence'].shift(lag_period - i + 1)

# Drop the rows that contain NaN
data = data.dropna().reset_index(drop=True)

# Split into training and validation sets
train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]
validation_data = data[(data['time'] >= '2012-01-01') & (data['time'] <= '2012-12-31')]

# Define the features and the target
lag_cols = [f'lag_{i}' for i in range(1, lag_period + 1)]
X_train = train_data[lag_cols].values
y_train = train_data['incidence'].values
X_validation = validation_data[lag_cols].values
y_validation = validation_data['incidence'].values

# An LSTM expects input shaped [samples, timesteps, features]
X_train = X_train.reshape(X_train.shape[0], X_train.shape[1], 1)
X_validation = X_validation.reshape(X_validation.shape[0], X_validation.shape[1], 1)

# Build the LSTM regression model
input_layer = layers.Input(shape=(X_train.shape[1], 1))

x = layers.LSTM(50, return_sequences=True)(input_layer)
x = layers.LSTM(25, return_sequences=False)(x)
x = layers.Dropout(0.1)(x)
x = layers.Dense(25, activation='relu')(x)
x = layers.Dropout(0.1)(x)
output_layer = layers.Dense(1)(x)

model = models.Model(inputs=input_layer, outputs=output_layer)
model.compile(optimizer=optimizers.Adam(learning_rate=0.001), loss='mse')

# Train the model
history = model.fit(X_train, y_train, epochs=200, batch_size=32,
                    validation_data=(X_validation, y_validation), verbose=0)


# Single-step rolling forecast
def rolling_forecast(model, initial_features, n_forecasts):
    forecasts = []
    current_features = initial_features.copy()

    for i in range(n_forecasts):
        # Predict from the current feature window
        window = current_features.reshape(1, len(current_features), 1)
        forecast = model.predict(window, verbose=0).flatten()[0]
        forecasts.append(forecast)

        # Update the window: drop the oldest value, append the new forecast
        current_features = np.roll(current_features, shift=-1)
        current_features[-1] = forecast

    return np.array(forecasts)


# Start from the lag window of the first validation month, which holds the
# last 6 observations of the training period (Jul to Dec 2011).
# X_train[-1] would end at Nov 2011 and shift every forecast by one month.
initial_features = X_validation[0].flatten()

# Forecast the validation period with the single-step rolling method
y_validation_pred = rolling_forecast(model, initial_features, len(X_validation))

# MAE, MAPE, MSE and RMSE on the training set
y_train_pred = model.predict(X_train, verbose=0).flatten()
mae_train = mean_absolute_error(y_train, y_train_pred)
mape_train = np.mean(np.abs((y_train - y_train_pred) / y_train))
mse_train = mean_squared_error(y_train, y_train_pred)
rmse_train = np.sqrt(mse_train)

# MAE, MAPE, MSE and RMSE on the validation set
mae_validation = mean_absolute_error(y_validation, y_validation_pred)
mape_validation = np.mean(np.abs((y_validation - y_validation_pred) / y_validation))
mse_validation = mean_squared_error(y_validation, y_validation_pred)
rmse_validation = np.sqrt(mse_validation)

print("Validation set:", mae_validation, mape_validation, mse_validation, rmse_validation)
print("Training set:", mae_train, mape_train, mse_train, rmse_train)
```

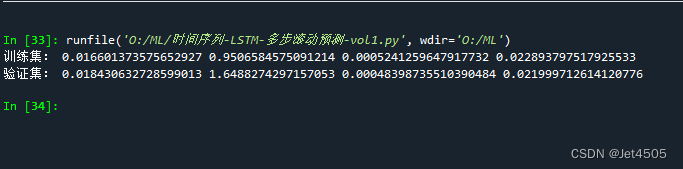

看结果:
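Each script prints four numbers per data set, in the order MAE, MAPE, MSE, RMSE. As a rough sketch of what they measure, the same quantities can be computed in plain Python. The function name `regression_metrics` is made up for this illustration; the scripts themselves rely on scikit-learn and NumPy. As in the scripts, MAPE is returned as a fraction rather than a percentage.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return (MAE, MAPE, MSE, RMSE); MAPE is a fraction, not a percentage."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    errors = [t - p for t, p in zip(y_true, y_pred)]
    n = len(errors)
    mae = sum(abs(e) for e in errors) / n
    # MAPE divides by the actual value, so it is undefined when an actual is 0
    mape = sum(abs(e / t) for e, t in zip(errors, y_true)) / n
    mse = sum(e * e for e in errors) / n
    return mae, mape, mse, math.sqrt(mse)


print(regression_metrics([2.0, 4.0], [1.0, 5.0]))
```

The length check matters in practice: comparing predictions against a slice of the actual values that is shifted or of a different length silently produces misleading metrics.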

(3) Multi-step rolling forecast - vol. 1
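In this variant a single model outputs m values at once. One way to read the approach, assumed here, is that each window of n lagged values is paired with the m values that follow it, and that forecasts issued from consecutive origins overlap and are averaged per target month. The plain-Python sketch below illustrates both steps; `make_multi_step_pairs` and `average_overlapping` are hypothetical helper names and the forecast numbers are invented.

```python
def make_multi_step_pairs(series, n_lags, m):
    """Pair each n_lags-long window with the m values that follow it."""
    X, Y = [], []
    for t in range(n_lags, len(series) - m + 1):
        X.append(series[t - n_lags:t])
        Y.append(series[t:t + m])
    return X, Y


def average_overlapping(blocks, m):
    """Merge m-step forecasts issued from consecutive origins.

    blocks[r] holds the forecasts for positions r .. r+m-1; every position
    is averaged over all the blocks that cover it.
    """
    length = len(blocks) + m - 1
    total = [0.0] * length
    count = [0] * length
    for r, block in enumerate(blocks):
        for k, value in enumerate(block):
            total[r + k] += value
            count[r + k] += 1
    return [s / c for s, c in zip(total, count)]


series = [1.0, 2.0, 3.0, 4.0, 5.0]
X, Y = make_multi_step_pairs(series, n_lags=2, m=2)
print(X[0], Y[0])

# Hypothetical 2-step forecasts from three consecutive origins
blocks = [[10.0, 20.0], [22.0, 30.0], [28.0, 40.0]]
print(average_overlapping(blocks, m=2))
```

Keeping track of which position each forecast targets, as `average_overlapping` does, avoids comparing a forecast with the wrong month when the metrics are computed.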

```python
import numpy as np
import pandas as pd
from sklearn.metrics import mean_absolute_error, mean_squared_error
from tensorflow.keras import optimizers
from tensorflow.keras.layers import Input, LSTM, Dense, Dropout
from tensorflow.keras.models import Model

# Read the data
data = pd.read_csv('data.csv')
data['time'] = pd.to_datetime(data['time'], format='%b-%y')

n = 6  # number of lagged values used as input
m = 2  # number of months forecast in one shot

# Create lag features (lag_1 is the oldest value, lag_n the previous month)
for i in range(n, 0, -1):
    data[f'lag_{i}'] = data['incidence'].shift(n - i + 1)

data = data.dropna().reset_index(drop=True)
lag_cols = [f'lag_{j}' for j in range(1, n + 1)]

train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]
validation_data = data[(data['time'] >= '2012-01-01') & (data['time'] <= '2012-12-31')]


def make_inputs(df):
    """Lag features of every row, shaped [samples, timesteps, features]."""
    return df[lag_cols].to_numpy(dtype=np.float32).reshape(len(df), n, 1)


# Prepare the training data: the lags of row r are paired with the
# incidence of rows r .. r+m-1
incidence_train = train_data['incidence'].to_numpy(dtype=np.float32)
n_origins = len(train_data) - m + 1
X_train = make_inputs(train_data)[:n_origins]
y_train = np.stack([incidence_train[r:r + m] for r in range(n_origins)])

# Build the LSTM model with one output per forecast step
inputs = Input(shape=(n, 1))
x = LSTM(64, return_sequences=True)(inputs)
x = LSTM(32)(x)
x = Dense(50, activation='relu')(x)
x = Dropout(0.1)(x)
outputs = Dense(m)(x)

model = Model(inputs=inputs, outputs=outputs)
model.compile(optimizer=optimizers.Adam(learning_rate=0.001), loss='mse')

# Train the model
model.fit(X_train, y_train, epochs=200, batch_size=32, verbose=0)


def lstm_rolling_forecast(df, model, m):
    """Forecast m months from every origin row and average the overlaps."""
    blocks = model.predict(make_inputs(df)[:len(df) - m + 1], verbose=0)
    total = np.zeros(len(df))
    count = np.zeros(len(df))
    for r, block in enumerate(blocks):
        total[r:r + m] += block  # block[k] is the forecast for row r + k
        count[r:r + m] += 1
    return total / count


# Predict for train_data and validation_data
y_train_true = train_data['incidence'].to_numpy()
y_validation_true = validation_data['incidence'].to_numpy()
y_train_pred_lstm = lstm_rolling_forecast(train_data, model, m)
y_validation_pred_lstm = lstm_rolling_forecast(validation_data, model, m)

# Performance metrics for train_data
mae_train = mean_absolute_error(y_train_true, y_train_pred_lstm)
mape_train = np.mean(np.abs((y_train_true - y_train_pred_lstm) / y_train_true))
mse_train = mean_squared_error(y_train_true, y_train_pred_lstm)
rmse_train = np.sqrt(mse_train)

# Performance metrics for validation_data
mae_validation = mean_absolute_error(y_validation_true, y_validation_pred_lstm)
mape_validation = np.mean(np.abs((y_validation_true - y_validation_pred_lstm) / y_validation_true))
mse_validation = mean_squared_error(y_validation_true, y_validation_pred_lstm)
rmse_validation = np.sqrt(mse_validation)

print("Training set:", mae_train, mape_train, mse_train, rmse_train)
print("Validation set:", mae_validation, mape_validation, mse_validation, rmse_validation)
```

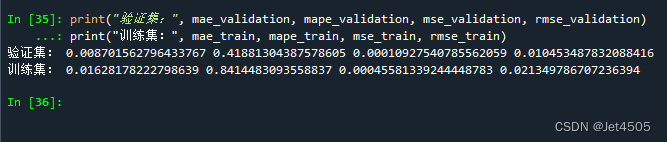

结果:

(4) Multi-step rolling forecast - vol. 2
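This variant takes every m-th row as a forecast origin, so the m-step forecasts form non-overlapping blocks that can simply be laid end to end. The sketch below shows the indexing in plain Python, assuming that reading of the approach; `block_origins` and `stitch_blocks` are hypothetical names and the forecast values are placeholders.

```python
def block_origins(n_rows, m):
    """Row indices at which an m-step forecast is issued (0, m, 2m, ...)."""
    return list(range(0, n_rows, m))


def stitch_blocks(blocks, n_rows):
    """Lay non-overlapping m-step forecasts end to end and trim to n_rows."""
    flat = [value for block in blocks for value in block]
    return flat[:n_rows]


n_rows, m = 5, 2
origins = block_origins(n_rows, m)
print(origins)

# Hypothetical forecasts: the block issued at origin r covers rows r .. r+m-1
blocks = [[float(r + k) for k in range(m)] for r in origins]
print(stitch_blocks(blocks, n_rows))
```

The stitched series lines up with the actual values row by row only if the model returns m values per origin; with a single output per origin, each prediction would have to be compared with every m-th actual value instead.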

```python
import numpy as np
import pandas as pd
from sklearn.metrics import mean_absolute_error, mean_squared_error
from tensorflow.keras import optimizers
from tensorflow.keras.layers import Dense, LSTM, Input
from tensorflow.keras.models import Model

# Load and preprocess the data
data = pd.read_csv('data.csv')
data['time'] = pd.to_datetime(data['time'], format='%b-%y')

n = 6  # number of lagged values used as input
m = 2  # number of months forecast in one shot

# Create lag features (lag_1 is the oldest value, lag_n the previous month)
for i in range(n, 0, -1):
    data[f'lag_{i}'] = data['incidence'].shift(n - i + 1)

data = data.dropna().reset_index(drop=True)
lag_cols = [f'lag_{i}' for i in range(1, n + 1)]

train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]
validation_data = data[(data['time'] >= '2012-01-01') & (data['time'] <= '2012-12-31')]

# Use every m-th row (1st, 3rd, ... for m = 2) as a forecast origin,
# so that consecutive m-step forecasts do not overlap
X_train = train_data[lag_cols].iloc[::m].to_numpy(dtype=np.float32)

# Targets: the incidence of the origin row and of the m-1 rows after it
y_train_list = [train_data['incidence'].shift(-i) for i in range(m)]
y_train = pd.concat(y_train_list, axis=1)
y_train.columns = [f'target_{i+1}' for i in range(m)]
y_train = y_train.iloc[::m].to_numpy(dtype=np.float32)

# Keep only the origins whose m targets all lie inside the training period
complete = ~np.isnan(y_train).any(axis=1)
X_train = X_train[complete].reshape(-1, n, 1)
y_train = y_train[complete]

X_validation = validation_data[lag_cols].iloc[::m].to_numpy(dtype=np.float32)
X_validation = X_validation.reshape(X_validation.shape[0], X_validation.shape[1], 1)

y_validation = validation_data['incidence'].values

# Build the LSTM model with one output per forecast step
inputs = Input(shape=(n, 1))
x = LSTM(50, activation='relu')(inputs)
x = Dense(50, activation='relu')(x)
outputs = Dense(m)(x)

model = Model(inputs=inputs, outputs=outputs)
optimizer = optimizers.Adam(learning_rate=0.001)
model.compile(optimizer=optimizer, loss='mse')

# Train the model
model.fit(X_train, y_train, epochs=200, batch_size=32, verbose=0)

# Predict on the validation set; flattening lays the m-step blocks end to end
y_validation_pred = model.predict(X_validation, verbose=0).flatten()[:len(y_validation)]

# Compute metrics for the validation set
mae_validation = mean_absolute_error(y_validation, y_validation_pred)
mape_validation = np.mean(np.abs((y_validation - y_validation_pred) / y_validation))
mse_validation = mean_squared_error(y_validation, y_validation_pred)
rmse_validation = np.sqrt(mse_validation)

# Predict on the training set and flatten the targets the same way
y_train_pred = model.predict(X_train, verbose=0).flatten()
y_train_true = y_train.flatten()

# Compute metrics for the training set
mae_train = mean_absolute_error(y_train_true, y_train_pred)
mape_train = np.mean(np.abs((y_train_true - y_train_pred) / y_train_true))
mse_train = mean_squared_error(y_train_true, y_train_pred)
rmse_train = np.sqrt(mse_train)

print("Validation set:", mae_validation, mape_validation, mse_validation, rmse_validation)
print("Training set:", mae_train, mape_train, mse_train, rmse_train)
```

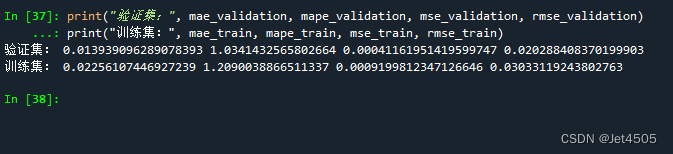

结果:

(5) Multi-step rolling forecast - vol. 3
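This variant trains one model per forecast step: model 0 predicts the value right after the lag window, model 1 the value after that, and so on. The plain-Python sketch below shows how the targets for each step can be built and how the per-step forecasts can be interleaved into one series. It assumes this reading of the approach; `horizon_targets` and `interleave` are hypothetical names and the forecast values are placeholders.

```python
def horizon_targets(series, n_lags, horizon):
    """Targets for the model that forecasts `horizon` steps past each window.

    horizon=0 is the value right after the window, horizon=1 the one after
    that, and so on.
    """
    return [series[t + horizon] for t in range(n_lags, len(series) - horizon)]


def interleave(preds_by_horizon, n_rows):
    """Combine per-horizon forecasts into one series.

    preds_by_horizon[i][j] is model i's forecast issued at row j, which
    targets row j + i. Origins are taken every m rows so each row is
    forecast exactly once.
    """
    m = len(preds_by_horizon)
    combined = []
    for j in range(0, n_rows, m):
        for i in range(m):
            if j + i < n_rows:
                combined.append(preds_by_horizon[i][j])
    return combined


series = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
print(horizon_targets(series, n_lags=2, horizon=0))
print(horizon_targets(series, n_lags=2, horizon=1))

# Hypothetical forecasts whose value equals the index of the row they target
preds = [[float(j + i) for j in range(4)] for i in range(2)]
print(interleave(preds, n_rows=4))
```

Stepping through the origins in strides of m is what keeps the combined series aligned with the actual values; visiting every origin would repeat some target rows and shift the rest.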

```python
import numpy as np
import pandas as pd
from sklearn.metrics import mean_absolute_error, mean_squared_error
from tensorflow.keras import optimizers
from tensorflow.keras.layers import Dense, LSTM, Input
from tensorflow.keras.models import Model

# Read and preprocess the data
data = pd.read_csv('data.csv')
data['time'] = pd.to_datetime(data['time'], format='%b-%y')
data_y = data.copy()  # untouched copy of the full series, used for the targets

n = 6  # number of lagged values used as input

# Create lag features (lag_1 is the oldest value, lag_n the previous month)
for i in range(n, 0, -1):
    data[f'lag_{i}'] = data['incidence'].shift(n - i + 1)

data = data.dropna().reset_index(drop=True)
lag_cols = [f'lag_{i}' for i in range(1, n + 1)]
train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]
X_train = train_data[lag_cols]
m = 3  # number of forecast steps, one model per step

X_train_list = []
y_train_list = []

for i in range(m):
    # Model i forecasts i steps beyond the row its lags belong to
    X_temp = X_train
    y_temp = data_y['incidence'].iloc[n + i:len(data_y) - m + 1 + i]

    X_train_list.append(X_temp)
    y_train_list.append(y_temp)

for i in range(m):
    # Drop the last m-1 origins so every target stays inside the training period
    X_train_list[i] = X_train_list[i].iloc[:-(m-1)].values
    X_train_list[i] = X_train_list[i].reshape(X_train_list[i].shape[0], X_train_list[i].shape[1], 1)
    y_train_list[i] = y_train_list[i].iloc[:len(X_train_list[i])].values

# Train one model per forecast step
models = []
for i in range(m):
    # Build the LSTM model
    inputs = Input(shape=(n, 1))
    x = LSTM(50, activation='relu')(inputs)
    x = Dense(50, activation='relu')(x)
    outputs = Dense(1)(x)

    model = Model(inputs=inputs, outputs=outputs)
    optimizer = optimizers.Adam(learning_rate=0.001)
    model.compile(optimizer=optimizer, loss='mse')
    model.fit(X_train_list[i], y_train_list[i], epochs=200, batch_size=32, verbose=0)
    models.append(model)

validation_start_time = train_data['time'].iloc[-1] + pd.DateOffset(months=1)
validation_data = data[data['time'] >= validation_start_time]
X_validation = validation_data[lag_cols].values
X_validation = X_validation.reshape(X_validation.shape[0], X_validation.shape[1], 1)

y_validation_pred_list = [model.predict(X_validation, verbose=0) for model in models]
y_train_pred_list = [model.predict(X_train_list[i], verbose=0) for i, model in enumerate(models)]


def concatenate_predictions(pred_list, m):
    """Interleave the per-step forecasts into one chronological series.

    pred_list[i][j] is model i's forecast made from row j, which targets
    row j + i, so origins are taken every m rows.
    """
    concatenated = []
    for j in range(0, len(pred_list[0]), m):
        for i in range(m):
            concatenated.append(pred_list[i][j])
    return concatenated


y_validation_pred = np.array(concatenate_predictions(y_validation_pred_list, m))[:len(validation_data['incidence'])]
y_train_pred = np.array(concatenate_predictions(y_train_pred_list, m))[:len(train_data['incidence']) - m + 1]
y_validation_pred = y_validation_pred.flatten()
y_train_pred = y_train_pred.flatten()

mae_validation = mean_absolute_error(validation_data['incidence'], y_validation_pred)
mape_validation = np.mean(np.abs((validation_data['incidence'] - y_validation_pred) / validation_data['incidence']))
mse_validation = mean_squared_error(validation_data['incidence'], y_validation_pred)
rmse_validation = np.sqrt(mse_validation)

mae_train = mean_absolute_error(train_data['incidence'][:-(m-1)], y_train_pred)
mape_train = np.mean(np.abs((train_data['incidence'][:-(m-1)] - y_train_pred) / train_data['incidence'][:-(m-1)]))
mse_train = mean_squared_error(train_data['incidence'][:-(m-1)], y_train_pred)
rmse_train = np.sqrt(mse_train)

print("Validation set:", mae_validation, mape_validation, mse_validation, rmse_validation)
print("Training set:", mae_train, mape_train, mse_train, rmse_train)
```

Results:

III. Data

Link: https://pan.baidu.com/s/1EFaWfHoG14h15KCEhn1STg?pwd=q41n

Extraction code: q41n