- 1“人工智能”成热门话题,声纹黑科技引领未来发展潮流_声纹识别对人工智能产业链的影响

- 2淘宝 npm 源将在 2022 年 5 月 31 日更换域名服务_如何查看淘宝镜像截止时间

- 3vscode连接远程Linux服务器_vscode远程连接linux

- 4推荐一款go语言的开源物联网框架-opengw

- 5旅游景区预约系统的设计与实现2023_《旅游景点预约系统的设计与开发》交互式设计本章小结】

- 6小程序实现下拉刷新_小程序下拉刷新

- 7cmd常用命令讲解_cmd的ping命令大全

- 8鸿蒙第二次_鸿蒙应用 '@system.router' 页面渲染不出来

- 9Unix环境高级编程-进程基本知识_unix pid pgid sid

- 10EasyExcel导出(单Sheet导出、多Sheet导出、多文件导出)_easyexcel导出文件

时间序列预测05:CNN时间序列预测模型详解 01 Univariate CNN、Multivariate CNN_cnn二维卷积 时间序列

赞

踩

【时间序列预测/分类】 全系列60篇由浅入深的博文汇总:传送门

卷积神经网络模型(CNN)可以应用于时间序列预测。有许多类型的CNN模型可用于每种特定类型的时间序列预测问题。在本介绍了在以TF2.1为后端的Keras中如何开发用于时间序列预测的不同的CNN模型。这些模型是在比较小的人为构造的时间序列问题上演示的,模型配置也是任意的,并没有进行调参优化,这些内容会在以后的文章中介绍。

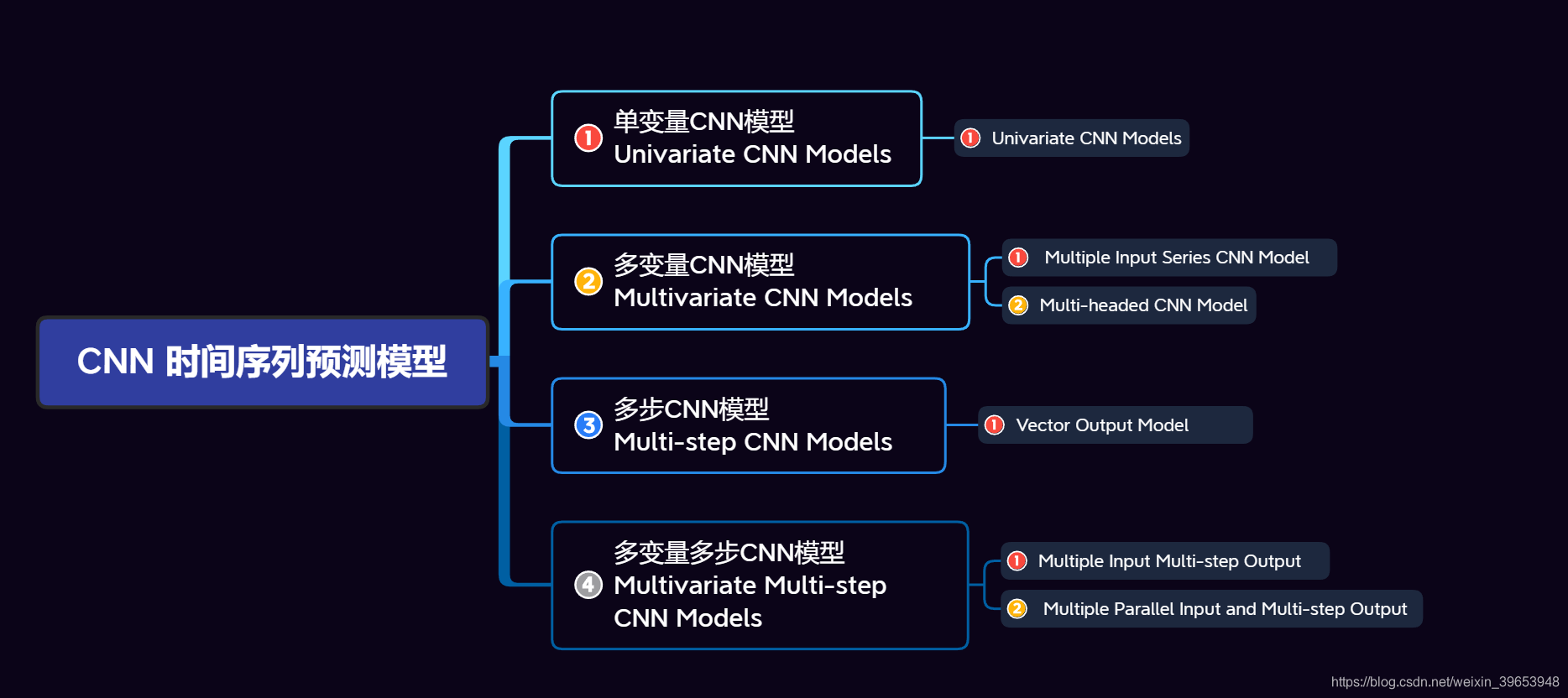

先看一下思维导图,本文讲解了开发CNN时间序列预测模型中的前两个知识点:单变量CNN模型和多变量CNN模型(思维导图中的1、2):

文章目录

1. 单变量CNN模型(Univariate CNN Models)

尽管传统上是针对二维图像数据开发的,但CNNs可以用来对单变量时间序列预测问题进行建模。单变量时间序列是由具有时间顺序的单个观测序列组成的数据集,需要一个模型从过去的观测序列中学习以预测序列中的下一个值。本节分为两部分:

- Data Preparation

- CNN Model

1.1 数据准备

假设有如下序列数据:

[10, 20, 30, 40, 50, 60, 70, 80, 90]

- 1

我们可以将序列分成多个输入/输出模式,称为样本(samples),其中三个时间步(time steps)作为输入,一个时间步作为输出,并据此来预测一个时间步的输出值y。

X, y

10, 20, 30, 40

20, 30, 40, 50

30, 40, 50, 60

...

- 1

- 2

- 3

- 4

- 5

我们可以通过一个 split_sequence() 函数来实现上述操作,该函数可以将给定的单变量序列拆分为多个样本,其中每个样本具有指定数量的时间步,并且输出是单个时间步。因为这些函数在之前的文章已经介绍过,为了增加文章的可读性,此处就不逐一讲解了,会在每章结束之后,给出完整代码,如果有不懂的地方,可以查看之前的一篇文章。经过此函数处理之后,单变量序列变为:

[10 20 30] 40

[20 30 40] 50

[30 40 50] 60

[40 50 60] 70

[50 60 70] 80

[60 70 80] 90

- 1

- 2

- 3

- 4

- 5

- 6

其中,每一行作为一个样本,其中三个时间步长值是样本数据,每一行中的最后一个单值是输出(y),因为是单变量,所以特征数(features)为1。

1.2 CNN 模型

1D CNN是一个CNN模型,它有一个卷积隐藏层,在一维序列上工作。在某些情况下,这之后可能是第二卷积层,例如非常长的输入序列,然后是池化层,其任务是将卷积层的输出提取到最显著的元素。卷积层和池化层之后是全连接层,用于解释模型卷积部分提取的特征。在卷积层和全连接层之间使用展平层(Flatten)将特征映射简化为一个一维向量。代码实现:

model = Sequential()

model.add(Conv1D(filters=64, kernel_size=2, activation='relu', input_shape=(n_steps,

n_features)))

model.add(MaxPooling1D(pool_size=2))

model.add(Flatten())

model.add(Dense(50, activation='relu'))

model.add(Dense(1))

model.compile(optimizer='adam', loss='mse')

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

模型的关键是输入的形状 input_shape 参数;这是模型在时间步数和特征数方面期望作为每个样本的输入。我们使用的是一个单变量序列,因此特征数为1。时间步数是在划分数据集时 split_sequence() 函数的参数中定义的。

每个样本的输入形状在第一个隐藏层定义的输入形状参数中指定。因为有多个样本,因此,模型期望训练数据的输入维度或形状为:[样本,时间步,特征]([samples, timesteps, features]) 。 split_sequence() 函数输出的训练数据 X 的形状为 [samples,timesteps],因此应该对 X 重塑形状,增加一个特征维度,以满足CNN模型的输入要求。代码实现:

n_features = 1

X = X.reshape((X.shape[0], X.shape[1], n_features))

- 1

- 2

CNN实际上并不认为数据具有时间步,而是将其视为可以执行卷积读取操作的序列,如一维图像。上例中,我们定义了一个卷积层,它有64个filter,kernel大小为2。接下来是一个最大池化层和一个全连接层(Dense)来解释输入特性。最后,输出层预测单个数值。该模型利用有效的随机梯度下降Adam进行拟合,利用均方误差(mse)损失函数进行优化。处理好训练数据和定义完模型之后,接下来开始训练,代码实现:

model.fit(X, y, epochs=1000, verbose=0)

- 1

在模型拟合后,可以利用它进行预测。假设输入[70,80,90]来预测序列中的下一个值,并期望模型能预测类似于[100]的数据。该CNN模型期望输入形状是三维的,形状为 [样本、时间步长、特征] ,因此,在进行预测之前,必须重塑单个输入样本为三维形状。代码实现:

x_input = array([70, 80, 90])

x_input = x_input.reshape((1, n_steps, n_features))

yhat = model.predict(x_input, verbose=0)

- 1

- 2

- 3

完整代码:

import numpy as np

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, Flatten, Conv1D, MaxPooling1D

# 该该函数将序列数据分割成样本

def split_sequence(sequence, sw_width, n_features):

'''

这个简单的示例,通过for循环实现有重叠截取数据,滑动步长为1,滑动窗口宽度为sw_width。

以后的文章,会介绍使用yield方法来实现特定滑动步长的滑动窗口的实例。

'''

X, y = [], []

for i in range(len(sequence)):

# 获取单个样本中最后一个元素的索引,因为python切片前闭后开,索引从0开始,所以不需要-1

end_element_index = i + sw_width

# 如果样本最后一个元素的索引超过了序列索引的最大长度,说明不满足样本元素个数,则这个样本丢弃

if end_element_index > len(sequence) - 1:

break

# 通过切片实现步长为1的滑动窗口截取数据组成样本的效果

seq_x, seq_y = sequence[i:end_element_index], sequence[end_element_index]

X.append(seq_x)

y.append(seq_y)

process_X, process_y = np.array(X), np.array(y)

process_X = process_X.reshape((process_X.shape[0], process_X.shape[1], n_features))

print('split_sequence:\nX:\n{}\ny:\n{}\n'.format(np.array(X), np.array(y)))

print('X_shape:{},y_shape:{}\n'.format(np.array(X).shape, np.array(y).shape))

print('train_X:\n{}\ntrain_y:\n{}\n'.format(process_X, process_y))

print('train_X.shape:{},trian_y.shape:{}\n'.format(process_X.shape, process_y.shape))

return process_X, process_y

def oned_cnn_model(sw_width, n_features, X, y, test_X, epoch_num, verbose_set):

model = Sequential()

# 对于一维卷积来说,data_format='channels_last'是默认配置,该API的规则如下:

# 输入形状为:(batch, steps, channels);输出形状为:(batch, new_steps, filters),padding和strides的变化会导致new_steps变化

# 如果设置为data_format = 'channels_first',则要求输入形状为: (batch, channels, steps).

model.add(Conv1D(filters=64, kernel_size=2, activation='relu',

strides=1, padding='valid', data_format='channels_last',

input_shape=(sw_width, n_features)))

# 对于一维池化层来说,data_format='channels_last'是默认配置,该API的规则如下:

# 3D 张量的输入形状为: (batch_size, steps, features);输出3D张量的形状为:(batch_size, downsampled_steps, features)

# 如果设置为data_format = 'channels_first',则要求输入形状为:(batch_size, features, steps)

model.add(MaxPooling1D(pool_size=2, strides=None, padding='valid',

data_format='channels_last'))

# data_format参数的作用是在将模型从一种数据格式切换到另一种数据格式时保留权重顺序。默认为channels_last。

# 如果设置为channels_last,那么数据输入形状应为:(batch,…,channels);如果设置为channels_first,那么数据输入形状应该为(batch,channels,…)

# 输出为(batch, 之后参数尺寸的乘积)

model.add(Flatten())

# Dense执行以下操作:output=activation(dot(input,kernel)+bias),

# 其中,activation是激活函数,kernel是由层创建的权重矩阵,bias是由层创建的偏移向量(仅当use_bias为True时适用)。

# 2D 输入:(batch_size, input_dim);对应 2D 输出:(batch_size, units)

model.add(Dense(units=50, activation='relu',

use_bias=True, kernel_initializer='glorot_uniform', bias_initializer='zeros',))

# 因为要预测下一个时间步的值,因此units设置为1

model.add(Dense(units=1))

# 配置模型

model.compile(optimizer='adam', loss='mse',

metrics=['accuracy'], loss_weights=None, sample_weight_mode=None, weighted_metrics=None, target_tensors=None)

print('\n',model.summary())

# X为输入数据,y为数据标签;batch_size:每次梯度更新的样本数,默认为32。

# verbose: 0,1,2. 0=训练过程无输出,1=显示训练过程进度条,2=每训练一个epoch打印一次信息

history = model.fit(X, y, batch_size=32, epochs=epoch_num, verbose=verbose_set)

yhat = model.predict(test_X, verbose=0)

print('\nyhat:', yhat)

return model, history

if __name__ == '__main__':

train_seq = [10, 20, 30, 40, 50, 60, 70, 80, 90]

sw_width = 3

n_features = 1

epoch_num = 1000

verbose_set = 0

train_X, train_y = split_sequence(train_seq, sw_width, n_features)

# 预测

x_input = np.array([70, 80, 90])

x_input = x_input.reshape((1, sw_width, n_features))

model, history = oned_cnn_model(sw_width, n_features, train_X, train_y, x_input, epoch_num, verbose_set)

print('\ntrain_acc:%s'%np.mean(history.history['accuracy']), '\ntrain_loss:%s'%np.mean(history.history['loss']))

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

输出:

split_sequence:

X:

[[10 20 30]

[20 30 40]

[30 40 50]

[40 50 60]

[50 60 70]

[60 70 80]]

y:

[40 50 60 70 80 90]

X_shape:(6, 3),y_shape:(6,)

train_X:

[[[10]

[20]

[30]]

[[20]

[30]

[40]]

[[30]

[40]

[50]]

[[40]

[50]

[60]]

[[50]

[60]

[70]]

[[60]

[70]

[80]]]

train_y:

[40 50 60 70 80 90]

train_X.shape:(6, 3, 1),trian_y.shape:(6,)

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

conv1d (Conv1D) (None, 2, 64) 192

_________________________________________________________________

max_pooling1d (MaxPooling1D) (None, 1, 64) 0

_________________________________________________________________

flatten (Flatten) (None, 64) 0

_________________________________________________________________

dense (Dense) (None, 50) 3250

_________________________________________________________________

dense_1 (Dense) (None, 1) 51

=================================================================

Total params: 3,493

Trainable params: 3,493

Non-trainable params: 0

_________________________________________________________________

None

yhat: [[101.824524]]

train_acc:0.0

train_loss:83.09048912930488

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

2. 多变量 CNN 模型 (Multivariate CNN Models)

多变量(多元)时间序列数据是指每一时间步有多个观测值的数据。对于多变量时间序列数据,有两种主要模型:

- 多输入序列;

- 多并行序列

2.1 多输入序列

一个问题可能有两个或多个并行输入时间序列和一个依赖于输入时间序列的输出时间序列。输入时间序列是并行的,因为每个序列在同一时间步上都有观测值。我们可以通过两个并行输入时间序列的简单示例来演示这一点,其中输出序列是输入序列的简单相加。代码实现:

in_seq1 = array([10, 20, 30, 40, 50, 60, 70, 80, 90])

in_seq2 = array([15, 25, 35, 45, 55, 65, 75, 85, 95])

out_seq = array([in_seq1[i]+in_seq2[i] for i in range(len(in_seq1))])

- 1

- 2

- 3

我们可以将这三个数据数组重塑为单个数据集,其中每一行是一个时间步,每一列是一个单独的时间序列。这是在CSV文件中存储并行时间序列的标准方法。代码实现:

in_seq1 = in_seq1.reshape((len(in_seq1), 1))

in_seq2 = in_seq2.reshape((len(in_seq2), 1))

out_seq = out_seq.reshape((len(out_seq), 1))

# 对于二维数组,hstack方法沿第二维堆叠,即沿着列堆叠,列数增加。

dataset = hstack((in_seq1, in_seq2, out_seq)

- 1

- 2

- 3

- 4

- 5

最后结果:

[[ 10 15 25]

[ 20 25 45]

[ 30 35 65]

[ 40 45 85]

[ 50 55 105]

[ 60 65 125]

[ 70 75 145]

[ 80 85 165]

[ 90 95 185]]

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

与单变量时间序列一样,我们必须将这些数据构造成具有输入和输出样本的样本。一维CNN模型需要足够的上下文信息来学习从输入序列到输出值的映射。CNNs可以支持并行输入时间序列作为独立的通道,可以类比图像的红色、绿色和蓝色分量。因此,我们需要将数据分成样本,保持两个输入序列的观测顺序。如果我们选择三个输入时间步骤,那么第一个示例将如下所示:

输入:

10, 15

20, 25

30, 35

- 1

- 2

- 3

输出:

65

- 1

这里可能会看不明白,其实之前的文章已经介绍过了,这里再说一遍。看上边的9×3数组,也就是说,将每个并行序列(列)的前三个时间步(行)的值(3×2,三行两列)作为输入样本提供给模型,并且把第三个时间步(列)的值(本例中为65),作为样本标签提供给模型。还要注意,在将时间序列转换为输入/输出样本以训练模型时,不得不放弃输出时间序列中的一些值(前两行的第三列25和45没有使用),因为在先前的时间步,输入时间序列中没有值,所以没法预测。这个操作可以通过类似上节的oned_split_sequence函数来实现,为了增加文章的可读性,这里只放出结果,相关代码在完整代码部分中。划分结果:

[[10 15]

[20 25]

[30 35]] 65

[[20 25]

[30 35]

[40 45]] 85

[[30 35]

[40 45]

[50 55]] 105

[[40 45]

[50 55]

[60 65]] 125

[[50 55]

[60 65]

[70 75]] 145

[[60 65]

[70 75]

[80 85]] 165

[[70 75]

[80 85]

[90 95]] 185

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

看到这里,其实就很好理解了。每个样本中,三行相当于滑动窗口的宽度为3,上述划分是以滑动步长为1来取值的,每个样本之间的值是有重叠的,两列相当于有两个特征,然后用这些数据来预测下一步的输出,这个输出是单值的。这种方式可以用于时间序列分类任务,之后的文章中会介绍,比如人类行为识别。

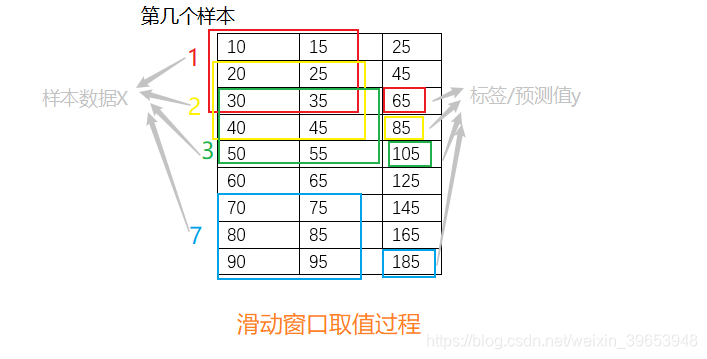

之前也在滑动窗口取值卡了一段时间,经过这段时间的学习,想明白了,画个图吧,方便填坑。这个图一看就明白了,还是拿上边的数据,来分析,如下图:

如果要做时间序列分类任务,只需要把最后一列数据换为标签就行了,每个采样点一个标签,然后取滑动窗口宽度结束索引的行的标签为样本标签;如果想要增加特征,增加列数就可以了。比如做降雨量预测,每一列数据就代表一个特征的采样数据,例如温度、湿度、风速等等,每一行就表示在不同时间戳上这些特征的采样值。

2.1.1 CNN Model

完整代码:

import numpy as np

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, Flatten, Conv1D, MaxPooling1D

def split_sequences(first_seq, secend_seq, sw_width):

'''

该函数将序列数据分割成样本

'''

input_seq1 = np.array(first_seq).reshape(len(first_seq), 1)

input_seq2 = np.array(secend_seq).reshape(len(secend_seq), 1)

out_seq = np.array([first_seq[i]+secend_seq[i] for i in range(len(first_seq))])

out_seq = out_seq.reshape(len(out_seq), 1)

dataset = np.hstack((input_seq1, input_seq2, out_seq))

print('dataset:\n',dataset)

X, y = [], []

for i in range(len(dataset)):

# 切片索引从0开始,区间为前闭后开,所以不用减去1

end_element_index = i + sw_width

# 同样的道理,这里考虑最后一个样本正好取到最后一行的数据,那么索引不能减1,如果减去1的话最后一个样本就取不到了。

if end_element_index > len(dataset):

break

# 该语句实现步长为1的滑动窗口截取数据功能;

# 以下切片中,:-1 表示取除最后一列的其他列数据;-1表示取最后一列的数据

seq_x, seq_y = dataset[i:end_element_index, :-1], dataset[end_element_index-1, -1]

X.append(seq_x)

y.append(seq_y)

process_X, process_y = np.array(X), np.array(y)

n_features = process_X.shape[2]

print('train_X:\n{}\ntrain_y:\n{}\n'.format(process_X, process_y))

print('train_X.shape:{},trian_y.shape:{}\n'.format(process_X.shape, process_y.shape))

print('n_features:',n_features)

return process_X, process_y, n_features

def oned_cnn_model(sw_width, n_features, X, y, test_X, epoch_num, verbose_set):

model = Sequential()

model.add(Conv1D(filters=64, kernel_size=2, activation='relu',

strides=1, padding='valid', data_format='channels_last',

input_shape=(sw_width, n_features)))

model.add(MaxPooling1D(pool_size=2, strides=None, padding='valid',

data_format='channels_last'))

model.add(Flatten())

model.add(Dense(units=50, activation='relu',

use_bias=True, kernel_initializer='glorot_uniform', bias_initializer='zeros',))

model.add(Dense(units=1))

model.compile(optimizer='adam', loss='mse',

metrics=['accuracy'], loss_weights=None, sample_weight_mode=None, weighted_metrics=None, target_tensors=None)

print('\n',model.summary())

history = model.fit(X, y, batch_size=32, epochs=epoch_num, verbose=verbose_set)

yhat = model.predict(test_X, verbose=0)

print('\nyhat:', yhat)

return model, history

if __name__ == '__main__':

train_seq1 = [10, 20, 30, 40, 50, 60, 70, 80, 90]

train_seq2 = [15, 25, 35, 45, 55, 65, 75, 85, 95]

sw_width = 3

epoch_num = 1000

verbose_set = 0

train_X, train_y, n_features = split_sequences(train_seq1, train_seq2, sw_width)

# 预测

x_input = np.array([[80, 85], [90, 95], [100, 105]])

x_input = x_input.reshape((1, sw_width, n_features))

model, history = oned_cnn_model(sw_width, n_features, train_X, train_y, x_input, epoch_num, verbose_set)

print('\ntrain_acc:%s'%np.mean(history.history['accuracy']), '\ntrain_loss:%s'%np.mean(history.history['loss']))

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

输出:

dataset:

[[ 10 15 25]

[ 20 25 45]

[ 30 35 65]

[ 40 45 85]

[ 50 55 105]

[ 60 65 125]

[ 70 75 145]

[ 80 85 165]

[ 90 95 185]]

train_X:

[[[10 15]

[20 25]

[30 35]]

[[20 25]

[30 35]

[40 45]]

[[30 35]

[40 45]

[50 55]]

[[40 45]

[50 55]

[60 65]]

[[50 55]

[60 65]

[70 75]]

[[60 65]

[70 75]

[80 85]]

[[70 75]

[80 85]

[90 95]]]

train_y:

[ 65 85 105 125 145 165 185]

train_X.shape:(7, 3, 2),trian_y.shape:(7,)

n_features: 2

Model: "sequential_2"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

conv1d_2 (Conv1D) (None, 2, 64) 320

_________________________________________________________________

max_pooling1d_2 (MaxPooling1 (None, 1, 64) 0

_________________________________________________________________

flatten_2 (Flatten) (None, 64) 0

_________________________________________________________________

dense_4 (Dense) (None, 50) 3250

_________________________________________________________________

dense_5 (Dense) (None, 1) 51

=================================================================

Total params: 3,621

Trainable params: 3,621

Non-trainable params: 0

_________________________________________________________________

None

yhat: [[205.84216]]

train_acc:0.0

train_loss:290.3450777206163

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

2.1.2 Multi-headed CNN Model

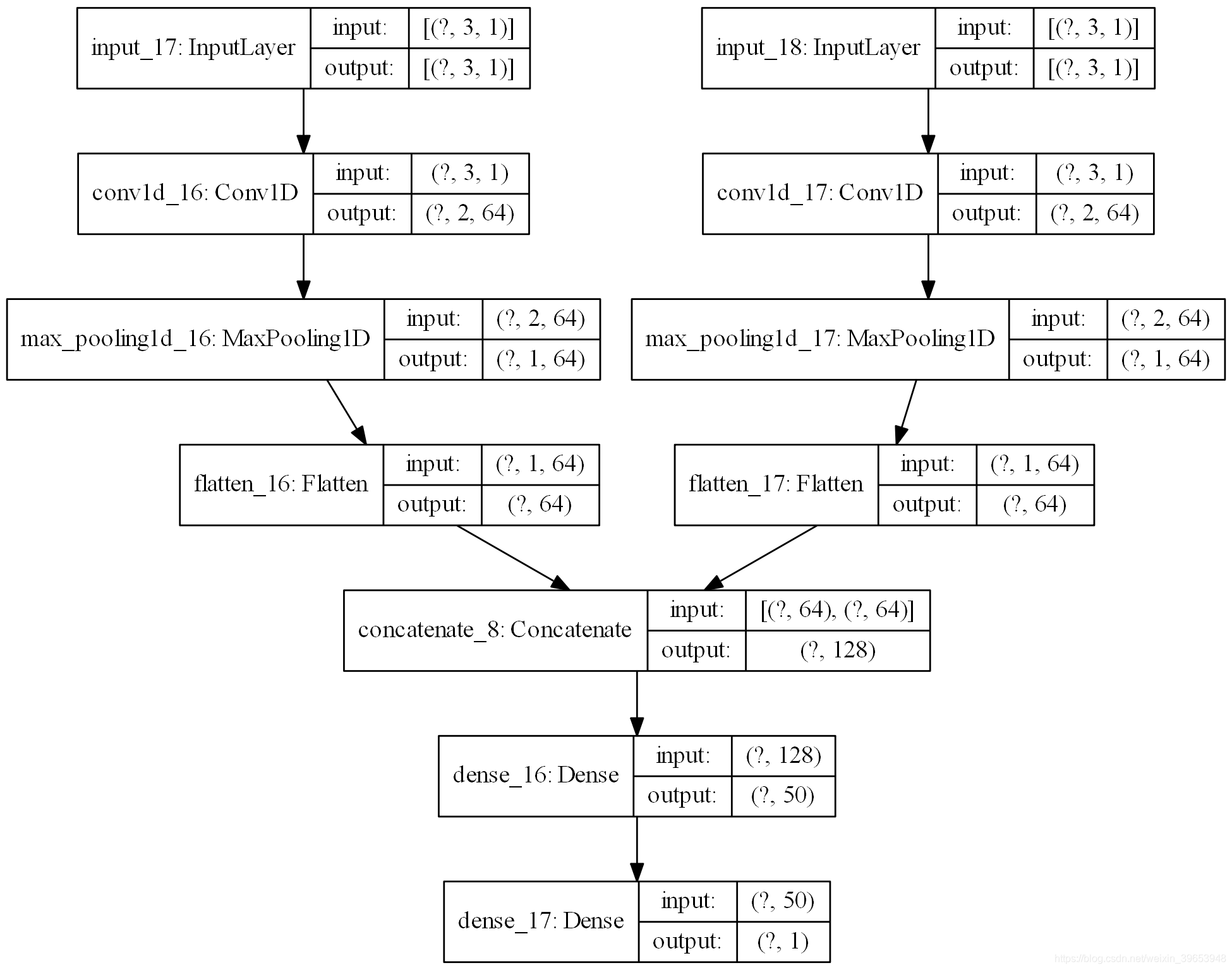

有另一种更精细的方法来解决多变量输入序列问题。每个输入序列可以由单独的CNN处理,并且在对输出序列进行预测之前,可以组合这些子模型中的每个的输出。我们可以称之为 Multi-headed CNN模型。它可能提供更多的灵活性或更好的性能,这取决于正在建模的问题的具体情况。例如,它允许每个输入序列配置不同的子模型,例如过滤器映射的数量和内核大小。这种类模型可以用Keras API定义。首先,可以将第一个输入模型定义为一个一维CNN,其输入层要求输入为n个步骤和1个特征。代码实现:

visible1 = Input(shape=(n_steps, n_features))

cnn1 = Conv1D(filters=64, kernel_size=2, activation='relu')(visible1)

cnn1 = MaxPooling1D(pool_size=2)(cnn1)

cnn1 = Flatten()(cnn1)

visible2 = Input(shape=(n_steps, n_features))

cnn2 = Conv1D(filters=64, kernel_size=2, activation='relu')(visible2)

cnn2 = MaxPooling1D(pool_size=2)(cnn2)

cnn2 = Flatten()(cnn2)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

定义好两个输入子模型后,可以将每个模型的输出合并为一个长向量,在对输出序列进行预测之前可以对其进行解释。最后,将输入和输出绑定在一起。代码实现:

merge = concatenate([cnn1, cnn2])

dense = Dense(50, activation='relu')(merge)

output = Dense(1)(dense)

model = Model(inputs=[visible1, visible2], outputs=output)

- 1

- 2

- 3

- 4

- 5

此模型要求将输入作为两个元素的列表提供,其中列表中的每个元素都包含其中一个子模型的数据。为了实现这一点,我们可以将3D输入数据分割成两个独立的输入数据数组,即从一个形状为 [7,3,2] 的数组分割成两个形状为 [7,3,1] 的3D数组。代码实现:

n_features = 1

# separate input data

X1 = X[:, :, 0].reshape(X.shape[0], X.shape[1], n_features)

X2 = X[:, :, 1].reshape(X.shape[0], X.shape[1], n_features)

- 1

- 2

- 3

- 4

开始训练:

model.fit([X1, X2], y, epochs=1000, verbose=0)

- 1

完整代码:

import numpy as np

from tensorflow.keras.models import Sequential, Model

from tensorflow.keras.layers import Dense, Flatten, Conv1D, MaxPooling1D, Input, concatenate

from tensorflow.keras.utils import plot_model

def split_sequences2(first_seq, secend_seq, sw_width, n_features):

'''

该函数将序列数据分割成样本

'''

input_seq1 = np.array(first_seq).reshape(len(first_seq), 1)

input_seq2 = np.array(secend_seq).reshape(len(secend_seq), 1)

out_seq = np.array([first_seq[i]+secend_seq[i] for i in range(len(first_seq))])

out_seq = out_seq.reshape(len(out_seq), 1)

dataset = np.hstack((input_seq1, input_seq2, out_seq))

print('dataset:\n',dataset)

X, y = [], []

for i in range(len(dataset)):

# 切片索引从0开始,区间为前闭后开,所以不用减去1

end_element_index = i + sw_width

# 同样的道理,这里考虑最后一个样本正好取到最后一行的数据,那么索引不能减1,如果减去1的话最后一个样本就取不到了。

if end_element_index > len(dataset):

break

# 该语句实现步长为1的滑动窗口截取数据功能;

# 以下切片中,:-1 表示取除最后一列的其他列数据;-1表示取最后一列的数据

seq_x, seq_y = dataset[i:end_element_index, :-1], dataset[end_element_index-1, -1]

X.append(seq_x)

y.append(seq_y)

process_X, process_y = np.array(X), np.array(y)

# [:,:,0]表示三维数组前两个维度的数据全取,第三个维度取第一个数据,可以想象成一摞饼干,取了一块。

# 本例中 process_X的shape为(7,3,2),所以下式就很好理解了,

X1 = process_X[:,:,0].reshape(process_X.shape[0], process_X.shape[1], n_features)

X2 = process_X[:,:,1].reshape(process_X.shape[0], process_X.shape[1], n_features)

print('train_X:\n{}\ntrain_y:\n{}\n'.format(process_X, process_y))

print('train_X.shape:{},trian_y.shape:{}\n'.format(process_X.shape, process_y.shape))

print('X1.shape:{},X2.shape:{}\n'.format(X1.shape, X2.shape))

return X1, X2, process_y

def oned_cnn_model(n_steps, n_features, X_1, X_2, y, x1, x2, epoch_num, verbose_set):

visible1 = Input(shape=(n_steps, n_features))

cnn1 = Conv1D(filters=64, kernel_size=2, activation='relu')(visible1)

cnn1 = MaxPooling1D(pool_size=2)(cnn1)

cnn1 = Flatten()(cnn1)

visible2 = Input(shape=(n_steps, n_features))

cnn2 = Conv1D(filters=64, kernel_size=2, activation='relu')(visible2)

cnn2 = MaxPooling1D(pool_size=2)(cnn2)

cnn2 = Flatten()(cnn2)

merge = concatenate([cnn1, cnn2])

dense = Dense(50, activation='relu')(merge)

output = Dense(1)(dense)

model = Model(inputs=[visible1, visible2], outputs=output)

model.compile(optimizer='adam', loss='mse',

metrics=['accuracy'], loss_weights=None, sample_weight_mode=None, weighted_metrics=None, target_tensors=None)

print('\n',model.summary())

plot_model(model, to_file='multi_head_cnn_model.png', show_shapes=True, show_layer_names=True, rankdir='TB', dpi=200)

history = model.fit([X_1, X_2], y, batch_size=32, epochs=epoch_num, verbose=verbose_set)

yhat = model.predict([x1,x2], verbose=0)

print('\nyhat:', yhat)

return model, history

if __name__ == '__main__':

train_seq1 = [10, 20, 30, 40, 50, 60, 70, 80, 90]

train_seq2 = [15, 25, 35, 45, 55, 65, 75, 85, 95]

sw_width = 3

n_features = 1

epoch_num = 1000

verbose_set = 0

train_X1, train_X2, train_y = split_sequences2(train_seq1, train_seq2, sw_width, n_features)

# 预测

x_input = np.array([[80, 85], [90, 95], [100, 105]])

x_1 = x_input[:, 0].reshape((1, sw_width, n_features))

x_2 = x_input[:, 1].reshape((1, sw_width, n_features))

model, history = oned_cnn_model(sw_width, n_features, train_X1, train_X2, train_y, x_1, x_2, epoch_num, verbose_set)

print('\ntrain_acc:%s'%np.mean(history.history['accuracy']), '\ntrain_loss:%s'%np.mean(history.history['loss']))

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

输出:

dataset:

[[ 10 15 25]

[ 20 25 45]

[ 30 35 65]

[ 40 45 85]

[ 50 55 105]

[ 60 65 125]

[ 70 75 145]

[ 80 85 165]

[ 90 95 185]]

train_X:

[[[10 15]

[20 25]

[30 35]]

[[20 25]

[30 35]

[40 45]]

[[30 35]

[40 45]

[50 55]]

[[40 45]

[50 55]

[60 65]]

[[50 55]

[60 65]

[70 75]]

[[60 65]

[70 75]

[80 85]]

[[70 75]

[80 85]

[90 95]]]

train_y:

[ 65 85 105 125 145 165 185]

train_X.shape:(7, 3, 2),trian_y.shape:(7,)

X1.shape:(7, 3, 1),X2.shape:(7, 3, 1)

Model: "model_8"

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_17 (InputLayer) [(None, 3, 1)] 0

__________________________________________________________________________________________________

input_18 (InputLayer) [(None, 3, 1)] 0

__________________________________________________________________________________________________

conv1d_16 (Conv1D) (None, 2, 64) 192 input_17[0][0]

__________________________________________________________________________________________________

conv1d_17 (Conv1D) (None, 2, 64) 192 input_18[0][0]

__________________________________________________________________________________________________

max_pooling1d_16 (MaxPooling1D) (None, 1, 64) 0 conv1d_16[0][0]

__________________________________________________________________________________________________

max_pooling1d_17 (MaxPooling1D) (None, 1, 64) 0 conv1d_17[0][0]

__________________________________________________________________________________________________

flatten_16 (Flatten) (None, 64) 0 max_pooling1d_16[0][0]

__________________________________________________________________________________________________

flatten_17 (Flatten) (None, 64) 0 max_pooling1d_17[0][0]

__________________________________________________________________________________________________

concatenate_8 (Concatenate) (None, 128) 0 flatten_16[0][0]

flatten_17[0][0]

__________________________________________________________________________________________________

dense_16 (Dense) (None, 50) 6450 concatenate_8[0][0]

__________________________________________________________________________________________________

dense_17 (Dense) (None, 1) 51 dense_16[0][0]

==================================================================================================

Total params: 6,885

Trainable params: 6,885

Non-trainable params: 0

__________________________________________________________________________________________________

None

yhat: [[205.66142]]

train_acc:0.0

train_loss:201.12432714579907

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

保存的网络结构图:

2.2 多并行序列

假设有如下序列:

[[ 10 15 25]

[ 20 25 45]

[ 30 35 65]

[ 40 45 85]

[ 50 55 105]

[ 60 65 125]

[ 70 75 145]

[ 80 85 165]

[ 90 95 185]]

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

输入:

10, 15, 25

20, 25, 45

30, 35, 65

- 1

- 2

- 3

输出:

40, 45, 85

- 1

对于整个数据集:

[[10 15 25]

[20 25 45]

[30 35 65]] [40 45 85]

[[20 25 45]

[30 35 65]

[40 45 85]] [ 50 55 105]

[[ 30 35 65]

[ 40 45 85]

[ 50 55 105]] [ 60 65 125]

[[ 40 45 85]

[ 50 55 105]

[ 60 65 125]] [ 70 75 145]

[[ 50 55 105]

[ 60 65 125]

[ 70 75 145]] [ 80 85 165]

[[ 60 65 125]

[ 70 75 145]

[ 80 85 165]] [ 90 95 185]

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

2.2.1 Vector-Output CNN Model

要在该数据集上建立一个一维CNN模型。在该模型中,通过input_shape参数为输入层指定时间步数和并行序列(特征)。代码实现:

model = Sequential()

model.add(Conv1D(filters=64, kernel_size=2, activation='relu', input_shape=(n_steps,

n_features)))

model.add(MaxPooling1D(pool_size=2))

model.add(Flatten())

model.add(Dense(50, activation='relu'))

model.add(Dense(n_features))

model.compile(optimizer='adam', loss='mse')

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

完整代码:

import numpy as np

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, Flatten, Conv1D, MaxPooling1D

def split_sequences(first_seq, secend_seq, sw_width):

'''

该函数将序列数据分割成样本

'''

input_seq1 = np.array(first_seq).reshape(len(first_seq), 1)

input_seq2 = np.array(secend_seq).reshape(len(secend_seq), 1)

out_seq = np.array([first_seq[i]+secend_seq[i] for i in range(len(first_seq))])

out_seq = out_seq.reshape(len(out_seq), 1)

dataset = np.hstack((input_seq1, input_seq2, out_seq))

print('dataset:\n',dataset)

X, y = [], []

for i in range(len(dataset)):

end_element_index = i + sw_width

if end_element_index > len(dataset) - 1:

break

# 该语句实现步长为1的滑动窗口截取数据功能;

seq_x, seq_y = dataset[i:end_element_index, :], dataset[end_element_index, :]

X.append(seq_x)

y.append(seq_y)

process_X, process_y = np.array(X), np.array(y)

n_features = process_X.shape[2]

print('train_X:\n{}\ntrain_y:\n{}\n'.format(process_X, process_y))

print('train_X.shape:{},trian_y.shape:{}\n'.format(process_X.shape, process_y.shape))

print('n_features:',n_features)

return process_X, process_y, n_features

def oned_cnn_model(sw_width, n_features, X, y, test_X, epoch_num, verbose_set):

model = Sequential()

model.add(Conv1D(filters=64, kernel_size=2, activation='relu',

strides=1, padding='valid', data_format='channels_last',

input_shape=(sw_width, n_features)))

model.add(MaxPooling1D(pool_size=2, strides=None, padding='valid',

data_format='channels_last'))

model.add(Flatten())

model.add(Dense(units=50, activation='relu',

use_bias=True, kernel_initializer='glorot_uniform', bias_initializer='zeros',))

model.add(Dense(units=n_features))

model.compile(optimizer='adam', loss='mse',

metrics=['accuracy'], loss_weights=None, sample_weight_mode=None, weighted_metrics=None, target_tensors=None)

print('\n',model.summary())

history = model.fit(X, y, batch_size=32, epochs=epoch_num, verbose=verbose_set)

yhat = model.predict(test_X, verbose=0)

print('\nyhat:', yhat)

return model, history

if __name__ == '__main__':

train_seq1 = [10, 20, 30, 40, 50, 60, 70, 80, 90]

train_seq2 = [15, 25, 35, 45, 55, 65, 75, 85, 95]

sw_width = 3

epoch_num = 3000

verbose_set = 0

train_X, train_y, n_features = split_sequences(train_seq1, train_seq2, sw_width)

# 预测

x_input = np.array([[70,75,145], [80,85,165], [90,95,185]])

x_input = x_input.reshape((1, sw_width, n_features))

model, history = oned_cnn_model(sw_width, n_features, train_X, train_y, x_input, epoch_num, verbose_set)

print('\ntrain_acc:%s'%np.mean(history.history['accuracy']), '\ntrain_loss:%s'%np.mean(history.history['loss']))

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

输出:

dataset:

[[ 10 15 25]

[ 20 25 45]

[ 30 35 65]

[ 40 45 85]

[ 50 55 105]

[ 60 65 125]

[ 70 75 145]

[ 80 85 165]

[ 90 95 185]]

train_X:

[[[ 10 15 25]

[ 20 25 45]

[ 30 35 65]]

[[ 20 25 45]

[ 30 35 65]

[ 40 45 85]]

[[ 30 35 65]

[ 40 45 85]

[ 50 55 105]]

[[ 40 45 85]

[ 50 55 105]

[ 60 65 125]]

[[ 50 55 105]

[ 60 65 125]

[ 70 75 145]]

[[ 60 65 125]

[ 70 75 145]

[ 80 85 165]]]

train_y:

[[ 40 45 85]

[ 50 55 105]

[ 60 65 125]

[ 70 75 145]

[ 80 85 165]

[ 90 95 185]]

train_X.shape:(6, 3, 3),trian_y.shape:(6, 3)

n_features: 3

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

conv1d (Conv1D) (None, 2, 64) 448

_________________________________________________________________

max_pooling1d (MaxPooling1D) (None, 1, 64) 0

_________________________________________________________________

flatten (Flatten) (None, 64) 0

_________________________________________________________________

dense (Dense) (None, 50) 3250

_________________________________________________________________

dense_1 (Dense) (None, 3) 153

=================================================================

Total params: 3,851

Trainable params: 3,851

Non-trainable params: 0

_________________________________________________________________

None

yhat: [[100.58862 106.20969 207.11055]]

train_acc:0.9979444

train_loss:36.841995810692595

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

2.2.2 Multi-output CNN Model

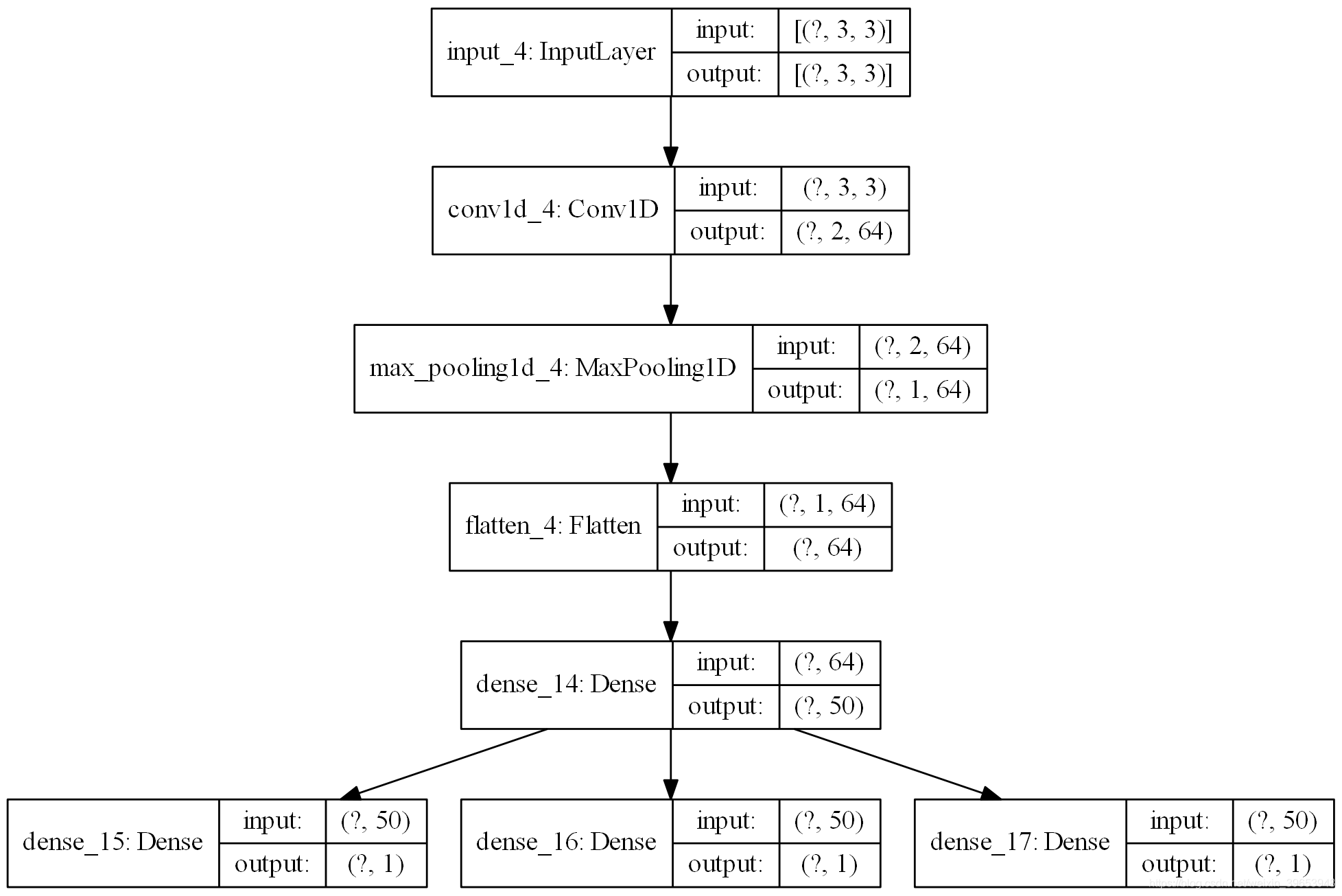

与多个输入序列一样,还有另一种更精细的方法来建模问题。每个输出序列可以由单独的输出CNN模型处理。我们可以称之为多输出CNN模型。它可能提供更多的灵活性或更好的性能,这取决于正在建模的问题的具体情况。代码实现:

visible = Input(shape=(n_steps, n_features))

cnn = Conv1D(filters=64, kernel_size=2, activation='relu')(visible)

cnn = MaxPooling1D(pool_size=2)(cnn)

cnn = Flatten()(cnn)

cnn = Dense(50, activation='relu')(cnn)

- 1

- 2

- 3

- 4

- 5

然后,我们可以为希望预测的三个序列中的每一个定义一个输出层,其中每个输出子模型将预测一个时间步。

output1 = Dense(1)(cnn)

output2 = Dense(1)(cnn)

output3 = Dense(1)(cnn)

- 1

- 2

- 3

绑定模型,编译模型:

model = Model(inputs=visible, outputs=[output1, output2, output3])

model.compile(optimizer='adam', loss='mse')

- 1

- 2

在训练模型时,每个样本需要三个独立的输出数组。可以通过将具有形状[7,3]的输出训练数据转换为具有形状[7,1]的三个数组来实现这一点。

y1 = y[:, 0].reshape((y.shape[0], 1))

y2 = y[:, 1].reshape((y.shape[0], 1))

y3 = y[:, 2].reshape((y.shape[0], 1))

- 1

- 2

- 3

开始训练:

model.fit(X, [y1,y2,y3], epochs=2000, verbose=0)

- 1

完整代码:

import numpy as np

from tensorflow.keras.models import Sequential, Model

from tensorflow.keras.layers import Dense, Flatten, Conv1D, MaxPooling1D, Input, concatenate

from tensorflow.keras.utils import plot_model

def split_sequences(first_seq, secend_seq, sw_width):

'''

该函数将序列数据分割成样本

'''

input_seq1 = np.array(first_seq).reshape(len(first_seq), 1)

input_seq2 = np.array(secend_seq).reshape(len(secend_seq), 1)

out_seq = np.array([first_seq[i]+secend_seq[i] for i in range(len(first_seq))])

out_seq = out_seq.reshape(len(out_seq), 1)

dataset = np.hstack((input_seq1, input_seq2, out_seq))

print('dataset:\n',dataset)

X, y = [], []

for i in range(len(dataset)):

end_element_index = i + sw_width

if end_element_index > len(dataset) - 1:

break

# 该语句实现步长为1的滑动窗口截取数据功能;

seq_x, seq_y = dataset[i:end_element_index, :], dataset[end_element_index, :]

X.append(seq_x)

y.append(seq_y)

process_X, process_y = np.array(X), np.array(y)

n_features = process_X.shape[2]

y1 = process_y[:, 0].reshape((process_y.shape[0], 1))

y2 = process_y[:, 1].reshape((process_y.shape[0], 1))

y3 = process_y[:, 2].reshape((process_y.shape[0], 1))

print('train_X:\n{}\ntrain_y:\n{}\n'.format(process_X, process_y))

print('train_X.shape:{},trian_y.shape:{}\n'.format(process_X.shape, process_y.shape))

print('n_features:',n_features)

return process_X, process_y, n_features, y1, y2, y3

def oned_cnn_model(n_steps, n_features, X, y, test_X, epoch_num, verbose_set):

visible = Input(shape=(n_steps, n_features))

cnn = Conv1D(filters=64, kernel_size=2, activation='relu')(visible)

cnn = MaxPooling1D(pool_size=2)(cnn)

cnn = Flatten()(cnn)

cnn = Dense(50, activation='relu')(cnn)

output1 = Dense(1)(cnn)

output2 = Dense(1)(cnn)

output3 = Dense(1)(cnn)

model = Model(inputs=visible, outputs=[output1, output2, output3])

model.compile(optimizer='adam', loss='mse',

metrics=['accuracy'], loss_weights=None, sample_weight_mode=None, weighted_metrics=None, target_tensors=None)

print('\n',model.summary())

plot_model(model, to_file='vector_output_cnn_model.png', show_shapes=True, show_layer_names=True, rankdir='TB', dpi=200)

model.fit(X, y, batch_size=32, epochs=epoch_num, verbose=verbose_set)

yhat = model.predict(test_X, verbose=0)

print('\nyhat:', yhat)

return model

if __name__ == '__main__':

train_seq1 = [10, 20, 30, 40, 50, 60, 70, 80, 90]

train_seq2 = [15, 25, 35, 45, 55, 65, 75, 85, 95]

sw_width = 3

epoch_num = 2000

verbose_set = 0

train_X, train_y, n_features, y1, y2, y3 = split_sequences(train_seq1, train_seq2, sw_width)

# 预测

x_input = np.array([[70,75,145], [80,85,165], [90,95,185]])

x_input = x_input.reshape((1, sw_width, n_features))

model = oned_cnn_model(sw_width, n_features, train_X, [y1, y2, y3], x_input, epoch_num, verbose_set)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

输出:

dataset:

[[ 10 15 25]

[ 20 25 45]

[ 30 35 65]

[ 40 45 85]

[ 50 55 105]

[ 60 65 125]

[ 70 75 145]

[ 80 85 165]

[ 90 95 185]]

train_X:

[[[ 10 15 25]

[ 20 25 45]

[ 30 35 65]]

[[ 20 25 45]

[ 30 35 65]

[ 40 45 85]]

[[ 30 35 65]

[ 40 45 85]

[ 50 55 105]]

[[ 40 45 85]

[ 50 55 105]

[ 60 65 125]]

[[ 50 55 105]

[ 60 65 125]

[ 70 75 145]]

[[ 60 65 125]

[ 70 75 145]

[ 80 85 165]]]

train_y:

[[ 40 45 85]

[ 50 55 105]

[ 60 65 125]

[ 70 75 145]

[ 80 85 165]

[ 90 95 185]]

train_X.shape:(6, 3, 3),trian_y.shape:(6, 3)

n_features: 3

Model: "model_2"

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_4 (InputLayer) [(None, 3, 3)] 0

__________________________________________________________________________________________________

conv1d_4 (Conv1D) (None, 2, 64) 448 input_4[0][0]

__________________________________________________________________________________________________

max_pooling1d_4 (MaxPooling1D) (None, 1, 64) 0 conv1d_4[0][0]

__________________________________________________________________________________________________

flatten_4 (Flatten) (None, 64) 0 max_pooling1d_4[0][0]

__________________________________________________________________________________________________

dense_14 (Dense) (None, 50) 3250 flatten_4[0][0]

__________________________________________________________________________________________________

dense_15 (Dense) (None, 1) 51 dense_14[0][0]

__________________________________________________________________________________________________

dense_16 (Dense) (None, 1) 51 dense_14[0][0]

__________________________________________________________________________________________________

dense_17 (Dense) (None, 1) 51 dense_14[0][0]

==================================================================================================

Total params: 3,851

Trainable params: 3,851

Non-trainable params: 0

__________________________________________________________________________________________________

None

yhat: [array([[101.1264]], dtype=float32), array([[106.274635]], dtype=float32), array([[207.64928]], dtype=float32)]

- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

网络结构图:

由于字数过多(已经28000多字)页面变卡,下篇继续将剩下的两类模型。