
Installing and Deploying OpenStack Victoria on CentOS 8


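Introduction to OpenStack

See this blog post

For the official installation and deployment documentation, click here

Minimum hardware requirements

Controller node: 1 processor, 4 GB of memory, and 5 GB of storage

Compute node: 1 processor, 2 GB of memory, and 10 GB of storage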

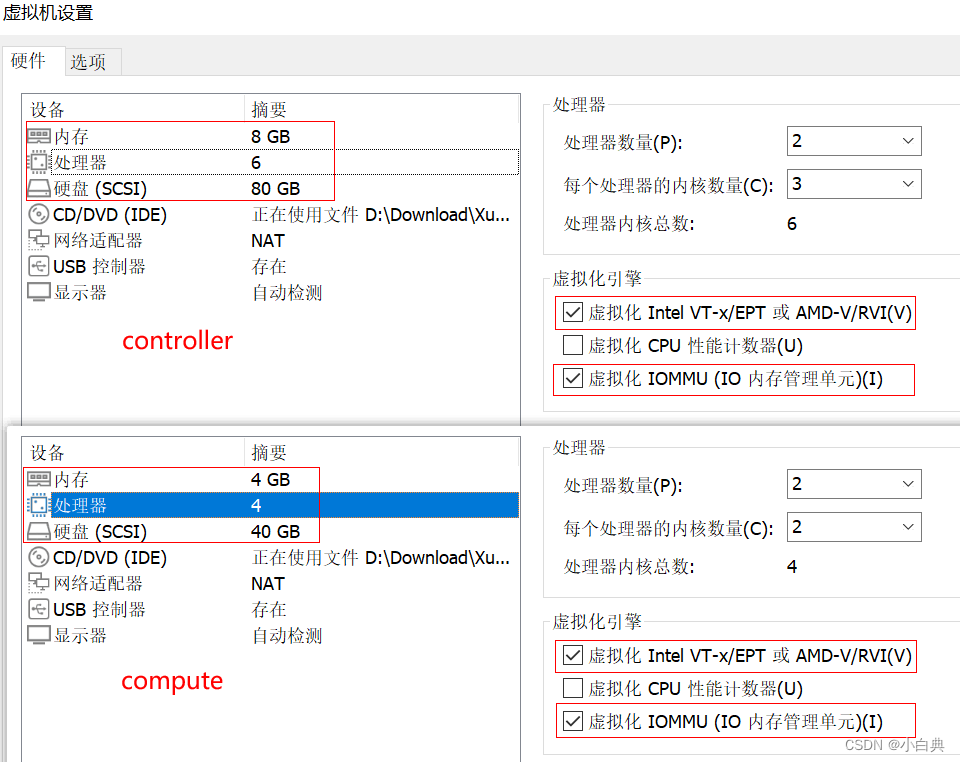

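When installing in virtual machines, size the memory, processors, and disk to meet the minimum hardware requirements, and tick the virtualization engine option

Because hardware is limited, this installation uses only a controller node and a compute node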

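Installation environment

- Tool: VMware Workstation 16 Pro

- Operating system: CentOS 8.3

- Controller node VM: 8 GB memory, 6 CPU cores, 80 GB disk, virtualization engine enabled

- Compute node VM: 4 GB memory, 4 CPU cores, 40 GB disk, virtualization engine enabled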

I. Installing the base services

1. Basic configuration

Nodes to configure: controller node and compute node

- Set the hostname

    # On the controller node
    hostnamectl set-hostname controller
    exec bash
    # On the compute node
    hostnamectl set-hostname compute
    exec bash

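- Replace the network service

When OpenStack is deployed, its networking service conflicts with NetworkManager and the two cannot work together properly, so use the legacy network service (from network-scripts) instead

    # Install the network service
    dnf install network-scripts -y
    # Stop NetworkManager and disable it at boot
    systemctl stop NetworkManager && systemctl disable NetworkManager
    # Start the network service and enable it at boot
    systemctl start network && systemctl enable network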

-

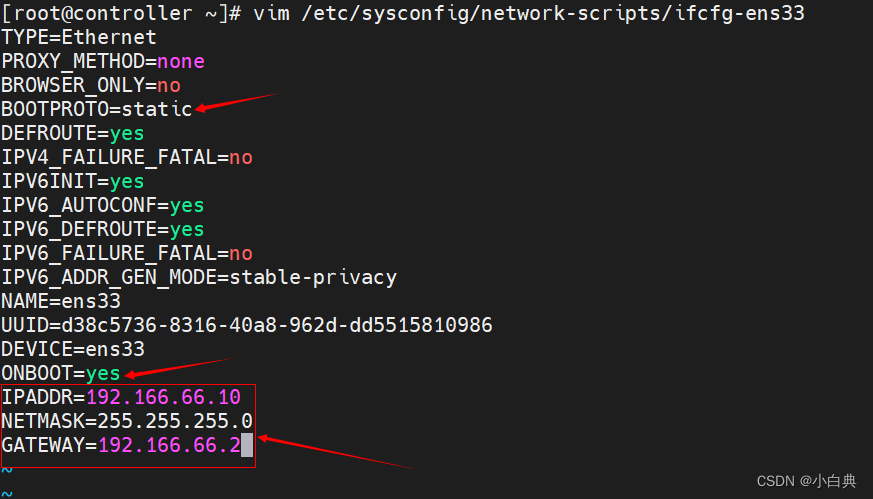

设置静态IP

编辑网络配置文件

vim /etc/sysconfig/network-scripts/ifcfg-ens33- 1

修改修改并添加以下内容

# 设为静态 BOOTPROTO=static # 设为开机自动连接 ONBOOT=yes # 添加IP、子网掩码及网关 IPADDR=192.166.66.10 NETMASK=255.255.255.0 GATEWAY=192.166.66.2- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

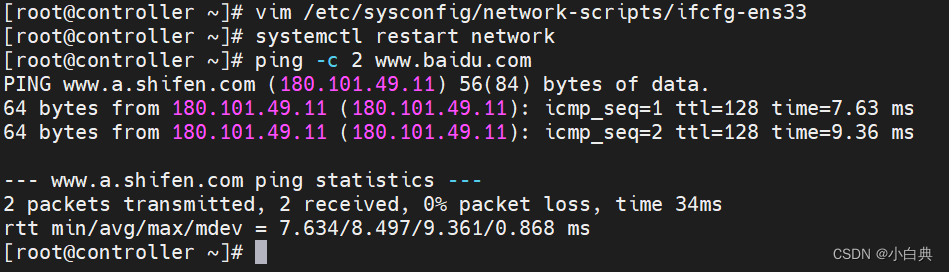

Restart the network service

    # Restart the network service
    systemctl restart network
    # Check that the internet is reachable
    ping -c 2 www.baidu.com

- Stop the firewall on all nodes and disable it at boot

    systemctl stop firewalld && systemctl disable firewalld

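- Configure host name resolution by adding both hosts to the hosts file

Use echo to append the entries, running the following command on each of the two nodes

    echo -e "192.166.66.10\tcontroller\n192.166.66.11\tcompute" >> /etc/hosts

Alternatively, edit the hosts file with vim /etc/hosts and add the following entries

    192.166.66.10 controller
    192.166.66.11 compute

After configuring one node, you can overwrite the hosts file on the other node directly with

    scp -rp /etc/hosts 192.166.66.11:/etc/hosts

Then test connectivity between the controller and compute nodes, and from both nodes to the internet, by running the following on each node

    # Controller node
    ping -c 3 www.baidu.com
    ping -c 3 compute
    # Compute node
    ping -c 3 www.baidu.com
    ping -c 3 controller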
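Because `echo ... >> /etc/hosts` appends every time it runs, repeating the step leaves duplicate entries. The sketch below shows one possible way to make the append idempotent. The function name `add_host_entry` and the `HOSTS_FILE` variable are illustrative and not part of the original guide; by default the demo writes to a scratch file, so set `HOSTS_FILE=/etc/hosts` (as root) only when you want to apply it for real.

```shell
#!/bin/sh
# Hypothetical helper: append "IP<TAB>name" to a hosts file only if that
# host name is not already mapped there.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"   # demo default: a scratch file, not /etc/hosts

add_host_entry() {
    ip="$1"; name="$2"
    # Look for the name as a whole field on any non-comment line.
    if awk -v n="$name" '
            !/^[ \t]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$HOSTS_FILE"; then
        return 0            # already present, nothing to do
    fi
    printf '%s\t%s\n' "$ip" "$name" >> "$HOSTS_FILE"
}

add_host_entry 192.166.66.10 controller
add_host_entry 192.166.66.11 compute
add_host_entry 192.166.66.10 controller   # repeated call is a no-op
cat "$HOSTS_FILE"
```

The check compares whole fields rather than substrings, so an existing name such as `controller2` would not be mistaken for `controller`.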

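2. Base services

Nodes to configure: controller node and compute node

- Time synchronization

First run rpm -qa | grep chrony to check whether chrony is installed; if it is not, install it with dnf install chrony -y. Then edit the chrony configuration file with vim /etc/chrony.conf and change the following two settings. Note: on the compute node change only the first one, to server controller iburst, so that it synchronizes directly with the controller node. The allow line must cover the subnet the nodes are on, which is 192.166.66.0/24 in this setup

    # Please consider joining the pool (http://www.pool.ntp.org/join.html).
    server ntp6.aliyun.com iburst
    # Allow NTP client access from local network.
    allow 192.166.66.0/24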

- Restart the chrony service and enable it at boot

    systemctl restart chronyd && systemctl enable chronyd

- Install the OpenStack repository

    dnf config-manager --enable powertools
    dnf install centos-release-openstack-victoria -y

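- If downloads are too slow, you can switch to a yum mirror in mainland China; see each mirror's official instructions for how to do so. The mirror addresses are

    Huawei         https://mirrors.huaweicloud.com/
    Tsinghua       https://mirrors.tuna.tsinghua.edu.cn/
    Alibaba Cloud  https://mirrors.aliyun.com/
    NetEase        https://mirrors.163.com/
    USTC           https://mirrors.ustc.edu.cn/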

- Upgrade the packages on all nodes

    dnf upgrade -y

- Install the OpenStack client and openstack-selinux

    dnf install python3-openstackclient openstack-selinux -y

3. SQL database

Nodes to configure: controller node only

- Install the MariaDB database (MySQL can be used instead)

    dnf install mariadb mariadb-server python3-PyMySQL -y

- Create and edit the file /etc/my.cnf.d/openstack.cnf with vim /etc/my.cnf.d/openstack.cnf and add the following

    [mysqld]
    bind-address = 192.166.66.10
    default-storage-engine = innodb
    innodb_file_per_table = on
    max_connections = 4096
    collation-server = utf8_general_ci
    character-set-server = utf8

- Start the database and enable it at boot

    systemctl start mariadb && systemctl enable mariadb

- Secure the database service

    mysql_secure_installation
    # Enter the current root password; press Enter if it is empty
    Enter current password for root (enter for none):
    OK, successfully used password, moving on...
    # Whether to set a root password
    Set root password? [Y/n] y
    # Enter the new password
    New password:
    # Enter the new password again
    Re-enter new password:
    # Whether to remove anonymous users
    Remove anonymous users? [Y/n] y
    # Whether to disallow remote root login
    Disallow root login remotely? [Y/n] n
    # Whether to remove the test database and access to it
    Remove test database and access to it? [Y/n] y
    # Whether to reload the privilege tables
    Reload privilege tables now? [Y/n] y
    # Answer these prompts to suit your environment; they need not match the choices shown here

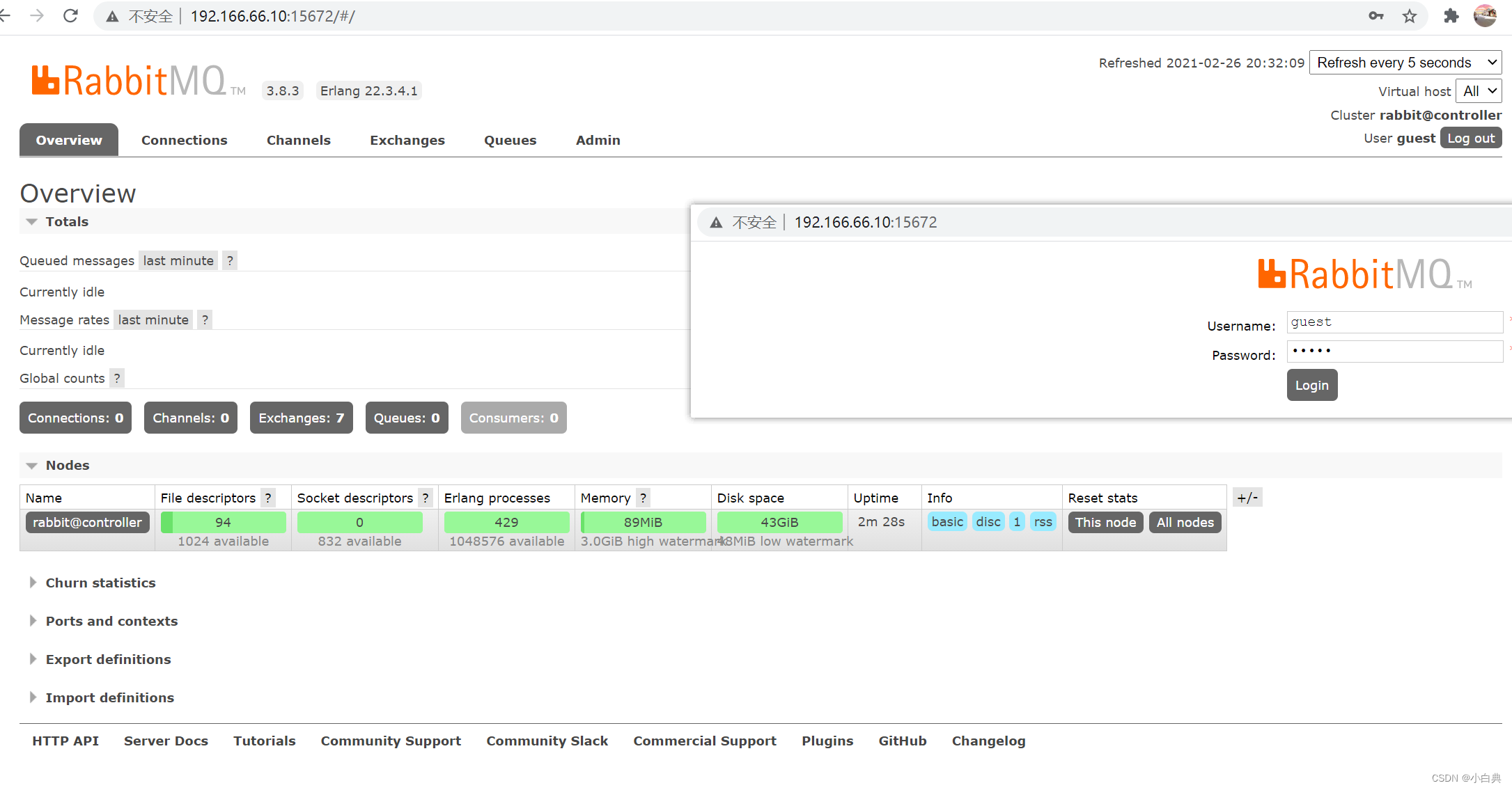

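4. Message queue

Nodes to configure: controller node only

- Install the package

    dnf install rabbitmq-server -y

- Start the message queue service and enable it at boot

    systemctl start rabbitmq-server && systemctl enable rabbitmq-server

- Add the openstack user and set its password

    rabbitmqctl add_user openstack RABBIT_PASS

- Grant the openstack user configure, write, and read permissions

    rabbitmqctl set_permissions openstack ".*" ".*" ".*"

- To make monitoring easier, enable the web management plugin

    rabbitmq-plugins enable rabbitmq_management

Once the plugin is enabled, netstat -lntup shows an additional service port, 15672. Open that port in a browser to log in to RabbitMQ; the default administrator user name and password are both guest (by default the guest account can only log in from localhost). The page shown after a successful login appears in the figure below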

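5. Memcached cache

Nodes to configure: controller node only

- Install the packages

    dnf install memcached python3-memcached -y

- Edit the file /etc/sysconfig/memcached with vim /etc/sysconfig/memcached and change the OPTIONS line to the following

    OPTIONS="-l 127.0.0.1,::1,controller"

- Start the Memcached service and enable it at boot

    systemctl start memcached && systemctl enable memcached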

6. Etcd cluster

Nodes to configure: controller node only

- Install the package

    dnf install etcd -y

- Edit the file /etc/etcd/etcd.conf with vim /etc/etcd/etcd.conf and change the following settings

    #[Member]
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="http://192.166.66.10:2380"
    ETCD_LISTEN_CLIENT_URLS="http://192.166.66.10:2379"
    ETCD_NAME="controller"
    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.166.66.10:2380"
    ETCD_ADVERTISE_CLIENT_URLS="http://192.166.66.10:2379"
    ETCD_INITIAL_CLUSTER="controller=http://192.166.66.10:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

- Start the Etcd service and enable it at boot

    systemctl start etcd && systemctl enable etcd

II. Installing the Keystone service

Nodes to configure: controller node only

1. Create the database and grant privileges

- Connect to the database

    mysql -u root -p

- Create the keystone database

    CREATE DATABASE keystone;

- Grant privileges on the keystone database, then exit

    GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY 'KEYSTONE_DBPASS';
    GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY 'KEYSTONE_DBPASS';
    exit;
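The same CREATE DATABASE and GRANT pattern is repeated later for Glance, Placement, Nova, and Neutron. As a rough sketch, a small shell function can print those statements for any service so they can be reviewed and then piped into the mysql client. The function name `service_db_sql` is made up for this illustration and is not part of OpenStack or MariaDB.

```shell
#!/bin/sh
# Hypothetical helper: print the CREATE DATABASE / GRANT statements used in
# this guide for one service database.
# Usage: service_db_sql <database> <user> <password>
service_db_sql() {
    db="$1"; user="$2"; pass="$3"
    printf 'CREATE DATABASE %s;\n' "$db"
    # One grant for local connections, one for connections from any host.
    for host in localhost %; do
        printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
            "$db" "$user" "$host" "$pass"
    done
}

# Print the statements for Keystone; nothing is executed against a database.
service_db_sql keystone keystone KEYSTONE_DBPASS
```

To apply the output rather than just print it, something like `service_db_sql glance glance GLANCE_DBPASS | mysql -u root -p` should work on the controller node.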

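2. Install the Keystone packages

- Install the software

    dnf install openstack-keystone httpd python3-mod_wsgi -y

- Edit the file /etc/keystone/keystone.conf. The file is about 2700 lines long and mostly comments, with only about 40 lines of effective settings, so to make editing easier you can back it up first and then strip out the comments

    # Back up the file
    cp /etc/keystone/keystone.conf /etc/keystone/keystone.conf.bak
    # Strip blank lines and comments from the backup keystone.conf.bak and overwrite keystone.conf with the result
    grep -Ev '^$|#' /etc/keystone/keystone.conf.bak >/etc/keystone/keystone.conf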
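One thing to be aware of: the pattern `'^$|#'` removes every line that contains a `#` anywhere, so a setting whose value includes `#` would be dropped, while lines made up only of spaces are kept. The sketch below compares it with a stricter pattern that removes only blank lines and lines whose first non-blank character is `#`. The sample file content is invented purely for the demonstration.

```shell
#!/bin/sh
# Compare the guide's filter with a stricter one on a made-up sample file.
sample=$(mktemp)
cat > "$sample" <<'EOF'
# a comment line

[DEFAULT]
   # an indented comment
password = abc#123
debug = false
EOF

echo "--- pattern from the guide (drops any line containing '#') ---"
grep -Ev '^$|#' "$sample"

echo "--- stricter pattern (drops only blank and comment lines) ---"
grep -Ev '^[[:space:]]*(#|$)' "$sample"
```

With the stricter pattern the `password` line survives. For the stock keystone.conf either pattern is probably fine, since its commented defaults start with `#`, but the stricter form is safer once real values have been written.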

Then edit the file by hand and set the following

    [database]
    connection = mysql+pymysql://keystone:KEYSTONE_DBPASS@controller/keystone
    [token]
    provider = fernet

Use either the manual method or the command-line method, not both

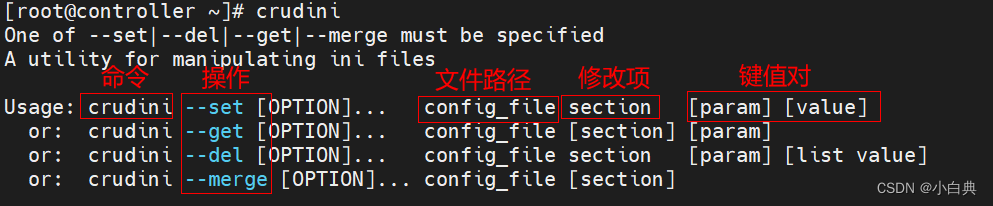

To edit files faster and reduce configuration mistakes, you can use a configuration tool and change the file from the command line. Install the tool first

    dnf install crudini -y

Then run these commands to change the file

    crudini --set /etc/keystone/keystone.conf database connection mysql+pymysql://keystone:KEYSTONE_DBPASS@controller/keystone
    crudini --set /etc/keystone/keystone.conf token provider fernet
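After a batch of `crudini --set` calls it can be reassuring to read a value back. crudini itself offers `crudini --get file section key` for this; the sketch below is a dependency-free alternative in plain awk for machines where crudini is not installed. The function name `ini_get` is hypothetical, and the demo runs against a scratch file rather than the real keystone.conf.

```shell
#!/bin/sh
# Hypothetical helper: print the value of KEY inside [SECTION] of an INI file.
# Usage: ini_get <file> <section> <key>
ini_get() {
    awk -v section="$2" -v key="$3" '
        /^[ \t]*\[/ {                       # a [section] header line
            gsub(/^[ \t]*\[|\][ \t]*$/, "")
            current = $0
            next
        }
        current == section {
            eq = index($0, "=")
            if (eq == 0) next               # not a key = value line
            k = substr($0, 1, eq - 1)
            v = substr($0, eq + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", k)  # trim both sides
            gsub(/^[ \t]+|[ \t]+$/, "", v)
            if (k == key) print v
        }
    ' "$1"
}

# Demo on a scratch file that mimics the two settings made above.
conf=$(mktemp)
printf '[database]\nconnection = mysql+pymysql://keystone:KEYSTONE_DBPASS@controller/keystone\n[token]\nprovider = fernet\n' > "$conf"
ini_get "$conf" token provider
ini_get "$conf" database connection
```

Commented-out defaults such as `#provider = fernet` are ignored because the key is compared exactly, including the leading `#`.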

-

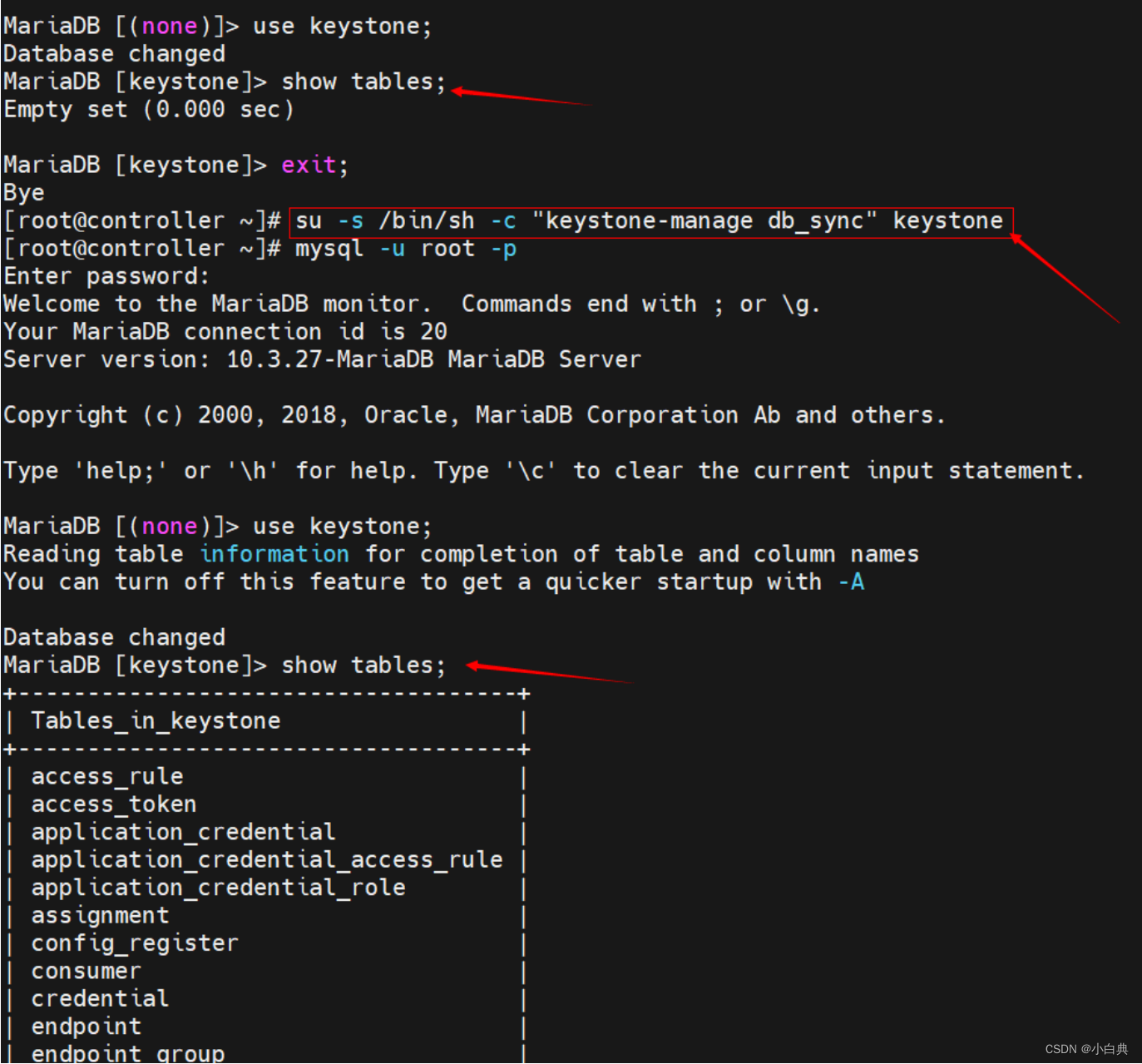

初始化数据库

su -s /bin/sh -c "keystone-manage db_sync" keystone- 1

同步前后可以先看一下数据库信息,下图是操作前后数据库信息变化

-

初始化Fernet,执行如下两条命令

keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone keystone-manage credential_setup --keystone-user keystone --keystone-group keystone- 1

- 2

-

引导身份认证服务

keystone-manage bootstrap --bootstrap-password ADMIN_PASS \ --bootstrap-admin-url http://controller:5000/v3/ \ --bootstrap-internal-url http://controller:5000/v3/ \ --bootstrap-public-url http://controller:5000/v3/ \ --bootstrap-region-id RegionOne- 1

- 2

- 3

- 4

- 5

3. Configure the Apache HTTP server

- Edit the file /etc/httpd/conf/httpd.conf with vim /etc/httpd/conf/httpd.conf and add the following

    ServerName controller

- Create a link to the /usr/share/keystone/wsgi-keystone.conf file

    ln -s /usr/share/keystone/wsgi-keystone.conf /etc/httpd/conf.d/

- Start the httpd service and enable it at boot

    systemctl start httpd && systemctl enable httpd

-

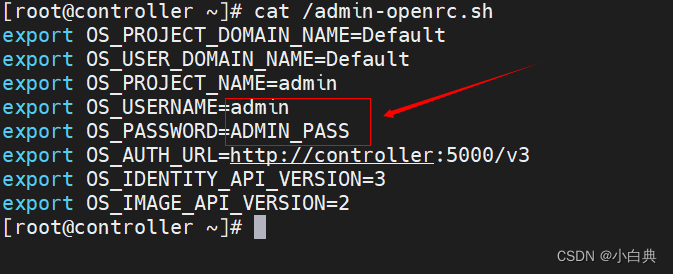

创建环境变量脚本来配置管理员账号,执行命令

vim /admin-openrc.sh,添加如下信息export OS_USERNAME=admin export OS_PASSWORD=ADMIN_PASS export OS_PROJECT_NAME=admin export OS_USER_DOMAIN_NAME=Default export OS_PROJECT_DOMAIN_NAME=Default export OS_AUTH_URL=http://controller:5000/v3 export OS_IDENTITY_API_VERSION=3- 1

- 2

- 3

- 4

- 5

- 6

- 7

然后初始化,脚本执行命令

source /admin-openrc.sh或者. /admin-openrc.sh
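A common slip is to run openstack commands in a shell where the script was never sourced. The following sketch checks that the variables exported by the script above are present in the current shell. The function name `check_openrc` is an illustrative assumption; the variable list simply mirrors the seven exports shown above.

```shell
#!/bin/sh
# Hypothetical helper: report which of the variables exported by the openrc
# script are missing from the current shell. Returns non-zero if any is unset.
check_openrc() {
    missing=0
    for var in OS_USERNAME OS_PASSWORD OS_PROJECT_NAME OS_USER_DOMAIN_NAME \
               OS_PROJECT_DOMAIN_NAME OS_AUTH_URL OS_IDENTITY_API_VERSION; do
        eval "value=\${$var:-}"      # indirect lookup of the variable named in $var
        if [ -z "$value" ]; then
            echo "missing: $var"
            missing=1
        fi
    done
    return "$missing"
}

if check_openrc; then
    echo "environment looks complete"
else
    echo "source the openrc script first"
fi
```

This only confirms that the variables are set, not that the credentials are valid; `openstack token issue` remains the real test.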

4. Create a domain, projects, a user, and a role

- Create a domain. A default domain already exists, so this command is only an example of creating one and does not need to be run

    openstack domain create --description "An Example Domain" example

- Create the service project (a project is also called a tenant)

    openstack project create --domain default --description "Service Project" service

- Create the myproject test project

    openstack project create --domain default --description "Demo Project" myproject

- Create the myuser user

    openstack user create --domain default --password-prompt myuser
    # The command prompts for the user's password; enter the same password twice

- Create the myrole role

    openstack role create myrole

- Assign the myrole role to the myuser user on the myproject project

    openstack role add --project myproject --user myuser myrole

- Edit the environment variable script with vim /admin-openrc.sh so that it contains the following values

    export OS_PROJECT_DOMAIN_NAME=Default
    export OS_USER_DOMAIN_NAME=Default
    export OS_PROJECT_NAME=admin
    export OS_USERNAME=admin
    export OS_PASSWORD=ADMIN_PASS
    export OS_AUTH_URL=http://controller:5000/v3
    export OS_IDENTITY_API_VERSION=3
    export OS_IMAGE_API_VERSION=2

Then load the script again by running source /admin-openrc.sh or . /admin-openrc.sh. You can also write a separate script for the project and user you created, for example vim /dyd-openrc.sh (set OS_PASSWORD to the password you chose for myuser)

    export OS_PROJECT_DOMAIN_NAME=Default
    export OS_USER_DOMAIN_NAME=Default
    export OS_PROJECT_NAME=myproject
    export OS_USERNAME=myuser
    export OS_PASSWORD=ADMIN_PASS
    export OS_AUTH_URL=http://controller:5000/v3
    export OS_IDENTITY_API_VERSION=3
    export OS_IMAGE_API_VERSION=2

Then load that script by running source /dyd-openrc.sh or . /dyd-openrc.sh

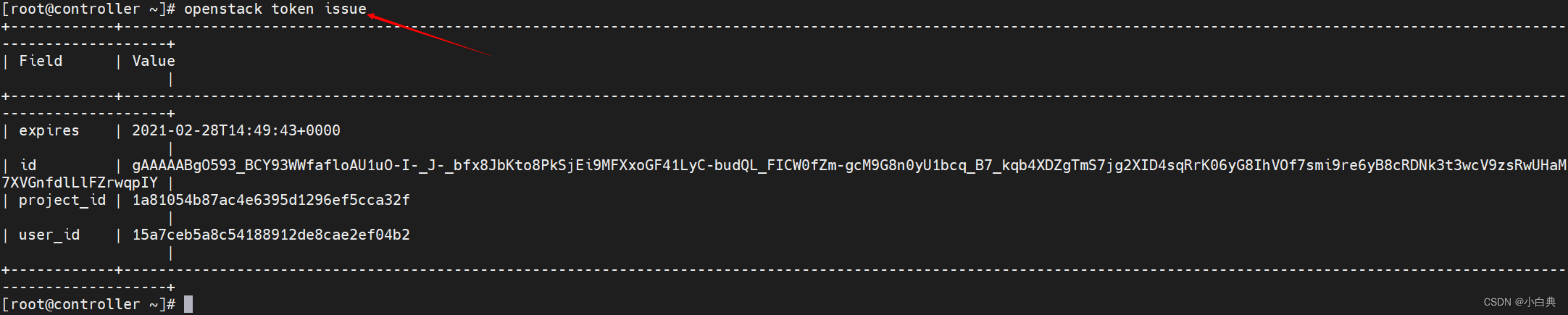

- Verify the token

    # Check that the Keystone service works
    openstack token issue

If the output looks like the figure below, Keystone is configured

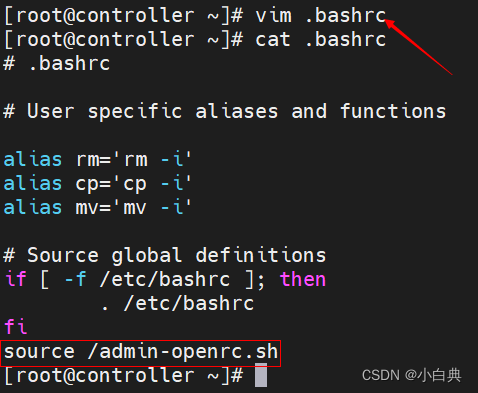

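- The script must be loaded (source /admin-openrc.sh or . /admin-openrc.sh) in every new shell before openstack commands are run, so you can have the environment variables loaded automatically at login by adding that command to .bashrc, as shown in the figure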

III. Installing the Glance service

Nodes to configure: controller node only

1. Create the database and grant privileges

- Connect to the database

    mysql -u root -p

- Create the glance database

    CREATE DATABASE glance;

- Grant privileges on the glance database, then exit

    GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY 'GLANCE_DBPASS';
    GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY 'GLANCE_DBPASS';
    exit;

2. Create the glance user and assign its role

Automatic loading of the environment variables was set up above; if you skipped that and have not loaded them, first load the script with . /admin-openrc.sh

- Create the glance user with the password GLANCE_PASS. Unlike the earlier user creation, this is not interactive; the password is given directly on the command line

    openstack user create --domain default --password GLANCE_PASS glance

- Add the glance user to the service project with the admin role

    # Assign the admin role to the glance user on the service project
    openstack role add --project service --user glance admin

3. Create the glance service and register the API

- Create the glance service

    openstack service create --name glance --description "OpenStack Image" image

- Register the API, that is, create the API endpoints of the Image service

    openstack endpoint create --region RegionOne image public http://controller:9292
    openstack endpoint create --region RegionOne image internal http://controller:9292
    openstack endpoint create --region RegionOne image admin http://controller:9292
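Every service in this guide registers the same three interfaces (public, internal, admin) against a single URL. A loop can generate those commands; the sketch below only prints them so they can be checked before being run. The function name `endpoint_cmds` is invented for this example.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) the three endpoint-create commands for
# one service. Usage: endpoint_cmds <service-type> <url>
endpoint_cmds() {
    for iface in public internal admin; do
        echo "openstack endpoint create --region RegionOne $1 $iface $2"
    done
}

endpoint_cmds image http://controller:9292
```

Once the printed commands look right and the admin environment variables are loaded, piping the output to `sh` would execute them, for example `endpoint_cmds image http://controller:9292 | sh`.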

4. Install and configure glance

- Install the glance package; if dependency problems occur, switch to a different repository mirror

    dnf install openstack-glance -y

- Edit the file /etc/glance/glance-api.conf with vim /etc/glance/glance-api.conf. The file is nearly 6000 lines long, so using the command-line method as above is recommended, although manual editing also works. To edit by hand, set the following

    [database]
    connection = mysql+pymysql://glance:GLANCE_DBPASS@controller/glance
    [keystone_authtoken]
    www_authenticate_uri = http://controller:5000
    auth_url = http://controller:5000
    memcached_servers = controller:11211
    auth_type = password
    project_domain_name = Default
    user_domain_name = Default
    project_name = service
    username = glance
    password = GLANCE_PASS
    [paste_deploy]
    flavor = keystone
    [glance_store]
    stores = file,http
    default_store = file
    filesystem_store_datadir = /var/lib/glance/images/

To make the same changes from the command line

    crudini --set /etc/glance/glance-api.conf database connection mysql+pymysql://glance:GLANCE_DBPASS@controller/glance
    crudini --set /etc/glance/glance-api.conf keystone_authtoken www_authenticate_uri http://controller:5000
    crudini --set /etc/glance/glance-api.conf keystone_authtoken auth_url http://controller:5000
    crudini --set /etc/glance/glance-api.conf keystone_authtoken memcached_servers controller:11211
    crudini --set /etc/glance/glance-api.conf keystone_authtoken auth_type password
    crudini --set /etc/glance/glance-api.conf keystone_authtoken project_domain_name Default
    crudini --set /etc/glance/glance-api.conf keystone_authtoken user_domain_name Default
    crudini --set /etc/glance/glance-api.conf keystone_authtoken project_name service
    crudini --set /etc/glance/glance-api.conf keystone_authtoken username glance
    crudini --set /etc/glance/glance-api.conf keystone_authtoken password GLANCE_PASS
    crudini --set /etc/glance/glance-api.conf paste_deploy flavor keystone
    crudini --set /etc/glance/glance-api.conf glance_store stores file,http
    crudini --set /etc/glance/glance-api.conf glance_store default_store file
    crudini --set /etc/glance/glance-api.conf glance_store filesystem_store_datadir /var/lib/glance/images/

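- Sync the database

    su -s /bin/sh -c "glance-manage db_sync" glance

- Start the glance service and enable it at boot

    systemctl start openstack-glance-api && systemctl enable openstack-glance-api

- Download a test image onto the system, then upload it to the glance service

- Download the cirros test image from its download page (click here). Because of network restrictions, it is advisable to copy the download URL and fetch the file with a download tool such as Xunlei. Pick the build that matches the CPU architecture of your compute node

- Upload it to the Glance service with the following command

    # Upload cirros-0.5.1-aarch64-disk.img from the current directory as an image named "cirros", with disk format qcow2 and container format bare, and make it public
    openstack image create "cirros" --file cirros-0.5.1-aarch64-disk.img --disk-format qcow2 --container-format bare --public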

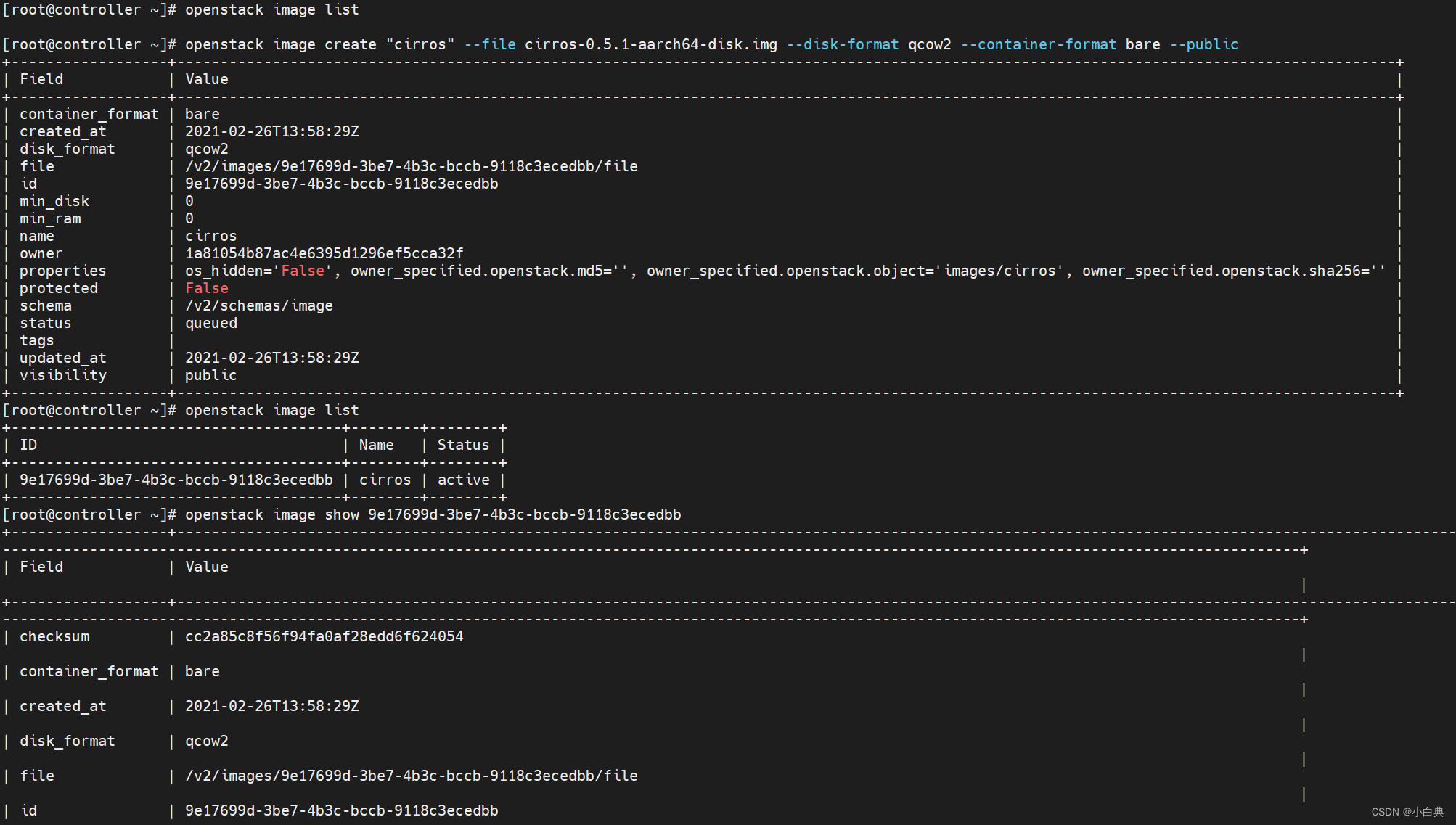

Output like the figure means the image was uploaded successfully; the uploaded image can be listed with openstack image list

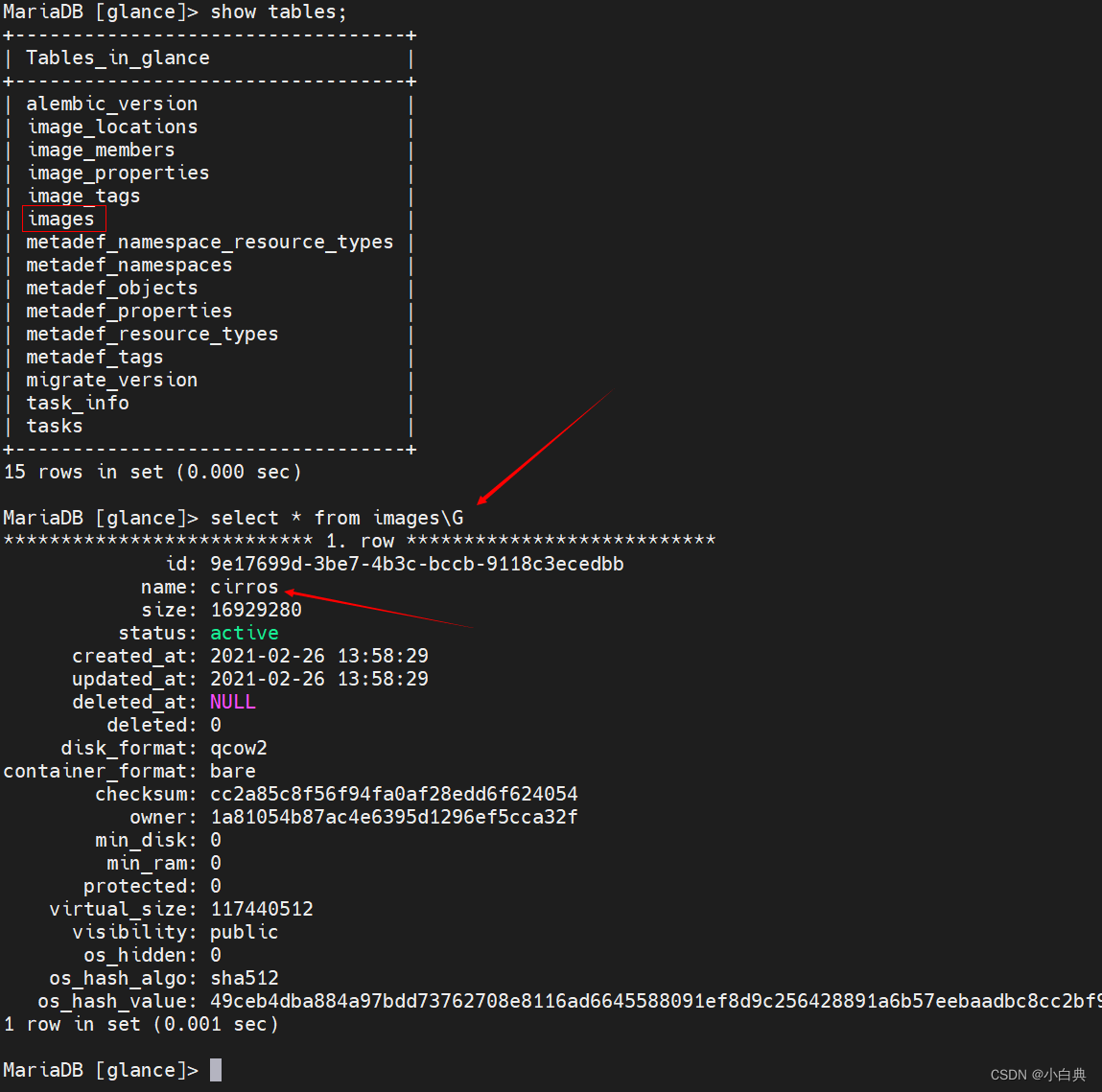

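Image metadata is stored in the glance database; the uploaded image appears in the images table of that database

The image file itself is in the /var/lib/glance/images/ directory. To delete the image manually, delete the database record first and then the image file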

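IV. Installing the Placement service

Nodes to configure: controller node

The Placement service tracks the usage of resources (such as compute nodes, storage pools, and network pools), supports custom resources, and serves resource allocation requests. Before the Stein release of OpenStack, Placement was part of Nova. It must be installed before Nova

1. Create the database and grant privileges

- Connect to the database

    mysql -u root -p

- Create the placement database

    CREATE DATABASE placement;

- Grant privileges on the placement database, then exit

    GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'localhost' IDENTIFIED BY 'PLACEMENT_DBPASS';
    GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'%' IDENTIFIED BY 'PLACEMENT_DBPASS';
    exit;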

2. Configure the user and endpoints

First load the environment variables with source /admin-openrc.sh

- Create a placement user with the password PLACEMENT_PASS

    openstack user create --domain default --password PLACEMENT_PASS placement

- Add the placement user to the service project with the admin role

    # Assign the admin role to the placement user on the service project
    openstack role add --project service --user placement admin

3. Create the Placement service and register the API

- Create the Placement service

    openstack service create --name placement --description "Placement API" placement

- Create the Placement service API endpoints

    openstack endpoint create --region RegionOne placement public http://controller:8778
    openstack endpoint create --region RegionOne placement internal http://controller:8778
    openstack endpoint create --region RegionOne placement admin http://controller:8778

4. Install and configure Placement

- Install the Placement package

    dnf install openstack-placement-api -y

- Edit the file /etc/placement/placement.conf with vim /etc/placement/placement.conf. The file is about 700 lines long; use either the manual method or the command-line method. To edit by hand, set the following

    [placement_database]
    connection = mysql+pymysql://placement:PLACEMENT_DBPASS@controller/placement
    [api]
    auth_strategy = keystone
    [keystone_authtoken]
    auth_url = http://controller:5000/v3
    memcached_servers = controller:11211
    auth_type = password
    project_domain_name = Default
    user_domain_name = Default
    project_name = service
    username = placement
    password = PLACEMENT_PASS

To make the same changes from the command line

    crudini --set /etc/placement/placement.conf placement_database connection mysql+pymysql://placement:PLACEMENT_DBPASS@controller/placement
    crudini --set /etc/placement/placement.conf api auth_strategy keystone
    crudini --set /etc/placement/placement.conf keystone_authtoken auth_url http://controller:5000/v3
    crudini --set /etc/placement/placement.conf keystone_authtoken memcached_servers controller:11211
    crudini --set /etc/placement/placement.conf keystone_authtoken auth_type password
    crudini --set /etc/placement/placement.conf keystone_authtoken project_domain_name Default
    crudini --set /etc/placement/placement.conf keystone_authtoken user_domain_name Default
    crudini --set /etc/placement/placement.conf keystone_authtoken project_name service
    crudini --set /etc/placement/placement.conf keystone_authtoken username placement
    crudini --set /etc/placement/placement.conf keystone_authtoken password PLACEMENT_PASS

-

同步数据库

su -s /bin/sh -c "placement-manage db sync" placement- 1

-

重启httpd服务

systemctl restart httpd- 1

-

检查Placement服务状态

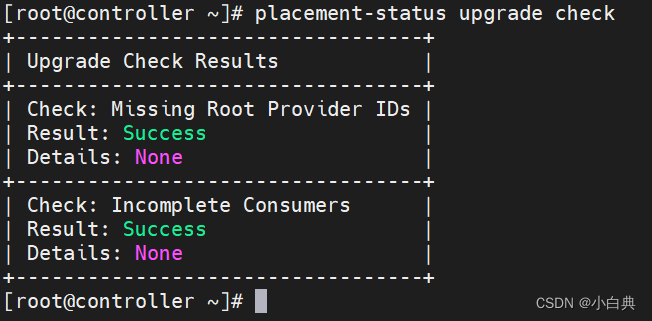

placement-status upgrade check- 1

出现如下图所示,说明安装配置成功

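V. Installing the Nova service

Nodes to configure: controller node and compute node

Nova is the core service of OpenStack: it creates the cloud instances, and the other services support it. Nova also has the most components, so the controller node and the compute node are covered separately here. As a reminder, where the text above says "Nodes to configure: controller node and compute node" it means the same steps must be carried out on both nodes; in this part the installation steps differ per node, starting with the controller node

Controller node

1. Create the databases and grant privileges

- Connect to the database

    mysql -u root -p

- Create the nova_api, nova, and nova_cell0 databases

    CREATE DATABASE nova_api;
    CREATE DATABASE nova;
    CREATE DATABASE nova_cell0;

- Grant privileges on each of the three databases, then exit

    # Grants for the nova_api database
    GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';
    GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';
    # Grants for the nova database
    GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';
    GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';
    # Grants for the nova_cell0 database
    GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';
    GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';
    exit;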

2. Configure the user and endpoints

First load the environment variables with source /admin-openrc.sh

- Create the nova user with the password NOVA_PASS

    openstack user create --domain default --password NOVA_PASS nova

- Add the nova user to the service project with the admin role

    # Assign the admin role to the nova user on the service project
    openstack role add --project service --user nova admin

3. Create the Nova service and register the API

- Create the Nova service

    openstack service create --name nova --description "OpenStack Compute" compute

- Create the Nova service API endpoints

    openstack endpoint create --region RegionOne compute public http://controller:8774/v2.1
    openstack endpoint create --region RegionOne compute internal http://controller:8774/v2.1
    openstack endpoint create --region RegionOne compute admin http://controller:8774/v2.1

4. Install and configure Nova

- Install the nova packages

    dnf install openstack-nova-api openstack-nova-conductor openstack-nova-novncproxy openstack-nova-scheduler -y

- Edit the file /etc/nova/nova.conf with vim /etc/nova/nova.conf. The file is nearly 6000 lines long; again, use either the manual method or the command-line method. To edit by hand, set the following

    [DEFAULT]
    enabled_apis = osapi_compute,metadata
    transport_url = rabbit://openstack:RABBIT_PASS@controller:5672/
    my_ip = 192.166.66.10
    [api_database]
    connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova_api
    [database]
    connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova
    [api]
    auth_strategy = keystone
    [keystone_authtoken]
    www_authenticate_uri = http://controller:5000/
    auth_url = http://controller:5000/
    memcached_servers = controller:11211
    auth_type = password
    project_domain_name = Default
    user_domain_name = Default
    project_name = service
    username = nova
    password = NOVA_PASS
    [vnc]
    enabled = true
    server_listen = $my_ip
    server_proxyclient_address = $my_ip
    [glance]
    api_servers = http://controller:9292
    [oslo_concurrency]
    lock_path = /var/lib/nova/tmp
    [placement]
    region_name = RegionOne
    project_domain_name = Default
    project_name = service
    auth_type = password
    user_domain_name = Default
    auth_url = http://controller:5000/v3
    username = placement
    password = PLACEMENT_PASS

To make the same changes from the command line, do not paste all the commands at once; there are so many that the terminal may misbehave, so run them in batches

    crudini --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata
    crudini --set /etc/nova/nova.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@controller:5672/
    crudini --set /etc/nova/nova.conf DEFAULT my_ip 192.166.66.10
    crudini --set /etc/nova/nova.conf api_database connection mysql+pymysql://nova:NOVA_DBPASS@controller/nova_api
    crudini --set /etc/nova/nova.conf database connection mysql+pymysql://nova:NOVA_DBPASS@controller/nova
    crudini --set /etc/nova/nova.conf api auth_strategy keystone
    crudini --set /etc/nova/nova.conf keystone_authtoken www_authenticate_uri http://controller:5000/
    crudini --set /etc/nova/nova.conf keystone_authtoken auth_url http://controller:5000/
    crudini --set /etc/nova/nova.conf keystone_authtoken memcached_servers controller:11211
    crudini --set /etc/nova/nova.conf keystone_authtoken auth_type password
    crudini --set /etc/nova/nova.conf keystone_authtoken project_domain_name Default
    crudini --set /etc/nova/nova.conf keystone_authtoken user_domain_name Default
    crudini --set /etc/nova/nova.conf keystone_authtoken project_name service
    crudini --set /etc/nova/nova.conf keystone_authtoken username nova
    crudini --set /etc/nova/nova.conf keystone_authtoken password NOVA_PASS
    crudini --set /etc/nova/nova.conf vnc enabled true
    crudini --set /etc/nova/nova.conf vnc server_listen '$my_ip'
    crudini --set /etc/nova/nova.conf vnc server_proxyclient_address '$my_ip'
    crudini --set /etc/nova/nova.conf glance api_servers http://controller:9292
    crudini --set /etc/nova/nova.conf oslo_concurrency lock_path /var/lib/nova/tmp
    crudini --set /etc/nova/nova.conf placement region_name RegionOne
    crudini --set /etc/nova/nova.conf placement project_domain_name Default
    crudini --set /etc/nova/nova.conf placement project_name service
    crudini --set /etc/nova/nova.conf placement auth_type password
    crudini --set /etc/nova/nova.conf placement user_domain_name Default
    crudini --set /etc/nova/nova.conf placement auth_url http://controller:5000/v3
    crudini --set /etc/nova/nova.conf placement username placement
    crudini --set /etc/nova/nova.conf placement password PLACEMENT_PASS

- Sync the databases

    # Sync the nova_api database
    su -s /bin/sh -c "nova-manage api_db sync" nova
    # Register the nova_cell0 database
    su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova
    # Create cell1
    su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova
    # Sync the nova database
    su -s /bin/sh -c "nova-manage db sync" nova

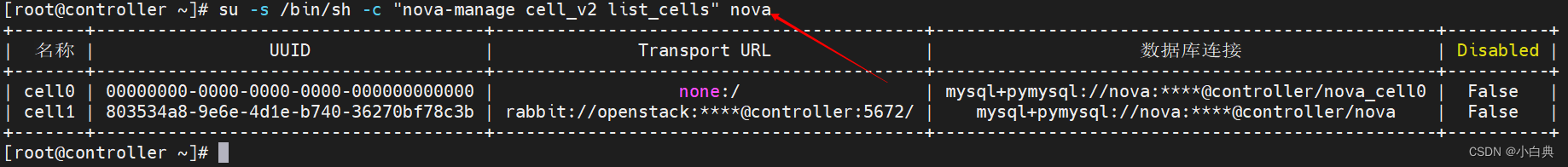

Verify that nova_cell0 and cell1 were registered

    su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova

- Start the services and enable them at boot

    systemctl start openstack-nova-api openstack-nova-scheduler openstack-nova-conductor openstack-nova-novncproxy && systemctl enable openstack-nova-api openstack-nova-scheduler openstack-nova-conductor openstack-nova-novncproxy

-

验证服务是否成功启动,使用命令

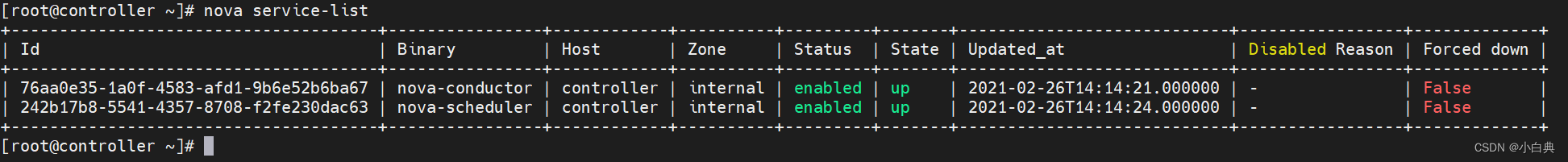

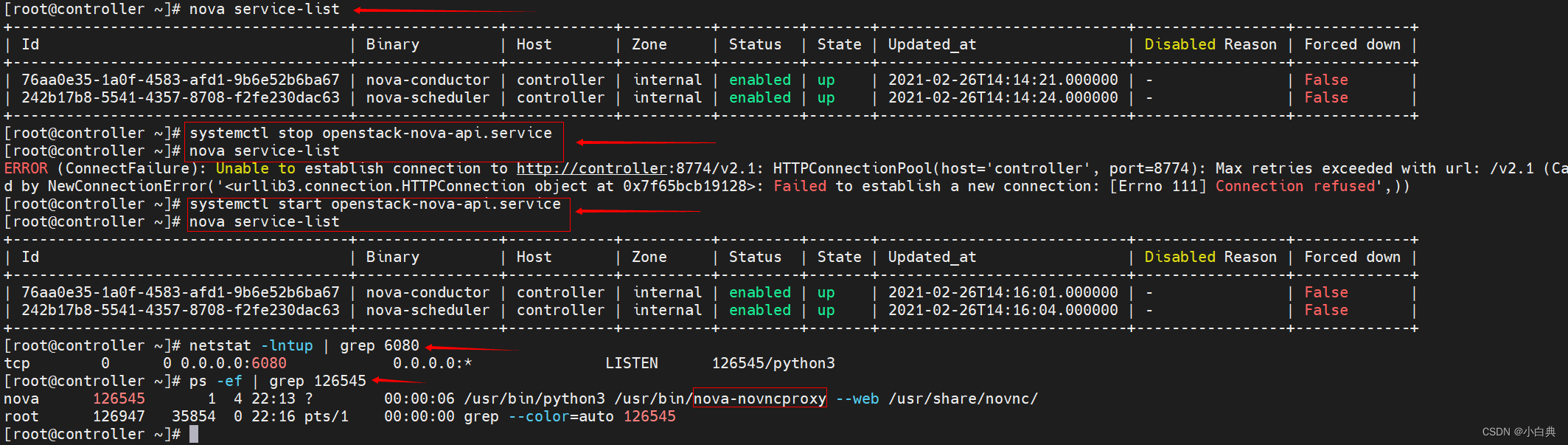

nova service-list,如下图

启动了4个服务,为什么只看到2个服务呢?

这是因为

nova service-list这个命令是发给openstack-nova-api的,由openstack-nova-api服务返回响应结果,若nova-api服务关闭了,openstack-nova-scheduler和openstack-nova-conductor两个服务便无法启动,而查看openstack-nova-novncproxy服务是启动成功,是通过端口查看的,netstat -lntup | grep 6080,查看进程ps -ef | grep 上条命令得到的进程号,如下图

可以通过Web访问noVNC页面,只是还没有连接云主机

Compute node

1. Install and configure Nova

- Install the package

    dnf install openstack-nova-compute -y

- Edit the file /etc/nova/nova.conf with vim /etc/nova/nova.conf. The file is about 5500 lines long; use either the manual method or the command-line method. To edit by hand, set the following

    [DEFAULT]
    enabled_apis = osapi_compute,metadata
    transport_url = rabbit://openstack:RABBIT_PASS@controller
    my_ip = 192.166.66.11
    [api]
    auth_strategy = keystone
    [keystone_authtoken]
    www_authenticate_uri = http://controller:5000/
    auth_url = http://controller:5000/
    memcached_servers = controller:11211
    auth_type = password
    project_domain_name = Default
    user_domain_name = Default
    project_name = service
    username = nova
    password = NOVA_PASS
    [vnc]
    enabled = true
    server_listen = 0.0.0.0
    server_proxyclient_address = $my_ip
    novncproxy_base_url = http://controller:6080/vnc_auto.html
    [glance]
    api_servers = http://controller:9292
    [oslo_concurrency]
    lock_path = /var/lib/nova/tmp
    [placement]
    region_name = RegionOne
    project_domain_name = Default
    project_name = service
    auth_type = password
    user_domain_name = Default
    auth_url = http://controller:5000/v3
    username = placement
    password = PLACEMENT_PASS

To make the same changes from the command line, note that this is a different node, so crudini must be installed here first

    dnf install crudini -y
    crudini --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata
    crudini --set /etc/nova/nova.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@controller
    crudini --set /etc/nova/nova.conf DEFAULT my_ip 192.166.66.11
    crudini --set /etc/nova/nova.conf api auth_strategy keystone
    crudini --set /etc/nova/nova.conf keystone_authtoken www_authenticate_uri http://controller:5000/
    crudini --set /etc/nova/nova.conf keystone_authtoken auth_url http://controller:5000/
    crudini --set /etc/nova/nova.conf keystone_authtoken memcached_servers controller:11211
    crudini --set /etc/nova/nova.conf keystone_authtoken auth_type password
    crudini --set /etc/nova/nova.conf keystone_authtoken project_domain_name Default
    crudini --set /etc/nova/nova.conf keystone_authtoken user_domain_name Default
    crudini --set /etc/nova/nova.conf keystone_authtoken project_name service
    crudini --set /etc/nova/nova.conf keystone_authtoken username nova
    crudini --set /etc/nova/nova.conf keystone_authtoken password NOVA_PASS
    crudini --set /etc/nova/nova.conf vnc enabled true
    crudini --set /etc/nova/nova.conf vnc server_listen 0.0.0.0
    crudini --set /etc/nova/nova.conf vnc server_proxyclient_address '$my_ip'
    crudini --set /etc/nova/nova.conf vnc novncproxy_base_url http://controller:6080/vnc_auto.html
    crudini --set /etc/nova/nova.conf glance api_servers http://controller:9292
    crudini --set /etc/nova/nova.conf oslo_concurrency lock_path /var/lib/nova/tmp
    crudini --set /etc/nova/nova.conf placement region_name RegionOne
    crudini --set /etc/nova/nova.conf placement project_domain_name Default
    crudini --set /etc/nova/nova.conf placement project_name service
    crudini --set /etc/nova/nova.conf placement auth_type password
    crudini --set /etc/nova/nova.conf placement user_domain_name Default
    crudini --set /etc/nova/nova.conf placement auth_url http://controller:5000/v3
    crudini --set /etc/nova/nova.conf placement username placement
    crudini --set /etc/nova/nova.conf placement password PLACEMENT_PASS

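- Check whether the compute node supports hardware acceleration

    egrep -c '(vmx|svm)' /proc/cpuinfo

If the command returns 1 or greater, the node supports hardware acceleration and no further change is needed. If it returns 0, the node does not support it, so edit the [libvirt] section of /etc/nova/nova.conf with vim /etc/nova/nova.conf and set the following so that libvirt uses QEMU

    [libvirt]
    virt_type = qemu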
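The decision above can be scripted. The sketch below counts the vmx/svm flag lines and prints the matching virt_type, treating a count of 0 as "no hardware acceleration". The function name `suggest_virt_type` and its optional file argument are assumptions made for this illustration; the argument exists only so the logic can be tried against a sample file.

```shell
#!/bin/sh
# Hypothetical helper: suggest a [libvirt] virt_type from the number of
# vmx/svm flag lines in a cpuinfo file (defaults to /proc/cpuinfo).
suggest_virt_type() {
    cpuinfo="${1:-/proc/cpuinfo}"
    # grep -c prints 0 when nothing matches; an unreadable file leaves count empty.
    count=$(grep -Ec '(vmx|svm)' "$cpuinfo" 2>/dev/null)
    if [ "${count:-0}" -ge 1 ]; then
        echo kvm     # hardware acceleration available
    else
        echo qemu    # fall back to plain QEMU emulation
    fi
}

suggest_virt_type
```

Combined with the tool used earlier, `crudini --set /etc/nova/nova.conf libvirt virt_type "$(suggest_virt_type)"` would write the result into the configuration file.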

-

启动nova服务和后期管理虚机的libvirt服务并设为开机自启

systemctl start libvirtd openstack-nova-compute && systemctl enable libvirtd openstack-nova-compute- 1

控制节点

计算节点安装配置完成后再回到控制节点操作

先加载环境变量脚本source /admin-openrc.sh

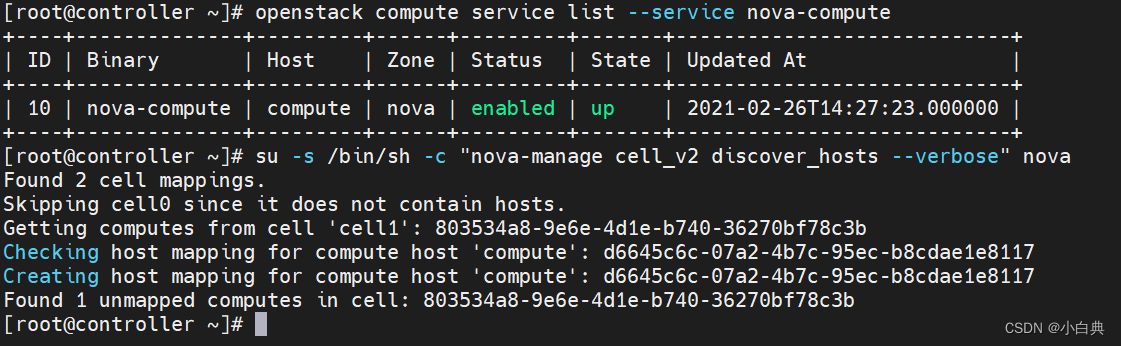

在控制节点查看nova-compute服务

openstack compute service list --service nova-compute

- 1

同步计算节点

su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova

- 1

Set the host discovery interval by editing the file /etc/nova/nova.conf with vim /etc/nova/nova.conf

    # Manual edit
    [scheduler]
    discover_hosts_in_cells_interval = 300
    # Command-line edit
    crudini --set /etc/nova/nova.conf scheduler discover_hosts_in_cells_interval 300

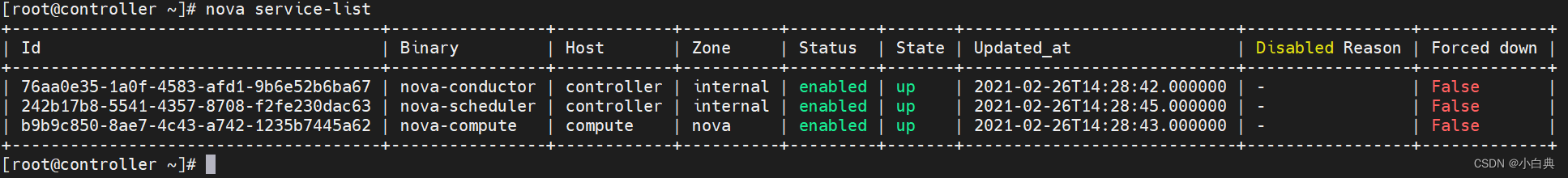

Running nova service-list again now shows an additional compute service

VI. Installing the Neutron service

Nodes to configure: controller node and compute node

Controller node

1. Create the database and grant privileges

- Connect to the database

    mysql -u root -p

- Create the neutron database

    CREATE DATABASE neutron;

- Grant privileges on the database, then exit

    GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY 'NEUTRON_DBPASS';
    GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'NEUTRON_DBPASS';
    exit;

2. Configure the user and endpoints

First load the environment variables with source /admin-openrc.sh

- Create the neutron user with the password NEUTRON_PASS

    openstack user create --domain default --password NEUTRON_PASS neutron

- Add the neutron user to the service project with the admin role

    # Assign the admin role to the neutron user on the service project
    openstack role add --project service --user neutron admin

3. Create the Neutron service and register the API

- Create the Neutron service

    openstack service create --name neutron --description "OpenStack Networking" network

- Create the Neutron service API endpoints

    openstack endpoint create --region RegionOne network public http://controller:9696
    openstack endpoint create --region RegionOne network internal http://controller:9696
    openstack endpoint create --region RegionOne network admin http://controller:9696

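4. Install and configure Neutron

Install the packages with the following command

    dnf install openstack-neutron openstack-neutron-ml2 openstack-neutron-linuxbridge ebtables -y

Configure either the public network or the private network; choose one of the two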

Controller node: public network

- Configure the Neutron component

Edit the file /etc/neutron/neutron.conf with vim /etc/neutron/neutron.conf

To edit by hand, set the following

    [database]
    connection = mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron
    [DEFAULT]
    core_plugin = ml2
    service_plugins =
    transport_url = rabbit://openstack:RABBIT_PASS@controller
    auth_strategy = keystone
    notify_nova_on_port_status_changes = true
    notify_nova_on_port_data_changes = true
    [keystone_authtoken]
    www_authenticate_uri = http://controller:5000
    auth_url = http://controller:5000
    memcached_servers = controller:11211
    auth_type = password
    project_domain_name = default
    user_domain_name = default
    project_name = service
    username = neutron
    password = NEUTRON_PASS
    [nova]
    auth_url = http://controller:5000
    auth_type = password
    project_domain_name = default
    user_domain_name = default
    region_name = RegionOne
    project_name = service
    username = nova
    password = NOVA_PASS
    [oslo_concurrency]
    lock_path = /var/lib/neutron/tmp

To make the same changes from the command line

    crudini --set /etc/neutron/neutron.conf database connection mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron
    crudini --set /etc/neutron/neutron.conf DEFAULT core_plugin ml2
    crudini --set /etc/neutron/neutron.conf DEFAULT service_plugins
    crudini --set /etc/neutron/neutron.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@controller
    crudini --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone
    crudini --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_status_changes true
    crudini --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_data_changes true
    crudini --set /etc/neutron/neutron.conf keystone_authtoken www_authenticate_uri http://controller:5000
    crudini --set /etc/neutron/neutron.conf keystone_authtoken auth_url http://controller:5000
    crudini --set /etc/neutron/neutron.conf keystone_authtoken memcached_servers controller:11211
    crudini --set /etc/neutron/neutron.conf keystone_authtoken auth_type password
    crudini --set /etc/neutron/neutron.conf keystone_authtoken project_domain_name default
    crudini --set /etc/neutron/neutron.conf keystone_authtoken user_domain_name default
    crudini --set /etc/neutron/neutron.conf keystone_authtoken project_name service
    crudini --set /etc/neutron/neutron.conf keystone_authtoken username neutron
    crudini --set /etc/neutron/neutron.conf keystone_authtoken password NEUTRON_PASS
    crudini --set /etc/neutron/neutron.conf nova auth_url http://controller:5000
    crudini --set /etc/neutron/neutron.conf nova auth_type password
    crudini --set /etc/neutron/neutron.conf nova project_domain_name default
    crudini --set /etc/neutron/neutron.conf nova user_domain_name default
    crudini --set /etc/neutron/neutron.conf nova region_name RegionOne
    crudini --set /etc/neutron/neutron.conf nova project_name service
    crudini --set /etc/neutron/neutron.conf nova username nova
    crudini --set /etc/neutron/neutron.conf nova password NOVA_PASS
    crudini --set /etc/neutron/neutron.conf oslo_concurrency lock_path /var/lib/neutron/tmp

- Configure the ML2 plug-in

Edit the file: vim /etc/neutron/plugins/ml2/ml2_conf.ini

Set the following values by hand:

[ml2]

type_drivers = flat,vlan

tenant_network_types =

mechanism_drivers = linuxbridge

extension_drivers = port_security

[ml2_type_flat]

flat_networks = provider

[securitygroup]

enable_ipset = true

Or apply the same values with commands:

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 type_drivers flat,vlan

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 tenant_network_types

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 mechanism_drivers linuxbridge

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 extension_drivers port_security

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_flat flat_networks provider

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_ipset true
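The per-key crudini calls above can also be driven from a short list, which allows a dry run before the file is touched. The following is only a sketch and not part of the original procedure: apply_ini_settings and the CRUDINI override variable are hypothetical names introduced here; only crudini --set itself comes from the steps above.

```shell
# Hypothetical helper: read "section key value" triples from stdin and
# apply each one to the given INI file with crudini --set.
# Setting CRUDINI=echo prints the arguments instead of changing the file.
apply_ini_settings() {
    local file=$1 section key value
    while read -r section key value; do
        # Skip blank lines and comment lines.
        case $section in ''|\#*) continue ;; esac
        "${CRUDINI:-crudini}" --set "$file" "$section" "$key" "$value" || return 1
    done
}

# Dry run (prints what would be set, changes nothing):
#   CRUDINI=echo apply_ini_settings /etc/neutron/plugins/ml2/ml2_conf.ini <<'EOF'
#   ml2 type_drivers flat,vlan
#   ml2 mechanism_drivers linuxbridge
#   securitygroup enable_ipset true
#   EOF
```

Dropping the CRUDINI=echo prefix applies the same list for real. A line with only a section and a key passes an empty value, which matches the empty tenant_network_types setting used for the public network.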

- Configure LinuxBridge

Edit the file: vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini

Set the following values by hand:

[linux_bridge]

physical_interface_mappings = provider:ens33

[vxlan]

enable_vxlan = false

[securitygroup]

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Or apply the same values with commands:

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini linux_bridge physical_interface_mappings provider:ens33

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan enable_vxlan false

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup enable_security_group true

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Load the br_netfilter module, then check that both sysctl values below are reported as 1:

modprobe br_netfilter

sysctl net.bridge.bridge-nf-call-iptables

sysctl net.bridge.bridge-nf-call-ip6tables
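The two sysctl calls above only print the current values, so it is easy to overlook a 0. A small check can make the expected result explicit. This is a sketch under stated assumptions: bridge_filter_ok is a hypothetical helper, and its optional directory argument exists only so the check can be tried against a test directory; by default it reads /proc/sys/net/bridge, where these two sysctl keys are exposed.

```shell
# Hypothetical helper: succeed only if both bridge netfilter switches are 1.
bridge_filter_ok() {
    local dir=${1:-/proc/sys/net/bridge} name value
    for name in bridge-nf-call-iptables bridge-nf-call-ip6tables; do
        value=$(cat "$dir/$name" 2>/dev/null)
        if [ "$value" != "1" ]; then
            echo "$name is '${value}', expected 1" >&2
            return 1
        fi
    done
    return 0
}

# Usage on a node:
#   modprobe br_netfilter && bridge_filter_ok && echo "bridge filtering is enabled"
```

modprobe loads the module only until the next reboot. One common way to load it at boot as well is a file under /etc/modules-load.d/ that contains the single line br_netfilter.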

- Configure DHCP

Edit the file: vim /etc/neutron/dhcp_agent.ini

Set the following values by hand:

[DEFAULT]

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

Or apply the same values with commands:

crudini --set /etc/neutron/dhcp_agent.ini DEFAULT interface_driver linuxbridge

crudini --set /etc/neutron/dhcp_agent.ini DEFAULT dhcp_driver neutron.agent.linux.dhcp.Dnsmasq

crudini --set /etc/neutron/dhcp_agent.ini DEFAULT enable_isolated_metadata true

- Configure the metadata agent

Edit the file: vim /etc/neutron/metadata_agent.ini

Set the following values by hand:

[DEFAULT]

nova_metadata_host = controller

metadata_proxy_shared_secret = METADATA_SECRET

Or apply the same values with commands:

crudini --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_host controller

crudini --set /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret METADATA_SECRET
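METADATA_SECRET is a placeholder, and the same value has to appear in metadata_agent.ini and in the [neutron] section of nova.conf. One way to produce a random value is sketched below. gen_secret is a hypothetical helper, and reading /dev/urandom through od is just one of several reasonable options.

```shell
# Hypothetical helper: print a random hex string (default 16 bytes = 32 hex digits).
gen_secret() {
    local bytes=${1:-16}
    # od prints the bytes as hex pairs; tr removes the spaces and newlines.
    od -An -N"$bytes" -tx1 /dev/urandom | tr -d ' \n'
    echo
}

# Usage:
#   SECRET=$(gen_secret)
#   crudini --set /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret "$SECRET"
#   crudini --set /etc/nova/nova.conf neutron metadata_proxy_shared_secret "$SECRET"
```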

- Configure Nova to use the networking service

Edit the file: vim /etc/nova/nova.conf

Set the following values by hand:

[neutron]

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = NEUTRON_PASS

service_metadata_proxy = true

metadata_proxy_shared_secret = METADATA_SECRET

Or apply the same values with commands:

crudini --set /etc/nova/nova.conf neutron auth_url http://controller:5000

crudini --set /etc/nova/nova.conf neutron auth_type password

crudini --set /etc/nova/nova.conf neutron project_domain_name default

crudini --set /etc/nova/nova.conf neutron user_domain_name default

crudini --set /etc/nova/nova.conf neutron region_name RegionOne

crudini --set /etc/nova/nova.conf neutron project_name service

crudini --set /etc/nova/nova.conf neutron username neutron

crudini --set /etc/nova/nova.conf neutron password NEUTRON_PASS

crudini --set /etc/nova/nova.conf neutron service_metadata_proxy true

crudini --set /etc/nova/nova.conf neutron metadata_proxy_shared_secret METADATA_SECRET

Controller node: private network

- Configure the Neutron component

Edit the file: vim /etc/neutron/neutron.conf

Set the following values by hand:

[database]
connection = mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron

[DEFAULT]
core_plugin = ml2
service_plugins = router
allow_overlapping_ips = true
transport_url = rabbit://openstack:RABBIT_PASS@controller
auth_strategy = keystone
notify_nova_on_port_status_changes = true
notify_nova_on_port_data_changes = true

[keystone_authtoken]
www_authenticate_uri = http://controller:5000
auth_url = http://controller:5000
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = neutron
password = NEUTRON_PASS

[nova]
auth_url = http://controller:5000
auth_type = password
project_domain_name = default
user_domain_name = default
region_name = RegionOne
project_name = service
username = nova
password = NOVA_PASS

[oslo_concurrency]
lock_path = /var/lib/neutron/tmp

Or apply the same values with commands:

crudini --set /etc/neutron/neutron.conf database connection mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron
crudini --set /etc/neutron/neutron.conf DEFAULT core_plugin ml2
crudini --set /etc/neutron/neutron.conf DEFAULT service_plugins router
crudini --set /etc/neutron/neutron.conf DEFAULT allow_overlapping_ips true
crudini --set /etc/neutron/neutron.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@controller
crudini --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone
crudini --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_status_changes true
crudini --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_data_changes true
crudini --set /etc/neutron/neutron.conf keystone_authtoken www_authenticate_uri http://controller:5000
crudini --set /etc/neutron/neutron.conf keystone_authtoken auth_url http://controller:5000
crudini --set /etc/neutron/neutron.conf keystone_authtoken memcached_servers controller:11211
crudini --set /etc/neutron/neutron.conf keystone_authtoken auth_type password
crudini --set /etc/neutron/neutron.conf keystone_authtoken project_domain_name default
crudini --set /etc/neutron/neutron.conf keystone_authtoken user_domain_name default
crudini --set /etc/neutron/neutron.conf keystone_authtoken project_name service
crudini --set /etc/neutron/neutron.conf keystone_authtoken username neutron
crudini --set /etc/neutron/neutron.conf keystone_authtoken password NEUTRON_PASS
crudini --set /etc/neutron/neutron.conf nova auth_url http://controller:5000
crudini --set /etc/neutron/neutron.conf nova auth_type password
crudini --set /etc/neutron/neutron.conf nova project_domain_name default
crudini --set /etc/neutron/neutron.conf nova user_domain_name default
crudini --set /etc/neutron/neutron.conf nova region_name RegionOne
crudini --set /etc/neutron/neutron.conf nova project_name service
crudini --set /etc/neutron/neutron.conf nova username nova
crudini --set /etc/neutron/neutron.conf nova password NOVA_PASS
crudini --set /etc/neutron/neutron.conf oslo_concurrency lock_path /var/lib/neutron/tmp

- Configure the ML2 plug-in

Edit the file: vim /etc/neutron/plugins/ml2/ml2_conf.ini

Set the following values by hand:

[ml2]

type_drivers = flat,vlan,vxlan

tenant_network_types = vxlan

mechanism_drivers = linuxbridge,l2population

extension_drivers = port_security

[ml2_type_flat]

flat_networks = provider

[ml2_type_vxlan]

vni_ranges = 1:1000

[securitygroup]

enable_ipset = true

Or apply the same values with commands:

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 type_drivers flat,vlan,vxlan

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 tenant_network_types vxlan

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 mechanism_drivers linuxbridge,l2population

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 extension_drivers port_security

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_flat flat_networks provider

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_vxlan vni_ranges 1:1000

crudini --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_ipset true
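In this configuration, tenant_network_types is vxlan and vxlan is also listed in type_drivers; each tenant network type should have its type driver loaded. The sketch below checks that relationship for two comma-separated lists. types_are_loaded is a hypothetical helper written for illustration and is not part of Neutron.

```shell
# Hypothetical check: every comma-separated entry of $2 (tenant_network_types)
# must also appear in $1 (type_drivers). An empty $2 passes.
types_are_loaded() {
    local drivers=",$1," wanted="$2" t
    local IFS=,
    for t in $wanted; do
        case $drivers in
            *",$t,"*) ;;
            *) echo "tenant network type '$t' has no type driver" >&2; return 1 ;;
        esac
    done
}

# Usage with the values from this section:
#   types_are_loaded "flat,vlan,vxlan" "vxlan" && echo "ok"
```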

- Configure LinuxBridge

Edit the file: vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini

Set the following values by hand:

[linux_bridge]

physical_interface_mappings = provider:ens33

[vxlan]

enable_vxlan = true

local_ip = 192.166.66.10

l2_population = true

[securitygroup]

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Or apply the same values with commands:

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini linux_bridge physical_interface_mappings provider:ens33

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan enable_vxlan true

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan local_ip 192.166.66.10

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan l2_population true

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup enable_security_group true

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.IptablesFirewallDriver
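local_ip has to be an address that is actually assigned to an interface on this node (192.166.66.10 on the controller in this setup). The sketch below checks that against the output of ip -o -4 addr show. addr_is_assigned is a hypothetical helper; it reads the listing from stdin so that it can also be tried on sample text.

```shell
# Hypothetical check: read `ip -o -4 addr show` output on stdin and succeed
# if the given IPv4 address is assigned to one of the listed interfaces.
addr_is_assigned() {
    awk -v want="$1" '
        $3 == "inet" {
            split($4, parts, "/")      # drop the prefix length such as /24
            if (parts[1] == want) found = 1
        }
        END { exit found ? 0 : 1 }
    '
}

# Usage on the controller node:
#   ip -o -4 addr show | addr_is_assigned 192.166.66.10 || echo "local_ip is not on this node"
```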

Run the following three commands; once the module is loaded, both sysctl values should be reported as 1:

modprobe br_netfilter

sysctl net.bridge.bridge-nf-call-iptables

sysctl net.bridge.bridge-nf-call-ip6tables

- Configure the L3 agent

Edit the file: vim /etc/neutron/l3_agent.ini, and set the following value by hand:

[DEFAULT]

interface_driver = linuxbridge

Or apply the same value with a command:

crudini --set /etc/neutron/l3_agent.ini DEFAULT interface_driver linuxbridge

- Configure DHCP

Edit the file: vim /etc/neutron/dhcp_agent.ini

Set the following values by hand:

[DEFAULT]

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

Or apply the same values with commands:

crudini --set /etc/neutron/dhcp_agent.ini DEFAULT interface_driver linuxbridge

crudini --set /etc/neutron/dhcp_agent.ini DEFAULT dhcp_driver neutron.agent.linux.dhcp.Dnsmasq

crudini --set /etc/neutron/dhcp_agent.ini DEFAULT enable_isolated_metadata true

- Configure the metadata agent

Edit the file: vim /etc/neutron/metadata_agent.ini

Set the following values by hand:

[DEFAULT]

nova_metadata_host = controller

metadata_proxy_shared_secret = METADATA_SECRET

memcache_servers = controller:11211

Or apply the same values with commands:

crudini --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_host controller

crudini --set /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret METADATA_SECRET

crudini --set /etc/neutron/metadata_agent.ini DEFAULT memcache_servers controller:11211

- Configure Nova to use the networking service

Edit the file: vim /etc/nova/nova.conf

Set the following values by hand:

[neutron]

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = NEUTRON_PASS

service_metadata_proxy = true

metadata_proxy_shared_secret = METADATA_SECRET

Or apply the same values with commands:

crudini --set /etc/nova/nova.conf neutron auth_url http://controller:5000

crudini --set /etc/nova/nova.conf neutron auth_type password

crudini --set /etc/nova/nova.conf neutron project_domain_name default

crudini --set /etc/nova/nova.conf neutron user_domain_name default

crudini --set /etc/nova/nova.conf neutron region_name RegionOne

crudini --set /etc/nova/nova.conf neutron project_name service

crudini --set /etc/nova/nova.conf neutron username neutron

crudini --set /etc/nova/nova.conf neutron password NEUTRON_PASS

crudini --set /etc/nova/nova.conf neutron service_metadata_proxy true

crudini --set /etc/nova/nova.conf neutron metadata_proxy_shared_secret METADATA_SECRET
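metadata_proxy_shared_secret is set twice, once in nova.conf under [neutron] and once in metadata_agent.ini under [DEFAULT], and the two values have to match. The sketch below reads both back and compares them. ini_get and metadata_secret_matches are hypothetical helpers; ini_get is a deliberately simple awk reader that returns the first match only and is not a replacement for crudini.

```shell
# Hypothetical helper: print the value of KEY in [SECTION] of an INI file.
# Only the first match is printed; nothing is printed if the key is absent.
ini_get() {
    awk -v section="$2" -v key="$3" '
        /^[ \t]*\[.*\][ \t]*$/ {
            # Section header: strip the brackets and remember the name.
            gsub(/^[ \t]*\[|\][ \t]*$/, "")
            current = $0
            next
        }
        current == section {
            pos = index($0, "=")
            if (pos == 0) next
            name = substr($0, 1, pos - 1)
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            if (name == key) {
                value = substr($0, pos + 1)
                gsub(/^[ \t]+|[ \t]+$/, "", value)
                print value
                exit
            }
        }
    ' "$1"
}

# Hypothetical check: succeed only if both files carry the same non-empty secret.
metadata_secret_matches() {
    local nova_conf=${1:-/etc/nova/nova.conf}
    local agent_conf=${2:-/etc/neutron/metadata_agent.ini}
    local a b
    a=$(ini_get "$nova_conf" neutron metadata_proxy_shared_secret)
    b=$(ini_get "$agent_conf" DEFAULT metadata_proxy_shared_secret)
    [ -n "$a" ] && [ "$a" = "$b" ]
}

# Usage on the controller node:
#   metadata_secret_matches || echo "metadata secrets differ or are missing"
```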

5. Verify the controller node installation

- Grant sudo privileges

Edit the file: vim /etc/neutron/neutron.conf, and set the following:

[privsep]
user = neutron
helper_command = sudo privsep-helper

Edit the file: vim /etc/sudoers.d/neutron, add the following line, then force-save and quit (:wq!):

neutron ALL = (root) NOPASSWD: ALL

- The networking service initialization scripts expect a symbolic link that points to /etc/neutron/plugins/ml2/ml2_conf.ini. Create the link:

ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini
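Running ln -s a second time fails because the link already exists, which gets in the way when the steps are repeated after restoring a snapshot or fixing a mistake. A repeatable variant is sketched below. ensure_link is a hypothetical helper; it relies on the -f and -n options of GNU ln to replace an existing link.

```shell
# Hypothetical helper: make LINK a symlink to TARGET, replacing an older link
# but refusing to overwrite a regular file or directory.
ensure_link() {
    local target=$1 link=$2
    if [ -e "$link" ] && [ ! -L "$link" ]; then
        echo "$link exists and is not a symlink; leaving it alone" >&2
        return 1
    fi
    ln -sfn "$target" "$link"
}

# Usage:
#   ensure_link /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini
```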

- Populate the database

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

- Restart the nova-api service

systemctl restart openstack-nova-api

- Start the networking services and enable them at boot. Both network types need the following two commands:

systemctl start neutron-server neutron-linuxbridge-agent neutron-dhcp-agent neutron-metadata-agent && \
systemctl enable neutron-server neutron-linuxbridge-agent neutron-dhcp-agent neutron-metadata-agent
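After starting several units at once, it helps to see which of them, if any, did not come up. The sketch below prints only the units that systemd does not report as active. inactive_units is a hypothetical helper, and the SYSTEMCTL override variable exists only so the helper can be tried without systemd; systemctl is-active --quiet is the real command it wraps.

```shell
# Hypothetical helper: print every unit that systemd does not report as active.
inactive_units() {
    local unit
    for unit in "$@"; do
        "${SYSTEMCTL:-systemctl}" is-active --quiet "$unit" || echo "$unit"
    done
}

# Usage on the controller node (no output means everything is running):
#   inactive_units neutron-server neutron-linuxbridge-agent \
#       neutron-dhcp-agent neutron-metadata-agent
```

For any unit that is printed, systemctl status and the logs under /var/log/neutron/ are the usual places to look next.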

-

对于私有网络,还应该启动L3服务并设为开机自启

systemctl restart neutron-l3-agent && systemctl enable neutron-l3-agent- 1

-

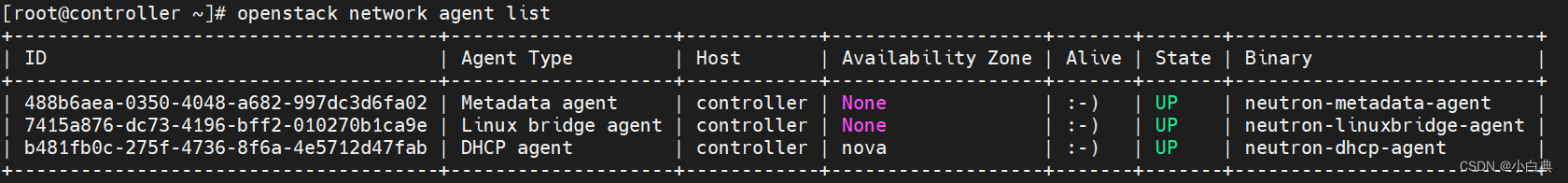

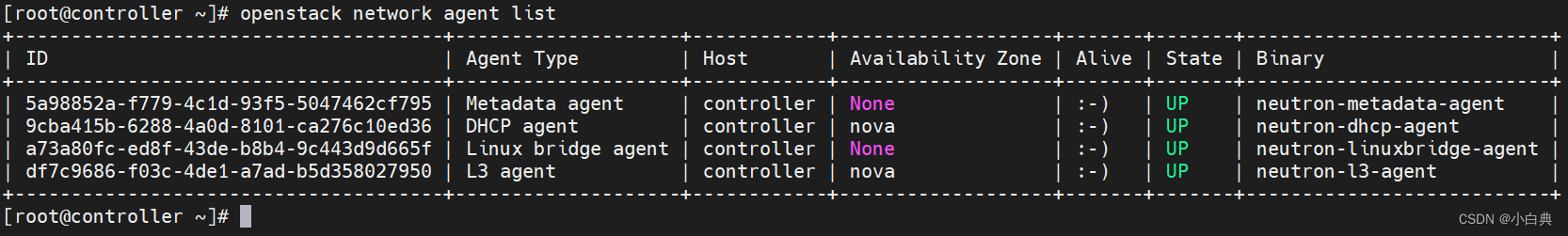

查看网络代理

openstack network agent list- 1

控制节点成功配置公有网络后,应输出如下图

控制节点成功配置私有网络后,应输出如下图

Compute node

- Install the required packages

dnf install openstack-neutron-linuxbridge ebtables ipset -y

Choose either the public or the private network configuration, but it must match the choice made on the controller node.

- Configure the networking component

Edit the file: vim /etc/neutron/neutron.conf, and set the following values by hand:

[DEFAULT]
transport_url = rabbit://openstack:RABBIT_PASS@controller
auth_strategy = keystone

[keystone_authtoken]
www_authenticate_uri = http://controller:5000
auth_url = http://controller:5000
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = neutron
password = NEUTRON_PASS

[oslo_concurrency]
lock_path = /var/lib/neutron/tmp

Or apply the same values with commands:

crudini --set /etc/neutron/neutron.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@controller
crudini --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone
crudini --set /etc/neutron/neutron.conf keystone_authtoken www_authenticate_uri http://controller:5000
crudini --set /etc/neutron/neutron.conf keystone_authtoken auth_url http://controller:5000
crudini --set /etc/neutron/neutron.conf keystone_authtoken memcached_servers controller:11211
crudini --set /etc/neutron/neutron.conf keystone_authtoken auth_type password
crudini --set /etc/neutron/neutron.conf keystone_authtoken project_domain_name default
crudini --set /etc/neutron/neutron.conf keystone_authtoken user_domain_name default
crudini --set /etc/neutron/neutron.conf keystone_authtoken project_name service
crudini --set /etc/neutron/neutron.conf keystone_authtoken username neutron
crudini --set /etc/neutron/neutron.conf keystone_authtoken password NEUTRON_PASS
crudini --set /etc/neutron/neutron.conf oslo_concurrency lock_path /var/lib/neutron/tmp

Compute node: public network

- Configure LinuxBridge

Edit the file: vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini, and set the following values by hand:

[linux_bridge]
physical_interface_mappings = provider:ens33

[vxlan]
enable_vxlan = false

[securitygroup]
enable_security_group = true
firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Or apply the same values with commands:

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini linux_bridge physical_interface_mappings provider:ens33
crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan enable_vxlan false
crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup enable_security_group true
crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Run the following three commands; once the module is loaded, both sysctl values should be reported as 1:

modprobe br_netfilter
sysctl net.bridge.bridge-nf-call-iptables
sysctl net.bridge.bridge-nf-call-ip6tables

Compute node: private network

- Configure LinuxBridge

Edit the file: vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini, and set the following values by hand:

[linux_bridge]
physical_interface_mappings = provider:ens33

[vxlan]
enable_vxlan = true
local_ip = 192.166.66.11
l2_population = true

[securitygroup]
enable_security_group = true
firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Or apply the same values with commands:

crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini linux_bridge physical_interface_mappings provider:ens33
crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan enable_vxlan true
crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan local_ip 192.166.66.11
crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan l2_population true
crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup enable_security_group true
crudini --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Run the following three commands; once the module is loaded, both sysctl values should be reported as 1:

modprobe br_netfilter
sysctl net.bridge.bridge-nf-call-iptables
sysctl net.bridge.bridge-nf-call-ip6tables

- Configure Nova to use the networking service

Edit the file: vim /etc/nova/nova.conf, and set the following values by hand:

[neutron]
auth_url = http://controller:5000
auth_type = password
project_domain_name = default
user_domain_name = default
region_name = RegionOne
project_name = service
username = neutron
password = NEUTRON_PASS

Or apply the same values with commands:

crudini --set /etc/nova/nova.conf neutron auth_url http://controller:5000
crudini --set /etc/nova/nova.conf neutron auth_type password
crudini --set /etc/nova/nova.conf neutron project_domain_name default
crudini --set /etc/nova/nova.conf neutron user_domain_name default
crudini --set /etc/nova/nova.conf neutron region_name RegionOne
crudini --set /etc/nova/nova.conf neutron project_name service
crudini --set /etc/nova/nova.conf neutron username neutron
crudini --set /etc/nova/nova.conf neutron password NEUTRON_PASS

6. Verify the compute node installation

- Grant sudo privileges

Edit the file: vim /etc/neutron/neutron.conf, and set the following:

[privsep]
user = neutron
helper_command = sudo privsep-helper

Edit the file: vim /etc/sudoers.d/neutron, add the following line, then force-save and quit (:wq!):

neutron ALL = (root) NOPASSWD: ALL

- Set SELinux to permissive mode

Edit the file: vim /etc/selinux/config

# Change the value of SELINUX, then save and quit
SELINUX=permissive

# Run the following command so the change takes effect immediately
setenforce 0
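The edit to /etc/selinux/config can also be made without opening an editor. The sketch below rewrites only the SELINUX= line and leaves SELINUXTYPE= and the comment lines untouched. set_selinux_mode is a hypothetical helper; it assumes GNU sed (for the -i option), and its optional second argument is there so it can be tried on a copy of the file first.

```shell
# Hypothetical helper: rewrite the SELINUX= line of an SELinux config file.
set_selinux_mode() {
    local mode=$1 file=${2:-/etc/selinux/config}
    case $mode in
        enforcing|permissive|disabled) ;;
        *) echo "unknown SELinux mode: $mode" >&2; return 1 ;;
    esac
    # "^SELINUX=" does not match SELINUXTYPE= or commented-out lines.
    sed -i "s/^SELINUX=.*/SELINUX=$mode/" "$file"
}

# Usage:
#   set_selinux_mode permissive && setenforce 0
```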

-

重启计算服务

systemctl restart openstack-nova-compute- 1

-

启动LinuxBridge代理并设为开机自启

systemctl start neutron-linuxbridge-agent && systemctl enable neutron-linuxbridge-agent- 1

-

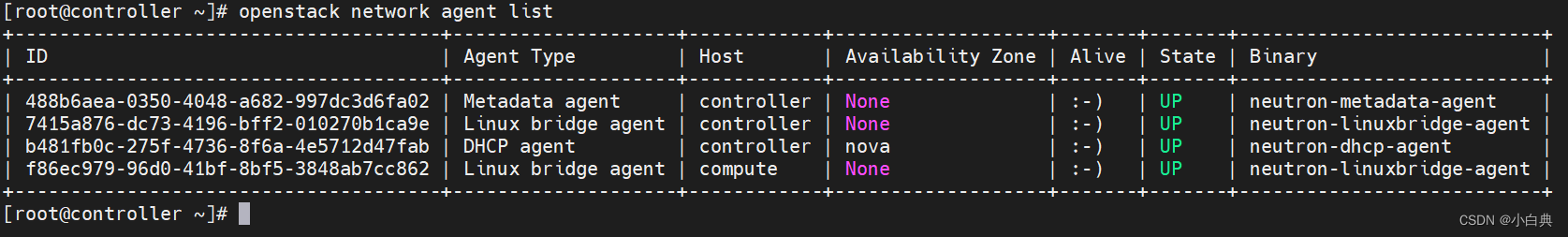

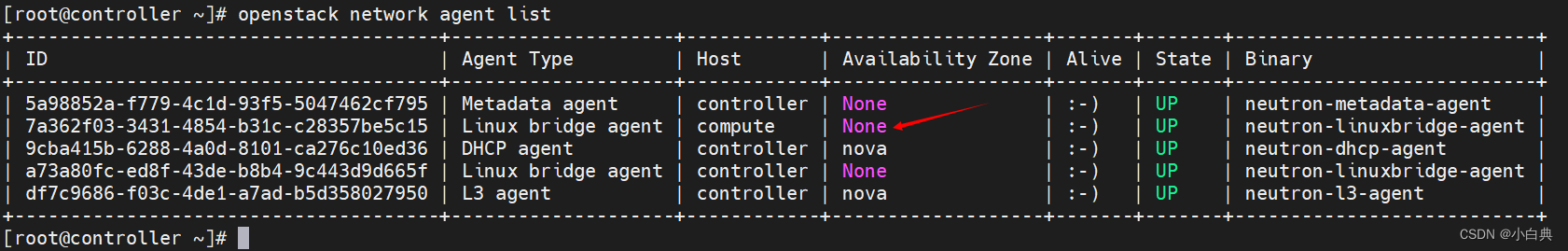

回到控制节点再次执行查看网络代理命令

openstack network agent list- 1

计算节点配置公有网络后,应输出如下图,多出一个计算节点的网桥

计算节点配置私有网络后,应输出如下图,同样也是多出一个计算节点的网桥

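VII. Installing the Horizon service

Node to configure: the controller node or the compute node

This example installs Horizon on the compute node, with both nodes configured for the private network.

1. Install the package

dnf install openstack-dashboard -y

2. Configure Horizon

Edit the file: vim /etc/openstack-dashboard/local_settings, and change it as follows: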

# Point the dashboard at the OpenStack services on the controller node
OPENSTACK_HOST = "controller"

# Hosts allowed to access the dashboard; '*' allows every host
ALLOWED_HOSTS = ['*']

# Use memcached for session storage
SESSION_ENGINE = 'django.contrib.sessions.backends.cache'
CACHES = {
    'default': {
        'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',
        'LOCATION': 'controller:11211',
    }
}

# Enable Identity API version 3
OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST

TIME_ZONE = "Asia/Shanghai"

# The settings above already exist and only need editing; the settings below are new

# Enable support for domains
OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

# Configure the API versions
OPENSTACK_API_VERSIONS = {
    "identity": 3,
    "image": 2,
    "volume": 3,
}

# Default domain
OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = "Default"

# Default role
OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"

# Enable layer-3 networking services; for the public network, disable them by changing True to False
OPENSTACK_NEUTRON_NETWORK = {
    'enable_router': True,
    'enable_quotas': True,
    'enable_distributed_router': True,
    'enable_ha_router': True,
    'enable_lb': True,
    'enable_firewall': True,
    'enable_vpn': True,
    'enable_fip_topology_check': True,
}

Edit the file: vim /etc/httpd/conf.d/openstack-dashboard.conf, and add the following line:

WSGIApplicationGroup %{GLOBAL}

Regenerate the Apache configuration file for the dashboard

# Run the following two commands

cd /usr/share/openstack-dashboard

python3 manage.py make_web_conf --apache > /etc/httpd/conf.d/openstack-dashboard.conf

Create a symbolic link for the policy files (policy.json)

ln -s /etc/openstack-dashboard /usr/share/openstack-dashboard/openstack_dashboard/conf

3. Verify the installation

Restart the web server and the session storage service

# On the compute node: start httpd and enable it at boot

systemctl start httpd && systemctl enable httpd

# On the controller node: restart the memcached session storage service

systemctl restart memcached

- 1

- 2

- 3

- 4

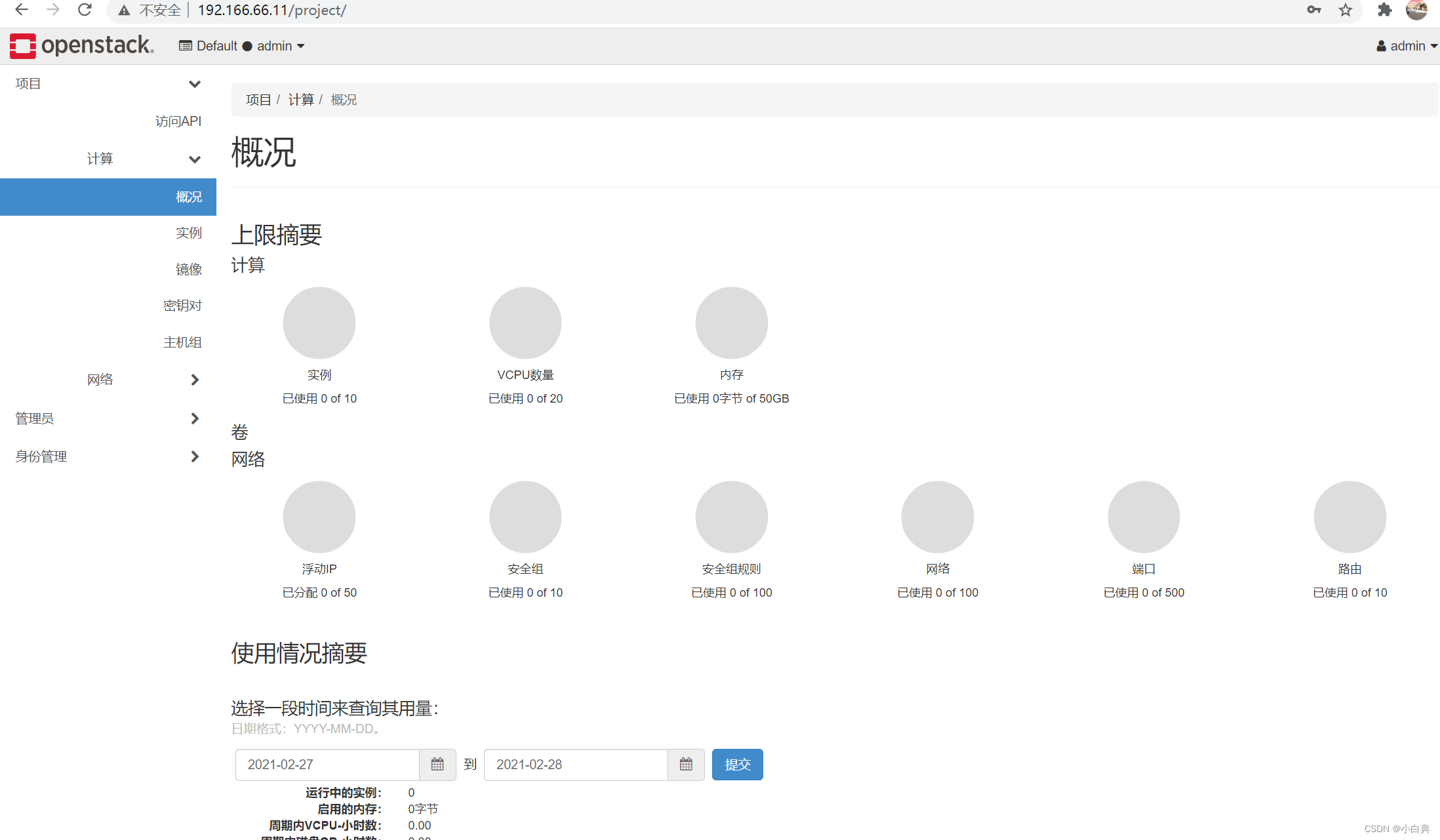

4. 访问Dashboard

http://192.166.66.11

- 1

成功访问页面如下

文件配置正确会自动填入域Default,否则可能配置有问题,手动输入也会登录失败,若不记得用户名密码可以查看环境变量脚本

输入用户名密码就可以成功访问啦

此次安装除SQL数据库外,其它全部使用默认密码!若自己设置密码一定要记清楚,密码太多容易搞错,安装过程一定要细心,用虚拟机安装要多使用快照功能

其它方式安装可以参考这三篇文章

Centos 8使用devstack快速安装openstack最新版

Centos 8中使用Packstack(RDO)快速安装openstack Victoria版

Ubuntu 20使用devstack快速安装openstack最新版