Kafka Error Handling

1. Notes on a Kafka startup error

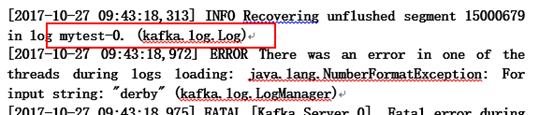

After Kafka was stopped, it failed with the following error when started again:

[2017-10-27 09:43:18,313] INFO Recovering unflushed segment 15000679 in log mytest-0. (kafka.log.Log)

[2017-10-27 09:43:18,972] ERROR There was an error in one of the threads during logs loading: java.lang.NumberFormatException: For input string: "derby" (kafka.log.LogManager)

[2017-10-27 09:43:18,975] FATAL [Kafka Server 0], Fatal error during KafkaServer startup. Prepare to shutdown (kafka.server.KafkaServer)

java.lang.NumberFormatException: For input string: "derby"

at java.lang.NumberFormatException.forInputString(NumberFormatException.java:65)

at java.lang.Long.parseLong(Long.java:589)

at java.lang.Long.parseLong(Long.java:631)

at scala.collection.immutable.StringLike$class.toLong(StringLike.scala:277)

at scala.collection.immutable.StringOps.toLong(StringOps.scala:29)

at kafka.log.Log$.offsetFromFilename(Log.scala:1648)

at kafka.log.Log$$anonfun$loadSegmentFiles$3.apply(Log.scala:284)

at kafka.log.Log$$anonfun$loadSegmentFiles$3.apply(Log.scala:272)

at scala.collection.TraversableLike$WithFilter$$anonfun$foreach$1.apply(TraversableLike.scala:733)

Going straight to the log, one error stands out:

ERROR There was an error in one of the threads during logs loading: java.lang.NumberFormatException: For input string: "derby" (kafka.log.LogManager)

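Read literally, one of the threads failed while loading the logs: a java.lang.NumberFormatException was thrown because the input string "derby" could not be parsed as a number.

What on earth?

First, consider what Kafka has to do on a restart:

When a Kafka broker starts, it reloads the data of every existing topic partition. Normally it logs that each one has finished loading.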

INFO Recovering unflushed segment 8790240 in log userlog-2. (kafka.log.Log)

INFO Loading producer state from snapshot file 00000000000008790240.snapshot for partition userlog-2 (kafka.log.ProducerStateManager)

INFO Loading producer state from offset 10464422 for partition userlog-2 with message format version 2 (kafka.log.Log)

INFO Loading producer state from snapshot file 00000000000010464422.snapshot for partition userlog-2 (kafka.log.ProducerStateManager)

INFO Completed load of log userlog-2 with 2 log segments, log start offset 6223445 and log end offset 10464422 in 4460 ms (kafka.log.Log)

But when recovery fails for the data of some topic, the broker shuts down and reports:

ERROR There was an error in one of the threads during logs loading: java.lang.NumberFormatException: For input string: "derby" (kafka.log.LogManager)

So the problem lies in the topic data. But what exactly is wrong with it?

Go and look at the directory where Kafka stores topic data. The path is set in server.properties:

log.dirs=/data/kafka/kafka-logs

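1) Look at the line just before the error in the log:

The INFO entry immediately before the error (Recovering unflushed segment 15000679 in log mytest-0) shows that the failure happened while loading mytest-0, that is, partition 0 of topic mytest. The directory for that partition contained a file named derby.log, which does not belong there. Delete it and restart the service.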

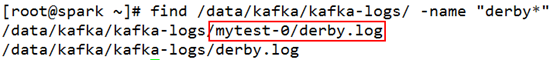

2) Search the whole data directory to make sure there are no similar files

#cd /data/kafka/kafka-logs

#find /data/kafka/kafka-logs/ -name "derby*"

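The search shows a derby.log file under mytest-0, which is invalid. The Kafka broker expects the name of every data file, up to the first dot, to be a Long (the segment's base offset); because derby.log ends in .log, Kafka treats it as a segment file and fails when it tries to parse "derby" as an offset. Delete the file and restart Kafka.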
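The manual `find` above only catches files that start with "derby". A more general check can be sketched as follows. This is a minimal sketch, not Kafka's own logic: the function names are hypothetical, the suffix list is an assumption about which files the broker parses as segment files, and the digit-only test is a stricter approximation of Java's `Long.parseLong`. It only reports files and deletes nothing.

```python
import os
import re

# Assumption: suffixes the broker treats as offset-named segment files.
SEGMENT_SUFFIXES = (".log", ".index", ".timeindex")
_OFFSET_RE = re.compile(r"[0-9]+")


def has_numeric_offset(filename):
    """Return True if the part of the name before the first dot is all digits."""
    base = filename.split(".", 1)[0]
    return _OFFSET_RE.fullmatch(base) is not None


def find_suspect_files(log_dir):
    """Walk log_dir and list segment-like files whose name is not a numeric offset."""
    suspects = []
    for root, _dirs, files in os.walk(log_dir):
        for name in files:
            if name.endswith(SEGMENT_SUFFIXES) and not has_numeric_offset(name):
                suspects.append(os.path.join(root, name))
    return sorted(suspects)


if __name__ == "__main__":
    # Path taken from the log.dirs setting shown earlier.
    for path in find_suspect_files("/data/kafka/kafka-logs"):
        print(path)
```

Reviewing the printed paths by hand before removing anything keeps the check safe to run on a live data directory.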

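2. Notes on a Kafka and ZooKeeper error

Kafka and ZooKeeper both started normally, but the log shows that the connection was dropped shortly after it was established. The error output: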

[2017-10-27 15:06:08,981] INFO Established session 0x15f5c88c014000a with negotiated timeout 240000 for client /127.0.0.1:33494 (org.apache.zookeeper.server.ZooKeeperServer)

[2017-10-27 15:06:08,982] INFO Processed session termination for sessionid: 0x15f5c88c014000a (org.apache.zookeeper.server.PrepRequestProcessor)

[2017-10-27 15:06:08,984] WARN caught end of stream exception (org.apache.zookeeper.server.NIOServerCnxn)

EndOfStreamException: Unable to read additional data from client sessionid 0x15f5c88c014000a, likely client has closed socket

at org.apache.zookeeper.server.NIOServerCnxn.doIO(NIOServerCnxn.java:239)

at org.apache.zookeeper.server.NIOServerCnxnFactory.run(NIOServerCnxnFactory.java:203)

at java.lang.Thread.run(Thread.java:745)

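Reading the log literally: the first entry says session 0x15f5c88c014000a was established with a negotiated timeout of 240000 ms, i.e. 240 seconds (what?). The second says session 0x15f5c88c014000a was terminated, which I took to be the result of a timeout. The third says no more data could be read from session 0x15f5c88c014000a, likely because the client had closed the socket (nothing can be read once the connection is gone). That completes the log analysis: it looked like the session was dropped because of a timeout, so the fix was to raise the session timeouts.

The configured timeout was too short: the Consumer closed the connection before ZooKeeper had finished reading its data!

Solution:

Edit Kafka's server.properties file: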

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=600000

zookeeper.session.timeout.ms=400000

That is usually enough. If you want to be extra safe, adjust the ZooKeeper configuration file as well:

# disable the per-ip limit on the number of connections since this is a non-production config

maxClientCnxns=1000

tickTime=120000
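The two settings interact: ZooKeeper does not accept an arbitrary session timeout from the client. By default it keeps the negotiated value between 2 × tickTime and 20 × tickTime, unless minSessionTimeout or maxSessionTimeout is set explicitly. The sketch below illustrates that clamping under those default assumptions; the function name is hypothetical and is not part of any ZooKeeper API.

```python
def negotiated_session_timeout(requested_ms, tick_time_ms, min_ms=None, max_ms=None):
    """Clamp the client's requested session timeout to the server-side bounds.

    Assumes ZooKeeper's defaults: lower bound 2 * tickTime, upper bound 20 * tickTime,
    unless explicit bounds are passed in.
    """
    lower = 2 * tick_time_ms if min_ms is None else min_ms
    upper = 20 * tick_time_ms if max_ms is None else max_ms
    return max(lower, min(requested_ms, upper))


if __name__ == "__main__":
    # Values from the configuration above: the request fits inside the bounds.
    print(negotiated_session_timeout(400000, 120000))
    # A short request is raised to the lower bound of 2 * tickTime.
    print(negotiated_session_timeout(6000, 120000))
    # With a small, illustrative tickTime the same request is capped at 20 * tickTime.
    print(negotiated_session_timeout(400000, 2000))
```

Under these assumptions, tickTime=120000 gives a lower bound of 240000 ms, the same figure that appears as the negotiated timeout in the log above. It also means that raising zookeeper.session.timeout.ms in Kafka alone has no effect beyond 20 × tickTime.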

3. Notes on a Kafka consumer error

Resolving kafka.common.ConsumerRebalanceFailedException

While a consumer was consuming Kafka messages, the following error appeared:

kafka.common.ConsumerRebalanceFailedException: migart_nginx-1446432618163-2746a209 can't rebalance after 4 retries

at kafka.consumer.ZookeeperConsumerConnector$ZKRebalancerListener.syncedRebalance(ZookeeperConsumerConnector.scala:432)

at kafka.consumer.ZookeeperConsumerConnector.kafka$consumer$ZookeeperConsumerConnector$$reinitializeConsumer(ZookeeperConsumerConnector.scala:722)

at kafka.consumer.ZookeeperConsumerConnector.consume(ZookeeperConsumerConnector.scala:212)

at kafka.javaapi.consumer.ZookeeperConsumerConnector.createMessageStreams(ZookeeperConsumerConnector.scala:80)

at kafka.javaapi.consumer.ZookeeperConsumerConnector.createMessageStreams(ZookeeperConsumerConnector.scala:92)

at com.symboltech.mine.ConsumerUtil.start(ConsumerUtil.java:40)

at com.symboltech.mine.KafkaConsumer.sdf(KafkaConsumer.java:58)

Solution:

This is a problem with the consumer's ZooKeeper settings. Edit Kafka's consumer.properties:

zookeeper.session.timeout.ms=10000

zookeeper.connection.timeout.ms=10000

# Backoff between two consumer rebalance retries

rebalance.backoff.ms=3000

# Number of consumer rebalance retries

rebalance.max.retries=10

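Note:

rebalance.backoff.ms * rebalance.max.retries must be greater than zookeeper.session.timeout.ms. Otherwise the retries can run out before the old session has expired, while a new consumer is already joining.

Official explanation: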

consumer rebalancing fails (you will see ConsumerRebalanceFailedException): This is due to conflicts when two consumers are trying to own the same topic partition. The log will show you what caused the conflict (search for "conflict in ").

If your consumer subscribes to many topics and your ZK server is busy, this could be caused by consumers not having enough time to see a consistent view of all consumers in the same group. If this is the case, try Increasing rebalance.max.retries and rebalance.backoff.ms.

Another reason could be that one of the consumers is hard killed. Other consumers during rebalancing won't realize that consumer is gone after zookeeper.session.timeout.ms time. In the case, make sure that rebalance.max.retries * rebalance.backoff.ms > zookeeper.session.timeout.ms.
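The rule above is easy to verify mechanically. The following is a minimal sketch that parses a consumer.properties-style text and checks the inequality; both function names are hypothetical helpers written for this illustration, and the parser handles only plain `key=value` lines and `#` comments.

```python
def parse_properties(text):
    """Parse simple key=value lines, skipping blank lines and # comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def rebalance_window_ok(props):
    """Check rebalance.max.retries * rebalance.backoff.ms > zookeeper.session.timeout.ms."""
    retries = int(props["rebalance.max.retries"])
    backoff_ms = int(props["rebalance.backoff.ms"])
    session_ms = int(props["zookeeper.session.timeout.ms"])
    return retries * backoff_ms > session_ms


if __name__ == "__main__":
    fixed = parse_properties(
        "zookeeper.session.timeout.ms=10000\n"
        "rebalance.backoff.ms=3000\n"
        "rebalance.max.retries=10\n"
    )
    print(rebalance_window_ok(fixed))
```

With the values from the fix (10 retries, 3000 ms backoff, 10000 ms session timeout) the retry window is 30000 ms, so the check passes. Four retries at 2000 ms against the same session timeout would give only 8000 ms and fail it.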

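4. Common Kafka configuration. Check the official documentation: some parameters may be deprecated in certain versions, so the values here are for reference only.

Broker configuration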

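# A non-negative integer that uniquely identifies the broker

broker.id 0

# Path(s) where Kafka persists its data; multiple paths may be given, separated by commas

log.dirs /tmp/kafka-logs

# Port on which the broker accepts connections

port 9092

# ZooKeeper connection string: a comma-separated list of hostname:port entries

zookeeper.connect

# Maximum size of a single message; the consumer's maximum fetch size must be slightly larger than this value

message.max.bytes 1000000

# Number of threads that receive network requests

num.network.threads 3

# Number of I/O threads that execute requests

num.io.threads 8

# Number of threads for background tasks such as file deletion

background.threads 10

# Maximum number of pending requests that can be queued

queued.max.requests 500

# IP address of this machine

host.name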

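# Default number of partitions

num.partitions 1

# Segment file size; the log rolls to a new segment once this is exceeded

log.segment.bytes 1024 * 1024 * 1024

# Even if a segment has not reached the size limit, it is rolled after this much time (the value shown is in hours)

log.roll.{ms,hours} 168

# How long logs are retained

log.retention.{ms,minutes,hours}

# Whether to create a topic automatically when it does not exist

auto.create.topics.enable true

# Default replication factor for partitions

default.replication.factor 1

# If a follower sends no fetch request within this time, it is considered dead and removed from the ISR

replica.lag.time.max.ms 10000

# If a follower falls behind the leader by more than this many messages, it is removed from the ISR

replica.lag.max.messages 4000

# Maximum size of messages that can be fetched from the leader

replica.fetch.max.bytes 1024 * 1024

# Number of fetcher threads that pull messages from the leader

num.replica.fetchers 1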

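# ZK session timeout

zookeeper.session.timeout.ms 6000

# Maximum time allowed for establishing a ZK connection

zookeeper.connection.timeout.ms

# How far a ZK follower may lag behind the ZK leader

zookeeper.sync.time.ms 2000

# Whether topics may be deleted

delete.topic.enable false

Producer configuration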

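# Controls how many brokers must acknowledge a message: 0 means no acknowledgement is required, 1 means the leader replica must acknowledge, -1 means all replicas in the ISR must acknowledge

request.required.acks 0

# Maximum time to wait for the ACK after a request is sent (timeout)

request.timeout.ms 10000

# Send mode; the default is sync. In async mode messages are accumulated up to a certain count or for a certain time and then sent in batches by a separate thread. Throughput is better, but the risk of losing data increases

producer.type sync

# Message serializer class; the default handles byte[] payloads

serializer.class kafka.serializer.DefaultEncoder

# Partitioner class; the default hashes the key

partitioner.class kafka.producer.DefaultPartitioner

# Compression codec for sent messages: none|gzip|snappy

compression.codec none

# Topics whose messages should be compressed

compressed.topics null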

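# Number of times a failed send is retried. A send may actually have succeeded with only the ACK lost to a network problem, in which case the retry produces duplicate messages

message.send.max.retries 3

# Time to wait before starting another metadata refresh

retry.backoff.ms 100

# Metadata refresh interval: if negative, metadata is refreshed only on failure; if 0, it is refreshed after every send; if positive, it is refreshed periodically

topic.metadata.refresh.interval.ms 600 * 1000

# In async mode, maximum time data is buffered before it is sent to the broker

queue.buffering.max.ms 5000

# In async mode, maximum number of messages the producer buffers

queue.buffering.max.messages 10000

# 0 means enqueue if the queue is not full and drop immediately if it is full; -1 means block unconditionally and never drop

queue.enqueue.timeout.ms -1

# Number of messages that make up one batch; a batch is also sent once queue.buffering.max.ms elapses

batch.num.messages 200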
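The relationship between batch.num.messages and queue.buffering.max.ms described above amounts to a "whichever comes first" rule. The sketch below only illustrates that rule; it is not the producer's actual implementation, and the function name and the choice to skip empty queues are assumptions.

```python
def should_flush(queued_messages, elapsed_ms,
                 batch_num_messages=200, queue_buffering_max_ms=5000):
    """Decide whether an async batch is due: enough messages, or buffered long enough."""
    if queued_messages <= 0:
        # Assumption: nothing to send, so there is no batch to flush.
        return False
    return (queued_messages >= batch_num_messages
            or elapsed_ms >= queue_buffering_max_ms)


if __name__ == "__main__":
    print(should_flush(200, 10))    # batch size reached
    print(should_flush(3, 5000))    # buffering time reached
    print(should_flush(3, 10))      # neither condition met yet
```

Larger values for either setting raise throughput but also raise the number of messages that are held only in memory, which is the data-loss risk noted for async mode.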

Consumer configuration

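# Consumer group this consumer process belongs to; within one group, a partition is consumed by only one consumer

group.id

# Maximum number of message bytes a fetch request can pull for one partition; must be greater than or equal to the largest message size Kafka allows

fetch.message.max.bytes 1024 * 1024

# Whether to periodically auto-commit the offsets of messages already fetched by the consumer

auto.commit.enable true

# Interval at which offsets are auto-committed to ZooKeeper

auto.commit.interval.ms 60 * 1000

# Number of rebalance retries

rebalance.max.retries 4

# Backoff between two rebalance retries

rebalance.backoff.ms 2000

# Time to wait before looking up a partition's leader again

refresh.leader.backoff.ms 200

# What to do when ZooKeeper holds no valid initial offset: smallest|largest

auto.offset.reset largest

# ZooKeeper session timeout; if it expires, the consumer is considered dead and a rebalance may be triggered

zookeeper.session.timeout.ms 6000

# Maximum time the client waits for the ZooKeeper connection to be established

zookeeper.connection.timeout.ms 6000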
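The list states that the consumer's fetch.message.max.bytes must not be smaller than the broker's message.max.bytes. A minimal sketch of that check, using the values exactly as they are written in this list (including products such as `1024 * 1024`), might look like this. Both function names are hypothetical helpers for illustration.

```python
def parse_size(expr):
    """Evaluate a value written as an integer or a product such as '1024 * 1024'."""
    result = 1
    for factor in expr.split("*"):
        result *= int(factor.strip())
    return result


def consumer_fetch_is_large_enough(message_max_bytes, fetch_message_max_bytes):
    """Return True if the consumer can fetch the largest message the broker accepts."""
    return parse_size(fetch_message_max_bytes) >= parse_size(message_max_bytes)


if __name__ == "__main__":
    # Values as listed above for message.max.bytes and fetch.message.max.bytes.
    print(consumer_fetch_is_large_enough("1000000", "1024 * 1024"))
```

With the listed values the consumer limit (1048576 bytes) exceeds the broker limit (1000000 bytes), so the check passes. Raising message.max.bytes on the broker without raising the consumer limit would make it fail.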

Note: the configuration parameters are taken from CSDN, http://blog.csdn.net/huanggang028/article/details/47830529