Deploying Kubernetes (K8s) from Binaries

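I. Overview

1. Two ways to deploy a K8s cluster

- kubeadm: kubeadm is a K8s deployment tool. It provides kubeadm init and kubeadm join for bringing up a Kubernetes cluster quickly.

- Binary deployment: download the release binaries and deploy each component by hand to assemble the Kubernetes cluster. (This is the method used here.)

- Summary: kubeadm lowers the barrier to deployment but hides many details, which makes problems hard to troubleshoot. If you want more control, deploy Kubernetes from binaries. It takes more work up front, but it makes later maintenance easier.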

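2. Environment preparation

Server requirements:

- Recommended minimum hardware: 2 CPU cores, 2 GB RAM, 30 GB disk

- The servers should ideally have internet access, because images are pulled from the network. If a server cannot reach the internet, download the required images in advance and import them.

Software versions:

| Software | Version |
|---|---|
| Operating system | CentOS release 7.6 |
| Container engine | Docker CE 19 |
| Kubernetes | Kubernetes v1.20 |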

Server resources:

| Role | Resources | IP address | Notes |
|---|---|---|---|
| K8S-Master01, etcd1 | CPU: 4C, RAM: 12G, disk: 100G | 10.0.33.190 | K8s master node 1 |
| K8S-Master02, etcd2 | CPU: 4C, RAM: 12G, disk: 100G | 10.0.33.191 | K8s master node 2 |
| K8S-Node01, etcd3 | CPU: 4C, RAM: 12G, disk: 100G | 10.0.33.192 | K8s worker node 1 |
| K8S-Node02 | CPU: 4C, RAM: 12G, disk: 100G | 10.0.33.193 | K8s worker node 2 |
| Nginx (master), Harbor, Jenkins | CPU: 2C, RAM: 8G, disk: 500G | 10.0.33.194, 10.0.33.196 (VIP) | Nginx primary node |
| Nginx, Gitlab | CPU: 2C, RAM: 8G, disk: 100G | 10.0.33.195 | Nginx backup node |

Other:

Four virtual IPs: 10.0.33.196, 10.0.33.197, 10.0.33.198, 10.0.33.199

10.0.33.196 is used for K8s cluster high availability; the remaining three virtual IPs are reserved for adding nodes later.

3. System initialization

Initialize the operating system on the K8s servers, both master and worker nodes.

Servers covered by this step: 10.0.33.190 - 10.0.33.194

# Stop and disable the firewall
systemctl stop firewalld
systemctl disable firewalld

# Disable SELinux
setenforce 0                                          # temporary
sed -i 's/enforcing/disabled/' /etc/selinux/config    # permanent

# Disable swap
swapoff -a                             # temporary
sed -ri 's/.*swap.*/#&/' /etc/fstab    # permanent

# Synchronize the system time (needs internet access; without it, use another source such as an internal time server)
yum install ntpdate -y      # install the ntpdate command
ntpdate time.windows.com    # time.windows.com is the time server; replace it as needed

# Fix the time zone if it is wrong
timedatectl set-timezone 'Asia/Shanghai'

# Add host entries; adjust them to your actual IPs
cat >> /etc/hosts << EOF
10.0.33.190 k8s-master1
10.0.33.191 k8s-master2
10.0.33.192 k8s-node1
10.0.33.193 k8s-node2
EOF

# Set the hostname
# On 10.0.33.190
hostnamectl set-hostname k8s-master1
# On 10.0.33.191
hostnamectl set-hostname k8s-master2
# On 10.0.33.192
hostnamectl set-hostname k8s-node1
# On 10.0.33.193
hostnamectl set-hostname k8s-node2
# Check the result
hostname

# Pass bridged IPv4 traffic to the iptables chains
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
sysctl --system    # apply the settings

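II. Deploying the etcd cluster

1. About etcd

etcd is a distributed key-value store, and Kubernetes keeps its data in it, so an etcd database has to be prepared first. To avoid a single point of failure, deploy etcd as a cluster. Three machines are used here, which tolerates the failure of one machine; a five-machine cluster tolerates the failure of two.

Servers used:

10.0.33.190, 10.0.33.191, 10.0.33.192

Note: to save machines, etcd shares servers with K8s here. It can also be deployed outside the K8s cluster, as long as the apiserver can reach it.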
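The fault-tolerance figures quoted for three and five members follow from the majority rule etcd uses: a cluster of n members needs floor(n/2) + 1 of them to agree, so it can lose the rest. A small sketch of that arithmetic follows; the function names are illustrative and are not part of etcd.

```shell
# Quorum size for an etcd cluster of N members: floor(N/2) + 1
etcd_quorum() (
  n=$1
  echo $(( n / 2 + 1 ))
)

# Number of member failures the cluster can survive: N - quorum
etcd_fault_tolerance() (
  n=$1
  echo $(( n - (n / 2 + 1) ))
)

for size in 1 3 5; do
  echo "members=$size quorum=$(etcd_quorum "$size") tolerated_failures=$(etcd_fault_tolerance "$size")"
done
```

Note that an even member count adds no tolerance over the odd count below it (four members still tolerate only one failure), which is why odd cluster sizes are the usual choice.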

2. Prepare the cfssl certificate tool

cfssl is an open-source certificate management tool. It generates certificates from JSON files and is more convenient to use than openssl.

Run this on any one server; the master node is used here.

# Run the following commands in order
# If the server cannot download these three files, download them on your own machine and upload them. (The download may be slow.)
wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

# Make the three files executable
chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64

# Move them into bin directories on the PATH
mv cfssl_linux-amd64 /usr/local/bin/cfssl
mv cfssljson_linux-amd64 /usr/local/bin/cfssljson
mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

3. Generate the etcd certificates

- Self-signed certificate authority (CA)

Create a working directory:

mkdir -p ~/TLS/{etcd,k8s}
cd ~/TLS/etcd

Create the self-signed CA configuration:

cat > ca-config.json << EOF
{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "www": {
        "expiry": "87600h",
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ]
      }
    }
  }
}
EOF

cat > ca-csr.json << EOF
{
  "CN": "etcd CA",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing"
    }
  ]
}
EOF

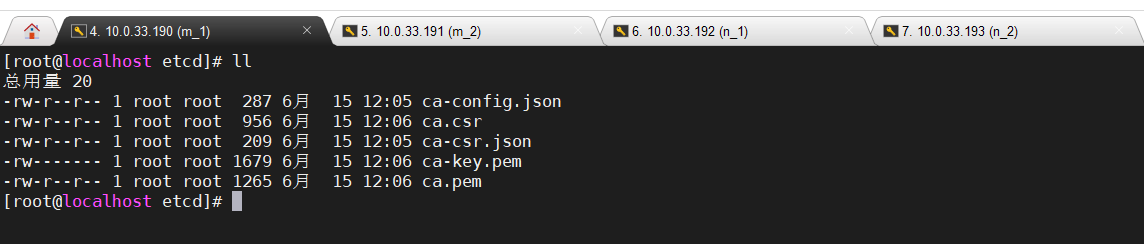

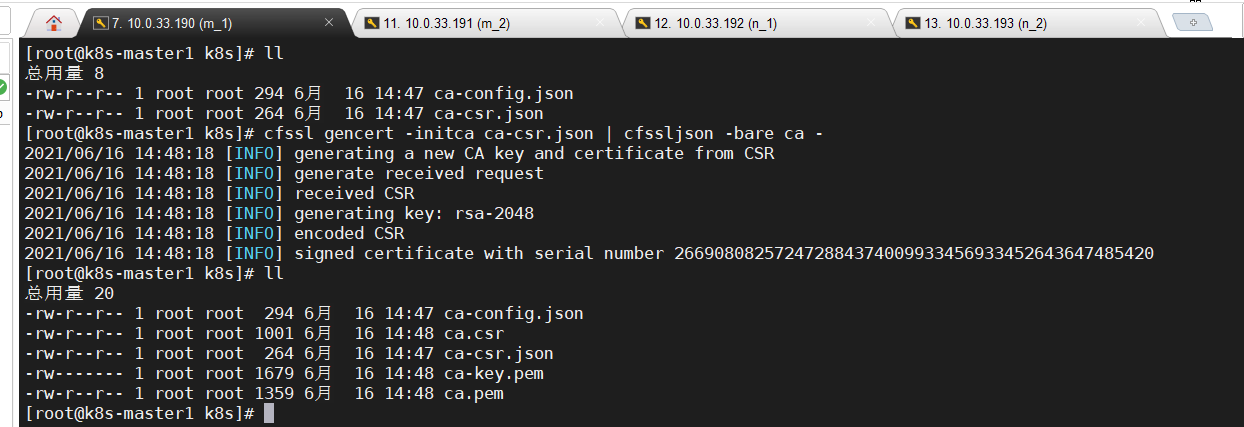

Generate the CA certificate:

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

This produces ca.pem and ca-key.pem.

- Issue the etcd HTTPS certificate with the self-signed CA

Create the certificate signing request file:

Note: the hosts field must list the cluster-internal IP of every etcd node, without exception. To make later expansion easier, you can add a few reserved IPs.

cat > server-csr.json << EOF
{
  "CN": "etcd",
  "hosts": [
    "10.0.33.190",
    "10.0.33.191",
    "10.0.33.192"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing"
    }
  ]
}
EOF

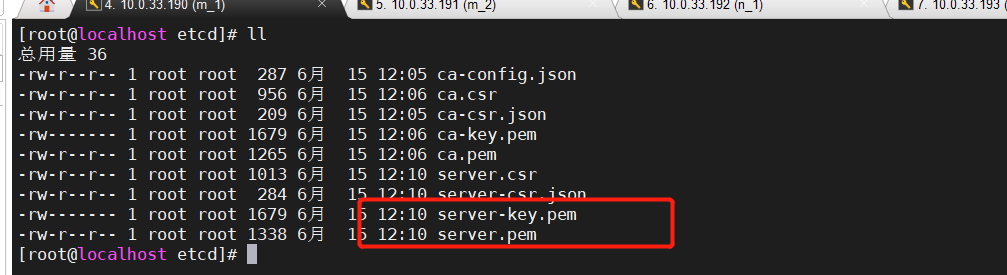

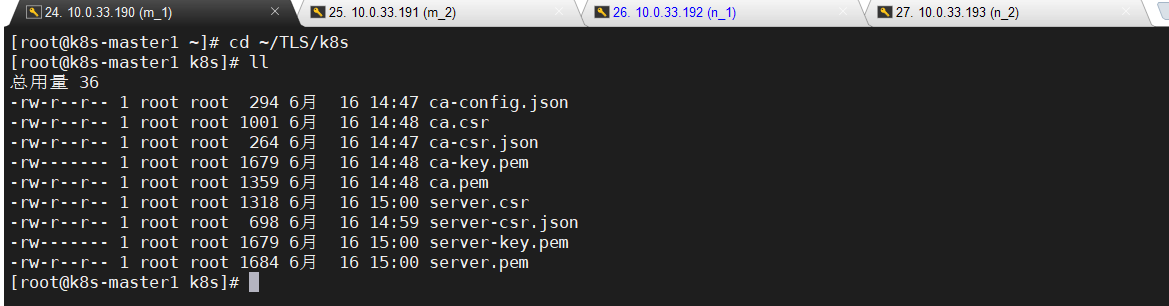

Generate the certificate:

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

This produces server.pem and server-key.pem.
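Because every etcd node IP has to appear in the hosts field, it can help to generate that JSON array from a list rather than typing it by hand. The helper below is a hypothetical convenience, not part of cfssl; it only formats its arguments as a JSON array of strings.

```shell
# Print a JSON array of quoted strings built from the arguments,
# for example to paste into the "hosts" field of a cfssl CSR file.
json_hosts() (
  out=""
  for h in "$@"; do
    # Append a comma separator only when something is already in the list
    out="${out:+$out, }\"$h\""
  done
  echo "[ $out ]"
)

json_hosts 10.0.33.190 10.0.33.191 10.0.33.192
```

After signing, the cfssl-certinfo tool installed earlier can be used to inspect the result (cfssl-certinfo -cert server.pem) and confirm that every expected IP is listed.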

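4. Deploy etcd

Download the release package:

URL: https://github.com/etcd-io/etcd/releases/download/v3.4.9/etcd-v3.4.9-linux-amd64.tar.gz

To keep things simple, all of the following steps are carried out on node 1. When they are done, everything generated on node 1 is copied to node 2 and node 3.

- Create the directories and install the binaries

# Create the directories
mkdir -p /opt/etcd/{bin,cfg,ssl}

# Unpack the release package
tar zxvf etcd-v3.4.9-linux-amd64.tar.gz

# Move the binaries into place
mv etcd-v3.4.9-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

# Copy the certificates generated earlier into the ssl directory
cp ~/TLS/etcd/ca*pem ~/TLS/etcd/server*pem /opt/etcd/ssl/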

- Create the etcd configuration file

cat > /opt/etcd/cfg/etcd.conf << EOF
#[Member]
ETCD_NAME="etcd-1"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://10.0.33.190:2380"
ETCD_LISTEN_CLIENT_URLS="https://10.0.33.190:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.33.190:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://10.0.33.190:2379"
ETCD_INITIAL_CLUSTER="etcd-1=https://10.0.33.190:2380,etcd-2=https://10.0.33.191:2380,etcd-3=https://10.0.33.192:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF

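Configuration reference:

• ETCD_NAME: node name, unique within the cluster

• ETCD_DATA_DIR: data directory

• ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

• ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

• ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the rest of the cluster

• ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

• ETCD_INITIAL_CLUSTER: addresses of all cluster members

• ETCD_INITIAL_CLUSTER_TOKEN: cluster token

• ETCD_INITIAL_CLUSTER_STATE: state when joining; new means a new cluster, existing means joining an existing cluster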

- Manage etcd with systemd

cat > /usr/lib/systemd/system/etcd.service << EOF
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=/opt/etcd/cfg/etcd.conf
ExecStart=/opt/etcd/bin/etcd \
--cert-file=/opt/etcd/ssl/server.pem \
--key-file=/opt/etcd/ssl/server-key.pem \
--peer-cert-file=/opt/etcd/ssl/server.pem \
--peer-key-file=/opt/etcd/ssl/server-key.pem \
--trusted-ca-file=/opt/etcd/ssl/ca.pem \
--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem \
--logger=zap
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

- Deploy the other nodes

Node 1 is now complete. Next, deploy the other two nodes.

# Copy the files to the other two servers
scp -r /opt/etcd/ root@10.0.33.191:/opt/
scp /usr/lib/systemd/system/etcd.service root@10.0.33.191:/usr/lib/systemd/system/
scp -r /opt/etcd/ root@10.0.33.192:/opt/
scp /usr/lib/systemd/system/etcd.service root@10.0.33.192:/usr/lib/systemd/system/

Edit the configuration file on node 2 and node 3:

vim /opt/etcd/cfg/etcd.conf

#[Member]
ETCD_NAME="etcd-2"    # change this: etcd-2 on node 2, etcd-3 on node 3
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://10.0.33.191:2380"    # change to this server's IP
ETCD_LISTEN_CLIENT_URLS="https://10.0.33.191:2379"    # change to this server's IP
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.33.191:2380"    # change to this server's IP
ETCD_ADVERTISE_CLIENT_URLS="https://10.0.33.191:2379"    # change to this server's IP
ETCD_INITIAL_CLUSTER="etcd-1=https://10.0.33.190:2380,etcd-2=https://10.0.33.191:2380,etcd-3=https://10.0.33.192:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"

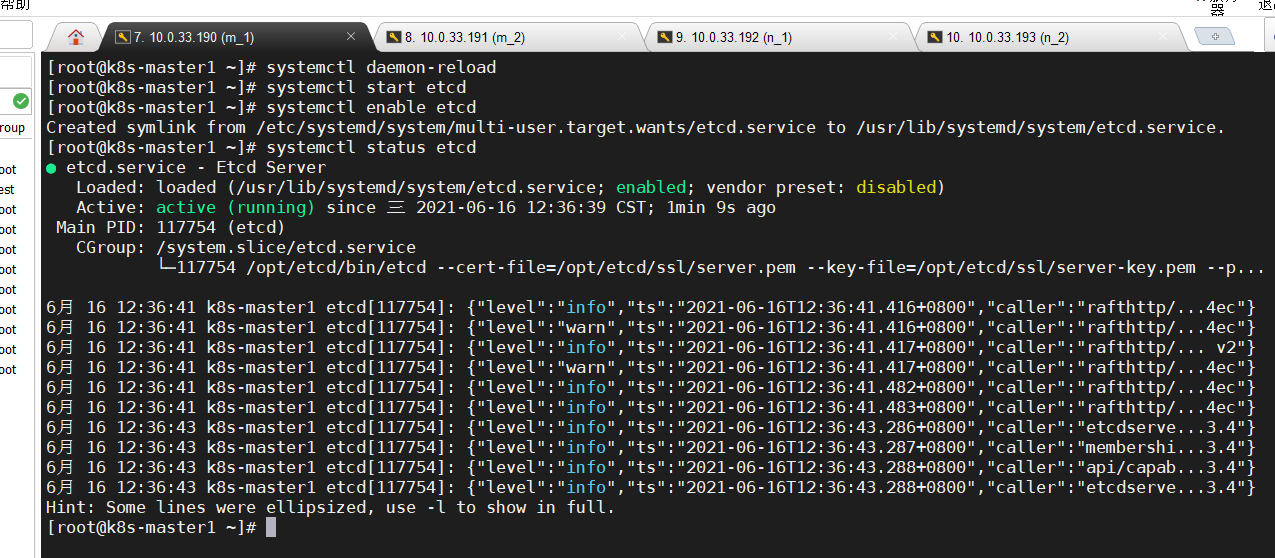
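Editing the copied file by hand on each node is easy to get wrong, since five values change per node. As an alternative sketch, the file can be rendered from the member name and IP. The function name render_etcd_conf is hypothetical; the values it writes are the ones used in this guide.

```shell
# Render etcd.conf for one member. Arguments: member name, member IP.
# The three-member ETCD_INITIAL_CLUSTER value is copied from this guide.
render_etcd_conf() (
  name=$1
  ip=$2
  cat << EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="etcd-1=https://10.0.33.190:2380,etcd-2=https://10.0.33.191:2380,etcd-3=https://10.0.33.192:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
)

# Example: print the file for node 2
render_etcd_conf etcd-2 10.0.33.191
```

On the target node the output would be redirected to /opt/etcd/cfg/etcd.conf, for example render_etcd_conf etcd-3 10.0.33.192 > /opt/etcd/cfg/etcd.conf on node 3.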

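- Start etcd and enable it at boot

Run the following on all three machines:

# Reload the systemd configuration
systemctl daemon-reload

# Start etcd. (The first node appears to hang on start; it returns once the other nodes are up.)
systemctl start etcd

# Enable start at boot
systemctl enable etcd

After starting, check the etcd status; "running" means it is healthy.

systemctl status etcd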

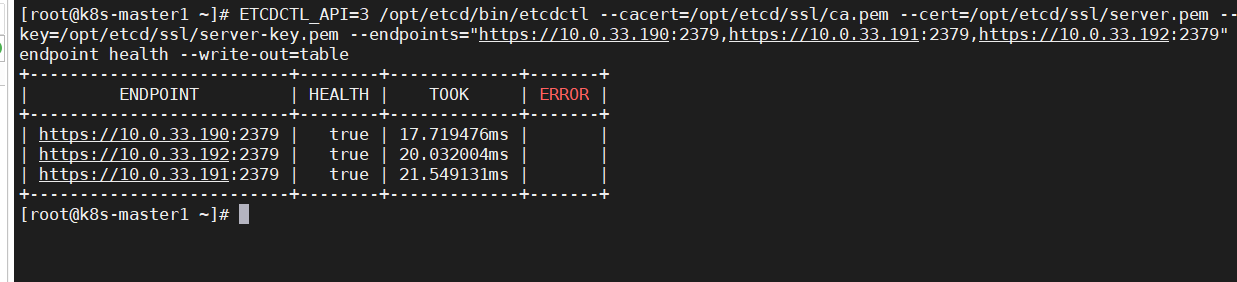

- Check the cluster status

ETCDCTL_API=3 /opt/etcd/bin/etcdctl \
--cacert=/opt/etcd/ssl/ca.pem \
--cert=/opt/etcd/ssl/server.pem \
--key=/opt/etcd/ssl/server-key.pem \
--endpoints="https://10.0.33.190:2379,https://10.0.33.191:2379,https://10.0.33.192:2379" \
endpoint health --write-out=table

If the command prints a table in which every endpoint is reported healthy, the cluster was deployed successfully.

If something is wrong, check the logs first: /var/log/messages or journalctl -u etcd

III. Installing Docker

1. Download and install

Download URL: https://download.docker.com/linux/static/stable/x86_64/docker-19.03.9.tgz

Docker is needed on every K8s node; run the following on all of them.

# Download the package, or upload it if you already have it
wget https://download.docker.com/linux/static/stable/x86_64/docker-19.03.9.tgz

# Unpack and install the binaries
tar zxvf docker-19.03.9.tgz
mv docker/* /usr/bin

- Manage Docker with systemd

# \$MAINPID is escaped so that the shell does not expand it to an empty string while writing the file
cat > /usr/lib/systemd/system/docker.service << EOF
[Unit]
Description=Docker Application Container Engine
Documentation=https://docs.docker.com
After=network-online.target firewalld.service
Wants=network-online.target

[Service]
Type=notify
ExecStart=/usr/bin/dockerd
ExecReload=/bin/kill -s HUP \$MAINPID
LimitNOFILE=infinity
LimitNPROC=infinity
LimitCORE=infinity
TimeoutStartSec=0
Delegate=yes
KillMode=process
Restart=on-failure
StartLimitBurst=3
StartLimitInterval=60s

[Install]
WantedBy=multi-user.target
EOF

- Configuration file

# Create the configuration file
mkdir -p /etc/docker
cat > /etc/docker/daemon.json << EOF
{ "registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"] }
EOF

- Start Docker and enable it at boot

systemctl daemon-reload
systemctl start docker
systemctl enable docker

IV. Deploying the master node

Note: a single master node is deployed first; the second master node is added later as an expansion.

1. Generate the kube-apiserver certificates

- Self-signed certificate authority (CA)

# Change to the k8s directory under the TLS directory created earlier
cd ~/TLS/k8s

# Create the JSON files (note that there are two of them)
cat > ca-config.json << EOF
{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "kubernetes": {
        "expiry": "87600h",
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ]
      }
    }
  }
}
EOF

cat > ca-csr.json << EOF
{
  "CN": "kubernetes",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

Generate the CA certificate:

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

This produces ca.pem and ca-key.pem.

- Issue the kube-apiserver HTTPS certificate with the self-signed CA

Note: the hosts field in the file below must contain every Master, LB and VIP address (every IP related to the K8s cluster), without exception. To make later expansion easier, a few reserved IPs can be added. Here all of 10.0.33.190 - 10.0.33.199 are included.

# Create the certificate signing request file
cat > server-csr.json << EOF
{
  "CN": "kubernetes",
  "hosts": [
    "10.0.0.1",
    "127.0.0.1",
    "10.0.33.190",
    "10.0.33.191",
    "10.0.33.192",
    "10.0.33.193",
    "10.0.33.194",
    "10.0.33.195",
    "10.0.33.196",
    "10.0.33.197",
    "10.0.33.198",
    "10.0.33.199",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

Generate the certificate:

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

This produces server.pem and server-key.pem.

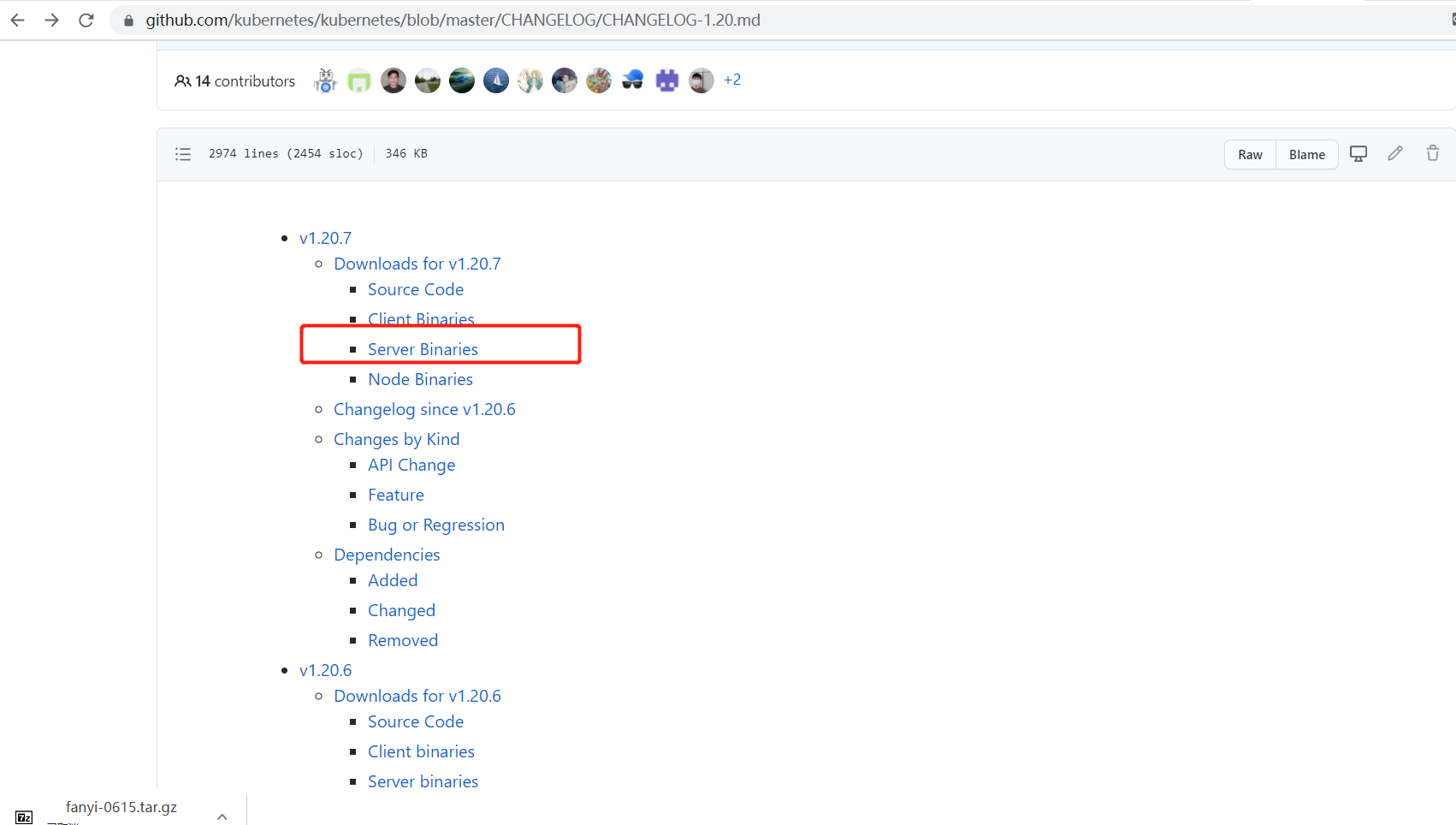

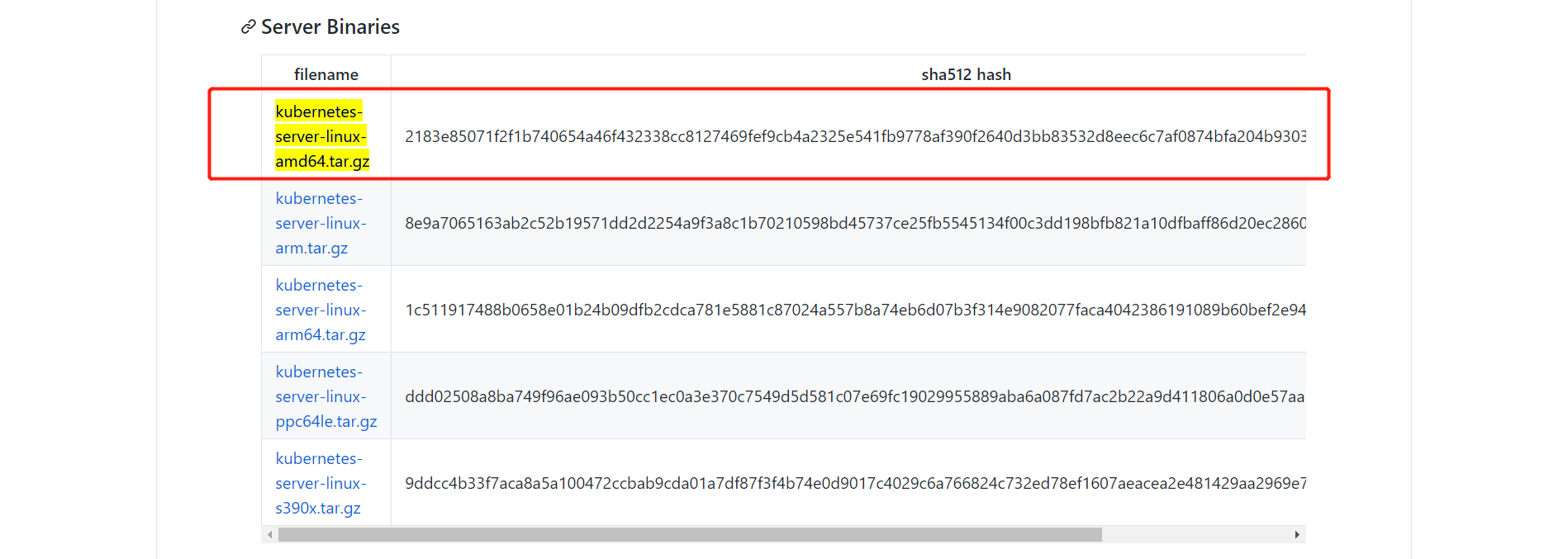

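2. Download the release package

Download page: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.20.md

Note: the page lists many packages. The server package alone is enough; it contains the binaries for both the master and the worker nodes.

Download steps:

- Open the download page

- Pick the version to download (choose the one that matches your server architecture)

After downloading, upload the package to the master1 server and unpack it.

# Create the K8s working directories
mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

# Unpack
tar zxvf kubernetes-server-linux-amd64.tar.gz

# Copy the binaries
cd kubernetes/server/bin
cp kube-apiserver kube-scheduler kube-controller-manager /opt/kubernetes/bin
cp kubectl /usr/bin/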

3. Deploy kube-apiserver

3.1 Create the configuration file

cat > /opt/kubernetes/cfg/kube-apiserver.conf << EOF
KUBE_APISERVER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--etcd-servers=https://10.0.33.190:2379,https://10.0.33.191:2379,https://10.0.33.192:2379 \\
--bind-address=10.0.33.190 \\
--secure-port=6443 \\
--advertise-address=10.0.33.190 \\
--allow-privileged=true \\
--service-cluster-ip-range=10.0.0.0/24 \\
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\
--authorization-mode=RBAC,Node \\
--enable-bootstrap-token-auth=true \\
--token-auth-file=/opt/kubernetes/cfg/token.csv \\
--service-node-port-range=30000-32767 \\
--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \\
--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \\
--tls-cert-file=/opt/kubernetes/ssl/server.pem \\
--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\
--client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--service-account-issuer=api \\
--service-account-signing-key-file=/opt/kubernetes/ssl/server-key.pem \\
--etcd-cafile=/opt/etcd/ssl/ca.pem \\
--etcd-certfile=/opt/etcd/ssl/server.pem \\
--etcd-keyfile=/opt/etcd/ssl/server-key.pem \\
--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \\
--proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \\
--requestheader-allowed-names=kubernetes \\
--requestheader-extra-headers-prefix=X-Remote-Extra- \\
--requestheader-group-headers=X-Remote-Group \\
--requestheader-username-headers=X-Remote-User \\
--enable-aggregator-routing=true \\
--audit-log-maxage=30 \\
--audit-log-maxbackup=3 \\
--audit-log-maxsize=100 \\
--feature-gates=RemoveSelfLink=false \\
--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"
EOF

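Note: each line in the heredoc above ends with two backslashes. The first one escapes the second, so a single literal backslash is written to the file, where it serves as the line-continuation character. Without the escape, the shell would consume the backslash and join the lines while writing the file.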
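The escaping rule can be checked without touching any real configuration file. The sketch below writes a small unquoted heredoc to standard output; the variable DEMO_VAR and the option names are made up for the demonstration.

```shell
# Show how an unquoted heredoc treats \\ , $VAR and \$VAR.
heredoc_demo() (
  DEMO_VAR="expanded-now"
  cat << EOF
OPTS="--first=1 \\
--second=2"
NOW=$DEMO_VAR
LATER=\$DEMO_VAR
EOF
)

heredoc_demo
```

The first output line ends with a single backslash, NOW carries the value substituted at write time, and LATER keeps a literal dollar sign. The same rule is why the systemd unit files in this guide write \$KUBE_APISERVER_OPTS inside their heredocs: the variable must be expanded by systemd at service start, not by the shell when the file is created.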

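Parameter reference:

• --logtostderr: whether to log to stderr; set to false here so that logs go to files in --log-dir

• --v: log verbosity level

• --log-dir: log directory

• --etcd-servers: etcd cluster addresses

• --bind-address: listen address

• --secure-port: HTTPS port

• --advertise-address: address advertised to the cluster

• --allow-privileged: allow privileged containers

• --service-cluster-ip-range: virtual IP range for Services

• --enable-admission-plugins: admission control plugins

• --authorization-mode: authorization modes; enables RBAC and Node authorization

• --enable-bootstrap-token-auth: enable the TLS bootstrap mechanism

• --token-auth-file: bootstrap token file

• --service-node-port-range: default port range for NodePort Services

• --kubelet-client-xxx: client certificate the apiserver uses to reach kubelet

• --tls-xxx-file: apiserver HTTPS certificate

• Parameters required in version 1.20: --service-account-issuer, --service-account-signing-key-file

• --etcd-xxxfile: certificates for connecting to the etcd cluster

• --audit-log-xxx: audit log settings

• Aggregation layer settings: --requestheader-client-ca-file, --proxy-client-cert-file, --proxy-client-key-file, --requestheader-allowed-names, --requestheader-extra-headers-prefix, --requestheader-group-headers, --requestheader-username-headers, --enable-aggregator-routing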
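The value of --service-cluster-ip-range explains two addresses used elsewhere in this guide. Kubernetes assigns the first address of the range to the built-in kubernetes Service, which is why 10.0.0.1 appears in the hosts list of the apiserver certificate, and this guide later points clusterDNS at 10.0.0.2 in the same range. A minimal sketch of the address arithmetic follows; nth_ip_in_cidr is a hypothetical helper, it assumes the argument starts at the network address, and it does no range checking.

```shell
# Print the Nth address after the network address of an IPv4 CIDR.
# Sketch only: assumes a well-formed a.b.c.d/len argument whose address part
# is the network address, and does not check that N fits inside the range.
nth_ip_in_cidr() (
  cidr=$1
  n=$2
  base=${cidr%/*}
  # Split the dotted quad into the positional parameters $1..$4
  IFS=.
  set -- $base
  num=$(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 + n ))
  echo "$(( (num >> 24) & 255 )).$(( (num >> 16) & 255 )).$(( (num >> 8) & 255 )).$(( num & 255 ))"
)

nth_ip_in_cidr 10.0.0.0/24 1    # address of the built-in "kubernetes" Service
nth_ip_in_cidr 10.0.0.0/24 2    # address this guide uses for cluster DNS
```

If the Service range is changed, the first address of the new range has to be added to the certificate hosts list before the certificate is signed.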

3.2 配置证书

把证书拷贝到配置文件中的路径:

cp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem /opt/kubernetes/ssl/

- 1

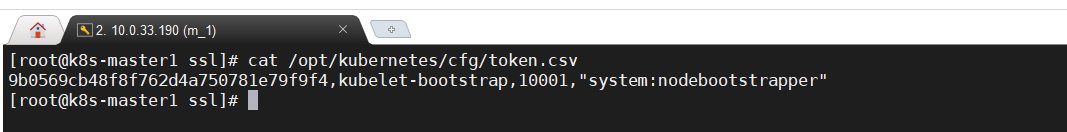

启用 TLS Bootstrapping 机制:

# 创建配置文件中token文件

cat > /opt/kubernetes/cfg/token.csv <<-EOF

`head -c 16 /dev/urandom | od -An -t x | tr -d ' '`,kubelet-bootstrap,10001,"system:nodebootstrapper"

EOF

- 1

- 2

- 3

- 4

3.3 Start the service

Manage the apiserver with systemd:

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf
ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

Start it and enable it at boot:

systemctl daemon-reload
systemctl start kube-apiserver
systemctl enable kube-apiserver

4. Deploy kube-controller-manager

4.1 Create the configuration file

cat > /opt/kubernetes/cfg/kube-controller-manager.conf << EOF
KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--leader-elect=true \\
--kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \\
--bind-address=127.0.0.1 \\
--allocate-node-cidrs=true \\
--cluster-cidr=10.244.0.0/16 \\
--service-cluster-ip-range=10.0.0.0/24 \\
--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\
--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--root-ca-file=/opt/kubernetes/ssl/ca.pem \\
--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--cluster-signing-duration=87600h0m0s"
EOF

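Parameter reference:

• --kubeconfig: kubeconfig file used to connect to the apiserver

• --leader-elect: when several instances of this component run, elect a leader automatically (HA)

• --cluster-signing-cert-file / --cluster-signing-key-file: the CA that automatically issues certificates for kubelet; must be the same CA the apiserver uses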

4.2 Generate the kubeconfig file

Generate the kube-controller-manager certificate:

# Change directory
cd ~/TLS/k8s

# Create the certificate signing request file
cat > kube-controller-manager-csr.json << EOF
{
  "CN": "system:kube-controller-manager",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "system:masters",
      "OU": "System"
    }
  ]
}
EOF

# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

Generate the kubeconfig file:

# Create a script (it must sit in the same directory as the certificates)
vim /root/TLS/k8s/kubecmcfg.sh

Script contents:

# Set variables
KUBE_CONFIG="/opt/kubernetes/cfg/kube-controller-manager.kubeconfig"
KUBE_APISERVER="https://10.0.33.190:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-controller-manager \
  --client-certificate=./kube-controller-manager.pem \
  --client-key=./kube-controller-manager-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-controller-manager \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

Note: KUBE_APISERVER is the address of the kube-apiserver deployed above.

Run the script from ~/TLS/k8s, because it refers to the certificates by relative path:

sh -x kubecmcfg.sh
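The same four kubectl config calls recur in this guide for kube-controller-manager, kube-scheduler, the admin user and kube-proxy, differing only in the output file, user name and certificate pair. They could be wrapped once, as in the sketch below. The function make_kubeconfig and the KUBECTL override are assumptions made for illustration; the kubectl subcommands and flags are the ones already used in the scripts of this guide.

```shell
# Build a kubeconfig from a client certificate pair.
# Arguments: output file, API server URL, user name, client cert, client key.
# KUBECTL can be overridden (for example KUBECTL=echo) to print the commands
# instead of running them.
make_kubeconfig() (
  set -e
  kube_config=$1
  kube_apiserver=$2
  user=$3
  cert=$4
  key=$5
  kubectl_bin=${KUBECTL:-kubectl}

  "$kubectl_bin" config set-cluster kubernetes \
    --certificate-authority=/opt/kubernetes/ssl/ca.pem \
    --embed-certs=true \
    --server="$kube_apiserver" \
    --kubeconfig="$kube_config"
  "$kubectl_bin" config set-credentials "$user" \
    --client-certificate="$cert" \
    --client-key="$key" \
    --embed-certs=true \
    --kubeconfig="$kube_config"
  "$kubectl_bin" config set-context default \
    --cluster=kubernetes \
    --user="$user" \
    --kubeconfig="$kube_config"
  "$kubectl_bin" config use-context default --kubeconfig="$kube_config"
)

# Example, run from ~/TLS/k8s (not executed here):
# make_kubeconfig /opt/kubernetes/cfg/kube-controller-manager.kubeconfig \
#   https://10.0.33.190:6443 kube-controller-manager \
#   ./kube-controller-manager.pem ./kube-controller-manager-key.pem
```

Running it first with KUBECTL=echo prints the four commands, which is a cheap way to review the arguments before any file is written.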

4.3 Start the service

# Manage controller-manager with systemd
cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf
ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

# Start it and enable it at boot
systemctl daemon-reload
systemctl start kube-controller-manager
systemctl enable kube-controller-manager

5. Deploy kube-scheduler

5.1 Create the configuration file

cat > /opt/kubernetes/cfg/kube-scheduler.conf << EOF
KUBE_SCHEDULER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--leader-elect \\
--kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \\
--bind-address=127.0.0.1"
EOF

• --kubeconfig: kubeconfig file used to connect to the apiserver

• --leader-elect: when several instances of this component run, elect a leader automatically (HA)

5.2 Generate the kubeconfig file

Generate the kube-scheduler certificate:

# Change directory
cd ~/TLS/k8s

# Create the certificate signing request file
cat > kube-scheduler-csr.json << EOF
{
  "CN": "system:kube-scheduler",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "system:masters",
      "OU": "System"
    }
  ]
}
EOF

# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

Generate the kubeconfig file:

# Create a script (it must sit in the same directory as the certificates)
vim /root/TLS/k8s/kubesccfg.sh

Script contents:

KUBE_CONFIG="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"
KUBE_APISERVER="https://10.0.33.190:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-scheduler \
  --client-certificate=./kube-scheduler.pem \
  --client-key=./kube-scheduler-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-scheduler \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

# Run the script
sh -x kubesccfg.sh

5.3 Start the service

# Manage the scheduler with systemd
cat > /usr/lib/systemd/system/kube-scheduler.service << EOF
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf
ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

# Start it and enable it at boot
systemctl daemon-reload
systemctl start kube-scheduler
systemctl enable kube-scheduler

6. Check the status

Generate the certificate kubectl uses to connect to the cluster:

cd ~/TLS/k8s/

cat > admin-csr.json << EOF
{
  "CN": "admin",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "system:masters",
      "OU": "System"
    }
  ]
}
EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

Generate the kubeconfig file:

mkdir -p /root/.kube

# Create a script (it must sit in the same directory as the certificates)
vim /root/TLS/k8s/kubeadcfg.sh

Script contents:

KUBE_CONFIG="/root/.kube/config"
KUBE_APISERVER="https://10.0.33.190:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials cluster-admin \
  --client-certificate=./admin.pem \
  --client-key=./admin-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=cluster-admin \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

Run the script:

sh -x kubeadcfg.sh

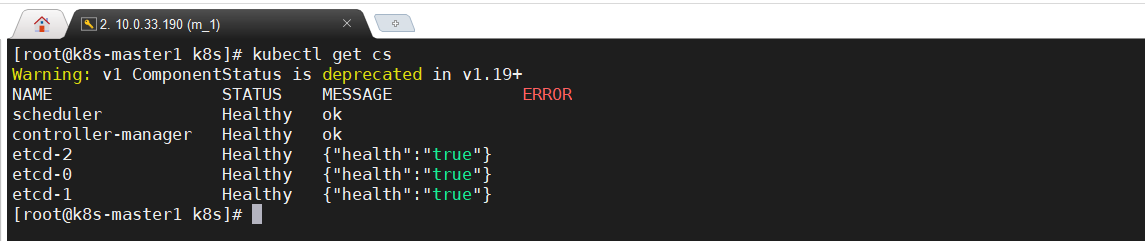

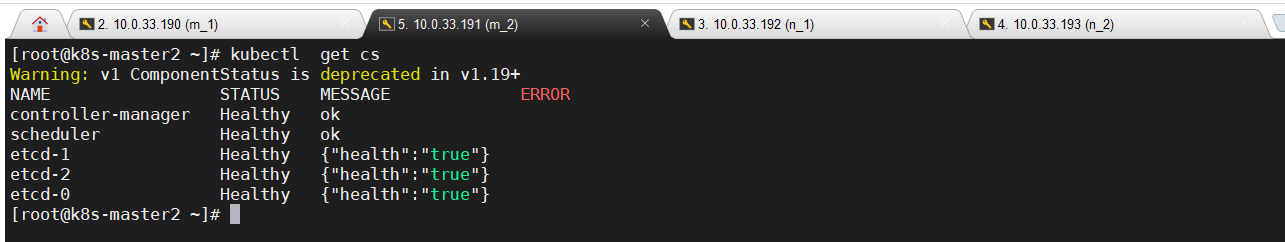

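Check the status of the cluster components with kubectl:

kubectl get cs

If the scheduler, controller-manager and etcd members are all reported as Healthy, the master is working. The warning "v1 ComponentStatus is deprecated in v1.19+" can be ignored for now.

Authorize the kubelet-bootstrap user to request certificates:

kubectl create clusterrolebinding kubelet-bootstrap \
--clusterrole=system:node-bootstrapper \
--user=kubelet-bootstrap

The master node is now deployed. Next comes the worker node deployment.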

V. Deploying the worker nodes

The following steps are also carried out on the master, because the master doubles as a worker node.

1. Prepare the files

# Create the working directories on every node server, that is on 190, 192 and 193
mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

# On the master, change to the directory of the package unpacked earlier
cd ~/kubernetes/server/bin
cp kubelet kube-proxy /opt/kubernetes/bin

# Copy the binaries to the other nodes
scp kubelet kube-proxy root@10.0.33.192:/opt/kubernetes/bin
scp kubelet kube-proxy root@10.0.33.193:/opt/kubernetes/bin

2. Deploy kubelet

The following steps are carried out on the master node, that is on server 190.

2.1 Create the configuration file

cat > /opt/kubernetes/cfg/kubelet.conf << EOF
KUBELET_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--hostname-override=k8s-master1 \\
--network-plugin=cni \\
--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\
--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\
--config=/opt/kubernetes/cfg/kubelet-config.yml \\
--cert-dir=/opt/kubernetes/ssl \\
--pod-infra-container-image=registry.aliyuncs.com/google_containers/pause-amd64:3.0"
EOF

• --hostname-override: display name of the node, unique within the cluster

• --network-plugin: enable CNI

• --kubeconfig: path that does not exist yet; the file is generated automatically and later used to connect to the apiserver

• --bootstrap-kubeconfig: used on first start to request a certificate from the apiserver

• --config: parameter file

• --cert-dir: directory where the kubelet certificates are generated

• --pod-infra-container-image: image of the container that manages the Pod network. The image is pulled from the network; without internet access, download and import it first.

2.2 Create the parameter file

cat > /opt/kubernetes/cfg/kubelet-config.yml << EOF
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
cgroupDriver: cgroupfs
clusterDNS:
- 10.0.0.2
clusterDomain: cluster.local
failSwapOn: false
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /opt/kubernetes/ssl/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
maxOpenFiles: 1000000
maxPods: 110
EOF

Generate the bootstrap kubeconfig that kubelet uses when it first joins the cluster

Run the following as a script:

KUBE_CONFIG="/opt/kubernetes/cfg/bootstrap.kubeconfig"
KUBE_APISERVER="https://10.0.33.190:6443"    # apiserver IP:PORT
TOKEN="9b0569cb48f8f762d4a750781e79f9f4"     # must match the token in /opt/kubernetes/cfg/token.csv; replace with your own value

# Generate the kubelet bootstrap kubeconfig file
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials "kubelet-bootstrap" \
  --token=${TOKEN} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user="kubelet-bootstrap" \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
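The token in token.csv was generated randomly, so a value copied from a tutorial will not match your own file. One way to avoid the mismatch is to read the token from the file instead of hard-coding it. The sketch below assumes the token.csv layout created earlier in this guide, where the token is the first comma-separated field; read_bootstrap_token is a hypothetical helper name.

```shell
# Print the token (first comma-separated field of the first line)
# from a token.csv-style file.
read_bootstrap_token() (
  token_file=$1
  cut -d, -f1 "$token_file" | head -n 1
)

# Usage on the master, with the path used in this guide (not executed here):
# TOKEN=$(read_bootstrap_token /opt/kubernetes/cfg/token.csv)
```

With TOKEN set this way, the rest of the bootstrap kubeconfig script can stay unchanged.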

3. Start kubelet

Manage kubelet with systemd:

cat > /usr/lib/systemd/system/kubelet.service << EOF
[Unit]
Description=Kubernetes Kubelet
After=docker.service

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf
ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

Start it and enable it at boot:

systemctl daemon-reload
systemctl start kubelet
systemctl enable kubelet

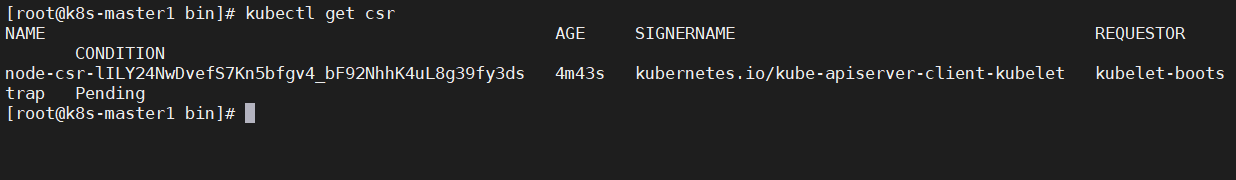

批准kubelet证书申请并加入集群

# 查看kubelet证书请求

kubectl get csr

- 1

- 2

# 批准申请,node-csr-xxxx 根据上面结果填写

kubectl certificate approve node-csr-lILY24NwDvefS7Kn5bfgv4_bF92NhhK4uL8g39fy3ds

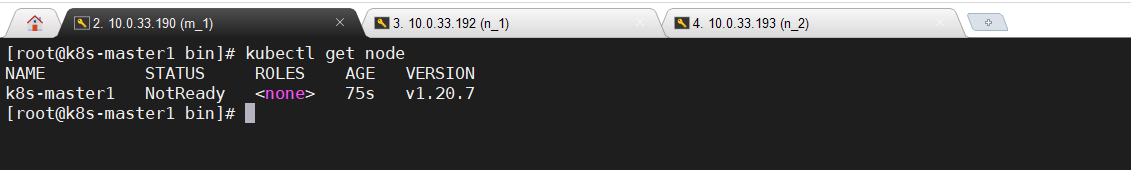

# 查看节点,由于网络插件还没有部署,节点会没有准备就绪 NotReady

kubectl get node

- 1

- 2

- 3

- 4
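Copying the node-csr name by hand works for one node but becomes tedious when several nodes join at once. The sketch below filters the pending request names out of the kubectl get csr table. It assumes the default table output, in which the condition is the last column; pending_csr_names is a hypothetical helper, not a kubectl feature.

```shell
# Read `kubectl get csr` output on stdin and print the names of pending requests.
# Assumes the default table layout, where CONDITION is the last column
# and the first line is the header.
pending_csr_names() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Usage on the master (review the list before approving anything; not executed here):
# kubectl get csr | pending_csr_names
# kubectl get csr | pending_csr_names | xargs -r kubectl certificate approve
```

Reviewing the printed names first matters, because approving a request gives the requesting kubelet a valid client certificate for the cluster.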

4. Deploy kube-proxy

4.1 Create the configuration file

cat > /opt/kubernetes/cfg/kube-proxy.conf << EOF
KUBE_PROXY_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--config=/opt/kubernetes/cfg/kube-proxy-config.yml"
EOF

Create the parameter file. Here clusterCIDR is the Pod network range, so it is set to the same value as --cluster-cidr in kube-controller-manager.conf rather than to the Service range.

cat > /opt/kubernetes/cfg/kube-proxy-config.yml << EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
metricsBindAddress: 0.0.0.0:10249
clientConnection:
  kubeconfig: /opt/kubernetes/cfg/kube-proxy.kubeconfig
hostnameOverride: k8s-master1
clusterCIDR: 10.244.0.0/16
EOF

4.2 Generate the kube-proxy.kubeconfig file

Generate the kube-proxy certificate:

# Change to the working directory
cd ~/TLS/k8s

# Create the certificate signing request file
cat > kube-proxy-csr.json << EOF
{
  "CN": "system:kube-proxy",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

Generate the kubeconfig file

Run the following as a script from ~/TLS/k8s:

KUBE_CONFIG="/opt/kubernetes/cfg/kube-proxy.kubeconfig"
KUBE_APISERVER="https://10.0.33.190:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-proxy \
  --client-certificate=./kube-proxy.pem \
  --client-key=./kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

4.3 Start the service

# Manage kube-proxy with systemd
cat > /usr/lib/systemd/system/kube-proxy.service << EOF
[Unit]
Description=Kubernetes Proxy
After=network.target

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kube-proxy.conf
ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

# Start it and enable it at boot
systemctl daemon-reload
systemctl start kube-proxy
systemctl enable kube-proxy

5. 部署网络组件

Calico是一个纯三层的数据中心网络方案,是目前Kubernetes主流的网络方案。

部署calico:

kubectl apply -f calico.yaml

kubectl get pods -n kube-system

- 1

- 2

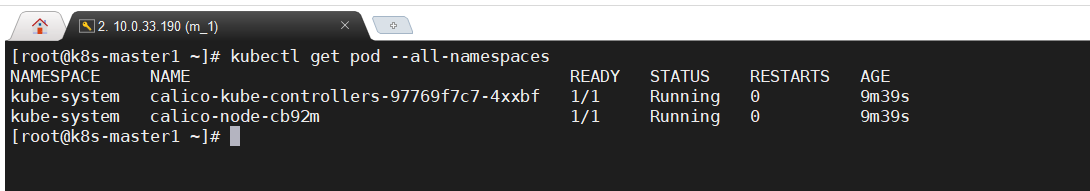

查看calico pod是否running。(需要拉取镜像时间可能久一些)

kubectl get pod --all-namespaces

- 1

6. Authorize the apiserver to access kubelet

# Create the authorization manifest
cat > apiserver-to-kubelet-rbac.yaml << EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
      - pods/log
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kubernetes
EOF

# Apply the manifest
kubectl apply -f apiserver-to-kubelet-rbac.yaml

# Test it: pick any pod and check that its log can be displayed
kubectl get pod --all-namespaces

# View the log of a Calico pod (substitute a pod name from the output above)
kubectl logs -f calico-node-cb92m -n kube-system

六、新增Node节点

1.准备文件

拷贝已部署好的Node相关文件到新节点

在刚部署的Master节点(190服务器)将涉及Node的文件拷贝到新节点10.0.33.192/193两台服务器上

scp -r /opt/kubernetes root@10.0.33.192:/opt/

scp -r /opt/kubernetes root@10.0.33.193:/opt/

scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@10.0.33.192:/usr/lib/systemd/system

scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@10.0.33.193:/usr/lib/systemd/system

scp /opt/kubernetes/ssl/ca.pem root@10.0.33.192:/opt/kubernetes/ssl

scp /opt/kubernetes/ssl/ca.pem root@10.0.33.193:/opt/kubernetes/ssl
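With more than a couple of new nodes, repeating each scp line per IP gets tedious. The sketch below generates the same copy commands from a list of IPs and only prints them, so they can be reviewed before anything is transferred. `NODE_IPS` and `print_copy_commands` are names assumed for this illustration.

```shell
#!/usr/bin/env bash
# New node IPs; an assumed variable, adjust to your environment.
NODE_IPS="10.0.33.192 10.0.33.193"

# Print (do not run) the copy commands from the step above for every node.
print_copy_commands() {
  local ip
  for ip in $NODE_IPS; do
    echo "scp -r /opt/kubernetes root@${ip}:/opt/"
    echo "scp /usr/lib/systemd/system/kubelet.service /usr/lib/systemd/system/kube-proxy.service root@${ip}:/usr/lib/systemd/system"
    echo "scp /opt/kubernetes/ssl/ca.pem root@${ip}:/opt/kubernetes/ssl"
  done
}

# Usage: review the output first, then execute it on the Master:
#   print_copy_commands
#   print_copy_commands | bash
```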


On both nodes (192 and 193), delete the certificate files:

# Delete the kubelet certificates and kubeconfig file

rm -f /opt/kubernetes/cfg/kubelet.kubeconfig

rm -f /opt/kubernetes/ssl/kubelet*


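Note: these files are generated automatically once a certificate request is approved and are unique to each Node, so the copies must be deleted.

2. Modify the configuration

Change the hostname on both nodes (192 and 193):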

# 192

vi /opt/kubernetes/cfg/kubelet.conf

--hostname-override=k8s-node1

# 193

vi /opt/kubernetes/cfg/kubelet.conf

--hostname-override=k8s-node2
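The same edit can be scripted instead of done in vi. This is a minimal sketch under one assumption: that `kubelet.conf` contains a single `--hostname-override=<name>` option, optionally followed by a space and a line-continuation backslash. `set_hostname_override` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Replace the value of --hostname-override in a kubelet options file.
# A copy of the original file is kept next to it with a .bak suffix.
set_hostname_override() {
  local file="$1" name="$2"
  # Match the option value up to the next space or backslash.
  sed -i.bak -e 's/\(--hostname-override=\)[^ \\]*/\1'"$name"'/' "$file"
}

# Usage:
#   set_hostname_override /opt/kubernetes/cfg/kubelet.conf k8s-node1   # on 192
#   set_hostname_override /opt/kubernetes/cfg/kubelet.conf k8s-node2   # on 193
```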


Start the services and enable them at boot:

systemctl daemon-reload

systemctl start kubelet kube-proxy

systemctl enable kubelet kube-proxy


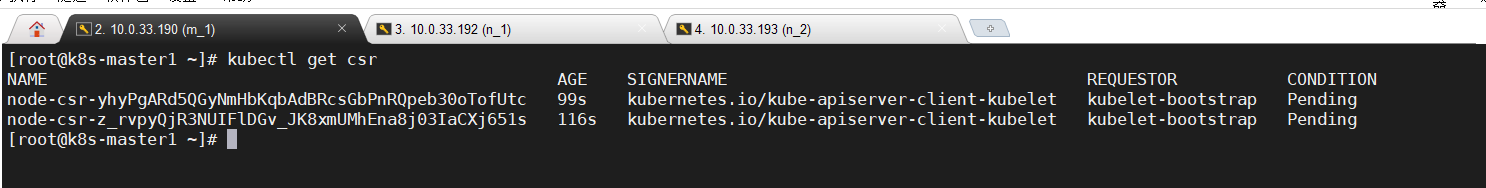

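3. Approve the certificate requests

On the Master (190), approve the kubelet certificate requests from the new Nodes:

# List the certificate requests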

kubectl get csr


# Approve a request: kubectl certificate approve xxxx, where xxxx is a NAME from the output above

kubectl certificate approve node-csr-yhyPgARd5QGyNmHbKqbAdBRcsGbPnRQpeb30oTofUtc

kubectl certificate approve node-csr-z_rvpyQjR3NUIFlDGv_JK8xmUMhEna8j03IaCXj651s
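The request names differ in every cluster, so copying them by hand is easy to get wrong. As a sketch, the pending names can be pulled out of the `kubectl get csr` table instead. It assumes the default table layout, where the first column is the name and the last column is the condition; `pending_csrs` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Print the NAME of every certificate signing request whose last column
# (CONDITION) is exactly "Pending". Reads `kubectl get csr` output on stdin.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Usage (review the list before approving anything):
#   kubectl get csr | pending_csrs
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Approving every pending request at once is only reasonable while you know that all of them come from the nodes you have just started.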


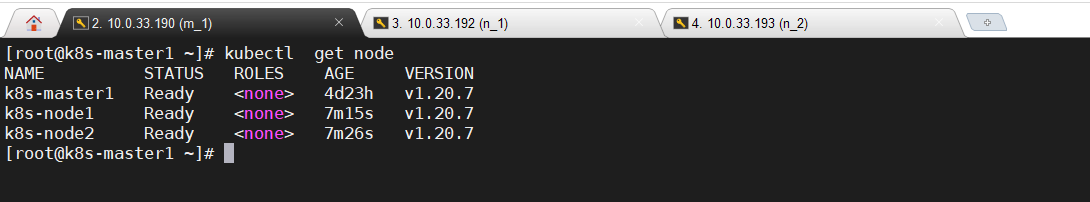

# Check the node status

kubectl get node


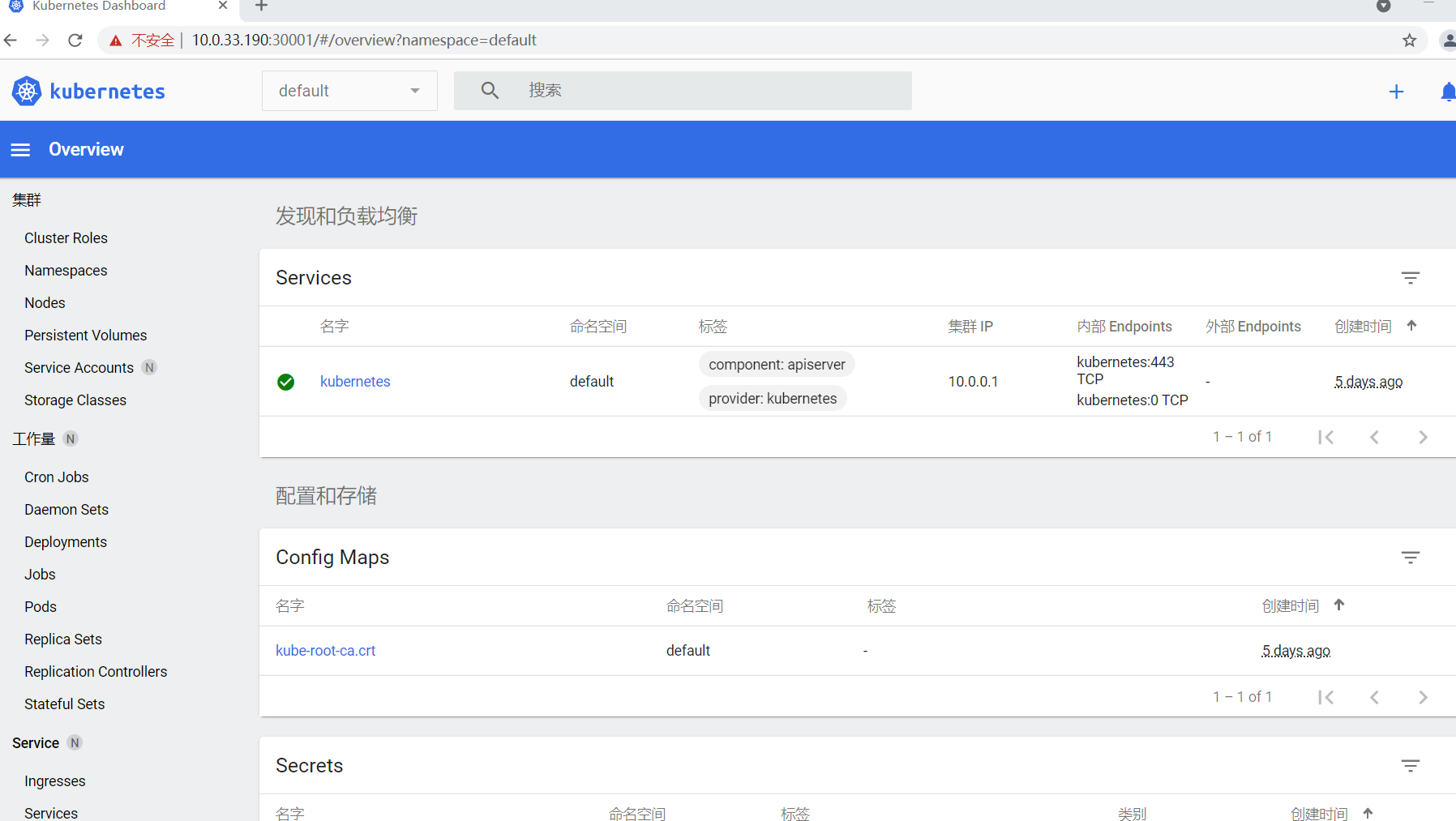

VII. Deploy Dashboard and CoreDNS

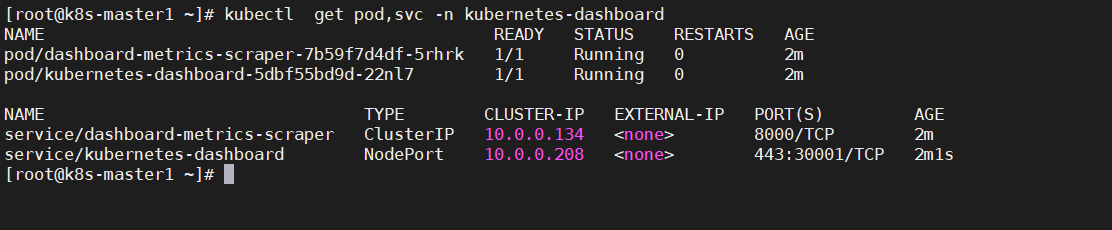

1. Deploy Dashboard

Dashboard is a web UI for Kubernetes; deploying it is optional.

# Apply the manifest

kubectl apply -f kubernetes-dashboard.yaml

# Check the status

kubectl get pod,svc -n kubernetes-dashboard


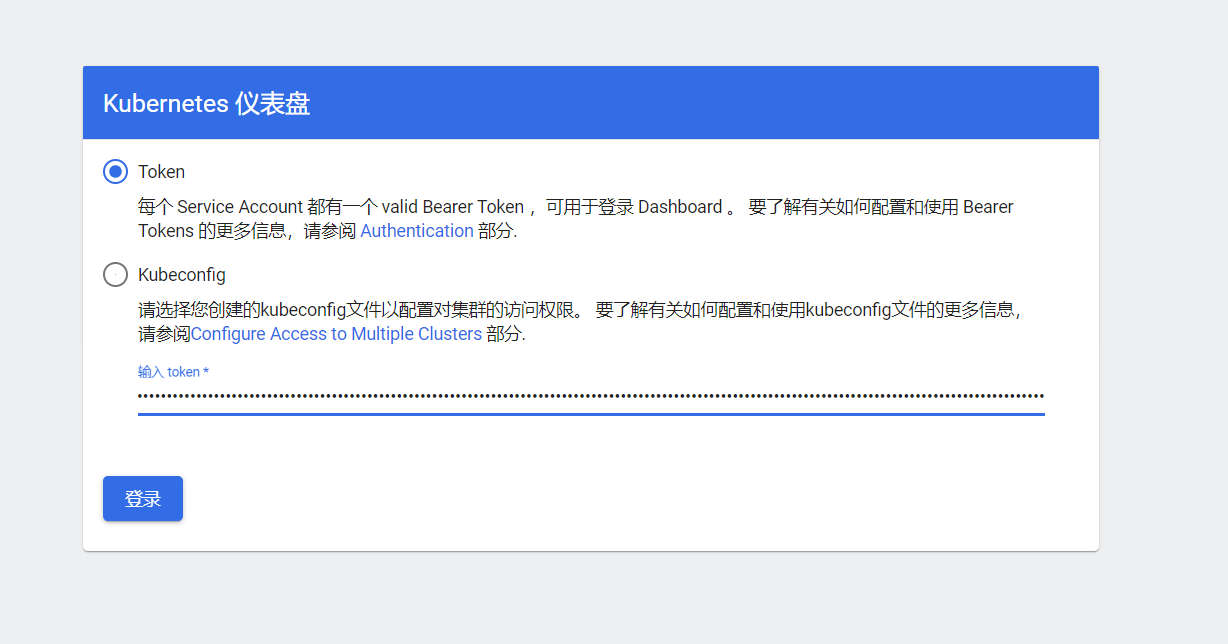

Open the page at https://NodeIP:30001

NodeIP is the IP address of any node server, for example https://10.0.33.190:30001/

Create a service account and bind it to the built-in cluster-admin cluster role:

kubectl create serviceaccount dashboard-admin -n kube-system

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
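The describe output contains several fields besides the token. A small filter can print only the token value for pasting into the login page. This sketch assumes the usual `token:      <value>` line in `kubectl describe secrets` output; `extract_token` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Print only the value of the "token:" field from
# `kubectl describe secrets` output read on stdin.
extract_token() {
  awk '$1 == "token:" { print $2 }'
}

# Usage:
#   kubectl describe secrets -n kube-system \
#     "$(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')" \
#     | extract_token
```

This matches the Kubernetes v1.20 setup used here. From v1.24 on, token Secrets are no longer created automatically for service accounts, and `kubectl -n kube-system create token dashboard-admin` is the usual way to obtain one.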


Copy the token from the output.

Paste it into the login page and sign in:

The page after login:

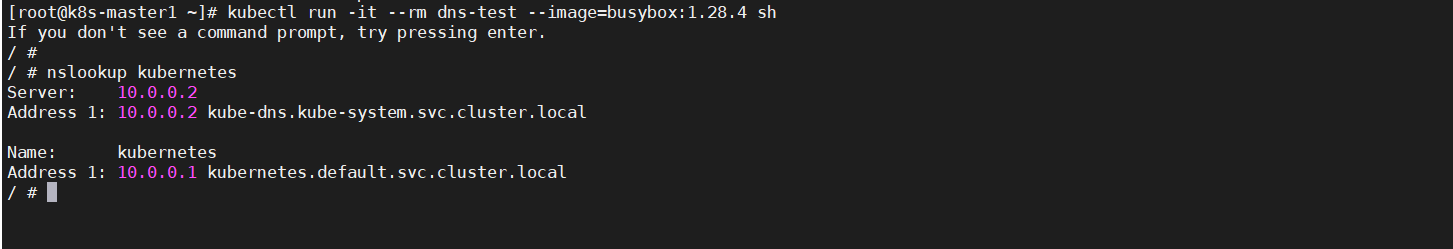

2. Deploy CoreDNS

CoreDNS resolves Service names inside the cluster.

# Apply the manifest

kubectl apply -f coredns.yaml

# Check the deployment status

kubectl get pod -n kube-system |grep core


DNS resolution test:

# Create a test container and open a shell inside it

kubectl run -it --rm dns-test --image=busybox:1.28.4 sh

# Inside the container, run:

nslookup kubernetes


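If nslookup prints the address of the kubernetes Service, name resolution is working.

A single-Master cluster is now complete. If you do not need multiple Master nodes, you can stop here.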

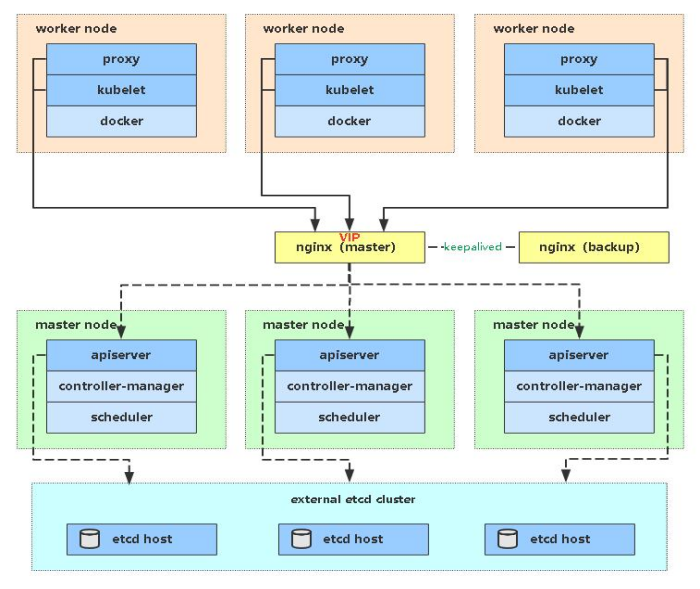

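VIII. Scale Out to Multiple Masters

The Master node acts as the control center: it keeps the whole cluster healthy by continuously communicating with the kubelet and kube-proxy on the worker nodes. If the Master node fails, the cluster can no longer be managed through kubectl or the API.

The Master node runs three main services: kube-apiserver, kube-controller-manager, and kube-scheduler. kube-controller-manager and kube-scheduler already achieve high availability on their own through leader election, so Master high availability is mainly about kube-apiserver. Because kube-apiserver serves an HTTP API, making it highly available is much like doing so for a web server: put a load balancer in front of it, and scale it horizontally as needed.

Multi-Master architecture diagram:

1. Deploy Master2

Master2 is set up exactly like Master1, so we only need to copy all Kubernetes files from Master1, change the server IP and hostname, and start the services.

Copy the files:

# Run on master1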

scp -r /opt/kubernetes root@10.0.33.191:/opt

scp -r /opt/etcd/ssl root@10.0.33.191:/opt/etcd

scp /usr/lib/systemd/system/kube* root@10.0.33.191:/usr/lib/systemd/system

scp /usr/bin/kubectl root@10.0.33.191:/usr/bin

scp -r ~/.kube root@10.0.33.191:~


Delete the certificate files:

On master2, delete the kubelet certificates and kubeconfig file:

rm -f /opt/kubernetes/cfg/kubelet.kubeconfig

rm -f /opt/kubernetes/ssl/kubelet*


Modify the configuration:

Change the apiserver, kubelet, and kube-proxy configuration files to use the local IP and hostname:

vi /opt/kubernetes/cfg/kube-apiserver.conf

# Change these lines

--bind-address=10.0.33.191 \

--advertise-address=10.0.33.191 \


vi /opt/kubernetes/cfg/kubelet.conf

# Change this line

--hostname-override=k8s-master2


vi /opt/kubernetes/cfg/kube-proxy-config.yml

# Change this line

hostnameOverride: k8s-master2


# Point kubectl at this machine's own apiserver IP

vi ~/.kube/config

# Change this line

server: https://10.0.33.191:6443
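Because the files were copied from Master1, a missed edit leaves master2 announcing itself with Master1's address or hostname. The sketch below prints the identity-related settings in one place so they can be checked before the services are started. `show_identity_settings` is a hypothetical helper name, and the option names are the ones edited in the steps above.

```shell
#!/usr/bin/env bash
# Print every bind/advertise address and hostname override found under a
# configuration directory, as file:line:setting.
show_identity_settings() {
  grep -rnoE \
    -e '(--bind-address|--advertise-address|--hostname-override)=[^ \\]+' \
    -e 'hostnameOverride: *[^ ]+' \
    "$1"
}

# Usage on master2; every value shown should be 10.0.33.191 or k8s-master2:
#   show_identity_settings /opt/kubernetes/cfg
```

Other references to 10.0.33.190 in that directory, such as the etcd endpoints, are expected and intentionally not reported.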


Start the services and enable them at boot:

systemctl daemon-reload

systemctl start kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy

systemctl enable kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy


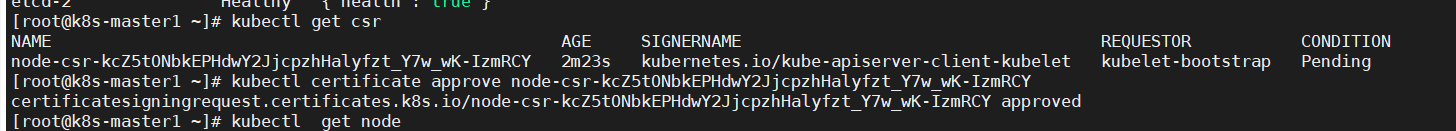

Check the cluster status:

kubectl get cs


Approve the kubelet certificate request:

# List the certificate requests

kubectl get csr

# Approve the request

kubectl certificate approve node-csr-kcZ5tONbkEPHdwY2JjcpzhHalyfzt_Y7w_wK-IzmRCY


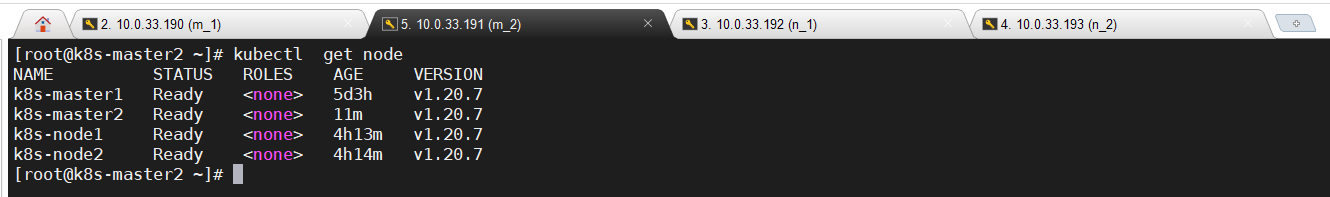

# Check the node status

kubectl get node


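2. Deploy high availability

Deploy an Nginx + Keepalived highly available load balancer.

kube-apiserver high-availability architecture diagram:

• Nginx is a mainstream web server and reverse proxy; here it load-balances the apiserver at Layer 4.

• Keepalived is a mainstream high-availability tool that provides active-standby failover by binding a VIP. In the topology above, Keepalived decides whether to fail over (move the VIP) based on the state of Nginx: if the primary Nginx node goes down, the VIP is automatically bound to the backup Nginx node, so the VIP stays reachable and Nginx remains highly available.

Deployment machines: 10.0.33.194 and 10.0.33.195, with 194 as the master.

2.1 Install the packages

Install nginx (on both machines):

# Download the source package

wget http://nginx.org/download/nginx-1.20.1.tar.gz

# Install the build dependencies

yum install gcc gcc-c++ pcre pcre-devel zlib zlib-devel openssl

# Unpack

tar -xvf nginx-1.20.1.tar.gz

cd nginx-1.20.1

# Configure the build

./configure --user=nginx \

--group=nginx \

--prefix=/usr/local/nginx \

--conf-path=/usr/local/nginx/nginx.conf \

--with-stream

# Compile and install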

make && make install


Nginx configuration file (identical on both machines)

Set the servers in upstream k8s-apiserver to the addresses and ports of your Kubernetes apiservers.

cat > /usr/local/nginx/nginx.conf << "EOF"
user nginx;
worker_processes auto;
error_log /usr/local/nginx/logs/error.log;
pid /usr/local/nginx/logs/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {
    worker_connections 1024;
}

# Layer 4 load balancing for the apiserver on the two Masters
stream {

    log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

    access_log /usr/local/nginx/logs/k8s-access.log main;

    upstream k8s-apiserver {
        server 10.0.33.190:6443; # Master1 APISERVER IP:PORT
        server 10.0.33.191:6443; # Master2 APISERVER IP:PORT
    }

    server {
        listen 16443; # If nginx shares a host with a master node, this port must not be 6443, or it will conflict
        proxy_pass k8s-apiserver;
    }
}

http {
    log_format main '$remote_addr - $remote_user [$time_local] "$request" '
                    '$status $body_bytes_sent "$http_referer" '
                    '"$http_user_agent" "$http_x_forwarded_for"';

    access_log /usr/local/nginx/logs/access.log main;

    sendfile on;
    tcp_nopush on;
    tcp_nodelay on;
    keepalive_timeout 65;
    types_hash_max_size 2048;

    include /usr/local/nginx/mime.types;
    default_type application/octet-stream;

    server {
        listen 80 default_server;
        server_name _;

        location / {
        }
    }
}
EOF
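Before relying on the load balancer, it helps to confirm that each backend in the upstream block is the address you intended. The sketch below extracts the `server` entries of a named upstream from the configuration file. `upstream_servers` is a hypothetical helper name, and the parsing assumes one directive per line, as in the file above.

```shell
#!/usr/bin/env bash
# Print the address:port of every server in the given upstream block.
#   $1 = upstream name, $2 = path to nginx.conf
upstream_servers() {
  awk '
    $1 == "upstream" && $2 == name { inside = 1; next }
    inside && $1 == "}"            { inside = 0 }
    inside && $1 == "server"       { sub(/;.*/, "", $2); print $2 }
  ' name="$1" "$2"
}

# Usage: query each apiserver directly, bypassing the load balancer:
#   for s in $(upstream_servers k8s-apiserver /usr/local/nginx/nginx.conf); do
#     curl -k "https://$s/version"
#   done
```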


Manage nginx with systemd:

cat > /usr/lib/systemd/system/nginx.service << "EOF"

[Unit]

Description=The Nginx HTTP Server daemon

After=network.target remote-fs.target nss-lookup.target

[Service]

# Type is the service type: simple for a service that runs a single main process, forking for one that forks child processes

Type=forking

ExecStart=/usr/local/nginx/sbin/nginx

ExecReload=/usr/local/nginx/sbin/nginx -s reload

ExecStop=/bin/kill -s QUIT ${MAINPID}

[Install]

WantedBy=multi-user.target

EOF


# Add the nginx group and user

groupadd nginx && useradd nginx -g nginx

# Reload systemd

systemctl daemon-reload

# Start nginx

systemctl start nginx

# Enable nginx at boot

systemctl enable nginx


Install keepalived:

# Install on both machines

yum install epel-release -y

yum install keepalived -y


2.2 Configure keepalived

keepalived configuration file (Nginx master, 194)

cat > /etc/keepalived/keepalived.conf << EOF
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id NGINX_MASTER
}

vrrp_script check_nginx {
    script "/etc/keepalived/check_nginx.sh"
}

vrrp_instance VI_1 {
    state MASTER
    interface ens32 # change to the actual NIC name
    virtual_router_id 51 # VRRP router ID, unique per VRRP instance (master and backup share the same value)
    priority 100 # priority; set 90 on the backup server
    advert_int 1 # VRRP advertisement (heartbeat) interval, 1 second by default
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    # Virtual IP
    virtual_ipaddress {
        10.0.33.196/24
    }
    track_script {
        check_nginx
    }
}
EOF


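• vrrp_script: the script that checks whether nginx is working (its result decides whether to fail over)

• virtual_ipaddress: the virtual IP (VIP)

Prepare the nginx health-check script referenced in the configuration above: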

cat > /etc/keepalived/check_nginx.sh << "EOF"

#!/bin/bash

count=$(ss -antp |grep 16443 |egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

exit 1

else

exit 0

fi

EOF

# Make the script executable

chmod +x /etc/keepalived/check_nginx.sh
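The script above counts every socket line that mentions 16443, so an established connection to that port is counted as well as the listener. A stricter variant checks for a listening socket only. This is a sketch rather than a replacement the original author prescribes: `port_is_listening` is a hypothetical helper name, and it assumes the `ss -lnt` column layout in which the fourth column is `Local Address:Port`.

```shell
#!/usr/bin/env bash
# Succeed (exit 0) if `ss -lnt` output on stdin shows a listener on the
# given port, fail (exit 1) otherwise. The port is taken as the text
# after the last ":" of the local address, so IPv6 listeners match too.
port_is_listening() {
  awk -v port="$1" '
    NR > 1 { n = split($4, parts, ":"); if (parts[n] == port) found = 1 }
    END    { exit(found ? 0 : 1) }
  '
}

# Usage inside a health-check script:
#   ss -lnt | port_is_listening 16443 || exit 1
```

The exit status follows the same convention keepalived expects from the original script: 0 when nginx is listening, non-zero otherwise.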


keepalived configuration file (Nginx backup, 195)

cat > /etc/keepalived/keepalived.conf << EOF
global_defs {
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id NGINX_BACKUP
}

vrrp_script check_nginx {
    script "/etc/keepalived/check_nginx.sh"
}

vrrp_instance VI_1 {
    state BACKUP
    interface ens32
    virtual_router_id 51 # VRRP router ID, unique per VRRP instance (master and backup share the same value)
    priority 90
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.33.196/24
    }
    track_script {
        check_nginx
    }
}
EOF
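The backup configuration differs from the master one in only three values: router_id, state, and priority. To keep the two files from drifting apart, the backup file can be derived from the master file. A minimal sketch follows; `to_backup_conf` and the file names in the usage comment are hypothetical.

```shell
#!/usr/bin/env bash
# Turn a master keepalived.conf (stdin) into the backup variant (stdout)
# by rewriting the three values that differ between the two machines.
to_backup_conf() {
  sed -e 's/router_id NGINX_MASTER/router_id NGINX_BACKUP/' \
      -e 's/state MASTER/state BACKUP/' \
      -e 's/priority 100/priority 90/'
}

# Usage:
#   to_backup_conf < keepalived-master.conf > keepalived-backup.conf
```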


Prepare the same nginx health-check script on the backup machine:

cat > /etc/keepalived/check_nginx.sh << "EOF"

#!/bin/bash

count=$(ss -antp |grep 16443 |egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

exit 1

else

exit 0

fi

EOF

# Make the script executable

chmod +x /etc/keepalived/check_nginx.sh


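Note: keepalived decides whether to fail over based on the script's exit code (0 means healthy, non-zero means unhealthy).

Start keepalived

# Start it on both machines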

systemctl daemon-reload

systemctl start keepalived

systemctl enable keepalived


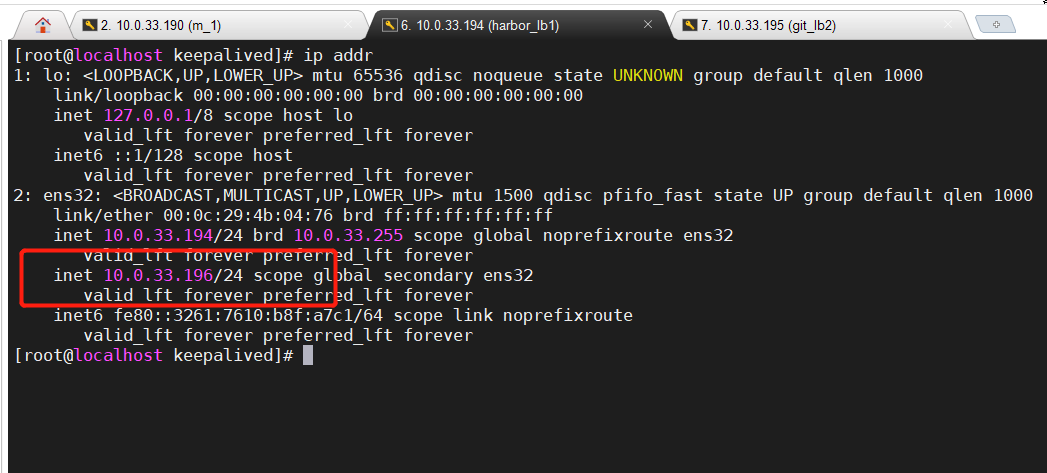

2.3 测试

查看keepalived工作状态

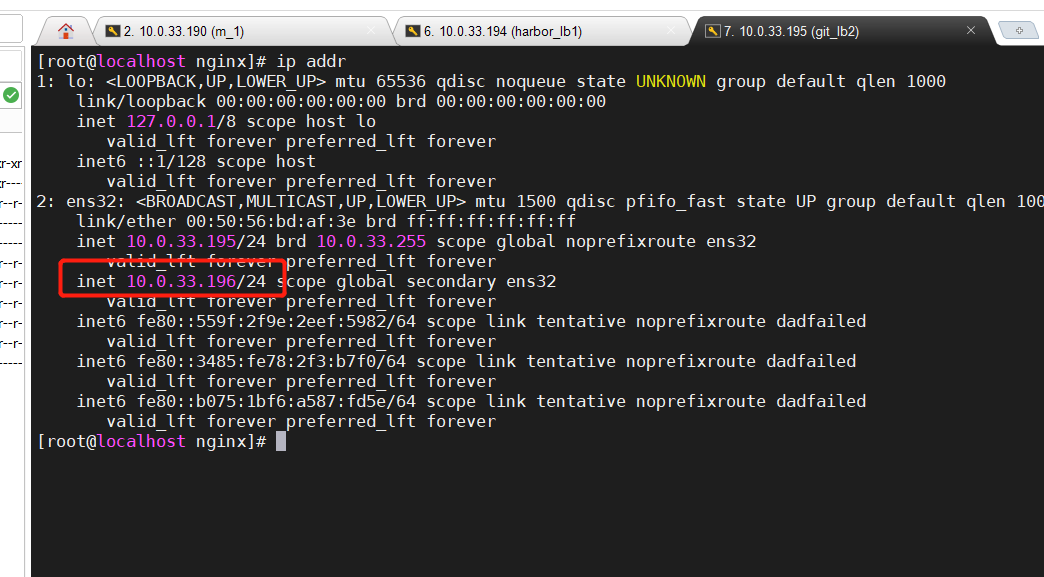

# nginx主节点查看网卡是否绑定虚拟ip

ip addr


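Nginx + Keepalived high-availability test

Stop nginx on the primary node, then check on the backup node whether the virtual IP has moved over.

# Run on the primary nginx node

pkill nginx

# Run on the backup nginx node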

ip addr
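The failover check comes down to whether the VIP appears among the addresses on the node. As a sketch, that can be turned into a yes/no test on the `ip addr` output. `has_vip` is a hypothetical helper name, and it assumes the usual `inet <address>/<prefix>` lines.

```shell
#!/usr/bin/env bash
# Succeed (exit 0) if the given IPv4 address is bound on this host,
# judging by `ip addr` output read on stdin; fail (exit 1) otherwise.
has_vip() {
  awk -v vip="$1" '
    $1 == "inet" { split($2, a, "/"); if (a[1] == vip) found = 1 }
    END          { exit(found ? 0 : 1) }
  '
}

# Usage on either nginx node:
#   ip addr | has_vip 10.0.33.196 && echo "VIP is here" || echo "VIP is not here"
```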


Test from a Kubernetes node

From any node in the cluster, use curl to query the Kubernetes version through the VIP. Make sure the firewall is disabled.

curl -k https://10.0.33.196:16443/version


如果可以正确获取到k8s版本信息,说明高可用负载正常。

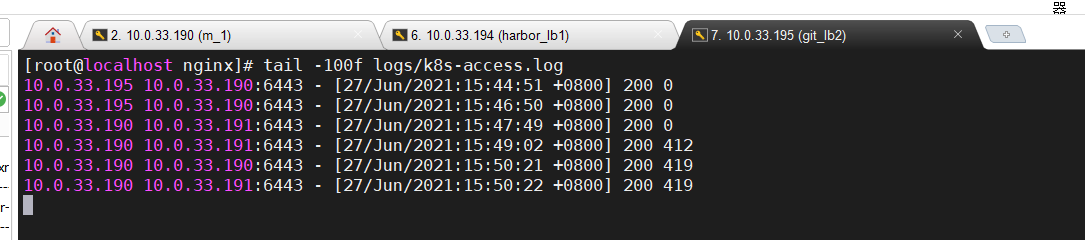

也可以通过查看Nginx日志也可以看到转发apiserver IP:

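3. Point the Nodes at the load balancer VIP

We have added the Master2 node and the load balancer, but the cluster was scaled out from a single-Master architecture, so every Node still connects to Master1's IP. Unless the Nodes are switched to the VIP so that traffic goes through the load balancer, the Master remains a single point of failure.

On every node, change 10.0.33.190:6443 to 10.0.33.196:16443 (the VIP) in all configuration files.

Run on all nodes (including master1 and master2):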

sed -i 's#10.0.33.190:6443#10.0.33.196:16443#' /opt/kubernetes/cfg/*

# Verify

grep "16443" /opt/kubernetes/cfg/*

# Restart

systemctl restart kubelet kube-proxy
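The sed command above replaces only the first match on each line and treats the dots in the IP as wildcards, which works for these files but is looser than necessary. A more defensive sketch follows: it escapes the dots, replaces every occurrence, keeps a .bak copy of each changed file, and reports any file that still contains the old endpoint. `switch_endpoint` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Replace an old apiserver endpoint with a new one in every regular file
# directly under a directory.
#   $1 = directory, $2 = old host:port, $3 = new host:port
# Returns 0 only if no file (ignoring the .bak copies) still contains
# the old endpoint; any remaining file names are printed.
switch_endpoint() {
  local dir="$1" old="$2" new="$3" old_re f
  # Escape dots so they match literally instead of any character.
  old_re=$(printf '%s' "$old" | sed 's/\./\\./g')
  for f in "$dir"/*; do
    [ -f "$f" ] || continue
    grep -q "$old_re" "$f" || continue
    sed -i.bak "s#${old_re}#${new}#g" "$f"
  done
  ! grep -rl --exclude='*.bak' "$old_re" "$dir"
}

# Usage on each node, followed by the restart shown above:
#   switch_endpoint /opt/kubernetes/cfg 10.0.33.190:6443 10.0.33.196:16443
```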


After that, check the node status:

kubectl get node


The highly available Kubernetes cluster is now complete. To add more nodes later, follow the steps above.

IX. Miscellaneous

1. Command completion

yum install bash-completion -y

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)
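The two source commands above only affect the current shell session. To keep completion after logging in again, the same lines can be added to ~/.bashrc. The sketch below appends a line only if it is not already present, so running it repeatedly does not create duplicates. `append_once` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Append a line to a file unless an identical line is already there.
#   $1 = line to add, $2 = target file
append_once() {
  local line="$1" file="$2"
  touch "$file"
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Usage:
#   append_once 'source /usr/share/bash-completion/bash_completion' ~/.bashrc
#   append_once 'source <(kubectl completion bash)' ~/.bashrc
```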
