
llama.cpp

Author: 神奇cpp | 2024-06-30 09:59:28


https://github.com/echonoshy/cgft-llm

[LLM Quantization] - Lightweight model deployment and quantization with Llama.cpp (Bilibili video)

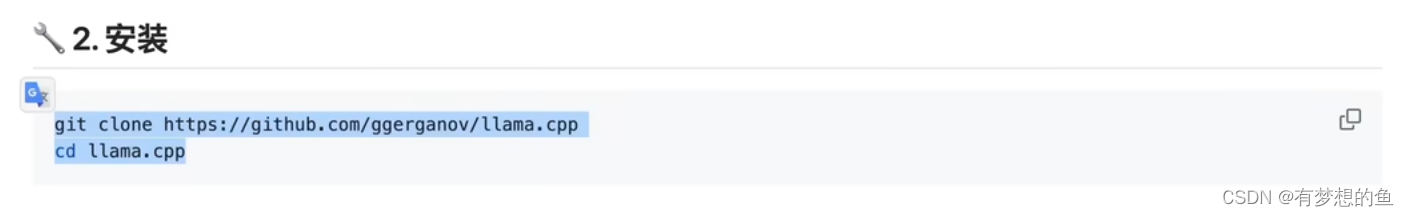

github.com/ggerganov/llama.cpp

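Requantize an existing q8_0 GGUF file to Q4_1 with the `quantize` tool from the CUDA build (later llama.cpp releases name this binary `llama-quantize`). `--allow-requantize` is required because the input is already quantized; llama.cpp warns that this can lose more quality than quantizing from 16- or 32-bit weights.

```shell
cd ~/code/llama.cpp/build_cuda/bin

./quantize --allow-requantize \
    /root/autodl-tmp/models/Llama3-8B-Chinese-Chat-GGUF/Llama3-8B-Chinese-Chat-q8_0-v2_1.gguf \
    /root/autodl-tmp/models/Llama3-8B-Chinese-Chat-GGUF/Llama3-8B-Chinese-Chat-q4_1-v1.gguf \
    Q4_1
```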
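As a rough illustration of what the `Q4_1` target type means: in ggml, Q4_1 splits the weights into blocks of 32 and stores, per block, a scale `d` and a minimum `m` (both fp16) plus one 4-bit code `q` per weight, reconstructed as `x ≈ d * q + m`. The Python sketch below mimics that rounding under simplifying assumptions: `d` and `m` stay Python floats instead of fp16, codes are left unpacked rather than two per byte, and the function names are hypothetical, not llama.cpp's API.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32  # Q4_1 groups weights into blocks of 32 values


def quantize_q4_1_block(xs: List[float]) -> Tuple[float, float, List[int]]:
    """Quantize one block to (scale d, minimum m, 4-bit codes in 0..15)."""
    lo, hi = min(xs), max(xs)
    d = (hi - lo) / 15.0            # 16 levels -> 15 steps from min to max
    inv_d = 1.0 / d if d else 0.0   # constant block: every code becomes 0
    # (x - m) / d is >= 0, so int(v + 0.5) rounds to the nearest level;
    # min(15, ...) keeps the code inside the 4-bit range.
    codes = [min(15, int((x - lo) * inv_d + 0.5)) for x in xs]
    return d, lo, codes


def dequantize_q4_1_block(d: float, m: float, codes: List[int]) -> List[float]:
    """Reconstruct approximate weights as x ~= d * q + m."""
    return [d * q + m for q in codes]


def quantize_q4_1(weights: List[float]) -> List[Tuple[float, float, List[int]]]:
    """Split a flat weight list into 32-value blocks and quantize each one."""
    if len(weights) % BLOCK_SIZE:
        raise ValueError(f"length must be a multiple of {BLOCK_SIZE}")
    return [
        quantize_q4_1_block(weights[i:i + BLOCK_SIZE])
        for i in range(0, len(weights), BLOCK_SIZE)
    ]


if __name__ == "__main__":
    weights = [math.sin(i / 5.0) for i in range(64)]
    blocks = quantize_q4_1(weights)
    restored = [x for d, m, q in blocks for x in dequantize_q4_1_block(d, m, q)]
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{len(blocks)} blocks, max abs error {max_err:.4f}")
```

Each reconstructed weight is off by at most half a step (`d / 2`), so blocks whose values span a wide range lose the most precision at 4 bits.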

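Convert the Hugging Face checkpoint to GGUF with q8_0 weights. The script lives in the llama.cpp repository root (later releases rename it `convert_hf_to_gguf.py`), so run it from there, e.g. after `cd ~/code/llama.cpp`, not from `build_cuda/bin`:

```shell
python convert-hf-to-gguf.py /root/autodl-tmp/models/Llama3-8B-Chinese-Chat \
    --outfile /root/autodl-tmp/models/Llama3-8B-Chinese-Chat-GGUF/Llama3-8B-Chinese-Chat-q8_0-v1.gguf \
    --outtype q8_0
```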
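The `--outtype q8_0` option in the conversion command writes 8-bit weights. In ggml's Q8_0 format each block of 32 weights shares one fp16 scale `d = max|x| / 127`, and each weight is stored as the int8 value `round(x / d)`, with ties rounded away from zero as C's `roundf` does. A minimal Python sketch of that scheme, assuming hypothetical function names and keeping the scale as a Python float instead of fp16:

```python
import math
from typing import List, Tuple


def round_half_away(v: float) -> int:
    """Round like C's roundf: ties go away from zero (Python's round() rounds ties to even)."""
    return int(math.copysign(math.floor(abs(v) + 0.5), v))


def quantize_q8_0_block(xs: List[float]) -> Tuple[float, List[int]]:
    """Quantize one block (32 values in ggml) to (scale d, int8 codes in -127..127)."""
    amax = max(abs(x) for x in xs)
    d = amax / 127.0                 # the largest magnitude maps to +/-127
    inv_d = 1.0 / d if d else 0.0    # all-zero block: every code becomes 0
    return d, [round_half_away(x * inv_d) for x in xs]


def dequantize_q8_0_block(d: float, qs: List[int]) -> List[float]:
    """Reconstruct approximate weights as x ~= d * q."""
    return [d * q for q in qs]


if __name__ == "__main__":
    block = [math.cos(i / 3.0) * 0.1 for i in range(32)]
    d, qs = quantize_q8_0_block(block)
    err = max(abs(a - b) for a, b in zip(block, dequantize_q8_0_block(d, qs)))
    print(f"scale {d:.6f}, max abs error {err:.6f}")
```

A Q8_0 block takes 34 bytes per 32 weights (about 8.5 bits per weight), versus 20 bytes (about 5 bits per weight) for Q4_1, which is why requantizing q8_0 to Q4_1 shrinks the file further at some cost in accuracy.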


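Disclaimer: This article was contributed by a user and does not represent the position of the wpsshop blog (【wpsshop博客】); copyright belongs to the original author, and this site assumes no legal responsibility. If you find infringing content, please contact us. When reposting, please cite the source: https://www.wpsshop.cn/w/神奇cpp/article/detail/772236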
