
Deploying an ES Cluster on k8s

Author: 繁依Fanyi0 | 2024-07-01 09:03:11


I. k8s cluster architecture:

| IP | Role |
|---|---|
| 192.168.1.3 | master1 |
| 192.168.1.4 | master2 |
| 192.168.1.5 | master3 |
| 192.168.1.6 | node1 |
| 192.168.1.7 | node2 |

II. Deploying the ES cluster

1. Configure a StorageClass so that dynamically created PVCs are bound to PVs automatically

[root@master1 tmp]# cat sc.yaml
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: local-storage
provisioner: kubernetes.io/no-provisioner
volumeBindingMode: WaitForFirstConsumer

Apply it:

kubectl apply -f sc.yaml

2. Create the namespace

kubectl create ns elasticsearch

3. Create the PVs

[root@master1 elk]# cat pv.yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: local-storage-pv-1
  namespace: elasticsearch
  labels:
    name: local-storage-pv-1
spec:
  capacity:
    storage: 1Gi
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local-storage
  local:
    path: /data/es
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - master1
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: local-storage-pv-2
  namespace: elasticsearch
  labels:
    name: local-storage-pv-2
spec:
  capacity:
    storage: 1Gi
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local-storage
  local:
    path: /data/es
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - master2
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: local-storage-pv-3
  namespace: elasticsearch
  labels:
    name: local-storage-pv-3
spec:
  capacity:
    storage: 1Gi
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local-storage
  local:
    path: /data/es
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - master3
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: local-storage-pv-4
  namespace: elasticsearch
  labels:
    name: local-storage-pv-4
spec:
  capacity:
    storage: 1Gi
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local-storage
  local:
    path: /data/es
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - node1
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: local-storage-pv-5
  namespace: elasticsearch
  labels:
    name: local-storage-pv-5
spec:
  capacity:
    storage: 1Gi
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local-storage
  local:
    path: /data/es
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - node2

There are five PVs in total, each pinned to one k8s node through nodeSelectorTerms.

Apply it:

kubectl apply -f pv.yaml
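Because the five PV definitions differ only in their index and node hostname, the manifest can also be generated instead of written out by hand. The following is a minimal sketch, not the author's method: the helper names `gen_pv` and `gen_all_pvs` are hypothetical, the node names and the `/data/es` path are taken from the manifest above, and `metadata.namespace` is omitted because PersistentVolumes are cluster-scoped. Note that a local volume expects the `/data/es` directory to already exist on each node.

```shell
#!/bin/sh
# Print one local PersistentVolume manifest.
# $1 = PV index, $2 = node hostname (both are arguments of this hypothetical helper)
gen_pv() {
  cat <<EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: local-storage-pv-$1
  labels:
    name: local-storage-pv-$1
spec:
  capacity:
    storage: 1Gi
  accessModes:
  - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local-storage
  local:
    path: /data/es
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - $2
EOF
}

# Emit all five PVs as one multi-document YAML stream,
# separating the documents with "---".
gen_all_pvs() {
  i=1
  for node in master1 master2 master3 node1 node2; do
    if [ "$i" -gt 1 ]; then
      echo "---"
    fi
    gen_pv "$i" "$node"
    i=$((i + 1))
  done
}
```

Under these assumptions, `gen_all_pvs | kubectl apply -f -` would create the same five PVs as pv.yaml.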

3. Create the StatefulSet. ES is a database-type (stateful) application, and StatefulSet is the workload type suited to such applications

[root@master1 elk]# cat sts.yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: es7-cluster
  namespace: elasticsearch
spec:
  serviceName: elasticsearch7
  replicas: 5
  selector:
    matchLabels:
      app: elasticsearch7
  template:
    metadata:
      labels:
        app: elasticsearch7
    spec:
      containers:
      - name: elasticsearch7
        image: elasticsearch:7.16.2
        resources:
          limits:
            cpu: 1000m
          requests:
            cpu: 100m
        ports:
        - containerPort: 9200
          name: rest
          protocol: TCP
        - containerPort: 9300
          name: inter-node
          protocol: TCP
        volumeMounts:
        - name: data
          mountPath: /usr/share/elasticsearch/data
        env:
        - name: cluster.name
          value: k8s-logs
        - name: node.name
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: discovery.zen.minimum_master_nodes
          value: "2"
        - name: discovery.seed_hosts
          value: "es7-cluster-0.elasticsearch7,es7-cluster-1.elasticsearch7,es7-cluster-2.elasticsearch7"
        - name: cluster.initial_master_nodes
          value: "es7-cluster-0,es7-cluster-1,es7-cluster-2"
        - name: ES_JAVA_OPTS
          value: "-Xms1g -Xmx1g"
      initContainers:
      - name: fix-permissions
        image: busybox
        command: ["sh", "-c", "chown -R 1000:1000 /usr/share/elasticsearch/data"]
        securityContext:
          privileged: true
        volumeMounts:
        - name: data
          mountPath: /usr/share/elasticsearch/data
      - name: increase-vm-max-map
        image: busybox
        command: ["sysctl", "-w", "vm.max_map_count=262144"]
        securityContext:
          privileged: true
      - name: increase-fd-ulimit
        image: busybox
        command: ["sh", "-c", "ulimit -n 65536"]
  volumeClaimTemplates:
  - metadata:
      name: data
    spec:
      accessModes: [ "ReadWriteOnce" ]
      storageClassName: "local-storage"
      resources:
        requests:
          storage: 1Gi

The ES cluster references the StorageClass through volumeClaimTemplates, and each PVC created from the template is bound automatically to a matching PV.

Apply it:

kubectl apply -f sts.yaml
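A StatefulSet names each PVC it creates from a volumeClaimTemplate as `<template name>-<StatefulSet name>-<ordinal>`. The sketch below only illustrates that naming rule so the expected claims can be listed up front; the function name `pvc_names` is hypothetical and nothing here talks to the cluster.

```shell
#!/bin/sh
# Print the PVC names a StatefulSet derives from a volumeClaimTemplate:
#   <template name>-<StatefulSet name>-<ordinal>
# $1 = template name, $2 = StatefulSet name, $3 = replica count
pvc_names() {
  template=$1
  sts=$2
  replicas=$3
  i=0
  while [ "$i" -lt "$replicas" ]; do
    echo "${template}-${sts}-${i}"
    i=$((i + 1))
  done
}
```

For the manifest above, `pvc_names data es7-cluster 5` lists `data-es7-cluster-0` through `data-es7-cluster-4`, which can be compared with the output of `kubectl get pvc -n elasticsearch`.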

4. Create a NodePort Service to expose the ES cluster

[root@master1 elk]# cat svc.yaml
apiVersion: v1
kind: Service
metadata:
  name: elasticsearch7
  namespace: elasticsearch
spec:
  selector:
    app: elasticsearch7
  type: NodePort
  ports:
  - port: 9200
    nodePort: 30002
    targetPort: 9200

Apply it:

kubectl apply -f svc.yaml

That completes the creation. Let's check the status of the resources created above:

(1) storageclass

[root@master1 elk]# kubectl get sc
NAME            PROVISIONER                    RECLAIMPOLICY   VOLUMEBINDINGMODE      ALLOWVOLUMEEXPANSION   AGE
local-storage   kubernetes.io/no-provisioner   Delete          WaitForFirstConsumer   false                  21h

(2) PV

[root@master1 elk]# kubectl get pv
NAME                 CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS   CLAIM                              STORAGECLASS    REASON   AGE
local-storage-pv-1   1Gi        RWO            Retain           Bound    elasticsearch/data-es7-cluster-1   local-storage            21h
local-storage-pv-2   1Gi        RWO            Retain           Bound    elasticsearch/data-es7-cluster-2   local-storage            21h
local-storage-pv-3   1Gi        RWO            Retain           Bound    elasticsearch/data-es7-cluster-0   local-storage            21h
local-storage-pv-4   1Gi        RWO            Retain           Bound    elasticsearch/data-es7-cluster-4   local-storage            19m
local-storage-pv-5   1Gi        RWO            Retain           Bound    elasticsearch/data-es7-cluster-3   local-storage            19m

[root@master1 tmp]# kubectl get pvc -n elasticsearch
NAME                 STATUS   VOLUME               CAPACITY   ACCESS MODES   STORAGECLASS    AGE
data-es7-cluster-0   Bound    local-storage-pv-3   1Gi        RWO            local-storage   21h
data-es7-cluster-1   Bound    local-storage-pv-1   1Gi        RWO            local-storage   21h
data-es7-cluster-2   Bound    local-storage-pv-2   1Gi        RWO            local-storage   21h
data-es7-cluster-3   Bound    local-storage-pv-5   1Gi        RWO            local-storage   20m
data-es7-cluster-4   Bound    local-storage-pv-4   1Gi        RWO            local-storage   19m

(3) StatefulSet

[root@master1 elk]# kubectl get statefulset -n elasticsearch
NAME          READY   AGE
es7-cluster   5/5     21h

[root@master1 tmp]# kubectl get pod -n elasticsearch
NAME            READY   STATUS    RESTARTS   AGE
es7-cluster-0   1/1     Running   1          21h
es7-cluster-1   1/1     Running   1          21h
es7-cluster-2   1/1     Running   1          21h
es7-cluster-3   1/1     Running   0          19m
es7-cluster-4   1/1     Running   0          18m

(4) Service

[root@master1 tmp]# kubectl get svc -n elasticsearch
NAME             TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
elasticsearch7   NodePort   10.97.196.176   <none>        9200:30002/TCP   21h

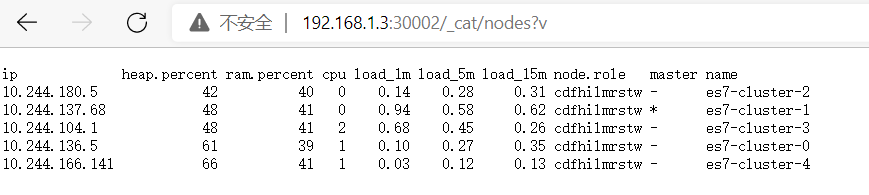

(5) Check the ES cluster status through the REST API

[root@master1 elk]# curl 192.168.1.3:30002/_cat/nodes?v
ip             heap.percent ram.percent cpu load_1m load_5m load_15m node.role   master name
10.244.136.5             60          39   1    0.19    0.31     0.36 cdfhilmrstw -      es7-cluster-0
10.244.104.1             45          41   1    0.98    0.44     0.25 cdfhilmrstw -      es7-cluster-3
10.244.180.5             40          40   1    0.28    0.32     0.32 cdfhilmrstw -      es7-cluster-2
10.244.166.141           63          41   1    0.06    0.14     0.14 cdfhilmrstw -      es7-cluster-4
10.244.137.68            44          41   1    0.29    0.45     0.59 cdfhilmrstw *      es7-cluster-1
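In the `_cat/nodes?v` output, the row marked `*` in the `master` column is the currently elected master. As a small sketch for scripting that check, the hypothetical helper `elected_master` below reads the output on stdin and uses the header row to locate the `master` and `name` columns rather than hard-coding their positions.

```shell
#!/bin/sh
# Read `_cat/nodes?v` output on stdin and print the name of the elected master.
# The header row is used to find the "master" and "name" column positions.
elected_master() {
  awk '
    NR == 1 {
      for (i = 1; i <= NF; i++) {
        if ($i == "master") m = i
        if ($i == "name") n = i
      }
      next
    }
    m && n && $m == "*" { print $n }
  '
}
```

A possible invocation, assuming the NodePort from the Service above, is `curl -s '192.168.1.3:30002/_cat/nodes?v' | elected_master`; the URL is quoted so the shell does not treat `?` as a glob character.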

Access from a web browser also works:

5. Deploy kibana

We deploy kibana as a Deployment and expose it through a NodePort Service. The complete manifest is as follows:

[root@master1 elk]# cat kibana.yaml
apiVersion: v1
kind: Service
metadata:
  name: kibana
  namespace: elasticsearch
  labels:
    app: kibana
spec:
  ports:
  - port: 5601
    targetPort: 5601
    nodePort: 30001
  type: NodePort
  selector:
    app: kibana
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana
  namespace: elasticsearch
  labels:
    app: kibana
spec:
  selector:
    matchLabels:
      app: kibana
  template:
    metadata:
      labels:
        app: kibana
    spec:
      nodeSelector:
        node: node2
      containers:
      - name: kibana
        image: kibana:7.16.2
        resources:
          limits:
            cpu: 1000m
          requests:
            cpu: 1000m
        env:
        - name: ELASTICSEARCH_HOSTS
          value: http://elasticsearch7:9200
        - name: SERVER_PUBLICBASEURL
          value: "0.0.0.0:5601"
        - name: I18N.LOCALE
          value: zh-CN
        ports:
        - containerPort: 5601

In the Deployment above, nodeSelector schedules the pod onto node2, which requires that node2 already carries the label node=node2.
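The label has to exist before the Deployment is applied, otherwise the kibana pod stays Pending. The sketch below wraps the two relevant kubectl calls; the function names and the `KUBECTL` override variable are hypothetical conveniences, and it assumes the node is registered under the name node2.

```shell
#!/bin/sh
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the
# command line without touching the cluster.
KUBECTL=${KUBECTL:-kubectl}

# Add the label node=<node name> that the Deployment's nodeSelector expects.
# $1 = node name
label_kibana_node() {
  "$KUBECTL" label nodes "$1" node="$1" --overwrite
}

# List the nodes carrying a given label, to confirm the selector can match.
# $1 = label selector, e.g. node=node2
nodes_with_label() {
  "$KUBECTL" get nodes -l "$1" -o name
}
```

Calling `label_kibana_node node2` followed by `nodes_with_label node=node2` should then list node2.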

Apply it:

[root@master1 elk]# kubectl apply -f kibana.yaml

Check the status again:

[root@master1 elk]# kubectl get all -n elasticsearch
NAME                          READY   STATUS    RESTARTS   AGE
pod/es7-cluster-0             1/1     Running   0          47m
pod/es7-cluster-1             1/1     Running   0          46m
pod/es7-cluster-2             1/1     Running   0          46m
pod/es7-cluster-3             1/1     Running   0          43m
pod/es7-cluster-4             1/1     Running   0          42m
pod/kibana-768595479f-mhw9q   1/1     Running   0          13m

NAME                     TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
service/elasticsearch7   NodePort   10.108.115.19   <none>        9200:30002/TCP   47m
service/kibana           NodePort   10.111.66.207   <none>        5601:30001/TCP   13m

NAME                     READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/kibana   1/1     1            1           13m

NAME                                DESIRED   CURRENT   READY   AGE
replicaset.apps/kibana-768595479f   1         1         1       13m

NAME                           READY   AGE
statefulset.apps/es7-cluster   5/5     47m

The kibana Service and Pod are both running. Verify in a browser that kibana is reachable:
