Setting up and testing a Spark high-availability cluster

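This article builds on the earlier post about setting up a Spark cluster; steps already covered there are abbreviated here.

The earlier setup used four nodes: master01, slave01, slave02, and slave03.

This article adds two more nodes, master02 and CloudDeskTop, and configures their runtime environment.

I. Procedure:

1. Before bringing up the high-availability cluster, configure high availability, starting on master01: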

[hadoop@master01 ~]$ cd /software/spark-2.1.1/conf/

[hadoop@master01 conf]$ vi spark-env.sh

export JAVA_HOME=/software/jdk1.7.0_79

export SCALA_HOME=/software/scala-2.11.8

export HADOOP_HOME=/software/hadoop-2.7.3

export HADOOP_CONF_DIR=/software/hadoop-2.7.3/etc/hadoop

# Memory allocated to the Spark daemons themselves (Master, Worker, and the history server)

export SPARK_DAEMON_MEMORY=512m

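# The next entry is the Spark high-availability setting. It must be present on the master nodes for master HA and on the slave nodes for slave HA; configuring it on all nodes is recommended.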

export SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=slave01:2181,slave02:2181,slave03:2181 -Dspark.deploy.zookeeper.dir=/spark"

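# Once Spark HA is enabled, the next entry should be commented out (it must no longer be set; the HA cluster nodes are given later through the --master parameter when submitting an application)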

#export SPARK_MASTER_IP=master01

#export SPARK_WORKER_MEMORY=1500m

#export SPARK_EXECUTOR_MEMORY=100m
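Because the Masters rely on the ZooKeeper ensemble named in spark.deploy.zookeeper.url, it can help to confirm that each ZooKeeper server answers before starting Spark. The following is only a sketch: the function names zk_endpoints and check_zk are made up for illustration, and check_zk assumes that nc (netcat) is installed and that the ZooKeeper version in use accepts the "ruok" four-letter command.

```shell
#!/bin/sh
# Same value as -Dspark.deploy.zookeeper.url in spark-env.sh
ZK_URL="slave01:2181,slave02:2181,slave03:2181"

# Print one host:port pair per line (hypothetical helper)
zk_endpoints() {
    printf '%s\n' "$1" | tr ',' '\n'
}

# Send "ruok" to every endpoint; a healthy ZooKeeper server replies "imok".
# Assumption: nc is available. Not called below because it needs network access.
check_zk() {
    for endpoint in $(zk_endpoints "$1"); do
        host=${endpoint%%:*}
        port=${endpoint##*:}
        reply=$(printf 'ruok' | nc -w 2 "$host" "$port" 2>/dev/null)
        if [ "$reply" = "imok" ]; then
            echo "$endpoint ok"
        else
            echo "$endpoint NOT responding"
        fi
    done
}

# List the endpoints that would be checked
zk_endpoints "$ZK_URL"
```

On a machine that can reach the cluster, running check_zk "$ZK_URL" performs the actual probe.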

2. Copy the Spark configuration file spark-env.sh from master01 to every Worker node in the Spark cluster:

[hadoop@master01 software]$ scp -r spark-2.1.1/conf/spark-env.sh slave01:/software/spark-2.1.1/conf/

[hadoop@master01 software]$ scp -r spark-2.1.1/conf/spark-env.sh slave02:/software/spark-2.1.1/conf/

[hadoop@master01 software]$ scp -r spark-2.1.1/conf/spark-env.sh slave03:/software/spark-2.1.1/conf/
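The three copies can also be written as a loop. This is a minimal sketch, assuming the same install path on every node; the function name sync_spark_env and its preview argument are illustrative and not part of the original setup.

```shell
#!/bin/sh
# Worker hostnames and config path as used in this article
WORKERS="slave01 slave02 slave03"
CONF_FILE="/software/spark-2.1.1/conf/spark-env.sh"

# $1 is a command prefix: pass "echo" to preview the commands,
# or an empty string to really run scp (hypothetical helper).
sync_spark_env() {
    for host in $WORKERS; do
        $1 scp "$CONF_FILE" "$host:$CONF_FILE"
    done
}

# Preview only: prints the three scp commands without copying anything
sync_spark_env echo
```

Calling sync_spark_env "" on master01 as the hadoop user performs the copy.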

3. Configure high availability on master02:

# Copy the Scala and Spark installation directories to master02

[hadoop@master01 software]$ scp -r scala-2.11.8 spark-2.1.1 master02:/software/

[hadoop@master02 software]$ su -lc "chown -R root:root /software/scala-2.11.8"

# Copy the environment configuration /etc/profile to master02

[hadoop@master01 software]$ su -lc "scp -r /etc/profile master02:/etc/"

# Make the environment configuration take effect immediately (run this on master02, in the shell you will keep using)

[hadoop@master02 software]$ source /etc/profile

4. Configure CloudDeskTop in the same way, so that development can be done in Eclipse:

# Copy the Scala and Spark installation directories to CloudDeskTop

[hadoop@master01 software]$ scp -r scala-2.11.8 spark-2.1.1 CloudDeskTop:/software/

[hadoop@CloudDeskTop software]$ su -lc "chown -R root:root /software/scala-2.11.8"

# Set up the environment configuration in /etc/profile on CloudDeskTop

[hadoop@CloudDeskTop software]$ vi /etc/profile

JAVA_HOME=/software/jdk1.7.0_79

HADOOP_HOME=/software/hadoop-2.7.3

HBASE_HOME=/software/hbase-1.2.6

SQOOP_HOME=/software/sqoop-1.4.6

SCALA_HOME=/software/scala-2.11.8

SPARK_HOME=/software/spark-2.1.1

PATH=$PATH:$JAVA_HOME/bin:$JAVA_HOME/lib:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HBASE_HOME/bin:$SQOOP_HOME/bin:$SCALA_HOME/bin:$SPARK_HOME/bin

export PATH JAVA_HOME HADOOP_HOME HBASE_HOME SQOOP_HOME SCALA_HOME SPARK_HOME

# Make the environment configuration take effect immediately:

[hadoop@CloudDeskTop software]$ source /etc/profile

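II. Starting the Spark cluster

Starting everything by hand each time is tedious, so I wrote a few simple scripts. The first one, which synchronizes the clocks, runs as root; the others run as the hadoop user.

Time synchronization: synchronizedDate.sh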

start-total.sh

stop-total.sh

[hadoop@master01 install]$ sh start-total.sh

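III. Testing the high-availability cluster:

Open the following addresses in a browser:

http://<IP address of master01>:8080/ # shows Status: ALIVE

http://<IP address of master02>:8080/ # shows Status: STANDBY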
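The same check can be scripted instead of using a browser. This sketch assumes that the standalone Master web UI also serves its state as JSON under /json/ with a top-level "status" field, and that curl is installed; the helper name master_status is invented for illustration.

```shell
#!/bin/sh
# Read Master JSON on stdin and print the value of its "status" field,
# for example ALIVE or STANDBY (hypothetical helper).
master_status() {
    sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([A-Z_]*\)".*/\1/p' | head -n 1
}

# Usage on a machine that can reach the cluster (assumes curl and the /json/ endpoint):
#   curl -s http://master01:8080/json/ | master_status
#   curl -s http://master02:8080/json/ | master_status
```

With both Masters up, one address should report ALIVE and the other STANDBY, matching what the web pages show.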

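Thanks to Mr. Li Yongfu for the following expert summary:

Note: the access test above leads to these conclusions:

0) The cluster state that ZK stores is also called metadata; it covers workers, drivers, and applications.

1) The hosts listed in Spark's slaves configuration file run Worker processes. Any node not defined there as a worker can run a Master process, and ZK elects the ALIVE Master from the nodes on which a Master process has been started.

2) The ZK cluster manages every node that runs a Master process as a member of the high-availability cluster.

3) If the Master in the ALIVE state goes down, the ZK cluster automatically moves the ALIVE role to another node in the high-availability cluster, which continues to provide service. If the failed Master later recovers, the ALIVE role does not move back; the current ALIVE node stays in charge. This shows that ZK implements a dual-master or multi-master style of high availability.

4) A Spark cluster can have more than two Master nodes in its high-availability group.

5) The ZK cluster decides which Master is active. When the ALIVE Master goes down, ZK likewise decides the new ALIVE Master. Once it is chosen, the new ALIVE Master actively notifies the clients (spark-shell, spark-submit) so that they connect to it. In other words, the server contacts the client and tells it where to connect. This differs from Hadoop, where the Hadoop client itself identifies the active node of the high-availability cluster.

6) High availability in both Hadoop and Spark is the ZK-based dual-master or multi-master mode, not a primary/backup mode like that of KP. The two modes differ as follows:

Dual-master mode: the hosts acting as master are peers with no priority among them. A switch happens only when one master goes down, and the role then moves to the other master.

Primary/backup mode: the hosts acting as master are not peers; there is a priority (primary > backup). A switch happens in two cases:

A. When the primary master goes down, the role switches automatically to the backup master.

B. When the primary master recovers, the role switches automatically back to the primary master.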

IV. Running tests:

# Delete the old output directory from previous runs

[hadoop@CloudDeskTop install]$ hdfs dfs -rm -r /spark/output

1. Prepare the test data

[hadoop@CloudDeskTop install]$ hdfs dfs -ls /spark

Found 1 items

drwxr-xr-x - hadoop supergroup 0 2018-01-05 15:14 /spark/input

[hadoop@CloudDeskTop install]$ hdfs dfs -ls /spark/input

Found 1 items

-rw-r--r-- 3 hadoop supergroup 66 2018-01-05 15:14 /spark/input/wordcount

[hadoop@CloudDeskTop install]$ hdfs dfs -cat /spark/input/wordcount

my name is ligang

my age is 35

my height is 1.67

my weight is 118

2. When running spark-shell and spark-submit, use the --master parameter to list every Master node of the high-availability cluster, separated by ASCII commas, as follows:

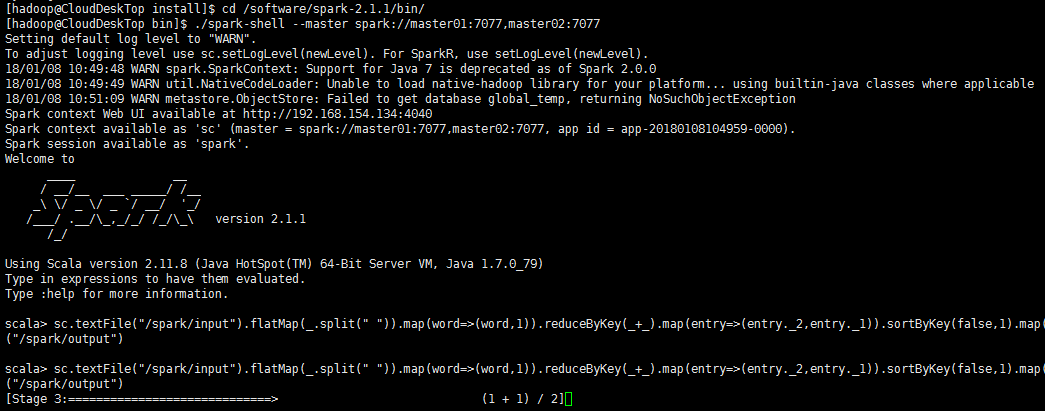
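To avoid typing the node list by hand, the value for --master can be assembled from the host names. This is a small sketch; the function name build_master_url is hypothetical, and 7077 is the Master port used in this article.

```shell
#!/bin/sh
# Build "spark://host1:port,host2:port,..." from a port and a list of hosts
# (hypothetical helper).
build_master_url() {
    port=$1
    shift
    url="spark://"
    sep=""
    for host in "$@"; do
        url="${url}${sep}${host}:${port}"
        sep=","
    done
    printf '%s\n' "$url"
}

build_master_url 7077 master01 master02
# prints spark://master01:7077,master02:7077
```

The result can then be passed along, for example ./spark-shell --master "$(build_master_url 7077 master01 master02)".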

1) Using spark-shell

[hadoop@CloudDeskTop bin]$ pwd

/software/spark-2.1.1/bin

[hadoop@CloudDeskTop bin]$ ./spark-shell --master spark://master01:7077,master02:7077

scala> sc.textFile("/spark/input").flatMap(_.split(" ")).map(word=>(word,1)).reduceByKey(_+_).map(entry=>(entry._2,entry._1)).sortByKey(false,1).map(entry=>(entry._2,entry._1)).saveAsTextFile("/spark/output")

scala> :q

Check the output in the HDFS cluster:

[hadoop@slave01 ~]$ hdfs dfs -ls /spark/

Found 2 items

drwxr-xr-x - hadoop supergroup 0 2018-01-05 15:14 /spark/input

drwxr-xr-x - hadoop supergroup 0 2018-01-08 10:53 /spark/output

[hadoop@slave01 ~]$ hdfs dfs -ls /spark/output

Found 2 items

-rw-r--r-- 3 hadoop supergroup 0 2018-01-08 10:53 /spark/output/_SUCCESS

-rw-r--r-- 3 hadoop supergroup 88 2018-01-08 10:53 /spark/output/part-00000

[hadoop@slave01 ~]$ hdfs dfs -cat /spark/output/part-00000

(is,4)

(my,4)

(118,1)

(1.67,1)

(35,1)

(ligang,1)

(weight,1)

(name,1)

(height,1)

(age,1)
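The counts above can be cross-checked without Spark by running the same four input lines through standard Unix tools. This is only a sketch for verification: the function name count_words is made up, and the order of words with equal counts may differ from the Spark output.

```shell
#!/bin/sh
# Split on spaces, count each word, sort by count (descending),
# and print in the same (word,count) form that Spark wrote (hypothetical helper).
count_words() {
    tr -s ' ' '\n' | sort | uniq -c | sort -rn | awk '{ printf "(%s,%s)\n", $2, $1 }'
}

# Same content as /spark/input/wordcount
count_words <<'EOF'
my name is ligang
my age is 35
my height is 1.67
my weight is 118
EOF
```

Both "my" and "is" should come out with a count of 4 and every other word with a count of 1, as in part-00000.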

2) Using spark-submit

[hadoop@CloudDeskTop bin]$ ./spark-submit --class org.apache.spark.examples.JavaSparkPi --master spark://master01:7077,master02:7077 ../examples/jars/spark-examples_2.11-2.1.1.jar 1

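The job prints its estimate of Pi to the console (a line beginning with "Pi is roughly").

3) Testing whether Spark high availability also covers running jobs

While a job is running, kill the ALIVE Master process and observe whether Spark can still finish the job in the high-availability cluster.

Practical testing leads to this conclusion: Spark high availability is smarter than Yarn high availability and keeps a running job alive through a Master failover, the same capability that HDFS high availability offers. The likely reason is that, while a job runs, Spark Worker nodes depend on the Master far less than NM nodes depend on the RM node in a Yarn cluster.

4) Stop the cluster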

[hadoop@master01 install]$ sh stop-total.sh

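Notes:

1. To run only one Master node under the Spark high-availability configuration, simply shut the other one down; no configuration change is needed. When multi-node high availability is needed again, start the Master process on those nodes. ZK detects each Master process as it starts, adds it to the high-availability group, and assigns it the appropriate role.

2. If only one Master node is running under the Spark high-availability configuration, that node must hold the ALIVE role, and the --master parameter of spark-submit should name only that node. If nodes without a running Master process are still included in the --master list, an IOException is raised, but the job as a whole still succeeds, because running a job requires only the ALIVE Master.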