热门标签

热门文章

- 1超级简单的Python爬虫入门教程(非常详细),通俗易懂,看一遍就会了_爬虫python入门

- 2【Doris】Doris 最佳实践-Compaction调优(3)_doris中如何查看哪个表的版本有堆积?

- 3⑩② 【MySQL索引】详解MySQL`索引`:结构、分类、性能分析、设计及使用规则。_mysql 索引名带`

- 4CentOS 7.6 防火墙打开、关闭,端口开启、关闭_centos7.6开放端口命令

- 5李宏毅-2023春机器学习 ML2023 SPRING-学习笔记:2/24 正确认识chatGPT_李宏毅2023gpt

- 6yaw(pan)/pitch(tilt)/roll计算_yaw pitch roll计算

- 7Vivado PLL锁相环 IP核的使用

- 8雪花id如何保证连续且不重复

- 9解决ssh:connect to host github.com port 22: Connection timed out与kex_exchange_identification_cloning into 'gonganzichan'... ssh: connect to hos

- 10循环队列的初始化,入队,出队,求队长,取队头元素_循环队列初始化

当前位置: article > 正文

使用Linux部署Kafka教程_linux搭建kafka

作者:菜鸟追梦旅行 | 2024-04-14 03:14:59

赞

踩

linux搭建kafka

目录

一、部署Zookeeper

1、创建一个网络

- # app-tier:网络名称 # –driver:网络类型为bridge

-

- docker network create app-kafka --driver bridge

2、拉取Zookeeper镜像

docker pull bitnami/zookeeper:latest

3、运行Zookeeper

- # Kafka依赖zookeeper所以先安装zookeeper

- # -p:设置映射端口(默认2181)

- # -d:后台启动

-

- docker run -d --name zookeeper-server \

- -p 2181:2181 \

- --network app-kafka \

- -e ALLOW_ANONYMOUS_LOGIN=yes \

- bitnami/zookeeper:latest

二、部署Kafka

1、拉取Kafka镜像

docker pull bitnami/kafka:latest

2、运行Kafka

- # 安装并运行Kafka,

- # –name:容器名称

- # -p:设置映射端口(默认9092 )

- # -d:后台启动

- # ALLOW_PLAINTEXT_LISTENER任何人可以访问

- # KAFKA_CFG_ZOOKEEPER_CONNECT链接的zookeeper

- # KAFKA_ADVERTISED_HOST_NAME当前主机IP或地址(重点:如果是服务器部署则配服务器IP或域名否则客户端监听消息会报地址错误)

- # -e KAFKA_CFG_ADVERTISED_LISTENERS=PLAINTEXT://8.142.83.78:9092 这个IP一定要是外网IP,不要设置为内网IP

-

- docker run -d --name kafka-server \

- --network app-kafka \

- -p 9092:9092 \

- -e ALLOW_PLAINTEXT_LISTENER=yes \

- -e KAFKA_CFG_ZOOKEEPER_CONNECT=zookeeper-server:2181 \

- -e KAFKA_CFG_ADVERTISED_LISTENERS=PLAINTEXT://8.142.83.78:9092 \

- bitnami/kafka:latest

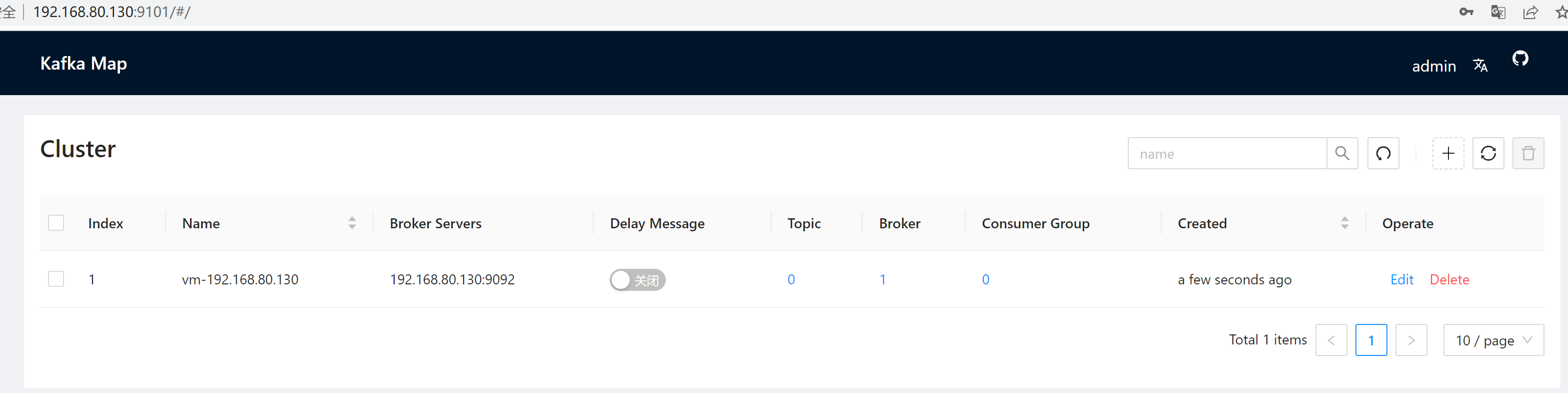

三、kafka-map图形化管理工具

- # 图形化管理工具

- # 访问地址:http://服务器IP:9101/

- # DEFAULT_USERNAME:默认账号admin

- # DEFAULT_PASSWORD:默认密码admin

-

- # Git 地址:https://github.com/dushixiang/kafka-# map/blob/master/README-zh_CN.md

-

- docker run -d --name kafka-map \

- --network app-kafka \

- -p 9101:8080 \

- -v /opt/kafka-map/data:/usr/local/kafka-map/data \

- -e DEFAULT_USERNAME=admin \

- -e DEFAULT_PASSWORD=admin \

- --restart always dushixiang/kafka-map:latest

四、验证是否部署成功

1、查看zookeeper中有关kafka的配置

- docker exec -it zookeeper-server /bin/bash

-

- cd /bin

- sh zkCli.sh -server 127.0.0.1:2181

-

- [zk: 127.0.0.1:2181(CONNECTED) 7] ls /

- [zookeeper, kafka]

- [zk: 127.0.0.1:2181(CONNECTED) 8] ls /kafka

- [cluster, controller, controller_epoch, brokers, admin, isr_change_notification, consumers, config]

- [zk: 127.0.0.1:2181(CONNECTED) 9]

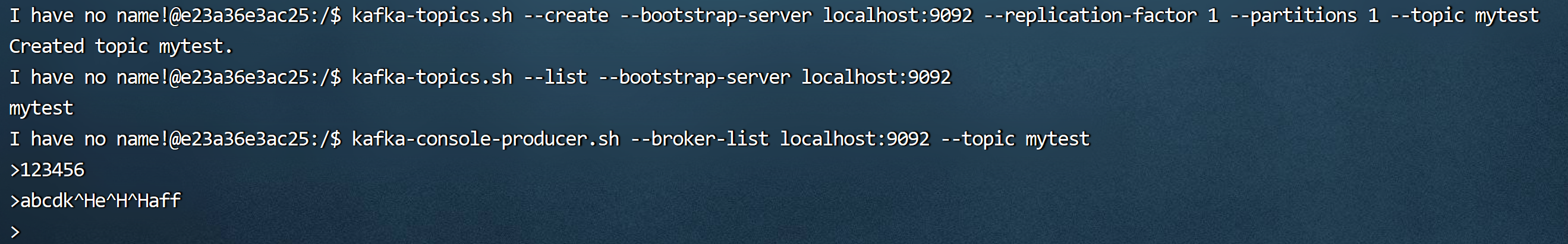

2、进入kafka容器查看

- [root@blog]$docker exec -it zookeeper-server bash

- [root@blog]$ ps -ef

- PID USER TIME COMMAND

- 1 root 0:08 /usr/lib/jvm/java-1.8-openjdk/jre/bin/java -Xmx1G -Xms1G -server -XX:+UseG1GC -XX:MaxGCPauseMillis=20 -XX:InitiatingHeapOccupancyPercent=35 -XX:+ExplicitGCInvokesConcurrent -XX:MaxIn

- 502 root 0:00 /bin/sh

- 506 root 0:00 ps -ef

- # 创建主题

- kafka-topics.sh --create --bootstrap-server localhost:9092 --replication-factor 1 --partitions 1 --topic mytest

-

- # 查看主题

- kafka-topics.sh --list --bootstrap-server localhost:9092

-

- # 发送消息

- kafka-console-producer.sh --broker-list localhost:9092 --topic mytest

- # 接收消息

- kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic mytest --from-beginning

五、SpringBoot整合Kafka

1、导入依赖

- <dependency>

- <groupId>org.springframework.kafka</groupId>

- <artifactId>spring-kafka</artifactId>

- </dependency>

2、修改配置

- spring:

- kafka:

- bootstrap-servers: 192.168.58.130:9092 #部署linux的kafka的ip地址和端口号

- producer:

- # 发生错误后,消息重发的次数。

- retries: 1

- #当有多个消息需要被发送到同一个分区时,生产者会把它们放在同一个批次里。该参数指定了一个批次可以使用的内存大小,按照字节数计算。

- batch-size: 16384

- # 设置生产者内存缓冲区的大小。

- buffer-memory: 33554432

- # 键的序列化方式

- key-serializer: org.apache.kafka.common.serialization.StringSerializer

- # 值的序列化方式

- value-serializer: org.apache.kafka.common.serialization.StringSerializer

- # acks=0 : 生产者在成功写入消息之前不会等待任何来自服务器的响应。

- # acks=1 : 只要集群的首领节点收到消息,生产者就会收到一个来自服务器成功响应。

- # acks=all :只有当所有参与复制的节点全部收到消息时,生产者才会收到一个来自服务器的成功响应。

- acks: 1

- consumer:

- # 自动提交的时间间隔 在spring boot 2.X 版本中这里采用的是值的类型为Duration 需要符合特定的格式,如1S,1M,2H,5D

- auto-commit-interval: 1S

- # 该属性指定了消费者在读取一个没有偏移量的分区或者偏移量无效的情况下该作何处理:

- # latest(默认值)在偏移量无效的情况下,消费者将从最新的记录开始读取数据(在消费者启动之后生成的记录)

- # earliest :在偏移量无效的情况下,消费者将从起始位置读取分区的记录

- auto-offset-reset: earliest

- # 是否自动提交偏移量,默认值是true,为了避免出现重复数据和数据丢失,可以把它设置为false,然后手动提交偏移量

- enable-auto-commit: false

- # 键的反序列化方式

- key-deserializer: org.apache.kafka.common.serialization.StringDeserializer

- # 值的反序列化方式

- value-deserializer: org.apache.kafka.common.serialization.StringDeserializer

- listener:

- # 在侦听器容器中运行的线程数。

- concurrency: 5

- #listner负责ack,每调用一次,就立即commit

- ack-mode: manual_immediate

- missing-topics-fatal: false

本次测试:linux地址:192.168.58.130

spring.kafka.bootstrap-servers=192.168.58.130:9092

advertised.listeners=192.168.58.130:9092

3、生产者

- import com.alibaba.fastjson.JSON;

-

- import lombok.extern.slf4j.Slf4j;

- import org.springframework.beans.factory.annotation.Autowired;

- import org.springframework.kafka.core.KafkaTemplate;

- import org.springframework.kafka.support.SendResult;

- import org.springframework.stereotype.Component;

- import org.springframework.util.concurrent.ListenableFuture;

- import org.springframework.util.concurrent.ListenableFutureCallback;

-

- /**

- * 事件的生产者

- */

- @Slf4j

- @Component

- public class KafkaProducer {

-

- @Autowired

- public KafkaTemplate kafkaTemplate;

-

- /** 主题 */

- public static final String TOPIC_TEST = "Test";

- /** 消费者组 */

- public static final String TOPIC_GROUP = "test-consumer-group";

-

- public void send(Object obj){

- String obj2String = JSON.toJSONString(obj);

- log.info("准备发送消息为:{}",obj2String);

-

- //发送消息

- ListenableFuture<SendResult<String, Object>> future = kafkaTemplate.send(TOPIC_TEST, obj);

- //回调

- future.addCallback(new ListenableFutureCallback<SendResult<String, Object>>() {

- @Override

- public void onFailure(Throwable ex) {

- //发送失败的处理

- log.info(TOPIC_TEST + " - 生产者 发送消息失败:" + ex.getMessage());

- }

-

- @Override

- public void onSuccess(SendResult<String, Object> result) {

- //成功的处理

- log.info(TOPIC_TEST + " - 生产者 发送消息成功:" + result.toString());

- }

- });

- }

-

-

-

- }

4、消费者

- import org.apache.kafka.clients.consumer.ConsumerRecord;

- import org.slf4j.Logger;

- import org.slf4j.LoggerFactory;

- import org.springframework.kafka.annotation.KafkaListener;

- import org.springframework.kafka.support.Acknowledgment;

- import org.springframework.kafka.support.KafkaHeaders;

- import org.springframework.messaging.handler.annotation.Header;

- import org.springframework.stereotype.Component;

-

- import java.util.Optional;

-

- /**

- * 事件消费者

- */

- @Component

- public class KafkaConsumer {

- private Logger logger = LoggerFactory.getLogger(org.apache.kafka.clients.consumer.KafkaConsumer.class);

-

- @KafkaListener(topics = KafkaProducer.TOPIC_TEST,groupId = KafkaProducer.TOPIC_GROUP)

- public void topicTest(ConsumerRecord<?,?> record, Acknowledgment ack, @Header(KafkaHeaders.RECEIVED_TOPIC) String topic){

- Optional<?> message = Optional.ofNullable(record.value());

- if (message.isPresent()) {

- Object msg = message.get();

- logger.info("topic_test 消费了: Topic:" + topic + ",Message:" + msg);

- ack.acknowledge();

- }

- }

- }

5、测试发送消息

- @Test

- void kafkaTest(){

- kafkaProducer.send("Hello Kafka");

- }

6、测试收到消息

![]()

六、拦截器

1、生产者拦截器

1.1、生产者拦截器代码

- import lombok.extern.slf4j.Slf4j;

- import org.apache.kafka.clients.producer.ProducerInterceptor;

- import org.apache.kafka.clients.producer.ProducerRecord;

- import org.apache.kafka.clients.producer.RecordMetadata;

-

- import java.util.Map;

-

- /**

- * @author idcos

- * @date 2023/11/9 11:13

- */

- @Slf4j

- public class CustomProducerInterceptor implements ProducerInterceptor<String,String> {

- @Override

- public ProducerRecord onSend(ProducerRecord producerRecord) {

- log.info("发送前处理———发送消息:"+producerRecord.toString());

- return producerRecord;

- }

-

- @Override

- public void onAcknowledgement(RecordMetadata recordMetadata, Exception e) {

- // 在收到消息的确认或异常时执行逻辑

- if (e == null) {

- log.info("消息成功发送");

- } else {

- log.error("消息成功失败");

- }

- }

-

- @Override

- public void close() {

- log.info("执行一些资源清理操作");

- }

-

- @Override

- public void configure(Map<String, ?> map) {

-

- }

- }

1.2、生产者yml配置

- spring:

- kafka:

- bootstrap-servers: IP:9092,IP:9092,IP:9092

- producer:

- properties:

- interceptor.classes: com.kafkaserver3.interceptor.CustomProducerInterceptor

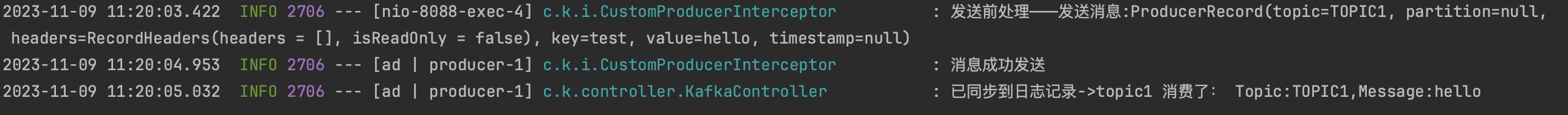

1.3、测试

发送消息hello,主题TOPIC1,key为test

2、消费者拦截器

2.1、消费者拦截器代码

- import lombok.extern.slf4j.Slf4j;

- import org.apache.kafka.clients.consumer.ConsumerInterceptor;

- import org.apache.kafka.clients.consumer.ConsumerRecords;

- import org.apache.kafka.clients.consumer.OffsetAndMetadata;

- import org.apache.kafka.common.TopicPartition;

-

- import java.util.Map;

-

- /**

- * @author idcos

- * @date 2023/11/9 11:25

- */

- @Slf4j

- public class CustomConsumerInterceptor implements ConsumerInterceptor<String,String> {

- @Override

- public ConsumerRecords<String, String> onConsume(ConsumerRecords<String, String> consumerRecords) {

- log.info("消费前处理———发送消息:"+consumerRecords.toString());

- return consumerRecords;

- }

-

- @Override

- public void onCommit(Map<TopicPartition, OffsetAndMetadata> map) {

- // 在消费者提交偏移量之后的逻辑

- log.info("在消费者提交偏移量之后的逻辑"+map.toString());

- }

-

- @Override

- public void close() {

- // 在拦截器关闭时的逻辑

- System.out.println("在拦截器关闭时");

- }

-

- @Override

- public void configure(Map<String, ?> map) {

- // 在拦截器配置时的逻辑

- System.out.println("在拦截器配置时");

- }

- }

2.2、消费者yml配置

- spring:

- kafka:

- bootstrap-servers: IP:9092,IP:9092,IP:9092

- consumer:

- properties:

- interceptor.classes: com.kafkaserver3.interceptor.CustomConsumerInterceptor

2.3、测试

发送消息hello,主题TOPIC1,key为test

参考文档:

本文内容由网友自发贡献,转载请注明出处:【wpsshop博客】

推荐阅读

相关标签