热门标签

热门文章

- 1nltk.data.load()应用及其要注意的事项_[nltk_data] downloading package punkt to /root/nlt

- 2【LorMe云讲堂】土壤-根际微生物组与作物高产高效

- 3Ubuntu20.04安装pytorch(包括安装Anaconda和虚拟环境配置以及安装包spikingjelly)

- 4如何成为一名精通云计算的程序员

- 5教你5步学会用Llama2:我见过最简单的大模型教学_llama2 如何训练

- 6计算机视觉新巅峰,微软&牛津联合提出MVSplat登顶3D重建

- 7探索Grok:马斯克旗下AI公司的革命性产品解读

- 8「数学」- 手撸 Newton插值法 C++

- 9WinHex使用方法详解

- 10Java spring boot 项目和python flask项目 开启 https 请求_flask keystore

当前位置: article > 正文

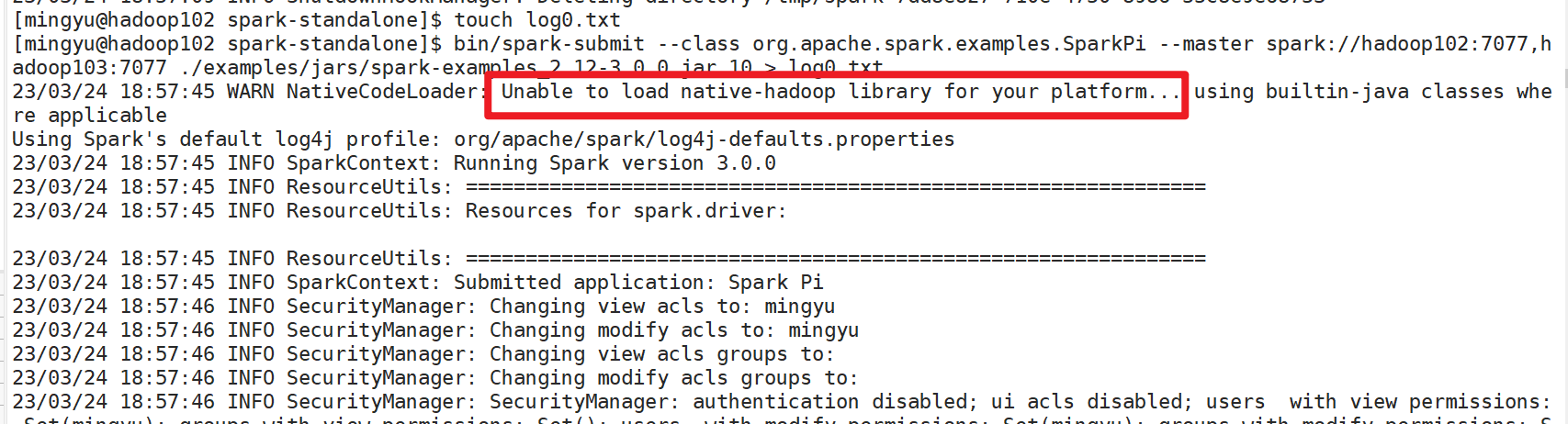

WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java

Author: Monodyee | 2024-04-08 18:17:22

赞

踩

unable to load native-hadoop library for your platform... using builtin-java

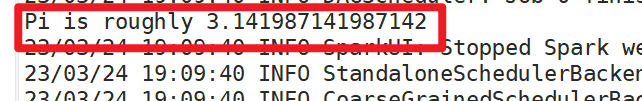

After configuring Spark high availability today, I ran the SparkPi example to verify the setup and hit the following problem:

- [mingyu@hadoop102 spark-standalone]$ bin/spark-submit --class org.apache.spark.examples.SparkPi --master spark://hadoop102:7077,hadoop103:7077 ./examples/jars/spark-examples_2.12-3.0.0.jar 10

- 23/03/24 19:02:58 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

- Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

- 23/03/24 19:02:58 INFO SparkContext: Running Spark version 3.0.0

- 23/03/24 19:02:58 INFO ResourceUtils: ==============================================================

- 23/03/24 19:02:58 INFO ResourceUtils: Resources for spark.driver:

- 23/03/24 19:02:58 INFO ResourceUtils: ==============================================================

- 23/03/24 19:02:58 INFO SparkContext: Submitted application: Spark Pi

- 23/03/24 19:02:58 INFO SecurityManager: Changing view acls to: mingyu

- 23/03/24 19:02:58 INFO SecurityManager: Changing modify acls to: mingyu

- 23/03/24 19:02:58 INFO SecurityManager: Changing view acls groups to:

- 23/03/24 19:02:58 INFO SecurityManager: Changing modify acls groups to:

- 23/03/24 19:02:58 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(mingyu); groups with view permissions: Set(); users with modify permissions: Set(mingyu); groups with modify permissions: Set()

- 23/03/24 19:02:59 INFO Utils: Successfully started service 'sparkDriver' on port 35475.

- 23/03/24 19:02:59 INFO SparkEnv: Registering MapOutputTracker

- 23/03/24 19:02:59 INFO SparkEnv: Registering BlockManagerMaster

- 23/03/24 19:02:59 INFO BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

- 23/03/24 19:02:59 INFO BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

- 23/03/24 19:02:59 INFO SparkEnv: Registering BlockManagerMasterHeartbeat

- 23/03/24 19:02:59 INFO DiskBlockManager: Created local directory at /tmp/blockmgr-7e5af594-3590-4621-a4bc-d07128b4ad43

- 23/03/24 19:02:59 INFO MemoryStore: MemoryStore started with capacity 366.3 MiB

- 23/03/24 19:02:59 INFO SparkEnv: Registering OutputCommitCoordinator

- 23/03/24 19:02:59 INFO Utils: Successfully started service 'SparkUI' on port 4040.

- 23/03/24 19:02:59 INFO SparkUI: Bound SparkUI to 0.0.0.0, and started at http://hadoop102:4040

- 23/03/24 19:02:59 INFO SparkContext: Added JAR file:/opt/module/spark-standalone/./examples/jars/spark-examples_2.12-3.0.0.jar at spark://hadoop102:35475/jars/spark-examples_2.12-3.0.0.jar with timestamp 1679655779831

- 23/03/24 19:03:00 INFO StandaloneAppClient$ClientEndpoint: Connecting to master spark://hadoop102:7077...

- 23/03/24 19:03:00 INFO StandaloneAppClient$ClientEndpoint: Connecting to master spark://hadoop103:7077...

- 23/03/24 19:03:00 INFO TransportClientFactory: Successfully created connection to hadoop102/192.168.13.102:7077 after 54 ms (0 ms spent in bootstraps)

- 23/03/24 19:03:00 INFO TransportClientFactory: Successfully created connection to hadoop103/192.168.13.103:7077 after 54 ms (0 ms spent in bootstraps)

- 23/03/24 19:03:00 INFO StandaloneSchedulerBackend: Connected to Spark cluster with app ID app-20230324190300-0003

- 23/03/24 19:03:00 INFO StandaloneAppClient$ClientEndpoint: Executor added: app-20230324190300-0003/0 on worker-20230324185420-192.168.13.102-41333 (192.168.13.102:41333) with 8 core(s)

- 23/03/24 19:03:00 INFO StandaloneSchedulerBackend: Granted executor ID app-20230324190300-0003/0 on hostPort 192.168.13.102:41333 with 8 core(s), 1024.0 MiB RAM

- 23/03/24 19:03:00 INFO StandaloneAppClient$ClientEndpoint: Executor added: app-20230324190300-0003/1 on worker-20230324185420-192.168.13.103-38373 (192.168.13.103:38373) with 8 core(s)

- 23/03/24 19:03:00 INFO StandaloneSchedulerBackend: Granted executor ID app-20230324190300-0003/1 on hostPort 192.168.13.103:38373 with 8 core(s), 1024.0 MiB RAM

- 23/03/24 19:03:00 INFO StandaloneAppClient$ClientEndpoint: Executor added: app-20230324190300-0003/2 on worker-20230324185421-192.168.13.104-42906 (192.168.13.104:42906) with 8 core(s)

- 23/03/24 19:03:00 INFO Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 37659.

- 23/03/24 19:03:00 INFO StandaloneSchedulerBackend: Granted executor ID app-20230324190300-0003/2 on hostPort 192.168.13.104:42906 with 8 core(s), 1024.0 MiB RAM

- 23/03/24 19:03:00 INFO NettyBlockTransferService: Server created on hadoop102:37659

- 23/03/24 19:03:00 INFO BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

- 23/03/24 19:03:00 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(driver, hadoop102, 37659, None)

- 23/03/24 19:03:00 INFO BlockManagerMasterEndpoint: Registering block manager hadoop102:37659 with 366.3 MiB RAM, BlockManagerId(driver, hadoop102, 37659, None)

- 23/03/24 19:03:00 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(driver, hadoop102, 37659, None)

- 23/03/24 19:03:00 INFO BlockManager: Initialized BlockManager: BlockManagerId(driver, hadoop102, 37659, None)

- 23/03/24 19:03:00 INFO StandaloneAppClient$ClientEndpoint: Executor updated: app-20230324190300-0003/2 is now RUNNING

- 23/03/24 19:03:00 INFO StandaloneAppClient$ClientEndpoint: Executor updated: app-20230324190300-0003/0 is now RUNNING

- 23/03/24 19:03:00 INFO StandaloneAppClient$ClientEndpoint: Executor updated: app-20230324190300-0003/1 is now RUNNING

- 23/03/24 19:03:03 ERROR SparkContext: Error initializing SparkContext.

- java.net.ConnectException: Call From hadoop102/192.168.13.102 to hadoop102:8020 failed on connection exception: java.net.ConnectException: 拒绝连接; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

- at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

- at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

- at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

- at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

- at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:831)

- at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:755)

- at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1515)

- at org.apache.hadoop.ipc.Client.call(Client.java:1457)

- at org.apache.hadoop.ipc.Client.call(Client.java:1367)

- at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:228)

- at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116)

- at com.sun.proxy.$Proxy15.getFileInfo(Unknown Source)

- at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:903)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

- at java.lang.reflect.Method.invoke(Method.java:498)

- at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422)

- at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165)

- at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157)

- at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95)

- at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359)

- at com.sun.proxy.$Proxy16.getFileInfo(Unknown Source)

- at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1665)

- at org.apache.hadoop.hdfs.DistributedFileSystem$29.doCall(DistributedFileSystem.java:1582)

- at org.apache.hadoop.hdfs.DistributedFileSystem$29.doCall(DistributedFileSystem.java:1579)

- at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

- at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1594)

- at org.apache.spark.deploy.history.EventLogFileWriter.requireLogBaseDirAsDirectory(EventLogFileWriters.scala:77)

- at org.apache.spark.deploy.history.SingleEventLogFileWriter.start(EventLogFileWriters.scala:221)

- at org.apache.spark.scheduler.EventLoggingListener.start(EventLoggingListener.scala:81)

- at org.apache.spark.SparkContext.<init>(SparkContext.scala:572)

- at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2555)

- at org.apache.spark.sql.SparkSession$Builder.$anonfun$getOrCreate$1(SparkSession.scala:930)

- at scala.Option.getOrElse(Option.scala:189)

- at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:921)

- at org.apache.spark.examples.SparkPi$.main(SparkPi.scala:30)

- at org.apache.spark.examples.SparkPi.main(SparkPi.scala)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

- at java.lang.reflect.Method.invoke(Method.java:498)

- at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)

- at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:928)

- at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

- at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

- at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

- at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1007)

- at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1016)

- at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

- Caused by: java.net.ConnectException: 拒绝连接

- at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

- at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

- at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

- at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

- at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:690)

- at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:794)

- at org.apache.hadoop.ipc.Client$Connection.access$3700(Client.java:411)

- at org.apache.hadoop.ipc.Client.getConnection(Client.java:1572)

- at org.apache.hadoop.ipc.Client.call(Client.java:1403)

- ... 42 more

- 23/03/24 19:03:03 INFO SparkUI: Stopped Spark web UI at http://hadoop102:4040

- 23/03/24 19:03:03 INFO StandaloneSchedulerBackend: Shutting down all executors

- 23/03/24 19:03:03 INFO CoarseGrainedSchedulerBackend$DriverEndpoint: Asking each executor to shut down

- 23/03/24 19:03:03 INFO MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped!

- 23/03/24 19:03:03 INFO MemoryStore: MemoryStore cleared

- 23/03/24 19:03:03 INFO BlockManager: BlockManager stopped

- 23/03/24 19:03:03 INFO BlockManagerMaster: BlockManagerMaster stopped

- 23/03/24 19:03:03 INFO OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped!

- 23/03/24 19:03:03 INFO SparkContext: Successfully stopped SparkContext

- Exception in thread "main" java.net.ConnectException: Call From hadoop102/192.168.13.102 to hadoop102:8020 failed on connection exception: java.net.ConnectException: 拒绝连接; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

- at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

- at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

- at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

- at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

- at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:831)

- at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:755)

- at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1515)

- at org.apache.hadoop.ipc.Client.call(Client.java:1457)

- at org.apache.hadoop.ipc.Client.call(Client.java:1367)

- at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:228)

- at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116)

- at com.sun.proxy.$Proxy15.getFileInfo(Unknown Source)

- at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:903)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

- at java.lang.reflect.Method.invoke(Method.java:498)

- at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422)

- at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165)

- at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157)

- at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95)

- at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359)

- at com.sun.proxy.$Proxy16.getFileInfo(Unknown Source)

- at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1665)

- at org.apache.hadoop.hdfs.DistributedFileSystem$29.doCall(DistributedFileSystem.java:1582)

- at org.apache.hadoop.hdfs.DistributedFileSystem$29.doCall(DistributedFileSystem.java:1579)

- at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

- at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1594)

- at org.apache.spark.deploy.history.EventLogFileWriter.requireLogBaseDirAsDirectory(EventLogFileWriters.scala:77)

- at org.apache.spark.deploy.history.SingleEventLogFileWriter.start(EventLogFileWriters.scala:221)

- at org.apache.spark.scheduler.EventLoggingListener.start(EventLoggingListener.scala:81)

- at org.apache.spark.SparkContext.<init>(SparkContext.scala:572)

- at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2555)

- at org.apache.spark.sql.SparkSession$Builder.$anonfun$getOrCreate$1(SparkSession.scala:930)

- at scala.Option.getOrElse(Option.scala:189)

- at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:921)

- at org.apache.spark.examples.SparkPi$.main(SparkPi.scala:30)

- at org.apache.spark.examples.SparkPi.main(SparkPi.scala)

- at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

- at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

- at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

- at java.lang.reflect.Method.invoke(Method.java:498)

- at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)

- at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:928)

- at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

- at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

- at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

- at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1007)

- at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1016)

- at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

- Caused by: java.net.ConnectException: 拒绝连接

- at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

- at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

- at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

- at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

- at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:690)

- at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:794)

- at org.apache.hadoop.ipc.Client$Connection.access$3700(Client.java:411)

- at org.apache.hadoop.ipc.Client.getConnection(Client.java:1572)

- at org.apache.hadoop.ipc.Client.call(Client.java:1403)

- ... 42 more

- 23/03/24 19:03:03 INFO ShutdownHookManager: Shutdown hook called

- 23/03/24 19:03:03 INFO ShutdownHookManager: Deleting directory /tmp/spark-d0c1d6eb-abb2-46fb-b83d-c5c06b2a90a0

- 23/03/24 19:03:03 INFO ShutdownHookManager: Deleting directory /tmp/spark-e6a32946-cd72-41f5-974b-f3bdf2ca76f0

- [mingyu@hadoop102 spark-standalone]$

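What stands out in this output is that Spark could not reach HDFS. The call from hadoop102 to hadoop102:8020 failed with `java.net.ConnectException: 拒绝连接` ("Connection refused"), and the stack trace passes through `EventLogFileWriter.requireLogBaseDirAsDirectory`, so the SparkContext failed while checking its event log directory on HDFS. The `NativeCodeLoader` warning at the top only reports that Hadoop fell back to its built-in Java classes; it is not what stops the job.

HDFS was simply not running. After starting it, the job ran without problems: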

[mingyu@hadoop102 hadoop-3.1.3]$ sbin/start-dfs.sh
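To avoid hitting the same exception again, it can help to check that the NameNode port accepts connections before submitting a job. The following is only a minimal sketch: `port_open` is a hypothetical helper (not part of Hadoop or Spark), the host `hadoop102` and port `8020` are taken from the error message above, and it assumes `bash` and the coreutils `timeout` command are available.

```shell
#!/usr/bin/env bash
# Sketch: test whether a TCP port accepts connections.
# port_open is a hypothetical helper, not a Hadoop or Spark command.
# Usage: port_open HOST PORT   (exit status 0 = reachable, non-zero = not reachable)
port_open() {
  # bash's /dev/tcp pseudo-device attempts a TCP connect to HOST:PORT;
  # timeout keeps the check from hanging on an unreachable host.
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Example (host and port assumed from the ConnectException above):
#   if port_open hadoop102 8020; then
#     echo "NameNode RPC port is reachable"
#   else
#     echo "NameNode RPC port is closed; start HDFS first (sbin/start-dfs.sh)"
#   fi
```

If the check fails, start HDFS as shown above and rerun the `spark-submit` command. Running `jps` on hadoop102 afterwards should list a `NameNode` process, assuming the NameNode is configured to run on that host.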
