
Reproducing ResNet-18 and ResNet-34 in Code (Based on Mu Li's Dive into Deep Learning)

Author: weixin_40725706 | 2024-04-20 18:20:57


ResNet is a classic image-classification model. Below is a reimplementation of ResNet-18 and ResNet-34; for the details, see the original ResNet paper: https://arxiv.org/abs/1512.03385

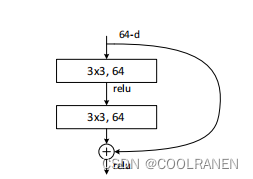

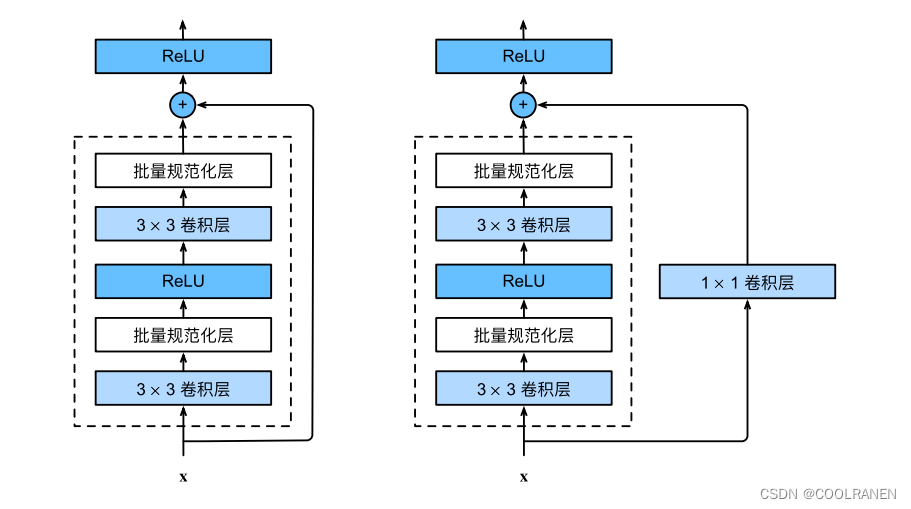

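1. The residual block

The BasicBlock module comes in two variants. In the first, the input X passes through a 1x1 convolution with stride=2 on the shortcut path, which downsamples the feature map and raises the channel count (doubling it) so that the shortcut matches the main path. In the second, no 1x1 convolution is needed and X is added directly to the fitted residual F(X). The main path consists of two 3x3 convolutions, each followed by batch normalization; ReLU is applied after the first batch normalization and again after the shortcut has been added. The detailed code follows. This block (BasicBlock) is the one used in ResNet-18 and ResNet-34.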

    import torch
    from torch import nn
    from torch.nn import functional as F


    class Residual(nn.Module):
        """Basic residual block used by ResNet-18 and ResNet-34."""

        def __init__(self, input_channels, num_channels,
                     use_1x1conv=False, strides=1):
            super().__init__()
            # Main path: two 3x3 convolutions; only the first one may use a stride.
            self.conv1 = nn.Conv2d(input_channels, num_channels,
                                   kernel_size=3, padding=1, stride=strides)
            self.conv2 = nn.Conv2d(num_channels, num_channels,
                                   kernel_size=3, padding=1)
            # Shortcut path: optional 1x1 convolution that matches channels and size.
            if use_1x1conv:
                self.conv3 = nn.Conv2d(input_channels, num_channels,
                                       kernel_size=1, stride=strides)
            else:
                self.conv3 = None
            self.bn1 = nn.BatchNorm2d(num_channels)
            self.bn2 = nn.BatchNorm2d(num_channels)

        def forward(self, X):
            Y = F.relu(self.bn1(self.conv1(X)))
            Y = self.bn2(self.conv2(Y))
            if self.conv3 is not None:
                X = self.conv3(X)
            Y += X  # add the shortcut before the final activation
            return F.relu(Y)
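As a quick sanity check of the two variants described above, the sketch below runs a toy batch through both. The `Residual` class is repeated (slightly condensed) so the snippet runs on its own; the input shape `(4, 3, 6, 6)` and the variable names `same_shape` and `downsampled` are arbitrary choices for illustration, not part of the original article.

```python
import torch
from torch import nn
from torch.nn import functional as F


class Residual(nn.Module):
    """Two 3x3 convolutions plus an optional 1x1 convolution on the shortcut."""

    def __init__(self, input_channels, num_channels, use_1x1conv=False, strides=1):
        super().__init__()
        self.conv1 = nn.Conv2d(input_channels, num_channels,
                               kernel_size=3, padding=1, stride=strides)
        self.conv2 = nn.Conv2d(num_channels, num_channels, kernel_size=3, padding=1)
        self.conv3 = (nn.Conv2d(input_channels, num_channels,
                                kernel_size=1, stride=strides)
                      if use_1x1conv else None)
        self.bn1 = nn.BatchNorm2d(num_channels)
        self.bn2 = nn.BatchNorm2d(num_channels)

    def forward(self, X):
        Y = F.relu(self.bn1(self.conv1(X)))
        Y = self.bn2(self.conv2(Y))
        if self.conv3 is not None:
            X = self.conv3(X)
        return F.relu(Y + X)


# Toy batch (assumed for illustration): 4 images, 3 channels, 6x6 pixels.
X = torch.rand(4, 3, 6, 6)

# Variant without the 1x1 convolution: the shape is unchanged.
same_shape = Residual(3, 3)(X)

# Variant with the 1x1 convolution and stride 2: channels double, H and W halve.
downsampled = Residual(3, 6, use_1x1conv=True, strides=2)(X)

print(same_shape.shape)   # torch.Size([4, 3, 6, 6])
print(downsampled.shape)  # torch.Size([4, 6, 3, 3])
```

The second call only works because the shortcut is given the same stride and output channel count as the main path; without the 1x1 convolution the addition would fail on mismatched shapes.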

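2. The ResNet-18 body

As shown above, conv1 is a 7x7 convolution with 64 output channels and stride=2. In the code it is followed by batch normalization, ReLU, and a 3x3 max-pooling layer with stride 2.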

    # The input has 1 channel here; change it to 3 depending on the images.
    b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                       nn.BatchNorm2d(64), nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

Next, build ResNet-18:

    # ResNet-18
    def resnet18(num_classes, in_channels=1):
        def resnet_block(in_channels, out_channels, num_residuals,
                         first_block=False):
            blk = []
            for i in range(num_residuals):
                # The first block of every stage except the first halves H and W
                # and changes the channel count, so it needs the 1x1 shortcut.
                if i == 0 and not first_block:
                    blk.append(Residual(in_channels, out_channels,
                                        use_1x1conv=True, strides=2))
                else:
                    blk.append(Residual(out_channels, out_channels))
            return nn.Sequential(*blk)

        # Build the stem here so that in_channels is actually used
        # (the module-level b1 is fixed at 1 input channel).
        stem = nn.Sequential(
            nn.Conv2d(in_channels, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64), nn.ReLU(),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1))
        net = nn.Sequential(stem)
        net.add_module("resnet_block1", resnet_block(64, 64, 2, first_block=True))
        net.add_module("resnet_block2", resnet_block(64, 128, 2))
        net.add_module("resnet_block3", resnet_block(128, 256, 2))
        net.add_module("resnet_block4", resnet_block(256, 512, 2))
        net.add_module("global_avg_pool", nn.AdaptiveAvgPool2d((1, 1)))
        net.add_module("fc", nn.Sequential(nn.Flatten(),
                                           nn.Linear(512, num_classes)))
        return net

Here num_classes is the number of classes to predict.

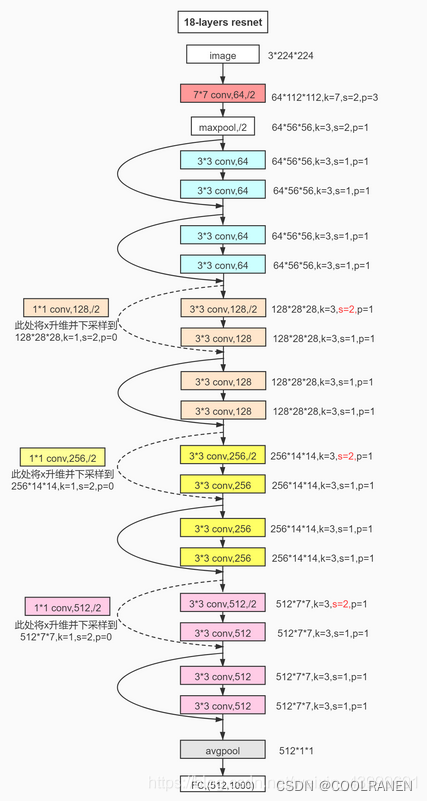

The ResNet-18 architecture is shown in the figure below.

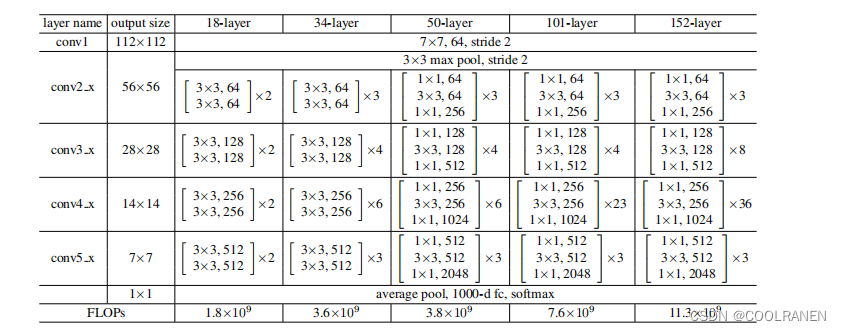

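In the conv_3, conv_4, and conv_5 stages, the first residual block of each stage changes the channel count from 64 to 128, from 128 to 256, and from 256 to 512 respectively. The shortcut therefore applies a 1x1 convolution to the input x, downsampling it so that its channel count and size match the output of the two 3x3 convolutions. The first 3x3 convolution in these blocks has stride 2, so while the channel count doubles, the height and width of the feature map are each halved. This reduces the amount of computation in the layers that follow; the number of convolution weights itself does not depend on the feature-map size.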
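To make the effect of the stride-2 convolution concrete, here is a small back-of-the-envelope sketch. The helper names `conv_params` and `conv_macs` are hypothetical, and the 56x56 input size assumes a 224x224 image entering the network, which the article does not state.

```python
def conv_params(kernel, c_in, c_out, bias=True):
    """Number of learnable weights in a kernel x kernel convolution."""
    return kernel * kernel * c_in * c_out + (c_out if bias else 0)


def conv_macs(kernel, c_in, c_out, h_out, w_out):
    """Multiply-accumulate operations: one kernel application per output pixel."""
    return kernel * kernel * c_in * c_out * h_out * w_out


# First 3x3 convolution of the conv_3 stage: 64 -> 128 channels.
# Assumed input feature map: 56x56 (i.e. a 224x224 image at the network input).
params = conv_params(3, 64, 128)

macs_stride1 = conv_macs(3, 64, 128, 56, 56)  # output stays 56x56
macs_stride2 = conv_macs(3, 64, 128, 28, 28)  # output halves to 28x28

print(params)                       # 73856, the same for either stride
print(macs_stride1 / macs_stride2)  # 4.0
```

Halving both height and width cuts the work of this layer, and of every later layer that sees the smaller map, by a factor of four, while the weight count is set only by the kernel size and the channel counts.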

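The output size is computed as follows:

For a feature map of size a*a passed through a b*b convolution with stride c and padding d, the output feature map has side length ⌊(a-b+2d)/c⌋+1, where ⌊·⌋ means rounding down.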
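The formula can be checked with a few lines of plain Python. This is only a sketch: the helper name `conv_output_size` is made up for illustration, and the 224x224 input is an assumption (the article does not fix an input size). The kernel, stride, and padding values are the ones used in the code above.

```python
def conv_output_size(a, b, c, d):
    """Side length after a b x b kernel with stride c and padding d on an a x a map."""
    return (a - b + 2 * d) // c + 1


# (layer, kernel size, stride, padding), following the network code above.
layers = [
    ("conv1 7x7", 7, 2, 3),
    ("maxpool 3x3", 3, 2, 1),
    ("conv_2 first 3x3", 3, 1, 1),
    ("conv_3 first 3x3", 3, 2, 1),
    ("conv_4 first 3x3", 3, 2, 1),
    ("conv_5 first 3x3", 3, 2, 1),
]

size = 224  # assumed input height and width
trace = []
for name, kernel, stride, padding in layers:
    size = conv_output_size(size, kernel, stride, padding)
    trace.append((name, size))
    print(f"{name}: {size}x{size}")
# conv1 7x7: 112x112
# maxpool 3x3: 56x56
# conv_2 first 3x3: 56x56
# conv_3 first 3x3: 28x28
# conv_4 first 3x3: 14x14
# conv_5 first 3x3: 7x7
```

The remaining 3x3 convolutions in each stage use stride 1 and padding 1, so by the same formula they leave the size unchanged.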

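3. ResNet-34

ResNet-34 uses the same residual block as ResNet-18. The only difference is that its conv_2, conv_3, conv_4, and conv_5 stages are deeper: they contain 3, 4, 6, and 3 residual blocks instead of 2 blocks each. The implementation follows.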
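The numbers 18 and 34 follow from the block counts under the usual convention of counting only the weighted layers on the main path (the stem convolution, the two 3x3 convolutions in every residual block, and the final fully connected layer), leaving out the 1x1 shortcut convolutions. A minimal sketch of that arithmetic, with a hypothetical helper name:

```python
def count_weighted_layers(blocks_per_stage, convs_per_block=2):
    """Stem conv + 3x3 convs in all residual blocks + final fully connected layer."""
    stem_conv = 1
    fully_connected = 1
    return stem_conv + convs_per_block * sum(blocks_per_stage) + fully_connected


resnet18_depth = count_weighted_layers([2, 2, 2, 2])
resnet34_depth = count_weighted_layers([3, 4, 6, 3])

print(resnet18_depth)  # 18
print(resnet34_depth)  # 34
```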

    def resnet34(num_classes, in_channels=1):
        def resnet_block(in_channels, out_channels, num_residuals,
                         first_block=False):
            blk = []
            for i in range(num_residuals):
                if i == 0 and not first_block:
                    # Residual is the block defined in section 1.
                    blk.append(Residual(in_channels, out_channels,
                                        use_1x1conv=True, strides=2))
                else:
                    blk.append(Residual(out_channels, out_channels))
            return nn.Sequential(*blk)

        net = nn.Sequential(
            nn.Conv2d(in_channels, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

        # Stage depths 3, 4, 6, 3 (ResNet-18 uses 2, 2, 2, 2).
        net.add_module("resnet_block1", resnet_block(64, 64, 3, first_block=True))
        net.add_module("resnet_block2", resnet_block(64, 128, 4))
        net.add_module("resnet_block3", resnet_block(128, 256, 6))
        net.add_module("resnet_block4", resnet_block(256, 512, 3))
        net.add_module("global_avg_pool", nn.AdaptiveAvgPool2d((1, 1)))
        net.add_module("fc", nn.Sequential(nn.Flatten(),
                                           nn.Linear(512, num_classes)))
        return net

The complete code is given below.

    import torch
    from torch import nn
    from torch.nn import functional as F


    class Residual(nn.Module):
        """Basic residual block used by ResNet-18 and ResNet-34."""

        def __init__(self, input_channels, num_channels,
                     use_1x1conv=False, strides=1):
            super().__init__()
            # Main path: two 3x3 convolutions; only the first one may use a stride.
            self.conv1 = nn.Conv2d(input_channels, num_channels,
                                   kernel_size=3, padding=1, stride=strides)
            self.conv2 = nn.Conv2d(num_channels, num_channels,
                                   kernel_size=3, padding=1)
            # Shortcut path: optional 1x1 convolution that matches channels and size.
            if use_1x1conv:
                self.conv3 = nn.Conv2d(input_channels, num_channels,
                                       kernel_size=1, stride=strides)
            else:
                self.conv3 = None
            self.bn1 = nn.BatchNorm2d(num_channels)
            self.bn2 = nn.BatchNorm2d(num_channels)

        def forward(self, X):
            Y = F.relu(self.bn1(self.conv1(X)))
            Y = self.bn2(self.conv2(Y))
            if self.conv3 is not None:
                X = self.conv3(X)
            Y += X  # add the shortcut before the final activation
            return F.relu(Y)


    # ResNet-18
    def resnet18(num_classes, in_channels=1):
        def resnet_block(in_channels, out_channels, num_residuals,
                         first_block=False):
            blk = []
            for i in range(num_residuals):
                if i == 0 and not first_block:
                    blk.append(Residual(in_channels, out_channels,
                                        use_1x1conv=True, strides=2))
                else:
                    blk.append(Residual(out_channels, out_channels))
            return nn.Sequential(*blk)

        # Stem built from in_channels (1 by default; use 3 for RGB images).
        stem = nn.Sequential(
            nn.Conv2d(in_channels, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64), nn.ReLU(),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1))
        net = nn.Sequential(stem)
        net.add_module("resnet_block1", resnet_block(64, 64, 2, first_block=True))
        net.add_module("resnet_block2", resnet_block(64, 128, 2))
        net.add_module("resnet_block3", resnet_block(128, 256, 2))
        net.add_module("resnet_block4", resnet_block(256, 512, 2))
        net.add_module("global_avg_pool", nn.AdaptiveAvgPool2d((1, 1)))
        net.add_module("fc", nn.Sequential(nn.Flatten(),
                                           nn.Linear(512, num_classes)))
        return net


    # ResNet-34
    def resnet34(num_classes, in_channels=1):
        def resnet_block(in_channels, out_channels, num_residuals,
                         first_block=False):
            blk = []
            for i in range(num_residuals):
                if i == 0 and not first_block:
                    blk.append(Residual(in_channels, out_channels,
                                        use_1x1conv=True, strides=2))
                else:
                    blk.append(Residual(out_channels, out_channels))
            return nn.Sequential(*blk)

        net = nn.Sequential(
            nn.Conv2d(in_channels, 64, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(64),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

        # Stage depths 3, 4, 6, 3 (ResNet-18 uses 2, 2, 2, 2).
        net.add_module("resnet_block1", resnet_block(64, 64, 3, first_block=True))
        net.add_module("resnet_block2", resnet_block(64, 128, 4))
        net.add_module("resnet_block3", resnet_block(128, 256, 6))
        net.add_module("resnet_block4", resnet_block(256, 512, 3))
        net.add_module("global_avg_pool", nn.AdaptiveAvgPool2d((1, 1)))
        net.add_module("fc", nn.Sequential(nn.Flatten(),
                                           nn.Linear(512, num_classes)))
        return net
