热门标签

热门文章

- 1Android 开发 奇异bug 收集 (疑难bug 持续更新)_please include java exception stack in crash repor

- 2入门篇|学渣是如何自学数据结构的?

- 3【计算机组成原理】单周期MIPS CPU的设计_单周期处理器

- 4Intel C++ Compiler 关于Warning, error的处理

- 5angular实现input输入监听_angular 监听input

- 6java thread 内存泄露_Java Thread 是怎么造成内存泄露的?

- 7在Vue项目中自定义全局插件——全局Loading插件_vue git loading

- 8AI与Python - 分析时间序列数据_北极涛动ao指数下载

- 9ueditor 富文本表格功能优化_ueditor 粘贴excel无背景色

- 10R7-7 藏尾诗_第四句的最后一个汉字,占最后三个字节

当前位置: article > 正文

RobertaTokenizer,RobertaForMaskedLM_from transformers import robertaformaskedlm

作者:凡人多烦事01 | 2024-03-16 12:39:58

赞

踩

from transformers import robertaformaskedlm

RobertaTokenizer,RobertaForMaskedLM

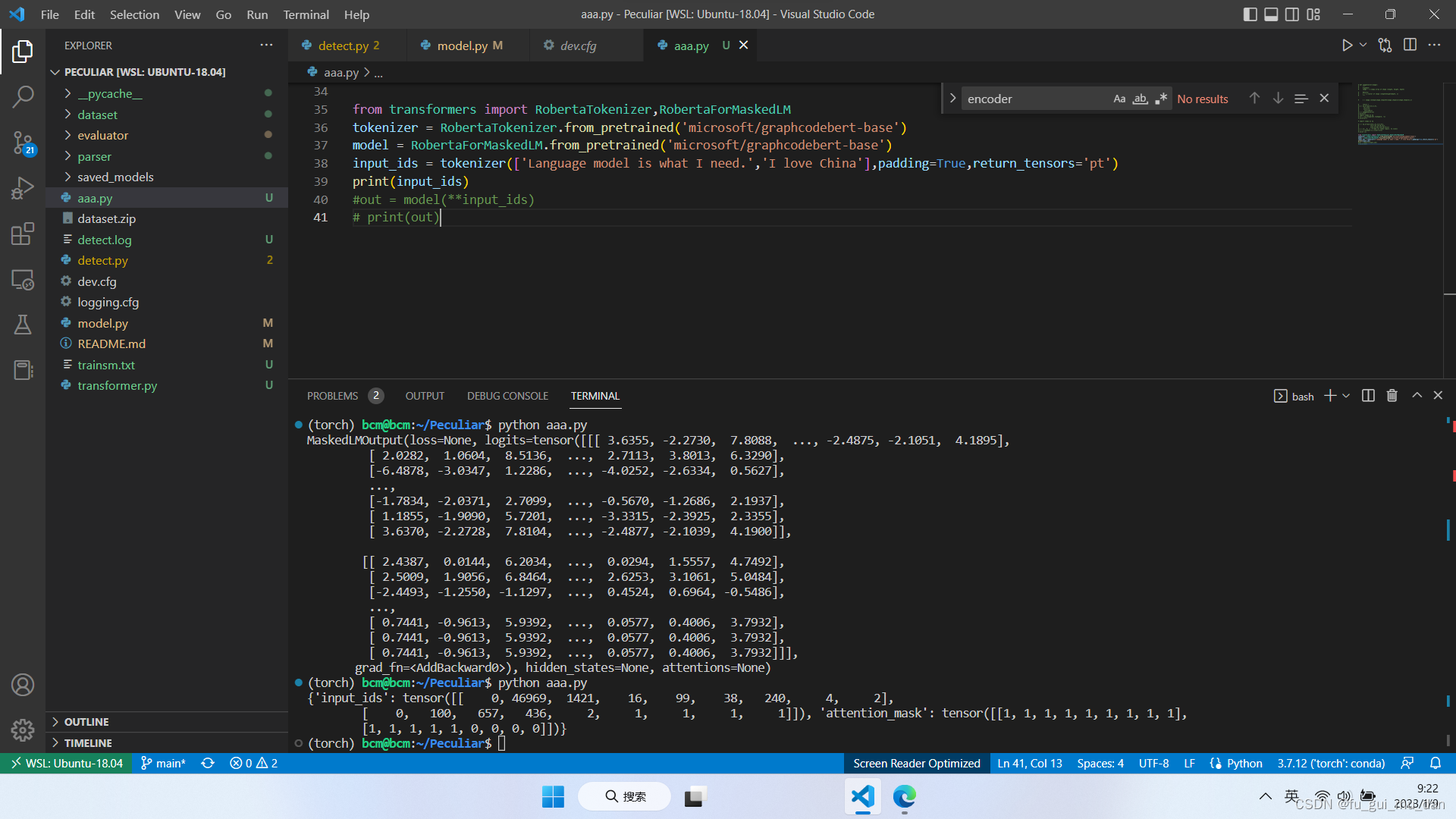

from transformers import RobertaTokenizer,RobertaForMaskedLM

tokenizer = RobertaTokenizer.from_pretrained('microsoft/graphcodebert-base')

model = RobertaForMaskedLM.from_pretrained('microsoft/graphcodebert-base')

input_ids = tokenizer(['Language model is what I need.','I love China'],padding=True,return_tensors='pt')

print(input_ids)

#out = model(**input_ids)

# print(out)

- 1

- 2

- 3

- 4

- 5

- 6

- 7

声明:本文内容由网友自发贡献,不代表【wpsshop博客】立场,版权归原作者所有,本站不承担相应法律责任。如您发现有侵权的内容,请联系我们。转载请注明出处:https://www.wpsshop.cn/w/凡人多烦事01/article/detail/249536

推荐阅读

相关标签