[Spark MLlib] (5) Random Forest

赞

踩

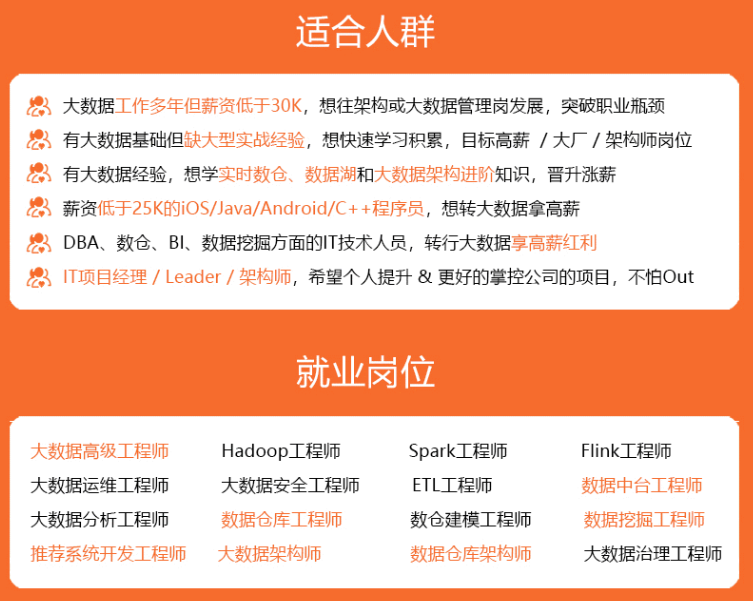

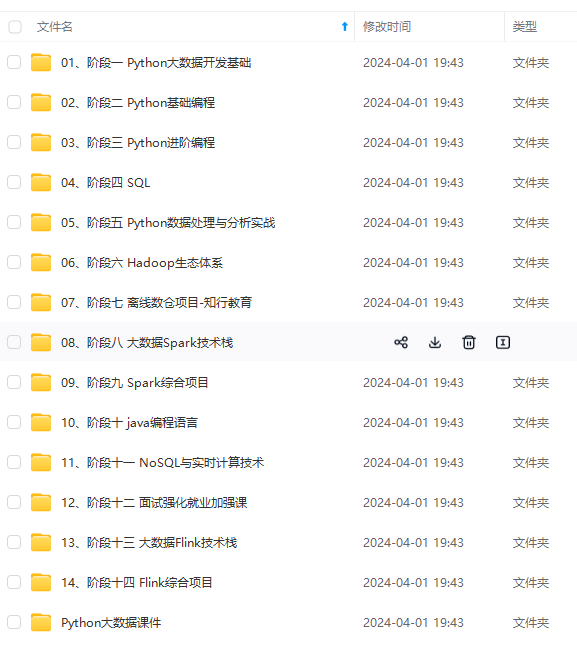

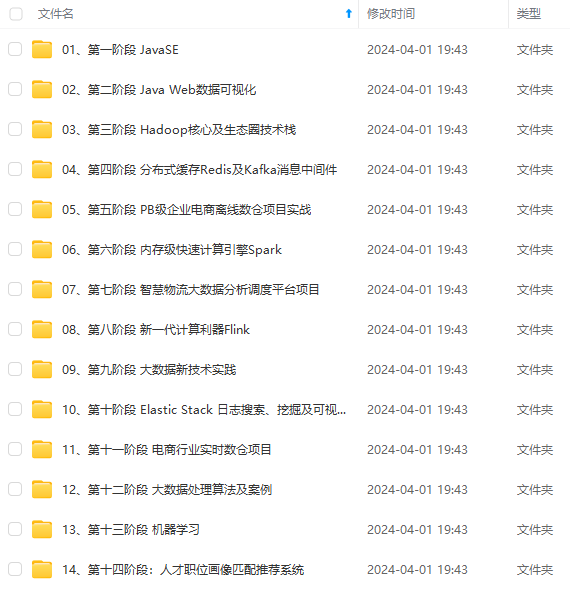

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

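3. Level-wise training

The single-machine version builds a decision tree through recursive calls (essentially depth-first). While the tree is being built, the data has to be moved so that the rows belonging to the same child node end up together.

In a distributed environment, tree nodes are built level by level instead (essentially breadth-first), so the number of passes over the full dataset equals the maximum depth among all trees. Each pass only needs to compute the statistics of every candidate split point for each node. Once the pass is finished, the per-node feature statistics decide whether a node is split and how.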
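To make the pass count concrete, here is a minimal single-machine sketch of level-wise growth. It is not Spark's implementation: the `grow` function, the node numbering (the children of node n are 2n and 2n+1), and the rule of splitting at the mean feature value are all assumptions made for illustration. What it does show is that every level costs exactly one pass over the data, and that rows are relabelled with a node id instead of being moved.

```scala
object LevelWiseSketch {

  /** Grows one tree level by level on a single feature and returns
    * the number of passes made over the data. */
  def grow(data: Seq[Double], maxDepth: Int, minSize: Int): Int = {
    // nodeOf(i) is the id of the node that currently holds data(i); the root is 1.
    var nodeOf = Vector.fill(data.size)(1)
    var frontier = Set(1)
    var passes = 0
    var depth = 0

    while (frontier.nonEmpty && depth < maxDepth) {
      passes += 1
      // One pass: collect (count, sum) for every node on the current level.
      val stats = data.indices
        .filter(i => frontier(nodeOf(i)))
        .groupBy(i => nodeOf(i))
        .map { case (node, idx) => (node, (idx.size, idx.map(i => data(i)).sum)) }

      // After the pass: decide per node whether to split, and at which threshold.
      val thresholds = stats.collect {
        case (node, (n, sum)) if n >= minSize => (node, sum / n)
      }

      // Route rows to the left (2n) or right (2n+1) child by updating their node id.
      nodeOf = data.indices.map { i =>
        thresholds.get(nodeOf(i)) match {
          case Some(t) => if (data(i) <= t) 2 * nodeOf(i) else 2 * nodeOf(i) + 1
          case None    => nodeOf(i)
        }
      }.toVector

      frontier = thresholds.keySet.flatMap(n => Set(2 * n, 2 * n + 1))
      depth += 1
    }
    passes
  }

  def main(args: Array[String]): Unit = {
    val data = Vector(1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0)
    println(grow(data, maxDepth = 3, minSize = 2))
  }
}
```

With several trees, the same loop would simply keep the frontiers of all trees in one set, which is why the number of passes follows the deepest tree rather than the number of trees.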

V. Regression and classification modeling with Spark ML

Dataset

    No,year,month,day,hour,pm,DEWP,TEMP,PRES,cbwd,Iws,Is,Ir
    1,2010,1,1,0,NaN,-21.0,-11.0,1021.0,NW,1.79,0.0,0.0
    2,2010,1,1,1,NaN,-21,-12,1020,NW,4.92,0,0
    3,2010,1,1,2,NaN,-21,-11,1019,NW,6.71,0,0
    4,2010,1,1,3,NaN,-21,-14,1019,NW,9.84,0,0
    5,2010,1,1,4,NaN,-20,-12,1018,NW,12.97,0,0
    6,2010,1,1,5,NaN,-19,-10,1017,NW,16.1,0,0
    7,2010,1,1,6,NaN,-19,-9,1017,NW,19.23,0,0
    8,2010,1,1,7,NaN,-19,-9,1017,NW,21.02,0,0
    9,2010,1,1,8,NaN,-19,-9,1017,NW,24.15,0,0
    10,2010,1,1,9,NaN,-20,-8,1017,NW,27.28,0,0
    11,2010,1,1,10,NaN,-19,-7,1017,NW,31.3,0,0
    12,2010,1,1,11,NaN,-18,-5,1017,NW,34.43,0,0
    13,2010,1,1,12,NaN,-19,-5,1015,NW,37.56,0,0
    14,2010,1,1,13,NaN,-18,-3,1015,NW,40.69,0,0
    15,2010,1,1,14,NaN,-18,-2,1014,NW,43.82,0,0
    16,2010,1,1,15,NaN,-18,-1,1014,cv,0.89,0,0
    ……

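1. Read pm.csv and drop the rows that contain missing values (or fill them with the column mean). Split the dataset into two parts: 80% as the training set and 20% as the test set.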

    import org.apache.log4j.{Level, Logger}
    import org.apache.spark.{SparkConf, SparkContext}
    import org.apache.spark.sql.SQLContext

    // One row of pm.csv; cbwd (wind direction) is the only string column.
    case class data(No: Int, year: Int, month: Int, day: Int, hour: Int, pm: Double, DEWP: Double, TEMP: Double, PRES: Double, cbwd: String, Iws: Double, Is: Double, Ir: Double)

    def main(args: Array[String]): Unit = {
      val conf = new SparkConf().setMaster("local[*]").setAppName("foreast")
      val sc = new SparkContext(conf)
      val sqlContext = new SQLContext(sc)
      Logger.getLogger("org").setLevel(Level.ERROR)
      // pm.csv is expected at the root of the classpath.
      val root = MyRandomForeast.getClass.getResource("/")
      import sqlContext.implicits._

      // Skip the header, drop rows with a NaN field, parse, then drop the unused No and year columns.
      val df = sc.textFile(root + "pm.csv")
        .map(_.split(","))
        .filter(_(0) != "No")
        .filter(!_.contains("NaN"))
        .map(x => data(x(0).toInt, x(1).toInt, x(2).toInt, x(3).toInt, x(4).toInt, x(5).toDouble, x(6).toDouble, x(7).toDouble, x(8).toDouble, x(9), x(10).toDouble, x(11).toDouble, x(12).toDouble))
        .toDF.drop("No").drop("year")
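The code above takes the first option and drops incomplete rows. For the mean-fill alternative mentioned in the step, a sketch with the DataFrame API might look like the following. It assumes Spark 2.2 or later (for `Imputer`) and uses a tiny in-memory table in place of pm.csv. The object name, the `fillPm` helper, the `pm_filled` column, and the seed are illustrative choices, not part of the original code.

```scala
import org.apache.spark.ml.feature.Imputer
import org.apache.spark.sql.{DataFrame, SparkSession}

object MeanFillSketch {

  /** Adds a pm_filled column where missing (NaN or null) pm values
    * are replaced by the mean of the observed ones. */
  def fillPm(raw: DataFrame): DataFrame = {
    val imputer = new Imputer()
      .setInputCols(Array("pm"))
      .setOutputCols(Array("pm_filled"))
      .setStrategy("mean")
    imputer.fit(raw).transform(raw)
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .master("local[*]")
      .appName("mean-fill-sketch")
      .getOrCreate()
    import spark.implicits._

    // Stand-in for pm.csv: (hour, pm) with one missing pm reading.
    val raw = Seq((0, Double.NaN), (1, 129.0), (2, 148.0), (3, 159.0)).toDF("hour", "pm")

    // Option A: drop every row that contains a null or NaN value.
    raw.na.drop().show()

    // Option B: keep all rows and fill the gaps with the column mean.
    val filled = fillPm(raw)
    filled.show()

    // 80/20 split; a fixed seed makes the split repeatable.
    val Array(train, test) = filled.randomSplit(Array(0.8, 0.2), seed = 42L)
    println(s"train rows: ${train.count()}, test rows: ${test.count()}")

    spark.stop()
  }
}
```

Note that when pm is also the label, filling it with the mean puts made-up targets into the training data, so dropping those rows is usually the safer choice for this dataset.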

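2. Use month, day, hour, DEWP, TEMP, PRES, cbwd, Iws, Is and Ir as the feature columns (everything except No, year and pm) and pm as the label column. Build a regression model on the training set with the random forest algorithm, use it to predict on the test set, and evaluate the result.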

    import org.apache.spark.ml.Pipeline
    import org.apache.spark.ml.evaluation.RegressionEvaluator
    import org.apache.spark.ml.feature.{StringIndexer, VectorAssembler}
    import org.apache.spark.ml.regression.{RandomForestRegressionModel, RandomForestRegressor}

    val splitdf = df.randomSplit(Array(0.8, 0.2))
    val (train, test) = (splitdf(0), splitdf(1))
    val traindf = train.withColumnRenamed("pm", "label")
    // cbwd is a string column, so index it before assembling the feature vector.
    val indexer = new StringIndexer().setInputCol("cbwd").setOutputCol("cbwd_")
    val assembler = new VectorAssembler()
      .setInputCols(Array("month", "day", "hour", "DEWP", "TEMP", "PRES", "cbwd_", "Iws", "Is", "Ir"))
      .setOutputCol("features")
    val rf = new RandomForestRegressor().setLabelCol("label").setFeaturesCol("features")
    // setMaxDepth (up to 20 in these experiments): greatly increases training time, but raising it effectively lowers the RMSE.
    // setMaxBins seems to depend on the data volume; raising it alone is counterproductive, raise it together with setNumTrees.
    // With the current parameters the evaluation result (RMSE) is 46.0002637676162.
    // val numClasses=2
    // val categoricalFeaturesInfo = Map[Int, Int]() // Empty categoricalFeaturesInfo indicates all features are continuous.
    val pipeline = new Pipeline().setStages(Array(indexer, assembler, rf))
    val model = pipeline.fit(traindf)
    val testdf = test.withColumnRenamed("pm", "label")
    val labelsAndPredictions = model.transform(testdf)
    labelsAndPredictions.select("prediction", "label", "features").show(false)
    // RegressionEvaluator reports the root mean squared error (RMSE) by default.
    val eva = new RegressionEvaluator().setLabelCol("label").setPredictionCol("prediction")
    val rmse = eva.evaluate(labelsAndPredictions)
    println(rmse)
    // Stage 2 of the fitted pipeline is the random forest itself.
    val treemodel = model.stages(2).asInstanceOf[RandomForestRegressionModel]
    println("Learned regression forest model:\n" + treemodel.toDebugString)
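The number printed by the evaluator is a root mean squared error, since `rmse` is the default metric of `RegressionEvaluator`. As a reminder of what that figure means, here is a small plain-Scala sketch of the same formula on (prediction, label) pairs. The `rmse` helper and the sample values are made up for illustration and are not taken from the pm data.

```scala
object RmseSketch {

  /** Root mean squared error over (prediction, label) pairs. */
  def rmse(pairs: Seq[(Double, Double)]): Double = {
    require(pairs.nonEmpty, "need at least one (prediction, label) pair")
    val squaredErrors = pairs.map { case (prediction, label) =>
      val diff = prediction - label
      diff * diff
    }
    math.sqrt(squaredErrors.sum / pairs.size)
  }

  def main(args: Array[String]): Unit = {
    // Two hypothetical predictions that are off by 3 and by 4.
    val sample = Seq((3.0, 0.0), (0.0, 4.0))
    println(rmse(sample))
  }
}
```

Because the errors are squared before averaging, a few large misses on pollution peaks can dominate the score, which is worth keeping in mind when comparing parameter settings.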

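3. Process the pm column according to the standard below. Put the numeric result into the levelNum column and the string result into the levelStr column.

Excellent (0): below 50

Good (1): 50 to 100

Lightly polluted (2): 100 to 150

Moderately polluted (3): 150 to 200

Heavily polluted (4): 200 to 300

Severely polluted (5): 300 and above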

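    import org.apache.spark.ml.feature.Bucketizer

    // Use the Bucketizer feature transformer to split pm into intervals.
    val splits = Array(Double.NegativeInfinity, 50.0, 100.0, 150.0, 200.0, 300.0, Double.PositiveInfinity)
    val bucketizer = new Bucketizer().setInputCol("pm").setOutputCol("levelNum").setSplits(splits)
    val bucketizerdf = bucketizer.transform(df)

    // Lookup table from level number to level name.
    val tempdf = sqlContext.createDataFrame(
      Seq((0.0, "Excellent"), (1.0, "Good"), (2.0, "Lightly polluted"), (3.0, "Moderately polluted"), (4.0, "Heavily polluted"), (5.0, "Severely polluted"))
    ).toDF("levelNum", "levelStr")

    // Attach levelStr by joining on levelNum; pm is no longer needed afterwards.
    val df2 = bucketizerdf.join(tempdf, "levelNum").drop("pm")
    df2.show()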
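It helps to know which side of each boundary a value falls on. `Bucketizer` treats every bucket as a half-open interval [x, y), with the last bucket also including its upper bound, so a reading of exactly 50 lands in level 1. The plain-Scala sketch below mimics that rule for the splits used here; the `level` helper and the English level names are illustrative and not part of Spark.

```scala
object BucketSketch {
  val splits: Array[Double] =
    Array(Double.NegativeInfinity, 50.0, 100.0, 150.0, 200.0, 300.0, Double.PositiveInfinity)

  val names: Array[String] = Array(
    "Excellent", "Good", "Lightly polluted",
    "Moderately polluted", "Heavily polluted", "Severely polluted")

  /** Index i such that splits(i) <= v < splits(i + 1);
    * the last bucket also contains its upper bound. */
  def level(v: Double): Int = {
    require(!v.isNaN, "NaN has no bucket in this sketch")
    val i = splits.lastIndexWhere(_ <= v)
    math.min(i, splits.length - 2)
  }

  def main(args: Array[String]): Unit = {
    // Values just below, on, and above the first boundary, plus two larger ones.
    for (pm <- Seq(49.9, 50.0, 120.0, 300.0, 500.0)) {
      println(s"$pm -> ${level(pm)} (${names(level(pm))})")
    }
  }
}
```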

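4. Use month, day, hour, DEWP, TEMP, PRES, cbwd, Iws, Is and Ir as the feature columns (everything except No, year and pm) and levelNum as the label column. Build a classification model on the training set with the random forest algorithm and use it to predict on the test set. Then post-process the prediction DataFrame by deriving a predictionStr column from the prediction column (mapping 0-5 to Excellent through Severely polluted), and evaluate the result.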

    import org.apache.spark.ml.classification.RandomForestClassifier

    val splitdf2 = df2.randomSplit(Array(0.8, 0.2))
    val (train2, test2) = (splitdf2(0), splitdf2(1))
    val traindf2 = train2.withColumnRenamed("levelNum", "label")
    // Same feature preparation as in the regression pipeline.
    val indexer2 = new StringIndexer().setInputCol("cbwd").setOutputCol("cbwd_")
    val assembler2 = new VectorAssembler()
      .setInputCols(Array("month", "day", "hour", "DEWP", "TEMP", "PRES", "cbwd_", "Iws", "Is", "Ir"))
      .setOutputCol("features")
    val rf2 = new RandomForestClassifier().setLabelCol("label").setFeaturesCol("features")
    val pipeline2 = new Pipeline().setStages(Array(indexer2, assembler2, rf2))
    val model2 = pipeline2.fit(traindf2)
    val testdf2 = test2.withColumnRenamed("levelNum", "label")
    val labelsAndPredictions2 = model2.transform(testdf2)
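The listing stops at the call that produces `labelsAndPredictions2`, so the predictionStr and evaluation parts of the step are not shown. One possible way to finish it is sketched below, under stated assumptions: a small hand-made table stands in for `labelsAndPredictions2`, the `withPredictionStr` helper and the English level names are hypothetical, and accuracy is picked as the metric because the step does not say which one to use.

```scala
import org.apache.spark.ml.evaluation.MulticlassClassificationEvaluator
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{col, udf}

object PredictionStrSketch {
  val levelNames: Array[String] = Array(
    "Excellent", "Good", "Lightly polluted",
    "Moderately polluted", "Heavily polluted", "Severely polluted")

  /** Adds predictionStr by mapping the numeric prediction (0.0 to 5.0) to its level name. */
  def withPredictionStr(predictions: DataFrame): DataFrame = {
    val names = levelNames // local copy captured by the UDF
    val toLevelStr = udf((p: Double) => names(p.toInt))
    predictions.withColumn("predictionStr", toLevelStr(col("prediction")))
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .master("local[*]")
      .appName("predictionStr-sketch")
      .getOrCreate()
    import spark.implicits._

    // Stand-in for labelsAndPredictions2: (prediction, label) pairs.
    val predictions = Seq((0.0, 0.0), (1.0, 2.0), (5.0, 5.0), (3.0, 3.0))
      .toDF("prediction", "label")

    val result = withPredictionStr(predictions)
    result.show(false)

    // Fraction of rows whose predicted level equals the true level.
    val evaluator = new MulticlassClassificationEvaluator()
      .setLabelCol("label")
      .setPredictionCol("prediction")
      .setMetricName("accuracy")
    println(s"accuracy = ${evaluator.evaluate(result)}")

    spark.stop()
  }
}
```

Joining the predictions with the levelNum/levelStr lookup table from step 3 would give the same column without a UDF; the UDF is used here only to keep the sketch self-contained.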
