Deploying K8s with Docker: setting up and using a Docker and K8s environment

Native installation steps

- Install the required dependencies and tools

sudo apt-get install apt-transport-https ca-certificates curl gnupg lsb-release

- Download Docker's GPG key

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /usr/share/keyrings/docker-archive-keyring.gpg

- Add the Docker apt repository

echo "deb [arch=amd64 signed-by=/usr/share/keyrings/docker-archive-keyring.gpg] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

- Install the container runtime and supporting packages

sudo apt-get install openssh-server

sudo apt install net-tools

sudo apt-get update

sudo apt-get install docker-ce docker-ce-cli containerd.io

sudo systemctl enable ssh

sudo systemctl enable docker

- Disable the swap partition

Edit the /etc/fstab file:

sudo vi /etc/fstab

Once opened, the file looks like this:

UUID=e2048966-750b-4795-a9a2-7b477d6681bf / ext4 errors=remount-ro 0 1

# /dev/fd0 /media/floppy0 auto rw,user,noauto,exec,utf8 0 0

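Comment out the second entry by prefixing it with "# " (the listing above shows it already commented out). Do not comment out the first entry, which mounts the root filesystem; otherwise the system may report a "file system read-only" error after rebooting.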
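The listing above does not show a swap entry, so the following is only a sketch of how such an entry could be commented out without editing by hand. It assumes the swap entry is a line whose third field (the filesystem type) is `swap`; the function name `comment_out_swap` is hypothetical, and it writes to standard output rather than touching /etc/fstab.

```shell
# comment_out_swap FILE: print FILE with every active swap entry commented out.
# Assumption: a swap entry is any non-comment line whose third field is "swap".
comment_out_swap() {
    awk '
        /^[[:space:]]*#/ { print; next }          # already a comment, keep as-is
        $3 == "swap"     { print "# " $0; next }  # prefix swap entries with "# "
        { print }                                 # every other entry is unchanged
    ' "$1"
}
```

One possible use is `comment_out_swap /etc/fstab > /tmp/fstab.new`, reviewing the result, and only then copying it over /etc/fstab. Running `sudo swapoff -a` turns swap off for the current boot without waiting for a restart.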

- 检查swap分区关闭情况

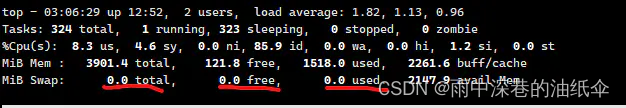

使用reboot命令重启,重启后使top命令查看任务管理器,如果看到如下参数均为0则成功关闭swap分区。
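The same check can be scripted instead of read off the top screen. The sketch below assumes a Linux host where /proc/meminfo reports a `SwapTotal:` line; the function names are hypothetical.

```shell
# swap_total_kb [FILE]: print the SwapTotal value (in kB) from a meminfo-style file.
# Defaults to /proc/meminfo when no file is given.
swap_total_kb() {
    awk '/^SwapTotal:/ { print $2 }' "${1:-/proc/meminfo}"
}

# swap_is_off [FILE]: succeed only when SwapTotal is exactly 0.
swap_is_off() {
    [ "$(swap_total_kb "$@")" = "0" ]
}
```

For example, `swap_is_off && echo "swap disabled"` prints the message only when no swap is configured.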

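- Configure passwordless SSH login

Generate a key pair on the master node, then copy the public key to each worker node.

Run the following command on the master node: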

sudo ssh-keygen

View the contents of the public key id_rsa.pub in the default key directory /root/.ssh/, then copy and paste them into the /root/.ssh/authorized_keys file on each node.
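Pasting by hand makes it easy to add the same key twice. As a rough sketch, the copy step on a node could be made repeatable with a small helper; the name `add_authorized_key` and its two-argument form are assumptions for illustration, not part of the original procedure.

```shell
# add_authorized_key PUBKEY_FILE AUTH_FILE: append the public key to AUTH_FILE
# unless an identical line is already there.
add_authorized_key() {
    pubkey_file=$1
    auth_file=$2
    mkdir -p "$(dirname "$auth_file")"
    touch "$auth_file"
    chmod 600 "$auth_file"          # sshd expects restrictive permissions here
    # -x: whole-line match, -F: fixed string, -q: quiet
    if ! grep -qxF -- "$(cat "$pubkey_file")" "$auth_file"; then
        cat "$pubkey_file" >> "$auth_file"
    fi
}
```

When the workers are reachable by password, `ssh-copy-id root@k8s-worker-node1` run on the master performs the same append over SSH.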

- Set the hostname

Set a name for each node; every node name must be unique.

hostnamectl set-hostname master

- Map node names to IP addresses in the hosts file

Open /etc/hosts and add the node-name-to-IP mappings, replacing ip with each node's actual address:

ip k8s-master-node1

ip k8s-worker-node1

ip k8s-worker-node2
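Because the same mappings have to be added on every node, a repeatable helper can be handy. This is a minimal sketch; the function name `add_host_entry` is hypothetical, and the address in the usage note is a placeholder to replace with your own.

```shell
# add_host_entry IP NAME [FILE]: append "IP NAME" to FILE (default /etc/hosts)
# unless NAME is already mapped on a non-comment line.
add_host_entry() {
    ip=$1
    name=$2
    hosts_file=${3:-/etc/hosts}
    # Look for NAME as a whole field after the address column.
    if awk -v n="$name" '
            !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }
        ' "$hosts_file"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}
```

Run as root, `add_host_entry 192.168.56.11 k8s-master-node1` would add the master's line once and do nothing on later runs.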

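- Install Docker

Install Docker on every node.

Note: Kubernetes 1.24 removed its built-in support for Docker as the container runtime (dockershim) and relies on CRI runtimes such as containerd instead. To use Docker as the runtime as described here, pin Kubernetes to a version below 1.24.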

sudo apt install docker.io
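The version constraint in the note above can be checked before installing anything. The sketch below only handles 1.x version strings such as `1.23.9`, `v1.22.1`, or the apt form `1.23.9-00`; the function name is hypothetical.

```shell
# supports_docker_runtime VERSION: succeed when VERSION is a 1.x release below 1.24,
# i.e. one that still ships the built-in Docker integration.
supports_docker_runtime() {
    version=${1#v}            # drop an optional leading "v"
    major=${version%%.*}      # text before the first dot
    rest=${version#*.}        # text after the first dot
    minor=${rest%%.*}         # text before the next dot
    [ "$major" -eq 1 ] && [ "$minor" -lt 24 ]
}
```

For example, `supports_docker_runtime 1.23.9-00 || echo "pick an older version"` stays silent, while the same call with `1.24.0` prints the message.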

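- Configure Docker

After Docker is installed, its configuration needs two changes: point the image registry at mirrors in China, and set the cgroup driver.

Open the Docker configuration file: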

sudo vi /etc/docker/daemon.json

Add the following configuration to /etc/docker/daemon.json:

{"registry-mirrors": ["https://dockerhub.azk8s.cn", "https://reg-mirror.qiniu.com", "https://quay-mirror.qiniu.com"], "exec-opts": ["native.cgroupdriver=systemd"]}

Reload the Docker configuration:

sudo systemctl daemon-reload

sudo systemctl restart docker

Run docker info | grep Cgroup to check Docker's cgroup driver after the change; if it now reads systemd, the change took effect.
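For use in a script, the driver name can be pulled out of the `docker info` text rather than read by eye. This sketch assumes the output contains a line of the form `Cgroup Driver: systemd`; the function name is hypothetical.

```shell
# cgroup_driver: read `docker info` output on stdin and print the cgroup driver name.
cgroup_driver() {
    sed -n 's/^[[:space:]]*Cgroup Driver:[[:space:]]*//p'
}
```

A check such as `[ "$(docker info 2>/dev/null | cgroup_driver)" = "systemd" ]` then succeeds only once the new setting is active.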

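- Install Kubernetes

Installing Kubernetes requires three main components: kubelet, kubeadm, and kubectl. All three must be installed on every node.

Their main roles are:

- kubelet: the core k8s service, the agent that runs on every node

- kubeadm: an integrated tool for quickly installing Kubernetes; the Kubernetes installation on every node goes through it.

- kubectl: the k8s command-line tool; once deployment is finished, all subsequent operations are carried out with it

Install the three components with the following commands, run as root: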

apt-get update && apt-get install -y apt-transport-https

curl https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -

cat <<EOF >/etc/apt/sources.list.d/kubernetes.list

deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main

EOF

apt-get update

apt-get install -y kubelet=1.23.9-00 kubeadm=1.23.9-00 kubectl=1.23.9-00

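Install the master node

Setting up the master node takes roughly the following steps:

- Initialize the master node

- Configure the kubectl tool

- Deploy the flannel network

Initialize the master node

kubeadm's init command performs the initialization, and its flags control the settings. In the commands below, change the IP address passed to --apiserver-advertise-address to your own master host's address before running them.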

docker pull coredns/coredns:1.8.4

docker tag coredns/coredns:1.8.4 registry.aliyuncs.com/google_containers/coredns:v1.8.4

kubeadm init --apiserver-advertise-address=192.168.61.170 --image-repository registry.aliyuncs.com/google_containers --pod-network-cidr=10.244.0.0/16 --kubernetes-version v1.23.9

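Meaning of some commonly used flags:

- --apiserver-advertise-address: the address advertised by the API server, the main k8s service; set it to the IP of your own master node

- --image-repository: the registry to pull images from. During initialization kubeadm pulls many k8s component images, so specify a mirror in China here; otherwise the images may fail to download.

- --pod-network-cidr: the address range used for the pod network. flannel is used as the k8s network here, so set it to 10.244.0.0/16

- --kubernetes-version: the k8s version to deploy. It can usually be omitted, but if initialization fails because of a version mismatch, set it explicitly with this flag.

- --ignore-preflight-errors: ignore errors raised by the preflight checks. For example, to ignore the error caused by having fewer than 2 CPU cores, use --ignore-preflight-errors=NumCPU. The error name is printed when the check fails.

If the output looks like the following, initialization succeeded. Copy the final line that begins with kubeadm join; you will need it when installing the worker nodes. If you lose it, run kubeadm token create --print-join-command on the master node to generate a new one.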

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node as root:

kubeadm join 192.168.56.11:6443 --token wbryr0.am1n476fgjsno6wa --discovery-token-ca-cert-hash sha256:7640582747efefe7c2d537655e428faa6275dbaff631de37822eb8fd4c054807
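Copying the join line by hand is error-prone, so one option is to save the init output to a file and extract the command from it. The sketch below assumes the output was saved (for instance with `kubeadm init ... | tee kubeadm-init.log`, where the file name is a placeholder) and that the join command may be split across lines with a trailing backslash, as newer kubeadm releases print it. The function name is hypothetical.

```shell
# extract_join_command FILE: print the "kubeadm join ..." command found in saved
# `kubeadm init` output, folded onto a single line.
extract_join_command() {
    awk '
        /^[[:space:]]*kubeadm join / { capture = 1 }
        capture {
            line = $0
            sub(/^[[:space:]]+/, "", line)            # drop leading indentation
            if (line == "") next                      # skip blank lines inside the command
            cont = sub(/[[:space:]]*\\$/, "", line)   # strip a trailing backslash, if any
            out = (out == "" ? line : out " " line)
            if (!cont) { print out; exit }            # no continuation: command is complete
        }
    ' "$1"
}
```

Running `extract_join_command kubeadm-init.log` prints the command ready to paste on a worker node.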

If any Error aborts the initialization, reset with kubeadm reset and then run the initialization again.

Full output:

root@ubuntu:/home/mico# kubeadm init --apiserver-advertise-address=192.168.196.141 --image-repository registry.aliyuncs.com/google_containers --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.22.1

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local ubuntu] and IPs [10.96.0.1 192.168.196.141]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost ubuntu] and IPs [192.168.196.141 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost ubuntu] and IPs [192.168.196.141 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 20.516007 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.22" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node ubuntu as control-plane by adding the labels: [node-role.kubernetes.io/master(deprecated) node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node ubuntu as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: i9rpmm.8jqs342cmyj1hfwg

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.196.141:6443 --token i9rpmm.8jqs342cmyj1hfwg \

--discovery-token-ca-cert-hash sha256:95f83d494d4d484945f8017a70bf2f7c6b238c8ca844d431facc4b19dc4105f2

Configure the kubectl tool

Run the following command:

mkdir -p /root/.kube && cp /etc/kubernetes/admin.conf /root/.kube/config

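After that, use the following two commands to test whether kubectl works:

# List the nodes that have joined

kubectl get nodes

# Check the status of the cluster components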

kubectl get cs

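Deploy the flannel network

flannel is a network fabric designed for k8s; it gives the Docker containers created on different nodes virtual IP addresses that are unique across the whole cluster. Run the following command to set it up: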

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml

This fails with the following errors:

root@ubuntu:/home/mico# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml

serviceaccount/flannel unchanged

configmap/kube-flannel-cfg unchanged

unable to recognize "https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml": no matches for kind "ClusterRole" in version "rbac.authorization.k8s.io/v1beta1"

unable to recognize "https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml": no matches for kind "ClusterRoleBinding" in version "rbac.authorization.k8s.io/v1beta1"

unable to recognize "https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml": no matches for kind "DaemonSet" in version "extensions/v1beta1"

unable to recognize "https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml": no matches for kind "DaemonSet" in version "extensions/v1beta1"

unable to recognize "https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml": no matches for kind "DaemonSet" in version "extensions/v1beta1"

unable to recognize "https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml": no matches for kind "DaemonSet" in version "extensions/v1beta1"

unable to recognize "https://raw.githubusercontent.com/coreos/flannel/a70459be0084506e4ec919aa1c114638878db11b/Documentation/kube-flannel.yml": no matches for kind "DaemonSet" in version "extensions/v1beta1"
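The errors mean the pinned manifest declares API versions that this cluster's API server does not serve: `extensions/v1beta1` for DaemonSet was removed in Kubernetes 1.16, and `rbac.authorization.k8s.io/v1beta1` was removed in 1.22. As a rough sketch, a downloaded manifest can be scanned for these two versions before applying it; the function name is hypothetical and the pattern covers only the two versions named in the errors above.

```shell
# removed_api_versions FILE: print each distinct apiVersion line in a manifest
# that uses one of the two API versions rejected in the errors above.
removed_api_versions() {
    grep -E '^apiVersion:[[:space:]]*(extensions/v1beta1|rbac\.authorization\.k8s\.io/v1beta1)[[:space:]]*$' "$1" | sort -u
}
```

Empty output suggests the manifest does not rely on either removed version; any printed line points to a resource that needs a newer manifest.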

Switch to the manifest on the master branch instead:

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Warning: policy/v1beta1 PodSecurityPolicy is deprecated in v1.21+, unavailable in v1.25+

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel unchanged

configmap/kube-flannel-cfg configured

daemonset.apps/kube-flannel-ds created

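Output along the following lines means the installation is complete (the exact resource names vary with the flannel version):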

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.extensions/kube-flannel-ds-amd64 created

daemonset.extensions/kube-flannel-ds-arm64 created

daemonset.extensions/kube-flannel-ds-arm created

daemonset.extensions/kube-flannel-ds-ppc64le created

daemonset.extensions/kube-flannel-ds-s390x created

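Join the worker nodes to the cluster

Each worker node needs Docker and k8s installed and the server configuration changes described earlier. Then run the join command saved from the master node: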

kubeadm join 192.168.56.11:6443 --token wbryr0.am1n476fgjsno6wa --discovery-token-ca-cert-hash sha256:7640582747efefe7c2d537655e428faa6275dbaff631de37822eb8fd4c054807

Once the console prints the following, the node has joined successfully:

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the master to see this node join the cluster.

Then log in to master1 and check the status of the joined nodes. worker1 has joined, and both nodes are Ready. The k8s setup is now complete:

root@master1:~# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master1 Ready master 145m v1.15.0

worker1 Ready <none> 87m v1.15.0
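Nodes often stay NotReady for a short while after joining, so a script may want to detect that rather than rely on a visual check. The sketch below reads the tabular `kubectl get nodes` output shown above; the function name is hypothetical, and any status other than exactly `Ready` (including `Ready,SchedulingDisabled`) is treated as not ready.

```shell
# not_ready_nodes: read `kubectl get nodes` output on stdin and print the name of
# every node whose STATUS column is not exactly "Ready".
not_ready_nodes() {
    awk 'NR > 1 && NF >= 2 && $2 != "Ready" { print $1 }'   # NR > 1 skips the header row
}
```

For example, `kubectl get nodes | not_ready_nodes` prints nothing when the whole cluster is ready.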

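Script-based installation steps

- Preparation

Configure passwordless login between the nodes, following the method described in the native installation.

- Download the script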

# centos

wget https://ghproxy.com/https://raw.githubusercontent.com/lework/kainstall/master/kainstall-centos.sh

# debian

wget https://ghproxy.com/https://raw.githubusercontent.com/lework/kainstall/master/kainstall-debian.sh

# ubuntu

wget https://ghproxy.com/https://raw.githubusercontent.com/lework/kainstall/master/kainstall-ubuntu.sh

- View the script's usage information

bash kainstall-centos.sh

- Initialize the cluster

bash kainstall-centos.sh init --master ip1,ip2,ip3 --worker ip4,ip5 --user root --password 123456 --port 22 --version 1.24
