
Biomedical large models: BioMedLM, Mixtral_BioMedical, Med-PaLM2, BioMistral, BioMedGPT

Author: 花生_TL007 | 2024-04-15 12:32:53


BioMedLM:

https://huggingface.co/stanford-crfm/BioMedLM

Mixtral_BioMedical:

https://huggingface.co/LeroyDyer/Mixtral_BioMedical_7b

Med-PaLM:

https://sites.research.google/med-palm/

BioMistral:

https://huggingface.co/BioMistral/BioMistral-7B

BioMedGPT:

https://github.com/PharMolix/OpenBioMed/blob/main/README-CN.md

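These are domain-specific large models built from biomedical knowledge and multimodal data such as text and images. Their main use is knowledge question answering.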

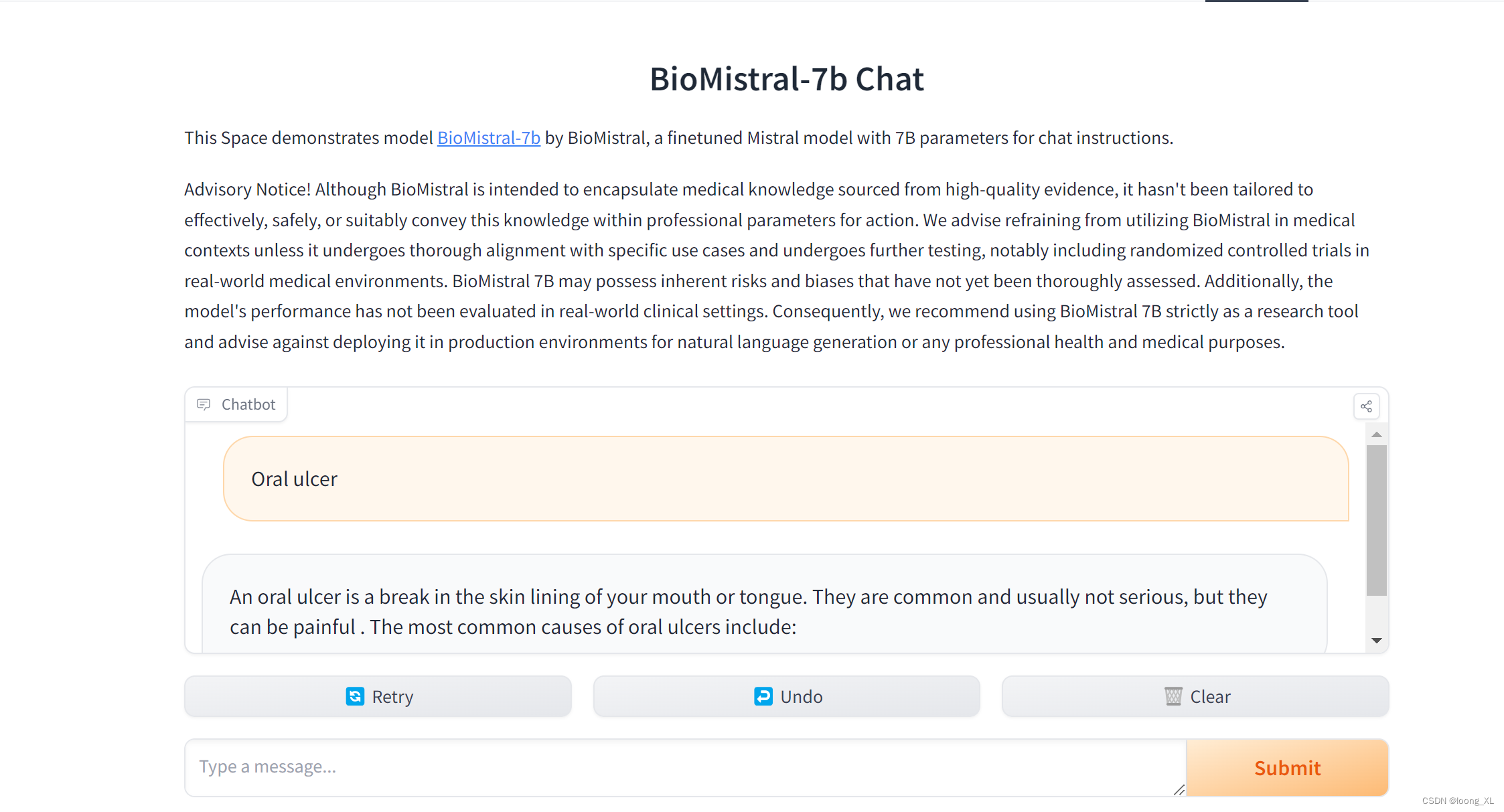

Try it in the browser (a simple online demo):

https://huggingface.co/spaces/Artples/BioMistral-7b-Chat

Running the code

```python
import torch
from transformers import AutoTokenizer, AutoModelForCausalLM, BitsAndBytesConfig

model_id = "BioMistral/BioMistral-7B"

# 4-bit NF4 quantization with double quantization; computation runs in bfloat16.
# Requires the bitsandbytes package and a CUDA GPU.
bnb_config = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_use_double_quant=True,
    bnb_4bit_quant_type="nf4",
    bnb_4bit_compute_dtype=torch.bfloat16,
)

# device_map="auto" places the weights on the available device(s)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    quantization_config=bnb_config,
    device_map="auto",
    trust_remote_code=True,
)

eval_tokenizer = AutoTokenizer.from_pretrained(model_id, add_bos_token=True, trust_remote_code=True)

eval_prompt = "The best way to "
# Send the inputs to wherever the model was placed instead of hard-coding "cuda"
model_input = eval_tokenizer(eval_prompt, return_tensors="pt").to(model.device)

model.eval()
with torch.no_grad():
    output_ids = model.generate(**model_input, max_new_tokens=100, repetition_penalty=1.15)
    print(eval_tokenizer.decode(output_ids[0], skip_special_tokens=True))
```
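The code above loads the model with `load_in_4bit=True`. As a rough back-of-the-envelope sketch of why that helps, weight memory is approximately parameter count × bits per parameter / 8 bytes. The figure of about 7.24 billion parameters is an assumption (a Mistral-7B-sized model), and the estimate deliberately ignores activations, the KV cache, and quantization metadata such as per-block scaling constants.

```python
# Rough weight-memory estimate: params * bits / 8 bytes, reported in GiB.
# ASSUMPTION: ~7.24e9 parameters (Mistral-7B-sized model). Ignores activations,
# the KV cache, and quantization metadata (e.g. per-block scales).

def weight_memory_gib(num_params: float, bits_per_param: float) -> float:
    """Approximate memory needed for the weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 2**30


NUM_PARAMS = 7.24e9  # assumed parameter count, not read from the checkpoint

for name, bits in [("fp32", 32), ("bf16", 16), ("int8", 8), ("4-bit", 4)]:
    print(f"{name:>6}: ~{weight_memory_gib(NUM_PARAMS, bits):.1f} GiB")
```

Under these assumptions the 4-bit weights need roughly a quarter of the bf16 footprint (about 3.4 GiB versus 13.5 GiB), which is what makes a 7B model practical on a single consumer GPU.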

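The `repetition_penalty=1.15` argument in the `generate` call discourages the model from repeating tokens it has already produced. Below is a minimal pure-Python sketch of the CTRL-style rule that, to my understanding, transformers applies for this parameter: for every token id already present in the sequence, a positive logit is divided by the penalty and a negative logit is multiplied by it, so both move toward lower probability. The function name, toy logits, and token ids are hypothetical and only for illustration.

```python
from typing import Iterable, List


def apply_repetition_penalty(logits: List[float], seen_token_ids: Iterable[int],
                             penalty: float) -> List[float]:
    """Return a copy of `logits` with already-seen tokens penalized (CTRL-style)."""
    out = list(logits)
    # Each seen token is penalized once, no matter how often it occurred.
    for tok in set(seen_token_ids):
        score = out[tok]
        # With penalty > 1, dividing a positive logit or multiplying a
        # negative one both lower that token's score.
        out[tok] = score / penalty if score > 0 else score * penalty
    return out


# Hypothetical 4-token vocabulary; tokens 0 and 2 were already generated.
logits = [2.3, 1.0, -0.5, 0.4]
print(apply_repetition_penalty(logits, seen_token_ids=[0, 2, 0], penalty=1.15))
```

Tokens 1 and 3 keep their original scores, while tokens 0 and 2 both become less likely. Values slightly above 1.0 (such as 1.15) are a mild nudge; large values can make the output avoid legitimately repeated terms, which matters for domain vocabulary like drug or gene names.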

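Disclaimer: This article was contributed by a user and does not represent the position of the wpsshop blog. Copyright belongs to the original author, and the site assumes no legal liability for it. If you find infringing content, please contact the site. When reposting, please cite the source: https://www.wpsshop.cn/w/花生_TL007/article/detail/427909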
