
Hadoop Pseudo-Distributed Installation Tutorial

I. Installation Background

Yuque blog post: "Hadoop Pseudo-Distributed Installation Tutorial"

1.1 Software list

- Ubuntu 24.04 LTS

- Java 1.8

- Hadoop 3.1.3

- Hive 3.1.3

- MySQL 8

- VMware 17 Pro

- FinalShell

- Big data software download link (hadoop + hive + java1.8 + mysql8.jar):

https://pan.baidu.com/s/1k63c-srXl6CQACVyGjhlkg?pwd=5vqr

Extraction code: 5vqr


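1.2 System packages

- openssh-server (for SSH connections)

sudo apt-get install openssh-server

When connecting over SSH, log in directly as root, the highest-privilege user.

For details, see Part 1, "Setting up root privileges", of the Linux study notes article.

- vim (text editing)

sudo apt-get install vim

- net-tools (ifconfig shows the IP address; ip addr works as well)

sudo apt-get install net-tools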

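II. Installing Hadoop

2.1 Installing the Java environment

2.1.1 Preparation

First, create a Downloads folder under the root directory to hold uploaded files, and a module folder under /opt to hold the extracted big data software. Use pwd to check your current location at any time.

# Go to the root directory

cd /

# Create Downloads

mkdir Downloads

# Go to the /opt directory

cd /opt

mkdir module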


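2.1.2 File transfer

Upload the jdk-8u411-linux-x64.tar.gz installation package to the virtual machine.

2.1.3 Extract the archive

# Extract the archive (run this from the directory that contains it)

tar -zxvf jdk-8u411-linux-x64.tar.gz -C /opt/module/

# Go to the extraction directory and rename the JDK folder

cd /opt/module/

mv jdk1.8.0_411 jdk1.8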


2.1.4 Configure the JDK environment variables

vim /etc/profile

# Add the following:

# JAVA_HOME

export JAVA_HOME=/opt/module/jdk1.8

export CLASSPATH=.:$JAVA_HOME/lib:$JAVA_HOME/jre/lib

export PATH=$PATH:$JAVA_HOME/bin

# Apply the changes

source /etc/profile
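Editing /etc/profile by hand makes it easy to add the same export twice when a step is repeated. As a minimal sketch, the lines above could be appended idempotently. The helper name add_line and the scratch file are assumptions made for illustration and are not part of the original steps.

```shell
# add_line FILE LINE: append LINE to FILE unless an identical line already exists.
# (add_line is a hypothetical helper name used only in this sketch.)
add_line() {
  grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# Demo against a scratch file; on the VM you would pass /etc/profile instead.
profile=$(mktemp)
add_line "$profile" 'export JAVA_HOME=/opt/module/jdk1.8'
add_line "$profile" 'export PATH=$PATH:$JAVA_HOME/bin'
add_line "$profile" 'export PATH=$PATH:$JAVA_HOME/bin'   # repeated call adds nothing
cat "$profile"
```

The single quotes keep $PATH and $JAVA_HOME unexpanded, so the file receives the literal export lines, as it would when typed in vim.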


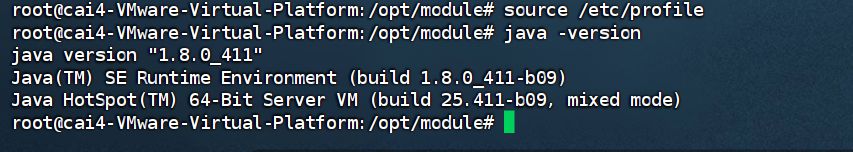

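2.1.5 Run java, javac, and java -version to verify that the JDK was installed successfully

2.2 Hadoop download link: hadoop (the hadoop-3.1.3.tar.gz file)

2.2.1 Transfer the file

Use a file transfer tool to upload hadoop-3.1.3.tar.gz into the Downloads directory. Note that the upload may fail if you are not operating as root.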

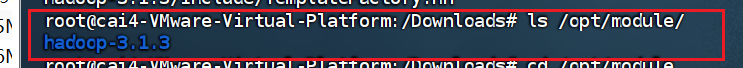

2.2.2 Extract the archive

# Extract the installation archive into /opt/module

tar -zxvf hadoop-3.1.3.tar.gz -C /opt/module/

# Check that extraction succeeded; the listing should include hadoop-3.1.3

ls /opt/module/


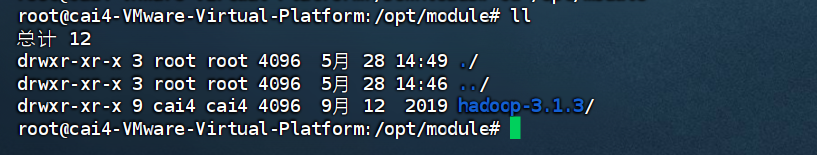

2.2.3 Enter the Hadoop directory

# Go to where Hadoop was extracted

cd /opt/module

ll

# Rename hadoop-3.1.3

mv hadoop-3.1.3 hadoop

# Enter the renamed hadoop directory

cd hadoop


2.2.4 Add Hadoop to the environment variables

# (1) Open /etc/profile

vim /etc/profile

# (2) Append the following to the end of /etc/profile:

# HADOOP_HOME

export HADOOP_HOME=/opt/module/hadoop

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin

# (3) Apply the changes

source /etc/profile


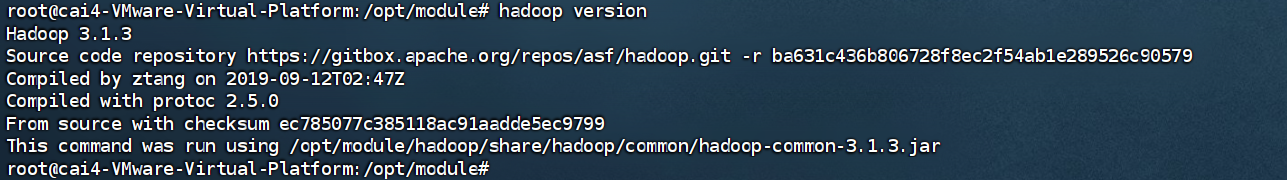

2.2.5 Verify the installation

hadoop version

# The first line of the output should be: Hadoop 3.1.3

2.2.6 For pseudo-distributed mode, we mainly modify two Hadoop configuration files: core-site.xml and hdfs-site.xml

# Go to the hadoop directory

cd /opt/module/hadoop

# Go to the directory that contains core-site.xml

cd ./etc/hadoop

# Edit the core-site.xml configuration file:

vim core-site.xml

# Add the following inside the <configuration></configuration> element

<property>

<name>hadoop.tmp.dir</name>

<value>file:/opt/module/hadoop/tmp</value>

<description>A base for other temporary directories.</description>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

# Edit the hdfs-site.xml configuration file:

vim hdfs-site.xml

# Add the following inside the <configuration></configuration> element

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/opt/module/hadoop/tmp/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/opt/module/hadoop/tmp/dfs/data</value>

</property>
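To double-check what was written, a property value can be read back from the file. The sketch below is only an illustration: get_prop is a hypothetical helper, and it assumes each tag sits on a line in name-then-value order, as in the configuration above, rather than doing real XML parsing.

```shell
# get_prop FILE NAME: print the <value> that follows <name>NAME</name>.
# (get_prop is a hypothetical helper; it assumes name-before-value ordering.)
get_prop() {
  awk -v name="$2" '
    index($0, "<name>" name "</name>") { found = 1 }
    found && match($0, /<value>[^<]*<\/value>/) {
      # Skip the 7 characters of "<value>" and drop the 8 of "</value>".
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }
  ' "$1"
}

# Demo against a scratch copy of the core-site.xml settings.
conf=$(mktemp)
cat > "$conf" <<'EOF'
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/opt/module/hadoop/tmp</value>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
EOF
get_prop "$conf" fs.defaultFS
```

On a working installation, hdfs getconf -confKey fs.defaultFS reports the value Hadoop itself resolved, which is the more reliable check.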


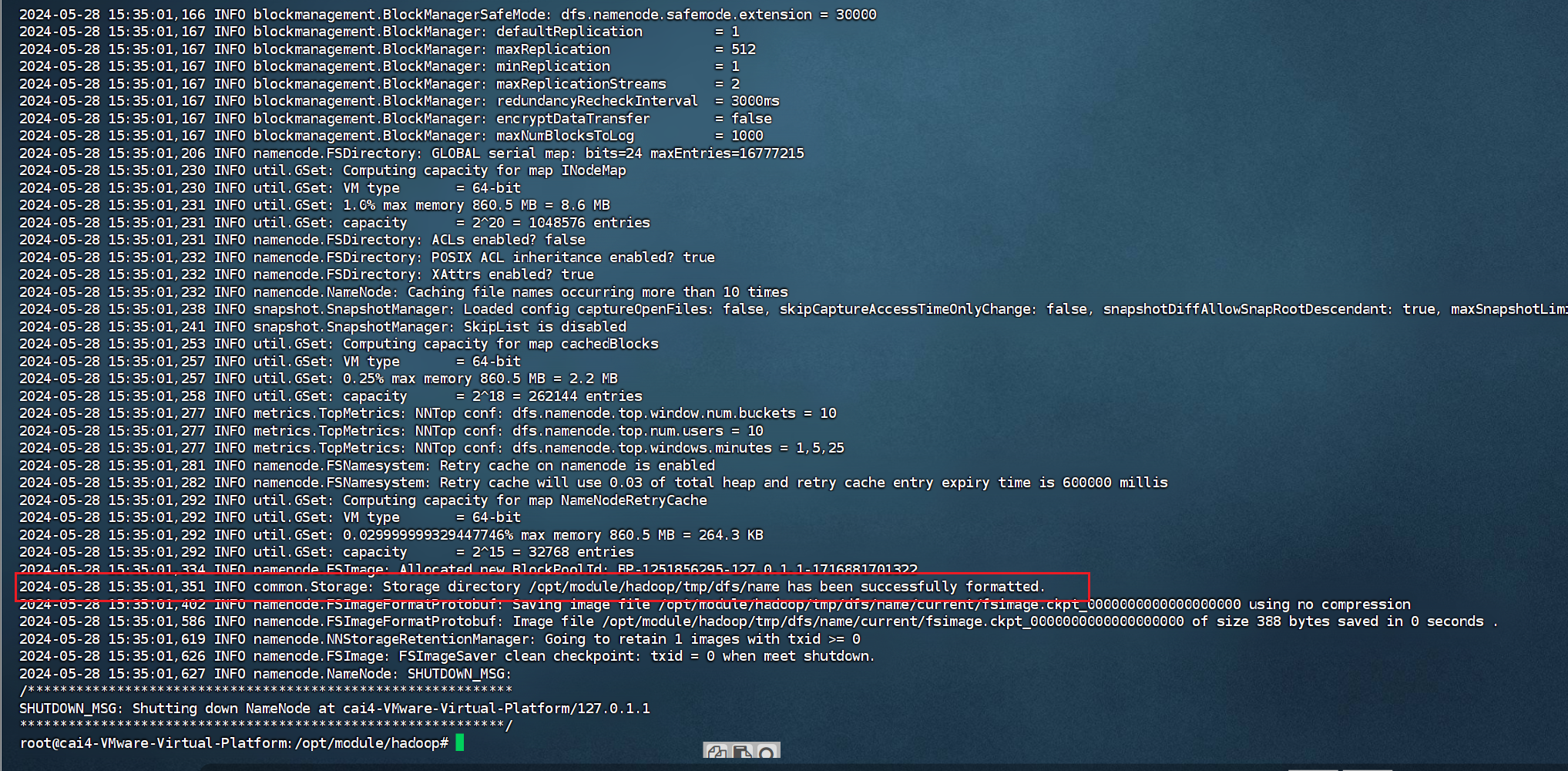

2.2.7 Initializing Hadoop

Initialization is simple; just run the following commands:

cd /opt/module/hadoop # enter the hadoop directory

./bin/hdfs namenode -format # format the NameNode to initialize HDFS


成功的话,会看到 “successfully formatted” 的提示,具体返回信息类似如下: 初始工作完成之后,我们就可以开启Hadoop了,具体命令如下:

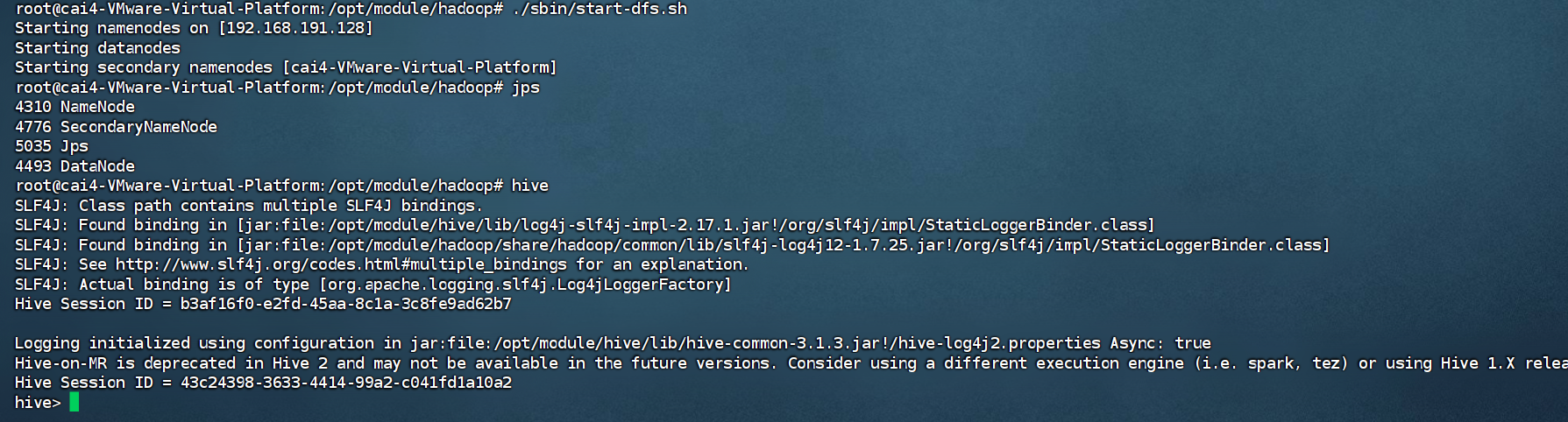

初始工作完成之后,我们就可以开启Hadoop了,具体命令如下:

cd /opt/module/hadoop

./sbin/start-dfs.sh #start-dfs.sh是个完整的可执行文件,中间没有空格


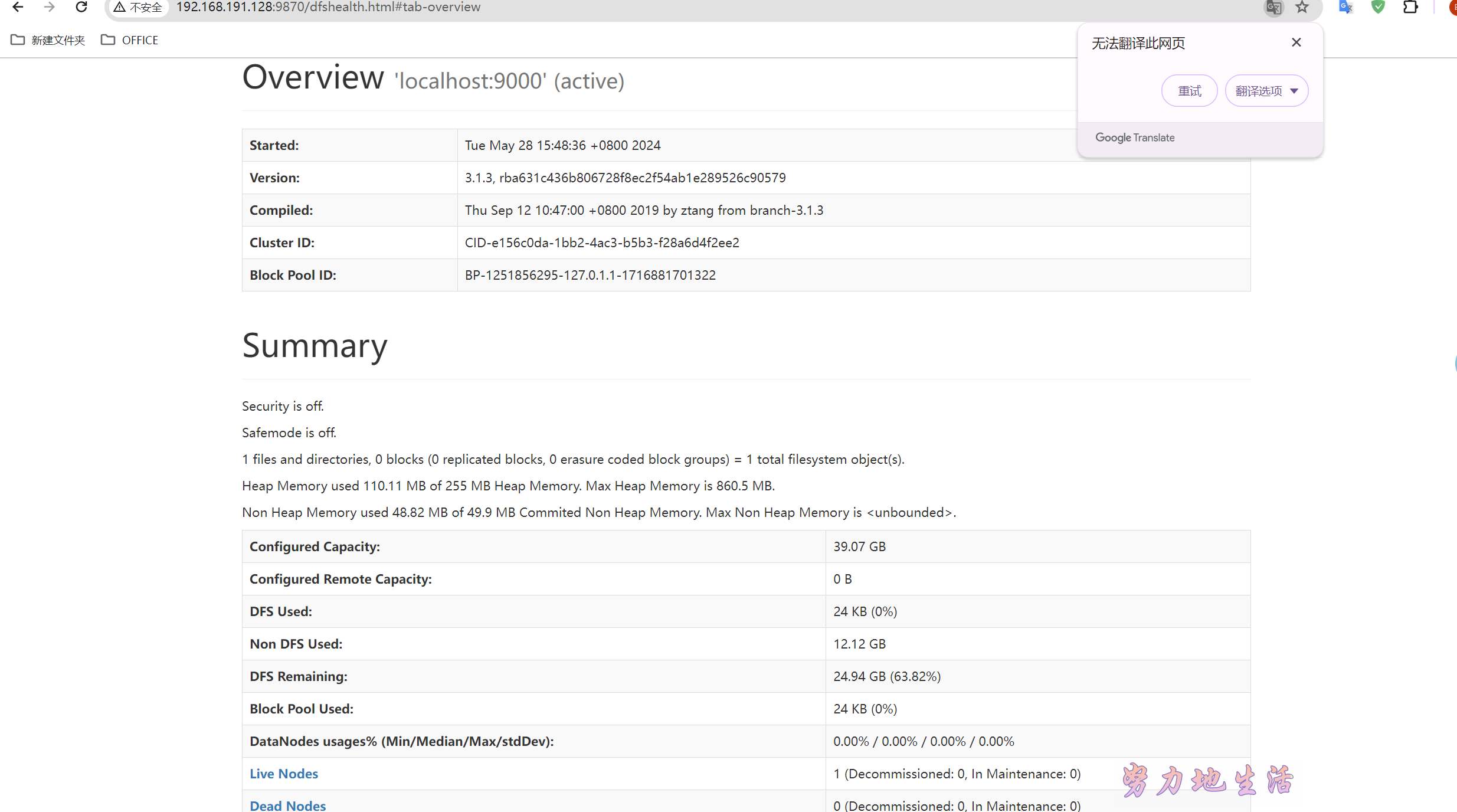

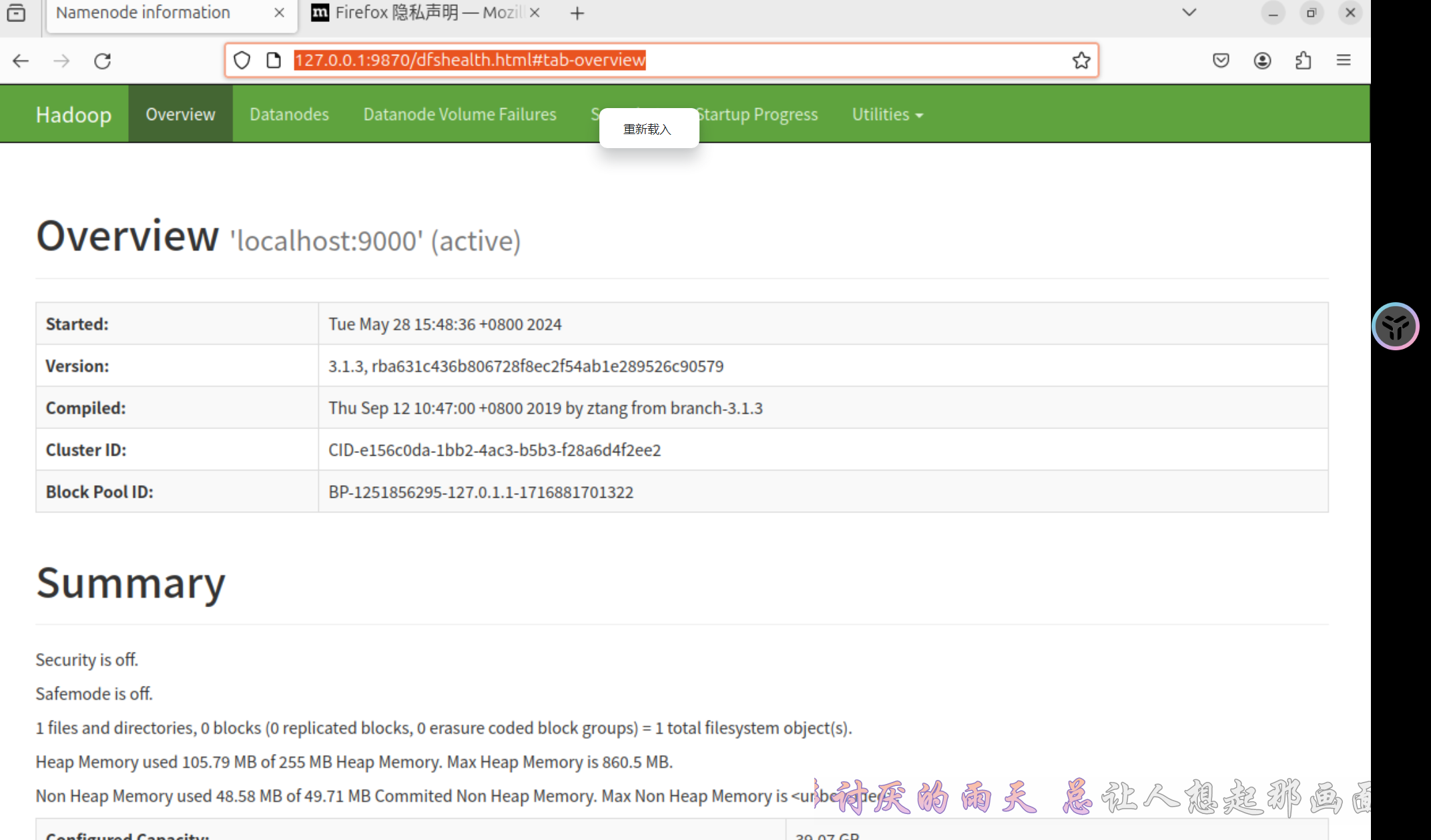

本地 web 访问:hadoop 虚拟机 web 访问:hadoop

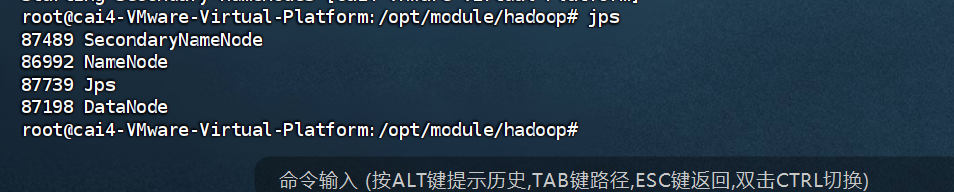

虚拟机 web 访问:hadoop 启动完成后,我们可以通过输入jps命令来进行验证Hadoop伪分布式是否配置成功:

启动完成后,我们可以通过输入jps命令来进行验证Hadoop伪分布式是否配置成功:
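As a rough sketch of that check, the jps output can be scanned for the three HDFS daemons that start-dfs.sh launches (NameNode, DataNode, SecondaryNameNode). The function name check_daemons is an assumption made for illustration; it reads jps output from standard input so it can be tried without a running cluster.

```shell
# check_daemons: read `jps` output on stdin and report any missing HDFS daemon.
# (check_daemons is a hypothetical helper name. The body runs in a subshell,
# so its variables do not leak into the calling shell.)
check_daemons() (
  out=$(cat)
  missing=0
  for d in NameNode DataNode SecondaryNameNode; do
    # jps prints one "<pid> <main class>" pair per line.
    if ! printf '%s\n' "$out" | grep -Eq "^[0-9]+ $d\$"; then
      echo "missing: $d"
      missing=1
    fi
  done
  exit "$missing"
)

# Demo with sample output; on the VM you would run: jps | check_daemons
printf '3001 NameNode\n3120 DataNode\n3340 SecondaryNameNode\n3500 Jps\n' | check_daemons \
  && echo "all HDFS daemons found"
```

If a daemon is reported missing, the log files under the Hadoop logs directory are usually the next place to look.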

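2.2.8 Extra: Hadoop directory structure

- bin directory: scripts for operating Hadoop services (hdfs, yarn, mapred)

- etc directory: Hadoop's configuration directory, which holds its configuration files

- lib directory: Hadoop's native libraries (used to compress and decompress data)

- sbin directory: scripts that start or stop Hadoop services

- share directory: Hadoop's dependency JARs, documentation, and official examples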

2.2.9 Errors

- Hadoop reports the following errors on startup

Starting namenodes on [localhost]

ERROR: Attempting to operate on hdfs namenode as root

ERROR: but there is no HDFS_NAMENODE_USER defined.

Aborting operation.

Starting datanodes

ERROR: Attempting to operate on hdfs datanode as root

ERROR: but there is no HDFS_DATANODE_USER defined.

Aborting operation.

Starting secondary namenodes [cai4-VMware-Virtual-Platform]

ERROR: Attempting to operate on hdfs secondarynamenode as root

ERROR: but there is no HDFS_SECONDARYNAMENODE_USER defined.

Aborting operation.


Starting namenodes on [localhost]

localhost: Warning: Permanently added 'localhost' (ED25519) to the list of known hosts.

localhost: root@localhost: Permission denied (publickey,password).

Starting datanodes

localhost: root@localhost: Permission denied (publickey,password).

Starting secondary namenodes [cai4-VMware-Virtual-Platform]

cai4-VMware-Virtual-Platform: Warning: Permanently added 'cai4-vmware-virtual-platform' (ED25519) to the list of known hosts.

cai4-VMware-Virtual-Platform: root@cai4-vmware-virtual-platform: Permission denied (publickey,password).


localhost: ERROR: JAVA_HOME is not set and could not be found.

Starting datanodes

localhost: ERROR: JAVA_HOME is not set and could not be found.

Starting secondary namenodes [cai4-VMware-Virtual-Platform]

cai4-VMware-Virtual-Platform: ERROR: JAVA_HOME is not set and could not be found.


Solutions:

# For the "no HDFS_NAMENODE_USER defined" errors: open the environment configuration

vi /etc/profile

# Then add the following lines

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

# Apply the changes

source /etc/profile


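# For the "Permission denied (publickey,password)" errors: set up passwordless SSH login

exit # log out of the previous ssh session

cd ~/.ssh/ # if this directory does not exist, run ssh localhost once first

ssh-keygen -t rsa # keep pressing Enter at every prompt until the key's randomart image is printed

cat ./id_rsa.pub >> ./authorized_keys # authorize the key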
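Running the cat line more than once leaves duplicate entries in authorized_keys, and sshd may ignore the file if its permissions are too open. A minimal sketch of a repeatable version follows. The helper name authorize_key and the placeholder key are assumptions for illustration, and mode 600 is the commonly recommended setting rather than something the original steps specify.

```shell
# authorize_key PUBKEY_FILE AUTH_FILE: add the public key once, then tighten permissions.
# (authorize_key is a hypothetical helper name; the body runs in a subshell.)
authorize_key() (
  key=$(cat "$1") || exit 1
  touch "$2"
  grep -qxF -- "$key" "$2" || printf '%s\n' "$key" >> "$2"
  chmod 600 "$2"
)

# Demo in a scratch directory with a placeholder key (not a real key).
demo_dir=$(mktemp -d)
echo 'ssh-rsa AAAAB3PLACEHOLDER root@localhost' > "$demo_dir/id_rsa.pub"
authorize_key "$demo_dir/id_rsa.pub" "$demo_dir/authorized_keys"
authorize_key "$demo_dir/id_rsa.pub" "$demo_dir/authorized_keys"   # second call adds nothing
cat "$demo_dir/authorized_keys"
```

On the VM the equivalent call would be authorize_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys, after which ssh localhost should no longer ask for a password.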


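# For the "JAVA_HOME is not set" errors: edit hadoop-env.sh (Hadoop is installed under /opt/module/ in this tutorial)

vim /opt/module/hadoop/etc/hadoop/hadoop-env.sh

# Replace the original JAVA_HOME setting with the absolute path

#export JAVA_HOME=${JAVA_HOME}

export JAVA_HOME=/opt/module/jdk1.8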


III. Installing Hive

3.1 File transfer

Upload apache-hive-3.1.3-bin.tar.gz to the /Downloads directory on the Linux machine.

3.2 Extract the archive

Extract apache-hive-3.1.3-bin.tar.gz into the /opt/module/ directory:

tar -zxvf apache-hive-3.1.3-bin.tar.gz -C /opt/module/


3.3 Rename the directory

Rename apache-hive-3.1.3-bin to hive:

cd /opt/module

mv apache-hive-3.1.3-bin hive


3.4 Edit /etc/profile and add the environment variables

vim /etc/profile

# (1) Add the following

# HIVE_HOME

export HIVE_HOME=/opt/module/hive

export PATH=$PATH:$HIVE_HOME/bin

# (2) Apply the changes

source /etc/profile


3.5 Initialize the metastore database (Derby by default)

cd /opt/module/hive

bin/schematool -dbType derby -initSchema


Error:

Exception in thread "main" java.lang.NoSuchMethodError: com.google.common.base.Preconditions.checkArgument(ZLjava/lang/String;Ljava/lang/Object;)V

at org.apache.hadoop.conf.Configuration.set(Configuration.java:1357)

at org.apache.hadoop.conf.Configuration.set(Configuration.java:1338)

at org.apache.hadoop.mapred.JobConf.setJar(JobConf.java:518)

at org.apache.hadoop.mapred.JobConf.setJarByClass(JobConf.java:536)

at org.apache.hadoop.mapred.JobConf.<init>(JobConf.java:430)

at org.apache.hadoop.hive.conf.HiveConf.initialize(HiveConf.java:5144)

at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:5107)

at org.apache.hive.beeline.HiveSchemaTool.<init>(HiveSchemaTool.java:96)

at org.apache.hive.beeline.HiveSchemaTool.main(HiveSchemaTool.java:1473)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.RunJar.run(RunJar.java:318)

at org.apache.hadoop.util.RunJar.main(RunJar.java:232)


The cause is that Hadoop and Hive ship different versions of the Guava JAR. The two JARs are located at:

/opt/module/hive/lib/guava-19.0.jar

/opt/module/hadoop/share/hadoop/common/lib/guava-27.0-jre.jar

# The fix is to delete the older version and copy the newer one into its directory.

cd /opt/module/hive/lib

rm -f guava-19.0.jar

cp /opt/module/hadoop/share/hadoop/common/lib/guava-27.0-jre.jar .

# Run schematool -dbType derby -initSchema again; the metastore database should now initialize successfully.
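The version numbers above are specific to Hadoop 3.1.3 and Hive 3.1.3. As a hedged sketch for other version combinations, the newer of the two JARs can be picked by version rather than by hard-coded file name. The helper name newest_guava is hypothetical, and the sketch assumes a sort that supports -V (GNU coreutils does).

```shell
# newest_guava FILE...: print the path of the highest-versioned guava JAR among FILEs.
# (newest_guava is a hypothetical helper; it relies on `sort -V` for version ordering.)
newest_guava() {
  for f in "$@"; do
    # Emit "basename<TAB>full path" so sorting is driven by the file name.
    if [ -e "$f" ]; then printf '%s\t%s\n' "${f##*/}" "$f"; fi
  done | sort -V | tail -n 1 | cut -f 2
}

# Demo with empty placeholder files in scratch directories.
hive_lib=$(mktemp -d)
hadoop_lib=$(mktemp -d)
touch "$hive_lib/guava-19.0.jar" "$hadoop_lib/guava-27.0-jre.jar"
newest_guava "$hive_lib"/guava-*.jar "$hadoop_lib"/guava-*.jar
```

On the VM the arguments would be /opt/module/hive/lib/guava-*.jar and /opt/module/hadoop/share/hadoop/common/lib/guava-*.jar. Whichever path is printed is the copy to keep in both locations.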


III. Installing MySQL

1. Install MySQL

1) Install the MySQL server

apt-get install mysql-server


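During installation, the installer may prompt you to create a root password. If it does, choose a secure one and make sure you remember it, because it is needed later.

2) Install the MySQL client

apt-get install mysql-client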


3) Configure MySQL

Run the MySQL security initialization script:

mysql_secure_installation


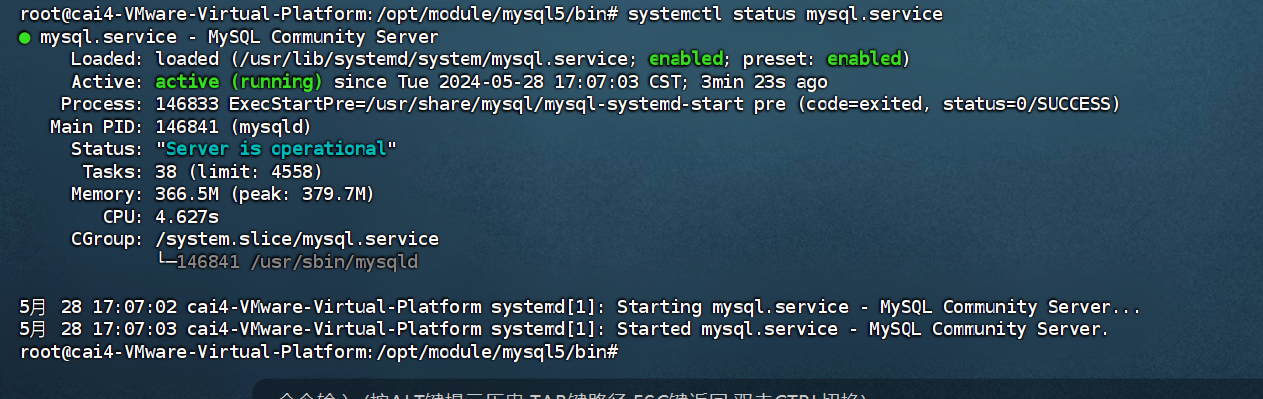

4) Test MySQL

However you installed it, MySQL should already be running. To test it, check its status:

systemctl status mysql.service


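You should see output reporting that the service is active (running).

5) Configure MySQL accounts and the password policy

# Run the following statements inside the mysql client

# Relax the MySQL password policy (MySQL 8 variable names; the MySQL 5.7 plugin uses validate_password_policy and validate_password_length)

set global validate_password.policy=0;

set global validate_password.length=1;

use mysql;

update user set host="%" where user="root";

flush privileges;

ALTER USER 'root'@'%' IDENTIFIED WITH mysql_native_password BY '123456';

flush privileges;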


6) Some useful MySQL service commands

# Enable the MySQL service at boot

systemctl enable mysql.service

# Disable the MySQL service at boot

systemctl disable mysql.service

# Restart the MySQL service

service mysql restart

or

systemctl restart mysql.service

# Edit the MySQL configuration file

vim /etc/mysql/mysql.conf.d/mysqld.cnf


7) Errors

Failed to restart mysqld.service: Unit mysqld.service not found.

"The MySQL server is running with the --skip-grant-tables option so it cannot execute"

Navicat reports error 10061; ERROR 1819 (HY000): Your password does not satisfy the current policy requirements

Solution:

sudo vim /etc/mysql/mysql.conf.d/mysqld.cnf

# In that file, comment out the bind-address line so that MySQL accepts remote connections, then restart MySQL:

# bind-address = 127.0.0.1

sudo systemctl restart mysql.service

mysql -u root -p

use mysql

select host,user from user;

update user set host='%' where user='root';

flush privileges;

grant all privileges on *.* to 'root'@'%';

ALTER USER 'root'@'%' IDENTIFIED WITH mysql_native_password BY 'root_pwd'; # allow root to log in remotely; root_pwd stands for the login password

flush privileges;


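Solution:

# Start MySQL through its init script (on Ubuntu the service is named mysql, not mysqld)

/etc/init.d/mysql start

# If statements are rejected because of --skip-grant-tables, reload the grant tables first, then reset the password

flush privileges;

ALTER USER 'root'@'localhost' IDENTIFIED BY '123456';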


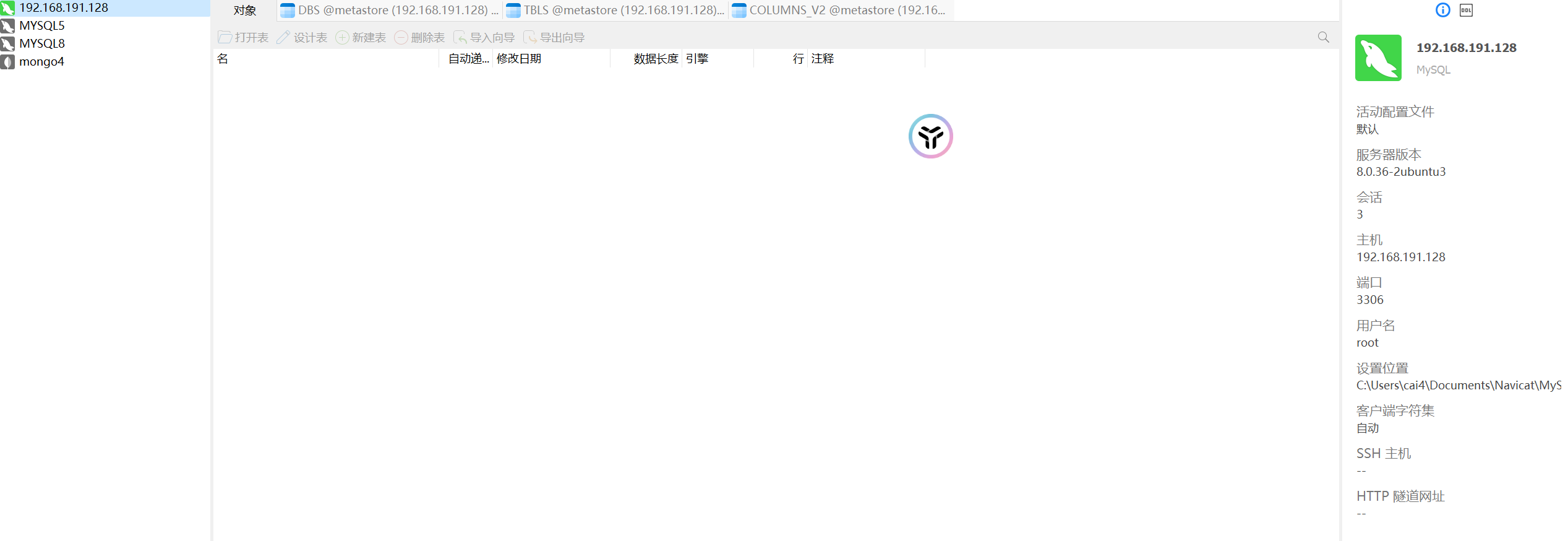

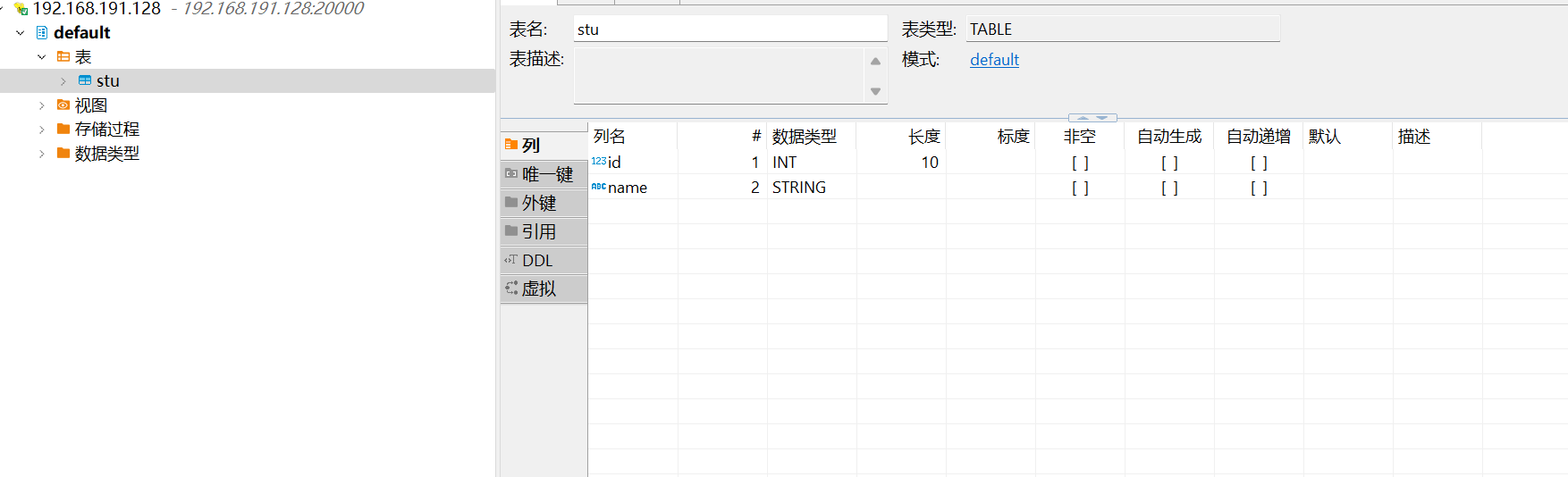

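8) Connecting with Navicat

IV. Storing Hive Metadata in MySQL

1. Configure the metastore on MySQL

1) Create the Hive metastore database

# Log in to MySQL

mysql -uroot -p123456

# Create the Hive metastore database

create database metastore;

quit;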


2) Create the hive-site.xml file in the $HIVE_HOME/conf directory

vim $HIVE_HOME/conf/hive-site.xml

# Add the following content:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- JDBC connection URL -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/metastore?useSSL=false</value>

</property>

<!-- JDBC driver class -->

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<!-- JDBC user name -->

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<!-- JDBC password -->

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

</property>

<!-- Hive's default working directory on HDFS -->

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/opt/module/hive/warehouse</value>

</property>

</configuration>


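3) Initialize the Hive metastore database (now using MySQL to store the metadata)

This step requires the MySQL JDBC driver JAR (the mysql8 JAR from the software list) to be present in $HIVE_HOME/lib.

cd /opt/module/hive

bin/schematool -dbType mysql -initSchema -verbose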


4) Start Hive

hive


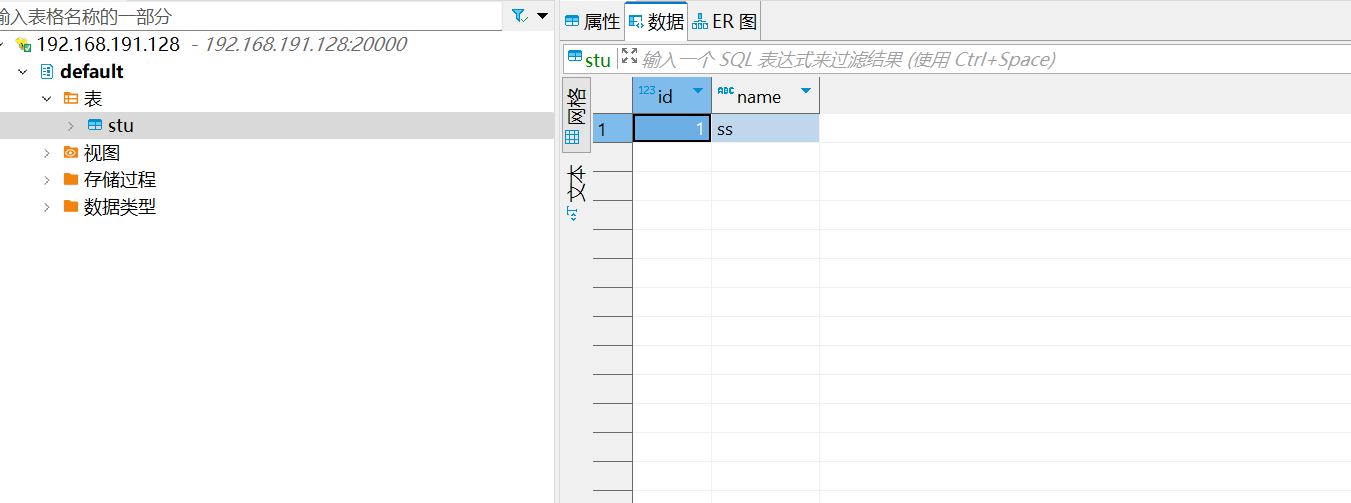

5) Use Hive

show databases;

show tables;

create table stu(id int, name string);

insert into stu values(1,"ss");

select * from stu;


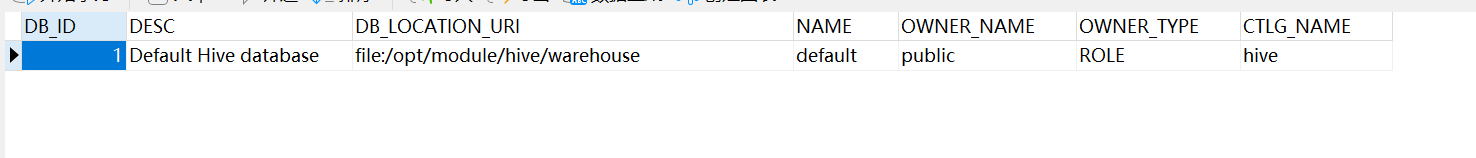

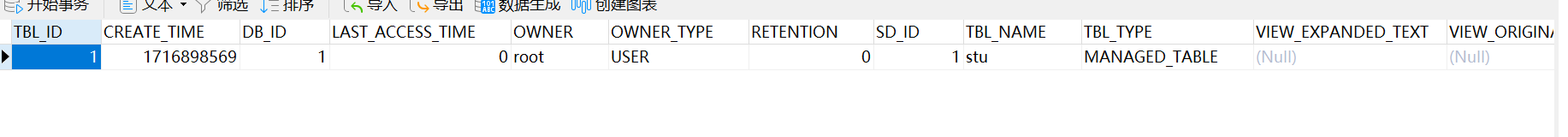

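6) Inspect the metadata in MySQL

- View the database information stored in the metastore (DBS)

- View the table information stored in the metastore (TBLS)

- View the column information for tables stored in the metastore (COLUMNS_V2)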

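V. Deploying Hive Services

5.1 Hadoop-side configuration

HiveServer2's user impersonation feature relies on Hadoop's proxy user mechanism: only a Hadoop proxy user may impersonate other users when accessing the Hadoop cluster. The user that starts HiveServer2 must therefore be configured as a Hadoop proxy user. To do this, edit core-site.xml as shown below. On a multi-node cluster, remember to distribute the file to every machine; this pseudo-distributed setup has only one.

cd $HADOOP_HOME/etc/hadoop

vim core-site.xml

# Add the following configuration:

<!-- Configure Hadoop access permissions so that Hive can reach it -->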

<property>

<name>hadoop.proxyuser.root.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.root.users</name>

<value>*</value>

</property>


5.2 Hive-side configuration

# Check the host name

hostname

# Example output: cai4-VMware-Virtual-Platform

# Change the host name

hostnamectl set-hostname hadoop100

# Update /etc/hosts to match

Add the following configuration to hive-site.xml:

<!-- Host that HiveServer2 binds to -->

<property>

<name>hive.server2.thrift.bind.host</name>

<value>hadoop</value>

</property>

<!-- Port that HiveServer2 listens on -->

<property>

<name>hive.server2.thrift.port</name>

<value>10000</value>

</property>


5.3 Testing

# Start HiveServer2

hive --service hiveserver2

# If you see the error: Error starting HiveServer2 on attempt 1, will retry in 60000ms

# add the following configuration to hive-site.xml:

<property>

<name>hive.server2.active.passive.ha.enable</name>

<value>true</value>

<description>Whether HiveServer2 Active/Passive High Availability be enabled when Hive Interactive sessions are enabled. This will also require hive.server2.support.dynamic.service.discovery to be enabled.</description>

</property>

# Restart the HiveServer2 service:

hive --service hiveserver2

# Connect remotely with the beeline command-line client

beeline -u jdbc:hive2://192.168.191.28:10000 -n root


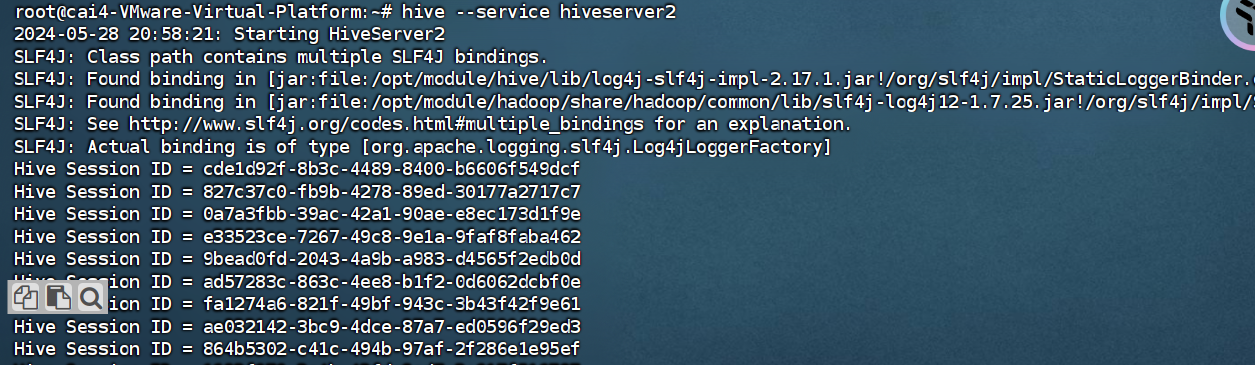


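After hive --service hiveserver2 starts, output like that in the screenshot is normal, and it stays that way until a remote client connects.

# Restart Hadoop (run from /opt/module/hadoop)

sbin/stop-all.sh

sbin/start-all.sh

# Restart Hive

ps -aux | grep hive # find the Hive process ID

kill -9 2323 # replace 2323 with the PID found above

# Start the metastore service

hive --service metastore &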
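The PID 2323 comes from the author's session and will differ on every machine. As a hedged sketch, the PID can be pulled out of the ps listing instead of being read by eye. The helper name hive_pids is hypothetical, and the filter assumes the HiveServer2 process command line contains the text HiveServer2, which should be confirmed against your own ps output.

```shell
# hive_pids: read `ps -aux` output on stdin and print the PID column (field 2)
# of lines that mention HiveServer2, skipping the grep process itself.
# (hive_pids is a hypothetical helper name used only in this sketch.)
hive_pids() {
  awk '/HiveServer2/ && !/grep/ { print $2 }'
}

# Demo with two sample lines shaped like `ps -aux` output.
sample='root 2323 1.2 8.0 java -Xmx256m org.apache.hive.service.server.HiveServer2
root 2400 0.0 0.0 grep --color=auto HiveServer2'
printf '%s\n' "$sample" | hive_pids
```

On the VM this would be used as ps -aux | hive_pids. Trying a plain kill on the printed PID before resorting to kill -9 gives the process a chance to shut down cleanly.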
