Kafka Project Deployment

Author: 小丑西瓜9 | 2024-04-17 05:17:58



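I. Deploying the ZooKeeper + Kafka Cluster

1.1 Install the ZooKeeper cluster

Set up ZooKeeper on every server in the cluster by following the previous article, then continue with the steps below.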

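1.2 Download and install Kafka

```bash
# Assumes kafka_2.13-2.7.1.tgz (Kafka 2.7.1 built for Scala 2.13) is already in /opt/
cd /opt/
tar zxvf kafka_2.13-2.7.1.tgz
mv kafka_2.13-2.7.1 /usr/local/kafka
```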

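1.3 Edit the configuration file

```bash
cd /usr/local/kafka/config/
cp server.properties{,.bak}    # back up the stock file first
vim server.properties
```

Change the following settings. The line numbers refer to the stock server.properties. In a .properties file, # starts a comment only at the beginning of a line; a comment placed after a value would become part of the value, so each comment sits on its own line.

```properties
# Line 21: globally unique broker ID; must differ on every broker, so use broker.id=1 and broker.id=2 on the other machines
broker.id=0
# Line 31: IP and port to listen on; if you set it, each broker must use its own IP (the commented-out default can also be left unchanged)
listeners=PLAINTEXT://192.168.2.33:9092
# Line 42: number of threads the broker uses to handle network requests; normally no need to change
num.network.threads=3
# Line 45: number of threads for disk I/O; should be larger than the number of disks
num.io.threads=8
# Line 48: socket send buffer size (SO_SNDBUF)
socket.send.buffer.bytes=102400
# Line 51: socket receive buffer size (SO_RCVBUF)
socket.receive.buffer.bytes=102400
# Line 54: maximum size of a single request the broker accepts (100 MiB)
socket.request.max.bytes=104857600
# Line 60: where Kafka stores its data (the message log segments); Kafka's own runtime logs also go to /usr/local/kafka/logs by default
log.dirs=/usr/local/kafka/logs
# Line 65: default number of partitions for a new topic; overridden by the partition count given when the topic is created
num.partitions=1
# Line 69: threads per data directory used for log recovery at startup and flushing at shutdown
num.recovery.threads.per.data.dir=1
# Line 103: maximum retention time of segment (data) files, in hours; the default 168 is 7 days, after which segments are deleted
log.retention.hours=168
# Line 110: maximum size of one segment file, 1 GiB by default; a new segment file is created once it is exceeded
log.segment.bytes=1073741824
# Line 123: ZooKeeper cluster addresses
zookeeper.connect=192.168.2.33:2181,192.168.2.77:2181,192.168.2.99:2181
```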

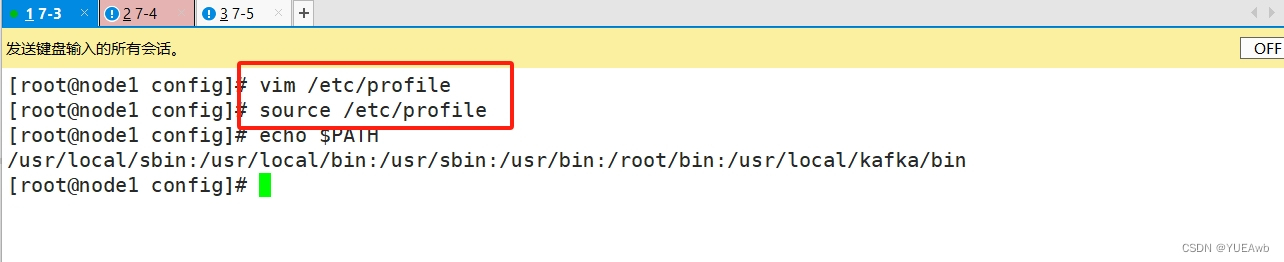
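Since every broker shares the same server.properties except for broker.id (and listeners, if it is set), the per-node edits can also be scripted. The sketch below is one possible approach, not part of the original procedure: the helper name render_broker_config is hypothetical, it assumes the template already has uncommented broker.id= and listeners= lines (for example the file edited on the first broker), and it runs against a throwaway demo file rather than /usr/local/kafka/config.

```bash
#!/usr/bin/env bash
# Sketch: derive a per-broker server.properties from one shared template.
# render_broker_config is a hypothetical helper, not part of Kafka.
set -euo pipefail

# render_broker_config TEMPLATE ID IP
#   Prints TEMPLATE with broker.id and listeners rewritten for one broker.
render_broker_config() {
  local template=$1 id=$2 ip=$3
  sed -e "s/^broker\.id=.*/broker.id=${id}/" \
      -e "s|^listeners=.*|listeners=PLAINTEXT://${ip}:9092|" \
      "$template"
}

# Demo on a temporary template so the sketch runs anywhere.
tmp=$(mktemp -d)
trap 'rm -rf "$tmp"' EXIT
cat > "$tmp/server.properties" <<'EOF'
broker.id=0
listeners=PLAINTEXT://192.168.2.33:9092
log.dirs=/usr/local/kafka/logs
EOF

# Broker N gets broker.id=N and the IP at index N.
ips=(192.168.2.33 192.168.2.77 192.168.2.99)
for i in "${!ips[@]}"; do
  render_broker_config "$tmp/server.properties" "$i" "${ips[$i]}" \
    > "$tmp/server-$i.properties"
done
```

On a real node the call would look like `render_broker_config server.properties 1 192.168.2.77 > server.properties.new`, after which the new file replaces server.properties on that broker. Every other setting is copied unchanged, so the three files cannot drift apart.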

1.4 Set the environment variables

```bash
vim /etc/profile
# Append these two lines:
export KAFKA_HOME=/usr/local/kafka
export PATH=$PATH:$KAFKA_HOME/bin

source /etc/profile
```
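Running these steps a second time appends the same export lines to /etc/profile again. A small guard avoids the duplicates. This is a sketch with a hypothetical helper, append_once, demonstrated on a temporary file that stands in for /etc/profile:

```bash
#!/usr/bin/env bash
# Sketch: append a line to a profile file only if that exact line is missing,
# so re-running the setup does not stack duplicate exports.
# append_once is a hypothetical helper name.
set -euo pipefail

append_once() {
  local line=$1 file=$2
  # -q quiet, -x whole-line match, -F fixed string (no regex);
  # a missing file makes grep fail, so the line is appended and the file created
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

profile=$(mktemp)   # stand-in for /etc/profile in this demo
trap 'rm -f "$profile"' EXIT

# Run twice: the second pass finds both lines and appends nothing.
for _ in 1 2; do
  append_once 'export KAFKA_HOME=/usr/local/kafka' "$profile"
  append_once 'export PATH=$PATH:$KAFKA_HOME/bin' "$profile"   # single quotes keep $PATH literal
done
```

On the server itself, run `append_once` with each export line and `/etc/profile` as root, then `source /etc/profile` as before.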

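1.5 Create the Kafka startup script, enable it at boot, and start Kafka

Create /etc/init.d/kafka (vim /etc/init.d/kafka) with the following content:

```bash
#!/bin/bash
# chkconfig: 2345 22 88
# description: Kafka Service Control Script

KAFKA_HOME='/usr/local/kafka'

# The broker is a Java process whose main class is kafka.Kafka; matching on that
# avoids counting ZooKeeper or other processes that merely have "kafka" in their path
is_running() {
    pgrep -f 'kafka\.Kafka' >/dev/null
}

case "$1" in
    start)
        echo "---------- Starting Kafka ------------"
        "${KAFKA_HOME}/bin/kafka-server-start.sh" -daemon "${KAFKA_HOME}/config/server.properties"
        ;;
    stop)
        echo "---------- Stopping Kafka ------------"
        "${KAFKA_HOME}/bin/kafka-server-stop.sh"
        ;;
    restart)
        "$0" stop
        # kafka-server-stop.sh returns right after signalling the broker; wait until it has exited
        while is_running; do sleep 1; done
        "$0" start
        ;;
    status)
        echo "---------- Kafka status ------------"
        if is_running; then
            echo "kafka is running"
        else
            echo "kafka is not running"
        fi
        ;;
    *)
        echo "Usage: $0 {start|stop|restart|status}"
        exit 1
        ;;
esac
```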

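1.6 Make the script executable and register it as a system service

```bash
chmod +x /etc/init.d/kafka
chkconfig --add kafka    # enables it for the runlevels in the script's chkconfig header (2345)
```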

1.7 Start Kafka on each node

```bash
service kafka start
```
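To confirm that each broker actually came up and is listening, one option is a plain TCP check against port 9092 from any node. A minimal sketch, assuming bash (for its /dev/tcp redirection) and GNU coreutils timeout are available; the helper names port_open and check_brokers are hypothetical:

```bash
#!/usr/bin/env bash
# Sketch: check whether TCP ports accept connections, using bash's /dev/tcp
# redirection and coreutils `timeout` to bound each attempt.
# port_open and check_brokers are hypothetical helper names.
set -uo pipefail

# port_open HOST PORT [SECONDS]: succeeds if a TCP connection can be opened.
port_open() {
  local host=$1 port=$2 secs=${3:-2}
  timeout "$secs" bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# check_brokers PORT HOST...: reports each host, returns 1 if any is unreachable.
check_brokers() {
  local port=$1; shift
  local host rc=0
  for host in "$@"; do
    if port_open "$host" "$port"; then
      echo "${host}:${port} open"
    else
      echo "${host}:${port} NOT reachable"
      rc=1
    fi
  done
  return "$rc"
}
```

For the brokers configured in 1.3 this would be `check_brokers 9092 192.168.2.33 192.168.2.77 192.168.2.99`. An open port only shows that something is listening; `service kafka status` on each node and the topic commands below confirm the broker itself.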

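1.8 Kafka command-line operations

The examples below use nodes at 192.168.91.103, 192.168.91.104 and 192.168.91.105 (the configuration in 1.3 used 192.168.2.x addresses); substitute the addresses of your own ZooKeeper and Kafka nodes.

1. Create a topic

```bash
kafka-topics.sh --create --zookeeper 192.168.91.103:2181,192.168.91.104:2181,192.168.91.105:2181 --replication-factor 2 --partitions 3 --topic test
```

- --zookeeper: address of the ZooKeeper cluster; separate multiple addresses with commas (one address is usually enough). Kafka 2.2 added --bootstrap-server (broker addresses) as the preferred option and Kafka 3.0 removed --zookeeper, but it still works with the 2.7.1 release installed here.
- --replication-factor: number of replicas of each partition; 1 means a single copy, 2 is recommended, and it cannot exceed the number of brokers
- --partitions: number of partitions
- --topic: topic name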
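Every command in this section repeats the same three-node host list, once with port 2181 for ZooKeeper and once with port 9092 for the brokers, and retyping those lists by hand is error-prone. One way to build them once per shell session is sketched below; join_hosts, NODES, ZK and BROKERS are illustrative names, not Kafka settings:

```bash
#!/usr/bin/env bash
# Sketch: build the comma-separated host:port lists that the kafka-*.sh tools
# expect from one array of node IPs. All names here are illustrative.
set -euo pipefail

# join_hosts PORT HOST... -> "HOST1:PORT,HOST2:PORT,..."
join_hosts() {
  local port=$1; shift
  local out="" host
  for host in "$@"; do
    # ${out:+${out},} expands to "previous," only once out is non-empty
    out="${out:+${out},}${host}:${port}"
  done
  printf '%s\n' "$out"
}

NODES=(192.168.91.103 192.168.91.104 192.168.91.105)
ZK=$(join_hosts 2181 "${NODES[@]}")        # for --zookeeper
BROKERS=$(join_hosts 9092 "${NODES[@]}")   # for --broker-list / --bootstrap-server
```

The commands then become, for example, `kafka-topics.sh --create --zookeeper "$ZK" --replication-factor 2 --partitions 3 --topic test` and `kafka-console-consumer.sh --bootstrap-server "$BROKERS" --topic test --from-beginning`.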

2. List all topics on the server

```bash
kafka-topics.sh --list --zookeeper 192.168.91.103:2181,192.168.91.104:2181,192.168.91.105:2181
```

3. Show the details of a topic

```bash
kafka-topics.sh --describe --zookeeper 192.168.91.103:2181,192.168.91.104:2181,192.168.91.105:2181 --topic test
```

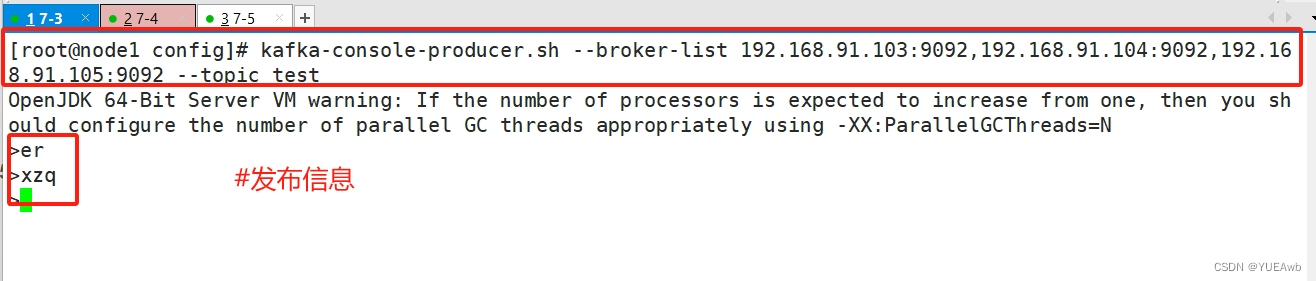

4. Publish messages

```bash
kafka-console-producer.sh --broker-list 192.168.91.103:9092,192.168.91.104:9092,192.168.91.105:9092 --topic test
```

Type one message per line; press Ctrl+C to exit.

5. Consume messages

```bash
kafka-console-consumer.sh --bootstrap-server 192.168.91.103:9092,192.168.91.104:9092,192.168.91.105:9092 --topic test --from-beginning
```

- --from-beginning: read every message already stored in the topic, not only messages produced after the consumer starts

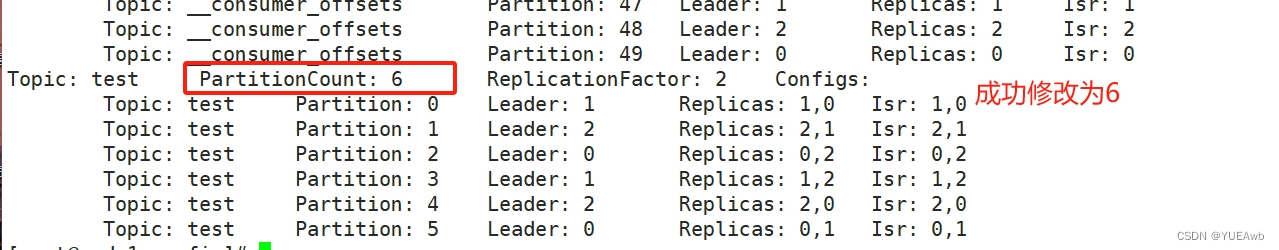

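6. Change the number of partitions

```bash
kafka-topics.sh --zookeeper 192.168.91.103:2181,192.168.91.104:2181,192.168.91.105:2181 --alter --topic test --partitions 6
```

The partition count can only be increased; Kafka rejects a request to reduce it.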
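A script that changes a topic's partition count may want to read the current value first and skip the --alter when the new count would not be larger. A sketch, assuming the summary-line format of Kafka 2.x `kafka-topics.sh --describe` output (`Topic: ...<TAB>PartitionCount: N<TAB>...`); the sample text in the demo is typed by hand to match that format, and partition_count and can_grow are hypothetical helper names:

```bash
#!/usr/bin/env bash
# Sketch: extract PartitionCount from `kafka-topics.sh --describe` output and
# allow --alter only when the requested count is larger.
# Assumes the Kafka 2.x summary line "Topic: X<TAB>PartitionCount: N<TAB>...".
set -euo pipefail

# partition_count: reads describe output on stdin, prints the first PartitionCount value.
partition_count() {
  sed -n 's/.*PartitionCount: *\([0-9][0-9]*\).*/\1/p' | head -n 1
}

# can_grow CURRENT REQUESTED: succeeds only if REQUESTED > CURRENT.
can_grow() {
  [ "$2" -gt "$1" ]
}

# Hand-written sample in the assumed format (summary line + one partition line).
sample=$'Topic: test\tPartitionCount: 3\tReplicationFactor: 2\tConfigs: segment.bytes=1073741824\n\tTopic: test\tPartition: 0\tLeader: 1\tReplicas: 1,2\tIsr: 1,2'
current=$(printf '%s\n' "$sample" | partition_count)
```

Against the cluster this would be `current=$(kafka-topics.sh --describe --zookeeper 192.168.91.103:2181 --topic test | partition_count)`, followed by the --alter command only if `can_grow "$current" 6` succeeds.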

7. Delete a topic

```bash
kafka-topics.sh --delete --zookeeper 192.168.91.103:2181,192.168.91.104:2181,192.168.91.105:2181 --topic test
```

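II. Deploying Filebeat + Kafka + ELK

2.1 Deploy the ZooKeeper + Kafka cluster

Reuse the cluster built in Part I.

2.2 Deploy Filebeat

For setting up ELK itself, see the earlier article; the steps below continue from there.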

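```bash
cd /usr/local/filebeat
vim filebeat.yml
```

```yaml
filebeat.prospectors:        # named filebeat.inputs from Filebeat 6.3 on
- type: log
  enabled: true
  paths:
    - /var/log/messages
    - /var/log/*.log
......
# Add the output to Kafka. Filebeat allows only one output,
# so comment out the default output.elasticsearch section.
output.kafka:
  enabled: true
  hosts: ["192.168.91.103:9092","192.168.91.104:9092","192.168.91.105:9092"]   # Kafka cluster
  topic: "filebeat_test"   # Kafka topic to write to
```

```bash
# Start filebeat in the foreground, logging to stderr (-e), with this config file (-c)
./filebeat -e -c filebeat.yml
```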
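A common mistake at this step is leaving the default output.elasticsearch section enabled next to output.kafka; Filebeat accepts only one output and refuses to start otherwise. Filebeat's own `./filebeat test config -c filebeat.yml` subcommand (Filebeat 6.0 and later) is the authoritative validation. The grep-based sketch below is only a quick pre-flight that lists the enabled top-level output.* keys; it assumes the dotted `output.<name>:` form used in the stock filebeat.yml, ignores the nested `output:` form, and enabled_outputs is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Sketch: list the uncommented, unindented "output.<name>:" keys in a filebeat.yml.
# Only the dotted form is detected; enabled_outputs is a hypothetical helper.
set -euo pipefail

enabled_outputs() {
  # -o prints only the matched key; lines starting with '#' never match '^output'
  grep -oE '^output\.[A-Za-z_]+:' "$1" | tr -d ':' || true
}

# Demo config: Elasticsearch output commented out, Kafka output enabled.
demo=$(mktemp)
trap 'rm -f "$demo"' EXIT
cat > "$demo" <<'EOF'
#output.elasticsearch:
#  hosts: ["localhost:9200"]
output.kafka:
  enabled: true
  topic: "filebeat_test"
EOF

enabled_outputs "$demo"
```

Against the real file, `enabled_outputs /usr/local/filebeat/filebeat.yml` should print exactly one line, output.kafka.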

2.3 Deploy ELK: create a new Logstash configuration file on the node running Logstash

```bash
cd /etc/logstash/conf.d/
vim filebeat.conf
```

```
input {
    kafka {
        bootstrap_servers => "192.168.91.103:9092,192.168.91.104:9092,192.168.91.105:9092"
        topics => ["filebeat_test"]
        group_id => "test123"
        auto_offset_reset => "earliest"
        codec => "json"    # Filebeat publishes events to Kafka as JSON; decode them into fields
    }
}

output {
    elasticsearch {
        hosts => ["192.168.91.102:9200"]
        index => "filebeat_test-%{+YYYY.MM.dd}"
    }
    stdout {
        codec => rubydebug
    }
}
```

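2.4 Start Logstash

```bash
# Run from /etc/logstash/conf.d/ so the relative path resolves
# (with the RPM package the binary is /usr/share/logstash/bin/logstash)
logstash -f filebeat.conf
```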
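Once Logstash is running, events should appear in a daily index in Elasticsearch. Logstash fills `%{+YYYY.MM.dd}` from the event's @timestamp in UTC, so in a time zone such as UTC+8 the events from the first hours of the local day still land in the index carrying the previous date. The sketch below computes the expected index name the same way; it assumes GNU date (for `-d @EPOCH`), and index_for is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Sketch: compute the daily index written by index => "filebeat_test-%{+YYYY.MM.dd}".
# Logstash formats that date in UTC, hence `date -u`. Requires GNU date.
set -euo pipefail

# index_for EPOCH_SECONDS -> filebeat_test-YYYY.MM.DD (UTC calendar date)
index_for() {
  printf 'filebeat_test-%s\n' "$(date -u -d "@$1" +%Y.%m.%d)"
}

today_index=$(index_for "$(date -u +%s)")
echo "$today_index"
```

With Elasticsearch at 192.168.91.102 as configured above, `curl -s "http://192.168.91.102:9200/_cat/indices/${today_index}?v"` should list the index once the first events have arrived.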

Source: https://www.wpsshop.cn/w/小丑西瓜9/article/detail/438389?site (contributed by a user of the wpsshop blog; copyright belongs to the original author).
