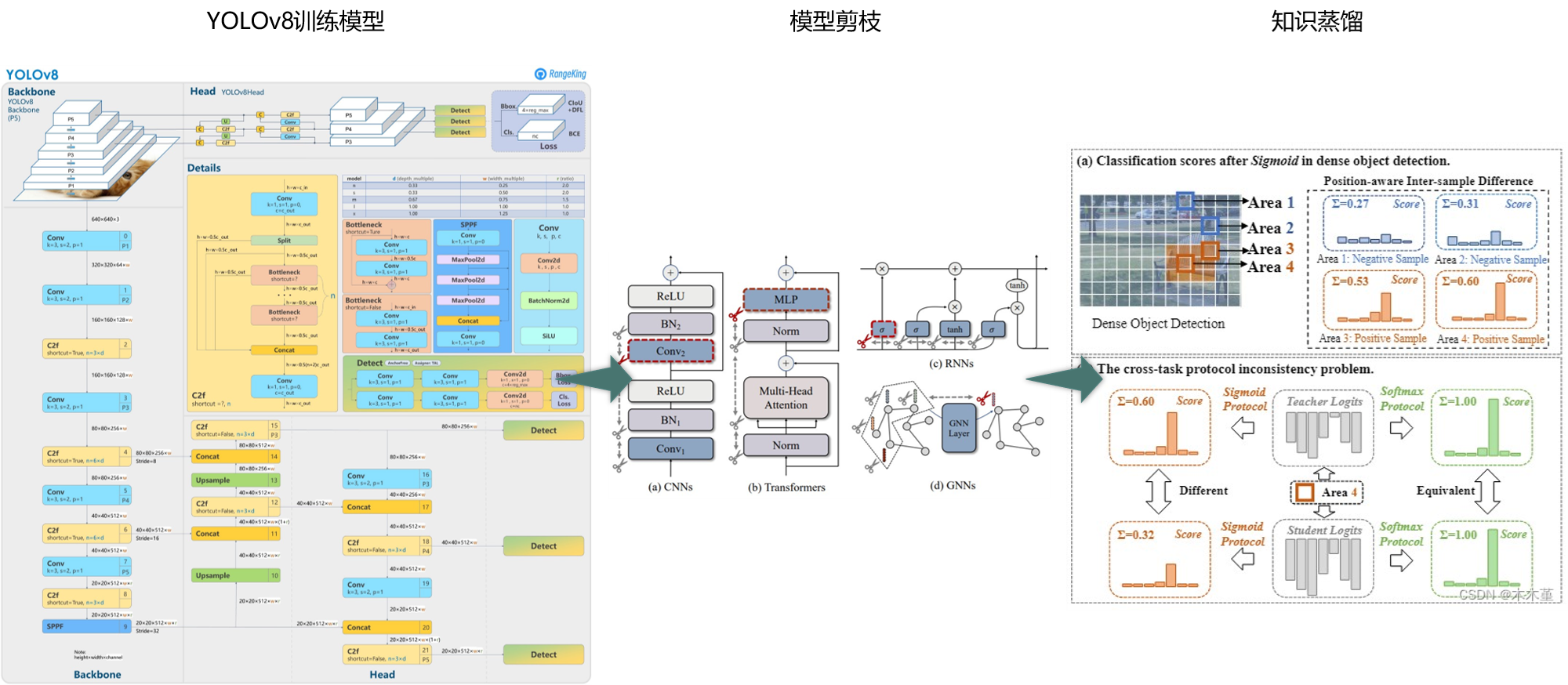

Pruning + Knowledge Distillation for YOLOv8 = Lossless Model Compression

1. Experimental results (custom dataset)

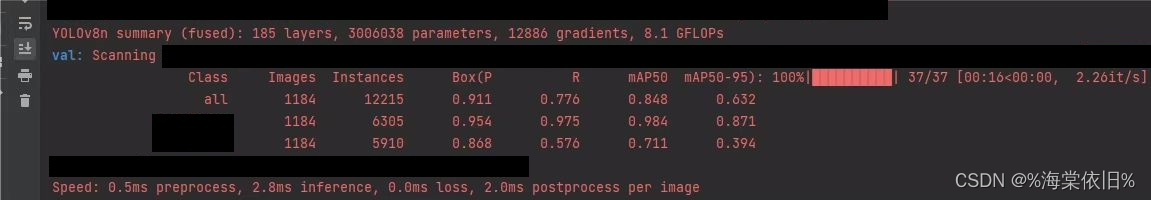

(1) YOLOv8n pruning + distillation (YOLOv8s as the teacher model):

Base: mAP 84.8%, Parameters 3.01M, GFLOPs 8.1, FPS 188.7

Pruned (fine-tuned with distillation): mAP 84.9%, Parameters 1.67M, GFLOPs 5.0, FPS 217.4

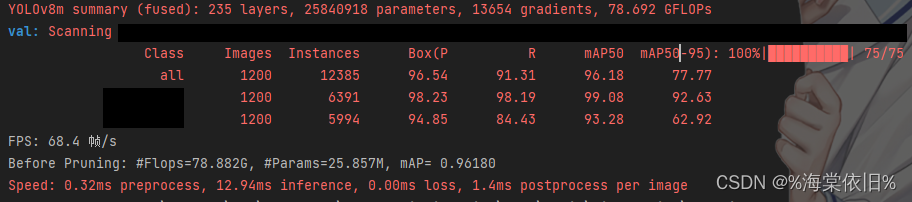

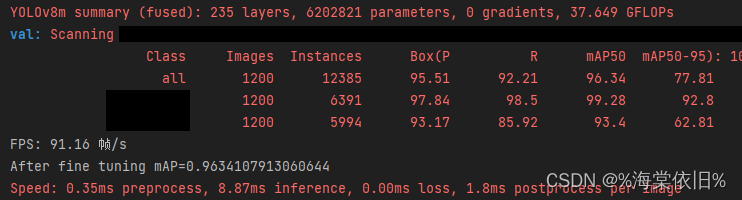

(2) YOLOv8m pruning + distillation (YOLOv8m self-distillation):

Base: mAP 96.18%, Parameters 25.84M, GFLOPs 78.692, FPS 68.4

Pruned (fine-tuned with distillation): mAP 96.34%, Parameters 6.20M, GFLOPs 37.649, FPS 91.2
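To make the reported numbers easier to compare, the relative changes can be computed directly from them. The following is a small sketch; the function and dictionary names are illustrative and not part of the original project.

```python
def relative_change(base: float, pruned: float) -> float:
    """Percentage change from base to pruned (negative means a reduction)."""
    return (pruned - base) / base * 100.0


# Figures copied from the results reported in this post.
RESULTS = {
    "YOLOv8n (teacher: YOLOv8s)": {
        "base": {"mAP": 84.8, "params_M": 3.01, "GFLOPs": 8.1, "FPS": 188.7},
        "pruned": {"mAP": 84.9, "params_M": 1.67, "GFLOPs": 5.0, "FPS": 217.4},
    },
    "YOLOv8m (self-distillation)": {
        "base": {"mAP": 96.18, "params_M": 25.84, "GFLOPs": 78.692, "FPS": 68.4},
        "pruned": {"mAP": 96.34, "params_M": 6.20, "GFLOPs": 37.649, "FPS": 91.2},
    },
}


def summarize(results):
    """Return one summary row per model with mAP delta and relative changes."""
    rows = []
    for name, r in results.items():
        base, pruned = r["base"], r["pruned"]
        rows.append({
            "model": name,
            # mAP difference in percentage points, not a relative change.
            "mAP_delta_points": round(pruned["mAP"] - base["mAP"], 2),
            "params_change_pct": round(relative_change(base["params_M"], pruned["params_M"]), 1),
            "gflops_change_pct": round(relative_change(base["GFLOPs"], pruned["GFLOPs"]), 1),
            "fps_change_pct": round(relative_change(base["FPS"], pruned["FPS"]), 1),
        })
    return rows


if __name__ == "__main__":
    for row in summarize(RESULTS):
        print(row)
```

By this calculation, the parameter count drops by roughly 44.5% for YOLOv8n and 76.0% for YOLOv8m, while mAP rises slightly in both cases.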

2. Supported methods

(1) Pruning methods:

l1: https://arxiv.org/abs/1608.08710

lamp: https://arxiv.org/abs/2010.07611

slim: https://arxiv.org/abs/1708.06519

group_norm: https://openaccess.thecvf.com/content/CVPR2023/html/Fang_DepGraph_Towards_Any_Structural_Pruning_CVPR_2023_paper.html

group_taylor: https://openaccess.thecvf.com/content_CVPR_2019/papers/Molchanov_Importance_Estimation_for_Neural_Network_Pruning_CVPR_2019_paper.pdf
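As an illustration of the simplest criterion in this list, the l1 method ranks convolution filters by the L1 norm of their weights and removes the lowest-ranked ones. The sketch below shows only that ranking step in plain Python. It is not the project's implementation: the function names and the flat-list weight layout are assumptions made for the example, and a real pruner must also remove the matching input channels in downstream layers.

```python
def l1_filter_norms(filters):
    """L1 norm of each filter; each filter is a flat list of its weights."""
    return [sum(abs(w) for w in f) for f in filters]


def select_filters_to_prune(filters, prune_ratio):
    """Indices of the filters with the smallest L1 norms."""
    if not 0.0 <= prune_ratio < 1.0:
        raise ValueError("prune_ratio must be in [0, 1)")
    norms = l1_filter_norms(filters)
    n_prune = int(len(filters) * prune_ratio)
    # Sort filter indices by ascending norm and take the weakest n_prune.
    order = sorted(range(len(filters)), key=lambda i: norms[i])
    return sorted(order[:n_prune])


def prune_filters(filters, prune_ratio):
    """Return the filters that survive pruning, in their original order."""
    drop = set(select_filters_to_prune(filters, prune_ratio))
    return [f for i, f in enumerate(filters) if i not in drop]


if __name__ == "__main__":
    # Four toy filters with two weights each (hypothetical values).
    toy = [[0.5, -0.5], [0.01, 0.02], [1.0, 2.0], [-0.1, 0.1]]
    print(select_filters_to_prune(toy, 0.5))
    print(prune_filters(toy, 0.5))
```

After pruning, the smaller network is fine-tuned to recover accuracy; in this post that fine-tuning is done with knowledge distillation.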

(2) Knowledge distillation:

Logits distillation:

(Bridging Cross-task Protocol Inconsistency for Distillation in Dense Object Detection) https://arxiv.org/pdf/2308.14286.pdf

CrossKD (Cross-Head Knowledge Distillation for Dense Object Detection) https://arxiv.org/abs/2306.11369

NKD (From Knowledge Distillation to Self-Knowledge Distillation: A Unified Approach with Normalized Loss and Customized Soft Labels) https://arxiv.org/abs/2303.13005

DKD (Decoupled Knowledge Distillation) https://arxiv.org/pdf/2203.08679.pdf

LD (Localization Distillation for Dense Object Detection) https://arxiv.org/abs/2102.12252

WSLD (Rethinking Soft Labels for Knowledge Distillation: A Bias-Variance Tradeoff Perspective) https://arxiv.org/pdf/2102.00650.pdf

Distilling the Knowledge in a Neural Network https://arxiv.org/pdf/1503.02531.pdf

RKD (Relational Knowledge Distillation) http://arxiv.org/pdf/1904.05068
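The logits-based methods above build on the classic soft-label loss from "Distilling the Knowledge in a Neural Network": both teacher and student logits are softened with a temperature, and the student is trained to match the teacher's distribution. A minimal plain-Python sketch of that loss follows. The function names and the default temperature are assumptions for illustration, and the project's detection-specific variants differ in their details.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax, computed stably by subtracting the max."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(student_logits, teacher_logits, temperature=4.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    if len(student_logits) != len(teacher_logits):
        raise ValueError("student and teacher must have the same number of classes")
    p_t = softmax(teacher_logits, temperature)
    p_s = softmax(student_logits, temperature)
    kl = sum(
        pt * (math.log(pt) - math.log(ps))
        for pt, ps in zip(p_t, p_s)
        if pt > 0.0
    )
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return temperature ** 2 * kl


if __name__ == "__main__":
    teacher = [1.0, 2.0, 3.0]
    print(kd_loss(teacher, teacher))          # identical logits give zero loss
    print(kd_loss([3.0, 2.0, 1.0], teacher))  # mismatched logits give a positive loss
```

In training, this term is usually added to the ordinary task loss with a weighting factor.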

Feature distillation:

CWD (Channel-wise Knowledge Distillation for Dense Prediction) https://arxiv.org/pdf/2011.13256.pdf

MGD (Masked Generative Distillation) https://arxiv.org/abs/2205.01529

FGD (Focal and Global Knowledge Distillation for Detectors) https://arxiv.org/abs/2111.11837

FSP (A Gift from Knowledge Distillation: Fast Optimization, Network Minimization and Transfer Learning) https://openaccess.thecvf.com/content_cvpr_2017/papers/Yim_A_Gift_From_CVPR_2017_paper.pdf

PKD (General Distillation Framework for Object Detectors via Pearson Correlation Coefficient) https://arxiv.org/abs/2207.02039

VID (Variational Information Distillation for Knowledge Transfer) https://arxiv.org/pdf/1904.05835.pdf

Mimic (Quantization Mimic Towards Very Tiny CNN for Object Detection) https://arxiv.org/abs/1805.02152
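To give a sense of how feature distillation differs from logits distillation, here is a rough plain-Python sketch of the idea behind CWD: each channel of a feature map is turned into a probability distribution over spatial positions, and the student's distribution is pulled toward the teacher's, channel by channel. The names and the list-of-lists feature layout are assumptions for the example. The sketch also assumes teacher and student already have the same number of channels, whereas in practice a small adaptation layer is typically used to align them.

```python
import math


def spatial_softmax(channel, temperature=1.0):
    """Softmax over all spatial positions of one channel (flat list)."""
    scaled = [v / temperature for v in channel]
    m = max(scaled)
    exps = [math.exp(v - m) for v in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cwd_loss(student_feat, teacher_feat, temperature=1.0):
    """Channel-wise KL(teacher || student), averaged over channels, times T^2.

    Each feature map is a list of channels; each channel is a flat list of
    activations of length H*W.
    """
    if len(student_feat) != len(teacher_feat):
        raise ValueError("channel counts must match (align them first)")
    total = 0.0
    for s_ch, t_ch in zip(student_feat, teacher_feat):
        if len(s_ch) != len(t_ch):
            raise ValueError("spatial sizes must match")
        p_t = spatial_softmax(t_ch, temperature)
        p_s = spatial_softmax(s_ch, temperature)
        total += sum(
            pt * (math.log(pt) - math.log(ps))
            for pt, ps in zip(p_t, p_s)
            if pt > 0.0
        )
    return temperature ** 2 * total / len(teacher_feat)


if __name__ == "__main__":
    # Two channels, four spatial positions each (hypothetical activations).
    teacher = [[0.1, 0.2, 3.0, 0.1], [1.0, 1.0, 1.0, 1.0]]
    student = [[2.0, 0.2, 0.1, 0.1], [1.0, 1.0, 1.0, 1.0]]
    print(cwd_loss(teacher, teacher))
    print(cwd_loss(student, teacher))
```

Because the softmax is taken per channel, the loss emphasizes where each channel is most active rather than the absolute activation scale.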

Reproducing this code took considerable effort, so it is available for a fee.