Spark Lab 1, RDD Case Study: Word Count

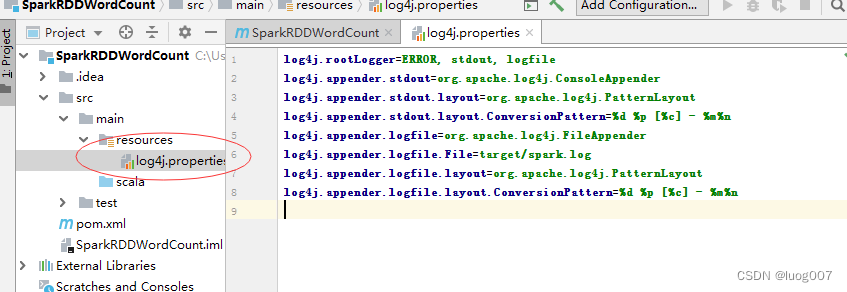

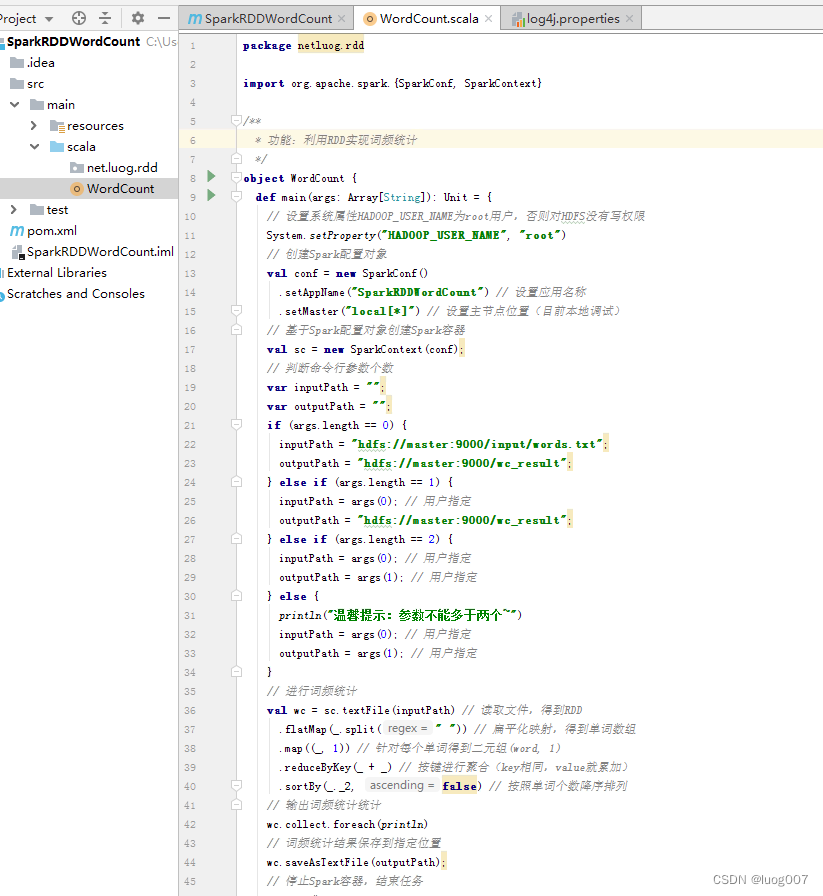

1. Preparation

1.1 Start the Spark cluster

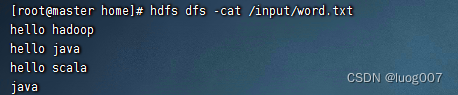

1.2 Edit the word.txt file and upload it to HDFS

2. Create the project

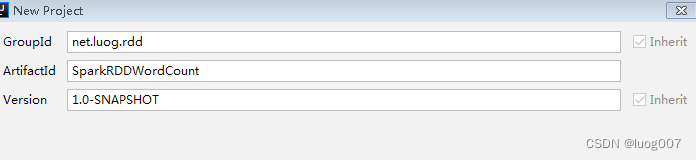

2.1 Create the project

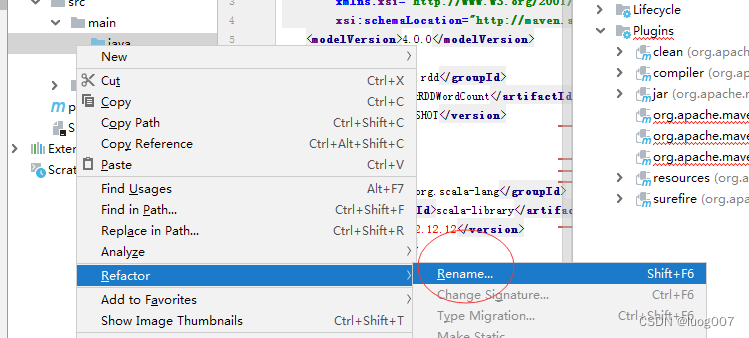

2.2 Rename the folder

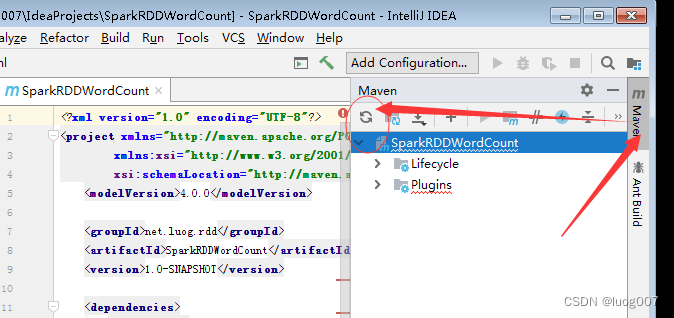

2.3 Configure the dependencies in pom.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0
             http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>
        <groupId>net.luog.rdd</groupId>
        <artifactId>SparkRDDWordCount</artifactId>
        <version>1.0-SNAPSHOT</version>
        <dependencies>
            <dependency>
                <groupId>org.scala-lang</groupId>
                <artifactId>scala-library</artifactId>
                <version>2.12.15</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-core_2.12</artifactId>
                <version>2.4.4</version>
            </dependency>
        </dependencies>
        <build>
            <sourceDirectory>src/main/scala</sourceDirectory>
            <plugins>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-assembly-plugin</artifactId>
                    <version>3.3.0</version>
                    <configuration>
                        <descriptorRefs>
                            <descriptorRef>jar-with-dependencies</descriptorRef>
                        </descriptorRefs>
                    </configuration>
                    <executions>
                        <execution>
                            <id>make-assembly</id>
                            <phase>package</phase>
                            <goals>
                                <goal>single</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <plugin>
                    <groupId>net.alchim31.maven</groupId>
                    <artifactId>scala-maven-plugin</artifactId>
                    <version>3.3.2</version>
                    <executions>
                        <execution>
                            <id>scala-compile-first</id>
                            <phase>process-resources</phase>
                            <goals>
                                <goal>add-source</goal>
                                <goal>compile</goal>
                            </goals>
                        </execution>
                        <execution>
                            <id>scala-test-compile</id>
                            <phase>process-test-resources</phase>
                            <goals>
                                <goal>testCompile</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
            </plugins>
        </build>
    </project>

2.4 Create the logging properties file log4j.properties in the resources folder

    log4j.rootLogger=ERROR, stdout, logfile
    log4j.appender.stdout=org.apache.log4j.ConsoleAppender
    log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
    log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n
    log4j.appender.logfile=org.apache.log4j.FileAppender
    log4j.appender.logfile.File=target/spark.log
    log4j.appender.logfile.layout=org.apache.log4j.PatternLayout
    log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

3. Create the word count singleton object

3.1 Create the WordCount singleton object in the net.luog.rdd package

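    package net.luog.rdd

    import org.apache.spark.{SparkConf, SparkContext}

    /**
     * Purpose: word count implemented with RDDs
     */
    object WordCount {
      def main(args: Array[String]): Unit = {
        // Set the HADOOP_USER_NAME system property to root; otherwise there is no write permission on HDFS
        System.setProperty("HADOOP_USER_NAME", "root")
        // Create the Spark configuration object
        val conf = new SparkConf()
          .setAppName("SparkRDDWordCount") // application name
          .setMaster("local[*]") // master URL (local mode for debugging); a master set here overrides spark-submit's --master
        // Create the Spark context from the configuration object
        val sc = new SparkContext(conf)
        // Choose the input and output paths from the number of command-line arguments
        var inputPath = ""
        var outputPath = ""
        if (args.length == 0) {
          inputPath = "hdfs://master:9000/input/word.txt"
          outputPath = "hdfs://master:9000/wc_result"
        } else if (args.length == 1) {
          inputPath = args(0) // user-specified
          outputPath = "hdfs://master:9000/wc_result"
        } else if (args.length == 2) {
          inputPath = args(0) // user-specified
          outputPath = args(1) // user-specified
        } else {
          println("Note: no more than two arguments are allowed; extra arguments are ignored")
          inputPath = args(0) // user-specified
          outputPath = args(1) // user-specified
        }
        // Run the word count
        val wc = sc.textFile(inputPath) // read the file into an RDD of lines
          .flatMap(_.split(" ")) // split each line on spaces and flatten into an RDD of words
          .map((_, 1)) // map each word to the pair (word, 1)
          .reduceByKey(_ + _) // aggregate by key (values with the same key are summed)
          .sortBy(_._2, ascending = false) // sort by count in descending order
        // Print the word count results
        wc.collect().foreach(println)
        // Save the word count results to the output location
        wc.saveAsTextFile(outputPath)
        // Stop the Spark context to end the job
        sc.stop()
      }
    }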
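
To see what each step of the chain produces without a cluster, the same sequence can be traced on an ordinary Scala collection. The following is only a sketch under stated assumptions: the object name `WordCountLocalSketch` and the sample lines are made up for illustration, and `groupBy` followed by a sum stands in for `reduceByKey`, which exists on pair RDDs but not on plain collections.

```scala
object WordCountLocalSketch {
  // Hypothetical sample input; the real job reads its lines from HDFS
  val sampleLines: Seq[String] = Seq("hello spark", "hello scala", "hello world spark")

  def countWords(lines: Seq[String]): Seq[(String, Int)] =
    lines
      .flatMap(_.split(" "))   // flatten the lines into a sequence of words
      .map((_, 1))             // pair every word with the count 1
      .groupBy(_._1)           // group the pairs by word (stand-in for reduceByKey)
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) }
      .toSeq
      // descending by count; ties broken alphabetically so the order is deterministic
      .sortBy { case (word, count) => (-count, word) }

  def main(args: Array[String]): Unit =
    countWords(sampleLines).foreach(println)
}
```

Unlike the RDD version, this sketch breaks ties between equal counts alphabetically; the Spark job makes no such guarantee about the order of words that occur equally often.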

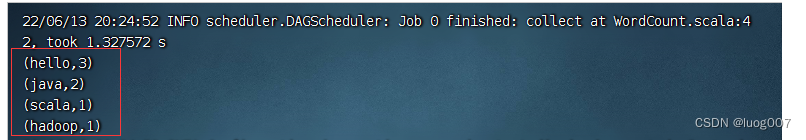

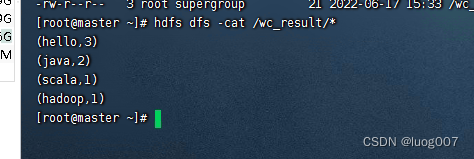

3.2 Run the program locally and check the results

Then check the result files on HDFS.

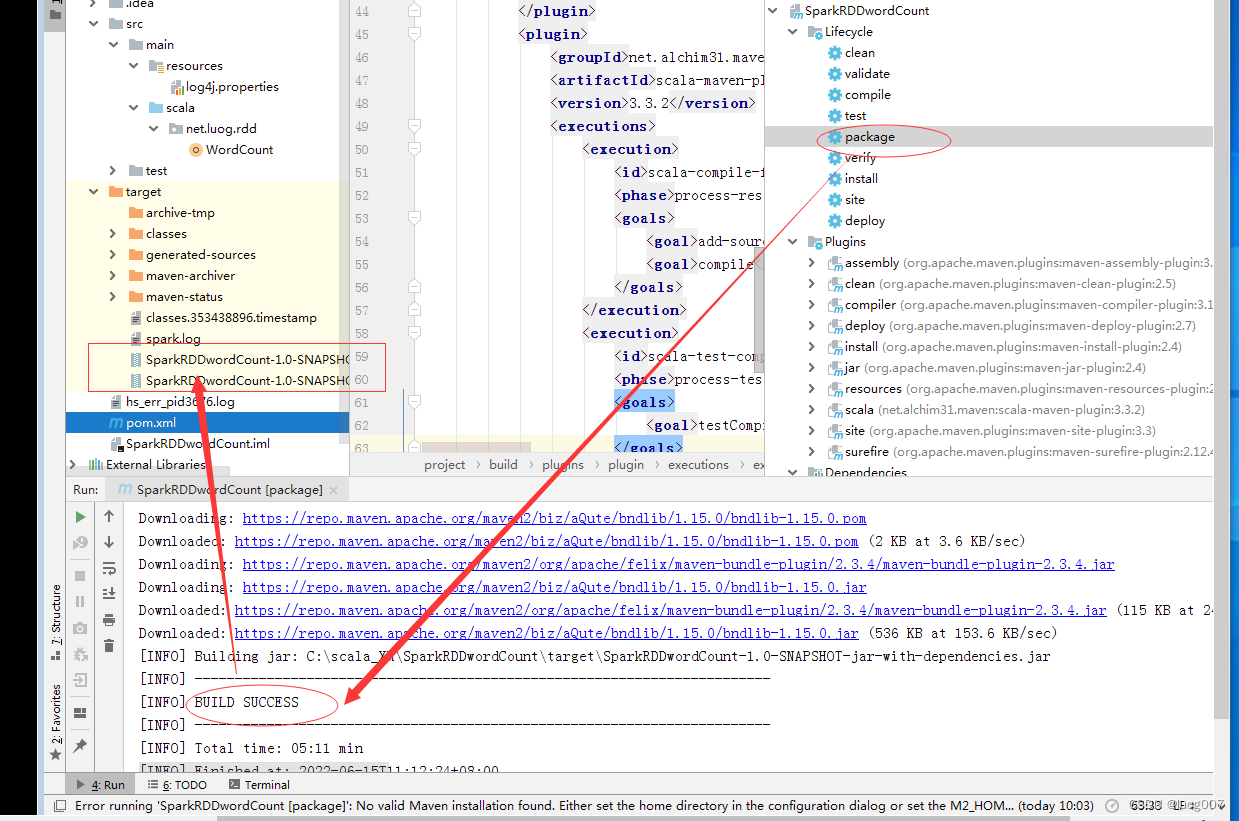

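4. Package the word count application and upload it to the virtual machine

Package the application.

Create the directory /app on the master virtual machine and upload the jar to it.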

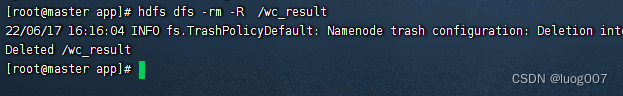

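Delete the directory /wc_result that holds the result files on HDFS; otherwise the job fails, because saveAsTextFile refuses to write to a directory that already exists. The same applies to any other output directory left over from an earlier run.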

    spark-submit --master spark://master:7077 \
      --class net.luog.rdd.WordCount \
      SparkRDDWordCount-1.0-SNAPSHOT.jar \
      hdfs://master:9000/input/word.txt \
      hdfs://master:9000/word_result
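
The command above passes two arguments, so neither default path in WordCount is used and the results go to /word_result rather than /wc_result. The argument handling can be isolated as a small pure function, which makes that behavior easy to check. This is a sketch, not the code from section 3.1: the `PathResolver` object, the `resolve` function and the `default-input` placeholder are hypothetical names introduced for illustration.

```scala
object PathResolver {
  /** Returns (inputPath, outputPath); arguments after the second are ignored. */
  def resolve(args: Array[String],
              defaultInput: String,
              defaultOutput: String): (String, String) =
    args match {
      case Array()            => (defaultInput, defaultOutput) // no arguments: both defaults
      case Array(in)          => (in, defaultOutput)           // one argument: input only
      case Array(in, out, _*) => (in, out)                     // two or more: first two are used
    }

  def main(args: Array[String]): Unit = {
    // The two paths passed on the spark-submit command line
    val submitted = Array(
      "hdfs://master:9000/input/word.txt",
      "hdfs://master:9000/word_result")
    // "default-input" is a placeholder; WordCount hard-codes its own default
    val (in, out) = resolve(submitted, "default-input", "hdfs://master:9000/wc_result")
    println(s"input=$in output=$out")
  }
}
```

Pattern matching on the argument array replaces the chain of length checks and the two mutable variables, at the cost of dropping the warning that WordCount prints when more than two arguments are given.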