Lesson 2 Notes and Homework (at the End): InternLM (书生·浦语) Large Model Demo Walkthrough


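Introduction to large models and InternLM

A large model, as the name suggests, is a model with a very large number of parameters, on the order of billions to tens of billions. A single such model can handle many different tasks, and large models are seen as a path toward artificial general intelligence.

InternLM is a lightweight training framework. I tried it myself and it really is convenient: training can start without a large set of dependencies. Having a large model is not enough to use it directly in a real application, though; it still has to be combined with an environment and turned into an agent, and that is the job Lagent does.

This lesson implements three demos. The focus here is on getting them running and seeing the results first, not on theory; the underlying principles will be filled in gradually later.

Project link: https://github.com/InternLM/tutorial/blob/main/helloworld/hello_world.md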

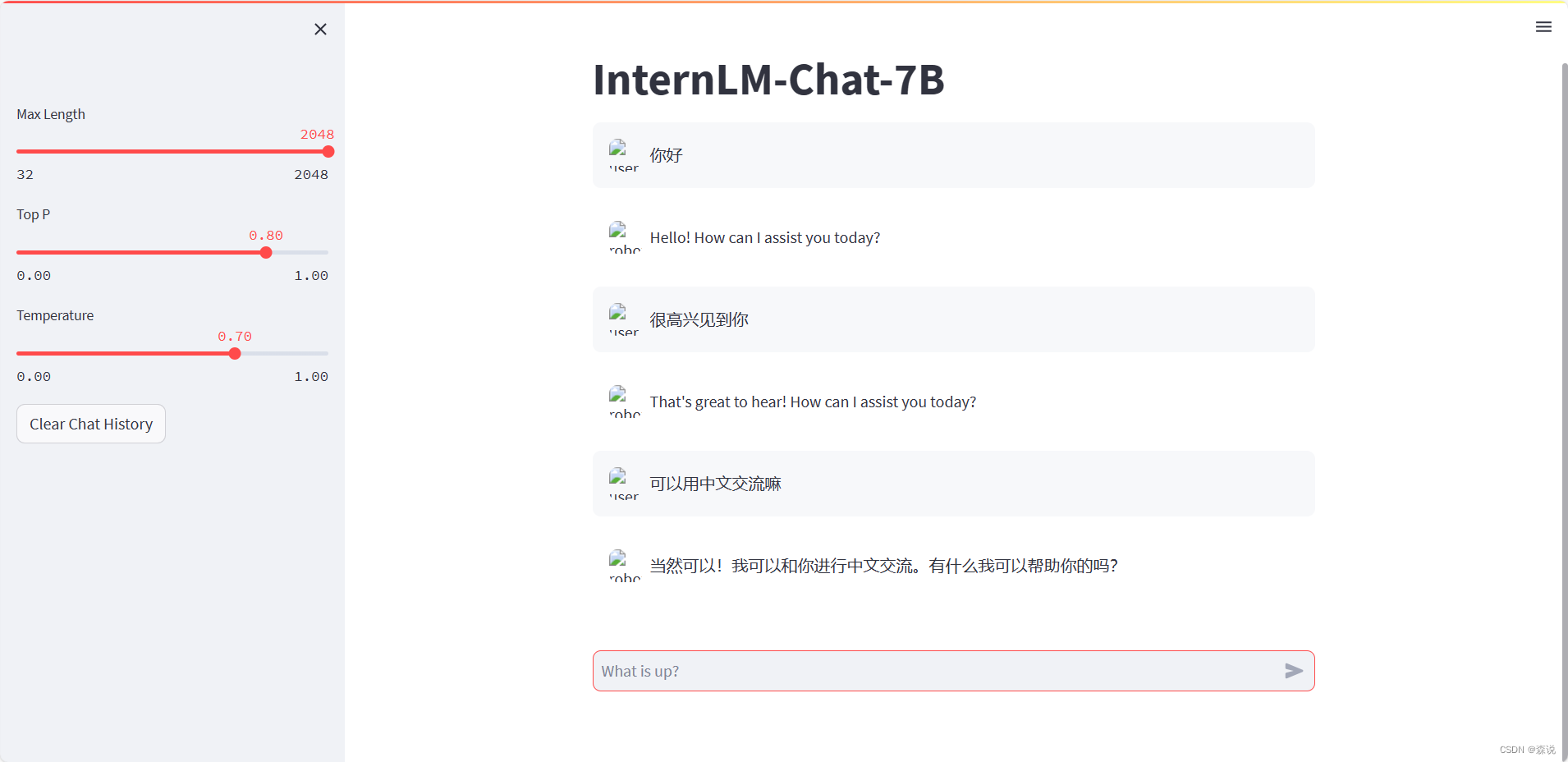

Chat demo

The model used here is mainly InternLM-7B, which has 7 billion parameters. It was trained on thousands of GPUs and supports fine-tuning on a single GPU.
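As a rough sense of scale, the memory needed just to hold the weights can be estimated from the parameter count. The sketch below assumes 2 bytes per parameter (as with the `torch.bfloat16` weights loaded in the terminal demo later in this post) and ignores activations, the KV cache and framework overhead. The function name is only illustrative.

```python
def weight_memory_gib(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for the model weights only, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


if __name__ == "__main__":
    # 7 billion parameters stored as bfloat16 (2 bytes each).
    print(f"{weight_memory_gib(7e9):.2f} GiB")
```

Doubling `bytes_per_param` to 4 (float32) doubles the estimate, which is one reason the demo loads the model in a 16-bit type.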

环境配置

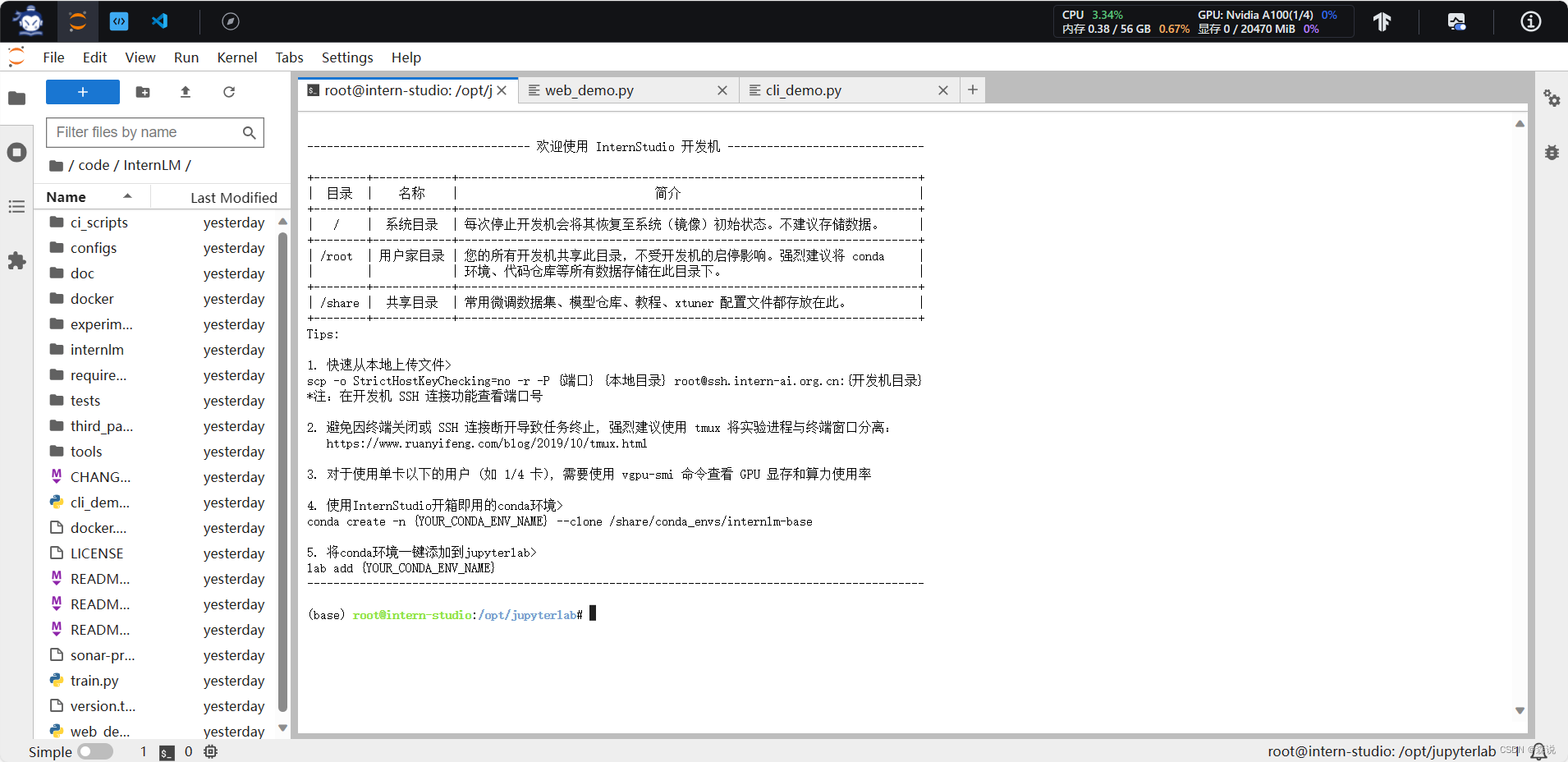

第一步就是进行环境的配置,这里我们在Intern studio中创建一个开发机

之后我们进入开发机,是下面这个页面,有三种开发模式,很人性化呀

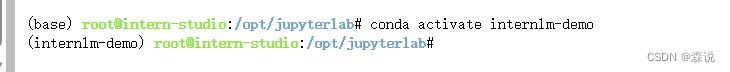

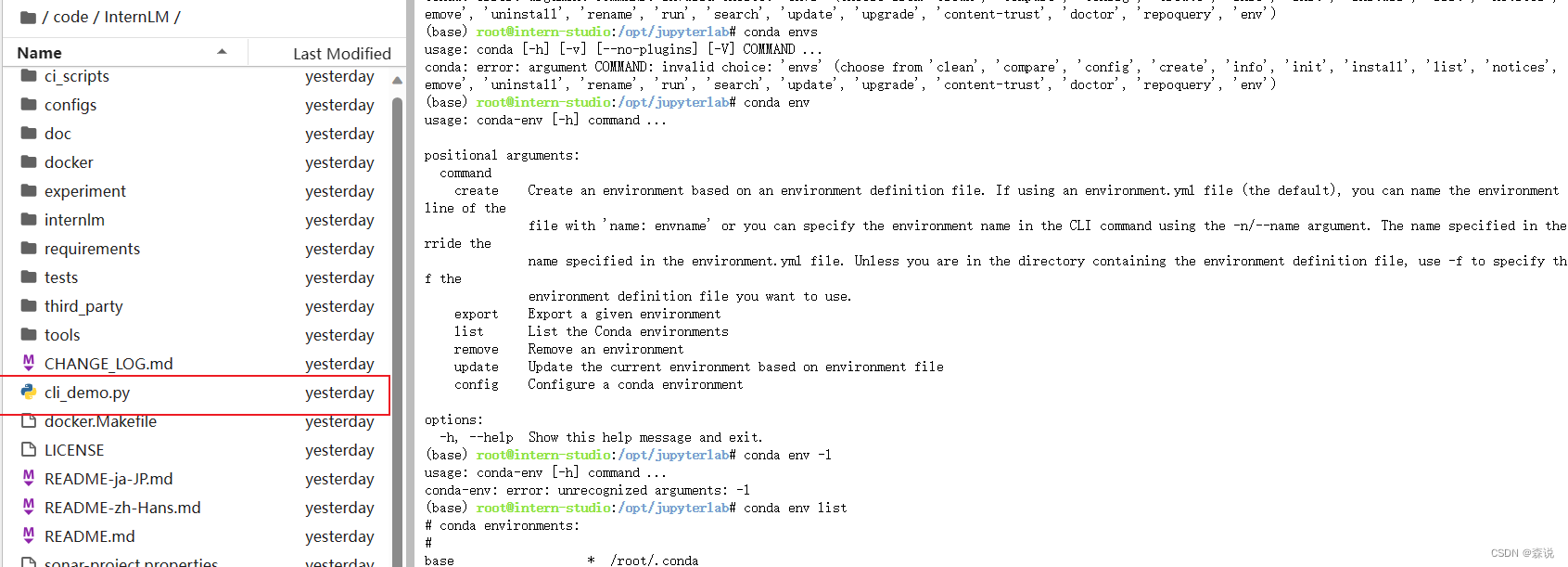

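Because I had already completed the first demo earlier, the virtual environment already existed, so I simply activated it. If you do not have one yet, set it up by following the documentation first, then install all the required packages.

Model download

The best part is that files are shared between development machines, which is really convenient. I had deleted my previous development machine when I planned to write these notes, yet the new one still has the earlier files, so I can use them directly.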

Shell commands that copy the model from the shared directory:

mkdir -p /root/model/Shanghai_AI_Laboratory
cp -r /root/share/temp/model_repos/internlm-chat-7b /root/model/Shanghai_AI_Laboratory

Python code that downloads the same model with ModelScope:

import torch
from modelscope import snapshot_download, AutoModel, AutoTokenizer
import os

model_dir = snapshot_download('Shanghai_AI_Laboratory/internlm-chat-7b', cache_dir='/root/model', revision='v1.0.3')


Running the commands above gives you the 7B model.
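Before moving on, it can be worth confirming that the model directory actually contains what later steps expect. This is a minimal sketch, assuming that a usable model directory contains at least a `config.json`; the helper name `missing_files` and the default file list are illustrative, not part of the tutorial.

```python
from pathlib import Path


def missing_files(model_dir, required=("config.json",)):
    """Return the names in `required` that are absent from `model_dir`."""
    root = Path(model_dir)
    return [name for name in required if not (root / name).is_file()]


if __name__ == "__main__":
    path = "/root/model/Shanghai_AI_Laboratory/internlm-chat-7b"
    missing = missing_files(path)
    print("looks complete" if not missing else f"missing: {missing}")
```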

Code preparation

cd /root/code

git clone https://gitee.com/internlm/InternLM.git

cd InternLM

git checkout 3028f07cb79e5b1d7342f4ad8d11efad3fd13d17


Then change the model path in web_demo to the path of your own model.
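The same edit can be scripted instead of done by hand. The sketch below is only an illustration: it assumes the file refers to the model by the placeholder string `internlm/internlm-chat-7b`, which should be checked against the actual file, and `replace_model_path` is a hypothetical helper.

```python
def replace_model_path(source: str, old: str, new: str):
    """Replace every occurrence of `old` with `new`; return (text, count)."""
    count = source.count(old)
    return source.replace(old, new), count


if __name__ == "__main__":
    # Hypothetical snippet standing in for the contents of the demo file.
    snippet = 'model = load("internlm/internlm-chat-7b")\n'
    text, n = replace_model_path(
        snippet,
        "internlm/internlm-chat-7b",
        "/root/model/Shanghai_AI_Laboratory/internlm-chat-7b",
    )
    print(n, text, end="")
```

Returning the replacement count makes it easy to notice when nothing matched, for example because the placeholder in the file differs from the one assumed here.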

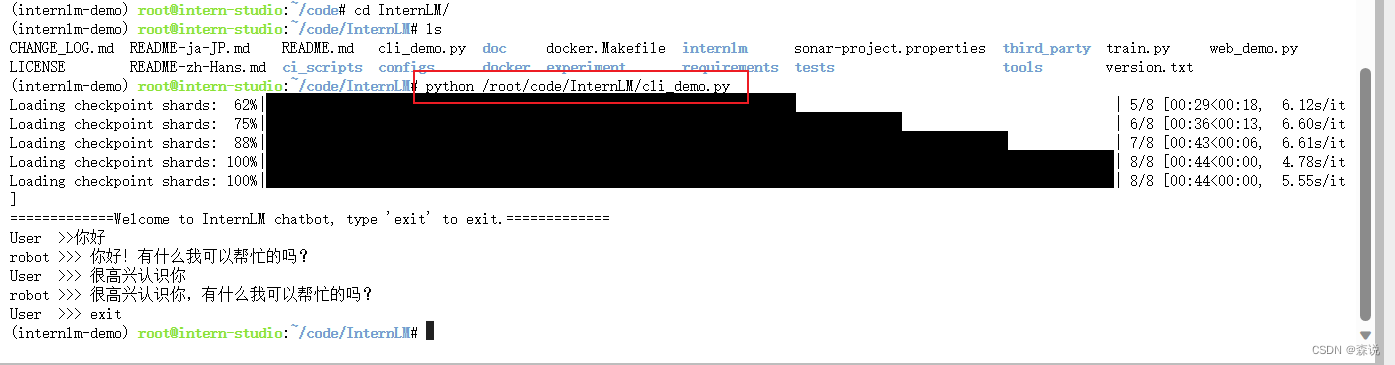

Running the demo in the terminal

Create the file shown in the image below, then copy the following code into it.

import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

model_name_or_path = "/root/model/Shanghai_AI_Laboratory/internlm-chat-7b"  # local model path prepared above

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(model_name_or_path, trust_remote_code=True, torch_dtype=torch.bfloat16, device_map='auto')
model = model.eval()  # inference mode

system_prompt = """You are an AI assistant whose name is InternLM (书生·浦语).
- InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory (上海人工智能实验室). It is designed to be helpful, honest, and harmless.
- InternLM (书生·浦语) can understand and communicate fluently in the language chosen by the user such as English and 中文.
"""

messages = [(system_prompt, '')]  # the history starts with the system prompt and an empty reply

print("=============Welcome to InternLM chatbot, type 'exit' to exit.=============")

while True:
    input_text = input("User >>> ")
    # str.replace returns a new string, so the result must be assigned back
    input_text = input_text.replace(' ', '')
    if input_text == "exit":
        break
    response, history = model.chat(tokenizer, input_text, history=messages)
    messages.append((input_text, response))
    print(f"robot >>> {response}")


Then run this code in the terminal.

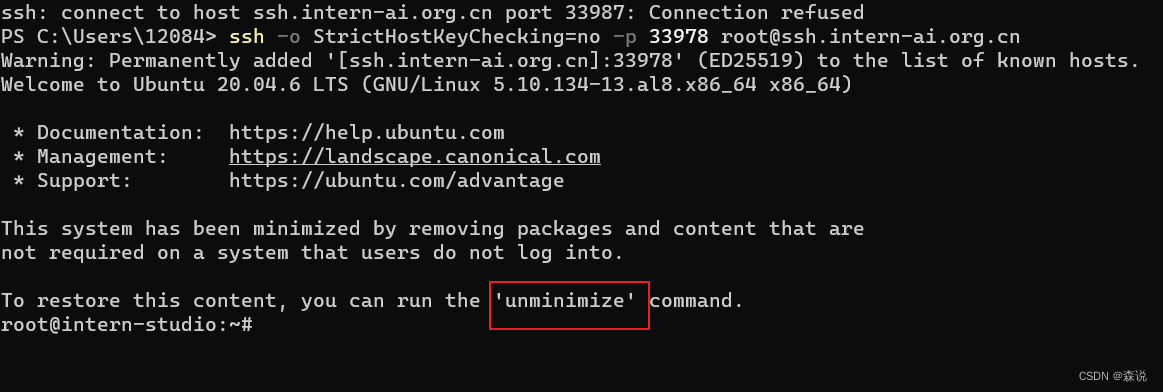
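The core of the terminal demo is the loop that keeps appending `(input, response)` pairs to the history so each new turn sees the earlier ones. Here is that pattern in isolation, runnable without a GPU. `echo_model` is a made-up stand-in for `model.chat`, and unlike the demo this sketch only trims surrounding whitespace from the input.

```python
def run_chat(user_inputs, chat_fn, system_prompt=""):
    """Feed inputs to chat_fn one by one, carrying the history forward."""
    history = [(system_prompt, "")]
    replies = []
    for text in user_inputs:
        text = text.strip()
        if text == "exit":
            break
        reply = chat_fn(text, history)
        history.append((text, reply))
        replies.append(reply)
    return replies, history


def echo_model(text, history):
    """Stand-in for a real model: reports how many history entries it saw."""
    return f"turn {len(history)}: {text}"


if __name__ == "__main__":
    replies, _ = run_chat(["hello", "how are you", "exit", "ignored"], echo_model)
    print(replies)
```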

Running web_demo

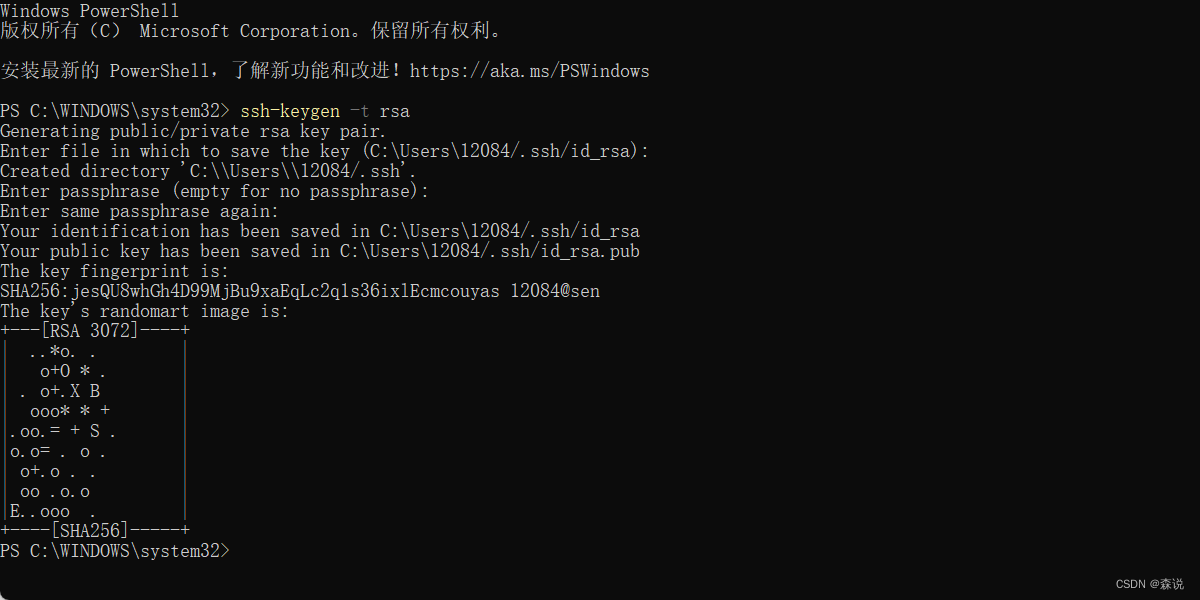

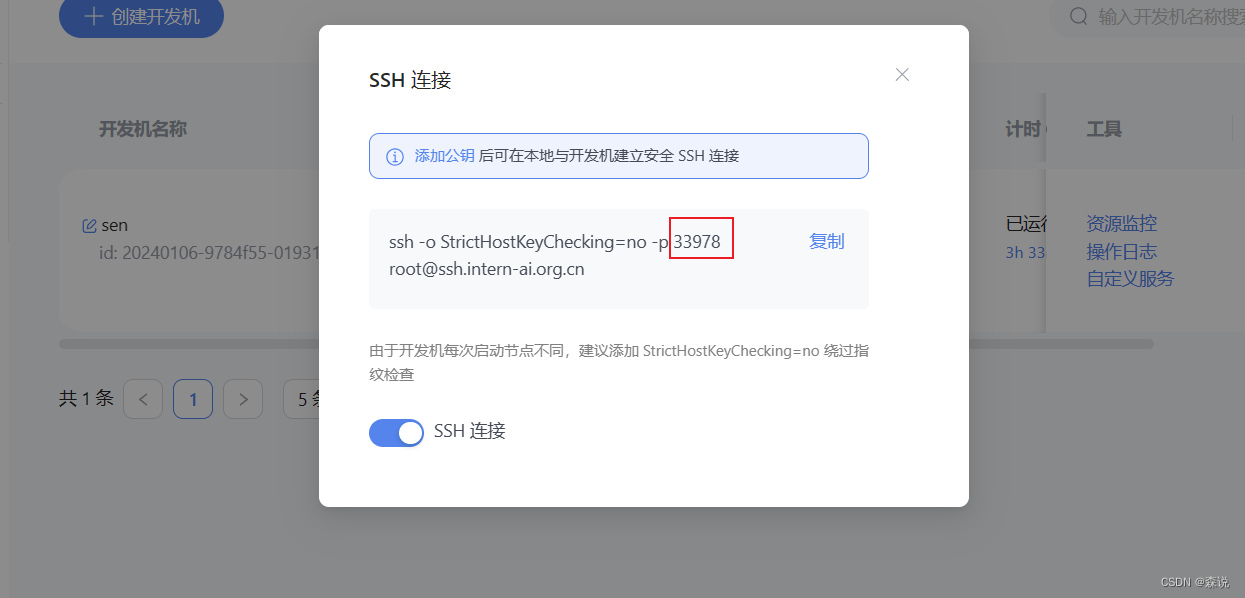

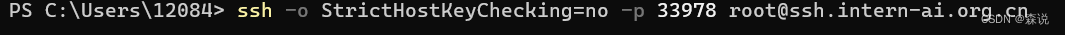

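One slightly troublesome point is that this needs an SSH connection. It took me a long time the first time, and now I am setting it up again (I am home for the holidays, so I am using my laptop).

Configuring the local port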

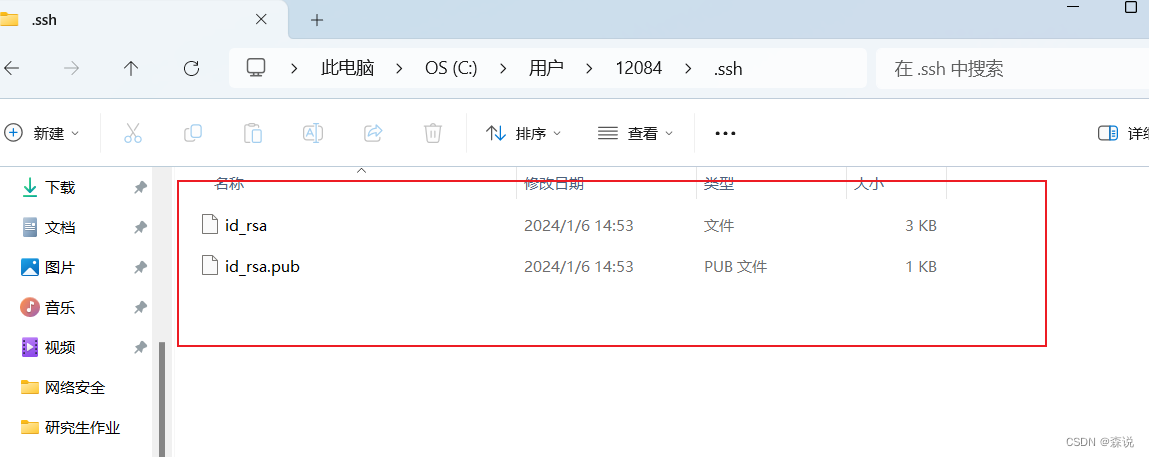

First generate an SSH key pair (just keep pressing Enter), then locate this file.

ssh-keygen -t rsa


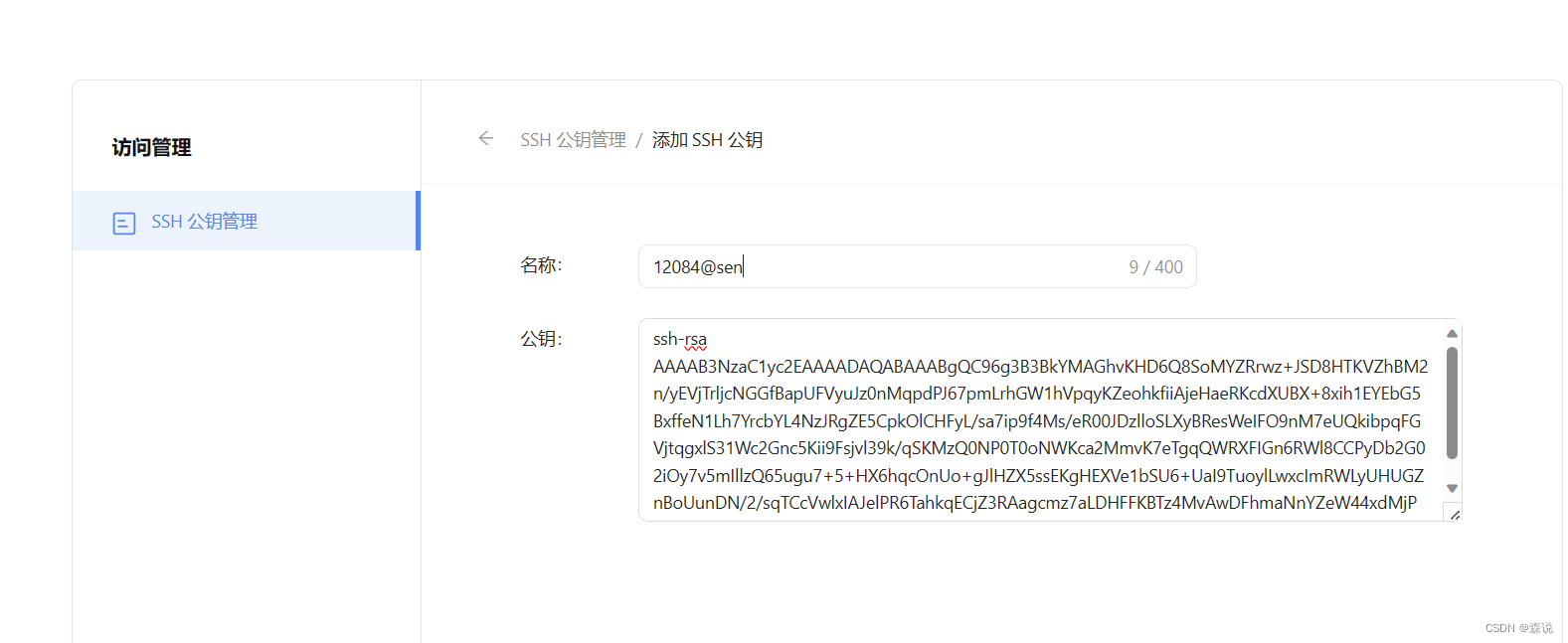

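Then add this key to the development machine:

Next we get our ID, which serves as the SSH port; mine is 33978.

First run the command shown in the image above on the local machine.

It will report an error at first; type in the word shown in the red box. Then run the code below in VS Code inside the studio.

Clicking this link opens the page below, which takes a long time to load.

Then the following page appears.

That is the end of this demo.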

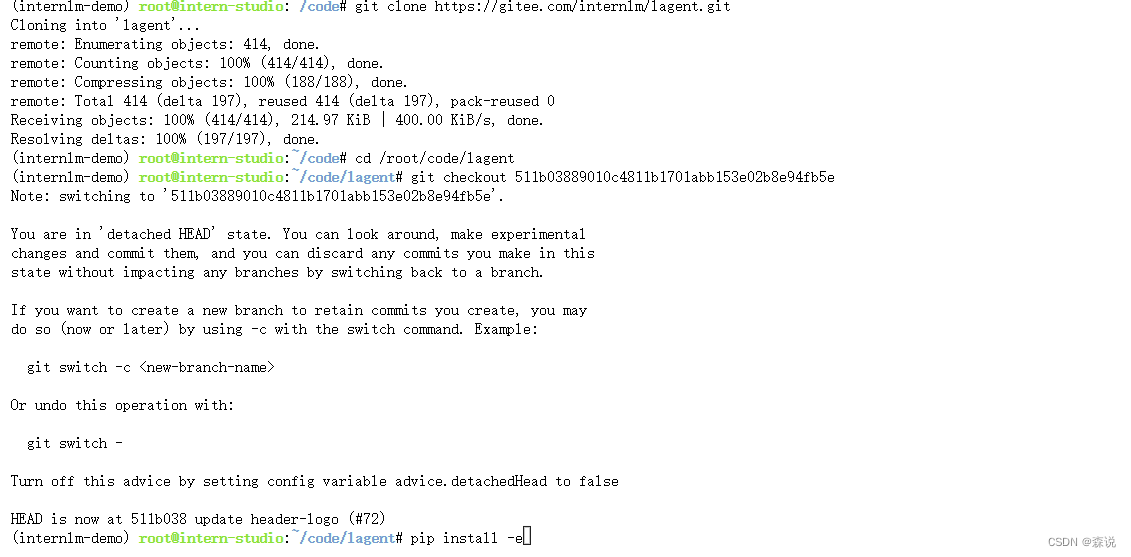
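If the page never loads, a quick way to tell whether the problem is the tunnel or the demo itself is to test whether the forwarded local port accepts connections. A minimal sketch using only the standard library; port 6006 is the one forwarded by the SSH command used in this post, and `port_is_open` is an illustrative helper name.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to (host, port) succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    print(port_is_open("127.0.0.1", 6006))
```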

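Lagent agent tool-calling demo

Now for the second demo: deploying an agent tool-calling demo with the InternLM-Chat-7B model and the Lagent framework. Lagent is a lightweight, open-source agent framework built on large language models. It lets users quickly turn a large language model into various types of agents, and it provides some typical tools to extend what the model can do. With Lagent, the capabilities of InternLM can be put to fuller use.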
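To make the idea of an agent concrete, here is a toy loop in the spirit of the ReAct pattern (the demo code further down imports a `ReAct` agent class from Lagent): the model either names a tool to call or gives a final answer, and each tool result is fed back as an observation. This is an illustration only, not Lagent's implementation; `scripted_llm`, the `call:` / `final:` text format and the `calculator` tool are all made up for the sketch.

```python
def calculator(expression: str) -> str:
    """Toy tool: add integers written as 'a+b+c'."""
    return str(sum(int(part) for part in expression.split("+")))


TOOLS = {"calculator": calculator}


def scripted_llm(transcript):
    """Stand-in for a language model with a fixed two-step policy."""
    if not any(line.startswith("observation:") for line in transcript):
        return "call: calculator 2+3"
    return "final: the answer is " + transcript[-1].split(": ", 1)[1]


def run_agent(question, llm, tools, max_steps=5):
    """Alternate between model output and tool execution until a final answer."""
    transcript = [f"question: {question}"]
    for _ in range(max_steps):
        output = llm(transcript)
        transcript.append(output)
        if output.startswith("final: "):
            return output[len("final: "):], transcript
        # Expected form: "call: <tool name> <tool input>"
        _, tool_name, tool_input = output.split(" ", 2)
        transcript.append(f"observation: {tools[tool_name](tool_input)}")
    return None, transcript


if __name__ == "__main__":
    answer, steps = run_agent("what is 2+3?", scripted_llm, TOOLS)
    print(answer)
    print(steps)
```

The `max_steps` cap is a common safeguard in loops like this, so that a model which never produces a final answer cannot run forever.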

Installing Lagent

cd /root/code

git clone https://gitee.com/internlm/lagent.git

cd /root/code/lagent

git checkout 511b03889010c4811b1701abb153e02b8e94fb5e # try to stay on the same commit as the tutorial

pip install -e . # install from source


Modifying the demo code

Replace the entire contents of /root/code/lagent/examples/react_web_demo.py with the following code.

import copy
import os

import streamlit as st
from streamlit.logger import get_logger

from lagent.actions import ActionExecutor, GoogleSearch, PythonInterpreter
from lagent.agents.react import ReAct
from lagent.llms import GPTAPI
from lagent.llms.huggingface import HFTransformerCasualLM


class SessionState:

    def init_state(self):
        """Initialize session state variables."""
        st.session_state['assistant'] = []
        st.session_state['user'] = []

        #action_list = [PythonInterpreter(), GoogleSearch()]
        action_list = [PythonInterpreter()]
        st.session_state['plugin_map'] = {
            action.name: action
            for action in action_list
        }
        st.session_state['model_map'] = {}
        st.session_state['model_selected'] = None
        st.session_state['plugin_actions'] = set()

    def clear_state(self):
        """Clear the existing session state."""
        st.session_state['assistant'] = []
        st.session_state['user'] = []
        st.session_state['model_selected'] = None
        if 'chatbot' in st.session_state:
            st.session_state['chatbot']._session_history = []


class StreamlitUI:

    def __init__(self, session_state: SessionState):
        self.init_streamlit()
        self.session_state = session_state

    def init_streamlit(self):
        """Initialize Streamlit's UI settings."""
        st.set_page_config(
            layout='wide',
            page_title='lagent-web',
            page_icon='./docs/imgs/lagent_icon.png')
        # st.header(':robot_face: :blue[Lagent] Web Demo ', divider='rainbow')
        st.sidebar.title('模型控制')

    def setup_sidebar(self):
        """Setup the sidebar for model and plugin selection."""
        model_name = st.sidebar.selectbox(
            '模型选择:', options=['gpt-3.5-turbo','internlm'])
        if model_name != st.session_state['model_selected']:
            model = self.init_model(model_name)
            self.session_state.clear_state()
            st.session_state['model_selected'] = model_name
            if 'chatbot' in st.session_state:
                del st.session_state['chatbot']
        else:
            model = st.session_state['model_map'][model_name]

        plugin_name = st.sidebar.multiselect(
            '插件选择',
            options=list(st.session_state['plugin_map'].keys()),
            default=[list(st.session_state['plugin_map'].keys())[0]],
        )

        plugin_action = [
            st.session_state['plugin_map'][name] for name in plugin_name
        ]
        if 'chatbot' in st.session_state:
            st.session_state['chatbot']._action_executor = ActionExecutor(
                actions=plugin_action)
        if st.sidebar.button('清空对话', key='clear'):
            self.session_state.clear_state()
        uploaded_file = st.sidebar.file_uploader(
            '上传文件', type=['png', 'jpg', 'jpeg', 'mp4', 'mp3', 'wav'])
        return model_name, model, plugin_action, uploaded_file

    def init_model(self, option):
        """Initialize the model based on the selected option."""
        if option not in st.session_state['model_map']:
            if option.startswith('gpt'):
                st.session_state['model_map'][option] = GPTAPI(
                    model_type=option)
            else:
                st.session_state['model_map'][option] = HFTransformerCasualLM(
                    '/root/model/Shanghai_AI_Laboratory/internlm-chat-7b')
        return st.session_state['model_map'][option]

    def initialize_chatbot(self, model, plugin_action):
        """Initialize the chatbot with the given model and plugin actions."""
        return ReAct(
            llm=model, action_executor=ActionExecutor(actions=plugin_action))

    def render_user(self, prompt: str):
        with st.chat_message('user'):
            st.markdown(prompt)

    def render_assistant(self, agent_return):
        with st.chat_message('assistant'):
            for action in agent_return.actions:
                if (action):
                    self.render_action(action)
            st.markdown(agent_return.response)

    def render_action(self, action):
        with st.expander(action.type, expanded=True):
            st.markdown(
                "<p style='text-align: left;display:flex;'> <span style='font-size:14px;font-weight:600;width:70px;text-align-last: justify;'>插 件</span><span style='width:14px;text-align:left;display:block;'>:</span><span style='flex:1;'>"  # noqa E501
                + action.type + '</span></p>',
                unsafe_allow_html=True)
            st.markdown(
                "<p style='text-align: left;display:flex;'> <span style='font-size:14px;font-weight:600;width:70px;text-align-last: justify;'>思考步骤</span><span style='width:14px;text-align:left;display:block;'>:</span><span style='flex:1;'>"  # noqa E501
                + action.thought + '</span></p>',
                unsafe_allow_html=True)
            if (isinstance(action.args, dict) and 'text' in action.args):
                st.markdown(
                    "<p style='text-align: left;display:flex;'><span style='font-size:14px;font-weight:600;width:70px;text-align-last: justify;'> 执行内容</span><span style='width:14px;text-align:left;display:block;'>:</span></p>",  # noqa E501
                    unsafe_allow_html=True)
                st.markdown(action.args['text'])
            self.render_action_results(action)

    def render_action_results(self, action):
        """Render the results of action, including text, images, videos, and audios."""
        if (isinstance(action.result, dict)):
            st.markdown(
                "<p style='text-align: left;display:flex;'><span style='font-size:14px;font-weight:600;width:70px;text-align-last: justify;'> 执行结果</span><span style='width:14px;text-align:left;display:block;'>:</span></p>",  # noqa E501
                unsafe_allow_html=True)
            if 'text' in action.result:
                st.markdown(
                    "<p style='text-align: left;'>" + action.result['text'] + '</p>',
                    unsafe_allow_html=True)
            if 'image' in action.result:
                image_path = action.result['image']
                image_data = open(image_path, 'rb').read()
                st.image(image_data, caption='Generated Image')
            if 'video' in action.result:
                video_data = action.result['video']
                video_data = open(video_data, 'rb').read()
                st.video(video_data)
            if 'audio' in action.result:
                audio_data = action.result['audio']
                audio_data = open(audio_data, 'rb').read()
                st.audio(audio_data)


def main():
    logger = get_logger(__name__)
    # Initialize Streamlit UI and setup sidebar
    if 'ui' not in st.session_state:
        session_state = SessionState()
        session_state.init_state()
        st.session_state['ui'] = StreamlitUI(session_state)
    else:
        st.set_page_config(
            layout='wide',
            page_title='lagent-web',
            page_icon='./docs/imgs/lagent_icon.png')
        # st.header(':robot_face: :blue[Lagent] Web Demo ', divider='rainbow')
    model_name, model, plugin_action, uploaded_file = st.session_state[
        'ui'].setup_sidebar()

    # Initialize chatbot if it is not already initialized
    # or if the model has changed
    if 'chatbot' not in st.session_state or model != st.session_state[
            'chatbot']._llm:
        st.session_state['chatbot'] = st.session_state[
            'ui'].initialize_chatbot(model, plugin_action)

    for prompt, agent_return in zip(st.session_state['user'],
                                    st.session_state['assistant']):
        st.session_state['ui'].render_user(prompt)
        st.session_state['ui'].render_assistant(agent_return)
    # User input form at the bottom (this part will be at the bottom)
    # with st.form(key='my_form', clear_on_submit=True):

    if user_input := st.chat_input(''):
        st.session_state['ui'].render_user(user_input)
        st.session_state['user'].append(user_input)
        # Add file uploader to sidebar
        if uploaded_file:
            file_bytes = uploaded_file.read()
            file_type = uploaded_file.type
            if 'image' in file_type:
                st.image(file_bytes, caption='Uploaded Image')
            elif 'video' in file_type:
                # st.video and st.audio take no `caption` argument
                st.video(file_bytes)
            elif 'audio' in file_type:
                st.audio(file_bytes)
            # Save the file to a temporary location and get the path
            file_path = os.path.join(root_dir, uploaded_file.name)
            with open(file_path, 'wb') as tmpfile:
                tmpfile.write(file_bytes)
            st.write(f'File saved at: {file_path}')
            user_input = '我上传了一个图像,路径为: {file_path}. {user_input}'.format(
                file_path=file_path, user_input=user_input)
        agent_return = st.session_state['chatbot'].chat(user_input)
        st.session_state['assistant'].append(copy.deepcopy(agent_return))
        logger.info(agent_return.inner_steps)
        st.session_state['ui'].render_assistant(agent_return)


if __name__ == '__main__':
    root_dir = os.path.dirname(os.path.dirname(os.path.abspath(__file__)))
    root_dir = os.path.join(root_dir, 'tmp_dir')
    os.makedirs(root_dir, exist_ok=True)
    main()


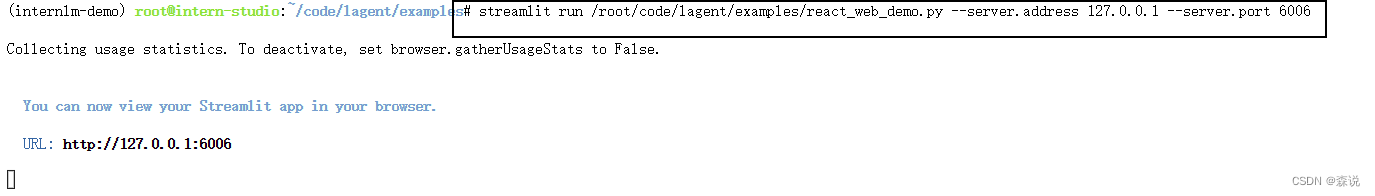
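One detail of the code above worth noting: `init_model` stores each loaded model in `st.session_state['model_map']`, so selecting a model that was already loaded does not load it again. The same idea in isolation, as a minimal sketch with a hypothetical `fake_loader` standing in for the expensive model load:

```python
def get_or_create(cache: dict, key, factory):
    """Return cache[key], building it with factory(key) on first use."""
    if key not in cache:
        cache[key] = factory(key)
    return cache[key]


if __name__ == "__main__":
    calls = []

    def fake_loader(name):
        calls.append(name)  # record each (expensive) load
        return f"<model {name}>"

    models = {}
    get_or_create(models, "internlm", fake_loader)
    get_or_create(models, "internlm", fake_loader)
    print(calls)  # the loader ran only once
```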

Running the demo

Before running the demo, be sure to run this on the local machine first: ssh -CNg -L 6006:127.0.0.1:6006 root@ssh.intern-ai.org.cn -p 33978
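For reference, the flags in that command are standard OpenSSH options: `-C` enables compression, `-N` runs no remote command (forwarding only), `-g` lets other hosts connect to the forwarded local port, `-L` sets up the local forward, and `-p` is the SSH port. Since the port differs per development machine, a small helper can rebuild the command. This is only a sketch; `tunnel_command` and its defaults are illustrative, with the host and ports taken from the command above.

```python
import shlex


def tunnel_command(ssh_port: int, local_port: int = 6006, remote_port: int = 6006,
                   host: str = "ssh.intern-ai.org.cn", user: str = "root"):
    """Build the argument list for an SSH local port forward."""
    return [
        "ssh",
        "-CNg",  # -C compress, -N no remote command, -g allow other hosts to use the forward
        "-L", f"{local_port}:127.0.0.1:{remote_port}",
        f"{user}@{host}",
        "-p", str(ssh_port),
    ]


if __name__ == "__main__":
    print(shlex.join(tunnel_command(33978)))
```

Returning a list rather than a string means the result can be passed directly to `subprocess.run` without shell quoting issues.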

Then click this link, and you will see the page below:

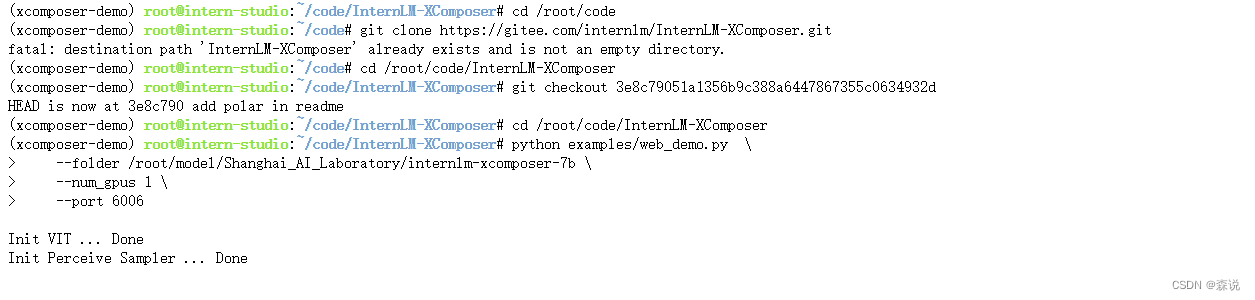

Demo 3: image-text creation

Preparing the code

pip install transformers==4.33.1 timm==0.4.12 sentencepiece==0.1.99 gradio==3.44.4 markdown2==2.4.10 xlsxwriter==3.1.2 einops accelerate

cd /root/code/InternLM-XComposer

python examples/web_demo.py \
    --folder /root/model/Shanghai_AI_Laboratory/internlm-xcomposer-7b \
    --num_gpus 1 \
    --port 6006
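The `pip install` line above pins exact versions, and a later step in this post installs a different `transformers` version, so it can help to check what is actually installed before launching the demo. A minimal sketch using the standard library's `importlib.metadata`; `installed_version` and `check_pins` are illustrative helper names, and only a few of the pinned packages are listed.

```python
from importlib import metadata


def installed_version(package: str):
    """Return the installed version string, or None if not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_pins(pins: dict, lookup=installed_version):
    """Map each mismatching package to (expected, found)."""
    return {
        name: (expected, lookup(name))
        for name, expected in pins.items()
        if lookup(name) != expected
    }


if __name__ == "__main__":
    pins = {"transformers": "4.33.1", "timm": "0.4.12", "gradio": "3.44.4"}
    print(check_pins(pins) or "all pins match")
```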


运行demo

看见下面这个输出,然后加载模型,

加载模型后点击那个链接,就可以体验效果了。

作业

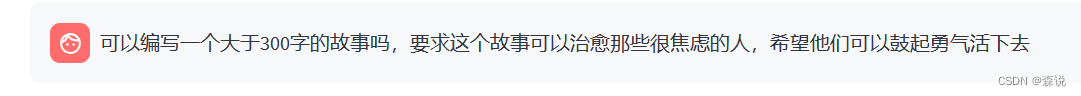

作业1:写个小故事

如下:

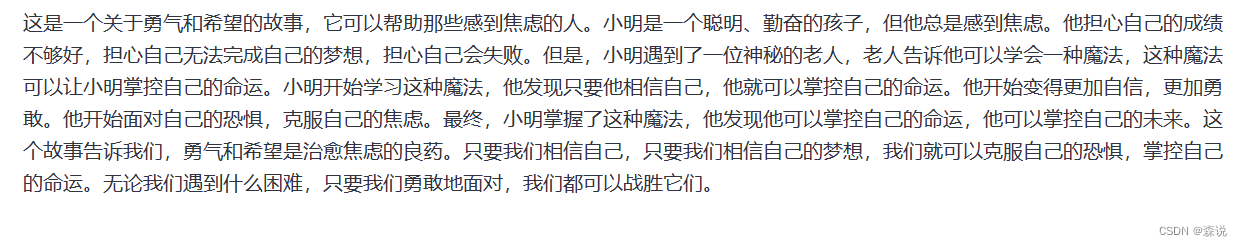

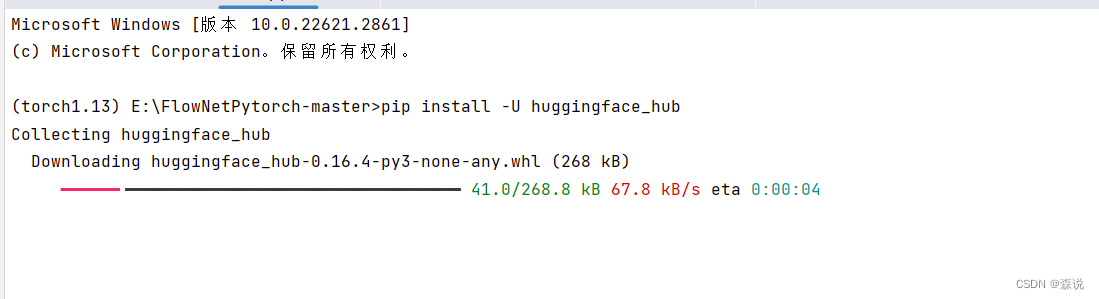

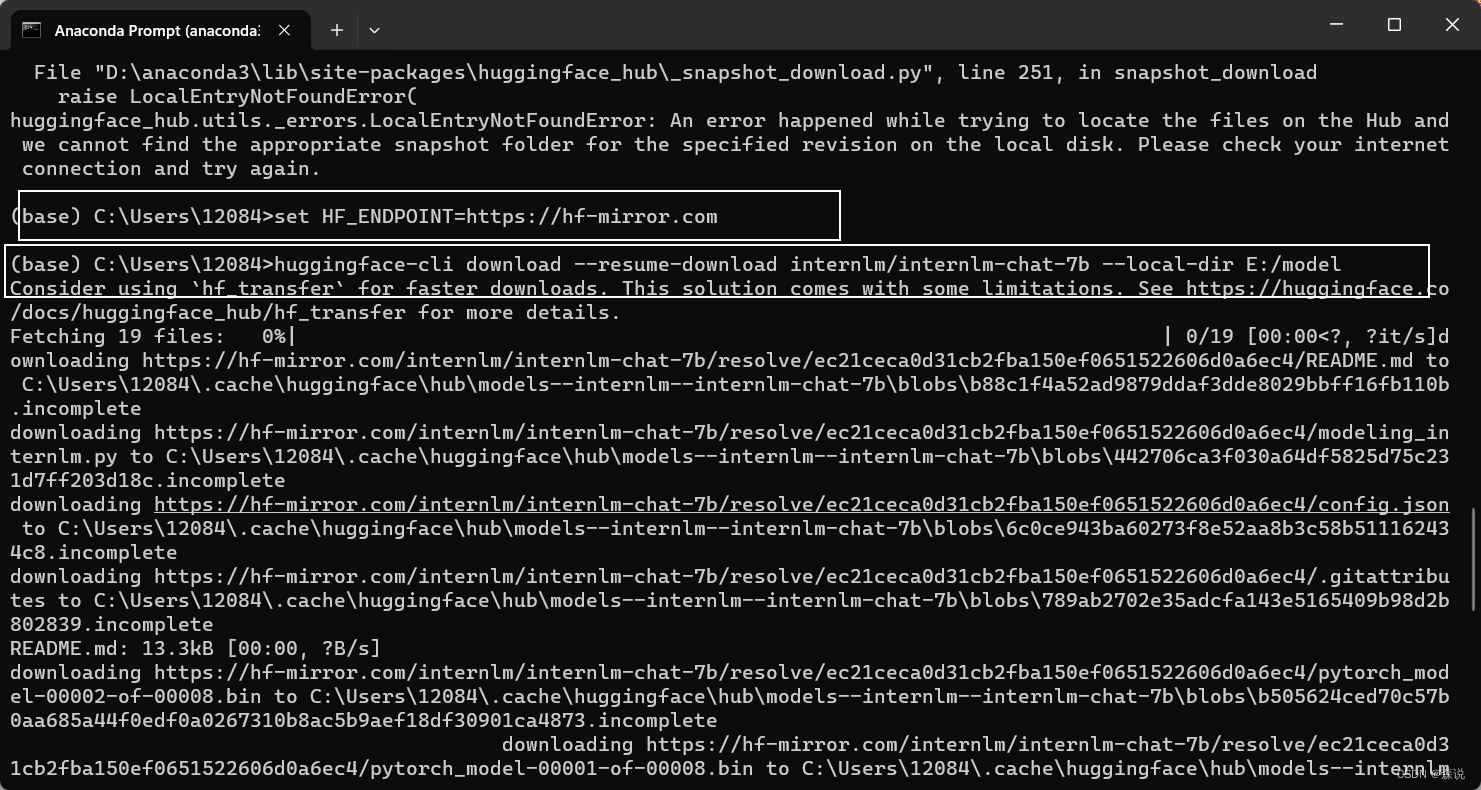

Homework 2: downloading

Model download

We open PyCharm.

First install the package:

pip install -U huggingface_hub


但是之后运行会出现bug,后直接在,命令行中运行成功了

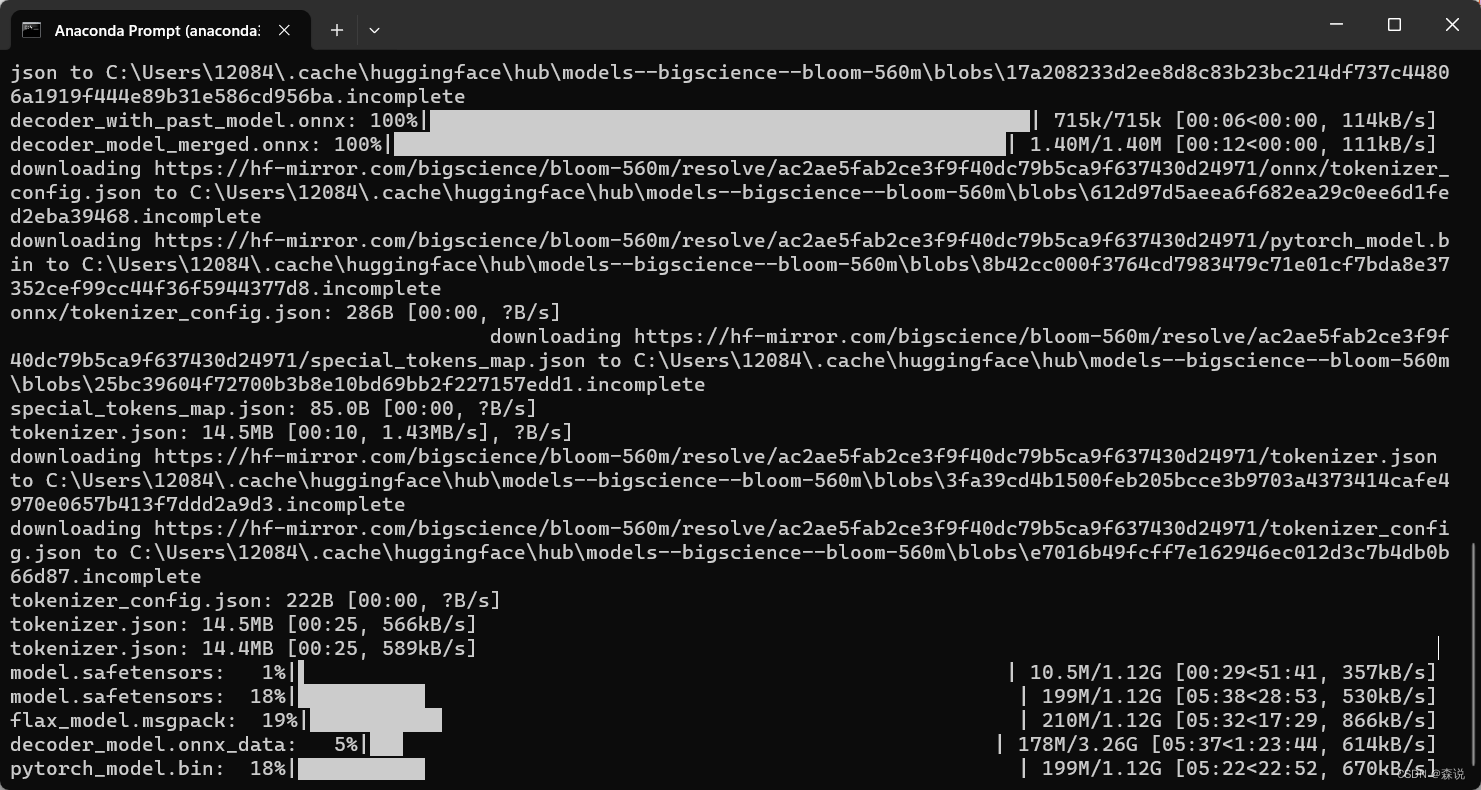

开始下载,但是好慢呀。

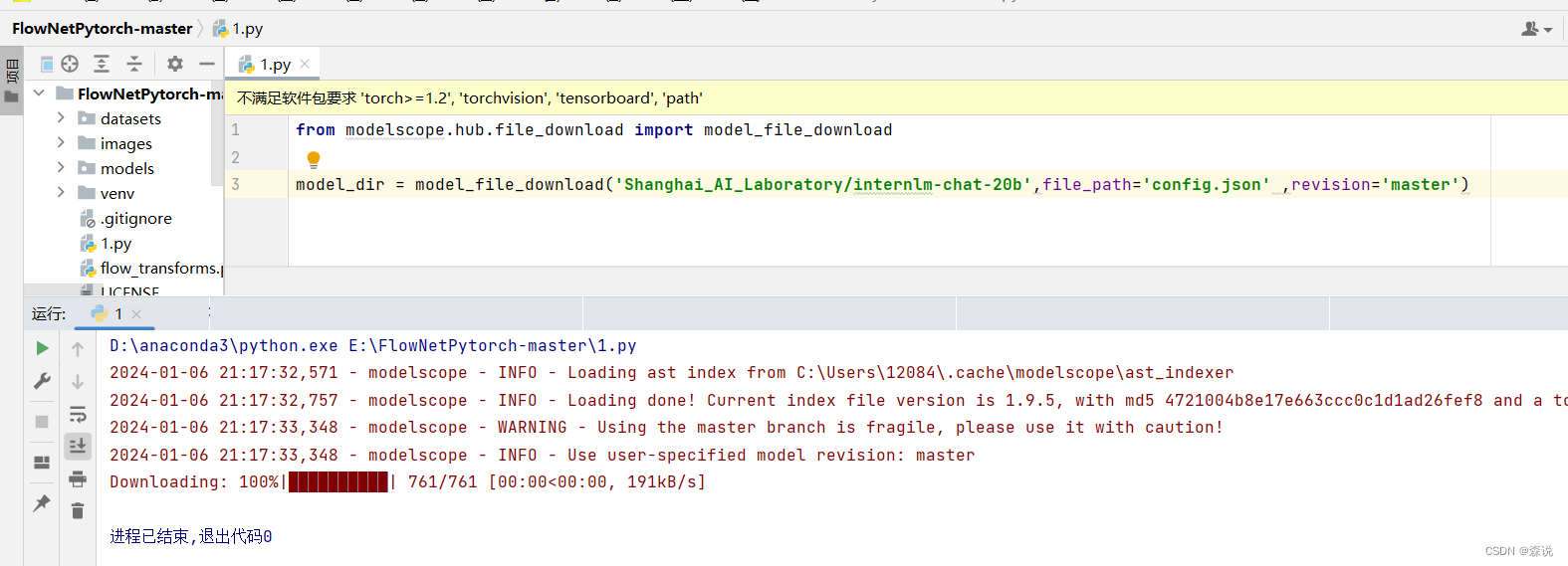
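When only one file is needed, `huggingface_hub` can fetch it on its own, which avoids the slow full download. A minimal sketch using `hf_hub_download`; the repo id in the usage comment is an assumption (substitute the model you actually want), and the `downloader` parameter is a hypothetical hook that makes the function testable without network access.

```python
def download_file(repo_id: str, filename: str, downloader=None) -> str:
    """Download one file from a Hub repo and return its local path."""
    if downloader is None:
        # Imported lazily so this module loads even without huggingface_hub.
        from huggingface_hub import hf_hub_download
        downloader = hf_hub_download
    return downloader(repo_id=repo_id, filename=filename)


# Example usage (needs network access and `pip install -U huggingface_hub`):
# path = download_file("internlm/internlm-chat-7b", "config.json")
# print(path)
```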

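Downloading a single configuration file

The steps above download the full set of model files. Next we download just the configuration file of a 20B model, this time using ModelScope to fetch a single file. Install it first: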

pip install modelscope==1.9.5

pip install transformers==4.35.2


Then use my code:

from modelscope.hub.file_download import model_file_download

model_dir = model_file_download('Shanghai_AI_Laboratory/internlm-chat-20b', file_path='config.json', revision='master')


The download succeeded.
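To confirm the file is a valid configuration, it can be loaded with the standard `json` module. This sketch assumes the value returned by `model_file_download` (stored in `model_dir` above) is the local path of the downloaded file; the key names in the default list are common in Hugging Face style configs but are assumptions here, and any that are absent are simply skipped.

```python
import json


def summarize_config(path: str, keys=("model_type", "hidden_size", "num_hidden_layers")):
    """Load a JSON config and return the requested keys that are present."""
    with open(path, encoding="utf-8") as f:
        config = json.load(f)
    return {key: config[key] for key in keys if key in config}


# Example usage, with `model_dir` from the download code above:
# print(summarize_config(model_dir))
```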